CSC/ECE 506 Spring 2012/11a hn: Difference between revisions



Revision as of 00:42, 15 April 2012

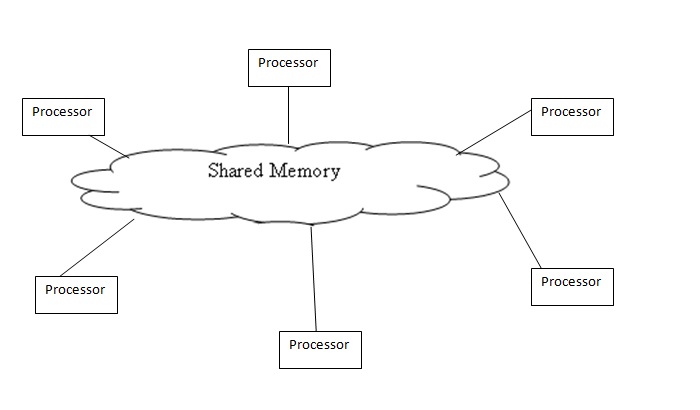

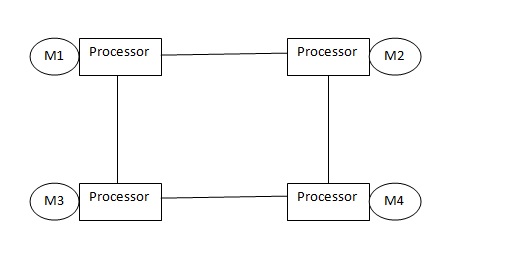

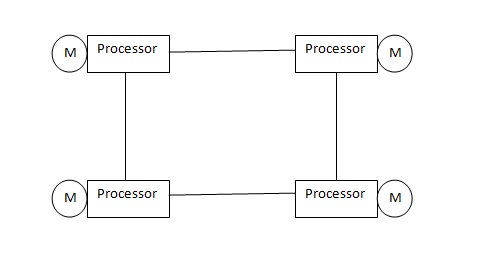

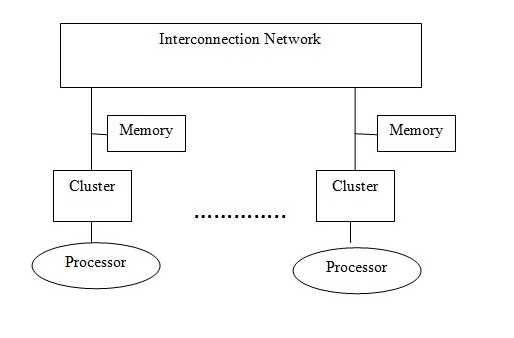

A Distributed Shared Memory (DSM) system combines features of a shared memory system and a distributed memory system. In a shared memory system, a group of processors shares a global memory, and any processor can access any memory location [1]. In a distributed memory system, each cluster (that is, each processor) has its own memory, and clusters communicate by message passing [2]. In a DSM, by contrast, several processors share the same address space, i.e., a given memory location has the same address for every processor. Unlike a shared memory system, a DSM does not actually have a global memory that all processors access directly; instead, it presents a logical abstraction of a single address space over separate memories, all of which can be accessed by every processor.

As the pictures above show, in a distributed system each processor has its own memory. In a distributed shared memory system each processor also has its own memory, but the main memories of a cluster of processors are made to look like a single memory with a single address space. Distributed systems scale to a large number of processors but are difficult to program because of message passing. Shared memory systems, on the other hand, are less scalable but easier to program, because every processor can access the others' data, which simplifies parallel programming. DSM therefore aims for a more scalable and less expensive model by supporting the shared memory abstraction on top of message passing. It lets the programmer share and use variables without having to manage them, so a processor can access an address held in another processor's main memory. End users can use the shared memory without knowing about the message passing underneath; the idea is to keep inter-processor communication invisible to the user.

In general, a DSM system consists of clusters connected to an interconnection network. A cluster can be a uniprocessor or a multiprocessor system with local memory and a cache to reduce memory latency. The local memory of a cluster is entirely or partially mapped into the DSM global address space. A directory records the location and the state of each data block. The directory can be organized as a linked list, a doubly linked list, or a full map, and the choice of organization affects the scalability of the system [5].
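To make the full-map organization concrete, here is a minimal sketch of one directory entry in Python. The class name, the state names and the two state bits are assumptions chosen for illustration; they are not taken from any particular DSM system.

```python
from dataclasses import dataclass


@dataclass
class DirectoryEntry:
    """Full-map directory entry for one memory block (illustrative layout)."""
    state: str = "UNCACHED"   # assumed states: UNCACHED, SHARED, EXCLUSIVE
    sharers: int = 0          # bit i is set when cluster i holds a copy

    def add_sharer(self, cluster: int) -> None:
        self.sharers |= 1 << cluster

    def remove_sharer(self, cluster: int) -> None:
        self.sharers &= ~(1 << cluster)

    def sharer_list(self, num_clusters: int) -> list:
        return [c for c in range(num_clusters) if (self.sharers >> c) & 1]


def full_map_bits(num_clusters: int, num_blocks: int, state_bits: int = 2) -> int:
    """Directory size in bits: one presence bit per cluster per block, plus state."""
    return num_blocks * (num_clusters + state_bits)


entry = DirectoryEntry(state="SHARED")
entry.add_sharer(0)
entry.add_sharer(3)
print(entry.sharer_list(4))        # [0, 3]
print(full_map_bits(16, 1024))     # 18432
print(full_map_bits(64, 1024))     # 67584
```

The storage estimate shows why the organization matters for scalability: with a full map, the directory grows with the number of clusters for every block, whereas a list-based organization stores pointers instead of one bit per cluster.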

A DSM system can provide the shared memory abstraction in software, which is cost-efficient because nothing in the cluster hardware has to change. Some systems provide the abstraction in hardware, which costs more but performs better than a software-based DSM [4].

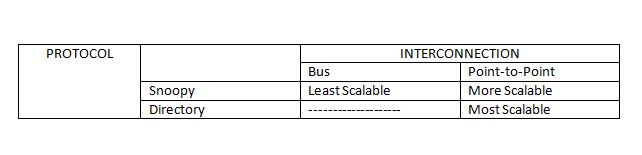

Why not use Bus based multiprocessing for the DSM?

The Distributed Shared Memory (DSM) architecture requires a shared memory space. Message passing over an interconnection network is the preferred mode of communication for maintaining coherence in a DSM, for the following reasons:

- Physically, a DSM is designed to let a large number of nodes interact with each other. With a bus-based system, the bus simply becomes too long to connect all the nodes of a DSM. In a bus-based multiprocessor with a small set of nodes sharing a common memory, communication latency is not noticeable. However, because the nodes of a bus-based system use snoopy protocols, clock synchronization is critical, and with more nodes and a longer bus, maintaining the clock frequency becomes a bigger challenge.

- Whatever cache coherence protocol is used, a larger number of nodes leads to a larger number of transactions on the bus. Because the bus is a shared channel for transferring cache blocks, an increase in bus traffic increases communication latency.

- It is preferable to build a DSM on a non-uniform memory access (NUMA) architecture, because memory can then be addressed globally while being placed locally at each node.

For the above mentioned reasons, a message passing model is used for communication between nodes. A message passing model augmented with a point to point connection between nodes is also preferable. The following section explains why.

Not Bus based, so where does the coherence happen?

As explored in the previous section, a monolithic bus is not a good fit for communication in a DSM. Instead, short wires are used for point-to-point interconnections. Because the wires are short, they can be clocked at a higher frequency, which in turn allows higher bandwidth.

As illustrated in the figure, a point-to-point interconnection is coupled with a directory-based protocol in a DSM. Since the memory is distributed, a directory can be maintained that records which caches hold a copy of each memory block. Each time a cache in a node performs a read or a write, then depending on whether it hits or misses, the node contacts the directory and sends updates or invalidation signals only to the nodes that actually hold the block. This avoids the traffic of broadcasting to every node.
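The following Python sketch illustrates the idea of contacting only the nodes that hold a block. It is a simplified model under stated assumptions: a single central directory, an invalidation-based protocol, and made-up message names (`request`, `fetch`, `invalidate`, `data`). Real protocols have more states and message types.

```python
class Directory:
    """Toy directory: tracks which nodes cache each block (assumed design)."""

    def __init__(self, num_nodes):
        self.num_nodes = num_nodes
        self.sharers = {}   # block -> set of node ids with a read copy
        self.owner = {}     # block -> node id holding a modified copy

    def read_miss(self, node, block):
        msgs = [("request", node, "dir")]
        owner = self.owner.pop(block, None)
        if owner is not None and owner != node:
            # The modified copy must be fetched back; the owner keeps a shared copy.
            msgs.append(("fetch", "dir", owner))
            self.sharers.setdefault(block, set()).add(owner)
        self.sharers.setdefault(block, set()).add(node)
        msgs.append(("data", "dir", node))
        return msgs

    def write_miss(self, node, block):
        msgs = [("request", node, "dir")]
        targets = set(self.sharers.get(block, set()))
        if block in self.owner:
            targets.add(self.owner[block])
        targets.discard(node)
        for target in sorted(targets):
            # Only nodes recorded in the directory are contacted.
            msgs.append(("invalidate", "dir", target))
        self.sharers[block] = set()
        self.owner[block] = node
        msgs.append(("data", "dir", node))
        return msgs


directory = Directory(num_nodes=8)
directory.read_miss(1, block=0)
directory.read_miss(2, block=0)
messages = directory.write_miss(5, block=0)
invalidations = [m for m in messages if m[0] == "invalidate"]
print(len(invalidations), "invalidations instead of", directory.num_nodes - 1)
```

In this run, the write by node 5 causes two invalidations (to nodes 1 and 2), where a broadcast scheme on the same eight-node system would have to reach all seven other nodes.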

Hardware DSM

Achieving DSM in hardware requires special network interfaces and cache coherence circuits. Three prominent groups of hardware DSM systems are of interest:

- Cache Coherent Non Uniform Memory Architecture (CC-NUMA)

- Cache Only Memory Architecture (COMA)

- Reflective Memory systems

CC-NUMA, the most important of the three, is discussed here.

CC-NUMA

A CC-NUMA architecture distributes its shared virtual address space among the local memories of the clusters. Both the local processor and the other processors in the network can access each memory, but with different access times, since the architecture is non-uniform. "The address space of the architecture maps onto the local memories of each cluster in a NUMA fashion[5]". The cache in each cluster is used to replicate blocks of memory that reside in remote hosts.

There is a fixed physical memory location for each data block in CC-NUMA. A directory-based architecture is used in which each cluster holds an equal part of the system's shared memory; this is called the home property. Various coherence protocols and memory consistency models, such as weak consistency and processor consistency, are implemented in CC-NUMA systems. CC-NUMA is simple and cost-effective, but when multiple processors want to access the same block in succession, its performance can drop sharply [6].
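As a rough sketch of the home property, the function below maps an address to its home cluster when the shared memory is split into equal contiguous parts. The contiguous split and the latency figures are assumptions for illustration only; actual systems may interleave memory differently and have different access times.

```python
LOCAL_NS = 100    # assumed local access time, illustrative only
REMOTE_NS = 400   # assumed remote access time, illustrative only


def home_cluster(address, num_clusters, total_memory):
    """Return the cluster whose local memory holds `address`."""
    per_cluster = total_memory // num_clusters   # equal share per cluster
    return address // per_cluster


def access_time(requester, address, num_clusters, total_memory):
    """Non-uniform access: cheap at the home cluster, costly elsewhere."""
    home = home_cluster(address, num_clusters, total_memory)
    return LOCAL_NS if home == requester else REMOTE_NS


print(home_cluster(1024, num_clusters=4, total_memory=4096))               # 1
print(access_time(1, 1024, num_clusters=4, total_memory=4096))             # 100
print(access_time(0, 1024, num_clusters=4, total_memory=4096))             # 400
```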

Hardware DSM fares better than software DSM in terms of performance, but the cost of the hardware is very high, so software DSM implementations are being modified to perform better. The rest of this article therefore deals in detail with software DSM: its performance and measures for improving it.

Software components of a DSM

The software components form a critical part of a DSM. Robust software design decisions help the DSM system work efficiently. What makes a good software component for a DSM depends on factors such as portability across operating systems, efficient memory allocation, and coherence protocols that keep data up to date. As we shall see, in modern DSMs the software needs to cooperate with the underlying hardware to ensure good system performance.

In a distributed shared memory system, it is imperative to have a software component that eases the burden on the programmer of writing message-passing programs. The software distributed shared memory layer is responsible for actually providing the abstraction of a shared memory on top of the hardware.

In the early days of DSM development, a so-called "coarse-grained" approach was implemented, which used virtual-memory pages as the unit of coherence. Page faults triggered invalidations and updates. The challenge with this model is that synchronization within a page causes great overhead.

The coarse-grained approach is mostly a VM-based approach, while the fine-grained approach is mostly an instrumentation-based approach. This section looks at how the fine-grained and coarse-grained approaches perform on similar underlying hardware. A good example of the fine-grained approach is Shasta, while an example of the coarse-grained approach is Cashmere.

Shasta has been shown to perform better in applications that synchronize at a fine granularity, while Cashmere, predictably, performs better in applications with a coarse-grained synchronization structure. When sharing occurs at granularities larger than a cache line, Shasta performs better, because with its finer coherence granularity more of the data it transfers is actually useful. If large amounts of private data need to be synchronized, the coarser Cashmere is preferred. In the case of false sharing, Cashmere, which allows protocol operations to be aggregated, performs better. False sharing refers to misses that occur because multiple threads access different words that reside in the same coherence block. In particular, for applications with a high level of false write-write sharing, the page-based protocol performs better, because coherence within a node is managed by the hardware itself, which removes the software overhead otherwise needed to maintain it.
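The effect of coherence granularity on false sharing can be shown with a small Python sketch. The function and the access pattern below are hypothetical, and the 64-byte and 8192-byte block sizes are assumed stand-ins for a fine-grained line and a virtual-memory page.

```python
def falsely_shared_blocks(writes, block_size):
    """Return the blocks written by several threads that never touch the same address.

    `writes` is an iterable of (thread_id, byte_address) pairs.
    """
    by_block = {}
    for thread, address in writes:
        block = address // block_size
        by_block.setdefault(block, {}).setdefault(address, set()).add(thread)

    result = set()
    for block, addresses in by_block.items():
        threads = set().union(*addresses.values())
        truly_shared = any(len(t) > 1 for t in addresses.values())
        if len(threads) > 1 and not truly_shared:
            result.add(block)
    return result


# Four threads, each writing only its own word, 256 bytes apart.
writes = [(t, t * 256) for t in range(4)]
print(falsely_shared_blocks(writes, block_size=64))     # set()
print(falsely_shared_blocks(writes, block_size=8192))   # {0}
```

With 64-byte blocks every thread works in its own block, so no coherence traffic is needed. With 8192-byte blocks all four words land in block 0, and the threads interfere even though they never touch the same data.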

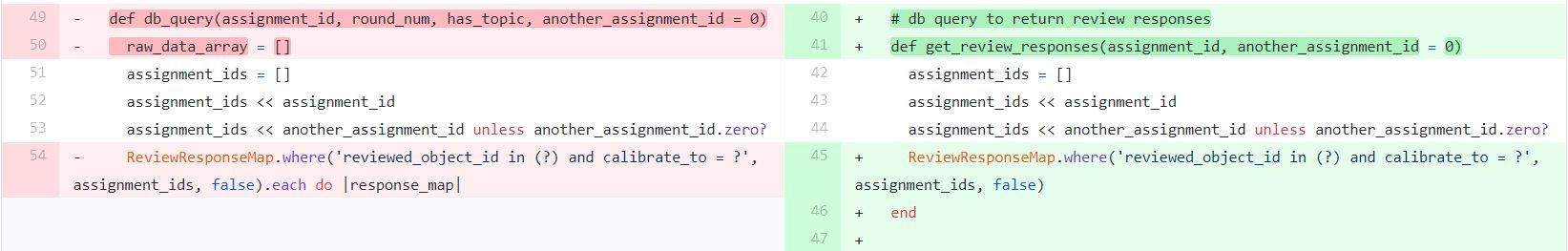

Shasta uses an interesting approach for inter- and intra-node communication. First, it uses inline checks in software to determine whether a miss has occurred, and processes the misses in software. The shared address space is divided into blocks, whose size can differ between systems. The blocks are further divided into lines, and the state of each line is kept in a state table. A miss check takes a mere 7 instructions. The stack and memory local to a thread are not checked, because they are not shared among nodes. The checks are further optimized by aggregating the checks for nearby blocks.
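The check described above can be sketched as follows. This is not Shasta's actual code, which is a short instruction sequence inserted into the executable; it is a Python model of the same logic, and the line size, state names and method names are all assumptions.

```python
LINE_SIZE = 64                       # assumed line size in bytes
INVALID, SHARED, EXCLUSIVE = 0, 1, 2


class SharedRegion:
    """Shared address range with one state-table entry per line (hypothetical)."""

    def __init__(self, base, size):
        self.base = base
        self.size = size
        self.state_table = [INVALID] * (size // LINE_SIZE)
        self.misses = 0

    def check_store(self, address):
        """Check run before a store; returns True if a miss was handled."""
        if not (self.base <= address < self.base + self.size):
            return False             # stack or thread-local data: nothing to do
        line = (address - self.base) // LINE_SIZE
        if self.state_table[line] == EXCLUSIVE:
            return False             # common case: the store can proceed
        self.handle_store_miss(line)
        return True

    def check_store_range(self, address, nbytes):
        """Aggregated check for nearby stores; the range must lie in the region."""
        first = (address - self.base) // LINE_SIZE
        last = (address + nbytes - 1 - self.base) // LINE_SIZE
        missed = [l for l in range(first, last + 1)
                  if self.state_table[l] != EXCLUSIVE]
        for line in missed:
            self.handle_store_miss(line)
        return len(missed)

    def handle_store_miss(self, line):
        # Placeholder for the software protocol that fetches the line.
        self.misses += 1
        self.state_table[line] = EXCLUSIVE


region = SharedRegion(base=0x10000, size=4096)
print(region.check_store(0x10008))             # True: first store to line 0 misses
print(region.check_store(0x10010))             # False: line 0 is now exclusive
print(region.check_store(0x50))                # False: address is not shared
print(region.check_store_range(0x10000, 256))  # 3: lines 1 to 3 miss, line 0 hits
```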

As mentioned in the previous paragraph, Shasta also optimizes communication between and within nodes. Within a node, race conditions are avoided by locking blocks individually during coherence protocol operations. Instead of a bus-based mechanism, messages are used to communicate between nodes. At a release, a process must also wait until all pending operations on the node are complete; if the program already has provisions for handling race conditions, this wait can be removed. Messages from other processors are detected through polling. No interrupt-based mechanism is used for message handling because of the high cost associated with interrupts. This way, the processor remains free to do other tasks. Polling is done whenever a processor waits for messages, and the polling location is common to all processors within a node, which makes polling very efficient. Just three instructions are needed to poll.[7]
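A minimal Python sketch of polling on a shared location is given below. The `Node` class, the message tuples and the `max_polls` limit are hypothetical; the point is only that a waiting processor checks one common inbox and services whatever requests arrive in the meantime.

```python
from collections import deque


class Node:
    """One node; `inbox` is the single polling location shared by its processors."""

    def __init__(self):
        self.inbox = deque()
        self.serviced = []      # (cpu, message) pairs handled while polling

    def deliver(self, message):
        self.inbox.append(message)

    def poll(self):
        """Cheap check: return the next message, or None if nothing is pending."""
        if not self.inbox:
            return None
        return self.inbox.popleft()


def wait_for_reply(node, cpu, reply_id, max_polls=1000):
    """Poll until the expected reply arrives, servicing other messages meanwhile."""
    for _ in range(max_polls):
        message = node.poll()
        if message is None:
            continue
        if message == ("reply", reply_id):
            return True
        node.serviced.append((cpu, message))
    return False


node = Node()
node.deliver(("invalidate", 7))
node.deliver(("reply", 1))
print(wait_for_reply(node, cpu=0, reply_id=1))   # True
print(node.serviced)                             # [(0, ('invalidate', 7))]
```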

Performance of DSM

Memory Consistency Model

A memory consistency model for a shared memory space specifies the order in which memory accesses may appear to occur. The choice of memory consistency model affects the performance of the system. In a DSM, a strict model makes programming easier; an example of a strict memory consistency model is sequential consistency. Under sequential consistency, the result of any execution is the same as if the instructions of all processors were executed in some sequential order, with the instructions of each processor appearing in the order specified by its program. These properties reduce the performance of parallel programs, and the overhead is larger for programs with a higher degree of parallelism. A coarser coherence granularity also leads to false sharing, which is another source of overhead for software DSM.
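What sequential consistency permits can be checked by brute force on a tiny example. The Python sketch below enumerates every interleaving of two two-instruction programs that keeps each processor's program order, and collects the possible results. The two programs and the register names are an illustrative example, not taken from the references.

```python
def interleavings(a, b):
    """Yield every merge of lists a and b that preserves the order within each."""
    if not a:
        yield list(b)
        return
    if not b:
        yield list(a)
        return
    for rest in interleavings(a[1:], b):
        yield [a[0]] + rest
    for rest in interleavings(a, b[1:]):
        yield [b[0]] + rest


def run(schedule):
    """Execute one global order against a single shared memory."""
    memory = {"x": 0, "y": 0}
    registers = {}
    for op, variable, register in schedule:
        if op == "store":
            memory[variable] = 1
        else:
            registers[register] = memory[variable]
    return registers["r1"], registers["r2"]


P0 = [("store", "x", None), ("load", "y", "r1")]   # x = 1; r1 = y
P1 = [("store", "y", None), ("load", "x", "r2")]   # y = 1; r2 = x

outcomes = {run(s) for s in interleavings(P0, P1)}
print(sorted(outcomes))   # [(0, 1), (1, 0), (1, 1)]
```

The result (0, 0) never appears: under sequential consistency at least one of the stores must precede both loads. Guaranteeing this forces each processor to wait for its store to become visible before issuing its load, which is the kind of cost that relaxed models try to avoid.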

Improvement for Memory Consistency

A memory consistency model cannot be avoided, so many relaxed consistency models were developed with the goal of improving the performance of software DSM systems. Release consistency showed better performance than most other relaxed consistency models. The number of messages sent also affects the performance of a software DSM, because sending a message is more costly in a software DSM than in a hardware DSM. In a lazy implementation of release consistency, the messages are buffered instead of being sent as each write occurs, and are sent out as a single message, which also decreases the communication overhead. The modifications made by a processor are transmitted only to the processor that acquires the block, which decreases the amount of data sent as well as the number of messages.
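The message savings from buffering can be sketched in Python as follows. The class and method names are hypothetical, and the sketch assumes that the buffered modifications are handed over when another processor acquires; it models only the message count, not a full protocol.

```python
class LazyReleaseWriter:
    """Buffers writes locally and ships them in one message (assumed design)."""

    def __init__(self):
        self.pending = {}        # address -> latest value since the last transfer
        self.messages_sent = 0

    def write(self, address, value):
        self.pending[address] = value     # buffered; nothing is sent yet

    def on_acquire_by(self, acquirer):
        """Send all buffered modifications to the acquiring processor only."""
        if not self.pending:
            return None
        message = {"to": acquirer, "updates": dict(self.pending)}
        self.pending.clear()
        self.messages_sent += 1
        return message


writer = LazyReleaseWriter()
for i in range(10):
    writer.write(address=i % 3, value=i)   # 10 writes to 3 distinct addresses

message = writer.on_acquire_by("P2")
print(writer.messages_sent)        # 1
print(message["updates"])          # {0: 9, 1: 7, 2: 8}
```

Ten writes result in a single message carrying three values, sent to one processor. A scheme that sent a message for every write to every other processor would send far more messages and data for the same program.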

Performance Improvement

References

- Shared Memory System

- Distributed Memory system

- Distributed Shared Memory Systems

- Providing Hardware DSM Performance at Software DSM Cost

- Distributed Shared Memory: Concepts and Systems

- CC-NUMA

- Comparative Evaluation of Fine- and Coarse-Grain Software Distributed Shared Memory

- Fundamentals of Parallel Computer Architecture