6 Multi

No edit summary |

No edit summary |

||

| Line 31: | Line 31: | ||

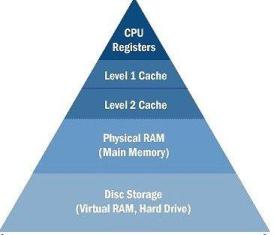

Most modern computers use the following memory hierarchy. The figure below shows an uni processor memory hierarchy & a recent dual core memory hierarchy (A multi core architecture having two cores is dual core processor) | Most modern computers use the following memory hierarchy. The figure below shows an uni processor memory hierarchy & a recent dual core memory hierarchy (A multi core architecture having two cores is dual core processor) | ||

[[ image: | [[ image:Picture4.jpg]] [[ image: multi.jpg]] | ||

Uni processor memory hierarchy Dual core memory hierarchy | Uni processor memory hierarchy Dual core memory hierarchy | ||

(ref 3) (ref 2) | (ref 3) (ref 2) | ||

Revision as of 20:17, 21 February 2010

References:

ref 1: http://www.buzzle.com/articles/basics-of-dual-core-process-computer.html

ref 2: Yan Solihin, textbook

ref 3: Rotenberg's lecture slides

ref 4: http://www.karbosguide.com/books/pcarchitecture/chapter10.htm

ref 5: http://www.real-knowledge.com/memory.htm

ref 6: http://www.pcguide.com/ref/mbsys/cache/funcMapping-c.html

ref 7: http://en.wikipedia.org/wiki/Very_Long_Instruction_Word

ref 8: http://en.wikipedia.org/wiki/CPU_cache#Multi-level_caches

ref 9: http://en.wikipedia.org/wiki/Multi-core_processor

Multi-core architecture:

The term 'multicore' refers to a processor with two or more independent cores. For example, a 'dual-core' processor contains 2 independent cores. The term 'architecture' means the design or layout of a particular idea. Hence 'multi-core architecture' simply means how multiple cores are integrated on a chip and laid out.

NUMA architectures: NUMA is an acronym for Non-Uniform Memory Access. It is one way of organizing a MIMD (Multiple Instruction, Multiple Data) machine and is also known as distributed shared memory. Each processor has its own cache and local memory. The processors are connected by a common interconnection network such as a crossbar, mesh, or hypercube. A processor reaches its own local memory faster than memory attached to another processor, which is why memory access is non-uniform.

A cache is a small, fast storage device that holds copies of data from a larger, slower memory.

Why were caches introduced? (ref 4) A CPU may run at a clock frequency of about 3 GHz, while common RAM runs at about 400 MHz. Every transfer between the CPU and RAM therefore costs many CPU cycles. Without a faster intermediate store, the CPU would sit idle much of the time waiting for data. Caches were introduced to hide this main-memory latency. They are small buffers that operate at much higher speeds than RAM, so data found in a cache can be accessed quickly.

The cache closest to the CPU is called the Level 1 cache (L1 cache), also known as the primary cache. Its size is typically between 8 KB and 128 KB. The next level is the L2 cache (secondary cache), typically between 256 KB and 1024 KB.

If the data the CPU is looking for is not in its registers, it looks in the L1 cache. If it is not found there, the CPU looks in the L2 cache, then in main memory, and finally in an external storage device. This leads to two new terms: cache hit and cache miss. If the requested data is found in a cache, the access is a cache hit; if it is not found, it is a cache miss. With multiple cache levels, hits and misses are counted per level: L1 cache hit/miss, L2 cache hit/miss, and so on.
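The lookup order above can be sketched in Python. This is a minimal model under stated assumptions:

- each level is a small fully associative LRU store of block numbers (real L1/L2 caches are set associative);
- the capacities of 2 and 4 blocks are hypothetical;
- `Level` and `access` are illustrative names, not part of any library.

The sketch counts hits and misses per level for a short trace.

```python
from collections import OrderedDict


class Level:
    """One cache level, modeled (hypothetically) as a fully associative LRU store."""

    def __init__(self, name, capacity_blocks):
        self.name = name
        self.capacity = capacity_blocks
        self.blocks = OrderedDict()  # block number -> None, least recently used first
        self.hits = 0
        self.misses = 0

    def lookup(self, block):
        if block in self.blocks:
            self.blocks.move_to_end(block)  # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        return False

    def fill(self, block):
        self.blocks[block] = None
        self.blocks.move_to_end(block)
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used block


def access(levels, block):
    """Search L1, then L2, ...; if every level misses, fetch from main memory.

    Returns the name of the level that supplied the block.
    """
    for i, level in enumerate(levels):
        if level.lookup(block):
            for upper in levels[:i]:  # copy the block into the faster levels that missed
                upper.fill(block)
            return level.name
    for level in levels:
        level.fill(block)
    return "memory"


l1, l2 = Level("L1", 2), Level("L2", 4)
results = [access([l1, l2], b) for b in [0, 1, 0, 2, 3, 0, 1]]
print(results)
print("L1 hits/misses:", l1.hits, l1.misses, "L2 hits/misses:", l2.hits, l2.misses)
```

L2 is only consulted on an L1 miss, so its hit and miss counts add up to the number of L1 misses.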

Most modern computers use the following memory hierarchy. The figure below shows a uniprocessor memory hierarchy and a recent dual-core memory hierarchy (a multi-core processor with two cores is called a dual-core processor).

[Figure (Picture4.jpg): uniprocessor memory hierarchy (ref 3) and dual-core memory hierarchy (ref 2)]

Earlier processors had a unified L1 cache, i.e., data and instructions were held in one cache. Suppose the instruction fetch unit wanted to fetch an instruction from the cache while the memory unit wanted to load from an address at the same time. The memory operation was given priority and the fetch unit stalled. Hence the L1 cache was split into an L1 data cache (L1-D) and an L1 instruction cache (L1-I), so that both accesses can proceed concurrently. Another advantage is that each cache needs fewer ports.

The L2 cache is still unified. As the latency gap between main memory and the fastest cache has grown, some processors have begun to add an L3 cache, while others have not. Hence the figure shows two configurations: one with L1 and L2 only, and one that adds L3. The L3 cache is roughly 4 MB to 32 MB in size.

In addition to these three levels of cache, some multiprocessors use:

1. The inclusion property
2. A victim cache
3. A translation lookaside buffer (TLB)

Inclusion property: the larger, lower-level cache (L2) contains all of the data held in the upper-level cache (L1). The advantage is that a block not found in the lower-level cache is guaranteed not to be in the upper-level cache either, which reduces the amount of checking. The disadvantage appears when the L2 cache is not much larger than L1. Much of L2 then holds only copies of L1 data and little new data, defeating the purpose of having another cache level.
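Here is a sketch of how an inclusive two-level hierarchy can keep the property. It assumes hypothetical fully associative LRU levels of 2 and 3 blocks. When L2 evicts a block, the same block is invalidated in L1 (back-invalidation).

The trace also shows a cost of inclusion. Block 0 is still in use in L1 but is thrown out because L2 chose it as a victim. Class and method names are illustrative.

```python
from collections import OrderedDict


class InclusiveHierarchy:
    """Two fully associative LRU levels (hypothetical model) where L2 holds every L1 block."""

    def __init__(self, l1_blocks, l2_blocks):
        self.l1, self.l2 = OrderedDict(), OrderedDict()  # block -> None, LRU first
        self.l1_cap, self.l2_cap = l1_blocks, l2_blocks

    @staticmethod
    def _insert(store, capacity, block):
        """Insert block as most recently used; return the evicted block, if any."""
        store[block] = None
        store.move_to_end(block)
        if len(store) > capacity:
            return store.popitem(last=False)[0]
        return None

    def access(self, block):
        if block in self.l1:
            self.l1.move_to_end(block)  # L1 hit: L2 never sees this access
            return "L1 hit"
        if block in self.l2:
            self.l2.move_to_end(block)
            result = "L2 hit"
        else:
            result = "miss"
            victim = self._insert(self.l2, self.l2_cap, block)
            if victim is not None:
                self.l1.pop(victim, None)  # back-invalidate to preserve inclusion
        self._insert(self.l1, self.l1_cap, block)  # an L1 victim is still held in L2
        return result

    def may_be_in_l1(self, block):
        # Absent from L2 implies absent from L1, so one L2 check can rule L1 out.
        return block in self.l2


h = InclusiveHierarchy(l1_blocks=2, l2_blocks=3)
results = [h.access(b) for b in [0, 1, 0, 2, 3]]
print(results, list(h.l1), list(h.l2))
```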

A victim cache is a small cache that holds blocks evicted from a CPU cache on replacement. When the main cache evicts a block, called the victim block, the victim cache stores it. On a main-cache miss, the victim cache is searched for recently evicted blocks. A hit in the victim cache means the main cache does not have to go to the next level of the memory hierarchy.
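The victim-cache behaviour can be sketched as follows. The sketch assumes a hypothetical 4-line direct-mapped main cache and a 2-entry victim cache with FIFO replacement.

Blocks 0, 4 and 8 all map to line 0, so without the victim cache every access in the trace would miss. On a victim-cache hit, the block is swapped back into the main cache and the displaced block becomes the new victim.

```python
from collections import OrderedDict


class DirectMappedWithVictim:
    """Direct-mapped main cache backed by a small fully associative victim cache.

    Hypothetical sizes; block numbers stand in for addresses.
    """

    def __init__(self, num_lines, victim_entries):
        self.lines = [None] * num_lines   # one block per line (direct mapped)
        self.victim = OrderedDict()       # evicted blocks, oldest first
        self.victim_entries = victim_entries

    def access(self, block):
        index = block % len(self.lines)
        if self.lines[index] == block:
            return "hit"
        evicted = self.lines[index]
        self.lines[index] = block
        result = "miss"
        if block in self.victim:
            del self.victim[block]        # victim-cache hit: move the block back
            result = "victim hit"
        if evicted is not None:
            self.victim[evicted] = None   # the displaced block becomes a victim block
            if len(self.victim) > self.victim_entries:
                self.victim.popitem(last=False)  # drop the oldest victim
        return result


cache = DirectMappedWithVictim(num_lines=4, victim_entries=2)
results = [cache.access(b) for b in [0, 4, 0, 4, 8, 0]]
print(results)
```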

The TLB (translation lookaside buffer) is a small hardware cache holding recently used virtual-to-physical address translations. It sits alongside the L1 instruction and data caches.
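Here is a minimal TLB sketch. It rests on three assumptions: 4 KB pages, a 2-entry LRU TLB, and a hypothetical page table stored as a Python dict. Real TLBs are hardware structures and page tables are usually multi-level.

A translation splits the virtual address into a virtual page number and an offset. Only a TLB miss consults the page table.

```python
from collections import OrderedDict

PAGE_SIZE = 4096  # assumed 4 KB pages


class TLB:
    """Tiny LRU TLB in front of a (hypothetical) single-level page table."""

    def __init__(self, entries, page_table):
        self.entries = entries
        self.page_table = page_table  # virtual page number -> physical frame number
        self.cache = OrderedDict()    # cached translations, least recently used first
        self.hits = self.misses = 0

    def translate(self, vaddr):
        vpn, offset = divmod(vaddr, PAGE_SIZE)
        if vpn in self.cache:
            self.cache.move_to_end(vpn)
            self.hits += 1
        else:
            self.misses += 1  # TLB miss: look the page up in the page table
            self.cache[vpn] = self.page_table[vpn]
            if len(self.cache) > self.entries:
                self.cache.popitem(last=False)
        return self.cache[vpn] * PAGE_SIZE + offset


tlb = TLB(entries=2, page_table={0: 7, 1: 3, 2: 9})
paddrs = [tlb.translate(v) for v in [0x0010, 0x1020, 0x0030, 0x2000]]
print([hex(p) for p in paddrs], tlb.hits, tlb.misses)
```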

Parameters of a cache:

1. Size: the amount of data the cache can store
2. Associativity: the number of blocks in a set
3. Block size: the number of bytes in a single block

A set is a row of the cache: the group of blocks (lines) into which a given memory block can be placed.

Placement Policy:

The placement policy is the first step in managing a cache. This decides where a block in memory can be placed in the cache. The placement policies are:

1. Direct mapped:

If each memory block has only one place it can appear in the cache, the cache is called direct mapped. It is also known as a one-way set-associative cache. (ref 6) Assume a block size of 8 bytes and 8 lines in the cache. A 32-bit main memory address is then broken down as:

TAG (26 bits) | INDEX (3 bits) | OFFSET (3 bits)

$\text{offset bits} = \log_2(\text{block size})$

$\text{index bits} = \log_2(\text{number of sets})$

$\text{tag bits} = 32 - \text{offset bits} - \text{index bits}$

Each line has its own tag associated with it. The index selects which row (line) of the cache to look at. To search for a word, compare the tag bits of the address with the tag stored in that line. If they match, the block is in the cache (a cache hit), and the offset selects the corresponding word from that line.
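The direct-mapped example (8-byte blocks, 8 lines, 32-bit addresses) can be sketched as below. The sample addresses are hypothetical.

0x1234 and 0x1274 share index 6 but have different tags, so they keep evicting each other. This is the contention listed as the main drawback of direct mapping.

```python
OFFSET_BITS = 3  # 8-byte blocks, as in the example above
INDEX_BITS = 3   # 8 lines
TAG_BITS = 32 - OFFSET_BITS - INDEX_BITS  # 26


def split(addr):
    """Break an address into (tag, index, offset) fields."""
    offset = addr & ((1 << OFFSET_BITS) - 1)
    index = (addr >> OFFSET_BITS) & ((1 << INDEX_BITS) - 1)
    tag = addr >> (OFFSET_BITS + INDEX_BITS)
    return tag, index, offset


tags = [None] * (1 << INDEX_BITS)  # one stored tag per line (None = empty)


def access(addr):
    tag, index, _ = split(addr)
    if tags[index] == tag:
        return "hit"
    tags[index] = tag  # miss: fetch the block, replacing whatever the line held
    return "miss"


print(TAG_BITS, split(0x1234))
results = [access(a) for a in [0x1234, 0x1230, 0x1274, 0x1234]]
print(results)
```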

2. Fully Associative:

If any line can store the contents of any memory block, the cache is called fully associative. Hence no index bits are required. With the same 8-byte blocks, a 32-bit main memory address is broken down as:

TAG (29 bits) | OFFSET (3 bits)

The tag store of a fully associative cache is a content-addressable memory (CAM). To search for a word, compare the tag bits of the address with the tags of all lines in the cache simultaneously. If any one matches, the word is in the cache, and the offset selects the corresponding word from that line.
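Here is a fully associative version with the same 29/3 split. It assumes 8 lines and FIFO replacement; the replacement choice is an assumption for the sketch.

The membership test over stored tags stands in for the parallel CAM comparison. The two addresses that conflict in a direct-mapped cache of the same size can now coexist.

```python
OFFSET_BITS = 3  # 8-byte blocks, matching the 29/3 split above
NUM_LINES = 8


class FullyAssociativeCache:
    """Any block may live in any line; a lookup compares against every stored tag."""

    def __init__(self):
        self.tags = []  # stored tags, oldest first (FIFO replacement, an assumption)

    def access(self, addr):
        tag = addr >> OFFSET_BITS  # no index field: all upper 29 bits are tag
        if tag in self.tags:       # sequential stand-in for the parallel CAM compare
            return "hit"
        self.tags.append(tag)
        if len(self.tags) > NUM_LINES:
            self.tags.pop(0)       # evict the oldest block
        return "miss"


cache = FullyAssociativeCache()
results = [cache.access(a) for a in [0x1234, 0x1274, 0x1234, 0x1274]]
print(results)
```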

3. Set Associative:

| | Direct mapped | Fully associative |
|---|---|---|
| Advantages | Fast lookup, less hardware | Minimal contention for lines |
| Disadvantages | Contention for cache lines | Maximum hardware and cost |

Hence a compromise between the fully associative and direct-mapped techniques is made, known as set-associative mapping. Most current processors use it. Here the cache is divided into s sets, where s is a power of 2. The associativity is given by:

$\text{associativity} = \dfrac{\text{cache size}}{\text{block size} \times \text{number of sets}}$

For an associativity of n, the cache is n-way set associative. The following diagram shows 2-way set-associative mapping.

[Figure: 2-way set-associative mapping (ref 3)]

Compared with a direct-mapped cache of the same size, the 2-way cache has half as many sets. The index field is therefore 1 bit shorter, and the tag 1 bit longer. The index bits select a particular set. To search for a word, compare the tag bits of the address with the tags of all lines in that set (here 2). If the tag matches any one of them, it is a cache hit. Otherwise it is a cache miss, and the word has to be fetched from the next level of the hierarchy (ultimately main memory).
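Here is a 2-way set-associative sketch with the same total capacity (8 lines of 8 bytes), assuming LRU replacement within each set. There are 4 sets, so the index is 2 bits, one fewer than the direct-mapped case. The two addresses that conflict under direct mapping land in the same set here but occupy different ways.

```python
OFFSET_BITS = 3               # 8-byte blocks
NUM_LINES = 8                 # same total size as the direct-mapped example
WAYS = 2
NUM_SETS = NUM_LINES // WAYS  # 4 sets
INDEX_BITS = NUM_SETS.bit_length() - 1  # 2 bits

sets = [[] for _ in range(NUM_SETS)]  # each set holds up to WAYS tags, LRU first


def access(addr):
    index = (addr >> OFFSET_BITS) & (NUM_SETS - 1)
    tag = addr >> (OFFSET_BITS + INDEX_BITS)
    ways = sets[index]
    if tag in ways:        # compare against every way of the selected set
        ways.remove(tag)
        ways.append(tag)   # move to the most recently used position
        return "hit"
    if len(ways) == WAYS:
        ways.pop(0)        # evict the least recently used way
    ways.append(tag)
    return "miss"


results = [access(a) for a in [0x1234, 0x1274, 0x1234, 0x1274]]
print(INDEX_BITS, results)
```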

Conceptually, direct-mapped and fully associative caches are just special cases of the n-way set-associative cache. If n = 1, it becomes a 1-way set-associative cache, i.e., there is only one line per set, which is the same as a direct-mapped cache. On the other hand, if n equals the number of lines in the cache, there is only one set, containing all of the cache lines. Every memory block maps to that set, which gives a fully associative cache.
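The special cases can be checked with the associativity formula rearranged as sets = cache size / (block size × associativity). This sketch assumes the small 64-byte cache used in the examples above (8 lines of 8 bytes) and 32-bit addresses; `field_widths` is a hypothetical helper.

- n = 1 reproduces the direct-mapped 26/3/3 split.
- n = 8 reproduces the fully associative 29/3 split.

```python
import math


def field_widths(cache_bytes, block_bytes, ways, addr_bits=32):
    """Return (tag, index, offset) widths using sets = size / (block size * ways)."""
    num_sets = cache_bytes // (block_bytes * ways)
    offset = int(math.log2(block_bytes))
    index = int(math.log2(num_sets))
    return addr_bits - index - offset, index, offset


# 64-byte cache with 8-byte blocks = 8 lines
for ways in (1, 2, 4, 8):
    print(ways, field_widths(64, 8, ways))
```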

Replacement Policy:

This decides which block is evicted to make space for a new block. Common replacement policies are LRU, pseudo-LRU, FIFO and random. The most commonly used is LRU (least recently used). It works as follows:

1. Keep a small counter per block in a set. Each counter has log2(associativity) bits.
2. On a cache hit, set the referenced block's counter to 0, marking it as the most recently used one. Increment the counters of the other blocks whose counters are less than the referenced block's old counter value.
3. On a cache miss, evict the block with the highest counter value (associativity - 1). Give the newly allocated block a counter value of 0 and increment the counters of all other blocks.

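Example: the trace A, B, C, E, F accesses a single 3-way set. Counters range from 0 to 2, and every access is a miss. Counter values after each access:

| Access | Counters after access (block:counter) | Evicted |
|---|---|---|
| A | A:0 | - |
| B | B:0, A:1 | - |
| C | C:0, B:1, A:2 | - |
| E | E:0, C:1, B:2 | A |
| F | F:0, E:1, C:2 | B |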

When E arrived, block A had the highest count (here 2), so it was evicted as the least recently used block, and the other counters were incremented accordingly. Similarly, when F arrived, block B was evicted.
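The three counter rules above can be sketched in Python for a single set; `lru_counters` is an illustrative name. Replaying the trace A, B, C, E, F on a 3-way set reproduces the table: A and then B are evicted, and the final counters are C:2, E:1, F:0.

```python
def lru_counters(trace, ways):
    """Counter-based LRU for one set, following the three rules above.

    Returns the final {block: counter} map and the list of evicted blocks.
    """
    counters = {}  # block -> age counter (0 = most recently used)
    evicted = []
    for block in trace:
        if block in counters:  # hit
            old = counters[block]
            for other in counters:
                if counters[other] < old:
                    counters[other] += 1
            counters[block] = 0
        else:  # miss
            if len(counters) == ways:
                victim = max(counters, key=counters.get)  # counter == ways - 1
                del counters[victim]
                evicted.append(victim)
            for other in counters:
                counters[other] += 1
            counters[block] = 0
    return counters, evicted


final, evicted = lru_counters("ABCEF", ways=3)
print(final, evicted)
```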

The FIFO policy simply evicts the block that was brought into the cache earliest, regardless of how recently it was used.
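Here is a small sketch contrasting FIFO with LRU on one hypothetical 3-way set. In the trace A, B, C, A, E, block A is reused before E arrives. LRU refreshes A on that hit and evicts B. FIFO ignores the hit and evicts A, the oldest arrival.

```python
from collections import OrderedDict


def first_victim(trace, ways, policy):
    """Return the first block evicted from one set under 'lru' or 'fifo'."""
    blocks = OrderedDict()  # front = next candidate for eviction
    for block in trace:
        if block in blocks:
            if policy == "lru":
                blocks.move_to_end(block)  # LRU refreshes on a hit; FIFO does not
            continue
        if len(blocks) == ways:
            victim, _ = blocks.popitem(last=False)
            return victim
        blocks[block] = None
    return None


trace = "ABCAE"
print(first_victim(trace, 3, "lru"), first_victim(trace, 3, "fifo"))
```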

Write Policy:

1. Write through: whenever a value is written in the cache, it is immediately written to the next level of the memory hierarchy as well. The main advantage is that both levels hold the most recent value at all times, so no dirty bit is required. The disadvantage is that every write goes to the next level, so write-through consumes a lot of bandwidth.

A bit stored in the cache can flip its value when struck by an alpha particle, causing a soft error, and the value in the cache is lost. Because the next level holds the same up-to-date value, it is safe to discard the corrupted block and re-fetch it. So error detection alone is enough.

2. Write back: the next level of the memory hierarchy is not updated when a value is written in the cache; it is updated only when the block is evicted. To track which blocks hold a newer value than the next level, a dirty bit is associated with each cache block. A write to the block sets its dirty bit to 1. If the block is later evicted, the set dirty bit causes it to be written back to the next level (ultimately main memory). When a new block is brought into the cache, its dirty bit is cleared to 0.

Because the next level does not hold the updated value of a dirty block, a corrupted block cannot simply be re-fetched. So error correction, not just detection, is necessary.

Thus, in many processors the L1 cache uses a write-through (WT) policy and the L2 cache uses a write-back (WB) policy.
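The bandwidth difference can be sketched by counting how many write operations reach the next level. In this simplified model, a cached block is written repeatedly and then evicted once; `next_level_writes` is a hypothetical name. It counts operations, not bytes: a write-back transfers a whole block, while a write-through transfers only the written word.

```python
def next_level_writes(writes, policy):
    """Count writes reaching the next level for stores that all hit one cached block.

    Assumes the block is already in the cache and is evicted once after the stores.
    """
    dirty = False
    traffic = 0
    for _ in range(writes):
        if policy == "write-through":
            traffic += 1  # every store is propagated immediately
        else:             # write-back
            dirty = True  # only mark the block as modified
    if policy == "write-back" and dirty:
        traffic += 1      # the dirty block is written back once, on eviction
    return traffic


print(next_level_writes(10, "write-through"), next_level_writes(10, "write-back"))
```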

Write allocate policy: on a write miss, the block is first fetched into the cache and then written. A write-back cache generally uses write allocate.

Write no-allocate policy: on a write miss, the write is propagated to the next level of the memory hierarchy, and the block is not brought into the cache. A write-through cache generally uses write no-allocate.
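Here is a minimal sketch of the two write-miss policies. It assumes the cache is modeled as a set of block numbers, and block 42 is an arbitrary example. After a write miss, a read of the same block hits under write allocate but misses under write no-allocate.

```python
def write_then_read(allocate_on_write_miss):
    """Write to a block that is not cached, then read it back; report the read outcome."""
    cache = set()  # hypothetical model: the set of cached block numbers
    block = 42     # arbitrary example block
    # The write misses because the cache starts empty.
    if allocate_on_write_miss:
        cache.add(block)  # write allocate: fetch the block, then write into it
    # With write no-allocate, the write goes only to the next level.
    return "hit" if block in cache else "miss"


print(write_then_read(True), write_then_read(False))
```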

Recent multi-core architectures:

Cores in multi-core systems may implement architectures such as superscalar, VLIW, vector processing, SIMD, or multithreading.

A superscalar architecture exploits instruction-level parallelism (ILP) within a single processor: a superscalar processor executes more than one instruction during a clock cycle.

Very long instruction word (VLIW) refers to a CPU architecture designed to take advantage of instruction-level parallelism (ILP) (ref 7). Rather than relying on out-of-order execution hardware, a VLIW processor relies on the compiler to decide which operations execute in parallel. VLIW CPUs offer significant computational power with less hardware complexity (but greater compiler complexity) than most superscalar CPUs. One VLIW instruction encodes multiple operations; specifically, one instruction encodes at least one operation for each execution unit of the device.

Simultaneous multithreading (SMT) is a technique for improving the overall efficiency of superscalar CPUs with hardware multithreading. SMT permits multiple independent threads of execution to make better use of the resources provided by modern processor architectures.

1. Intel Clovertown

Processor Name: Intel Xeon

Frequency: 2.66 GHz

L1 D cache: 4*32 KB

L1 I cache: 4*32 KB

L2 cache: 2*4096 KB

2 processors, each having 4 cores and 4 threads.

| Codename | Cores (process) | Release |
|---|---|---|
| Allendale | dual (65 nm) | Jan 2007 |
| Wolfdale | dual (45 nm) | Feb 2008 |
| Kentsfield | quad (65 nm) | Jan 2007 |
| Yorkfield | quad (45 nm) | Mar 2008 |
| Tigerton | dual (65 nm) | Sep 2007 |
| Dunnington | quad (45 nm) | Sep 2008 |
| Dunnington | six (45 nm) | Sep 2008 |
| Wolfdale DP | dual (65 nm) | Nov 2007 |
| Clovertown | quad (65 nm) | Nov 2006 |
| Harpertown | quad (45 nm) | Nov 2007 |
| Nehalem-EP | dual/quad (45 nm) | Mar 2009 |
| Bloomfield | quad (45 nm) | Mar 2009 |

2. Intel Xeon Wolfdale DP (2007 to present): 2 cores, L2: 6 MB

3. Intel Xeon 3200 series (7 Jan 2007): quad-core. The 2x2 "quad-core" (dual-die dual-core) comprised two separate dual-core dies next to each other in one CPU package. L2: 2*4 MB

4. Intel Xeon Harpertown (Nov 2007): 4 cores, clock 2 to 3.4 GHz, L2: 2*6 MB

5. Intel Xeon Dunnington (15 Sep 2008): 4 or 6 cores, L2: 3*3 MB, L3: 16 MB

6. Intel Xeon Gainestown (Dec 2008): 4 cores, L2: 4*256 KB, L3: 8 MB

7. Intel Xeon Jasper Forest (2010): 4 cores, L2: 4*256 KB, L3: 8 MB

8. Intel Xeon Beckton (2010): 8 cores, L2: 8*256 KB, L3: 24 MB

9. Intel Celeron M, Merom-L (2008): L1: 64 KB, L2: 1 MB integrated

10. Intel Core i5 Lynnfield (45 nm, Sep 8, 2009): L3: 4 MB

11. Intel Core i7 Bloomfield (45 nm): L2: 256 KB, L3: 8 MB

12. Intel Celeron Conroe-L (2007): L1 D: 32 KB, L1 I: 32 KB, L2: 1024 KB

13. Intel Merom/Conroe (Mar 2009): L2: 4 MiB

14. Intel Penryn, Wolfdale (Mar 2008): L2: 6 MiB

15. Intel Penryn QC (Core 2 Quad): 4 cores, L2: 6 to 12 MB

16. Intel Penryn XE (Core 2 Extreme): L2: 12 MiB

17. AMD Barcelona (Aug to Sep 2007): 4 cores, L2: 512 KB, L3: 2 MB shared

18. IBM Cell: 9 cores, 256 KB local store

19. AMD Phenom: 4 cores, L2 and L3 caches

20. Intel i7: 8 cores, L3: 8 MB

AMD plans:

• Prefetch directly into the L1 cache, as opposed to the L2 cache in the K8 family.

• A 32-way set-associative L3 victim cache of at least 2 MB, shared between the processing cores on a single die (each core has 512 KB of independent, exclusive L2 cache), with a sharing-aware replacement policy.

• An extensible L3 cache design, with 6 MB planned for the 45 nm process node, in chips codenamed Shanghai.

21. AMD Phenom (Nov 19, 2007 and Mar 2008): 4 cores; L1 cache: 64 KB + 64 KB (data + instructions) per core; L2 cache: 512 KB per core, full-speed; L3 cache: 2 MB shared between all cores

22. AMD Toliman (April 23, 2008): three AMD K10 cores; L1 cache: 64 KB data and 64 KB instruction cache per core; L2 cache: 512 KB per core, full-speed; L3 cache: 2 MB shared between all cores

23. AMD Phenom II, Deneb (45 nm SOI with immersion lithography, 8 January 2009): four AMD K10 cores; L1 cache: 64 KB + 64 KB (data + instructions) per core; L2 cache: 512 KB per core, full-speed; L3 cache: 6 MB shared between all cores; clock: 2.5 GHz

24. AMD Callisto (45 nm SOI with immersion lithography, 1 June 2009, C2 stepping): two AMD K10 cores, using a chip-harvesting technique with two cores disabled; L1 cache: 64 KB + 64 KB (data + instructions) per core; L2 cache: 512 KB per core, full-speed; L3 cache: 6 MB shared between all cores; clock rate: 3000 to 3100 MHz

25. AMD Athlon II, Regor (45 nm SOI with immersion lithography, June 2009): two AMD K10 cores; L1 cache: 64 KB + 64 KB (data + instructions) per core; L2 cache: 1024 KB per core, full-speed

26. AMD Athlon II, Propus (45 nm SOI with immersion lithography, September 2009): four AMD K10 cores; L1 cache: 64 KB + 64 KB (data + instructions) per core; L2 cache: 512 KB per core, full-speed

27. In March 2007 IBM announced that the 65 nm version of Cell BE i

28. In February 2008, IBM announced that it will begin to fabricate Cell

29. In May 2008, IBM introduced the high-performance double-precision floating-point version of the Cell processor, the PowerXCell 8i.

30. In May 2008, an Opteron- and PowerXCell 8i-based supercomputer, the IBM Roadrunner system, became the world's first system to achieve one petaFLOPS, and was the fastest computer in the world until fall 2009.

31. Azul Systems released the Vega 3 7300 Series in May 2008. The 7300 series contains up to 864 processing cores with 768 GB of memory.

32. Azul Systems released the Vega 2 7200 Series in June 2007. The 7200 series contains up to 768 processing cores on 16 processor chips with 768 GB of memory. Azul designed the 48-core Vega 2 processor chip. Taiwan Semiconductor Manufacturing Company (TSMC) fabricated the Vega 2 processor.

Items 33 to 46 are taken from http://en.wikipedia.org/wiki/Power_Architecture.

33. In April 2007, Freescale and IPextreme opened up a licensing program for Freescale's PowerPC e200 core.

34. In May 2007, IBM launched its POWER6 high-end microprocessor at speeds up to 5.0 GHz, doubling the performance of the previous POWER5. The POWER6 added AltiVec to the POWER series and an FPU supporting decimal arithmetic. The same day, AMCC announced its Titan high-end embedded processor, reaching 2 GHz while consuming very little power. It uses innovative logic design from Intrinsity and will be available in 2008.

35. The members of Power.org finalized the Power ISA v.2.04 specification in June 2007. Improvements are mainly focused on server applications and virtualization.

36. At the Power Architecture Developer Conference in September 2007, drafts of Power ISA v.2.05 and the ePAPR specification were shown, and a Linux-based reference design based on the PowerPC 970MP was revealed.

37. The Power ISA v.2.05 specification was released in December 2007.

38. In April 2008, IBM rebranded its Power Architecture based hardware, System p and System i, as "Power Systems". At the same time it rebranded the i5/OS operating system as "IBM i".

39. On May 25, 2008, IBM was the first to break the 1 petaflops barrier with the Roadrunner supercomputer. In June 2008, Roadrunner entered the TOP500 list of the fastest computers in the world in first place, replacing BlueGene/L, which had held that position since November 2004.

40. On June 16, 2008, Freescale announced the QorIQ families P1, P2, P3, P4 and P5, the evolution of PowerQUICC, featuring the eight-core P4080.

41. In September 2008, the POWER7-based supercomputer Blue Waters got the green light. At a cost of $208 million, it will contain 200,000 processors, bringing multi-petaflops performance in 2010-2011.

42. In December 2008, the ePAPR v.1.0 specification for embedded Power Architecture based computers was finalized.

43. The Power ISA v.2.06 specification was released in February 2009.

44. In July 2009, Mentor Graphics enabled the Android mobile operating system on Freescale's QorIQ and PowerQUICC III platforms.

45. At the ISSCC 2010 conference in February 2010, IBM released the POWER7 processor and revealed the PowerPC A2 "wire-speed processor". Both are massively multicore, multithreaded, server-oriented processors comprising over 1 billion transistors each.

46. According to the June 2008 TOP500 list, the third and sixth fastest supercomputers in the world, and 22 of the 50 fastest supercomputers, use IBM's technologies based on Power Architecture. Of the top ten, five use Power Architecture processors as computing elements and one uses them as communications processors.

47. NVIDIA: the GeForce 9 Series is the ninth generation of NVIDIA's GeForce series of graphics processing units, the first of which was released on February 21, 2008.

48. NVIDIA GeForce G100 (March 10, 2009)

49. Sun Microsystems' UltraSPARC T2 microprocessor is a multithreading, multi-core CPU. It is a member of the SPARC family and the successor to the UltraSPARC T1. The chip is sometimes referred to by its codename, Niagara 2. Sun started selling servers with the T2 processor in October 2007. It has eight CPU cores, and each core can handle eight threads concurrently. The L2 cache size increased to 4 MB (8 banks, 16-way associative) from 3 MB.

50. On April 9, 2008, Sun announced the UltraSPARC T2 Plus.

51. TILE64 (2007) is a multicore processor manufactured by Tilera. It consists of a mesh network of 64 "tiles". Each tile houses a general-purpose processor, a cache, and a non-blocking router that the tile uses to communicate with the other tiles on the processor. The short-pipeline, in-order, three-issue cores implement a MIPS-derived VLIW instruction set. Each core has a register file and three functional units: two integer arithmetic logic units and a load-store unit. Each core ("tile") has its own L1 and L2 caches, plus an overall virtual L3 cache that is an aggregate of all the L2 caches. A core can run a full operating system on its own, or multiple cores can be used to run a symmetric multiprocessing operating system.

52. TILE-Gx (2010) is a future multicore processor family by Tilera. It consists of a mesh network of 100 cores and will be produced by TSMC on a 40 nm process. Each 64-bit core is 3-issue, with a 32 KB L1 I-cache, a 32 KB L1 D-cache and a 256 KB L2 cache per core, plus up to 26 MB of L3 cache per chip.

NUMA:

Running a topology-examination program on a Sun Fire V40z server equipped with four Opteron 875 (dual-core) processors delivers the following results:

• 4 NUMA nodes: the four sockets of the system, each with locally attached memory (DRAM).

• Processor masks (long long): 3, 12, 48, 192.

• 4 processor cores (flag=1): the four multi-core processor packages on the four sockets.

• 12 caches:

• 1 L1 data cache per core (thus 2 per socket): 64 KB (65,536 bytes) in size, 64-byte cache lines

• 1 L1 instruction cache per core: 64 KB (65,536 bytes) in size, 64-byte cache lines

• 1 L2 cache per core (thus 2 per socket): 1 MB in size, 64-byte cache lines
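The processor masks above read naturally as bitmasks in which bit i stands for logical processor i. That reading is an assumption about how the examination program reports them. Decoding them shows that each NUMA node owns two adjacent cores, matching the dual-core Opteron in each socket.

```python
def cores_in_mask(mask):
    """List the logical processor numbers whose bits are set in an affinity mask."""
    return [bit for bit in range(mask.bit_length()) if (mask >> bit) & 1]


for node, mask in enumerate([3, 12, 48, 192]):
    print(f"node {node}: mask {mask:#010b} -> cores {cores_in_mask(mask)}")
```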

AMD Hammer:

Hammer sports three features aimed at getting code and data from main memory to the processor as quickly as possible: an on-die memory controller, improved caches, and a so-called "large workload" translation look-aside buffer (TLB).

• 128 KB, two-way set-associative, on-die L1 cache

• L2 size goes up to 1 MB

• "Victim cache"

SGI Origin 3900: 4 to 512 processors, 1 GB to 1 TB of memory, 1 to 4 tall racks; November 2002 to 29 December 2006

SGI Altix:

The Altix UV supercomputer architecture was announced in November 2009. Codenamed Ultraviolet during development, the Altix UV combines a development of the NUMAlink interconnect used in the Altix 4000 (NUMAlink 5) with quad-, six- or eight-core "Nehalem-EX" Intel Xeon processors. Altix UV systems run either SUSE Linux Enterprise Server or Red Hat Enterprise Linux. They scale from 32 to 2,048 cores, with support for up to 16 terabytes of shared memory in a single system image.

IBM p690: expired.

Recent uniprocessor:

2. Intel i3 Clarkdale

Processor Name: Intel Core i3 530

Technology: 32nm

Frequency: 2.94 GHz

L1 D cache: 2*32 KB, 8-way set associative

L1 I cache: 2*32 KB, 4-way set associative

L2 cache: 2*256 KB, 8-way set associative

L3 cache: 4 MB, 16-way set associative

1 processor having 2 cores and 4 threads.
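Applying the set-associative relation to the Core i3 530 figures gives the number of sets in each cache. The 64-byte line size is an assumption, since the listing does not state it. The sizes used are per core for L1 and L2 and per chip for L3.

```python
LINE_BYTES = 64  # assumed line size; not stated in the listing above

caches = {
    # name: (size in bytes, associativity)
    "L1 D": (32 * 1024, 8),
    "L1 I": (32 * 1024, 4),
    "L2": (256 * 1024, 8),
    "L3": (4 * 1024 * 1024, 16),
}

# number of sets = cache size / (line size * associativity)
sets_per_cache = {
    name: size // (LINE_BYTES * ways) for name, (size, ways) in caches.items()
}

for name, sets in sets_per_cache.items():
    print(f"{name}: {sets} sets")
```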