CSC/ECE 506 Spring 2014/1b ms

The supercomputer landscape today

Introduction

A supercomputer is a computer at the leading edge of processing capacity, designed specifically for fast calculation. Such machines first appeared in the early 1960s. Systems of the 1970s used only a few processors; by the 1990s machines with thousands of processors had appeared, and by the end of the 20th century massively parallel supercomputers with tens of thousands of processors and extremely high processing speeds had come into prominence<ref>http://en.wikipedia.org/wiki/Supercomputer#History</ref>. Supercomputers play an important role in computational science and in cryptanalysis. They are also used in quantum mechanics, weather forecasting, climate research, and oil and gas exploration. Early supercomputer architectures exploited parallelism at the processor level, first through vector processing and later through shared-memory multiprocessor systems. As demand grew for more complex and faster computations, shared-memory architectures alone were no longer sufficient. This paved the way for hybrid structures: clusters of multi-node mesh networks in which each node is itself a multiprocessing element<ref>http://en.wikipedia.org/wiki/Supercomputer#Performance_measurement</ref>.

Characteristics of supercomputers

The definition of a supercomputer given in the introduction offers some insight into the nature of such computing systems. Certain features, and combinations of features, emerge that are characteristic of supercomputers. This section discusses them<ref>http://royal.pingdom.com/2012/11/13/new-top-supercomputer-dumps-cores-and-increases-power-efficiency/</ref>.

- Processing Speed:

The common feature uniting the Human Genome Project, the Large Hadron Collider, and other challenging scientific experiments of our era is their demand for massive computing power. The ever-accelerating growth of our knowledge base, coupled with the IT revolution, has made processing speed the most critical parameter in characterizing supercomputers. This hunger for processing speed is evident from the fact that the fastest supercomputer today is a thousand times faster than the fastest supercomputer of a decade ago. As supercomputers find more and more applications in a wide range of fields such as meteorology, defense, and biology, this demand for high computing power is far from subsiding.

- Massive Parallel System:

The complex mathematical tasks demanded by applications of the type discussed in the preceding paragraph are beyond the capabilities of a single processing unit or a small group of them. A large number of processing units must cooperate to accomplish tasks of such magnitude in a timely fashion. This concept of a massively parallel system has become an inherent feature of contemporary supercomputers.

- Power consumption:

A system comprising hundreds or thousands of processing elements requires a huge amount of electrical power. As a result, power consumption is an important factor in supercomputer design, with performance per watt being a critical metric. With the rapid increase in the use of such systems, strides are being made towards designing more power-efficient systems<ref>http://asmarterplanet.com/blog/2010/07/energy-efficiency-key-to-supercomputing-future.html</ref>. Alongside the TOP500 list of the fastest supercomputers, the Green500 list ranks systems by their power efficiency, measured in FLOPS per watt.
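The FLOPS-per-watt metric is a simple ratio, and a ranking by it can be sketched as below. This is a minimal illustration only: the function names, the system names, and all performance and power figures are hypothetical and do not describe real machines.

```python
def flops_per_watt(sustained_flops, power_watts):
    """Return power efficiency in FLOPS per watt."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return sustained_flops / power_watts


def rank_by_efficiency(systems):
    """Sort (name, sustained_flops, power_watts) tuples, most efficient first."""
    return sorted(systems, key=lambda s: flops_per_watt(s[1], s[2]), reverse=True)


# Hypothetical systems with made-up figures, for illustration only.
example_systems = [
    ("system_a", 10.0e15, 8.0e6),  # 10 PFLOPS drawing 8 MW
    ("system_b", 2.0e15, 1.0e6),   # 2 PFLOPS drawing 1 MW
]
ranking = [name for name, _, _ in rank_by_efficiency(example_systems)]
```

In this made-up example the slower system ranks first on efficiency, which is why a speed ranking and an efficiency ranking can order the same machines differently.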

- Heat management:

As mentioned in the previous paragraph, today’s supercomputers consume large amounts of power, and a major part of it is converted to heat. This poses significant heat management problems for designers. The thermal design considerations of supercomputers are far more complex than those of traditional home computers. These systems can be air cooled like the Blue Gene<ref>http://www-03.ibm.com/ibm/history/ibm100/us/en/icons/bluegene/</ref>, liquid cooled like the Cray 2<ref>http://www.craysupercomputers.com/cray2.htm</ref>, or cooled by a combination of both like the System X<ref>http://www-03.ibm.com/systems/x/</ref>. Consistent efforts are being made to improve heat management techniques and to devise better metrics for the power efficiency of such systems<ref>http://en.wikipedia.org/wiki/Supercomputer#Energy_usage_and_heat_management</ref>.

- Cost:

The cost of a typical supercomputer usually runs into hundreds of thousands of dollars, with some of the fastest machines reaching the multimillion-dollar range. Apart from the cost of installation, which includes the supporting infrastructure for heat management and electrical supply, these systems have very high maintenance costs because of their huge power consumption and the high failure rate of their processing elements.

Performance evaluation

As supercomputers become more complex and powerful, judging the performance of a particular machine from its specifications alone is difficult and likely to produce erroneous results. Benchmarks are dedicated programs, designed to mimic a particular type of workload on a component or system, that are used to compare different supercomputers. They provide a uniform framework for assessing characteristics of computer hardware such as the floating-point performance of a CPU.

Among the various types of benchmarks available, kernel benchmarks such as LINPACK are designed specifically to measure supercomputer performance. The LINPACK benchmark is a simple program that factors and solves a large, dense system of linear equations using Gaussian elimination with partial pivoting; it has become the standard approach to benchmarking supercomputers. Results are reported in FLOPS (floating-point operations per second), the unit in which supercomputers are compared. LINPACK is not limited to supercomputers; it can also be used to benchmark a typical desktop computer.
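The kernel that LINPACK times can be illustrated with a small, unoptimized sketch of Gaussian elimination with partial pivoting. This is not the benchmark code itself: `solve_dense` and `linpack_flop_count` are names chosen here for illustration, and the operation count used is the conventional nominal figure of 2n³/3 + 2n² for an n-by-n system.

```python
def solve_dense(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    # Work on an augmented copy so the caller's data is left untouched.
    m = [[float(v) for v in row] + [float(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        # Partial pivoting: pick the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if m[pivot][col] == 0.0:
            raise ValueError("matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate the entries below the pivot.
        for row in range(col + 1, n):
            factor = m[row][col] / m[col][col]
            for k in range(col, n + 1):
                m[row][k] -= factor * m[col][k]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(m[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (m[row][n] - known) / m[row][row]
    return x


def linpack_flop_count(n):
    """Nominal floating-point operation count for an n-by-n solve."""
    return 2.0 * n ** 3 / 3.0 + 2.0 * n ** 2
```

A FLOPS figure is then the nominal operation count divided by the measured solve time, for example `linpack_flop_count(n) / elapsed_seconds`.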

New benchmarks were introduced in November 2013, when the TOP500 list was updated. LINPACK is increasingly regarded as obsolete: it measures the speed and efficiency of solving linear equations and is a poor guide to computations that are nonlinear in nature. Most differential-equation calculations also require high bandwidth, low latency, and data access in irregular patterns. As a consequence, the founder of LINPACK has introduced a new benchmark called High Performance Conjugate Gradient (HPCG)<ref>http://www.sandia.gov/~maherou/docs/HPCG-Benchmark.pdf</ref>, whose data access patterns and computations relate more closely to contemporary applications. Transitioning to this new benchmark should help in rating computers and in steering their design toward improvements that benefit real applications, rather than toward a blind race for the top spot on the TOP500.
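The conjugate gradient iteration at the heart of HPCG can be sketched as follows. This is a simplified illustration, not the benchmark: it omits the preconditioner, uses a small one-dimensional Laplacian as an assumed test matrix, and stores each sparse row as a dictionary of column index to value. All function names are chosen here for illustration. The point to notice is that each step is dominated by a sparse matrix-vector product and dot products, which stress memory access rather than raw arithmetic.

```python
def sparse_matvec(rows, x):
    """Multiply a sparse matrix (list of {column: value} rows) by a vector."""
    return [sum(v * x[j] for j, v in row.items()) for row in rows]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def conjugate_gradient(rows, b, tol=1e-10, max_iter=1000):
    """Unpreconditioned CG for a symmetric positive definite sparse system."""
    x = [0.0] * len(b)
    r = list(b)          # residual b - A x, with x = 0 initially
    p = list(r)          # search direction
    rs_old = dot(r, r)
    for _ in range(max_iter):
        if rs_old ** 0.5 < tol:
            break
        ap = sparse_matvec(rows, p)
        alpha = rs_old / dot(p, ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rs_new = dot(r, r)
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x


def laplacian_1d(n):
    """Assumed test matrix: tridiagonal (-1, 2, -1) stencil."""
    rows = []
    for i in range(n):
        row = {i: 2.0}
        if i > 0:
            row[i - 1] = -1.0
        if i < n - 1:
            row[i + 1] = -1.0
        rows.append(row)
    return rows
```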

Architecture of parallel computers

The architecture of parallel systems<ref>https://computing.llnl.gov/tutorials/parallel_comp/#Whatis</ref> can be classified on the basis of the hardware level at which they support parallelism. This is usually determined by the manner in which the processing elements are connected with each other, the level at which they communicate, and the memory resources they share.

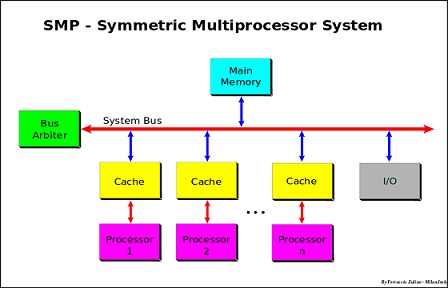

Symmetric Multiprocessing

Symmetric multiprocessing (SMP) is a tightly coupled multiprocessor architecture in which a pool of processors runs independently while sharing the main memory. In essence, it is a hardware and software architecture in which two or more identical processors are connected to a single shared main memory (MM) and are controlled by a single operating system instance. The processors are usually connected by buses, crossbar switches, or on-chip mesh networks. Each processor usually has a private high-speed memory, known as cache memory (or cache), to speed up access to main-memory data and to reduce system bus traffic. This allows any processor to work on any task while exploiting locality of reference, provided that no task executes on two or more processors at the same time. Data can move easily between processors, which helps achieve good workload balance. Multithreaded applications run efficiently on SMPs, and a different programming model is used to achieve maximum performance.

Two approaches are usually followed when parallelizing on SMPs. In the first, the application is broken down into multiple processes that communicate using inter-process communication mechanisms such as semaphores and/or shared memory, and that use locks to coordinate access to shared data. The second approach is to use POSIX (Portable Operating System Interface) threads<ref>http://publib.boulder.ibm.com/infocenter/aix/v6r1/index.jsp?topic=%2Fcom.ibm.aix.prftungd%2Fdoc%2Fprftungd%2Fsmp_concepts_arch.htm</ref>.
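The threaded approach, with a lock guarding shared data, can be sketched as below. This is an illustration of the pattern rather than a performance recipe: it uses Python's standard `threading` module instead of the POSIX threads C API, the function name `parallel_sum` is chosen here for illustration, and in CPython the global interpreter lock typically prevents a real speedup for CPU-bound work of this kind.

```python
import threading


def parallel_sum(values, num_threads=4):
    """Sum a list using several threads that update one shared total."""
    total = 0
    lock = threading.Lock()

    def worker(chunk):
        nonlocal total
        partial = sum(chunk)   # private work, no coordination needed
        with lock:             # only the shared update is serialized
            total += partial

    # Give each thread an interleaved slice of the input.
    chunks = [values[i::num_threads] for i in range(num_threads)]
    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total
```

Each thread does most of its work on private data and takes the lock only for the brief shared update, which keeps contention low.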

Distributed Computing

The processing elements in a distributed system are connected by a network. Unlike symmetric multiprocessors, they do not share a common bus, and each has its own memory. Since there is no shared memory, processing elements communicate by passing messages. Distributed computing systems scale well because there is no bus contention. Supercomputers in this category can be classified into the following domains<ref>http://en.wikipedia.org/wiki/Distributed_computing</ref>.

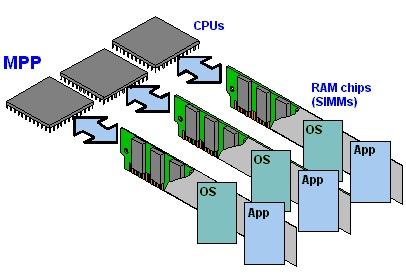

Massive Parallel Processing

Massively Parallel Processing (MPP) supercomputers are made up of hundreds of computing nodes that process data in a coordinated fashion. Each node generally has its own memory and operating system and may itself contain multiple processors and/or multiple cores.

Processing elements in an MPP system have their own memory modules and communication circuitry and are interconnected by a network. Contemporary topologies include meshes, hypercubes, rings, and trees. These systems differ from cluster computers, the other class of distributed computers, in their communication scheme: MPPs have a specialized interconnect network, whereas clustered systems use off-the-shelf communication hardware.

The IBM Blue Gene/L<ref>http://en.wikipedia.org/wiki/Blue_Gene</ref> is an MPP system in which each node is connected to three parallel communication networks: a 3D toroidal network for peer-to-peer communication between compute nodes, a network for collective communication (broadcasts and reduce operations), and a global interrupt network.
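The wraparound structure of a 3D torus can be sketched as follows. This is a generic illustration of the topology, not Blue Gene/L's actual routing logic; the function names and the grid dimensions used in the usage note are assumptions.

```python
def torus_neighbors(coord, dims):
    """Return the six nearest neighbors of a node in a 3D torus."""
    x, y, z = coord
    nx, ny, nz = dims
    # The modulo makes each axis wrap around, so edge nodes also have
    # a neighbor in every direction.
    return [
        ((x + 1) % nx, y, z), ((x - 1) % nx, y, z),
        (x, (y + 1) % ny, z), (x, (y - 1) % ny, z),
        (x, y, (z + 1) % nz), (x, y, (z - 1) % nz),
    ]


def torus_hops(a, b, dims):
    """Minimum number of hops between two nodes of a 3D torus."""
    hops = 0
    for pa, pb, n in zip(a, b, dims):
        d = abs(pa - pb)
        hops += min(d, n - d)  # go whichever way around the ring is shorter
    return hops
```

For an assumed 4 x 4 x 4 grid, node (0, 0, 0) is one hop from (3, 0, 0) because of the wraparound link, whereas in a plain mesh the same pair would be three hops apart.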

The main advantage of MPPs is their ability to exploit temporal locality and alleviate routing issues when a large number of processing elements are involved. These systems find extensive use in scientific simulations where a large problem can be broken into parallel segments; the discrete evaluation of differential equations is one such example.

The disadvantages of the MPP architecture include the absence of a common memory, which slows inter-processor exchange because there is no place to store data shared by different processors. Second, local memory and storage can become bottlenecks, since each processor can use only its own memory. Full use of system resources might not be possible, as each subsystem works individually. Finally, MPP architectures are costly to build, because each subsystem requires its own memory, storage, and CPU.

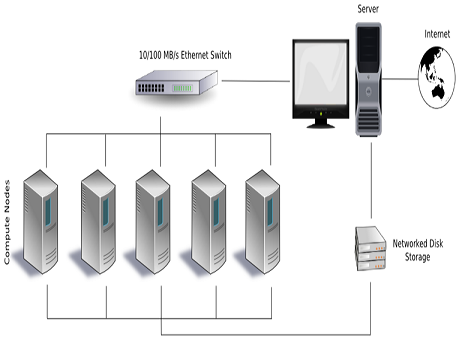

Cluster Computing

Clusters are groups of computers that are connected through a network and appear as a single system to the outside world. Processing in this architecture relies on load balancing and resource sharing, which take place entirely in the background. Invented by Digital Equipment Corporation<ref>http://en.wikipedia.org/wiki/Digital_Equipment_Corporation</ref> in the 1980s, clusters account for the largest number of supercomputers available today.

TOP500.org<ref>http://www.TOP500.org TOP500.org</ref> data as of November 2013 shows that clusters make up the largest subset of supercomputers, at 84.6 percent.

In a cluster architecture, computers are harnessed together and work independently of the application that interacts with them. In fact, the application or user running on the architecture sees them as a single resource<ref>http://wiki.expertiza.ncsu.edu/index.php/CSC/ECE_506_Spring_2013/1b_dj#Supercomputers Architecture</ref>.

A cluster architecture has three main components: interconnect technology, storage, and memory.

Interconnect technology coordinates the work of the nodes and carries out failover procedures when a subsystem fails. It is responsible for making the cluster appear to be a monolithic system and is also the basis for system management tools. It is typically built from networks or dedicated buses designed to make the interconnect reliable.

Storage in a cluster architecture can be shared or distributed. The picture on the right shows an example of a shared storage architecture for clusters, in which all the computers use the same storage. One benefit of shared storage is that it avoids the overhead of synchronizing separate stores. Shared storage also makes sense if the applications running on the cluster have large shared databases. In distributed storage, each node in the cluster has its own storage. Nodes share information by passing messages over the network, and additional work is needed to keep all the storage in sync in case of failover.

Lastly, memory in clusters can also be shared or distributed. Distributed memory is the most common choice, but shared memory can be used in certain cases, depending on the intended use of the system.

The benefits of a cluster architecture include higher performance, higher availability, greater scalability, and lower operating costs. Cluster architectures are known for providing continuous, uninterrupted service, which is achieved through redundancy<ref>http://en.wikipedia.org/wiki/Computer_cluster</ref>.

Other Cluster Types

As mentioned in the previous section, a cluster is a parallel computer system consisting of independent nodes, each of which is capable of operating on its own.

A commodity cluster is one in which the processing elements and network(s) are commercially available for procurement and application; proprietary hardware is not required. There are two broad classes of commodity clusters: cluster-NOW (network of workstations) and constellation systems. They are distinguished by the dominant level of parallelism each exhibits. The first level of parallelism is the number of nodes connected by a global communication backbone. The second level is the number of processing elements in each node, usually configured as an SMP<ref>http://escholarship.org/uc/item/95d2c8xn#page-6</ref>.

If the number of nodes in the network exceeds the number of processing elements in each node, the dominant mode of parallelism is at the first level and such clusters are called cluster-NOW<ref>http://now.cs.berkeley.edu/</ref>. In constellation systems, second-level parallelism is dominant, as the number of processing elements in each node is greater than the number of nodes in the network.

The difference also lies in the manner in which such systems are programmed. A cluster-NOW system is likely to be programmed exclusively with MPI, whereas a constellation is likely to be programmed, at least in part, with OpenMP using a threaded model.
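The rule that separates the two classes of commodity cluster can be written as a short function. This is a minimal sketch: the function name and the returned labels are chosen here for illustration, and the case of equal counts, which the definition above does not cover, is reported as unclassified.

```python
def classify_commodity_cluster(num_nodes, procs_per_node):
    """Classify a commodity cluster by its dominant level of parallelism."""
    if num_nodes <= 0 or procs_per_node <= 0:
        raise ValueError("counts must be positive")
    if num_nodes > procs_per_node:
        return "cluster-NOW"      # first-level (node) parallelism dominates
    if procs_per_node > num_nodes:
        return "constellation"    # second-level (in-node) parallelism dominates
    return "unclassified"         # equal counts: not covered by the definition
```

For example, 64 nodes of 4 processors each would be a cluster-NOW, while 8 nodes of 32 processors each would be a constellation.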

On demand supercomputing and cloud

A current trend in cloud computing<ref>http://en.wikipedia.org/wiki/Cloud_computing</ref> is making supercomputing applications available from ubiquitous, everyday computers. Giving small industries and individuals cloud access to a supercomputer for large analytical calculations will change the dynamics of manufacturing in small-scale industries. On-demand supercomputing will enable companies to significantly reduce the development and evaluation time of their prototypes, which will help them change their cost structure and shorten the time to market<ref>http://arstechnica.com/information-technology/2013/11/18-hours-33k-and-156314-cores-amazon-cloud-hpc-hits-a-petaflop/</ref>.

The OpenPOWER Foundation is one prominent example, which in a way represents the shape of things to come. It is a consortium initiated by IBM, with Google, Tyan, Nvidia, and Mellanox as the other founding members. IBM is opening up its POWER Architecture technology under a liberal license, which will enable the server vendor ecosystem to build and configure its own customized server, networking, and storage hardware for cloud computing and data centers<ref>http://en.wikipedia.org/wiki/OpenPOWER_Foundation</ref>.

Though the cloud is a viable platform for medium-scale applications, interconnects and bandwidth remain a major bottleneck to executing large-scale, tightly coupled high performance computing applications on the cloud. The widespread interest in, and migration to, optical networks does instill some hope, but it is highly unlikely that the cloud can ever replace supercomputers<ref>http://www.computer.org/portal/web/computingnow/archive/september2012</ref><ref>http://gigaom.com/2011/11/14/how-the-cloud-is-reshaping-supercomputers/</ref>.

Summary

The supercomputer landscape of today is a very heterogeneous mix of architectures and infrastructures, which has made the segregation of supercomputers into well-defined subgroups rather arbitrary; there seem to be many overlapping or hybrid examples. Supercomputing has found uses in a wide range of domains, and its potential uses continue to increase at an exciting rate. High performance computing has made large-scale simulations and calculations possible, which has led to the successful execution of some of humanity’s most ambitious projects and has had a profound impact on our well-being.

Clustered computers have clearly overtaken their MPP counterparts in terms of performance as well as market share. This is primarily due to their cost-effectiveness and their ability to scale well in the number of processing elements.

The future of supercomputing lies in its entwining with cloud-based computing. Developments in cloud infrastructure and high-speed communication networks will lead to a rapid rise in cloud use, especially by small to medium-scale institutions. According to a press release by IBM researchers, supercomputers will have more optical components to reduce power consumption<ref>http://www.technologyreview.com/view/415765/the-future-of-supercomputers-is-optical/</ref>.

References

<references />