CSC/ECE 517 Fall 2019 - E1993 Track Time Between Successive Tag Assignments

Introduction

The Expertiza project takes advantage of peer review among students to allow them to learn from each other. Tracking the time that a student spends on each submitted resource helps instructors study and improve the teaching experience. Unfortunately, most peer assessment systems do not manage the content of students’ submissions within the system. They usually allow authors to submit external links to the submission (e.g. GitHub code or a deployed application), which makes it difficult for the system to track the time that reviewers spend on the submissions.

Special note

Note that we are not just "copying" from the project description: I, Kai, wrote the final project description on Google Docs in the first place and am part of the mentoring team for this very project. So technically I wrote both documents, and I do not want to be accused of copying.

Project Description

CSC/ECE 517 classes have helped us by “tagging” review comments over the past two years. This is important for us, because it is how we get the “labels” that we need to train our machine-learning models to recognize review comments that detect problems, make suggestions, or that are considered helpful by the authors. Our goal is to help reviewers by telling them how helpful their review will be before they submit it.

Tagging a review comment usually means sliding four sliders to either side, depending on which of four attributes the comment has. But can we trust the tags that students assign? In past semesters, our checks have revealed that some students appear not to be paying much attention to the tags they assign: the tags seem to be unrelated to the characteristic they are supposed to rate, or they follow a set pattern, such as alternating one "yes" tag with one "no" tag. Studies on other kinds of “crowdwork” have shown that the time spent between assigning each label indicates how careful the labeling (“tagging”) has been. We believe that students who tag “too fast” are probably not paying enough attention, and we want to set their tags aside to be examined by course staff and researchers.

Proposed Approach

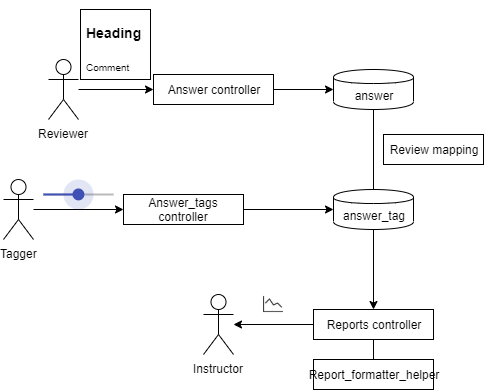

We would like to modify the model that records review tagging actions (the answer_tag entity), adding new fields to track the time interval between tagging actions, and revise the controller to implement this interval tracking. A few things to take into consideration:

- A user might not tag reviews in sequence; they may jump around and tag only the ones they are interested in.

- An annotator who tags one rubric across all reviews and then moves on to the next rubric will behave very differently from one who tags all four rubrics of each review in turn.

- An annotator may take long breaks to refresh and relax, and some even take days off; these irregularities need to be handled.

- A user may slide a slider back and forth a number of times before going to the next slider, or come back later and revise a tag they made; these cases need to be treated differently.

The first step would be to examine the literature on measuring the reliability of crowdwork and determine appropriate measures to apply to review tagging. The next step would be to code these metrics and call them from report_formatter_helper.rb, so that they are available to the course staff when they grade answer tagging. There should also be a threshold, so that any record in the answer_tags table created by a user with suspect metrics is marked as unreliable (a field can be added to the answer_tags table for that).
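As an illustration of the threshold idea, the sketch below flags a tagger whose typical gap between tags is very short. It is only a sketch under stated assumptions: the names `median` and `suspect_tagger?` and the 2-second cutoff are hypothetical and do not come from the Expertiza code base or from the crowdwork literature.

```ruby
# Hypothetical cutoff: a median gap under 2 seconds is treated as "too fast".
MIN_MEDIAN_GAP_SECONDS = 2.0

# Median of a non-empty array of numbers.
def median(values)
  sorted = values.sort
  mid = sorted.length / 2
  sorted.length.odd? ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2.0
end

# True when the tagger's typical gap between tags is below the cutoff.
# With no intervals (zero or one tag) there is nothing to judge, so return false.
def suspect_tagger?(intervals, min_median: MIN_MEDIAN_GAP_SECONDS)
  return false if intervals.empty?

  median(intervals) < min_median
end

careful = [12.0, 8.5, 15.0, 3600.0, 9.0] # includes one hour-long break
rushed  = [0.8, 1.1, 0.9, 1.0, 600.0]    # includes one ten-minute break
puts suspect_tagger?(careful) # false
puts suspect_tagger?(rushed)  # true
```

The median is used here instead of the mean because a single long break would otherwise dominate the result; other robust measures could serve equally well.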

We propose to use the `updated_at` field of the `answer_tags` model to calculate, for each user, the gaps between successive tagging actions, then use `report_formatter_helper` together with `reports_controller` to generate a separate review view. The filtered gaps would be presented as a line chart, with the `chartjs-ror` gem connecting to the `Chart.js` component on the front end.
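The gap calculation can be sketched in plain Ruby as follows. This is a minimal illustration, not the project's code: `tagging_gaps` and `MAX_GAP_SECONDS` are hypothetical names, and the 3-minute cutoff is assumed from the filtering behavior mentioned in the test plan.

```ruby
# Assumed cutoff: gaps longer than 3 minutes are treated as breaks and dropped.
MAX_GAP_SECONDS = 180

# Takes the updated_at timestamps of one user's tags (in any order) and
# returns the gaps, in seconds, between consecutive tagging actions.
def tagging_gaps(timestamps, max_gap: MAX_GAP_SECONDS)
  timestamps.sort
            .each_cons(2)
            .map { |earlier, later| later - earlier }
            .select { |gap| gap <= max_gap }
end

stamps = [
  Time.utc(2019, 11, 1, 10, 0, 30),
  Time.utc(2019, 11, 1, 10, 0, 0),
  Time.utc(2019, 11, 1, 10, 10, 0),
  Time.utc(2019, 11, 1, 10, 10, 45)
]
p tagging_gaps(stamps) # [30.0, 45.0]
```

Sorting first matters because tags are not necessarily stored in the order they were assigned; the 570-second gap in the example is discarded as a break.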

This specific project does not require a design pattern, since it only uses the same type of class to generate the same type of objects.

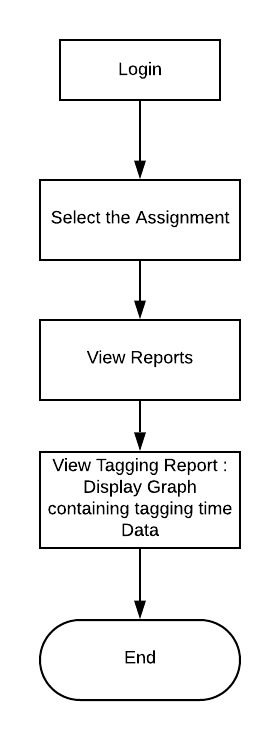

Behavior Diagram for Instructor/TA

Implementation

To create a chart in the front end, we went through the list of already installed gems to avoid adding complexity to the existing environment. We found the chartjs-ror gem and decided to build our implementation with it.

chartjs takes a series of parameters and compiles them into different kinds of graphs when called in the front end, so this project is divided into several components:

Preparing

- Extracting data

- First we needed to determine what data is required to generate the report. We found that:

- We need student information to generate the report for a specific student

- We need assignment information to generate the report for an assignment (currently the report button is under each assignment)

- We need review data to see what could be tagged

- We need tagging information to obtain the times associated with each tag

- Compiling chart parameters

- This stage requires an understanding of the gem, which takes two pieces of information:

- Data, which includes the data to be presented on the chart as well as the labels associated with the axes

- Options, which capture everything else, including the size of the chart and the range of data to be displayed

- Calling the gem to generate the graph
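The two parameters described above might be assembled roughly as in the sketch below. The helper name `interval_chart_params`, the label text, and the chart dimensions are all hypothetical; the hash layout follows the general Chart.js 2.x line-chart structure and should be checked against the versions of chartjs-ror and Chart.js actually installed.

```ruby
# Hypothetical helper: builds the data and options hashes for a line chart
# of one user's tagging intervals (in seconds).
def interval_chart_params(intervals, user_name)
  data = {
    labels: (1..intervals.length).to_a, # x-axis: position of each gap in sequence
    datasets: [
      {
        label: "Seconds between tags (#{user_name})",
        data: intervals,
        fill: false
      }
    ]
  }
  options = {
    width: 600,  # illustrative chart size in pixels
    height: 300,
    scales: { yAxes: [{ ticks: { beginAtZero: true } }] }
  }
  [data, options]
end

data, options = interval_chart_params([30.0, 45.0, 12.5], "student1")
# In an ERB view with chartjs-ror loaded, the hashes would then be handed to
# the gem's view helper, along the lines of:
#   <%= line_chart data, options %>
```

Keeping the hash construction in a helper separates data preparation from the view, so the view only needs the single call that renders the chart.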

Implementation

- Data

Data is stored across multiple tables. To gather assignment, review, tagging, and user data, we need at least the Tag_prompt_deployment and answer_tags tables. The report_formatter_helper file calls the assignment_tagging_progress function while generating the rows (taggers) of a report, so we added logic within that function to calculate the time intervals between each user's tagging actions:

    def assignment_tagging_progress
      teams = Team.where(parent_id: self.assignment_id)
      questions = Question.where(questionnaire_id: self.questionnaire.id, type: self.question_type)
      questions_ids = questions.map(&:id)
      user_answer_tagging = []
      unless teams.empty? || questions.empty?
        teams.each do |team|
          # Gather the review responses for this team, round by round if rubrics vary by round
          if self.assignment.varying_rubrics_by_round?
            responses = []
            (1..self.assignment.rounds_of_reviews).each do |round|
              responses += ReviewResponseMap.get_responses_for_team_round(team, round)
            end
          else
            responses = ResponseMap.get_assessments_for(team)
          end
          responses_ids = responses.map(&:id)
          answers = Answer.where(question_id: questions_ids, response_id: responses_ids)
          answers = answers.where("length(comments) > ?", self.answer_length_threshold.to_s) unless self.answer_length_threshold.nil?
          answers_ids = answers.map(&:id)
          users = TeamsUser.where(team_id: team.id).map(&:user)
          users.each do |user|
            tags = AnswerTag.where(tag_prompt_deployment_id: self.id, user_id: user.id, answer_id: answers_ids)
            tagged_answers_ids = tags.map(&:answer_id)
            # E1993 Track_Time_Between_Successive_Tag_Assignments
            # Times at which each tag was created or last modified, oldest first
            tag_updated_times = tags.map(&:updated_at).sort
            # Seconds between each pair of consecutive tagging actions
            tag_update_intervals = tag_updated_times.each_cons(2).map { |earlier, later| later - earlier }
            percentage = answers.count == 0 ? "-" : format("%.1f", tags.count.to_f / answers.count * 100)
            not_tagged_answers = answers.where.not(id: tagged_answers_ids)
            # E1993 Adding tag_update_intervals as information that should be passed
            answer_tagging = VmUserAnswerTagging.new(user, percentage, tags.count, not_tagged_answers.count, answers.count, tag_update_intervals)
            user_answer_tagging.append(answer_tagging)
          end
        end
      end
      user_answer_tagging
    end

Proposed Test Plan

Automated Testing

Automated testing is not available for this specific project because:

- To test the review tagging interval one has to

- Create courses

- Create assignment

- Set up assignment due dates as well as reviews and review tagging rubrics

- Enroll more than 2 students in the assignment

- Let students finish assignment

- Change due date to the past

- Have students review each other's work

- Change review due date to the past

- Have students tag each other's work, with time intervals (pause or sleep between each review comment, having at least one interval longer than 3 minutes)

- Generate review tagging report

- Generate review tagging time interval line chart

Moreover, RSpec cannot verify what appears on the line chart, since the chart is rendered as an image; we would not be able to check whether intervals greater than 3 minutes are filtered out.

Each run of such a script would take minutes even before the deliberate pauses between tags are added, and because the script would be included every time Expertiza runs its system-level tests, it would further lengthen an already long testing process.

We therefore propose to verify this function manually.

Team Information

- Anmol Desai(adesai5@ncsu.edu)

- Dhruvil Shah(dshah4@ncsu.edu)

- Jeel Sukhadia(jsukhad@ncsu.edu)

- YunKai "Kai" Xiao(yxia28@ncsu.edu)

Mentors: Yunkai "Kai" Xiao (yxiao28@ncsu.edu), Mohit Jain (mjain6@ncsu.edu)