CSC/ECE 506 Spring 2013/12a TS

Interconnection Network Architecture

In a multi-processor system, processors need to communicate with each other and access each other's resources. In order to route data and messages between processors, an interconnection architecture is needed.

Typically, in a multiprocessor system, message passed between processors are frequent and short1. Therefore, the interconnection network architecture must handle messages quickly by having low latency, and must handle several messages at a time and have high bandwidth.

In a network, a processor along with its cache and memory is considered a node. The physical wires that connect between them is called a link. The device that routes messages between nodes is called a router. The shape of the network, such as the number of links and routers, is called the network topology.

History of Network Topologies

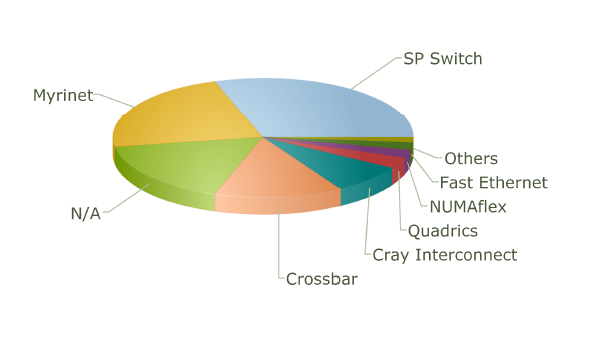

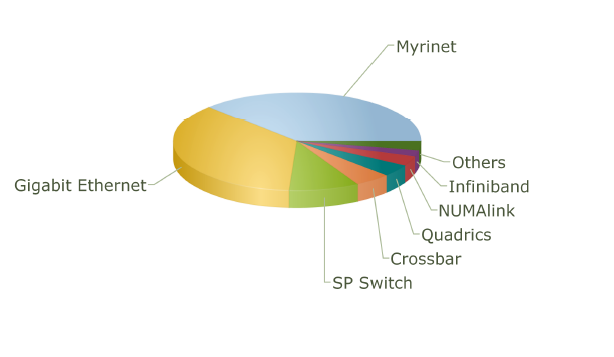

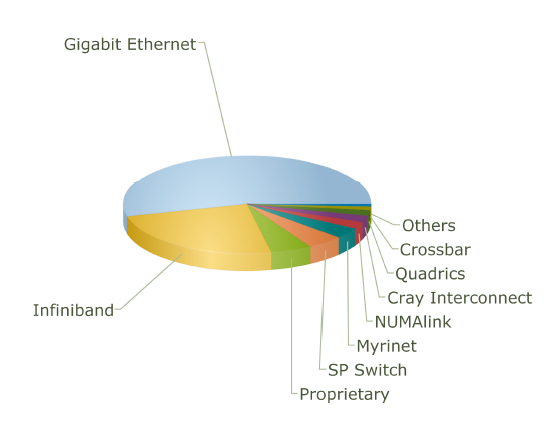

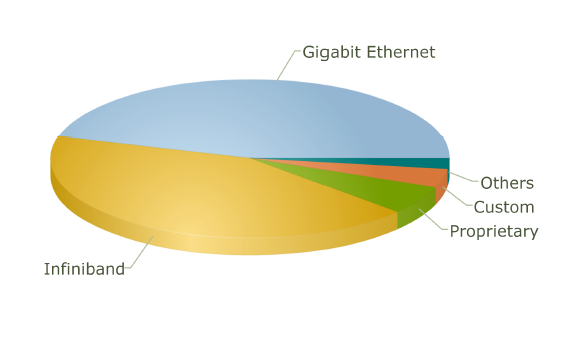

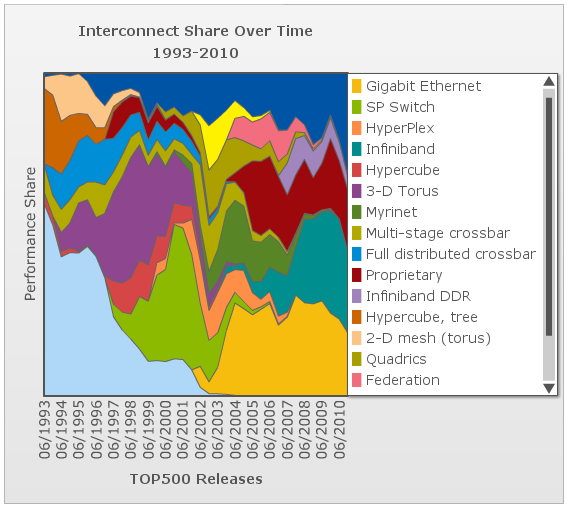

The use of different topologies has changed over the years. The following pie charts from top500.org show the share of different network topologies over the years.

Interconnect Family Market Share for 2001

Interconnect Family Market Share for 2001

Interconnect Family Market Share for 2004

Interconnect Family Market Share for 2004

Interconnect Family Market Share for 2007

Interconnect Family Market Share for 2007

Interconnect Family Market Share for 2010

Interconnect Family Market Share for 2010

Performance Metrics for Interconnection Network Topologies

The several metrics used for evaluating characteristics of a network topology like latency, bandwidth, cost, etc. are as follows:

- Diameter: This is the longest distance between any pair of nodes of the network. It is measured in terms of network hops (the number of links the message must travel before reaching the destination).

- Bisection Bandwidth: This is the minimum number of links that one must cut in order to partition the network into two halves.

- Degree: This is the total number of links in and out from a router.

The Diameter and Bisection bandwidth are the measure of performance of a network while the degree along with the total number of links is the measure of cost.

Interconnection evolution in the Top500 List

This chart shows the evolution over time of the different interconnect topologies by their dominance in the top500 list of supercomputers 7. As one can see, many technologies came into vogue briefly before losing performance share and disappearing. In the early days of the list, most of the computers list that the interconnect type is not applicable. However, the trailing end of the hypercube phase is clear in burnt orange. The dark blue at the top is "other" and the dark red in the middle is "proprietary", so we can only speculate about what topologies they might employ. The toroidal mesh appears briefly at the start in a cream color, and slightly outlasts the hypercube. The two crossbar technologies (blue and olive) followed the toroidal mesh. The fully-distributed crossbar died out quickly, but the multi-stage crossbar lasted longer but wasn't ever dominant. The 3-D torus (purple) dominates much of the 90s with hypercube topologies (dark pink) enjoying a short comeback in the later part of the decade. SP Switch (light olive), an IBM interconnect technology which uses a multi-stage crossbar switch replaced the 3-D torus8. Myrinet, Quadrics, and Federation all shared the spotlight in the mid 00s each used a similar fat-tree topology9,10. The current class of supercomputers is dominated by nodes connected with either Infiniband or gigabit ethernet. Both can be connected in either a fat-tree or 2-D mesh topology. The primary difference between them is speed. Infiniband is considerably faster per link and allows links to be ganged into groups of 4 or 12. Gigabit ethernet is vastly less expensive, however, and some supercomputer designers have apparently chosen to save money on the interconnect technology in order to allow the use of faster nodes 11,12.

Why Do InfiniBand and Gigabit Ethernet Dominate?

While custom/proprietary interconnects have the performance share according to Top500.org, these do not have the largest market share. Gigabit Ethernet has a large market share due to the cost vs. performance it provides. The bandwidth is very high, high enough for many applications, but the hardware is very commoditized. This means that the performance provided by GigE is available at a much lower price point since the technology is used for many purposes outside of supercomputer interconnection networks.

InfiniBand has a large percentage of both the performance and market share. It has high performance since the latest iteration of QDR InfiniBand supports fiber networks supporting lower latencies and longer network lengths. Also, InfiniBand benefits from commoditization to some extent. It is becoming a popular choice for use in commercial data centers, with products using this technology being offered by companies such as IBM and HP.

Types of Network Topologies

Several metrics are normally choose to represent the cost and performance for a certain topology. In this section, degree, number of links, diameter and bisection width will be calculated for each topology.

Linear Array

The nodes are connected linearly as in an array. This type of topology is simple, however, it does not scale well.

- Diameter: p-1

- Bisection BW: 1

- # Links: p-1

- Degree: 2

A linear array is the cheapest way to connect a group of nodes together. The number of links and degree of linear array have the smallest value of any topology. However, the draw back of this topology is also obvious: the two end points suffer the longest distance between each other, which makes the diameter p-1. This topology is also not reliable since the bisection bandwidth is 1.

Ring

Similar structure as the linear array, except, the ending nodes connect to each other, establishing a circular structure.

- Diameter: p/2

- Bisection BW: 2

- # Links: p

- Degree: 2

Compared with the cheapest linear array topology, the ring topology uses least effort (only add one link) to get a relatively big improvement. The longest distance between two nodes is cut into half. And the biseciton bandwidth has increased to 2.

2-D Mesh

The 2-D mesh can be thought of as several linear arrays put together to form a 2-dimensional structure. This topology is very suitable for some of the applications such as the ocean application and matrix calculation.

- Diameter: 2(sqrt(p)-1)

- Bisection BW: sqrt(p)

- # Links: 2sqrt(p)(sqrt(p)-1)

- Degree: 4

Nodes that are not on the edge have a degree of 4. To calculate the number of links, add the number of vertical links, sqrt(p)(sqrt(p)-1), to the number of horizontal links, also sqrt(p)(sqrt(p)-1), to get 2sqrt(p)(sqrt(p)-1). The diameter is calculated by the distance between two diagonal nodes which is the sum of 2 edges of length sqrt(p)-1.

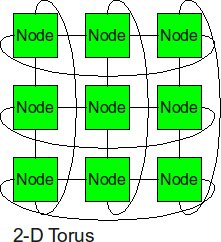

2-D Torus

Similarly as the trick we did from linear array to ring topology, the 2-D torus takes the structure of the 2-D mesh and connects the nodes on the edges.

- Diameter sqrt(p)–1

- Bisection BW 2sqrt(p)

- # Links 2p

- Degree 4

With end-around connection, the longest distance has been cut. And the biseciton bandwidth also increased. Of course, the cost from 2-D mesh to 2-D torus almost increased twice.

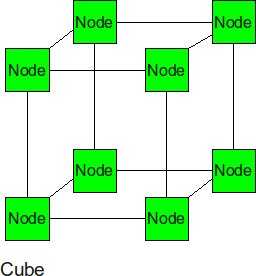

Cube (3-D Mesh)

If we add two more neighbor to each node, we can get a cube. The cube can be thought of as a three-dimensional mesh.

- Diameter: 3(p1/3-1) --this is the corner-to-corner distance, analogous to the 2-d mesh formula

- Bisection BW: p2/3 -- p1/3 rows of p1/3 links must be cut to bisect a cube

- # Links: 3*p2/3 -- there are p2/3 links in each of 3 dimensions.

- Degree: 6 (from the inside nodes)

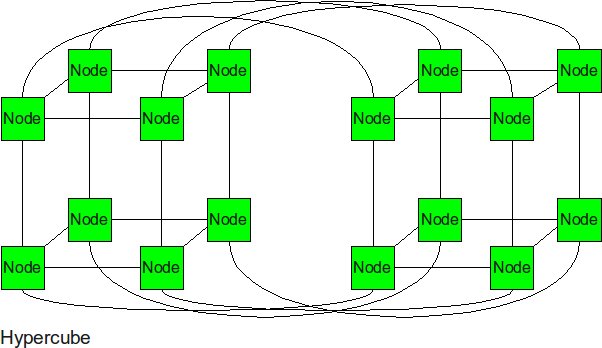

Hypercube

In the N-dimensional cube, the boundary nodes are normally the one who hurts the performance of entire network. Thus, we can fix it by connecting those boundary nodes together. The hypercube is essentially multiple cubes put together.

- Diameter: log2(p)

- Bisection BW: p/2 -- p/2 links run from one N-1 cube to the other.

- # Links: p/2 * log2(p) -- each node has a degree of log2(p). Multiply by p nodes and divide by 2 nodes per link.

- Degree: log2(p)

From the metrics we can see, the diameter and bisection bandwidth are significantly improved for the high order topologies. Each node is numbered with a bitstring that is log2(p) bits long. The farthest away node is this bitstring's complement. One bit can be flipped per hop so the diameter is log2(p).

k-ary d Mesh

An hypercube can be though of as a 2-ary N-dimensional mesh. The example above shows a 2-ary 4-dimensional mesh. Expanding beyond two nodes in the first dimension, we can generalize this interconnection network into a k-ary d mesh, where k is the number of nodes in each dimension, and d is the number of dimensions.

- Diameter: d(k-1) -- (k-1) links needed to navigate through the nodes in a single dimension, and d times this for the number of dimensions.

- Bisection BW: kd-1 -- One entire dimension of links must be broken with k nodes per dimension, connected to each other in d-1 other dimensions.

- # Links: dkd-1(k-1) -- Consider this as the number of bisection links (kd-1), multiplied by the number of dimensions (d), multiplied by the number of links between nodes in one dimension (k-1).

- Degree: 2d -- Each node needs to have links to nodes in both directions (2) along each dimension (d).

The degree of the network grows linearly with the number of dimensions. However, the total number of links grows O(dk2). This means that increasing the number of nodes per dimension or the number of dimensions is very expensive in terms of the number of links needed.

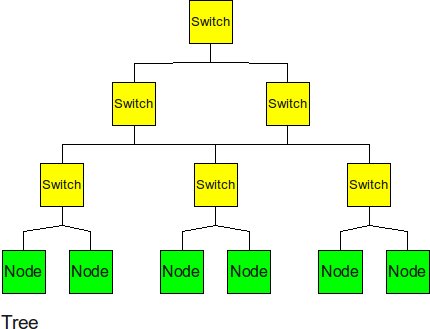

k-ary Tree

The tree is a hierarchical structure nodes on the bottom and switching nodes at the upper levels.

- Diameter: 2logk(p) -- the path from a leaf through the root to the farthest leaf on the other side

- Bisection BW: 1 -- breaking any link to the root bisects the tree

- # Links: k(p-1) -- there are k links for each router and there are p routers if p is a power of k.

- Degree: k+1 -- interior routers have k links to child nodes and 1 link to the parent node.

The tree experiences high traffic at the upper levels. Since almost 1/k of the messages need go through the root node, the root of the tree becomes the bottom neck of the tree topology. Also, the other disadvantage of tree topology is that the bisection bandwidth is only 1.

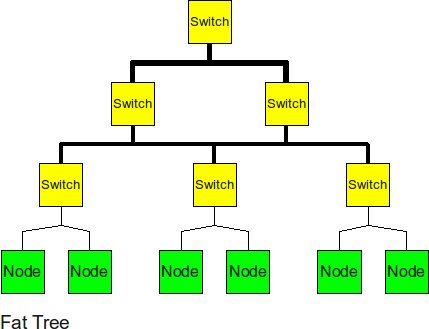

k-ary Fat Tree

In order to improve the performance of the tree topology, the fat tree alleviates the traffic at upper levels by "fattening" up the links at the upper levels.

- Diameter: 2logk(p) -- same as a regular k-ary tree

- Bisection BW: p/k -- if parent node links are k times wider than child node links this is the bandwidth of each link at the root

- # Links: k(p-1) -- same as a regular k-ary tree

- Degree: k+1 -- same as a regular k-ary tree

The fat tree relieved pressure of root node, the biseciton bandwidth has also been increased.

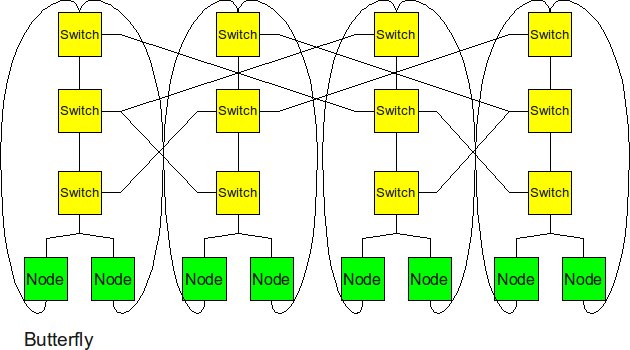

Butterfly

The butterfly structure is similar to the tree structure, but it replicates the switching node structure of the tree topology and connects them together so that there are equal links and routers at all levels.

- Diameter: 2log2(p) -- same as a tree since butterfly has the same depth as a tree (just with p nodes at each level)

- Bisection BW: p -- p links connect the two halves at the top level

- # Links: 2p*log2(p) -- there are 2*p links at each level times log2(p) levels.

- Degree: 4 -- the routers in the middle levels all have 4 links. The leaves and routers at the top level each have 2 links.

Butterfly has similar performance to Hypercube. In terms of cost, butterfly has a smaller degree (so cheaper routers can be used) but hypercube has fewer links.

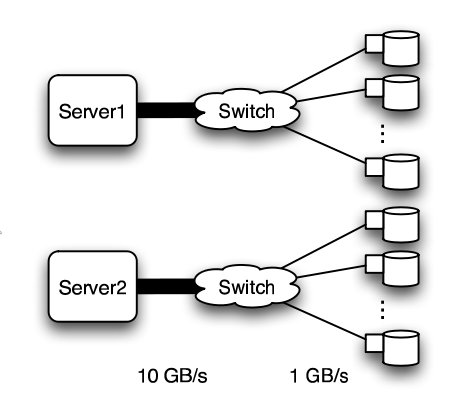

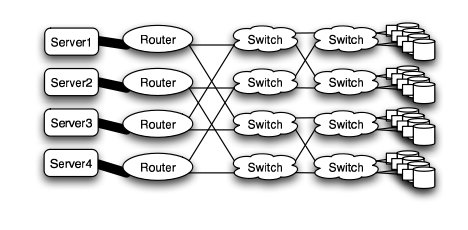

Real-World Implementation of Network Topologies

In a research study by Andy Hospodor and Ethan Miller, several network topologies were investigated in a high-performance, high-traffic network2. Several topologies were investigated including the fat tree, butterfly, mesh, torii, and hypercube structures. Advantages and disadvantages including cost, performance, and reliability were discussed.

In this experiment, a petabyte-scale network with over 100 GB/s total aggregate bandwidth was investigated. The network consisted of 4096 disks with large servers with routers and switches in between2.

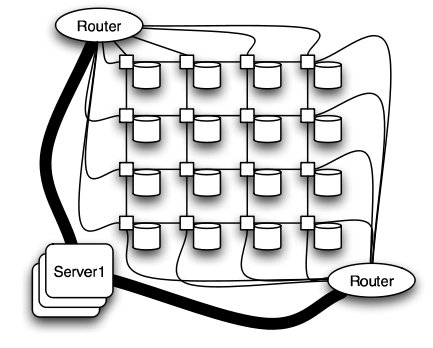

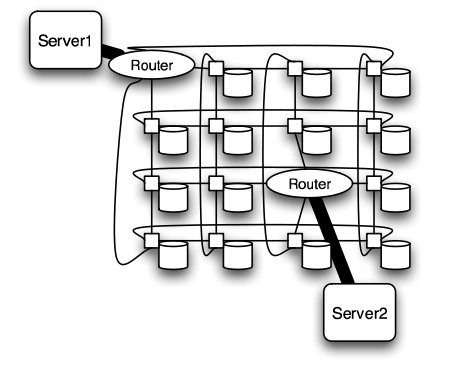

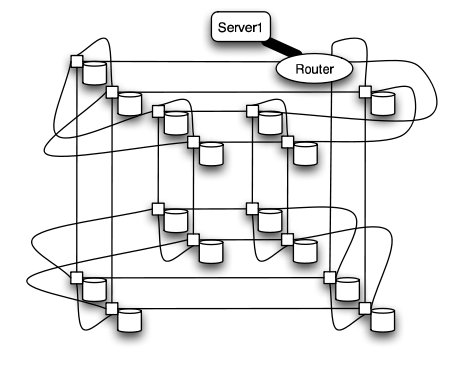

The overall structure of the network is shown below. Note that this structure is very susceptible to failure and congestion.

Fat Tree

In large scale, high performance applications, fat tree can be a choice. However, in order to "fatten" up the links, redundant connections must be used. Instead of using one link between switching nodes, several must be used. The problem with this is that with more input and output links, one would need routers with more input and output ports. Router with excess of 100 ports are difficult to build and expensive, so multiple routers would have to be stacked together. Still, the routers would be expensive and would require several of them2.

The Japan Agency for Marine-Earth Science and Technology supercomputing system uses the fat tree topology. The system connects 1280 processors using NEC processors7.

Butterfly

In high performance applications, the butterfly structure is a good choice. The butterfly topology uses fewer links than other topologies, however, each link carries traffic from the entire layer. Fault tolerance is poor. There exists only a single path between pairs of nodes. Should the link break, data cannot be re-routed, and communication is broken2.

Meshes and Tori

The mesh and torus structure used in this application would require a large number of links and total aggregate of several thousands of ports. However, since there are so many links, the mesh and torus structures provide alternates paths in case of failures2.

Some examples of current use of torus structure include the QPACE SFB TR Cluster in Germany using the PowerXCell 8i processors. The systems uses 3-D torus topology with 4608 processors7.

Hypercube

Similar to the torii structures, the hypercube requires larger number of links. However, the bandwidth scales better than mesh and torii structures.

The CRAY T3E, CRAY XT3, and SGI Origin 2000 use k-ary n-cubed topologies.

It is worth to mention that even though there are many topologies have much better performance than 2-D mesh, the cost of these advanced topologies are also high. Since most of the chips is in 2-D space, it is very expensive to implement high dimensional topology on 2-D chip. For hypercube topology, the increases of number of node will cause higher degree for each node. For the butterfly topology, although the increases of degree is relatively slow but the required number of links and number of switches increases rapidly.

Comparison of Network Topologies

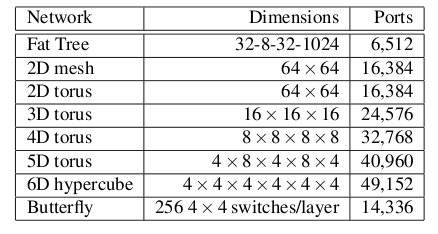

The following table shows the total number of ports required for each network topology.

Number of ports for each topology2

As the figure above shows, the 6-D hypercube requires the largest number of ports, due to its relatively complex six-dimensional structure. In contrast, the fat tree requires the least number of ports, even though links have been "fattened" up by using redundant links. The butterfly network requires more than twice the number of ports as the fat tree, since it essentially replicates the switching layer of the fat tree. The number of ports for the mesh and torii structures increase as the dimensionality increases. However, with modern router technology, the number of ports is a less important consideration.

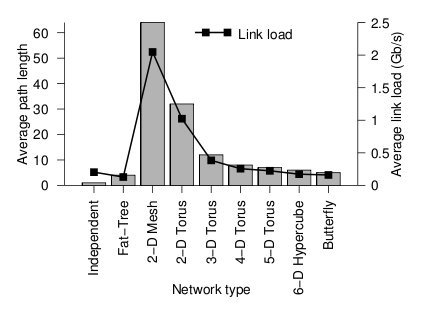

Below the average path length, or average number of hops, and the average link load (GB/s) is shown.

Average path length and link load for each topology2

Looking at the trends, when average path length is high, the average link load is also high. In other words, average path length and average link load are proportionally related. It is obvious from the graph that 2-D mesh has, by far, the worst performance. In a large network such as this, the average path length is just too high, and the average link load suffers. For this type of high-performance network, the 2-D mesh does not scale well. Likewise the 2-D torus cuts the average path length and average link load in half by connected the edge nodes together, however, the performance compared to other types is relatively poor. The butterfly and fat-tree have the least average path length and average link load.

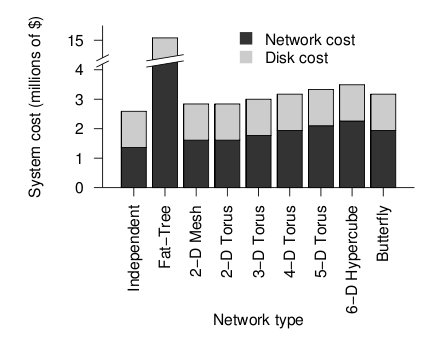

The figure below shows the cost of the network topologies.

Cost of each topology2

Despite using the fewest number of ports, the fat tree topology has the highest cost, by far. Although it uses the fewest ports, the ports are high bandwidth ports of 10 GB/s. Over 2400, ports of 10 GB/s are required have enough bandwidth at the upper levels of the tree. This pushes the cost up dramatically, and from a cost standpoint is impractical. While the total cost of fat tree is about 15 million dollars, the rest of the network topologies are clustered below 4 million dollars. When the dimensionalality of the mesh and torii structures increase, the cost increases. The butterfly network costs between the 2-D mesh/torii and the 6-D hypercube.

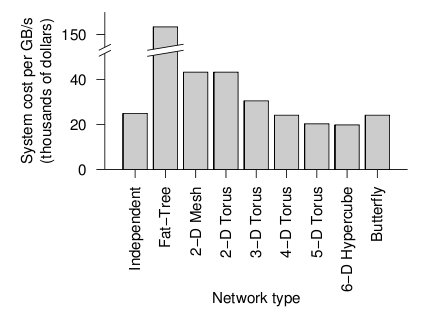

When the cost and average link load is factored the following graph is produced.

Overall cost of each topology2

From the figure above, the 6-D hypercube demonstrates the most cost effective choice on this particular network setup. Although the 6-D hypercube costs more because it needs more links and ports, it provides higher bandwidth, which can offset the higher cost. The high dimensional torii also perform well, but cannot provide as much bandwidth as the 6-D hypercube. For systems that do not need as much bandwidth, the high-dimensional torii is also a good choice. The butterfly topology is also an alternative, but has lower fault tolerance. However, when the number of nodes increases, the relative cost of the higher-dimensional topologies increases far faster than their relative performance when compared to a 2-D mesh. This is because the 2-D mesh only uses low-cost, short links. The higher-dimensional structures must be projected onto our 3-dimensional world, and thus require many long, expensive links that wrap around the outside of the system like an impenetrable tangle of jungle vines. Maintaining such a network is also quite slow and tedious.

References

1 Y. Solihin, Fundamentals of Parallel Computer Architecture. Madison: OmniPress, 2009.

2 Interconnection Architectures for Petabyte-Scale High-Performance Storage Systems

3 The Odd-Even Turn Model for Adaptive Routing

4 Interconnection Topologies:(Historical Trends and Comparisons)

This link is down at this time. Google Cache

5 Efficient mechanisms to provide fault tolerance in interconnection networks for PC clusters, José Miguel Montañana Aliaga.

6 Adaptive Fault Tolerant Routing Algorithm for Tree-Hypercube Multicomputer, Qatawneh Mohammad

7 TOP500 Supercomputing Sites

8 Understanding and Using the SP Switch

9 Myrinet Overview

10 QsNet (Quadrics' network)

11 Dragonfly Topology

12 Flattened Butterfly Topology