CSC/ECE 506 Spring 2011/6a ms

Cache Architecture

CPU caches are designed to mitigate the performance hit of reading and writing to main memory. Since main memory clock speeds are typically much slower than processor clock speeds, going to main memory for every read and write can result in a very slow system. Caches are constructed on the processor chip and take advantage of spatial and temporal locality to store data likely to be needed again by the processor.

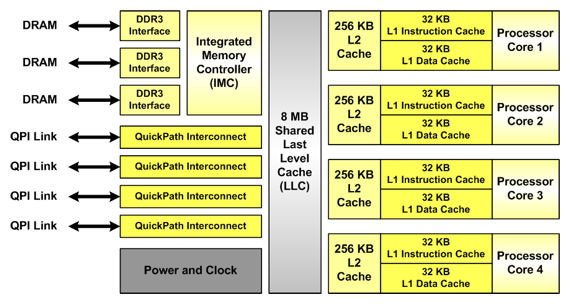

Modern processors typically have two to three levels of cache. Each processor core has private level 1 (L1) data and instruction caches and an L2 combined data+instruction cache. Sometimes, a shared L3 cache serves as an additional buffer between the L2 caches and main memory. The accompanying figure shows the cache hierarchy in a modern processor.

Modern Cache Architectures

To give an idea of typical cache sizes, examples of modern processor caches are given in the table below.

| Vendor and Processor | Cores | L1 cache | L2 cache | L3 cache | Date |
|---|---|---|---|---|---|
| Intel Pentium Dual Core | 2 | I: 32KB D: 32KB | 1MB 8-way set-associative | - | 2006 |
| Intel Xeon Clovertown | 2 | I: 4x 32KB D: 4x 32KB | 2x 4MB | - | January 2007 |
| Intel Xeon 3200 Series | 4 | | 2x 4MB | - | January 2007 |
| AMD Athlon 64 FX | 2 | I: 64KB D: 4KB 2-way set-associative | 1MB 16-way set-associative | - | May 2007 |
| AMD Athlon 64 X2 | 2 | I: 64KB D: 4KB 2-way set-associative | 512KB/1MB 16-way set-associative | 2MB | June 2007 |
| AMD Barcelona | 4 | I: 64KB D: 64KB | 512KB | 2MB | August 2007 |
| Sun Microsystems Ultra Sparc T2 | 8 | I: 16KB D: 8KB | 4MB 8 banks, 4-way set-associative | - | October 2007 |
| Intel Xeon Wolfdale DP | 2 | D: 96KB | 6MB | - | November 2007 |
| Intel Xeon Harpertown | 4 | D: 96KB | 2x 6MB | - | November 2007 |
| AMD Phenom | 4 | I: 64KB D: 64KB | 512KB | 2MB shared | November 2007, March 2008 |
| Intel Core 2 Duo | 2 | I: 32KB D: 32KB | 2-4MB 8-way set-associative | - | 2008 |
| Intel Penryn Wolfdale | 4 | | 6-12MB | 6MB | March, August 2008 |
| Intel Core 2 Quad Yorkfield | 4 | D: 96KB | 12MB | - | March 2008 |
| AMD Toliman | 3 K10 | I: 64KB D: 64KB | 512KB | 2MB shared | April 2008 |
| Azul Systems Vega 3 7300 Series | 864 | 768GB total | - | - | May 2008 |
| IBM RoadRunner | 8+1 | 32KB | 512KB | - | June 2008 |
| FreeScale QorIQ P1,P2,P3,P4,P5 Power QUICC | 8 core P4080 | I: 64KB D: 64KB | 512KB | - | June 2008 |
| Intel Celeron Merom-L | 2 | 64KB | 1MB | - | 2008 |
| Intel Xeon Dunnington | 4/6 | D: 96KB | 3x 3MB | 16MB | September 2008 |
| Intel Xeon Gainestown | 4 | I: 64KB D: 64KB 4-way set-associative | 4x 256KB 8-way set-associative | 8MB 16-way set-associative | December 2008 |
| AMD Phenom II Deneb | 4 K10 | I: 64KB D: 64KB | 512KB | 6MB shared | January 2008 |
| Intel Celeron Merom | 2 | 32KB | 4MB | - | March 2009 |
| Intel Nehalem EP | 2/4 | 64KB | 256KB | 8MB | March 2009 |
| AMD Castillo | 2 K10 | I: 64KB D: 64KB | 512KB | 6MB shared | June 2009 |
| AMD Athlon II Regor | 2 K10 | I: 64KB D: 64KB | 1MB | - | June 2009 |
| Intel Core i5 Lynnfield | 4 | I: 32KB D: 32KB | 256KB | 4MB | September 2009 |
| AMD Propus | 4 K10 | I: 64KB D: 64KB | 512KB | - | September 2009 |
| Intel Core i3 Clarkdale | 2 | I: 32KB D: 32KB | 256KB | 4MB | January 2010 |
| IBM Power7 | 8 | D: 32KB | 256KB | 32MB | February 2010 |
| Sun Niagara 3 | 16 | 64KB | 6MB unified | 2MB | February 2010 |
| Intel Xeon Jasper Forest | 4 | 64KB | 4x 256KB | 8MB | 2010 |
| Intel Xeon Becktown | 8 | 64KB | 8x 256KB | 24MB | 2010 |
| Intel Extreme Edition Gulftown | 6 | 64KB | 256KB | 12MB unified | March 2010 |
| Intel Core i7 Bloomfield | 4 | I: 32KB D: 32KB | 256KB | 8MB | 2010 |

Write Policy

When the processor issues a write, the write policy used determines how data is transferred between different levels of the memory hierarchy. Each policy has advantages and disadvantages.

Write Hit Policies

Write hit policies determine what to do when a write hits in cache, and are primarily concerned with reducing traffic.[2]

Write-through: In a write-through policy, changes written to the cache are immediately propagated to lower levels in the memory hierarchy.[3]

Write-back: In a write-back policy, changes written to the cache are only propagated to lower levels when a changed block is evicted from the cache.[3]
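
The difference in traffic between the two write hit policies can be illustrated with a small simulation. This is only a sketch under stated assumptions: the function name `memory_writes`, the fully associative LRU cache, the write-allocate behavior on a miss, and the sizes used are all illustrative choices and do not come from the cited sources.

```python
from collections import OrderedDict

def memory_writes(addresses, policy, num_lines=4, block_size=16):
    """Count writes sent to the next memory level for a stream of stores.

    Assumption: fully associative LRU cache that allocates a line on a miss.
    """
    cache = OrderedDict()  # block number -> dirty flag, least recent first
    writes = 0
    for addr in addresses:
        block = addr // block_size
        if block in cache:
            cache.move_to_end(block)          # refresh LRU position on a hit
        else:
            if len(cache) == num_lines:
                _, dirty = cache.popitem(last=False)  # evict the LRU block
                if dirty:
                    writes += 1               # write-back flushes a dirty victim
            cache[block] = False
        if policy == "write-through":
            writes += 1                       # every store propagates at once
        elif policy == "write-back":
            cache[block] = True               # defer propagation until eviction
        else:
            raise ValueError(f"unknown policy: {policy}")
    return writes

# 100 stores to one block, then one store to each of four other blocks.
stores = [0, 4, 8, 12] * 25 + [16, 32, 48, 64]
print(memory_writes(stores, "write-through"))  # 104
print(memory_writes(stores, "write-back"))     # 1
```

In this trace the write-back cache sends only the one dirty block that gets evicted, while the write-through cache sends every store. Dirty blocks still resident at the end of the trace are not counted.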

Write Miss Policies

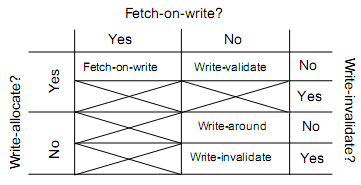

Write miss policies determine what to do when a write misses in cache, and are primarily concerned with reducing latency.[2] The Solihin text discusses write misses in terms of write-allocate versus write-no-allocate. However, write miss policies can be analyzed in more depth by looking at three different aspects of a write miss.

Write-allocate: In a write-allocate policy, a write that misses has a line allocated for it in the cache. Conversely, in a write-no-allocate policy, a write that misses has no line allocated for it, so subsequent reads of the written value have to wait for the value to be fetched from a lower level of memory.[2]

Fetch-on-write: In a fetch-on-write policy, the block being written is fetched from lower levels of memory whenever there is a write miss. This fetch causes a delay. This policy usually implies write-allocate. In a no-fetch-on-write policy, the processor does not have to wait for the block being written to be fetched.[2]

Write-invalidate: In a write-invalidate policy, the written block is marked invalid in the cache and the written value is passed to the lower level of memory.[2]

Different combinations of these write miss policy aspects can form different cohesive cache write policies. Some combinations are not useful and do not produce a working cache. The four useful write policies are explored below.

Fetch-on-write: This policy is the same as the write-allocate policy mentioned in the Solihin text. It is used by Intel architectures such as the Nehalem architecture.[5]

Write-validate: This policy is formed by the combination of no-fetch-on-write, write-allocate, and no-write-invalidate. In this policy, a write causes no fetch of data and only the written data is marked valid. This is useful in situations where data is read while other data is being written.[2]

Write-around: This policy is formed by the combination of no-fetch-on-write, no-write-allocate, and no-write-invalidate. It is called write-around because data goes directly to the next level of memory, around the cache. This policy is useful in situations where a processor makes many writes but rarely has to read data it has written.[2] Sun's Niagara architecture uses this policy.[6]

Write-invalidate: This policy is formed by the combination of no-fetch-on-write, no-write-allocate, and write-invalidate. It functions as described above.[2]
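
The way the three yes/no aspects combine into the four named policies can be written as a small lookup. This is a sketch only: the function name `write_miss_policy` and its boolean parameters are hypothetical, and treating fetch-on-write as the combination of fetch-on-write, write-allocate, and no-write-invalidate is an assumption based on the taxonomy in [2]. Combinations that the text calls not useful return `None`.

```python
from itertools import product

def write_miss_policy(fetch_on_write, write_allocate, write_invalidate):
    """Return the policy name for one combination of aspects, or None."""
    if fetch_on_write:
        # Assumed: fetched data needs an allocated line that stays valid.
        if write_allocate and not write_invalidate:
            return "fetch-on-write"
        return None
    if write_allocate:
        # Allocating a line and then invalidating it is not a useful policy.
        return None if write_invalidate else "write-validate"
    return "write-invalidate" if write_invalidate else "write-around"

# Enumerate all eight combinations and keep the ones that name a policy.
useful = {combo: write_miss_policy(*combo)
          for combo in product([True, False], repeat=3)
          if write_miss_policy(*combo) is not None}
for combo, name in useful.items():
    print(combo, name)
```

Of the eight possible combinations, the enumeration leaves exactly the four policies described above.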

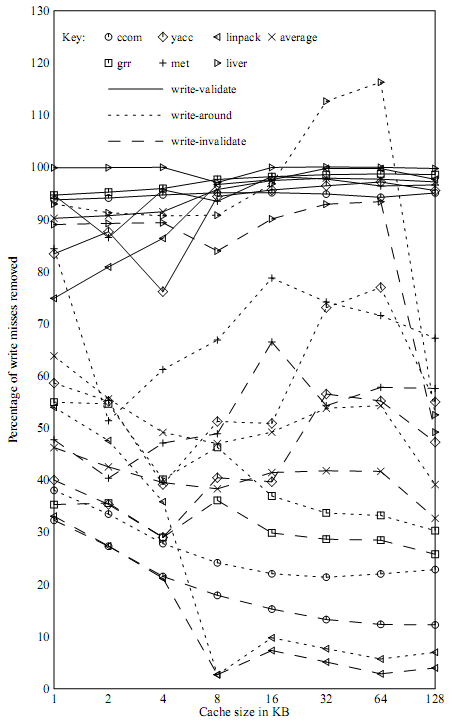

Analyzing write miss policies using three criteria gives us more options than the single criterion mentioned in the Solihin text. The accompanying graph shows the write miss rate reductions achieved for various benchmarks for the write-validate, write-around, and write-invalidate policies. A reduction is defined as a write miss that does not cause data to be fetched.[2] We can see that these policies can be very effective, depending on the application.

Prefetching

Prefetching is used to try to reduce cache misses by bringing data into the cache before it is needed. The reduced miss rate comes at the cost of increased memory bandwidth usage, some of which is wasted if the prefetched data is unused.

Prefetching can be hardware-based or software-based. Hardware-based prefetching looks ahead to try to predict memory accesses. Software prefetching allows programmers or compilers to put instructions in programs that prefetch data they know will be used in the future.
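
The trade-off between miss rate and bandwidth can be seen in a toy model of one simple hardware scheme, next-line prefetching, where a miss on a block also brings in the following block. The scheme, the function name `simulate`, the unbounded cache, and the access patterns are all assumptions chosen for illustration; they are not taken from the cited sources.

```python
def simulate(addresses, block_size=64, prefetch=False):
    """Return (demand misses, blocks fetched from memory) for a read stream.

    Assumption: the cache is unbounded, so only cold misses occur.
    """
    cached = set()
    misses = fetched = 0
    for addr in addresses:
        block = addr // block_size
        if block not in cached:
            misses += 1
            fetched += 1
            cached.add(block)
            if prefetch and block + 1 not in cached:
                cached.add(block + 1)   # next-line prefetch issued on a miss
                fetched += 1
    return misses, fetched

sequential = range(0, 64 * 8, 8)             # walks 8 consecutive blocks
scattered = [i * 64 * 10 for i in range(8)]  # 8 blocks that are far apart

print(simulate(sequential))                  # (8, 8)
print(simulate(sequential, prefetch=True))   # (4, 8)
print(simulate(scattered, prefetch=True))    # (8, 16)
```

For the sequential walk, prefetching halves the misses without fetching any extra blocks. For the scattered pattern, the miss count is unchanged and the number of blocks fetched doubles, which is the wasted bandwidth described above.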

Prefetching in parallel architectures

Shared memory parallel architectures introduce additional complexity into prefetching. Since multiple cores share memory, some single core prefetching schemes may not scale well. For instance, prefetches from many cores could choke the memory bus and reduce performance. Prefetching can also affect cache coherency in shared memory architectures.

One multicore prefetching scheme is server-based push prefetching. In this scheme, one core is dedicated to predicting and prefetching data for the other cores. This has the advantage of removing the task of prefetching from the other cores and memory. The disadvantage is that the dedicated core cannot be used for computation, but if some cores would otherwise sit idle, the scheme can be very effective.[4]

One way to solve the cache coherency problem is to simply drop prefetch write requests for shared data. This way prefetching cannot interfere with cache coherency, but still gives reads a performance boost. This solution works poorly for write-heavy applications, since much of their data will not be prefetched.[4]
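
The filtering rule just described can be sketched in a few lines. The `PrefetchRequest` type, its fields, and the `filter_prefetches` function are hypothetical names introduced for illustration, and the sketch assumes the set of shared blocks is already known.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PrefetchRequest:
    block: int
    for_write: bool   # True if the prefetch is issued on behalf of a write

def filter_prefetches(requests, shared_blocks):
    """Drop write prefetches that target shared blocks; keep the rest."""
    return [r for r in requests
            if not (r.for_write and r.block in shared_blocks)]

requests = [
    PrefetchRequest(block=1, for_write=False),  # read, shared: kept
    PrefetchRequest(block=1, for_write=True),   # write, shared: dropped
    PrefetchRequest(block=2, for_write=True),   # write, private: kept
]
kept = filter_prefetches(requests, shared_blocks={1})
print([(r.block, r.for_write) for r in kept])   # [(1, False), (2, True)]
```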

Costs and benefits

While prefetching schemes are harder to implement for parallel architectures, they can still provide a performance boost for some workloads. Other workloads, however, will suffer from prefetching.

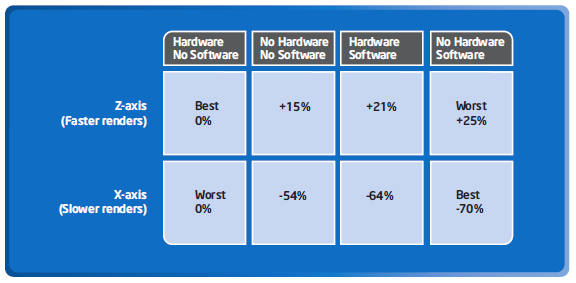

An Intel study illustrates the varying levels of performance that prefetching can achieve. Intel studied combinations of hardware and software prefetching on their Core 2 Duo processor and how they affected the performance of a medical imaging application. For some workloads, hardware prefetching gave the best performance, and for others software prefetching gave the best performance. In some cases, prefetching actually reduced performance compared to having no prefetching at all.[7] The accompanying figure summarizes the results of Intel's study.

References

1. http://embedded.communities.intel.com/community/en/rovingreporter/blog/tags/GE

2. "Cache write policies and performance," Norman Jouppi, Proc. 20th International Symposium on Computer Architecture (ACM Computer Architecture News 21:2), May 1993, pp. 191-201.

3. Fundamentals of Parallel Computer Architecture by Yan Solihin

4. "A Taxonomy of Data Prefetching Mechanisms," Surendra Byna, Yong Chen, Xian-He Sun. http://www.cs.iit.edu/~chen/docs/ispan08-survey.pdf

5. http://software.intel.com/en-us/articles/performance-insights-to-intel-hyper-threading-technology/

6. http://apt.cs.man.ac.uk/COMP60012/UoM-COMP60012-2009-slides-day1-7.pdf

7. ftp://download.intel.com/technology/advanced_comm/315256.pdf