CSC/ECE 506 Spring 2010/8a sk

Introduction

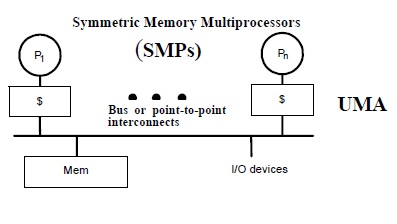

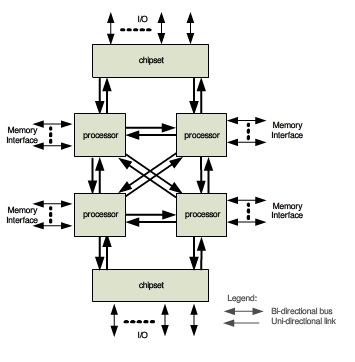

Symmetric multiprocessing (SMP) involves a multiprocessor computer hardware architecture where two or more identical processors are connected to a single shared main memory via a system bus. An SMP provides symmetric access to all of main memory from any processor and is the building block for larger parallel systems.

If each processor has a cache that reflects the state of various parts of memory, two or more caches may hold copies of the same line. A given line may also contain more than one lockable data item. If two threads make appropriately serialized changes to those data items, both caches could end up with different, incorrect versions of the line of memory. In other words, the system's state is no longer coherent, because the system contains two different versions of what is supposed to be the content of a specific area of memory. Various protocols have been devised to address the cache coherence problem, such as MSI, MESI, MOESI, MERSI, MESIF, Synapse, Berkeley, Firefly and Dragon. In this wiki article, MSI, MESI, MESIF, MOESI and Synapse protocol implementations on real architectures will be discussed.<ref>Cache Coherence</ref>

MSI Protocol

MSI protocol is a three-state write-back invalidation protocol and one of the simplest and earliest-used snooping-based cache coherence protocols. According to this protocol, a cache block can be in one of three states: Modified (M), Shared (S), or Invalid (I).

- Modified state indicates that the block in the cache has been modified and therefore has a different value from that found in the main memory. A cache line in this state must be written back to memory on eviction.

- Shared state indicates that the block exists in one or more caches, and is clean, implying that its value is consistent with the one in main memory. So a cache block in this state can be evicted without having to write it back to the main memory.

- Invalid state indicates that the cache block is invalid.

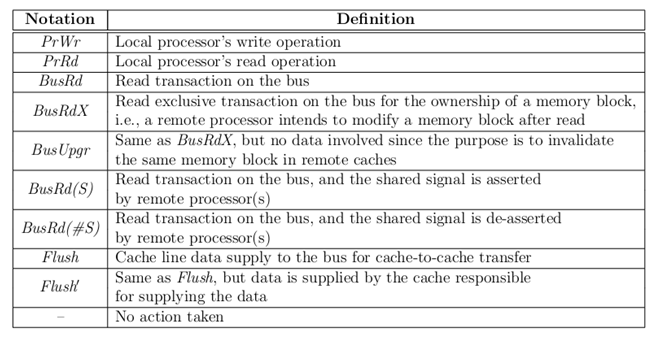

In MSI protocol, processor requests to the cache include:

- PrRd: Processor requests read to a cache block.

- PrWr: Processor requests write to a cache block.

In MSI protocol Bus-side requests include:

- BusRd: BusRd transaction is generated by a PrRd that misses in the cache, and the processor expects a data response as a result. The cache controller puts the address on the bus and asks for a copy that it does not intend to modify. The memory supplies the data.

- BusRdX: BusRdX transaction is generated by a PrWr to a block that is either not in the cache or is in the cache but is not in modified state. The cache controller puts the address on the bus and asks for an exclusive copy that it intends to modify. The memory system provides the data. All other caches are invalidated. Once the cache obtains the exclusive copy, the write can be performed in the cache.

- Flush: Flush is a snooped request that indicates that an entire cache block is written back to the main memory by another processor.

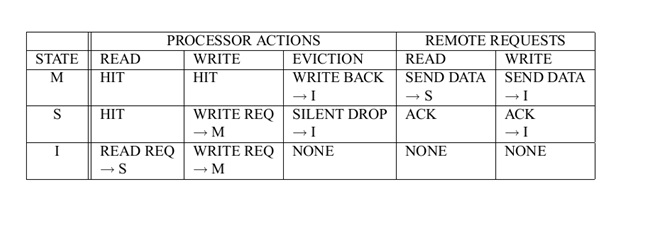

The MSI states under different conditions are shown below:<ref>Verification of Hierarchical Cache Coherence Protocols for Futuristic Processors</ref>

The three states in the MSI protocol directly enforce cache coherence by allowing only a single processor to hold a block in the modified state at a given time, allowing multiple processors to hold it in the shared state concurrently, and disallowing other processors from holding it in the shared state while a processor holds it in the modified state. A serious drawback of the MSI system is that an explicit BusRdX transaction is required for a read followed by a write, even if there are no other shared copies. When a processor reads in and modifies a data item, two bus transactions are generated even in the absence of shared copies. The first is a BusRd that gets the cache block in S state, and the second is a BusRdX (or BusUpgr) to invalidate other cached copies. The BusRdX is useless when there are no other shared copies.
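The transitions described above can be sketched as a small state machine. This is a minimal illustration rather than a hardware description: the names `State`, `processor_request`, `snooped_request` and `run` are hypothetical, and the sketch assumes a write from S issues a BusRdX (an implementation could use BusUpgr instead).

```python
from enum import Enum


class State(Enum):
    M = "Modified"
    S = "Shared"
    I = "Invalid"


def processor_request(state, op):
    """Return (next_state, bus_transaction) for PrRd or PrWr.

    bus_transaction is None when the request is satisfied without the bus.
    """
    if op == "PrRd":
        if state is State.I:
            return State.S, "BusRd"      # read miss: fetch a copy we do not intend to modify
        return state, None               # read hit in S or M
    if op == "PrWr":
        if state is State.M:
            return State.M, None         # write hit on an already exclusive copy
        return State.M, "BusRdX"         # from I or S: obtain an exclusive copy first
    raise ValueError(f"unknown processor request: {op}")


def snooped_request(state, bus_op):
    """Return (next_state, action) for a request snooped from another cache."""
    if bus_op == "BusRd":
        if state is State.M:
            return State.S, "Flush"      # supply the dirty block and keep a shared copy
        return state, None
    if bus_op == "BusRdX":
        if state is State.M:
            return State.I, "Flush"      # supply the dirty block, then invalidate
        return State.I, None             # S or I: just invalidate
    raise ValueError(f"unknown bus request: {bus_op}")


def run(ops, state=State.I):
    """Apply a sequence of processor requests and collect the bus transactions."""
    transactions = []
    for op in ops:
        state, bus = processor_request(state, op)
        if bus is not None:
            transactions.append(bus)
    return state, transactions
```

Calling `run(["PrRd", "PrWr"])` on a block that starts Invalid yields two bus transactions, a BusRd followed by a BusRdX, which is the read-then-write drawback noted above.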

Implementation complexities

In the MSI system, an explicit upgrade message is required for a read followed by a write, even if there are no other sharers. When a processor reads in and modifies a data item, two bus transactions are generated in this protocol even in the absence of sharers. The first is a BusRd that gets the memory block in S state, and the second is a BusRdX (or BusUpgr) that converts the block from S to M state. In this protocol, the complexity of the mechanism that determines the exclusiveness of the block is an aspect that needs attention. Also, in snoop-based cache coherence protocols, the overall set of actions for memory operations is not atomic. This could lead to race conditions, and issues such as deadlock and serialization make the protocol harder to implement.<ref>Snoop-based Multiprocessor Design</ref>

MSI in Silicon Graphics IRIS 4D multiprocessors

SGI (Silicon Graphics, Inc.) used a protocol much like MSI in the IRIS 4D multiprocessor series. The bus used in this workstation is a pipelined, block-transfer bus that supports the cache coherence protocol. The 4D-MP graphics superworkstation delivered 40 MIPS (million instructions per second) of computing performance. The cache coherence protocol had to support synchronization as well as sharing, so improving the efficiency of synchronization operations necessitated a compromise in the efficiency of the data sharing protocol. With the simple rules of the MSI protocol enforced by hardware, efficient synchronization and efficient data sharing are achieved in a simple shared-memory model of parallel processing in the 4D-MP graphics superworkstation, so the simple MSI protocol is well suited to these needs.<ref>Silicon Graphics Computer Systems</ref>

MESI Protocol

MESI protocol is a four-state protocol in which a cache block can be in an Exclusive state in addition to the Modified, Shared and Invalid states of the MSI protocol.

- Modified : The cache line contains valid data for an external memory location. However, the data does not match the data in external memory, since the processor has modified the data since it was loaded from external memory. A cache that contains a modified line is responsible for ensuring that the data is properly maintained.

- Exclusive : The cache line contains valid data for some external memory location. The data exactly matches the data in the external memory location. Thus the cache block is clean, valid and exists only in one cache.

- Shared : The cache line contains valid data for an external memory location, the data is shared by another cache, and the shared data matches the data in the external memory exactly; or the cache line is in write-through mode.

- Invalid : The cache line does not contain valid data for any external memory location. An invalid line does not participate in the cache coherence protocol.

The advantage of the MESI protocol over the MSI protocol lies in the Exclusive state: because no other cache holds a copy, a block in this state can be written without a bus transaction and moves silently to Modified. An Exclusive block is also clean, so it can be dropped silently on eviction without issuing the expensive writeback operation. With the MESI protocol, the processor obtains the most current value every time it is required.

As in the MSI protocol, processor requests to the cache in the MESI protocol include:

- PrRd: processor-side request to read to a cache block

- PrWr: processor-side request to write to a cache block

Bus-side requests include:

- BusRd: snooped request that indicates there is a read request to a cache block made by another processor.

- BusRdX: snooped request that indicates there is a read exclusive (write) request to a cache block made by another processor which does not already have the block.

- BusUpgr: snooped request that indicates that there is a write request to a cache block that another processor already has in its cache.

- Flush: snooped request that indicates that an entire cache block is written back to the main memory by another processor.

- FlushOpt: snooped request that indicates that an entire cache block is posted on the bus in order to supply it to another processor. We distinguish FlushOpt from Flush because while Flush is needed for write propagation, FlushOpt is not required for correctness. It is implemented as a performance enhancing feature that can be removed without impacting correctness. It is referred to as an optional block flush cache-to-cache transfer.
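The processor-side behaviour implied by these requests can be sketched as follows. This is a simplified illustration under stated assumptions: the function names and the `others_have_copy` flag (standing in for whatever signal tells the requester that another cache holds the block) are hypothetical, and snooped requests such as Flush and FlushOpt are left out.

```python
def processor_request(state, op, others_have_copy=False):
    """Return (next_state, bus_transaction) for a MESI processor-side request.

    state is one of "M", "E", "S", "I"; bus_transaction is None when
    the request needs no bus traffic.
    """
    if op == "PrRd":
        if state == "I":
            # Read miss: load as Shared if another cache has a copy, else Exclusive.
            return ("S" if others_have_copy else "E"), "BusRd"
        return state, None
    if op == "PrWr":
        if state == "I":
            return "M", "BusRdX"     # write miss: fetch an exclusive copy
        if state == "S":
            return "M", "BusUpgr"    # already cached: only invalidate other copies
        return "M", None             # E or M: no other copies, upgrade silently
    raise ValueError(f"unknown processor request: {op}")


def read_then_write(others_have_copy):
    """Run a read followed by a write on an initially Invalid block."""
    state, transactions = "I", []
    for op in ("PrRd", "PrWr"):
        state, bus = processor_request(state, op, others_have_copy)
        if bus is not None:
            transactions.append(bus)
    return state, transactions
```

With no other sharers, `read_then_write(False)` needs only the initial BusRd, because the write finds the block in the Exclusive state. With sharers present, the write still has to issue a BusUpgr.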

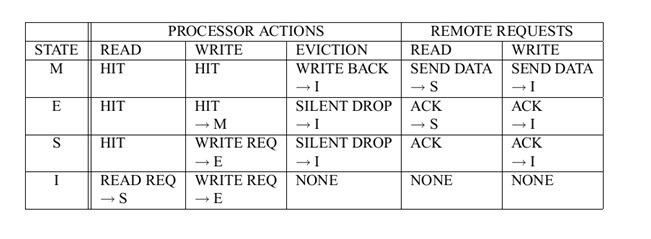

MESI states under different conditions are shown in the figure below:

Implementation Complexities

During replacement of a cache block, some MESI implementations require a message to be sent to memory when a cache line is flushed (an E to I transition), as the line was held exclusively in one cache before it was removed. In an alternate implementation, this replacement message can be avoided if the system is designed so that the flush of a modified/exclusive line requires an acknowledgment from the memory. However, this requires the flush to be held in a 'write-back' buffer until the reply arrives, to ensure the change is successfully propagated to memory.

There is a bandwidth trade-off between these two implementations that depends on the application. The MESI protocol has greater complexity in terms of block states and transitions. It requires a priority scheme for cache-to-cache transfers to determine which cache should supply the data when a block is in the shared state. In commercial implementations, the memory is usually allowed to update data. Like the MSI protocol, this protocol also faces complexity in serialization, handshaking, deadlocks, etc. Also, the implementation of write-backs necessitates a write buffer, and the bus transactions relevant to buffered blocks must be handled carefully.<ref>Discussion of cache coherence protocol implementation</ref>

MESI in Intel's IA32 Pentium processors

The MESI protocol is used in Intel's IA-32 Pentium class processors. In the Intel Pentium 4 IA-32 architecture processor, the data cache uses the MESI protocol to support a more efficient write-back cache in addition to the write-through cache previously used by the Intel486 processor.

According to Intel's processor manual, IA-32 processors (beginning with the Pentium processor) and Intel 64 processors use MESI (modified, exclusive, shared, invalid) cache protocol to maintain consistency with internal caches and caches in other processors.

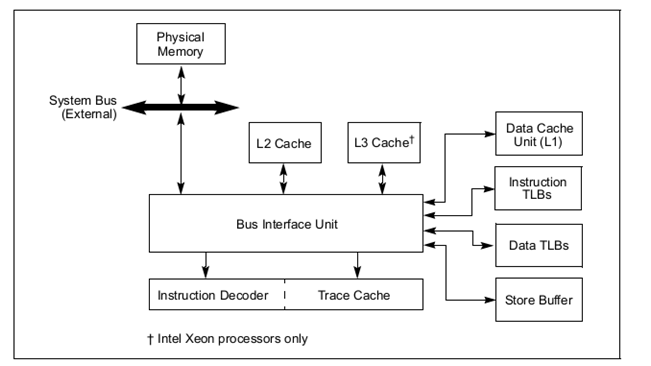

The figure below represents the cache structure of Intel Pentium 4 processors:

In the L1 data cache and in the L2/L3 unified caches, the MESI (modified, exclusive, shared, invalid) cache protocol maintains consistency with caches of other processors. The L1 data cache and the L2/L3 unified caches have two MESI status flags per cache line. Each line can be marked as being in one of the states defined in the table shown below. In general, the operation of the MESI protocol is transparent to programs. The L1 instruction cache in P6 family processors implements only the “SI” part of the MESI protocol, because the instruction cache is not writable. The instruction cache monitors changes in the data cache to maintain consistency between the caches when instructions are modified.

During replacement of a cache block, some MESI implementations require a message to be sent to memory when a cache line is flushed (an E to I transition), as the line was held exclusively in one cache before it was removed. In an alternate implementation, this replacement message can be avoided if the system is designed so that the flush of a modified/exclusive line requires an acknowledgment from the memory. However, this requires the flush to be held in a 'write-back' buffer until the reply arrives, to ensure the change is successfully propagated to memory.

There is a bandwidth trade-off in both these implementations.<ref>Intel® 64 and IA-32 Architectures Software Developer’s Manual</ref>

MESI in AMD's Am486DX microprocessors

AMD's enhanced Am486DX microprocessors implement the MESI protocol on systems with write-back cache support. These microprocessors allocate memory in the cache due to a read miss. Write allocation is not implemented.

To maintain coherence between cache and main memory, this protocol is implemented with the following characteristics:

- The system memory is always updated during a snoop when a modified line is hit.

- If a modified line is hit by another master during snooping, the master is forced off the bus and the snooped cache writes back the modified line to the system memory.

After the snooped cache completes the write, the forced-off bus master restarts the access and reads the modified data from memory. A cache line can occupy one of the four legal states M, E, S, I, which are indicated by 2 bits in the tag entry.<ref>AMD-K6 -2E Embedded Processor Data Sheet Publication</ref>

MESIF Protocol

The MESIF protocol is a cache coherency and memory coherence protocol developed by Intel for cache coherent non-uniform memory architectures. The protocol consists of five states: Modified (M), Exclusive (E), Shared (S), Invalid (I) and Forward (F).

The M, E, S and I states are the same as in the MESI protocol. When a processor requests a cache line that is stored in multiple locations, every location might respond with the data. However, the requesting processor only needs a single copy of the data, so the system is wasting a bit of bandwidth. Intel's solution to this issue is rather elegant. They adapted the standard MESI protocol to include an additional state, the Forwarding (F) state, and changed the role of the Shared (S) state. In the MESIF protocol, only a single instance of a cache line may be in the F state and that instance is the only one that may be duplicated. Other caches may hold the data, but it will be in the shared state and cannot be copied. In other words, the cache line in the F state is used to respond to any read requests, while the S state cache lines are now silent. This makes the line in the F state a first amongst equals, when responding to snoop requests. By designating a single cache line to respond to requests, coherency traffic is substantially reduced when multiple copies of the data exist.

When a cache line in the F state is copied, the F state migrates to the newer copy, while the older one drops back to S. This has two advantages over pinning the F state to the original copy of the cache line. First, because the newest copy of the cache line is always in the F state, it is very unlikely that the line in the F state will be evicted from the caches. In essence, this takes advantage of the temporal locality of the request. The second advantage is that if a particular cache line is in high demand due to spatial locality, the bandwidth used to transmit that data will be spread across several nodes.
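The migration of the F state can be sketched with a toy model of a single clean line. The function `read_line` and the `holders` dictionary are hypothetical names, and the sketch assumes that when no cache currently holds the line in F, memory supplies the data and the requester still receives the F state.

```python
def read_line(holders, requester):
    """Serve a read of one clean, shared line and migrate the F state.

    holders maps a cache name to "F" or "S" for that line and is updated
    in place. Returns the name of the responder, or "memory".
    """
    # Only the single cache in the F state answers; S copies stay silent.
    responder = next((cache for cache, state in holders.items() if state == "F"), None)
    if responder is not None:
        holders[responder] = "S"     # the older copy drops back to Shared
    holders[requester] = "F"         # the newest copy becomes the forwarder
    return responder if responder is not None else "memory"


holders = {"cache0": "F"}
first = read_line(holders, "cache1")     # answered by cache0
second = read_line(holders, "cache2")    # answered by cache1, the newest copy
```

After both reads, `cache2` is the only holder in the F state, so the work of forwarding has moved from cache to cache rather than staying pinned to `cache0`.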

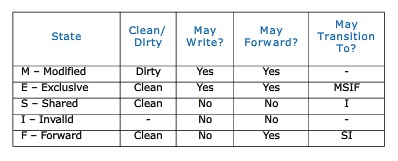

The table below summarizes the MESIF cache states:

Implementation Complexities

In comparison with the MESI protocol, the addition of the Forward state in the MESIF protocol reduces complexity by reducing the extent of communication between cores. This reduction comes from the design of the Forward state, under which a single cache responds to all read requests for shared data. As in the MESI protocol, the implementation of write-backs necessitates a write buffer, and the bus transactions relevant to these buffered blocks require careful handling. Though the inclusion of an additional state does increase design complexity, the concomitant decrease in communication between cores leaves the overall implementation complexity similar to that of the MESI protocol.

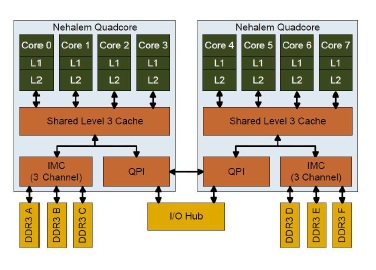

MESIF in Intel Nehalem Computer

Intel Nehalem Computer uses the MESIF protocol. In the Nehalem architecture each core has its own L1 and L2 cache. Nehalem also has a shared cache, implemented as an L3 cache. This cache is shared among all cores and is relatively large. It is inclusive, meaning that it duplicates all data stored in each individual L1 and L2 cache. This duplication greatly adds to inter-core communication efficiency because a given core does not have to locate data in another core’s cache. If the requested data is not found in any level of the core’s cache hierarchy, including the shared L3, the core knows the data is also not present in any other core’s cache. To ensure coherency across all caches, the L3 cache has additional flags that keep track of which core the data came from. If the data is modified in L3 cache, then the L3 cache knows if the data came from a different core than last time, and that the data in the first core needs its L1/L2 values updated with the new data. This greatly reduces the amount of traditional “snooping” coherency traffic between cores.<ref>Comparing Cache Organization and Memory Management of the Intel Nehalem Computer Architecture</ref>
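The role of the inclusive L3 and its per-core flags can be sketched as a lookup that decides which cores need to be snooped. This is a toy model with hypothetical names (`InclusiveL3`, `fill`, `cores_to_snoop`); it does not describe the actual Nehalem tag layout.

```python
class InclusiveL3:
    """Toy inclusive shared cache that records which cores may hold each line."""

    def __init__(self):
        # line address -> set of core ids whose L1/L2 may hold the line
        self.core_flags = {}

    def fill(self, addr, core):
        """Record that a core has brought the line into its private caches."""
        self.core_flags.setdefault(addr, set()).add(core)

    def cores_to_snoop(self, addr, requester):
        """Return the cores to snoop, or None on an L3 miss.

        Because the L3 is inclusive, a miss means no private cache holds
        the line, so no snoops are needed at all.
        """
        if addr not in self.core_flags:
            return None
        return self.core_flags[addr] - {requester}


l3 = InclusiveL3()
l3.fill(0x40, core=0)
```

Here `l3.cores_to_snoop(0x80, requester=1)` returns `None`, so the request goes straight to memory, while `l3.cores_to_snoop(0x40, requester=1)` returns `{0}`, so only core 0 is snooped.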

The cache organization of an 8-core Intel Nehalem Processor is shown below:

MESIF in Intel® QuickPath Interconnect

The Intel QuickPath Interconnect is a high-speed, packetized, point-to-point interconnect used in Intel's next generation of microprocessors, introduced in the second half of 2008. The narrow high-speed links stitch together processors in a distributed-memory-style platform architecture. The Intel QuickPath Interconnect includes the MESIF cache coherency protocol to keep the distributed memory and shared caches coherent during system operation. It supports both low-latency source snooping and direct cache-to-cache transfers.

The Intel® QuickPath Interconnect coherency protocol consists of two distinct types of agents: caching agents and home agents. A microprocessor will typically have both types of agents and possibly multiple agents of each type. A caching agent represents an entity which may initiate transactions into coherent memory, and which may retain copies in its own cache structure. The caching agent is defined by the messages it may sink and source according to the behaviors defined in the cache coherence protocol. A caching agent can also provide copies of the coherent memory contents to other caching agents.

A home agent represents an entity which services coherent transactions, including handshaking as necessary with caching agents. A home agent supervises a portion of the coherent memory. Home agent logic is not specifically the memory controller circuits for main memory, but rather the additional Intel® QuickPath Interconnect logic which maintains the coherency for a given address space. It is responsible for managing the conflicts that might arise among the different caching agents. It provides the appropriate data and ownership responses as required by a given transaction’s flow.

There are two basic types of snoop behaviors supported by the Intel® QuickPath Interconnect specification. Which snooping style is implemented is a processor architecture specific optimization decision. To over-simplify, source snoop offers the lowest latency for small multi-processor configurations. Home snooping offers optimization for the best performance in systems with a high number of agents.

The home snoop coherency behavior defines the home agent as responsible for the snooping of other caching agents. The Intel® QuickPath Interconnect home snoop behavior implementation typically includes a directory structure to target the snoop to the specific caching agents that may have a copy of the data. This has the effect of reducing the number of snoops and snoop responses that the home agent has to deal with on the interconnect fabric. This is very useful in systems that have a large number of agents, although it comes at the expense of latency and complexity. Therefore, home snoop is targeted at systems optimized for a large number of agents.

The source snoop coherency behavior streamlines the completion of a transaction by allowing the source of the request to issue both the request and any required snoop messages. The source snoop behavior offers lower latency at the expense of requiring agents to maintain a low latency path to receive and respond to snoop requests; it also imparts additional bandwidth stress on the interconnect fabric, relative to the home snoop method. Therefore, the source snoop behavior is most effective in platforms with only a few agents.<ref>The Common System Interface: Intel's Future Interconnect</ref>
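The difference in snoop traffic between the two behaviors can be sketched by listing which caching agents receive a snoop for one request. Both functions and the `directory` dictionary are hypothetical illustrations; real message flows involve more steps than this.

```python
def source_snoop_targets(agents, requester):
    """Source snoop: the requester itself snoops every other caching agent."""
    return [agent for agent in agents if agent != requester]


def home_snoop_targets(directory, addr, requester):
    """Home snoop: the home agent snoops only agents its directory lists for the line."""
    return sorted(directory.get(addr, set()) - {requester})


agents = ["cpu0", "cpu1", "cpu2", "cpu3"]
directory = {0x1000: {"cpu2"}}           # the home agent believes only cpu2 has a copy

broadcast = source_snoop_targets(agents, "cpu0")
targeted = home_snoop_targets(directory, 0x1000, "cpu0")
```

In this example source snooping sends three snoops while the directory-based home snoop sends one, at the cost of the extra hop through the home agent.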

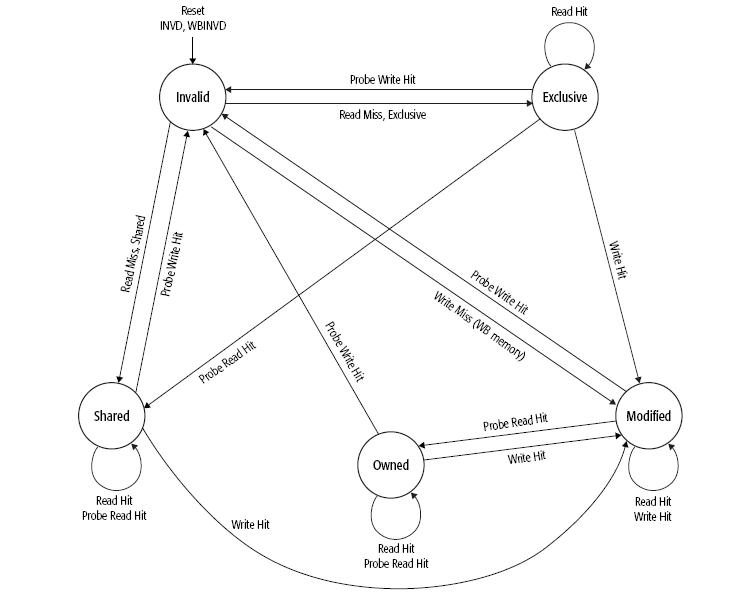

MOESI Protocol

MOESI is a five-state cache coherence protocol with the following states:

- Invalid: A cache line in the invalid state does not hold a valid copy of the data. Valid copies of the data can be either in main memory or another processor cache.

- Exclusive: A cache line in the exclusive state holds the most recent, correct copy of the data. The copy in main memory is also the most recent, correct copy of the data. No other processor holds a copy of the data.

- Shared: A cache line in the shared state holds the most recent, correct copy of the data. Other processors in the system may hold copies of the data in the shared state, as well. If no other processor holds it in the owned state, then the copy in main memory is also the most recent.

- Modified: A cache line in the modified state holds the most recent, correct copy of the data. The copy in main memory is stale (incorrect), and no other processor holds a copy.

- Owned: A cache line in the owned state holds the most recent, correct copy of the data. The owned state is similar to the shared state in that other processors can hold a copy of the most recent, correct data. Unlike the shared state, however, the copy in main memory can be stale (incorrect). Only one processor can hold the data in the owned state—all other processors must hold the data in the shared state.

MOESI is a more elaborate version of the simpler MESI protocol and avoids the need to write a dirty cache line back to main memory when another processor tries to read it. Instead, the Owned state allows a processor to supply the modified data directly to the other processor. This is beneficial when the communication latency and bandwidth between two CPUs is significantly better than to main memory. An example would be multi-core CPUs with per-core L2 caches. While MOESI can quickly share dirty cache lines from cache, it cannot quickly share clean lines from cache. If a cache line is clean with respect to memory and in the shared state, then any snoop request to that cache line will be filled from memory, rather than a cache. If a processor wishes to write to an Owned cache line, it must notify the other processors that are sharing that cache line. Depending on the implementation it may simply tell them to invalidate their copies (moving its own copy to the Modified state), or it may tell them to update their copies with the new contents (leaving its own copy in the Owned state).
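The behavior described above can be sketched from the point of view of a cache that snoops a read from another processor, and of an owner that writes to its Owned line. The table and function names are hypothetical, and the sketch assumes clean lines (E and S) are always filled from memory, as the text describes.

```python
# state held by the snooping cache -> (next state, does this cache supply the data?)
SNOOP_READ = {
    "M": ("O", True),    # dirty line is shared without writing it back to memory
    "O": ("O", True),    # the owner keeps supplying the dirty data
    "E": ("S", False),   # clean line: memory supplies the data
    "S": ("S", False),   # clean line: memory supplies the data
    "I": ("I", False),
}


def snoop_read(state):
    """Return (next_state, supplies_data) when another processor reads the line."""
    return SNOOP_READ[state]


def owner_write(policy):
    """Return (owner_state, sharer_state) after the owner writes an Owned line.

    policy is "invalidate" or "update", the two options mentioned in the text.
    """
    if policy == "invalidate":
        return "M", "I"      # sharers drop their copies, owner becomes Modified
    if policy == "update":
        return "O", "S"      # sharers receive the new contents, owner stays Owned
    raise ValueError(f"unknown policy: {policy}")
```

For example, `snoop_read("M")` gives `("O", True)`: the line becomes Owned and is supplied cache-to-cache, with no write-back to main memory.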

Implementation Complexities

This protocol is considerably more complex in terms of block states and transitions. While MOESI can quickly share dirty cache lines from cache, it cannot quickly share clean lines from cache. If a cache line is clean with respect to memory and in the shared state, then any snoop request to that cache line will be filled from memory, rather than a cache. The MOESI protocol has a further limitation. The Owned state gives full control to the owning processor but leaves the other processors in a read-only position. If any of them needs to write to its Shared line, the write has to be performed to main memory as well, which terminates the owning processor's Owned state. That is acceptable for most multiprocessor environments, which usually feature only one active writer and several readers, but it is a different story for multithreaded environments where several processors or processor cores may execute different threads of the same process concurrently. An additional state, Written, has been introduced to improve performance in this area, resulting in the MOWESI protocol. When a write to a Shared line occurs, the line's status changes to Written and all other processors either invalidate or update their obsolete copies, after which the line's status changes to Modified or Owned respectively. A main memory update is avoided either way. This protocol was introduced in the 2-core IBM POWER5 processor.

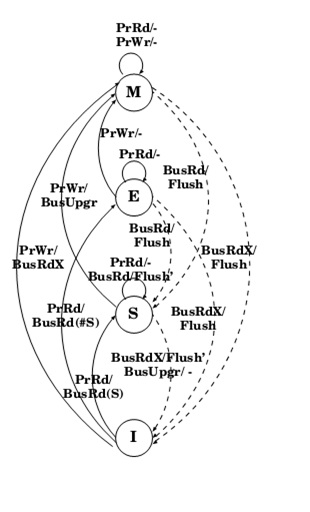

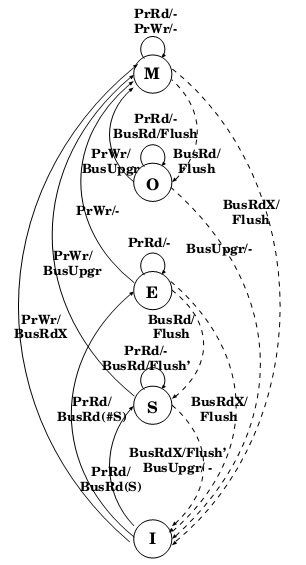

MOESI in AMD64 architecture

The cache-coherency protocol supported by the AMD64 architecture is MOESI. The accompanying figure shows the general MOESI state transitions possible with various types of memory accesses in the AMD64 architecture. This is a logical software view, not a hardware view, of how the cache-line state transitions. Instruction-execution activity and external-bus transactions can both be used to modify the cache MOESI state in multiprocessing or multi-mastering systems. To maintain memory coherency, external bus masters (typically other processors with their own internal caches) need to acquire the most recent copy of data before caching it internally. That copy can be in main memory or in the internal caches of other bus-mastering devices. When an external master has a cache read-miss or write-miss, it probes the other mastering devices to determine whether the most recent copy of data is held in any of their caches. If one of the other mastering devices holds the most recent copy, it provides it to the requesting device. Otherwise, the most recent copy is provided by main memory.<ref>AMD 64 Technology</ref>

There are two general types of bus-master probes:

- Read probes indicate the external master is requesting the data for read purposes.

- Write probes indicate the external master is requesting the data for the purpose of modifying it.

The state transitions involving probes are initiated by other processors and external bus masters into the processor. Some read probes are initiated by devices that intend to cache the data. Others, such as those initiated by I/O devices, do not intend to cache the data.

Some processor implementations do not change the data MOESI state if the read probe is initiated by a device that does not intend to cache the data.

State transitions involving read misses and write misses can cause the processor to generate probes into external bus masters and to read main memory. Read hits do not cause a MOESI-state change. Write hits generally cause a MOESI-state change into the modified state. If the cache line is already in the modified state, a write hit does not change its state. The specific operation of external-bus signals and transactions and how they influence a cache MOESI state are implementation dependent. For example, an implementation could convert a write miss to a WB memory type into two separate MOESI-state changes. The first would be a read-miss placing the cache line in the exclusive state. This would be followed by a write hit into the exclusive cache line, changing the cache-line state to modified.
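The processor-side rules in this paragraph can be sketched as a function that returns the sequence of states a line passes through for one access. The function `access` is a hypothetical illustration; it assumes a read miss finds no other cached copy (so the line is filled as exclusive) and it models the example implementation that splits a write miss into two steps.

```python
def access(state, op):
    """Return the list of MOESI states the line moves through for one access.

    state is one of "M", "O", "E", "S", "I"; op is "read" or "write".
    The last element of the list is the final state.
    """
    hit = state != "I"
    if op == "read":
        if hit:
            return [state]       # read hits do not change the MOESI state
        return ["E"]             # read miss (assumption: no other cache holds the line)
    if op == "write":
        if hit:
            return ["M"]         # write hits move the line to modified (or leave it there)
        return ["E", "M"]        # write miss handled as a read miss, then a write hit
    raise ValueError(f"unknown access: {op}")
```

For a write miss, `access("I", "write")` returns `["E", "M"]`, matching the two separate state changes in the example above.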

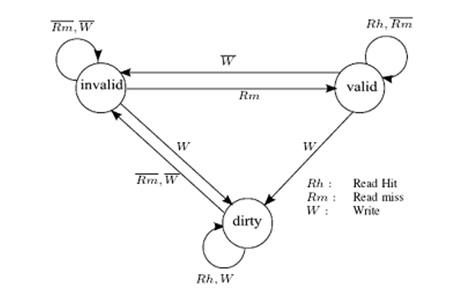

Synapse Protocol

In Synapse protocol, cache blocks are in one of the following states:

- Invalid: Indicates that the block is invalid

- Valid: Indicates that the cache block is unmodified and possibly shared

- Dirty: Indicates that the cache block is modified and there are no other copies.

Only blocks in state Dirty are written back when replaced. Any cache with a copy of a block in state Dirty is called the owner of that block. If no Dirty copy exists, main memory is the owner.

Implementation Complexities

The Synapse Expansion Bus includes an ownership-level protocol between processor caches. A non-write-through algorithm is employed in the cache to minimize the bandwidth between cache and shared memory and to reduce memory contention. This protocol does not require a great deal of hardware complexity. Since an extra bit is added to the main memory to indicate whether a cache has an exclusive (Dirty) copy of the block, this bit must be implemented correctly to prevent malfunction of the protocol.<ref>Synapse tightly coupled multiprocessors: a new approach to solve old problems</ref>

Synapse N+1 multiprocessor

Synapse protocol was used in the Synapse N + 1, a multiprocessor for fault-tolerant transaction processing. The N + 1 differs from other shared bus designs considered in that it has two system buses. The added bandwidth of the extra bus allows the system to be expanded to a maximum of 28 processors. A single-bit tag is included with each cache block in main memory, indicating whether main memory is to respond to a miss on that block. If a cache has a modified copy of the block, the bit tells the memory that it need not respond. Thus if the bit is set, an access to the block fails until the data is written back to memory by the cache with the exclusive copy and the bit is reset. This prevents a possible race condition if a cache does not respond quickly enough to inhibit main memory from responding.<ref>Cache Coherence Protocols: Evaluation Using a Multiprocessor Simulation Model</ref>
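The effect of the single-bit tag can be sketched with a toy model of main memory. The class `SynapseMemory` and its methods are hypothetical names; a failed access is represented by returning `None`, after which the requester would be expected to retry.

```python
class SynapseMemory:
    """Toy main memory with one ownership bit per block."""

    def __init__(self):
        self.data = {}
        self.cache_owned = set()     # blocks whose tag bit is set

    def read(self, addr, exclusive=False):
        """Return the block, or None if a cache owns it and memory must not respond."""
        if addr in self.cache_owned:
            return None              # access fails until the owner writes the block back
        if exclusive:
            self.cache_owned.add(addr)   # the requesting cache becomes the owner
        return self.data.get(addr, 0)

    def write_back(self, addr, value):
        """The owning cache writes the block back, which resets the tag bit."""
        self.data[addr] = value
        self.cache_owned.discard(addr)


mem = SynapseMemory()
mem.data[0x10] = 7
first = mem.read(0x10, exclusive=True)   # a cache takes a Dirty (exclusive) copy
blocked = mem.read(0x10)                 # memory stays silent while the bit is set
mem.write_back(0x10, 9)
after = mem.read(0x10)                   # the retry now succeeds with the new value
```

Because memory itself knows not to answer, correctness does not depend on the owning cache inhibiting memory quickly enough.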

Prefetching

With processor speeds continuing to outpace the memory subsystem, cache missing memory operations increasingly dictate application performance. While one typically thinks in terms of data misses and data prefetching, instruction cache misses can also represent a significant performance loss for modern processors. Indeed, instruction misses are usually more expensive than data misses as instruction misses stall the processor pipeline, while a significant proportion of data misses can often be overlapped with other instructions.

For many modern commercial workloads where the instruction working set is sufficiently large to cause significant stalls due to both Level 1 (L1) and Level 2 (L2) cache misses, instruction prefetching schemes can be utilized to reduce the performance impact of these misses. Prefetching schemes speculatively initiate a memory access for a cache line, bringing the line into the caches before the processor requests the cache line, minimizing, or even eliminating, the stall experienced by the processor. Instruction prefetching is not a new topic and a variety of different hardware (HW) and software instruction prefetch schemes have been proposed. However, most modern processors only support very rudimentary HW instruction prefetchers that target only sequential misses.

Furthermore, as the relative distance to memory increases, prefetches must be issued increasingly far in advance. Today, this look-ahead distance is on the order of hundreds of processor clock cycles. This necessitates that prefetchers become increasingly speculative and introduces new challenges: i) how to identify the direction the application is headed sufficiently early to allow timely prefetches to be issued and ii) although the prefetcher is speculating on program behavior hundreds of cycles in the future, it must be able to do so accurately, in order to minimize the prefetch bandwidth requirements and cache pollution. These challenges are even more relevant for Chip Multi-Processors (CMP), where there is the potential for inter-core pollution via the shared L2 cache, and the per-core bandwidth and cache resources are much more limited.

Due to prefetching, data can be modified in ways that the memory coherence protocol is not able to handle. An example of this type of situation is a page-table update followed by accesses to the physical pages referenced by the updated page tables, where prefetching may cause a correctness problem. Suppose, for example, that software wants to change the page-table entry for virtual page A from physical page M to physical page N. To do this, the tables that translate virtual page A to physical page M are brought into main memory and their cached copies are invalidated. Software can then change the page-table entry for virtual page A in main memory so that it points to physical page N instead of physical page M. Once the page-table update is done, software expects the processor to access the data from physical page N. However, the processor may have prefetched the data from physical page M before the page table for virtual page A was updated. Because the physical-memory references are different, the processor may not recognize them as requiring coherence checking. If the data from virtual page A is now accessed, the data from physical page M might be returned, while data from physical page N is expected. To prevent such errors, software must use serializing instructions or cache-invalidation instructions to guarantee that subsequent data accesses are coherent.
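The hazard can be illustrated with a toy simulation. Everything here is a simplification with hypothetical names: the prefetched data is kept in a dictionary keyed by virtual page, and clearing that dictionary stands in for the serializing or cache-invalidation instructions that software would issue.

```python
def run_scenario(serialize_before_access):
    """Simulate a page-table update racing with an earlier prefetch."""
    page_table = {"A": "M"}                       # virtual page -> physical page
    physical = {"M": "data in page M", "N": "data in page N"}
    prefetched = {}                               # data fetched ahead of use

    # The processor speculatively prefetches through the old mapping A -> M.
    prefetched["A"] = physical[page_table["A"]]

    # Software updates the page-table entry so that A now maps to N.
    page_table["A"] = "N"

    if serialize_before_access:
        prefetched.clear()                        # stand-in for a serializing instruction

    # The program now accesses virtual page A.
    if "A" in prefetched:
        return prefetched["A"]                    # stale data from physical page M
    return physical[page_table["A"]]
```

Without the serializing step, `run_scenario(False)` returns the data from physical page M, while `run_scenario(True)` returns the data from physical page N as software expects.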

Summary

The implementation of these cache coherence protocols in write-back caches significantly reduces off-chip bandwidth consumption in comparison with write-through caches. The simplest implementation is the three-state MSI protocol. The Synapse protocol is similar to this, with an extra bit to indicate whether the cache has an exclusive copy of the block. The extra Exclusive state in the MESI protocol removes the MSI drawback of incurring two bus transactions on a read-write sequence regardless of whether the block is stored in only one cache. This state distinguishes between a block that is clean and exclusively cached and a block that is clean and stored in multiple caches. Since the extra bus transaction is eliminated, this protocol avoids penalizing sequential programs and highly tuned parallel programs. However, the MESI protocol incurs additional complexity compared to the MSI protocol due to the addition of the Shared status line and its associated logic. The addition of the Forwarding state, which takes advantage of the temporal and spatial locality of requests, gives rise to the MESIF protocol. A more complex protocol with better performance is the MOESI protocol, which improves on the MESI protocol with an additional Owned state. Since dirty sharing is supported by allowing the dirty block to be shared by multiple caches, a cache flush does not need to update main memory. Compared to the MESI protocol, the MOESI protocol does not reduce the bandwidth usage on the bus, but it does reduce the bandwidth use to the main memory.<ref>Parallel Computer Architecture: A Hardware/Software Approach, By David E. Culler, Jaswinder Pal Singh, Anoop Gupta</ref>

Glossary

The terms that have been used are defined below:<ref>Integration and Evaluation of Cache Coherence Protocols for Multiprocessor SoCs</ref>

References

<references/>