CSC/ECE 506 Spring 2012/11a ht

Introduction

When dealing with a relatively small number of processors (8-16), according to Solihin 320, using a bus based shared memory structure is fine. Unfortunately, when you need to provide a shared memory structure for processors much greater than that, you will need a different set of organization. This new organization is needed due to the physical limitations of the bus. There are two ways you can create such a system. These include Distributed Shared Memory (DSM) or Non-Uniform Memory Access (NUMA). The benefits of having a DSM and NUMA is that we can now scale to a larger amount of processors. The disadvantage is that scaling in such a way may not be the most cost-effective solution, Solihin 320. For the remainder of this section, we will be discussing the performance of DSM's.

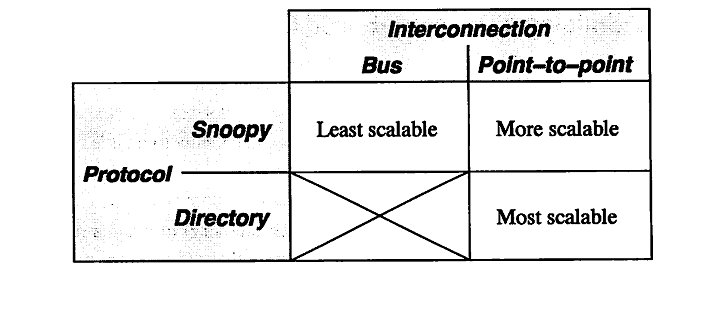

According to Solihin 320, there are two aspects that restrict the scalability of bus-based multiprocessors. These include the physical limitations of interconnections and the limitations of the protocol. To explain in detail, on a bus-based system, adding a processor will not affect any other physical restrictions on the system. Unfortunately, when adding a new processor, you will be reducing the speed of the bus. Second, the protocol needed to keep coherence does not scale well. As you increase the number of processors to the system, the amount of traffic also increases. This means that you might run the risk of overwhelming the bandwith. According to Solihin, there are a few ways that we can mitigate this problem. The following is from 321 of the Solihin textbook.

From the table, we can see that there is three ways to scale a multiprocessor system. The first being a single bus system. This is the least scalable due to the limitations of the bus wire itself. As you add processors you will decrease the bus speed due to having to increase the wire length. Also, you run into an issue of overwhelming the bus due to the amount of traffic. The second way is to use a point-to-point bus system. This allows for the speed of the bus to remain relatively fast, but since the traffic will also scale with the number of processors, there will be a limitation due to overwhelming the bus system with traffic. Lastly, the most scalable system to date is using a directory system. This allows for the bus to remain fast due to the short wires, and the bus traffic to remain low since the directory holds information on cache locations.

Protic <ref name="protic">Protic, J.; Tomasevic, M.; Milutinovic, V.; , "Distributed shared memory: concepts and systems," Parallel & Distributed Technology: Systems & Applications, IEEE , vol.4, no.2, pp.63-71, Summer 1996 doi: 10.1109/88.494605 paper</ref> defines a DSM to "consists of multiple independent processing nodes with local memory modules, connected by a general interconnection network." What this means is that rather than having these processors connected on a single bus line, there is a network of bus lines. The new issues that arise from such a method involves how to communicate with another node. This is usually solved using a message passing model that is effective depending on the topology that is used. According to Protic, "... compared to shared-memory systems, hardware problems are easier and software problems more complex in distributed-memory systems." What we can walk away with is that even though you have made the hardware limitation less of a factor, the software problem is now more complex in nature.

According to Nitzberg <ref name="nitzberg">Nitzberg, B.; Lo, V.; , "Distributed shared memory: a survey of issues and algorithms," Computer , vol.24, no.8, pp.52-60, Aug. 1991

doi: 10.1109/2.84877

paper</ref> DSM has been researched since the 1980's. There are many reasons why DSM has been an area of research focus. Uniprocessor bus-based systems suffer from a hardware and software limitation that can be mitigated using a DSM. But, this has only become an issue as we start having more and faster processors that we want to add to the system. There are three approaches that have been used to implement a DSM system. These include hardware, operating system, and compiler implementations.

Optimization Implementations

Shasta Protocol

The Shasta protocol improves on the regular software disributed shared memory system such that the shared data can be kept coherent at fine granularity. This is implemented by inserting inline code that checkes the cache state of shared data before each load or store. This protocol also allows the coherence granularity to be varied across different shared data structures in a single application, thus alleviating any potential inefficiencies that arise from the fixed large(page-size) granularity of the communication typical in most software shared memory systems. As in the hardware cache-coherent multiprocessors, shared data in this systems has three states - Invalid, Shared and Exclusive. This protocol provides a number of mechanisms for dealing with the long communication latencies in a workstation cluster. It minimizes extraneous coherence messages, and hence requires fewer messages to satisfy shared memory operations compared to protocols commonly used in hardware DSM systems. It also includes optimizations such as non-blocking stores, that aggressively exploit a relaxed memory consistency model. Other optimizations include detection of migratory data sharing, issuing multiple load misses simultaneously, merging of load and store misses to the same cache line, and support for prefetching and home placement directives. <ref name="shasta protocol">Scales, D.J.; Gharachorloo, K.; , "Shasta : A system for Supporting Fine-Grain Shared Memory across Clusters" Eighth SIAM conference on Parallel Processing for Scientific Computing, March 1996 [1]</ref>

Multiple Coherence Granularity

The Shasta protocol can support multiple granularities for communication and coherence, even with a single application. This can provide a significant performance boost in a software DSM system, since data with good spatial locality can be communicated at a coarse grain to amortize large communication overheads , while data prone to false sharing can use a finer sharing granularity. The implementation automatically chooses a block size based on the allocated size of a data structure. The basic heuristic is to choose a block size equal to the object size up to a certain threshold; the block size for objects larger than a given threshold is simply set to the base Shasta line size (typically set to be 64 bytes). The rationale for the heuristic is that small objects should be transferred as a single unit, while larger objects (e.g. large arrays) should be communicated at a fine granularity to avoid false sharing. Since the choice of the block size does not affect the correctness of the program, the programmer can freely experiment with various block sizes to tune the performance of an application. Controlling the coherence granularity in this manner is significantly simpler than approaches adopted by object-based or region-based DSM systems, since the latter approaches can affect correctness and typically require a more substantial change to the application.

Minimization of Protocol Messages

The Shasta protocol is designed to minimize extraneous coherence messages, given the relatively high overheads associated with handling messages in software DSM implementations. In this protocol, the current owner node specified by the directory guarantees to service a request that is forwarded to it. The fact that the current owner guarantees to service a request that is forwarded to it allows the protocol to complete all directory state changes when a requestor first reaches the home. This property eliminates the need for extra messages that are sent back to the home to confirm that the forwareded request is satisfied. Since this protocol supports dirty sharing, it also eliminates the need for sending an up-to-date copy of the line back to the home in case of read transaction when the home node is remote and the data is dirty in another node. Supporting exclusive requests reduces the need for fetching data on a store if the requesting processor already has the line in shared state. Also, the number of invalidation acknowledgements that are expected for an exclusive request are piggybacked on on of the invalidation acknowledgements to the requestor instead of being sent as a separate message.

Exploitation of Relaxed Memory Models

This protocol emulates the behavior of a processor with non-blocking loads and stores and a lockup-free cache, thus exploiting release consistency. Non-blocking stores are supported by issuing a read-exclusive or exclusive request, recording where the stre occured, and continuing. This information allows the protocol to appropriately merge the reply data with the newly written data that is already in memory. This protocol also exhibits a limited form of non-blocking load behavior

due to the batching optimization, since batching can lead to multiple outstanding loads . Lockup-free behavior for lines that are in a pending state is also supported by allowing writes to a pending line to proceed by storing the newly written data into memory and recording the location of the stores in the miss handler invoked due to the pending state.

Detection of Migratory Sharing Patterns

The Shasta protocol provides a sophisticated mechanism for detecting data that is shared in a migratory fashion and optimizing accesses to such data. Migratory sharing occurs when data is read and modified by different processors, leading to the migration of the data from one processor to another. By keeping extra information at each directory entry, the protocol detects whether the data in each line exhibits migratory behavior. A line is designated for migratory conversion after the migratory sharing pattern is successfully observed for a threshold number of times. A read request to a line that is designated for migratory conversion is automatically converted to a read-exclusive request at the directory. This conversion avoids the load miss followed by a store miss to the same line that is typical for migratory shared data. The protocol provides a mechanism to revert a line from migratory conversion. The reply data for a converted read request is cached with a special caching state , which is called exclusive migratory. Operations by the owner processor treat the line as exclusive, and a subsequent store by that processor changes the line to the ordinary exclusive state. The protocol detects a break in the migratory behavior if an incoming request from another processor arrives before the owner processor writes to the line ,i.e., while line is still in exclusive-migratory state. In this case, a message is sent to the home directory to nullify or revert the migratory conversion for that line. The line may subsequently be designated for migratory conversion if migratory behavior is observed again.

Prefetching and Home Placement Directives

The Shasta protocol allows the application to explicitly specify the home processor for individual pages instead of relying on the default round-robin allocation. The protocol also supports non-binding prefetch and prefetch-exclusive directives. The Shasta system can optionally supply information on source code lines that suffer the most number of remote misses by keeping extra state within the protocol. The programmer can use this information to identify places where prefetching may be helpful.

Parallel File Input/Output in Cohesion system

A parallel file I/O system that is independent of the memory consistency models reduces the file-related network traffic in the page-based software DSM systems built on a network of workstations. File accesses in page-based software Distributed Shared Memory (DSM) systems are usually performed by a single node, which may lead to a poor overall performance because a large amount of network traffic is generated to transfer data between this file handling node and the other nodes. This system alleviates this problem. The two main features in this design are the adaptive data distribution scheme and the delayed file access mechanism. The former distributes file blocks among the nodes according to the access pattern of the application, while the latter ensures that the data are transferred to the consumer node instead of the request node by exploiting the memory mapping features of the virtual shared address space of the DSM systems. Previously, most researches had been focused on the speedup of the computation phase of a DSM application via relaxed memory consistency models. The time-consuming file access operations were neglected. For ease of programming, the file accesses in a DSM application are usually performed in the sequentially executed initialization and completion phases on a certain node. As a result, a large amount of network traffic is generated to distribute the file data to other nodes during computation. This may degrade system performance since the networks employed in many DSM systems are usually slow compared to other components such as CPU, or even disk. The parallel file I/O system helps combat this network traffic. The effectiveness of this design has been verified by implementing a prototype on a page-based software DSM system named Cohesion.

Design

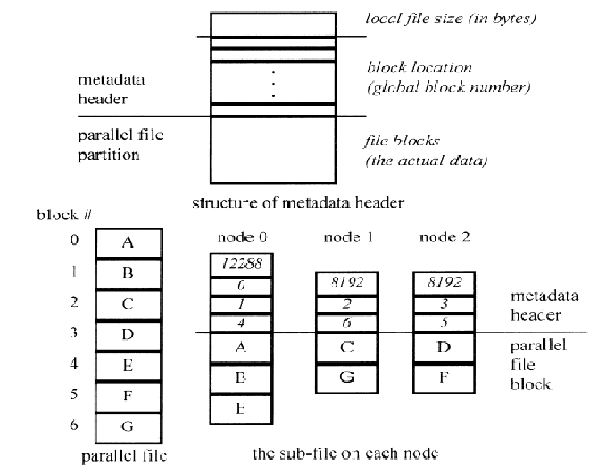

The two maingoals in the design are to parallelize the file accesses and reduce network traffic, and to minimize modifications on the existing DSMapplications. In this parallel file I/O system, every computation node also acts as a storage node. A parallel file is partitioned into file blocks, which are scattered among the nodes so that each node has a disjoint portion of the parallel file. In the pre-run of an application, the files are placed on the root node(node 0), which is the node that initiates the DSM system and the application. The data access pattern of the application is collected and the files are then partitioned into file blocks and redistributed among all nodes via an adaptive distribution scheme. In the subsequent executions, the root node issues the read requests via a delayed file access mechanism to ensure that the file blocks are placed in the consumer nodes instead of the requester node, i.e., the root node. Whenc omputation is completed, write requests are issued by the root node via the delayed access mechanism so that the data on each node are stored in its local disk instead of the root node, thereby reducing the network traffic.

Adaptive Data Distribution Scheme

An adaptive data distribution scheme is used to reduce the amount of network transfers. In this scheme, file blocks are distributed among nodes according to the application's access pattern. It is divided into the metadata file and the information collection technique. A metadata file for each parallel file on each node is required to keep track of the global block numbers of the file blocks in the local file portion, as the file blocks are distributed on the nodes according to the access patterns, which vary across applications. Every file block stored in the disk of a node has an entry in the associated metadata file, and this entry records the global block number of that file block in the parallel file. When the parallel file is opened, a request is broadcasted and the metadata files are opened. When the file read is issued, a read request is broadcasted and each node reads the file blocks from its local disk into the specified shared memory locations according to the metadata file and the starting memory address given in the read function call. When a file is written,the owner node of each memory page of the result data writes that page into its local disk,and the metadata are generated by recording its block number. An information collection technique records the access pattern of a DSM application. In the pre-run execution, the whole input file of the application is placed at the root node. During initialization, the root node reads the file into its local memory pages and maps these pages to their corresponding global virtual addresses spaces. During computation, page faults will be generated when the file-related pages, i.e., the memory pages containing the file data, are accessed bythe other nodes except the root node. This page fault information is collected by our information collection module. By the end of the computation phase, each node has a list of the previously accessed file-related pages and this list is sent to the root node for parsing. In this way, the application's access pattern of the input file is ascertained and the file blocks are then redistributed accordingly. After redistributing the file blocks, a metadata file on each node is then created according to this information. In case of a file write, the owner nodeof a target page writes that page into the local disk, a nd each node creates the metadata file accordingly. With this technique, the amount of network traffic during computation is greatly reduced, except in the pre-run execution of the application.

Delayed File Access Mechanism

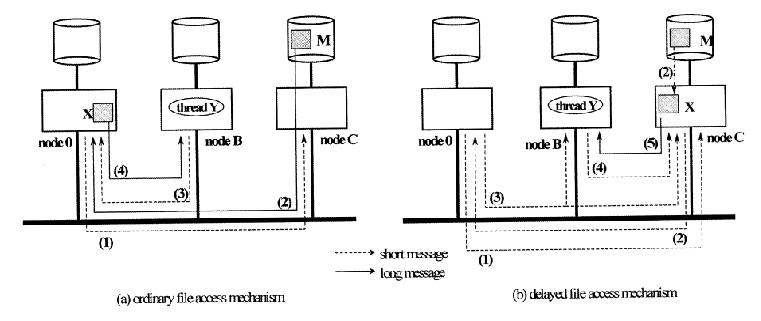

When a system employs ordinary file access mechanism, all file data are sent to the root node when a file is read no matter how we distribute the file blocks, because the requester node is always the root node in our system. The file data are then serialized by the network, negating the benefit of file I/O parallelization. Furthermore, the root node may use only a portion of the file data during computation. As a result, moving all file data to the root node for each file access induces unnecessary network traffic.

In the delayed file access mechanism, the requested file block is directly put into the specified memory page of the storag e node inst ead of the re-quester node. When the root node issues a file read operation, a file read request with the memory address specifed in the read operation is broadcasted . Every node then reads a file block from its local disk and stores the block in a local memory page. The metadata header is checked to find the virtual shared add ress space where this local memory page should be mapped, and the local memory page is then mapped to the corresponding virtual shared address space. The reading of the file blocks and the address mapping are repeated until all file blocks are processed. Each node then returns the file block numbers that it has handled to the root node, and the root node broadcasts the node locations of all memory pages containing the file blocks so that other nodes can locate these pages when page faults occur. In this way, the actual network transfer of file blocks is delayed until the data are actually used during computation. The amount of network messages in this mechanism may not be less than that in the previously mentioned ordinary mechanism, but the amount of file data transfer across network is lessened with the delayed mechanism.

Implementation in Cohesion system

Cohesion is a page-based software DSM system built on a network of intel ´86 based microcomputers running MSDOS with DOS-extender and iRMK Real -Time Kernel . The shared address space of a program running on Cohesion can be divided into two regions, which are categorized as the conventional memory and the eager release memory. A programmer can choose a region for his shared object by inheriting the corresponding base class provided by the system. In Cohesion, we have developed a kernel- level MSDOS-compatible file manipulation module which provides the basic file services such as open/close and read/write. There are four parallel file operations in this proto-type: POpen,PRead,PWrite, and PClose. POpen opens a parallel file for read or write access . An open notification is broadcasted by the root node. Each node then opens its local file portions and reads the metadata headersin case of a file read, or creates a local file in case of a file write. Via our delayed file access mechanism, PRead reads the content of the parallel file from the disks to the virtual shared address space and PWrite writes the contentof a virtual shared memory region to the disks. Experimentally, the total execution time of the 2048 * 1024 20-iterations Successive Over Relaxation program on eight nodes was reduced from 196 seconds with sequential I/O to 108 seconds with parallel I/O, while it was reduced in the 512 * 512 Matrix Multiplication program on eight nodes from 100 seconds with sequential I/O to 84 seconds with parallel I/O.

References

<references />