CSC/ECE 506 Fall 2007/wiki2 4 BV

MapReduce

A common problem in networked systems is the need to condense huge amounts of data into smaller, easily understood data sets. Whether the task is classifying the hits on a website by source or counting how often each word occurs in a body of text, the problem is the same: reducing a large volume of data to a small, understandable summary.

MapReduce is a programming model for processing large data sets. It was developed by Google as a scalable model for parallel processing, and it is designed for high scalability and high fault tolerance. MapReduce maps a large data set onto a smaller number of keys, reducing the data set to a more manageable size. More specifically, users specify a map function that processes a key/value pair and generates a set of intermediate key/value pairs. The reduce function merges the intermediate values that share a key and generates the consolidated output.
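To make the two user-supplied functions concrete, the following is a minimal single-process sketch of word counting. The names `map_fn`, `reduce_fn` and `run_mapreduce` are chosen here for illustration and are not part of any MapReduce library; the driver only imitates, sequentially, what a real framework would do across many machines.

```python
from collections import defaultdict

def map_fn(key, value):
    """Map: (document name, text) -> list of intermediate (word, 1) pairs."""
    return [(word.lower(), 1) for word in value.split()]

def reduce_fn(key, values):
    """Reduce: (word, [1, 1, ...]) -> (word, total count)."""
    return key, sum(values)

def run_mapreduce(inputs, map_fn, reduce_fn):
    """Sequential stand-in for the framework: map, group by key, reduce."""
    groups = defaultdict(list)
    for key, value in inputs:
        for ikey, ivalue in map_fn(key, value):
            groups[ikey].append(ivalue)
    # Reduce each intermediate key once, in sorted key order.
    return dict(reduce_fn(k, vs) for k, vs in sorted(groups.items()))

# Hypothetical input: two small documents keyed by name.
documents = [
    ("doc1", "the quick brown fox"),
    ("doc2", "the lazy dog and the fox"),
]
word_counts = run_mapreduce(documents, map_fn, reduce_fn)
```

Only `map_fn` and `reduce_fn` are specific to the problem; the grouping step in between stays the same for any job.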

Some examples where MapReduce can be employed are:

- Distributed Grep

- Count of URL Access Frequency

- Reverse Web-Link Graph

- Term-Vector per Host

Steps of Parallelization

Programs written in this model can be parallelized and executed concurrently on a number of systems. The model provides for partitioning the data, assigning the work to different processes, and merging the results.

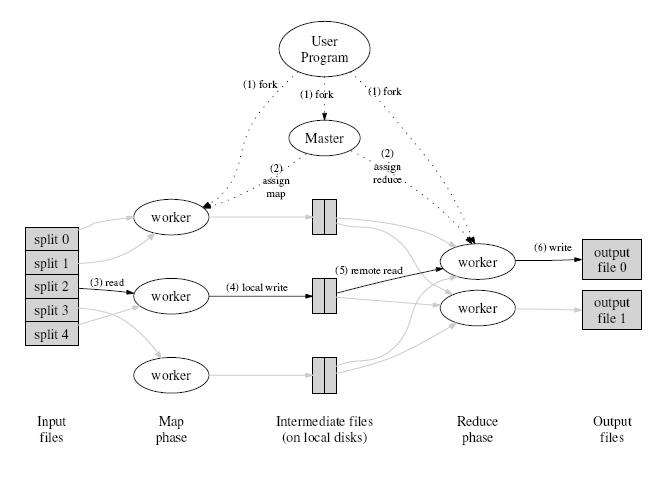

Execution Overview

Decomposition

Decomposition is a step that divides the problem into manageable tasks. In MapReduce the input data set is divided into M splits. Typically a master process takes care of the decomposition. The MapReduce algorithm tries to map a large dataset into a smaller number of key/value pairs.

The number of key/value pairs to be produced determines the size of each split. Consider the grep problem, taken here to mean counting the occurrences of a specific word or group of words in a document: the document is split into M equal parts. The size of each part is based on the number of distinct words to be searched, so searching for a larger group of words requires the document to be divided into smaller parts.
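The splitting step can be sketched as follows. This is an illustrative helper, not the framework's own splitter: `split_input` is a hypothetical name, the document is assumed to be a list of lines already in memory, and M is passed in directly rather than derived from the number of search words.

```python
def split_input(lines, m):
    """Divide a list of lines into m contiguous splits of near-equal size."""
    if m <= 0:
        raise ValueError("m must be positive")
    base, extra = divmod(len(lines), m)
    splits, start = [], 0
    for i in range(m):
        # The first `extra` splits take one additional line each.
        size = base + (1 if i < extra else 0)
        splits.append(lines[start:start + size])
        start += size
    return splits

# Hypothetical 10-line document divided into M = 3 parts.
document = [f"line {n}" for n in range(10)]
splits = split_input(document, 3)
```

Choosing a larger M yields smaller parts, which matches the observation above that a larger search set calls for a finer decomposition.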

Thus MapReduce provides an adaptive method for decomposing a problem.

Assignment

Assignment is the step that assigns the decomposed tasks to processes. In MapReduce, splits are assigned to idle map workers, which generate R partial or complete outputs. These partial outputs are consolidated into a final result. In the grep problem, each worker is assigned a part of the document along with the word(s) to be searched. The word(s) to be searched represent the key/value pair. Each worker searches the part of the document assigned to it and generates a partial count of the occurrences. The partial counts from the map workers are reduced to a final count of occurrences by the reduce workers. The master is responsible for assigning the M parts of the document and the R intermediate counts to idle workers. MapReduce thus provides load-based task assignment, which is beneficial in a scalable multiprocessor architecture.
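The grep example above can be sketched with a thread pool standing in for the master and its idle workers. This is only an approximation under stated assumptions: `map_count` and `reduce_counts` are hypothetical names, the splits are tiny in-memory lists, and `ThreadPoolExecutor` hands each split to whichever thread is free, which loosely mirrors load-based assignment on one machine.

```python
from concurrent.futures import ThreadPoolExecutor

def map_count(split, words):
    """Map worker: partial count of each search word in one split."""
    counts = {w: 0 for w in words}
    for line in split:
        for token in line.split():
            if token in counts:
                counts[token] += 1
    return counts

def reduce_counts(partials):
    """Reduce worker: merge partial counts into final totals."""
    totals = {}
    for partial in partials:
        for word, n in partial.items():
            totals[word] = totals.get(word, 0) + n
    return totals

# Hypothetical input: M = 3 splits and two search words.
splits = [["map reduce map"], ["reduce the data"], ["map the keys"]]
words = ["map", "reduce"]

# The pool plays the master: each split goes to the next idle worker.
with ThreadPoolExecutor(max_workers=2) as pool:
    partials = list(pool.map(lambda s: map_count(s, words), splits))

totals = reduce_counts(partials)
```

With two workers and three splits, one worker processes two splits; the final totals do not depend on which worker handled which part.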

Orchestration

The main purpose of orchestration is to reduce the synchronization and communication overhead between the processes. The high I/O traffic generated can become a bottleneck. In the above example, the intermediate values generated by the processes are consolidated into a final output. The reduce workers read the data from the map workers through remote procedure calls. The data read is then sorted by intermediate key so that all occurrences of the same key are grouped together. This makes it more likely that each reduce process works on all the values for the same key.
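The sort-and-group step can be illustrated with a short sketch. It assumes the map outputs have already been fetched into memory (the remote procedure calls are not modeled), and `shuffle` is a name used here for illustration only.

```python
from itertools import groupby
from operator import itemgetter

def shuffle(map_outputs):
    """Merge all map outputs, sort by key, and group the values per key."""
    pairs = sorted(
        (pair for output in map_outputs for pair in output),
        key=itemgetter(0),
    )
    return [
        (key, [value for _, value in group])
        for key, group in groupby(pairs, key=itemgetter(0))
    ]

# Hypothetical intermediate pairs produced by two map workers.
map_outputs = [
    [("fox", 1), ("the", 1)],
    [("the", 2), ("dog", 1)],
]
grouped = shuffle(map_outputs)
```

Sorting first matters because `groupby` only merges adjacent items; after the sort, every key appears exactly once with all of its values.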

Mapping

This phase assigns the processes to different processors with the intent of minimizing the communication between them. In the above problem, the document parts are mapped to different map workers (i.e., processors) by the master. It is quite possible that much of the time is spent transferring data (i.e., document parts and intermediate key/value pairs) across the network. One way of solving this problem is to store replicas of the document parts at different worker locations. The master can then assign the M parts in such a way that a replica of each input document part is local to the processor on which it is processed. This eliminates the file transfer from the master to the map worker and thereby reduces communication.
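A locality-aware assignment of this kind might be sketched as below. This is a simple greedy policy written for illustration, not the scheduling algorithm of any particular MapReduce implementation; `assign_splits`, the worker names and the replica table are all hypothetical.

```python
def assign_splits(replica_locations, idle_workers):
    """Return {split_id: worker}, preferring a worker that holds a replica."""
    free = list(idle_workers)
    assignment = {}
    for split_id, holders in replica_locations.items():
        if not free:
            break  # remaining splits wait until a worker becomes idle
        local = [w for w in free if w in holders]
        # Fall back to any idle worker when no replica holder is free.
        worker = local[0] if local else free[0]
        assignment[split_id] = worker
        free.remove(worker)
    return assignment

# Hypothetical replica table: which workers store a copy of each split.
replicas = {
    "split0": {"w1", "w2"},
    "split1": {"w2", "w3"},
    "split2": {"w1"},
}
assignment = assign_splits(replicas, ["w1", "w2", "w3"])
```

In this run `split0` and `split1` are processed where a replica already resides, while `split2` falls back to a remote worker because its only holder is busy. A greedy pass like this is not guaranteed to maximize local assignments; it only shows the preference for local data.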