Text metrics

This is the design review of our final project for CSC 517, Fall 2016. The project is titled Text Metrics.

Description

This project will provide a few text metrics for evaluating students' peer reviews. The metrics will describe the textual content and nature of each review, flag whether it contains offensive or improper language, and quantify the overall sincerity of the review.

Tasks to be completed

- Create a DB table [review_metrics] to record the metrics for each review: volume, presence of suggestions, errors/problems pointed out by the reviewer, and any offensive words used in the review text.

- Add a pop-up dialog or a label that displays the metrics, both for students (student_review/list) and for instructors (by adding content to the metrics column of the review_mapping/response_report page).

- Make sure the code works for both assignments with and without "vary rubric by rounds" selected.

- Make sure the code updates the review text metrics table when the peer reviews are updated.

- Sort the reviews by their text metrics on the “Your scores” page of the student view.

- Create tests for the new functionality so that test coverage increases.

Current Implementation

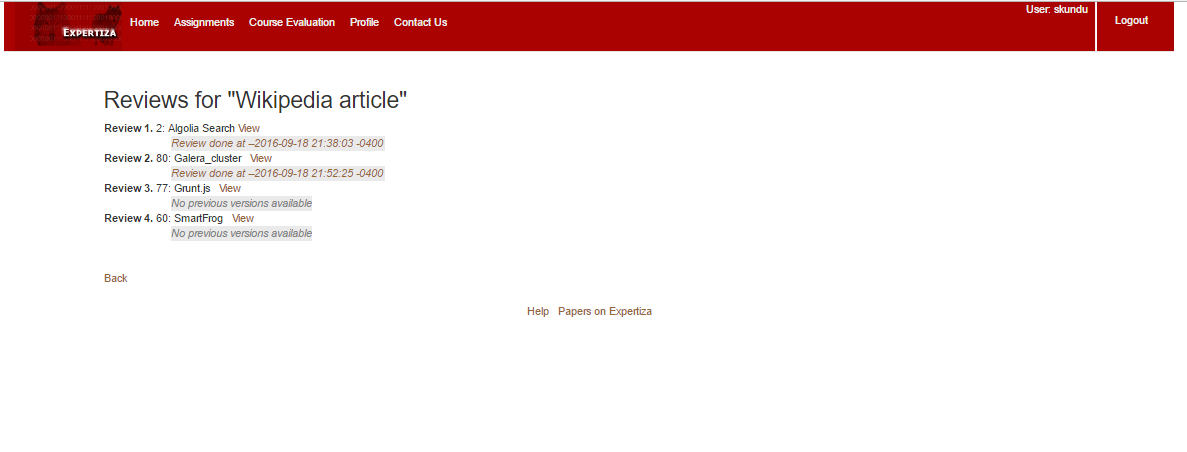

Currently, after completion of reviews, the reviewers can view their responses as shown in the screenshot below:

Instructors can also see the reviewers' responses. However, to analyze the quality of the reviews students give, instructors have to read through every review by every student manually, one at a time. This is time-consuming, and as a result reviewers may not be assessed properly.

To ease the evaluation of reviewers, we can add metrics that analyze the text the reviewers write. These metrics give the instructors evaluating the reviewers more information about each review.

Proposed Design

To carry out these tasks, we will add a new model, Review_metrics, with the following fields:

- response_id → int(11) → foreign key

- volume → int(11) → number of (distinct) words in the review text

- suggestion → tinyint(1) → whether the peer review offers any suggestions

- problem → tinyint(1) → whether the peer review points out problems or errors in the artifact

- offensive_term → tinyint(1) → whether the peer review contains any offensive terms
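A minimal plain-Ruby sketch of how one row of these metrics might be computed from a review's text. The struct ReviewMetricRow, the helper compute_metrics, and the cue lists are hypothetical: the design does not say how suggestions, problems, or offensive terms are detected, so simple keyword matching stands in for whatever detector is finally used, volume is assumed to mean the number of distinct words, and Ruby booleans stand in for the tinyint(1) flags.

```ruby
require 'set'

# Placeholder cue lists (hypothetical): the design does not specify how
# suggestions, problems, or offensive terms are detected.
SUGGESTION_CUES = %w[should could suggest recommend consider].freeze
PROBLEM_CUES    = %w[error bug wrong missing incorrect problem].freeze
OFFENSIVE_TERMS = %w[stupid idiotic].freeze

# Plain-Ruby stand-in for one row of the proposed review_metrics table.
ReviewMetricRow = Struct.new(:response_id, :volume, :suggestion, :problem, :offensive_term)

# Lower-cases the text and splits it into word tokens.
def tokenize(text)
  text.downcase.scan(/[a-z']+/)
end

# Builds one metrics row; booleans stand in for the tinyint(1) flags.
def compute_metrics(response_id, text)
  distinct = tokenize(text).to_set
  ReviewMetricRow.new(
    response_id,
    distinct.size, # volume: number of distinct words
    SUGGESTION_CUES.any? { |w| distinct.include?(w) },
    PROBLEM_CUES.any? { |w| distinct.include?(w) },
    OFFENSIVE_TERMS.any? { |w| distinct.include?(w) }
  )
end

row = compute_metrics(42, "The code is good, but you should add tests. One method has a bug.")
puts row.to_h.inspect
```

For the sample review in the block, volume comes out as 14, and both suggestion and problem are true because "should" and "bug" appear in the cue lists. Because the text is lower-cased before counting, "Good good GOOD" counts as a single distinct word.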

A corresponding review_metric_controller will provide the necessary CRUD operations for review metrics, such as adding, updating, and removing them.

We will modify the following view files:

- student_review/list.html.erb - for the student

- review_mapping/response_report.html.erb - for the instructor

Design Pattern Used

We use the Iterator pattern, which lets us traverse the elements of a collection without depending on how that collection is implemented. In our case, the collection is the set of responses a reviewer gives in a single feedback, and we iterate over these responses to collect the text metrics. The pattern provides a standard interface for starting an iteration and moving to the next element, regardless of the collection's implementation.
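In Ruby, the Iterator pattern is usually expressed by defining `each` and mixing in `Enumerable`. The sketch below uses hypothetical Feedback and Answer classes (not the actual Expertiza models) to show both forms: external iteration, where the caller starts an enumerator and advances it explicitly with `next`, and internal iteration, where a block such as a word counter is applied to every answer.

```ruby
# Hypothetical stand-ins: one Answer per rubric question in a reviewer's
# feedback. These are not the actual Expertiza models.
Answer = Struct.new(:question, :comments)

class Feedback
  include Enumerable

  def initialize(answers)
    @answers = answers
  end

  # The single method Enumerable requires. Callers iterate the same way
  # regardless of how the answers are stored internally.
  def each
    return enum_for(:each) unless block_given?

    @answers.each { |answer| yield answer }
    self
  end
end

feedback = Feedback.new([
  Answer.new("Q1", "Clear structure, but the tests are missing."),
  Answer.new("Q2", "Consider renaming the helper methods.")
])

# External iteration: start an iterator, then advance it explicitly.
iterator = feedback.each
loop do
  answer = iterator.next # raises StopIteration at the end, which ends `loop`
  puts "#{answer.question}: #{answer.comments.split.size} words"
end

# Internal iteration through Enumerable: total words across all answers.
total_words = feedback.sum { |answer| answer.comments.split.size }
puts "Total: #{total_words} words"
```

Because only `each` is defined, methods like `map`, `sum`, and `sort_by` come from `Enumerable` for free, so the metric-collection code never touches the internal array directly.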

Features to be added

We will add a label or pop-up that displays the metrics as icons, as shown below. These icons summarize each review at a glance in terms of the predefined metrics. The reviews will be sorted by their text metrics and displayed to the student on the 'Your Scores' page.
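One possible way to implement the sorting step, as a plain-Ruby sketch. The design does not specify how the metrics are combined into an order, so the ranking key here is an assumption: non-offensive reviews first, then reviews that offer suggestions and point out problems, then longer reviews. Review, rank_key, and sort_reviews are hypothetical names.

```ruby
# Hypothetical review records carrying the proposed metric columns.
Review = Struct.new(:reviewer, :volume, :suggestion, :problem, :offensive_term, keyword_init: true)

# Assumed ranking key (not fixed by the design): offensive reviews last,
# then more suggestion/problem flags first, then higher volume first.
def rank_key(review)
  flags = [review.suggestion, review.problem].count(true)
  [review.offensive_term ? 1 : 0, -flags, -review.volume]
end

def sort_reviews(reviews)
  reviews.sort_by { |r| rank_key(r) }
end

reviews = [
  Review.new(reviewer: "A", volume: 40, suggestion: false, problem: false, offensive_term: false),
  Review.new(reviewer: "B", volume: 25, suggestion: true,  problem: true,  offensive_term: false),
  Review.new(reviewer: "C", volume: 90, suggestion: true,  problem: true,  offensive_term: true),
  Review.new(reviewer: "D", volume: 60, suggestion: true,  problem: false, offensive_term: false)
]

puts sort_reviews(reviews).map(&:reviewer).join(", ")
```

With the sample data the order is B, D, A, C. The booleans are mapped to integers in the key because `true <=> false` is `nil` in Ruby, so boolean elements would make `sort_by` raise when it compares the key arrays element by element.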