CSC/ECE 506 Spring 2013/4a aj

Introduction

Automatic parallelization (also written auto parallelization or autoparallelization, or simply parallelization where the context implies automation) refers to converting sequential code into multi-threaded code, vectorized code, or both, in order to use multiple processors simultaneously in a shared-memory multiprocessor (SMP) machine.<ref>http://en.wikipedia.org/wiki/Automatic_parallelization</ref> Developers want parallelization because spreading work across multiple processing elements can significantly reduce the time a program or routine takes to complete. Ideally, developers would architect their applications to take advantage of parallel computers, but this may not happen for several reasons: the developer inherited a sequentially written legacy program, does not know how to program parallel computers, or prefers the simplicity of developing a sequential program. In these cases, the developer must rely either on another person to transform the code for parallel execution or on a compiler to identify and exploit parallelism in the source code. In past decades the ability of compilers to extract parallelism was minimal or non-existent. Today, a majority of compilers are able to identify and extract parallelism from source code. This wiki article was directed by Wiki Topics for Chapters 3 and 4.

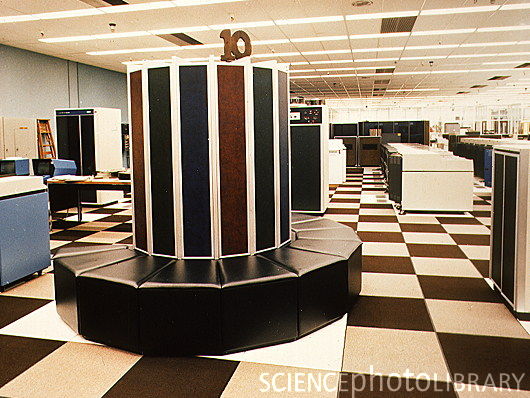

Identification <ref>http://www.bukisa.com/articles/13059_supercomputer-evolution</ref>

A primary goal of the compiler is to identify opportunities for parallelization, and to this end it may use feedback from the developer, high-level language constructs, low-level language constructs, and/or runtime data. Some of the simplest opportunities can be found in the high-level code; two of them are described in the sections below. The data parallel style has each processor work on part of the data. The functional style executes different statements or subroutines simultaneously on different processors.

Data Parallelism

//Embarrassingly parallel or pleasingly parallel code

for (int i=0; i<N; i++)

{

for (int j=0; j<N; j++)

{

A[i][j] = B[i][j] + C[i][j]; //no dependencies on previous iterations

}

}

In the code above, both loops can be parallelized. You can imagine an NxN grid of processors, with each processor working on one element of the array. However, compilers often avoid this because parallelizing the inner loop tends to incur too much overhead. Instead, imagine parallelizing only the outer loop: a single line of N processors, each responsible for one row of A (one value of i).
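The outer-loop-only distribution can be sketched as follows. This is a minimal illustration, not what any particular compiler emits: the helper name add_rows, the worker count P, and the block partitioning of i are assumptions made for the example.

```c
#include <assert.h>

#define N 4  /* illustrative array size, not taken from the article */

/* Hypothetical helper: worker p (0 <= p < P) computes its contiguous
   block of rows of A = B + C. No worker reads anything another worker
   writes, so the P calls may run in any order or at the same time. */
void add_rows(int p, int P, int A[N][N], int B[N][N], int C[N][N])
{
    assert(P > 0 && p >= 0 && p < P);
    int first = p * N / P;        /* first row owned by worker p */
    int last  = (p + 1) * N / P;  /* one past the last owned row */
    for (int i = first; i < last; i++)
        for (int j = 0; j < N; j++)
            A[i][j] = B[i][j] + C[i][j];
}
```

Calling add_rows once for every p from 0 to P-1 covers the whole array; a parallelizing compiler or runtime would issue those calls on different processors.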

//Not obviously parallelizable code

for (int i=1; i<N; i++)

{

for (int j=1; j<N; j++)

{

A[i][j] = A[i-1][j] + A[i][j-1]; //dependencies on both previous iterations

}

}

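However, the code above is parallelizable by sweeping the anti-diagonals: all elements on one anti-diagonal (constant i+j) can be computed at the same time. A drawback of this approach is that it is hard to keep the load balanced, because the short anti-diagonals near the corners leave most processors with little or no work.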
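A minimal sketch of the anti-diagonal sweep, assuming the recurrence reads its inputs from A itself (A[i][j] = A[i-1][j] + A[i][j-1]), since that is the form in which the iterations truly depend on one another. The function name wavefront and the size N are illustrative.

```c
#include <assert.h>

#define N 5  /* illustrative array size */

/* Visit the anti-diagonals d = i + j in increasing order. Every element
   on anti-diagonal d depends only on elements of anti-diagonal d - 1,
   so the iterations of the inner loop are independent of each other. */
void wavefront(int A[N][N])
{
    assert(N >= 2);
    for (int d = 2; d <= 2 * (N - 1); d++) {
        int i_lo = (d - (N - 1) > 1) ? d - (N - 1) : 1;
        int i_hi = (d - 1 < N - 1) ? d - 1 : N - 1;
        for (int i = i_lo; i <= i_hi; i++) {  /* candidates for parallel execution */
            int j = d - i;
            A[i][j] = A[i-1][j] + A[i][j-1];
        }
    }
}
```

The inner loop runs i_hi - i_lo + 1 times, which grows from 1 to N-1 and shrinks back to 1 over the sweep; this is the load imbalance described above.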

Algorithm/Functional Parallelism

//Functionally parallel code

for (int i=0; i<N; i++)

{

A[i] = B[i] + C[i]; //no dependencies on previous iterations

D[i] = E[i] + C[i]; //no dependencies on previous iterations

}

The above code can be split into two for loops that execute simultaneously:

for (int i=0; i<N; i++)

{

A[i] = B[i] + C[i]; //no dependencies on previous iterations

}

for (int i=0; i<N; i++)

{

D[i] = E[i] + C[i]; //no dependencies on previous iterations

}

Parallelism was identified by determining that the order of the operations was not important. Therefore, the operations could be conducted in parallel.
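One way to express the two independent loops is with OpenMP sections. This is only a sketch: it assumes one-dimensional arrays, and the function name add_pairs is illustrative. When OpenMP is not enabled at compile time, the pragmas are ignored and the two loops simply run one after the other with the same result.

```c
#include <assert.h>

/* Each section writes a different array (A or D) and only reads
   B, C and E, so the two sections may execute at the same time. */
void add_pairs(int n, int *A, int *D, const int *B, const int *C, const int *E)
{
    assert(n >= 0);
    #pragma omp parallel sections
    {
        #pragma omp section
        for (int i = 0; i < n; i++)
            A[i] = B[i] + C[i];

        #pragma omp section
        for (int i = 0; i < n; i++)
            D[i] = E[i] + C[i];
    }
}
```

Functional parallelism of this kind scales only to the number of independent statements (two here), whereas data parallelism scales with N.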

Parallelization Techniques in Compilers<ref>http://www.csc.villanova.edu/~tway/publications/DiPasquale_Masplas05_Paper5.pdf</ref>

Automated parallelization can take place at compile time, where the compiler performs different kinds of analysis to determine what can be parallelized. These include scalar analysis, array analysis, and commutativity analysis, and each can expose different ways to parallelize a program. With the growing need for parallel and distributed computing environments, it is often up to the compiler to find ways in which a user's program can be parallelized. This is advantageous for traditional software developers who have not been trained in parallelization techniques, since the compiler parallelizes their programs for them. However, it has proved to be a very difficult task, because the compiler knows nothing about the program beyond the code it is given.

Scalar and Array Analysis

Scalar and array analyses are usually performed in conjunction with each other in imperative, low-level languages like C or FORTRAN. "Scalar analysis breaks down a program to analyze scalar variable use and the dependencies that they have." Scalar analysis identifies whatever it can show to be parallelizable; any sections it cannot show to be parallelizable are left to the array analysis.

One type of array analysis is array data-flow analysis, in which the compiler looks for arrays that are privatizable. Privatization allocates a local copy of the array for each thread so that different threads can work on it independently. However, if there are any loop-carried dependencies on the array, the analysis deems it not privatizable and the loop therefore not parallelizable.
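The sketch below illustrates what a privatizable array looks like. It is an assumed example, not taken from the cited paper: the scratch array tmp is completely written before it is read in every iteration, so no value flows from one iteration to the next and each thread can be given its own copy. The names row_sums_of_squares and M are illustrative.

```c
#include <assert.h>

#define M 4  /* length of each row and of the scratch array; illustrative */

void row_sums_of_squares(int n, int in[][M], int *out)
{
    assert(n >= 0);
    #pragma omp parallel for
    for (int i = 0; i < n; i++) {
        int tmp[M];  /* private copy: one per iteration, hence one per thread */
        for (int k = 0; k < M; k++)
            tmp[k] = in[i][k] * in[i][k];  /* written first ... */
        int s = 0;
        for (int k = 0; k < M; k++)
            s += tmp[k];                   /* ... then read in the same iteration */
        out[i] = s;
    }
}
```

If tmp were a single array shared by all iterations, concurrent iterations would overwrite each other's values; privatization removes that conflict. Had an iteration read a tmp element written by an earlier iteration, the dependency would be loop-carried and the array could not be privatized.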

Although these types of analysis are powerful, they have limitations. The most significant is that they cannot support some features of the C programming language, notably pointers. These analyses cannot handle pointers because at compile time it is generally impossible to determine whether a dynamic pointer will refer to data objects that can be processed in parallel. This kind of analysis is therefore limited to loop parallelization where the data access pattern is known.
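The pointer problem can be made concrete with a small, assumed example (the function names are illustrative). Looking only at add_one, a compiler cannot tell whether dst and src overlap. If a caller passes overlapping pointers, each iteration reads what the previous one wrote, and running the iterations in parallel would change the result. The C99 restrict qualifier is one way for the programmer to promise that no such overlap exists.

```c
#include <assert.h>

/* dst and src may alias: with dst == src + 1 this loop carries a
   dependency from each iteration to the next. */
void add_one(int *dst, const int *src, int n)
{
    assert(n >= 0);
    for (int i = 0; i < n; i++)
        dst[i] = src[i] + 1;
}

/* restrict is the caller's promise that dst and src do not overlap,
   which makes every iteration independent. */
void add_one_noalias(int *restrict dst, const int *restrict src, int n)
{
    assert(n >= 0);
    for (int i = 0; i < n; i++)
        dst[i] = src[i] + 1;
}
```

With a four-element buffer of zeros, add_one(buf + 1, buf, 3) yields 0, 1, 2, 3 when run sequentially, whereas independent iterations working on the original values would yield 0, 1, 1, 1.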

Commutativity Analysis

Description about use of commutativity analysis in compilers here...

Paradigms<ref>http://www.cray.com/Assets/PDF/about/CrayTimeline.pdf</ref>

In 1993 Fujitsu surprised the world by announcing a Vector Parallel Processor (VPP) series designed to reach hundreds of Gflop/s. At the core of the system is a combined Ga-As/Bi-CMOS processor, based largely on the original design of the VP-200. The gate delay of the processor chips was made as low as 60 ps in the Ga-As chips by using the most advanced hardware technology available. The resulting cycle time was 9.5 ns. The processor has four independent pipelines, each capable of executing two Multiply-Add instructions in parallel, resulting in a peak speed of 1.7 Gflop/s per processor. Each processor board is equipped with 256 Megabytes of central memory.

Static<ref>http://www.versionone.com/Agile101/Methodologies.asp </ref>

Static analysis is the most rudimentary form of automatic parallelism, simply relying on the input source code for information.

Speculative <ref>http://www.netlib.org/benchmark/top500/reports/report94/Japan/node5.html</ref>

In 1977 Fujitsu produced the first supercomputer prototype, called the F230-75 APU, which was a pipelined vector processor added to a scalar processor. This attached processor was installed at the Japanese Atomic Energy Commission (JAERI) and the National Aeronautic Lab (NAL).

Dynamic


Adaptive/Interactive

This paradigm has the ability to extract additional coarse-grained parallelism by seeking feedback from the developer.

Polytope Model

Deals with an abstraction to polygons, polyhedrons, or more generally polytopes. http://en.wikipedia.org/wiki/Polytope_model

Profile-driven Model

Deals with an abstraction to

Pitfalls

Hitachi has been producing supercomputers since 1983 but differs from other manufacturers by not exporting them. For this reason, its supercomputers are less well known in the West. After having gone through two generations of supercomputers, the S-810 series started in 1983 and the S-820 series in 1988, Hitachi leapfrogged NEC in 1992 by announcing the most powerful vector supercomputer ever. The top S-820 model consisted of one processor operating at 4 ns and contained 4 vector pipelines with four pipelines and two independent floating-point units. This corresponded to a peak performance of 2 Gflop/s. Hitachi put great emphasis on a fast memory, although this meant limiting its size to a maximum of 512 MB. The memory bandwidth of 2 words per pipe per vector cycle, giving a peak rate of 16 GB/s, was a respectable achievement, but it was not enough to keep all functional units busy.

The S-3800 was announced two years ago and is comparable to NEC's SX-3R in its features. It has up to four scalar processors with a vector processing unit each. These vector units have in turn up to four independent pipelines and two floating-point units that can each perform a multiply/add operation per cycle. With a cycle time of 2.0 ns, the whole system achieves a peak performance level of 32 Gflop/s.

The S-3600 systems can be seen as the design of the S-820 recast in more modern technology. The system consists of a single scalar processor with an attached vector processor. The 4 models in the range correspond to a successive reduction of the number of pipelines and floating-point units installed.

Limitations

Luke Scharf, of the National Center for Supercomputing Applications, described the limitations of automatic parallelization well when he wrote of the problem of parallelization in general, "we usually solve this problem by putting a smart scientist in a room with a smart parallel programmer and providing them a whiteboard and a pot of coffee. Until a compiler can do what these people do, the utility of automatic parallelization is very limited." Compilers are thus limited by the amount and type of information made available to them. Yan Solihin provides a good example when describing the ocean current problem, in which each loop iteration in the code is dependent upon previous iterations. He explains that the code introduces these dependencies, but they are not relevant to solving the problem: at each step, each grid point simply takes its neighbors into account and produces a result. He therefore observes that the grid points can be separated by red-black partitioning, where each point's neighbors are of the other color, and each partition is assigned to a processor. This is something the compiler will probably never accomplish.[1]
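A minimal sketch of red-black partitioning on a small grid. The four-neighbor average used as the update rule is an assumption for illustration and may differ from the stencil in Solihin's example; the names sweep_color, red_black_iteration and N are likewise illustrative.

```c
#include <assert.h>

#define N 6  /* grid size including the fixed boundary; illustrative */

/* Update every interior point of one color (0 = red, 1 = black).
   All four neighbors of a point have the opposite color, so the
   updates made within one call never read each other's results. */
void sweep_color(double g[N][N], int color)
{
    assert(color == 0 || color == 1);
    for (int i = 1; i < N - 1; i++)
        for (int j = 1; j < N - 1; j++)
            if ((i + j) % 2 == color)
                g[i][j] = 0.25 * (g[i-1][j] + g[i+1][j] + g[i][j-1] + g[i][j+1]);
}

/* One full iteration: all red points first, then all black points. */
void red_black_iteration(double g[N][N])
{
    sweep_color(g, 0);
    sweep_color(g, 1);
}
```

Within each call to sweep_color the points can be divided among processors freely. The transformation changes the order in which points are updated, which is legal only because the programmer knows the original ordering was incidental to the problem; a compiler analyzing the sequential loop sees true dependencies and must preserve them.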

Fine-grained Parallelism


Coarse-grained Parallelism


Examples<ref>http://www.top500.org</ref>

Power FORTRAN Analyzer

A commercial product by Silicon Graphics International Corp.

Polaris

Designed in the early 1990s to take a sequential FORTRAN77 program and output an optimized version suitable for execution on a parallel computer. This compiler supported inter-procedural analysis, scalar and array privatization, and reduction recognition.<ref>http://polaris.cs.uiuc.edu/polaris/polaris-old.html</ref>

Stanford University Intermediate Format (SUIF 1,2)

Started out as an NSF-funded and DARPA-funded collaboration between a few universities in the late 1990s with the goal of creating a universal compiler. A major focus of SUIF was parallelization of C source code, which started from an intermediate program representation of the code. At this stage, various automatic parallelization techniques were used, including interprocedural optimization, array privatization, pointer analysis, and reduction recognition.

Jade

A DARPA-funded project that focused on the interactive technique for automatic parallelization. Using this technique, the programmer is able to exploit coarse-grained concurrency.<ref>http://www-suif.stanford.edu/papers/ppopp01.pdf</ref>

Kuck and Associates, Inc. KAP

A commercial compiler that was later acquired by Intel. KAP was an early product that supported FORTRAN and C and featured advanced loop distribution and symbolic analysis.

Intel C and C++ Compilers

A contemporary, advanced compiler that can incorporate multiple parallelization paradigms, including static analysis, interactive, and adaptive/profile-driven. Flags:

- -O0, no optimization

- -O1, optimize without size increase

- -O2, default optimization

- -O3, high-level loop optimization

- -ipo, multi-file inter-procedural optimization

- -prof-gen, profile guided optimization (generate profile data)

- -prof-use, profile guided optimization (use profile data)

- -openmp, OpenMP 3.0 support

- -parallel, automatic parallelization

Legend

- Vendor – The manufacturer of the platform and hardware.

- Rmax – The highest score measured using the LINPACK benchmark suite. This is the number that is used to rank the computers. Measured in quadrillions of floating point operations per second, i.e. petaflops (Pflops).

- Rpeak – This is the theoretical peak performance of the system. Measured in Pflops.

- Processor cores – The number of active processor cores used.

Top 10 supercomputers of today<ref>http://www.junauza.com/2011/07/top-10-fastest-linux-based.html</ref>

Below are the Top 10 supercomputers in the world (as of June 2011). An effort has been made to compare the architectural features of these supercomputers.

1. K-computer:

- K-computer is currently the world's fastest supercomputer. It was developed by Fujitsu at the RIKEN Advanced Institute for Computational Science campus in Kobe, Japan.

- As per the LINPACK benchmarking standards, K-computer delivered a peak performance of 8.16 petaflops, toppling Tianhe-1A from the number one spot.

- This supercomputer uses 68,544 2.0 GHz 8-core SPARC64 VIIIfx processors packed in 672 cabinets, for a total of 548,352 cores. In layman's terms, K-computer's performance is almost equivalent to the performance of 1 million desktop computers.

- The file system used here is an optimized parallel file system based on Lustre, called Fujitsu Exabyte File System.

- One disadvantage of this high performer is that it consumes about 9.8 MW of power, enough to light 10,000 houses. Compared with its closest competitor, the Tianhe-1A, the K-computer is far ahead, and it is highly unlikely to lose its number one spot any time soon.

Supercomputer Programming Models<ref>http://books.google.com/books?id=tDxNyGSXg5IC&pg=PA4&lpg=PA4&dq=evolution+of+supercomputers&source=bl&ots=I1NZtZyCTD&sig=Ma2fHyp336BSp4Yv2ERmfrpeo4&hl=en&ei=IAReS4WbM8eUtgf2u8GnAg&sa=X&oi=book_result&ct=result&resnum=5&ved=0CB4Q6AEwBA#v=onepage&q=evolution%20of%20supercomputers&f=false</ref>

The parallel architectures of supercomputers often dictate the use of special programming techniques to exploit their speed. The base language of supercomputer code is, in general, Fortran or C, using special libraries to share data between nodes. Today, environments such as PVM and MPI are used for loosely connected clusters, and OpenMP for tightly coordinated shared-memory machines. Significant effort is required to optimize a problem for the interconnect characteristics of the machine it is run on. The aim is to prevent any of the CPUs from wasting time waiting on data from other nodes.

The programming languages mentioned above are discussed briefly below.

1) Fortran, previously written FORTRAN, is a general-purpose, procedural, imperative programming language that is especially suited to numeric and scientific computing. It was originally developed by IBM in the 1950s for scientific and engineering applications, became dominant in this area early on, and has been in use for over half a century in computationally intensive areas such as numerical weather prediction, finite element analysis, computational fluid dynamics (CFD), computational physics, and computational chemistry. It is one of the most popular languages in high-performance computing and is the language used for programs that benchmark and rank the world's fastest supercomputers.

Fortran (a blend derived from The IBM Mathematical Formula Translating System) encompasses a lineage of versions, each of which evolved to add extensions to the language while usually retaining compatibility with previous versions. Successive versions have added support for processing of character-based data (FORTRAN 77), array programming, modular programming and object-based programming (Fortran 90 / 95), and object-oriented and generic programming (Fortran 2003).

External links

3. Top500 - The supercomputer website

5. Supercomputers to "see" black holes

6. Supercomputer simulates stellar evolution

7. Encyclopedia on supercomputer

10. Water-cooling System Enables Supercomputers to Heat Buildings

13. UC-Irvine Supercomputer Project Aims to Predict Earth's Environmental Future

14. Wikipedia

15. Parallel programming in C with MPI and OpenMP by Michael Jay Quinn

19. SuperComputers for One-Fifth the Price

References

<references/>