CSC/ECE 506 Spring 2012/10a vm

Prefetching and consistency models.

Prefetching

Introduction to prefetching

Almost all processors today use prefetching to speed up execution. Prefetching shortens the time a processor spends waiting on memory by predicting which cache block will be accessed next and fetching it ahead of time, so that an access that would otherwise miss finds the block already in the cache and the processor's idle time decreases. Prefetching performs best when it follows program order. However, it cannot always do so: a processor that predicts the outcome of a branch must anticipate the result and fetch the instructions along the predicted path. Things get more complex with graphics processing units (GPUs), where prefetching can take advantage of spatial coherence and the prefetched data are not instructions but texture elements that can be mapped to a polygon.<ref>Instruction prefetch wiki article.</ref>

Types of prefetching

Prefetching can be classified by whether it is binding or non-binding and by whether it is hardware or software controlled. With a binding prefetch, the value of a later reference, such as a register load, is bound at the time the prefetch completes. This comes with restrictions, because the bound value may become stale: another processor may write the location, invalidating it, in the window between the prefetch and the actual reference by the current processor. With a non-binding prefetch, the data is brought into the processor's cache and kept coherent until the processor actually reads or writes the value. Unlike a binding prefetch, this approach does not affect the correctness of any consistency model, and it can improve performance considerably.

The kind of prefetch that interests us is the hardware-controlled, non-binding prefetch. Prefetching done in software requires significant effort from the programmer and may not scale as programs grow longer, and a non-binding prefetch yields significantly more performance than a binding one. The main performance gain from prefetching comes from hiding the memory latency that the ordering constraints of any consistency model expose.

For a read operation, a read prefetch is issued to bring the data into the cache in a read-only shared state. Because the prefetch is non-binding, the read is guaranteed to return a correct value when it is finally allowed to perform, irrespective of when the prefetch completed. There is a catch, however: the prefetched copy may not stay valid until then. Consider a write by another processor to the location that was just read-prefetched. What happens next depends on whether the coherence scheme is update based or invalidation based. With an update-based scheme, the writing processor sends a bus update and the current processor picks it up to refresh its copy. With an invalidation-based scheme, the prefetched copy is invalidated, the later read suffers a coherence miss, and the value is fetched again, giving the illusion that the prefetch never occurred.

For a write operation, a read-exclusive prefetch can be used to acquire exclusive ownership of the line. Because the line is cached in exclusive mode, the write can proceed without sending an invalidation or update to other caches holding the same block. The write completes quickly because the line is already cached and there is no coherence miss, which reduces the idle time of the processor. Note that a read-exclusive prefetch is only possible when the coherence scheme is invalidation based, not update based. The same caveat seen in the read case applies here: if another processor writes to the same block before the local write performs, exclusive ownership is lost and the prefetched block is invalidated.

How does prefetching work?

In a multiprocessor environment, each processor has a load buffer and a store buffer. Consistency models are typically enforced by delaying an access to memory until the previous accesses have finished. Prefetching can be implemented by having the hardware automatically issue a prefetch for any request in the load or store buffer that cannot be performed right away because of these ordering constraints.

Let us trace the steps a prefetch request follows. First, the cache is checked to see whether the block is already present. If it is, the prefetch is discarded. If it is not, the prefetch is issued to memory. Depending on the type of prefetch, the response may or may not be immediate. When it arrives, the response is placed in the cache. The process can be optimized by detecting duplicate requests to the same location and attaching them to the prefetch that is already outstanding, so that no duplicate prefetch is sent to memory. The mechanism also places requirements on the memory system. First, it requires hardware-coherent caches, and the prefetched block must fit in the cache. Second, for write prefetches to work efficiently, an invalidation-based coherence scheme must be in place. In addition, the bus bandwidth must be high enough to handle several prefetch requests at the same time, and the caches will be busier when prefetching is implemented.
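The steps above can be sketched as a small software model. This is only an illustrative sketch, not a description of any real hardware: the class `PrefetchUnit`, its method names, and the string return codes are all hypothetical, and the cache is reduced to a set of block addresses.

```python
class PrefetchUnit:
    """Toy model of the prefetch path: cache lookup, issue, duplicate merging."""

    def __init__(self):
        self.cache = set()        # block addresses currently in the cache
        self.outstanding = {}     # block address -> ids of requests waiting on it
        self.memory_requests = 0  # prefetches actually sent to memory

    def request(self, block, req_id):
        # Step 1: look up the cache; a block that is present needs no prefetch.
        if block in self.cache:
            return "discarded"
        # Optimization: merge with a prefetch already in flight for this block.
        if block in self.outstanding:
            self.outstanding[block].append(req_id)
            return "merged"
        # Step 2: otherwise issue the prefetch to memory.
        self.outstanding[block] = [req_id]
        self.memory_requests += 1
        return "issued"

    def response(self, block):
        # Step 3: the response is placed in the cache; all merged requests
        # are satisfied by this single memory transaction.
        waiters = self.outstanding.pop(block)
        self.cache.add(block)
        return waiters
```

In this model, two requests to the same block result in a single memory transaction, and a later request to that block is dropped because the block is already cached.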

The amount of lookahead used for prefetching needs to be controlled: if more blocks are prefetched than required, the processor may never use them, and they may evict blocks that are actually needed, causing extra cache misses. The lookahead depth should therefore adjust dynamically, depending on whether the processor actually uses the prefetched blocks or evicts them without ever reading or writing them.
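One possible way to adjust the lookahead dynamically is sketched below. The policy shown (grow the depth by one when a prefetched block is used, halve it when a prefetched block is evicted unused) is an assumption chosen for illustration; the text above does not prescribe a specific policy, and the class name, method names, and default bounds are hypothetical.

```python
class LookaheadController:
    """Toy feedback controller for prefetch lookahead depth."""

    def __init__(self, depth=4, min_depth=1, max_depth=16):
        self.depth = depth
        self.min_depth = min_depth
        self.max_depth = max_depth

    def on_prefetched_block_used(self):
        # The processor referenced a prefetched block: looking further ahead
        # is probably safe, so grow the depth slowly (assumed policy).
        self.depth = min(self.max_depth, self.depth + 1)

    def on_prefetched_block_evicted_unused(self):
        # A prefetched block left the cache without a read or write:
        # we are polluting the cache, so back off quickly (assumed policy).
        self.depth = max(self.min_depth, self.depth // 2)
```

The asymmetric response (slow increase, fast decrease) is a design choice that favors protecting useful cache contents over aggressive prefetching.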

Prefetching with Sequential Consistency

The definition of Sequential Consistency (SC) might lead a reader to assume that implementing it requires a single memory module. This is not true: both SC and the relaxed memory models allow many optimizations that deliver high performance. In particular, both allow non-binding prefetching along with coherent caching.<ref>Cache consistency and sequential consistency</ref>

Let us look at an example to see how SC alone compares with prefetching under SC.

We assume that the processor has non-blocking reads and branch prediction machinery, that an invalidation-based cache coherence scheme is used, that a cache hit has a latency of 1 clock cycle, and that a cache miss has a latency of 100 clock cycles.

The following code segment represents a common producer scenario:

1) lock L (miss)
2) write A (miss)
3) write B (miss)
4) unlock L (hit)

Measuring how the code performs under SC only

Since we are considering a system with SC, we know that an access to memory cannot be issued until the previous access has completed. The first three accesses are misses and they occur one after the other without any interleaving, which gives us a total latency of 100 x 3 = 300 clock cycles. The fourth operation however is a cache hit, thus adding only 1 clock cycle. The four accesses take up 301 clock cycles to run.

Measuring how the same code performs under prefetching with SC

With prefetching we can get a significant performance improvement. The processor has branch prediction machinery, so let us assume the branch predictor follows the path on which lock L is successfully acquired. The lookahead then issues read-exclusive prefetches that bring A and B into the cache while the lock access is still outstanding, so all three misses are serviced in parallel. Once lock L completes after 100 clock cycles, the writes to A and B find their lines already in the cache and take 1 clock cycle each, for a running total of 102 clock cycles (1 miss followed by 2 hits). The fourth access is a hit and takes only 1 clock cycle, giving a total of 103 clock cycles.

Comparing the 103 clock cycles of prefetching under SC with the 301 clock cycles of SC only, we see a difference of nearly 200 clock cycles, which is a significant improvement. As the number of accesses increases, the gap only grows, up to the point where "SC only" is no longer a viable option.

Another example showing how SC vs prefetching with SC performs

We make the same assumptions as in the first example.

The following code segment represents a common consumer scenario:

1) lock L (miss)
2) read C (miss)
3) read D (hit)
4) read E[D] (miss)
5) unlock L (hit)

Measuring how the code performs under SC only

Inside the critical section there are three read accesses. Read D is assumed to be a hit, and read E[D] may be a hit or a miss depending on the value of D; here it is assumed to be a miss. Doing the same arithmetic as above, the total under SC only is 100 + 100 + 1 + 100 + 1 = 302 clock cycles.

Measuring how the same code performs under prefetching with SC

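With prefetching, the total time taken is 203 clock cycles (2 misses and 3 hits): read C is prefetched while the lock access is outstanding and becomes a hit, but read E[D] cannot be prefetched ahead of time because its address depends on the value of D, so it still pays the full miss latency.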

Thus again, we see that prefetching with SC performs better than "SC only".
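The cycle counts of both examples can be reproduced with a toy cost model. This is a simplified sketch under stated assumptions, not a processor simulator: it assumes that any miss whose address is known in advance is fully hidden behind the first full-latency miss, and that a miss whose address depends on earlier data (such as E[D]) cannot be prefetched. The function names and the tuple layout are hypothetical.

```python
HIT, MISS = 1, 100  # latencies in clock cycles, as assumed in the text

def sc_only(accesses):
    """Under SC without prefetching, every access waits for the previous one."""
    return sum(MISS if is_miss else HIT for _, is_miss, _ in accesses)

def sc_with_prefetch(accesses):
    """Misses with known addresses overlap with the first full-latency miss."""
    total = 0
    stalled_before = False  # has an earlier access already paid a full miss?
    for _, is_miss, prefetchable in accesses:
        if is_miss and prefetchable and stalled_before:
            total += HIT    # line arrived during the earlier stall
        elif is_miss:
            total += MISS   # nothing to overlap with, or address unknown
            stalled_before = True
        else:
            total += HIT
    return total

# (name, is_miss, address_known_in_advance)
example1 = [("lock L", True, True), ("write A", True, True),
            ("write B", True, True), ("unlock L", False, True)]
example2 = [("lock L", True, True), ("read C", True, True),
            ("read D", False, True), ("read E[D]", True, False),
            ("unlock L", False, True)]

for name, ex in (("example 1", example1), ("example 2", example2)):
    print(name, sc_only(ex), sc_with_prefetch(ex))
```

Under these assumptions the model gives 301 and 103 cycles for the first example and 302 and 203 cycles for the second, matching the figures worked out above.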

Evaluating the performance of prefetching with SC

We have seen two examples in which prefetching with SC does a better job than "SC only". But is this the maximum performance that can be extracted? Consider the second example, with three reads in a critical section. The address accessed by E[D] depends on the value of D. Read D is a cache hit, but under SC E[D] is not allowed to perform until read C finishes, so the miss on E[D] cannot be overlapped with the earlier accesses. Thus, although prefetching improves performance by hiding the delays caused by consistency constraints, it does not help where out-of-order use of the values returned by memory accesses is critical for the processor to proceed efficiently. In short, prefetching fails to do a good job when out-of-order consumption of values is important.<ref>Memory access dependencies in shared-memory multiprocessors</ref><ref>Performance evaluation of memory consistency models for shared-memory multiprocessors</ref>

Although the main purpose of prefetching is to let requests be serviced sooner than the ordering delays of the memory consistency model would otherwise allow, correctness must be maintained at all times. The number of times a prefetched value turns out to be incorrect should be small, and fortunately this holds in practice for several reasons. Invalidations of prefetched values are only loosely coupled to the delays needed to obtain a correct execution. These delays are imposed by the memory consistency model, and SC, the strictest of the models, imposes many of them. Usually, one process releases a synchronization variable much earlier than another process tries to acquire it. Many of the delays introduced by SC are therefore unnecessary and merely tax the system. <ref>Memory access buffering in multiprocessors</ref>

Cases where out-of-order consumption of values matters are not difficult to find in real applications, so prefetching alone cannot always hide the latency. Prefetching under SC was therefore not widely adopted, and new schemes had to be developed to overcome its drawbacks.

Solution #1: Prefetching with Release Consistency

Prefetching under release consistency (RC) performs at least as well as prefetching under SC. Let us evaluate its performance on the two examples we looked at previously, under the same assumptions about the system.

The first example

The following code segment represents a common producer scenario:

1) lock L (miss)
2) write A (miss)
3) write B (miss)
4) unlock L (hit)

Measuring how the code performs under RC only

Under RC, the write accesses are delayed until the lock access has performed. The lock L operation takes 100 clock cycles; the writes to A and B are then pipelined and together take another 100 + 1 cycles; and the fourth operation takes 1 clock cycle because it is a hit. The total is thus 202 clock cycles under the "RC only" approach.
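The arithmetic for this example can be written out as a short sketch. It assumes, as the 100 + 1 figure above suggests, that a group of pipelined accesses costs the longest latency in the group plus one cycle for each additional access; the function names are hypothetical and the model is only meant to reproduce this one calculation.

```python
HIT, MISS = 1, 100  # latencies in clock cycles, as assumed in the text

def pipelined(latencies):
    """Cost of accesses issued back to back so that their latencies overlap
    (assumed: longest latency plus one cycle per additional access)."""
    if not latencies:
        return 0
    return max(latencies) + (len(latencies) - 1)

def rc_only(acquire, body, release):
    """The acquire must perform first; the body is pipelined; then the release."""
    return acquire + pipelined(body) + release

# lock L (miss), write A (miss), write B (miss), unlock L (hit)
total = rc_only(MISS, [MISS, MISS], HIT)
print(total)  # 100 + (100 + 1) + 1
```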

Measuring how the same code performs under prefetching with RC

With prefetching we can get a significant performance improvement. The processor has branch prediction machinery, so let us assume the branch predictor follows the path on which lock L is successfully acquired. The lookahead then issues read-exclusive prefetches that bring A and B into the cache while the lock access is still outstanding, so all three misses are serviced in parallel. Once lock L completes after 100 clock cycles, the writes to A and B find their lines already in the cache and take 1 clock cycle each, for a running total of 102 clock cycles (1 miss followed by 2 hits). The fourth access is a hit and takes only 1 clock cycle, giving a total of 103 clock cycles.

In this example, prefetching with SC and prefetching with RC take the same amount of time.

The second example

The following code segment represents a common consumer scenario:

1) lock L (miss)
2) read C (miss)
3) read D (hit)
4) read E[D] (miss)
5) unlock L (hit)

Measuring how the code performs under RC only

Inside the critical section there are three read accesses. Read D is assumed to be a hit, and read E[D] may be a hit or a miss depending on the value of D; here it is assumed to be a miss. Doing the same arithmetic as above, the total under "RC only" is 203 clock cycles.

Measuring how the same code performs under prefetching with RC

With prefetching, the total time taken is 202 clock cycles (2 misses and 2 hits, pipelined).

Thus again, we see that prefetching with RC performs better than "RC only".

However, we have not gained much in the second example. Even though RC allows more relaxation in the order of operations issued to memory, the gain is small. Relaxed models offer more hardware implementation options than SC, so we might be tempted to conclude that hardware should use relaxed models instead of SC. On the other hand, prefetching with RC does not deliver the performance gain we expect to see, so it cannot be justified as a good approach either, even though it performs better than prefetching under SC.

The argument made against SC, that it taxes the system with unnecessary delays to ensure correctness, applies to the RC model as well. Unlike SC, RC inserts delays only at synchronization points, so it taxes the system less. Even so, this supports the argument that prefetching under RC is not a very good method.

Solution #2: Speculative Execution

Speculative execution does not remove the ordering constraints that SC and relaxed implementations impose; rather, it allows those implementations to be more aggressive. It lets the processor execute instructions eagerly. The same strictness about correctness still applies: instructions must be undone when a speculation proves incorrect, as happens with wrongly predicted branches. When the processor knows that an instruction cannot be wrong, it commits the instruction, which frees the resources that were used to execute it. There are two strict rules about committing an instruction: <ref>Speculative Execution wiki article</ref>

- All instructions prior to the current instruction must have committed.
- The current instruction's operation commits.

For example, a load or store operation commits when it is certain to read or write the correct memory value. With speculative execution, both relaxed and SC implementations can perform operations out of order and speculatively. To compare the performance of relaxed models and SC, consider the following sequence:

1) read A (miss)
2) read B (hit)

Under SC, read A is performed first and commits, and only then does read B commit. Under relaxed models, read B can commit before read A does. The difference in performance between relaxed models and SC is very narrow, although the measured difference depends on the benchmarks, latencies, and other parameters.

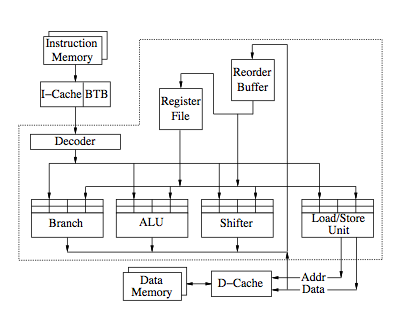

The figure above shows an example implementation of a speculative execution (SE) system.<ref>Two Techniques to Enhance the Performance of Memory Consistency Models by Kourosh Gharachorloo, Anoop Gupta, and John Hennessy</ref>

Idea behind speculative execution

The core idea of speculative execution is simple. Consider two accesses, a and b, in program order. Access a has a long latency, whereas b is a simple load, and the consistency model requires a to complete before b can perform. Speculative execution for load accesses proceeds as follows. The processor obtains the value returned by access b before a completes and proceeds with it. When a completes, the processor checks whether the speculated value of b is the same as the value b would return after a's completion. If they match, the speculation was correct and the processor proceeds without undoing any instructions. If they do not match, access b must be redone to obtain the correct value. These steps can be summarized as three mechanisms:

a) The speculative mechanism
b) The detection mechanism
c) The correction mechanism

a) The speculative mechanism - The speculative mechanism performs the access, obtains the returned value, and proceeds to use it. If the access hits in the cache, the value is returned immediately; if it misses, the access is pipelined with other accesses, just as we have seen with prefetching.

b) The detection mechanism - The straightforward way to detect whether the speculated value is correct is to repeat the access once the consistency model would have allowed it to perform without speculation. This is not the only way, however: we can instead monitor the coherence transactions on that location in the cache to see whether an invalidation arrives. This gives another improvement over the prefetch technique: with speculative execution the cache is accessed only once, rather than twice as is the norm with prefetching. Going back to our example of two accesses a and b, the consistency model requires access b to be deferred until access a completes, but under speculative execution b can proceed without waiting for a. The detection mechanism monitors for an update or invalidation to the location accessed by b, which indicates that the speculation is incorrect. If no update or invalidation message arrives, we can be assured that the speculated value is correct.

c) The correction mechanism - Once the detection mechanism determines that the speculated value is incorrect, the correction mechanism comes into play. It first discards the computation that used the speculated value. The load accesses and computations that follow the speculated load can be discarded as well, just as a processor recovers when a branch prediction has gone wrong.
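The three mechanisms can be illustrated with a small software model of a speculative-load buffer. This is a hedged sketch only: the class `SpeculativeLoadBuffer`, its methods, and the use of a Python dictionary as a stand-in for coherent memory are all hypothetical, and real hardware would also squash the instructions that follow the mis-speculated load.

```python
class SpeculativeLoadBuffer:
    """Toy model of speculate / detect / correct for load accesses."""

    def __init__(self, memory):
        self.memory = memory   # dict: address -> value (stand-in for memory)
        self.entries = {}      # address -> value consumed speculatively
        self.squashes = 0      # number of mis-speculations detected

    def speculative_load(self, addr):
        # Speculative mechanism: perform the access and use the value at once,
        # remembering it until earlier accesses have completed.
        value = self.memory[addr]
        self.entries[addr] = value
        return value

    def on_invalidation(self, addr):
        # Detection mechanism: a coherence message for a location with a
        # pending speculative load means the consumed value may be stale.
        if addr in self.entries:
            del self.entries[addr]
            self.squashes += 1
            return True        # caller must discard dependent work and reissue
        return False

    def retire(self, addr):
        # Earlier accesses completed and no invalidation arrived:
        # the speculated value is known to be correct.
        return self.entries.pop(addr)


memory = {"D": 5}
buf = SpeculativeLoadBuffer(memory)
first = buf.speculative_load("D")        # value used before it is safe
memory["D"] = 7                          # another processor writes D
must_redo = buf.on_invalidation("D")     # detection
if must_redo:
    second = buf.speculative_load("D")   # correction: reissue the load
final = buf.retire("D")
```

Note that detection here relies only on watching invalidations, so the cache is not accessed a second time unless the speculation actually fails.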

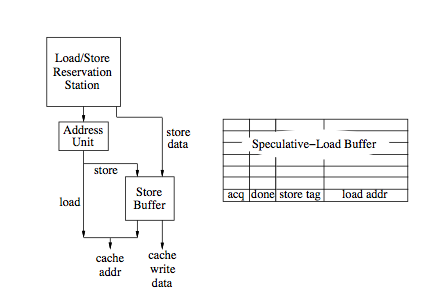

The figure above shows the organization of the load/store functional unit of an SE system.

The speculative mechanism is superior to the prefetch mechanism (both under SC and RC) by giving an opportunity to use out of order speculated values.

Referring back to the example we discussed while talking about performance of prefetching with RC/SC

The following code segment represents a common consumer scenario:

1) lock L (miss)
2) read C (miss)
3) read D (hit)
4) read E[D] (miss)
5) unlock L (hit)

The pipelining in prefetching and in speculative execution is the same, so we might expect the same level of performance. However, read D no longer causes a bottleneck, because under speculative execution its value can be consumed immediately, before the previous accesses commit. We therefore expect both the SC and RC implementations to finish in 104 clock cycles (equivalent to 1 miss and 4 hits).

Under speculative execution, a load access can be issued as soon as its address is known, irrespective of the consistency model being supported. Speculative execution also imposes requirements on the system. All three mechanisms discussed need hardware support; implemented in software, they may not deliver the performance gain we expect.

Illustrative example

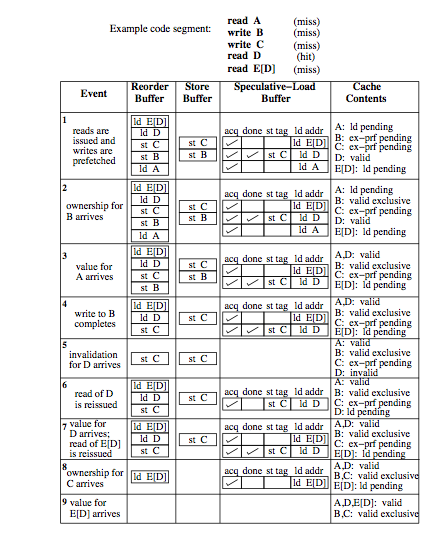

The figure above is the example we will use to see how SE works.

In the figure above, the contents of the various buffers are shown along with the cache contents for each event that occurs. All three mechanisms that we have discussed are traced in the example. The reorder buffer holds the instructions that have been fetched and decoded. Tracing through the events, initially the loads are issued, together with exclusive-mode prefetches for the stores. In the meantime, while the store buffer works on the store operations, the speculative-load buffer holds three loads, and the value returned by read D has already been used by the processor. The second event indicates that ownership of B arrives; however, the store is not completed immediately, because its completion is delayed by the reorder buffer. The third event indicates that the value of A arrives, and the entries for A are purged from both the speculative-load and reorder buffers. Once load A completes, the reorder buffer signals the store buffer to allow store B to complete. This is a hit, because the line is already cached, and so it finishes quickly. Store C is next to be served in the reorder buffer, and its access is merged with the earlier prefetch request for the same location.

Now, to make things interesting, let us assume that an invalidation arrives for D. This causes a mismatch with the value speculated for D, so load D and the instructions following it are purged, as indicated by event 5. The 6th event shows the correction mechanism fetching the two instructions again; load D is once again speculative, because store C has not finished. The 7th event shows the new value in location D; since it is now cached and known, the load of E[D] proceeds. The 8th event shows that store C has finished; since it does not affect D, the speculated value is correct, and load D is retired from the reorder and speculative-load buffers. The last event signifies the completion of E[D].

Future directions and ambitious implementations

The paper by Adve and Hill <ref>Implementing Sequential Consistency In Cache-Based Systems</ref> proposes an implementation for sequential consistency that is more efficient than conventional implementations. Their scheme requires an invalidation-based cache coherence protocol. At points where a conventional implementation stalls for the full latency of pending writes, their implementation stalls only until ownership is gained. To make the implementation satisfy sequential consistency, the new value written is not made visible to other processors until all previous writes by this processor have completed. The gains from this are expected to be limited, however, since the latency of obtaining ownership is often only slightly smaller than the latency for the write to complete. In addition, the proposed scheme has no provision for hiding the latency of read accesses. Since the visibility-control mechanism reduces the stall time for writes only slightly and does not affect the stall time for reads, we do not expect it to perform much better than conventional implementations. In contrast, the prefetch and speculative load techniques provide much greater opportunity for buffering and pipelining of read and write accesses.

The paper by Stenstrom <ref>A latency-hiding scheme for multiprocessors with buffered multistage networks</ref> proposes a mechanism for guaranteeing access order at the memory instead of at the processor. Each request contains a processor identification and a sequence number. Consecutive requests from the same processor get consecutive sequence numbers. Each memory module has access to a common data structure called next sequence-number table (NST). The NST contains P entries, one entry per processor. Each entry contains the sequence number of the next request to be performed by the corresponding processor. This allows the mechanism to guarantee that accesses from each processor are kept in program order. Theoretically, this scheme can enhance the performance of sequential consistency. However, the major disadvantage is that caches are not allowed. This can severely hinder the performance when compared to implementations that allow shared locations to be cached. Furthermore, the technique is not scalable to a large number of processors since the increment network used to update the different NST’s grows quadratically in connections as the number of processors increases.
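The ordering role of the NST can be sketched with a simplified single-module model. This is an illustration under assumptions, not Stenstrom's design: the class `MemoryWithNST` and its methods are hypothetical, sequence numbers are assumed to start at 0, and the sketch ignores the network that keeps the table consistent across multiple memory modules.

```python
class MemoryWithNST:
    """Toy memory that performs each processor's requests in sequence order."""

    def __init__(self, num_processors):
        self.nst = [0] * num_processors  # next sequence number per processor
        self.waiting = {}                # (pid, seq) -> buffered operation
        self.performed = []              # (pid, operation) in performed order

    def receive(self, pid, seq, operation):
        # Requests may arrive out of order; buffer until their turn comes.
        self.waiting[(pid, seq)] = operation
        # Perform every request from this processor that is now in order.
        while (pid, self.nst[pid]) in self.waiting:
            op = self.waiting.pop((pid, self.nst[pid]))
            self.performed.append((pid, op))
            self.nst[pid] += 1


mem = MemoryWithNST(num_processors=2)
mem.receive(0, 1, "write B")   # arrives early, must wait for sequence 0
mem.receive(1, 0, "read X")    # other processor, performed immediately
mem.receive(0, 0, "write A")   # unblocks processor 0's buffered request
print(mem.performed)
```

Program order is preserved per processor, while requests from different processors are free to interleave.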

To gain significant performance, a number of memory consistency models have been proposed. One disadvantage of the relaxed memory consistency models is that they present a more complex programming model to the user. The added performance also comes at the cost of extra hardware complexity. The prefetch technique is simple and can easily be absorbed into modern multiprocessor architectures, whereas the speculative execution technique is harder to incorporate into multiprocessor systems. In the future, we might expect better implementations of the speculative execution technique that can be absorbed more easily into multiprocessor architectures.

References

<references />