CSC/ECE 506 Spring 2011/ch6a jp

Cache Hierarchy

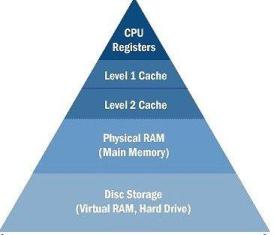

In a simple computer model, the processor reads data and instructions from memory and operates on the data. Over time, CPU operating frequency increased faster than the speed of memory and memory interconnects. For example, cores in Intel’s first-generation i7 processors run at 3.2 GHz, while the memory runs at only 1.3 GHz. Multi-core architectures also put more demand on memory bandwidth. As a result, memory access latency grows relative to the core clock, and the CPU can spend much of its time idle waiting for data. Memory thus became a performance bottleneck.

To solve this problem, the “cache” was invented. A cache is a temporary, volatile storage space like primary memory, but it runs at a speed close to the core frequency. The CPU can access data and instructions in the cache in a few clock cycles, while accessing data from main memory can take more than 50 cycles. In the early days of computing, the cache was implemented as a standalone chip outside the processor. In today’s processors, the cache is implemented on the same die as the core.
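
To put rough numbers on this gap, the average memory access time (AMAT) is commonly modeled as the hit time plus the miss rate times the miss penalty. The sketch below uses the 50-cycle memory latency mentioned above; the 3-cycle hit time and the miss rates are illustrative assumptions, not measurements.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time in cycles: hit_time + miss_rate * miss_penalty."""
    return hit_time + miss_rate * miss_penalty


HIT_TIME = 3        # assumed cache hit latency, in cycles
MISS_PENALTY = 50   # main memory latency in cycles, taken from the text above

# Illustrative miss rates only; real values depend on the workload and cache size.
for miss_rate in (0.20, 0.05, 0.01):
    cycles = amat(HIT_TIME, miss_rate, MISS_PENALTY)
    print(f"miss rate {miss_rate:.0%}: AMAT = {cycles:.1f} cycles")
```

Even under these assumed numbers, the average access cost stays far below the raw memory latency as long as most accesses hit in the cache.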

There can be multiple levels of cache, each one farther from the core and larger than the one before it. L1 is closest to the CPU and, as a result, the fastest to access. Next to L1 is the L2 cache, and then L3. The L1 cache is divided into an instruction cache and a data cache. This is better than having a larger combined cache because the instruction cache, being read-only, is easy to implement, while the data cache must support both reads and writes.

Cache Write Policies

Write hit policies

Write-through

Also known as store-through, this policy will write to main memory whenever a write is performed to cache.

Write-back

Also known as store-in or copy-back, this policy will write to main memory only when a block of data is purged from the cache storage.
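
The difference between the two write hit policies can be illustrated by counting main-memory writes for the same stream of stores. This is a minimal sketch, assuming a tiny direct-mapped cache that tracks whole blocks and allocates a line on every write miss; the function name `count_memory_writes` and the store trace are hypothetical.

```python
def count_memory_writes(blocks, policy, num_lines=4):
    """Count main-memory writes for a stream of stores to block addresses.

    Assumes a direct-mapped cache that allocates on a write miss, so that
    only the write hit policy differs between the two runs.
    Dirty blocks still resident at the end are not flushed or counted.
    """
    tags = [None] * num_lines
    dirty = [False] * num_lines
    mem_writes = 0
    for block in blocks:
        idx = block % num_lines
        if tags[idx] != block:                  # miss: replace the resident block
            if policy == "write-back" and dirty[idx]:
                mem_writes += 1                 # dirty victim is written back on eviction
            tags[idx] = block
            dirty[idx] = False
        if policy == "write-through":
            mem_writes += 1                     # every store also updates memory
        else:
            dirty[idx] = True                   # memory update is deferred
    return mem_writes


stores = [0, 0, 0, 1, 1, 4, 0]   # block 4 maps to the same line as block 0
print("write-through:", count_memory_writes(stores, "write-through"))
print("write-back:   ", count_memory_writes(stores, "write-back"))
```

In this trace, write-through sends every store to memory, while write-back touches memory only when a dirty block is evicted.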

Write miss policies

Write-allocate vs no-write-allocate

When a write misses in the cache, a line may or may not be allocated in the cache for the block. With write-allocate, a line is allocated in the cache for the written data. This policy is typically associated with write-back caches. With no-write-allocate, no line is allocated in the cache.

Fetch-on-write vs no-fetch-on-write

Fetch-on-write causes the block of data to be fetched from the next lower level of the memory hierarchy when a write misses, so a block is fetched on every write miss. With no-fetch-on-write, the block is not fetched on a write miss.

Write-before-hit vs no-write-before-hit

With write-before-hit, data is written to the cache before the cache tags are checked for a match. In case of a miss, the write displaces the data of the block already in the cache.

Combination Policies

Write-validate

This policy is a combination of no-fetch-on-write and write-allocate[4]. It allows partial lines to be written to the cache on a miss. It provides better performance, works with machines that have various line sizes, and does not add instruction execution overhead to the program being run.

Write-invalidate

This policy is a combination of write-before-hit, no-fetch-on-write, and no-write-allocate[4]. When a write misses, the line that was written is invalidated.

Write-around

This policy is a combination of no-fetch-on-write, no-write-allocate, and no-write-before-hit[4]. It uses a non-blocking write scheme: the data is written to the next lower cache without modifying the data of the cache line.
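
The write miss policies above can be compared by looking at what happens to a single cache line when a store misses. The following is a rough sketch, not an implementation from [4]: it assumes a line of four words with per-word valid bits, ignores write-back of a dirty victim, and assumes that allocated lines update memory later. The names `Line`, `write_miss`, and `demo` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

WORDS_PER_LINE = 4  # assumed line size, for illustration only


@dataclass
class Line:
    tag: Optional[int] = None
    valid: list = field(default_factory=lambda: [False] * WORDS_PER_LINE)
    data: list = field(default_factory=lambda: [None] * WORDS_PER_LINE)


def write_miss(policy, line, tag, word, value, memory):
    """Apply one write miss for block `tag` to `line` under the given policy."""
    if policy == "fetch-on-write":
        # Fetch the whole block from the next level, then merge the store.
        line.tag = tag
        line.data = list(memory[tag])
        line.valid = [True] * WORDS_PER_LINE
        line.data[word] = value
    elif policy == "write-validate":
        # Allocate without fetching: only the written word becomes valid.
        line.tag = tag
        line.data = [None] * WORDS_PER_LINE
        line.valid = [False] * WORDS_PER_LINE
        line.data[word] = value
        line.valid[word] = True
    elif policy == "write-invalidate":
        # The word was written before the tag check, so the resident
        # block is corrupted and the line must be invalidated.
        line.data[word] = value
        line.tag = None
        line.valid = [False] * WORDS_PER_LINE
        memory[tag][word] = value   # the store itself goes to the next level
    elif policy == "write-around":
        # Bypass the cache: the resident line is left untouched.
        memory[tag][word] = value
    else:
        raise ValueError(f"unknown policy: {policy}")


def demo(policy):
    memory = {7: [10, 11, 12, 13]}                            # block 7 in the next level
    line = Line(tag=3, valid=[True] * 4, data=[0, 1, 2, 3])   # resident block 3
    write_miss(policy, line, tag=7, word=1, value=99, memory=memory)
    return line, memory


for policy in ("fetch-on-write", "write-validate", "write-invalidate", "write-around"):
    line, memory = demo(policy)
    print(f"{policy:17s} tag={line.tag} valid={line.valid} next level={memory[7]}")
```

Only write-around leaves the resident block usable after the miss, and only fetch-on-write generates a read from the next level.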

Prefetching

A cache is very efficient in terms of access time once the data or instructions are in the cache. But when a process tries to access something that is not already in the cache, a cache miss occurs and the missing blocks need to be brought into the cache from memory. Cache misses are generally expensive because the processor has to wait for the data. (In parallel processing, a processor can execute other tasks while it is waiting on data, but there is some overhead for this.) Prefetching is a technique in which data is brought into the cache before the program needs it. In other words, it is a way to reduce cache misses. Prefetching uses some type of prediction mechanism to anticipate the next data that will be needed and brings it into the cache. It is not guaranteed that the prefetched data will be used. The goal is to reduce cache misses and thereby improve overall performance.

Some architectures have instructions to prefetch data into the cache. Programmers and compilers can insert these prefetch instructions into the code. This is known as software prefetching. In hardware prefetching, the processor observes the system behavior and issues prefetch requests itself. The Intel 8086 and Motorola 68000 were the first microprocessors to implement instruction prefetch. Graphics Processing Units benefit from prefetching due to the spatial locality of their data[8].

Advantages

- Improves overall performance by reducing cache misses.

Disadvantages

- Wastes bandwidth when prefetched data is not used.

- Hardware prefetching requires a more complex architecture. A second-order effect is the cost of implementing it in silicon and of validating it.

- Software prefetching adds additional instructions to the program, making the program larger.

- If the same cache is used for prefetched data, prefetching can cause other cache blocks to be evicted. If the evicted blocks are needed later, they will generate cache misses. This can be prevented by having a separate cache for prefetched data, but that comes with hardware costs.

- When the scheduler changes the task running on a processor, prefetched data may become useless.

Effectiveness

Prefetching effectiveness can be tracked by the following metrics:

- Coverage is defined as the fraction of original cache misses that become cache hits because the data was prefetched.

- Accuracy is defined as the fraction of prefetches that are useful.

- Timeliness measures how early the prefetches arrive.

Ideally, a system should have high coverage, high accuracy, and optimal timeliness. Realistically, aggressive prefetching can increase coverage but decrease accuracy, and vice versa. Also, if a prefetch is issued too early, the fetched data may be evicted before it is used, and if it is issued too late, the access still results in a cache miss.
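
The coverage and accuracy definitions translate directly into two ratios. The sketch below assumes that each useful prefetch removes exactly one original miss and that prefetching causes no extra misses; the counts for the "conservative" and "aggressive" prefetchers are made up to illustrate the trade-off and are not measured data.

```python
def coverage(useful_prefetches, original_misses):
    """Fraction of the original cache misses that prefetching turned into hits."""
    return useful_prefetches / original_misses


def accuracy(useful_prefetches, issued_prefetches):
    """Fraction of issued prefetches whose data was actually used."""
    return useful_prefetches / issued_prefetches


ORIGINAL_MISSES = 1000  # hypothetical misses without any prefetching

# Hypothetical prefetchers: (name, prefetches issued, prefetches that were useful)
scenarios = [
    ("conservative", 200, 180),
    ("aggressive", 2000, 600),
]

for name, issued, useful in scenarios:
    print(f"{name:12s} coverage={coverage(useful, ORIGINAL_MISSES):.2f} "
          f"accuracy={accuracy(useful, issued):.2f}")
```

With these assumed counts, the aggressive prefetcher covers more of the original misses but most of its prefetches are wasted bandwidth.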

Stream Buffer Prefetching

This prefetching technique uses a FIFO buffer in which each entry holds a cache line together with its address (or tag) and an available bit. The system prefetches a stream of sequential data into a stream buffer, and multiple stream buffers can be used to prefetch multiple streams in parallel. On a cache access, the head entries of the stream buffers are checked for a match along with the cache itself. If the block is not found in the cache but is found at the head of a stream buffer, it is moved into the cache and the next entry in the buffer becomes the head. If the block is found neither in the cache nor at the head of a buffer, the data is brought from memory into the cache and the subsequent address is assigned to a new stream buffer. Only the heads of the stream buffers are checked during a cache access, not the whole buffer, because checking all the entries in all the buffers would increase hardware complexity.
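
The lookup sequence described above can be sketched as a small simulation. This is a simplified model under several assumptions: prefetches complete instantly (so the available bit is omitted), the cache is an unbounded set of block addresses, and a new stream replaces buffers in round-robin order. The class name `StreamBuffers` and the access trace are hypothetical.

```python
from collections import deque


class StreamBuffers:
    def __init__(self, num_buffers=2, depth=4):
        self.depth = depth
        self.buffers = [deque() for _ in range(num_buffers)]
        self.next_victim = 0      # round-robin buffer replacement (an assumption)
        self.cache = set()        # unbounded cache of block addresses, for simplicity

    def access(self, block):
        """Return 'hit', 'buffer-hit', or 'miss' for one block address."""
        if block in self.cache:
            return "hit"
        for buf in self.buffers:
            if buf and buf[0] == block:            # only the head entry is compared
                self.cache.add(buf.popleft())      # move the block into the cache
                # Refill the tail with the next sequential block.
                buf.append(buf[-1] + 1 if buf else block + 1)
                return "buffer-hit"
        # Miss everywhere: fetch the block and start a new stream after it.
        self.cache.add(block)
        buf = self.buffers[self.next_victim]
        self.next_victim = (self.next_victim + 1) % len(self.buffers)
        buf.clear()
        buf.extend(range(block + 1, block + 1 + self.depth))
        return "miss"


def run(trace, num_buffers):
    sb = StreamBuffers(num_buffers=num_buffers)
    return [sb.access(block) for block in trace]


# Two interleaved sequential streams starting at blocks 100 and 200.
trace = [100, 200, 101, 201, 102, 202]
print("1 buffer: ", run(trace, 1))
print("2 buffers:", run(trace, 2))
```

With a single buffer the two streams keep displacing each other, so every access misses; with two buffers each stream gets its own buffer and only the first access of each stream misses.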

[Figure missing: cache hit improvement versus number of stream buffers]

The plot shows the cache hit improvement with respect to the number of stream buffers for different programs. The graph on the left compares 8 programs from the NAS suite, while the graph on the right shows programs from the Unix unity suite[7].

Prefetching in Parallel Computing

On a uniprocessor system, prefetching is definitely helpful for improving performance. On a multiprocessor system, prefetching is still useful, but there are tighter constraints on its implementation because data is shared between different processors or cores. In the message-passing parallel model, each parallel thread has its own memory space, and prefetching can be implemented in the same way as on a uniprocessor system.

In shared-memory parallel programming, multiple threads that run on different processors share a common memory space. If multiple processors use a common cache, then the prefetching implementation is similar to that of a uniprocessor system. Difficulties arise when each core has its own cache. Some of the scenarios that can occur are:

- Processor P1 has prefetched some data D1 into its stream buffer but has not used it yet. At the same time, processor P2 reads D1 into its cache and modifies it, and one of the cache coherency protocols is used to inform P1 about this change. Because D1 is not in P1’s cache, P1 may simply ignore the notification. When P1 then tries to read D1, it gets the stale data from its stream buffer. One way to prevent this is to improve stream buffers so that they can modify their data just like a cache. This adds complexity to the architecture and increases cost[6].

- The prefetching mechanism can fetch data D1, D2, D3, ..., D10 into P1’s buffer. Because of parallel processing, P1 only needs to operate on D1 to D5, while P2 operates on the remaining data. Some bandwidth was wasted in fetching D6 to D10 into P1’s buffer even though P1 never used them. There is a trade-off to be made here: if prefetching is very conservative it will lead to misses, and if it is not it will waste bandwidth.

- In a multiprocessor system, if threads are not bound to a core, the operating system can rebalance the threads across different cores. This requires the prefetched buffers to be discarded.
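
The stale-data scenario in the first bullet can be sketched as follows. This is only an illustration under stated assumptions: P2's write is assumed to be visible in memory immediately, and the coherence-aware buffer here drops (invalidates) the matching entry rather than updating it in place, which is a simpler variant of the fix described above. The class `SnoopingStreamBuffer` and its methods are hypothetical names.

```python
class SnoopingStreamBuffer:
    """Toy stream buffer for P1 that optionally reacts to remote writes."""

    def __init__(self, snoop):
        self.snoop = snoop
        self.entries = {}   # address -> prefetched value

    def prefetch(self, addr, memory):
        self.entries[addr] = memory[addr]

    def observe_remote_write(self, addr):
        """Called when another processor's write to `addr` is announced."""
        if self.snoop:
            self.entries.pop(addr, None)   # drop the now-stale copy

    def read(self, addr, memory):
        if addr in self.entries:
            return self.entries.pop(addr)  # served from the stream buffer
        return memory[addr]                # otherwise fetched from memory


def p1_reads_after_p2_write(snoop):
    memory = {"D1": 1}
    buf = SnoopingStreamBuffer(snoop)
    buf.prefetch("D1", memory)        # P1 prefetches D1 but does not use it yet
    memory["D1"] = 2                  # P2 modifies D1 (assumed visible in memory)
    buf.observe_remote_write("D1")    # coherence message reaches P1
    return buf.read("D1", memory)


print("without snooping:", p1_reads_after_p2_write(snoop=False))
print("with snooping:   ", p1_reads_after_p2_write(snoop=True))
```

Without snooping, P1 reads the old value from its buffer; with snooping, the stale entry is gone and P1 fetches the current value, at the cost of extra hardware in the buffer.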

References

1. http://www.real-knowledge.com/memory.htm

2. Computer Design & Technology, lecture slides by Prof. Eric Rotenberg

3. Fundamentals of Parallel Computer Architecture by Prof. Yan Solihin

4. “Cache write policies and performance,” Norman Jouppi, Proc. 20th International Symposium on Computer Architecture (ACM Computer Architecture News 21:2), May 1993, pp. 191–201.

5. Architecture of Parallel Computers, lecture slides by Prof. Edward Gehringer

6. “Parallel computer architecture: a hardware/software approach” by David E. Culler, Jaswinder Pal Singh, Anoop Gupta (pg 887)

7. “Evaluating Stream Buffers as a Secondary Cache Replacement” by Subbarao Palacharla and R. E. Kessler. (ref#2)

8. http://en.wikipedia.org/wiki/Instruction_prefetch
