CSC/ECE 506 Fall 2007/wiki1 4 a1

Architectural Trends

During past 10 years microprocessor architecture has evolved to the direction of increasing parallelism; ILP and TLP. As superscaler processor prevails, the techniques around superscalar come in. These techniques try to overcome the control and data hazard as deep pipelining and multiple issue overwhelms as well as increase the throughput of computing by TLP.

VLIW(Very Long Instruction Word)

VLIW is one way to expedite ILP with multiple issue. Multiple-issue processors come in two basic flavor: superscalar and VLIW. Superscalar processors issue varying number of instructions per clock and are either statically scheduled or dynamically scheduled.

In contrast to superscalar, VLIW is based on statically sceduled processing which is performed by the compiler. The compiler analyzes the programmer's instructions and groups multiple independent instructions into a large package. The first multiple-issue processors that required the instruction stream to be explicitly organized to avoid dependences used wide instructions with multiple operations per instruction. For this reason, this architecture was named VLIW. VLIW issues a fixed number of instructions formatted either as one large instruction or as a fixed instruction packet with the parallelism among instructions explicitly indicated by the instruction.

E.g. Trimedia, i860

one VLIW instruction encodes multiple operations; specifically, one instruction encodes at least one operation for each execution unit of the device. For example, if a VLIW device has five execution units, then a VLIW instruction for that device would have five operation fields, each field specifying what operation should be done on that corresponding execution unit. To accommodate these operation fields, VLIW instructions are usually at least 64 bits in width, and on some architectures are much wider.

The instruction scheduling logic that makes a superscalar processor is just boolean logic. In the early 1990s, a significant innovation was to realize that the coordination of a multiple-ALU computer could be moved into the compiler, the software that translates a programmer's instructions into machine-level instructions.

This type of computer is called a very long instruction word (VLIW) computer.

Statically scheduling the instructions in the compiler (as opposed to letting the processor do the scheduling dynamically) can reduce CPU complexity. This can improve performance, reduce heat, and reduce cost.

Unfortunately, the compiler lacks accurate knowledge of runtime scheduling issues. Merely changing the CPU core frequency multiplier will have an effect on scheduling. Actual operation of the program, as determined by input data, will have major effects on scheduling. To overcome these severe problems a VLIW system may be enhanced by adding the normal dynamic scheduling, losing some of the VLIW advantages.

Static scheduling in the compiler also assumes that dynamically generated code will be uncommon. Prior to the creation of Java, this was in fact true. It was reasonable to assume that slow compiles would only affect software developers. Now, with JIT virtual machines for Java and .net, slow code generation affects users as well.

There were several unsuccessful attempts to commercialize VLIW. The basic problem is that a VLIW computer does not scale to different price and performance points, as a dynamically scheduled computer can. Another issue is that compiler design for VLIW computers is extremely difficult, and the current crop of compilers (as of 2005) don't always produce optimal code for these platforms.

Also, VLIW computers optimise for throughput, not low latency, so they were not attractive to the engineers designing controllers and other computers embedded in machinery. The embedded systems markets had often pioneered other computer improvements by providing a large market that did not care about compatibility with older software.

In January 2000, a company called Transmeta took the interesting step of placing a compiler in the central processing unit, and making the compiler translate from a reference byte code (in their case, x86 instructions) to an internal VLIW instruction set. This approach combines the hardware simplicity, low power and speed of VLIW RISC with the compact main memory system and software reverse-compatibility provided by popular CISC.

Multi-threading

Multi-threading enables exploiting thread-level parallelism(TLP) within a processor. Multithreading allows multiple threads to share the functional units of a single processor in an overlapping mannor. In order to this sharing, the processor have to maintain duplicated independent state of each thread - regiter file, PC, page table and so on.

In multithreading, when the processor has to fetch data from slow system memory, instead of stalling for the data to arrive, the processor switches to another program or program thread which is ready to execute. Though this does not speed up a particular program/thread, it increases the overall system throughput by reducing the time the CPU is idle.

Simultaneous multithreading (SMT) is a kind of multithreading which uses the resources of a multiple-issue, dynamically scheduled processor to exploit TLP at the same time it exploits ILP. In the SMT, TLP and ILP are exploited simultaneously, with multiple threads using the issue slots in a single clock cycle.

Multi-core

Multi-core CPUs are typically multiple CPU cores on the same die, connected to each other via a shared L2 or L3 cache, an on-die bus, or an on-die crossbar switch. All the CPU cores on the die share interconnect components with which to interface to other processors and the rest of the system. These components may include a front side bus interface, a memory controller to interface with DRAM, a cache coherent link to other processors, and a non-coherent link to the southbridge and I/O devices. The terms multi-core and MPU (which stands for Micro-Processor Unit) have come into general usage for a single die that contains multiple CPU cores

Multi-core chips do more work per clock cycle, and thus can be designed to operate at lower frequencies, than their single-core counterparts. Since power consumption goes up proportionally with frequency, multi-core architecture gives engineers the means to address the problem of runaway power and cooling requirements.

Speculative Execution

While trying to get more ILP, managing control dependencies becomes more important but more burden. To remove the pipeline stall, branch prediction is applied for the instruction fetching stage. However, for the processor which executes multiple instructions per clock, it is not sufficient to predict accurately. A wide issue processor may need to execute a branch every clock cycle to attain the maximum performance. Under speculative execution, we fetch, issue, and execute instructions as if our branch predictions were always correct. When we meet the misprediction, the recovery mechanism can handle the situation.

E.g. PowerPC 603/604/G3/G4, MIPS R10000/R12000, Intel Pentium II/III/4, Alpha 21264, AMD K5/K6/Athlon

One problem with an instruction pipeline is that there are a class of instructions that must make their way entirely through the pipeline before execution can continue. In particular, conditional branches need to know the result of some prior instruction before "which side" of the branch to run is known. For instance, an instruction that says "if x is larger than 5 then do this, otherwise do that" will have to wait for the results of x to be known before it knows if the instructions for this or that can be fetched.

For a small four-deep pipeline this means a delay of up to three cycles — the decode can still happen. But as clock speeds increase the depth of the pipeline increases with it, and modern processors may have 20 stages or more. In this case the CPU is being stalled for the vast majority of its cycles every time one of these instructions is encountered.

The solution, or one of them, is speculative execution, also known as branch prediction. In reality one side or the other of the branch will be called much more often than the other, so it is often correct to simply go ahead and say "x will likely be smaller than five, start processing that". If the prediction turns out to be correct, a huge amount of time will be saved. Modern designs have rather complex prediction systems, which watch the results of past branches to predict the future with greater accuracy.

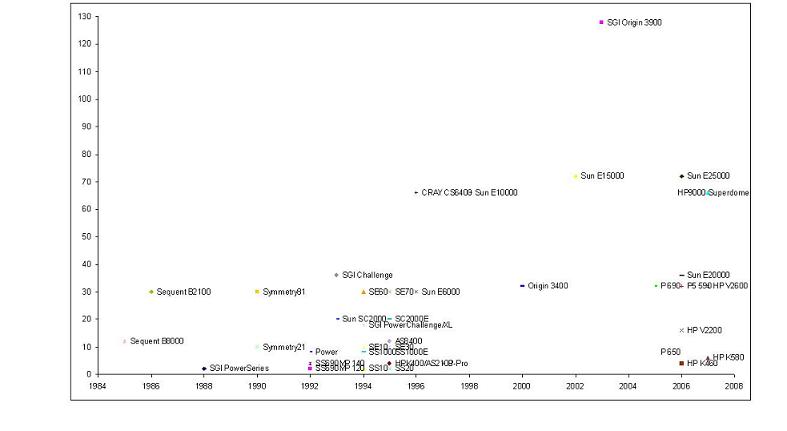

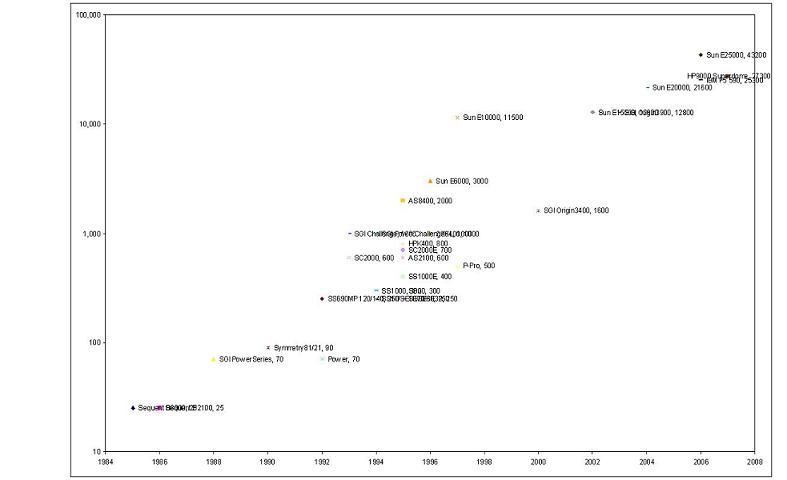

Updated Figure 1.8 & Figure 1.9

References

[1]John L. Hennessy, David A. Patterson, "Computer Architecture: A Quantitative Approach" 3rd Ed., Morgan Kaufmann, CA, USA [2]CE Kozyrakis, DA Patterson, "A new direction for computer architecture research", Computer Volume 31 Issue 11, IEEE, Nov 1998, pp24-32 [3]K.C. Yeager, "THE MIPS R10000 Superscalar Microprocessor", IEEE Micro Volume 16 Issue 2, Apr. 1996, pp28-41 [4]Geoff Koch, "Discovering Multi-Core: Extending the Benefits of Moore’s Law", Technology@Intel Magazine, Jul 2005, pp1-6 [5]Richard Low, "Microprocessor trends:multicore, memory, and power developments", Embedded Computing Design, Sep 2005 [6]Artur Klauser, "Trends in High-Performance Microprocessor Design", Telematik 1, 2001