CSC/ECE 517 Fall 2017/E1790 Text metrics

Introduction

In this final project, "Text Metrics", we first integrate a couple of external sources, such as GitHub and Trello, to fetch information. Second, we introduce the idea of "Readability." To measure readability, we import the content of the write-ups written by students, split the text into sentences to count the sentences, the words, and so on, and then calculate the readability indices from these counts using published formulas.

Our primary task for the final project is to design tables that allow the Expertiza app to store data fetched from external sources, such as GitHub, Trello, and write-ups. As a next step, we would like to use this raw data for visualized charts and grading metrics.

Current Design

Currently, three models store the raw data from metric sources: Metrics, Metric_data_points, and Metric_data_point_types.

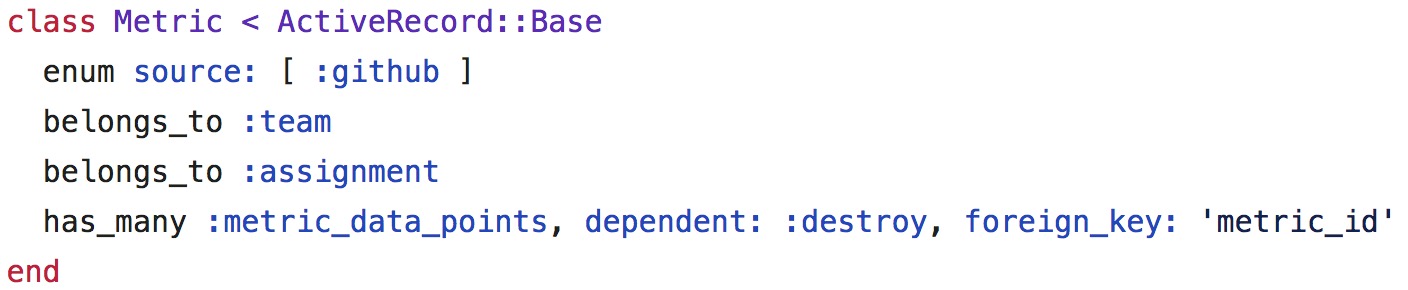

Metric

Model:

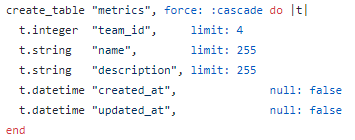

Schema:

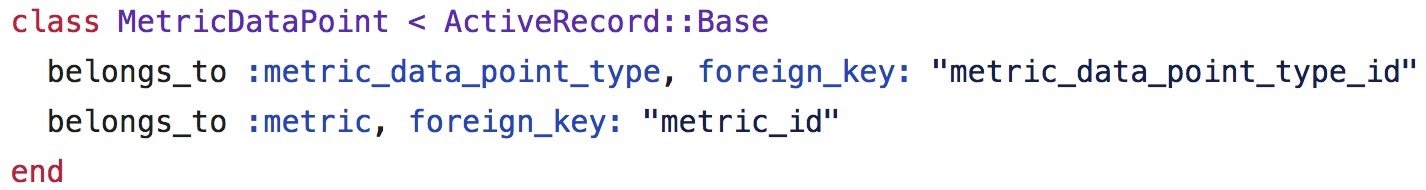

Metric_data_points

Model:

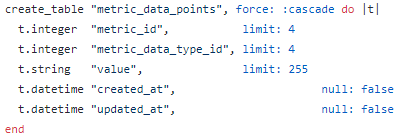

Schema:

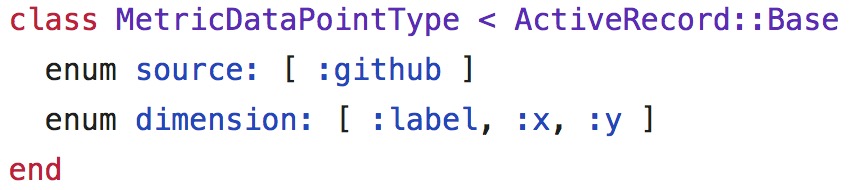

Metric_data_point_types

Model:

Schema:

The current framework only defines the schema; the models are still empty, and the methods of the data parser have not been implemented yet.

This schema is a clever design because it follows the "Open to Extension, Closed to Modification" principle. When a new kind of data needs to be stored, developers don't have to change the metric_data_point_types and metric_data_points tables. They only need to add two methods that translate the new data type to and from strings. Browsing the code shows that the most basic types already have such methods, which meets our requirements. The design is not flawless, however, and we will discuss its problems in the next section.
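The idea of storing every value as a string together with a pair of conversion methods can be sketched as follows. This is only a minimal illustration in Python, not the Expertiza implementation: the `CONVERTERS` registry and the `serialize` and `deserialize` helpers are hypothetical names introduced here.

```python
from datetime import datetime

# Hypothetical registry: type name -> (to_string, from_string).
# These names are illustrative and do not come from the Expertiza code base.
CONVERTERS = {
    "integer": (str, int),
    "float": (repr, float),
    "string": (str, str),
    "datetime": (datetime.isoformat, datetime.fromisoformat),
}


def serialize(type_name, value):
    """Turn a typed value into the string stored in a data point row."""
    to_string, _ = CONVERTERS[type_name]
    return to_string(value)


def deserialize(type_name, text):
    """Recover the typed value from its stored string."""
    _, from_string = CONVERTERS[type_name]
    return from_string(text)


# Supporting a new type only means registering one more pair of functions;
# the layout of the tables stays untouched.
CONVERTERS["boolean"] = (
    lambda flag: "true" if flag else "false",
    lambda text: text == "true",
)
```

Under these assumptions, `deserialize("integer", serialize("integer", 42))` gives back the original integer, and adding the boolean type required no change to the existing entries.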

Besides, GitHub is currently our only data source. As a result, we also need to find other data sources that can serve as grading metrics.

Analysis of the Problem

Proposed Design

Current source: GitHub

Our first and current source for grading metrics is GitHub, and Zach and Tyler have implemented the integration with the GitHub API for fetching commit data. However, the integration still appears to be at its first stage: the app can fetch the data and store it in the database, but we do not yet have an implementation that extracts the valid data or uses it as one of the grading metrics.

From the API offered by GitHub, we can fetch commit information, namely the number of additions and the number of deletions made by each contributor to the repository. We can use these numbers directly to calculate contributions for the metrics, or we can feed them into an equation that yields an impact factor representing the contribution of each group member.
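As a rough illustration of the first option, the sketch below turns per-contributor additions and deletions into contribution shares. It does not call the GitHub API; the input shape, the function name `contribution_shares`, and the sample numbers are assumptions made for this example, and weighting additions and deletions equally is just one possible choice.

```python
def contribution_shares(stats):
    """Map each contributor to their share of all changed lines.

    `stats` maps a contributor name to an (additions, deletions) pair.
    """
    changed = {name: adds + dels for name, (adds, dels) in stats.items()}
    total = sum(changed.values())
    if total == 0:
        # No changes at all: nobody gets credit.
        return {name: 0.0 for name in changed}
    return {name: lines / total for name, lines in changed.items()}


# Made-up numbers for illustration only.
sample = {"alice": (300, 100), "bob": (150, 50)}
shares = contribution_shares(sample)
```

Here `alice` changed 400 of the 600 lines and `bob` changed 200, so their shares are two thirds and one third.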

Proposed source for metrics:

1. Readability[1][2]

Sometimes, students only have project write-ups to submit (for example, at this stage of the final project). As a result, there might be no GitHub commits from which to read the number of additions or deletions in the working repository. Here, we introduce some formulas for calculating the "Readability" of those write-ups as one of the grading metrics.

The readability indices contain:

Flesch Kincaid Reading Ease

Based on a 0-100 scale. A high score means the text is easier to read. Low scores suggest the text is complicated to understand.

206.835 - 1.015 x (words/sentences) - 84.6 x (syllables/words)

A value between 60 and 80 should be easy for a 12 to 15 year old to understand.

Flesch Kincaid Grade Level

0.39 x (words/sentences) + 11.8 x (syllables/words) - 15.59

Gunning Fog Score

0.4 x ( (words/sentences) + 100 x (complexWords/words) )

SMOG Index

1.0430 x sqrt( 30 x complexWords/sentences ) + 3.1291

Coleman Liau Index

5.88 x (characters/words) - 29.6 x (sentences/words) - 15.8

Automated Readability Index (ARI)

4.71 x (characters/words) + 0.5 x (words/sentences) - 21.43

To calculate these indices, we need to fetch the write-up and count its sentences, words, syllables, characters, and complex words (words of three or more syllables). We can then plug these counts into the formulas above to get the readability level.
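The counting and calculation steps can be sketched in Python as below. This is a simplified illustration, not a finished parser: the function names are hypothetical, sentences are split on `.`, `!` and `?` only, and syllables are estimated with a rough vowel-group heuristic, so the results will differ somewhat from dedicated readability tools. The sketch covers the two Flesch Kincaid formulas, Gunning Fog, SMOG, and ARI.

```python
import math
import re


def count_syllables(word):
    """Rough syllable estimate: count groups of consecutive vowels."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # Treat a trailing silent "e" (but not "-le") as adding no syllable.
    if lowered.endswith("e") and not lowered.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_counts(text):
    """Count sentences, words, characters, syllables and complex words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    syllables = [count_syllables(w) for w in words]
    return {
        "sentences": len(sentences),
        "words": len(words),
        "characters": sum(ch.isalpha() for w in words for ch in w),
        "syllables": sum(syllables),
        # Assumption: a "complex" word has three or more syllables.
        "complex_words": sum(1 for n in syllables if n >= 3),
    }


def readability(counts):
    """Apply the formulas listed above to a dictionary of counts."""
    if counts["words"] == 0 or counts["sentences"] == 0:
        raise ValueError("text must contain at least one sentence and word")
    wps = counts["words"] / counts["sentences"]   # words per sentence
    spw = counts["syllables"] / counts["words"]   # syllables per word
    cpw = counts["characters"] / counts["words"]  # characters per word
    complex_ratio = counts["complex_words"] / counts["words"]
    return {
        "flesch_reading_ease": 206.835 - 1.015 * wps - 84.6 * spw,
        "flesch_kincaid_grade": 0.39 * wps + 11.8 * spw - 15.59,
        "gunning_fog": 0.4 * (wps + 100 * complex_ratio),
        "smog": 1.0430
        * math.sqrt(30 * counts["complex_words"] / counts["sentences"])
        + 3.1291,
        "ari": 4.71 * cpw + 0.5 * wps - 21.43,
    }


counts = text_counts("The cat sat on the mat. It was happy.")
scores = readability(counts)
```

For the sample text, the sketch finds 2 sentences, 9 words, and no complex words, which gives a very high reading-ease score, as expected for such simple sentences.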

2. Trello[3][4]

Trello is a web-based project management application. By adding cards and writing to-do lists inside them, students can see which tasks have been accomplished, which are in progress, and which are waiting to be started. It also helps instructors keep track of how students work by looking at these cards and lists.

It is useful for both coding projects and writing projects because we can fetch information from the board activity to calculate the percentage of the workload handled by each group member, and this result can be one of the grading metrics.

For example, a group of students has a to-do list that contains eight tasks. Student A finishes two tasks, Student B finishes one, and Student C finishes one as well. We can use the project activity fetched from the Trello RESTful API to calculate the percentage of finished tasks (which is 50%) and the proportion of the finished work contributed by each student (which is 50%, 25%, and 25%, respectively).
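The arithmetic in this example can be reproduced with a short sketch. It does not call the Trello API; it assumes the finished tasks have already been fetched and reduced to a list naming the student credited with each one. The function name `task_progress` is hypothetical, and the third student is labelled C here.

```python
from collections import Counter


def task_progress(total_tasks, finished_by):
    """Return (overall completion, per-student share of finished tasks).

    `finished_by` lists the student credited with each finished task.
    """
    done = Counter(finished_by)
    finished = sum(done.values())
    completion = finished / total_tasks if total_tasks else 0.0
    shares = {s: n / finished for s, n in done.items()} if finished else {}
    return completion, shares


# Eight tasks in total; A finished two, B and C finished one each.
completion, shares = task_progress(8, ["A", "A", "B", "C"])
print(completion)  # 0.5
print(shares)      # {'A': 0.5, 'B': 0.25, 'C': 0.25}
```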

Using this data from Trello as one of the grading metrics could be a good idea because we cannot judge contributions merely by observing the number of additions and the number of deletions. What if one student spends an hour adjusting the indentation of every file in the project, while another spends days or even weeks working out a complicated algorithm to get a correct answer?

The data from Trello, by contrast, offers a different perspective for the grading rubric: it measures contributions by how many tasks each student has completed. It can also be used as a grading metric for both coding projects and writing projects because it depends only on the to-do lists.