CSC/ECE 506 Spring 2013/4a aj

No edit summary |

No edit summary |

||

| Line 26: | Line 26: | ||

Here is a list of the top 10 supercomputers [http://www.datacenterknowledge.com/the-top-10-supercomputers-illustrated-nov-2011/ top10 supercomputers] as of November 2011. | Here is a list of the top 10 supercomputers [http://www.datacenterknowledge.com/the-top-10-supercomputers-illustrated-nov-2011/ top10 supercomputers] as of November 2011. | ||

== | ==Paradigms<ref>http://www.cray.com/Assets/PDF/about/CrayTimeline.pdf</ref>== | ||

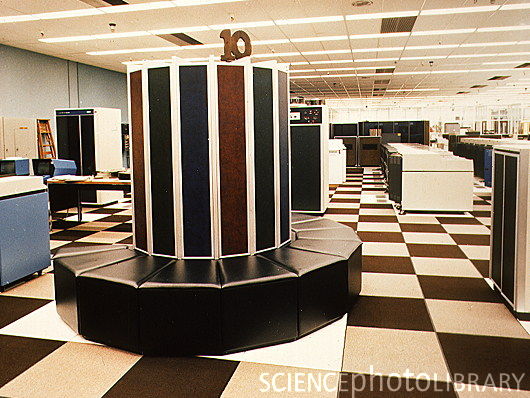

[[Image:cray1.jpg|thumb|right|300px|Cray 1 supercomputer installed at Lawrence Livermore National Laboratory (LLNL), California, USA.]] | [[Image:cray1.jpg|thumb|right|300px|Cray 1 supercomputer installed at Lawrence Livermore National Laboratory (LLNL), California, USA.]] | ||

| Line 83: | Line 83: | ||

blah blah blah | blah blah blah | ||

===Stanford University Intermediate Format (SUIF 1,2)=== | ===Stanford University Intermediate Format (SUIF 1,2)=== | ||

Started out as an NSF-funded and DARPA-funded collaboration between a few universities in the late 1990s with a goal of creating a universal compiler. | Started out as an NSF-funded and DARPA-funded collaboration between a few universities in the late 1990s with a goal of creating a universal compiler. A major focus of SUIF was parallelization of C source code, and this started with taking an intermediate program representation of the code. At this stage, various automatic parallelization techniques were used including: interprocedure optimization, array privatization, and pointer analysis. | ||

===Jade=== | ===Jade=== | ||

A DARPA-funded project that focused on the interactive technique for automatic parallelization. Using this technique, the programmer is able to exploit coarse-grained concurrency.<ref>http://www-suif.stanford.edu/papers/ppopp01.pdf</ref> | A DARPA-funded project that focused on the interactive technique for automatic parallelization. Using this technique, the programmer is able to exploit coarse-grained concurrency.<ref>http://www-suif.stanford.edu/papers/ppopp01.pdf</ref> | ||

Revision as of 21:25, 9 February 2013

Introduction<ref>http://en.wikipedia.org/wiki/Supercomputer</ref>

A supercomputer is generally considered to be at the cutting edge of processing capacity (number crunching) and computational speed at the time it is built, but given the pace of development, yesterday's supercomputers have become today's regular servers. This wiki article was directed by Wiki Topics for Chapters 3 and 4.

Identification <ref>http://www.bukisa.com/articles/13059_supercomputer-evolution</ref>

The United States government has played the key role in the development and use of supercomputers. During World War II, the US Army paid for the construction of the Electronic Numerical Integrator And Computer (ENIAC) in order to speed up the calculation of artillery tables. In the 30 years after World War II, the US government used high-performance computers to design nuclear weapons, break codes, and perform other security-related applications.

The most powerful supercomputers introduced in the 1960s were designed primarily by Seymour Cray at Control Data Corporation (CDC). They led the market into the 1970s, until Cray left to form his own company, Cray Research.

With Moore’s Law still holding after more than thirty years, the rate at which future mass-market technologies overtake today’s cutting-edge machines continues to accelerate. The effects of this are manifest in the abrupt about-face we have witnessed in the underlying philosophy of building supercomputers.

During the 1970s and through the mid-1980s, supercomputers were built using specialized custom vector processors working in parallel, typically between four and sixteen CPUs. The next phase of supercomputer evolution saw the introduction of massively parallel processing and a drift away from vector-only microprocessors. However, the processors used in this generation of supercomputers were still primarily highly specialized, purpose-specific units that were custom designed and fabricated.

That is no longer true. Silicon is no longer fabricated into incredibly expensive, highly specialized, purpose-specific microprocessors to serve as the heart and mind of supercomputers. Advances in mainstream technologies and economies of scale now dictate that “off-the-shelf” multicore server-class CPUs are assembled into great conglomerates, combined with enormous quantities of storage (RAM and HDD), and interconnected using high-speed transports.

So we now find that instead of using specialized custom-built processors in their design, supercomputers are based on "off the shelf" server-class multicore microprocessors, such as the IBM PowerPC, Intel Itanium, or AMD x86-64. The modern supercomputer is firmly based around massively parallel processing by clustering very large numbers of commodity processors combined with a custom interconnect.

As of November 2011, the K computer is the world's fastest supercomputer at 10.51 petaFLOPS. K was built by the Japanese computer firm Fujitsu and is housed at the RIKEN Advanced Institute for Computational Science in Kobe. It consists of 88,000 SPARC64 VIIIfx CPUs and spans 864 server racks. In November 2011, its power consumption was reported to be 12659.89 kW<ref>http://www.top500.org/list/2011/11/100</ref>. K's performance is equivalent to one million linked desktop computers, which is more than its five closest competitors combined. According to an earlier press report, the machine then consisted of 672 cabinets stuffed with circuit boards, and its creators planned to increase that to 800 in the following months. That report put its energy use at enough to power nearly 10,000 homes and its running cost at $10 million (£6.2 million) annually<ref>http://www.telegraph.co.uk/technology/news/8586655/Japanese-supercomputer-K-is-worlds-fastest.html</ref>.

Companies that build supercomputers include Silicon Graphics, Intel, IBM, Cray, Orion, and Aspen Systems.

Here is a list of the [http://www.datacenterknowledge.com/the-top-10-supercomputers-illustrated-nov-2011/ top 10 supercomputers] as of November 2011.

Paradigms<ref>http://www.cray.com/Assets/PDF/about/CrayTimeline.pdf</ref>

In 1993 Fujitsu surprised the world by announcing a Vector Parallel Processor (VPP) series designed to reach the range of hundreds of Gflop/s. At the core of the system is a combined Ga-As/Bi-CMOS processor, based largely on the original design of the VP-200. The gate delay of the processor chips was made as low as 60 ps in the Ga-As chips by using the most advanced hardware technology available. The resulting cycle time was 9.5 ns. The processor has four independent pipelines, each capable of executing two multiply-add instructions in parallel, resulting in a peak speed of 1.7 Gflop/s per processor. Each processor board is equipped with 256 megabytes of central memory.
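The peak figure quoted for the VPP processor follows from its cycle time and pipeline count. The following is a minimal sketch of that arithmetic, assuming that each multiply-add counts as two floating-point operations; the function name `peak_gflops` and its parameter names are illustrative and do not come from the source.

```python
def peak_gflops(cycle_ns, pipelines, ops_per_pipeline, flops_per_op=2):
    """Theoretical peak rate of one processor in Gflop/s.

    cycle_ns         -- clock cycle time in nanoseconds
    pipelines        -- number of independent pipelines
    ops_per_pipeline -- multiply-add instructions issued per pipeline per cycle
    flops_per_op     -- assumption: one multiply-add = 1 multiply + 1 add
    """
    flops_per_cycle = pipelines * ops_per_pipeline * flops_per_op
    # Operations per nanosecond is numerically the same as Gflop/s.
    return flops_per_cycle / cycle_ns


# VPP figures from the text: 9.5 ns cycle, 4 pipelines, 2 multiply-adds each.
vpp_peak = peak_gflops(cycle_ns=9.5, pipelines=4, ops_per_pipeline=2)
print(f"VPP peak: {vpp_peak:.2f} Gflop/s per processor")
```

With these inputs the result rounds to the 1.7 Gflop/s stated in the text, which suggests the quoted peak counts 16 floating-point operations per cycle.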

Static<ref>http://www.versionone.com/Agile101/Methodologies.asp </ref>

In the beginning there were only a few Cray-1s installed in Japan, and until 1983 no Japanese company produced supercomputers. The first models were announced in 1983. Naturally there had been prototypes earlier like the Fujitsu F230-75 APU produced in two copies in 1978, but Fujitsu's VP-200 and Hitachi's S-810 were the first officially announced versions. NEC announced its SX series slightly later.

The last decade has rather been a surprise. About three generations of machines have been produced by each of the domestic manufacturers. During the last ten years about 300 supercomputer systems have been shipped and installed in Japan, and a whole infrastructure of supercomputing has been established. All major universities, many of the large industrial companies and research centers have supercomputers.

In 1992 NEC announced the SX-3R with a couple of improvements compared to the first version. The clock cycle was further reduced to 2.5 ns, so that the peak performance increased to 6.4 Gflop/s per processor.

Speculative <ref>http://www.netlib.org/benchmark/top500/reports/report94/Japan/node5.html</ref>

In 1977 Fujitsu produced the first supercomputer prototype, called the F230-75 APU, which was a pipelined vector processor added to a scalar processor. This attached processor was installed at the Japan Atomic Energy Research Institute (JAERI) and the National Aerospace Laboratory (NAL).

Dynamic

Interactive

Polytope Model

Pitfalls

Hitachi has been producing supercomputers since 1983 but differs from other manufacturers by not exporting them. For this reason, its supercomputers are less well known in the West. After two generations of supercomputers (the S-810 series, started in 1983, and the S-820 series, started in 1988), Hitachi leapfrogged NEC in 1992 by announcing the most powerful vector supercomputer to date. The top S-820 model consisted of one processor operating at 4 ns and contained 4 vector pipelines with four pipelines and two independent floating-point units. This corresponded to a peak performance of 2 Gflop/s. Hitachi put great emphasis on fast memory, although this meant limiting its size to a maximum of 512 MB. The memory bandwidth of 2 words per pipe per vector cycle, giving a peak rate of 16 GB/s, was a respectable achievement, but it was not enough to keep all functional units busy.

The S-3800 was announced two years ago and is comparable to NEC's SX-3R in its features. It has up to four scalar processors, each with a vector processing unit. These vector units in turn have up to four independent pipelines and two floating-point units that can each perform a multiply/add operation per cycle. With a cycle time of 2.0 ns, the whole system achieves a peak performance level of 32 Gflop/s.

The S-3600 systems can be seen as the design of the S-820 recast in more modern technology. The system consists of a single scalar processor with an attached vector processor. The 4 models in the range correspond to a successive reduction of the number of pipelines and floating-point units installed. The Top500 site lists the top 500 supercomputers and publishes statistics on them.

Limitations

In the early 1950s, IBM built their first scientific computer, the IBM 701. The IBM 704 and other high-end systems appeared in the 1950s and 1960s, but by today's standards, these early machines were little more than oversized calculators. After going through a rough patch, IBM re-emerged as a leader in supercomputing research and development in the mid-1990s, creating several systems for the U.S. Government's Accelerated Strategic Computing Initiative (ASCI). These computers boast approximately 100 times as much computational power as supercomputers of just ten years ago.

Fine-grained Parallelism

blah blah blah

Coarse-grained Parallelism

blah blah blah

Examples<ref>http://www.top500.org</ref>

Power Fortran Analyzer

blah blah blah

Polaris

blah blah blah

Stanford University Intermediate Format (SUIF 1,2)

SUIF started out as an NSF-funded and DARPA-funded collaboration between a few universities in the late 1990s, with the goal of creating a universal compiler. A major focus of SUIF was the parallelization of C source code, which started by building an intermediate program representation of the code. At this stage, various automatic parallelization techniques were applied, including interprocedural optimization, array privatization, and pointer analysis.
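Array privatization, one of the techniques listed for SUIF, can be illustrated with a small hand-written sketch. This is not SUIF's implementation (SUIF transforms an intermediate representation of C code); it is a Python analogue in which the transformation is applied by hand, and the function names `row_norms_shared` and `row_norms_privatized` are hypothetical. In the first version every iteration reuses one scratch list, so the iterations cannot safely run at the same time. In the second, each iteration gets its own private copy, which removes the dependence between iterations.

```python
from concurrent.futures import ThreadPoolExecutor


def row_norms_shared(matrix):
    """Serial original: a single scratch list is reused by every iteration."""
    scratch = [0.0] * len(matrix[0])
    result = []
    for row in matrix:
        for j, x in enumerate(row):
            scratch[j] = x * x        # every element is written before it is read
        result.append(sum(scratch))
    return result


def row_norms_privatized(matrix, workers=4):
    """After privatization: each iteration owns its scratch list."""

    def body(row):
        scratch = [0.0] * len(row)    # private copy, no sharing across iterations
        for j, x in enumerate(row):
            scratch[j] = x * x
        return sum(scratch)

    # Iterations are now independent, so they can be handed to a worker pool.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(body, matrix))


example = [[1, 2], [3, 4], [0, 5]]
print(row_norms_shared(example))
print(row_norms_privatized(example))
```

The thread pool is used only to show that the privatized loop body can run concurrently without changing the result; it is not intended as a performance demonstration.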

Jade

Jade is a DARPA-funded project that focused on the interactive technique for automatic parallelization. Using this technique, the programmer is able to exploit coarse-grained concurrency.<ref>http://www-suif.stanford.edu/papers/ppopp01.pdf</ref>

Intel C and C++ Compilers

blah blah blah

Legend

- Vendor – The manufacturer of the platform and hardware.

- Rmax – The highest score measured using the LINPACK benchmark suite. This is the number that is used to rank the computers. Measured in quadrillions of floating-point operations per second, i.e. petaflops (Pflops).

- Rpeak – This is the theoretical peak performance of the system. Measured in Pflops.

- Processor cores – The number of active processor cores used.

Top 10 supercomputers of today<ref>http://www.junauza.com/2011/07/top-10-fastest-linux-based.html</ref>

Below are the top 10 supercomputers in the world (as of June 2011). An effort has been made to compare the architectural features of these supercomputers.

1. K-computer:

- K-computer is currently the world's fastest supercomputer. It was developed by Fujitsu at the RIKEN Advanced Institute for Computational Science campus in Kobe, Japan.

- On the LINPACK benchmark, K-computer delivered 8.16 petaflops, toppling Tianhe-1A from its number one spot.

- This supercomputer uses 68,544 2.0 GHz 8-core SPARC64 VIIIfx processors packed in 672 cabinets, for a total of 548,352 cores. In layman's terms, K-computer's performance is almost equivalent to that of 1 million desktop computers.

- The file system used here is an optimized parallel file system based on Lustre, called Fujitsu Exabyte File System.

- One disadvantage of this high performer is that it consumes about 9.8 MW of power, enough to light 10,000 houses. Compared with its closest competitor, the Tianhe-1A, the K-computer is far ahead, and it is highly unlikely to lose its number one spot any time soon.
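The K-computer figures above can be cross-checked against the Rmax and Rpeak definitions in the Legend. The sketch below uses only the numbers quoted in the list, plus one assumption that is not in the text: that each core can complete 8 floating-point operations per clock cycle. All variable names are illustrative.

```python
# Figures quoted in the list above.
processors = 68_544
cores_per_processor = 8
clock_hz = 2.0e9
rmax_pflops = 8.16          # LINPACK result
power_watts = 9.8e6         # about 9.8 MW

# Assumption (not stated in the text): 8 floating-point operations
# per core per clock cycle.
flops_per_cycle_per_core = 8

cores = processors * cores_per_processor
rpeak_pflops = cores * clock_hz * flops_per_cycle_per_core / 1e15
efficiency = rmax_pflops / rpeak_pflops
mflops_per_watt = rmax_pflops * 1e15 / power_watts / 1e6

print(f"cores:           {cores:,}")
print(f"Rpeak (assumed): {rpeak_pflops:.2f} Pflops")
print(f"Rmax / Rpeak:    {efficiency:.1%}")
print(f"efficiency:      {mflops_per_watt:.0f} Mflops per watt")
```

The core count reproduces the 548,352 given in the text. The Rpeak and efficiency values depend entirely on the per-cycle assumption and should be read as an estimate.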

Supercomputer Programming Models<ref>http://books.google.com/books?id=tDxNyGSXg5IC&pg=PA4&lpg=PA4&dq=evolution+of+supercomputers&source=bl&ots=I1NZtZyCTD&sig=Ma2fHyp336BSp4Yv2ERmfrpeo4&hl=en&ei=IAReS4WbM8eUtgf2u8GnAg&sa=X&oi=book_result&ct=result&resnum=5&ved=0CB4Q6AEwBA#v=onepage&q=evolution%20of%20supercomputers&f=false</ref>

The parallel architectures of supercomputers often dictate the use of special programming techniques to exploit their speed. The base language of supercomputer code is generally Fortran or C, with special libraries used to share data between nodes. Environments such as PVM and MPI are used for loosely connected clusters, and OpenMP for tightly coordinated shared-memory machines. Significant effort is required to optimize a problem for the interconnect characteristics of the machine it runs on; the aim is to prevent any CPU from wasting time waiting on data from other nodes.

The programming languages mentioned above are discussed briefly below.
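The difference between the message-passing style (PVM, MPI) and the shared-memory style (OpenMP) can be sketched in a few lines. The example below is only an analogy using the Python standard library: it does not call MPI or OpenMP, threads stand in for nodes, and a `queue.Queue` stands in for the interconnect. The function names are hypothetical.

```python
import queue
import threading


def message_passing_sum(data, n_workers=4):
    """Message-passing style: workers own private data and send results."""
    inbox = queue.Queue()                 # stands in for the interconnect

    def worker(rank):
        chunk = data[rank::n_workers]     # this worker's private slice
        inbox.put((rank, sum(chunk)))     # explicit "send" of the partial sum

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # "Receive" one message per worker and reduce them.
    return sum(inbox.get()[1] for _ in range(n_workers))


def shared_memory_sum(data, n_workers=4):
    """Shared-memory style: workers write into one array visible to all."""
    partial = [0] * n_workers             # shared by every thread

    def worker(rank):
        # Each thread writes only its own slot, so no lock is needed.
        partial[rank] = sum(data[rank::n_workers])

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(partial)


numbers = list(range(1000))
print(message_passing_sum(numbers), shared_memory_sum(numbers))
```

In the first function all communication is explicit, which is why tuning for the interconnect matters on clusters. In the second, communication happens implicitly through memory that every worker can see.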

1) Fortran (previously written FORTRAN) is a general-purpose, procedural, imperative programming language that is especially suited to numeric and scientific computing. It was originally developed by IBM in the 1950s for scientific and engineering applications, became dominant in this area early on, and has been in use for over half a century in computationally intensive areas such as numerical weather prediction, finite element analysis, computational fluid dynamics (CFD), computational physics, and computational chemistry. It is one of the most popular languages in high-performance computing and is the language used for programs that benchmark and rank the world's fastest supercomputers.

Fortran (a blend derived from "The IBM Mathematical Formula Translating System") encompasses a lineage of versions, each of which added extensions to the language while usually retaining compatibility with previous versions. Successive versions have added support for processing of character-based data (FORTRAN 77), array programming, modular programming and object-based programming (Fortran 90 / 95), and object-oriented and generic programming (Fortran 2003).

External links

3. Top500 - The supercomputer website

5. Supercomputers to "see" black holes

6. Supercomputer simulates stellar evolution

7. Encyclopedia on supercomputer

10. Water-cooling System Enables Supercomputers to Heat Buildings

13. UC-Irvine Supercomputer Project Aims to Predict Earth's Environmental Future

14. Wikipedia

15. Parallel programming in C with MPI and OpenMP by Michael Jay Quinn

19. SuperComputers for One-Fifth the Price

References

<references/>