CSC/ECE 506 Spring 2013/4a aj: Difference between revisions

No edit summary |

No edit summary |

||

| Line 1: | Line 1: | ||

== Introduction<ref>http://en.wikipedia.org/wiki/Supercomputer</ref>== | == Introduction<ref>http://en.wikipedia.org/wiki/Supercomputer</ref>== | ||

A [http://en.wikipedia.org/wiki/Supercomputer supercomputer] is generally considered to be the front-line “cutting-edge” in terms of processing capacity (number crunching) and computational speed at the time it is built, but with the pace of development, yesterday's supercomputers have become regular servers today. | A [http://en.wikipedia.org/wiki/Supercomputer supercomputer] is generally considered to be the front-line “cutting-edge” in terms of processing capacity (number crunching) and computational speed at the time it is built, but with the pace of development, yesterday's supercomputers have become regular servers today. | ||

This wiki article was directed by [https://docs.google.com/a/ncsu.edu/document/d/1cShv7MK21_EinS1UA_uXBzijjcpenmsSUMicP_kW8Wg/edit 3 and 4] | This wiki article was directed by [https://docs.google.com/a/ncsu.edu/document/d/1cShv7MK21_EinS1UA_uXBzijjcpenmsSUMicP_kW8Wg/edit Wiki Topics for Chapters 3 and 4] | ||

== Supercomputer Evolution <ref>http://www.bukisa.com/articles/13059_supercomputer-evolution</ref>== | == Supercomputer Evolution <ref>http://www.bukisa.com/articles/13059_supercomputer-evolution</ref>== | ||

Revision as of 20:11, 9 February 2013

Introduction<ref>http://en.wikipedia.org/wiki/Supercomputer</ref>

A supercomputer is generally considered to be the front-line “cutting-edge” in terms of processing capacity (number crunching) and computational speed at the time it is built, but with the pace of development, yesterday's supercomputers have become regular servers today. This wiki article was directed by Wiki Topics for Chapters 3 and 4

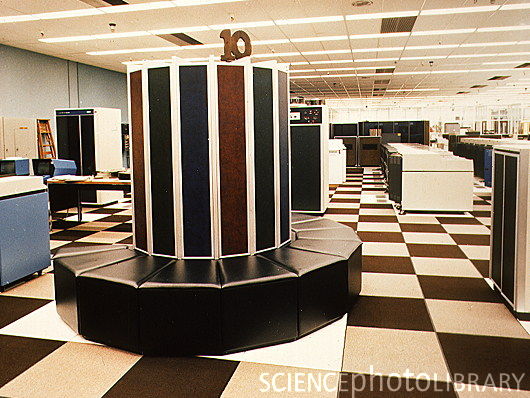

Supercomputer Evolution <ref>http://www.bukisa.com/articles/13059_supercomputer-evolution</ref>

The United States government has played the key role in the development and use of supercomputers, During World War II, the US Army paid for the construction of Electronic Numerical Integrator And Computer(ENIAC)in order to speed the calculations of artillery tables. In the 30 years after World War II, the US government used high-performance computers to design nuclear weapons, break codes, and perform other security-related applications.

The most powerful supercomputers introduced in the 1960s were designed primarily by Seymour Cray at Control Data Corporation (CDC). They led the market into the 1970s until Cray left to form his own company,Cray Research.

With Moore’s Law still holding after more than thirty years, the rate at which future mass-market technologies overtake today’s cutting-edge super-duper wonders continues to accelerate. The effects of this are manifest in the abrupt about-face we have witnessed in the underlying philosophy of building supercomputers.

During the 1970s and all the way through the mid-1980s supercomputers were built using specialized custom vector processors working in parallel. Typically, this meant anywhere between four to sixteen CPUs. The next phase of the supercomputer evolution saw the introduction of massive parallel processing and a drift away from vector-only microprocessors. However, the processors used in the construction of this generation of supercomputers were still primarily highly specialized purpose-specific custom designed and fabricated units.

That is no longer true. No longer is silicon fabricated into the incredibly expensive highly specialized purpose-specific customized microprocessor units to serve as the heart and mind of supercomputers. Advances in mainstream technologies and economies of scale now dictate that “off-the-shelf” multicore server-class CPUs are assembled into great conglomerates, combined with mind-boggling quantities of storage (RAM and HDD), and interconnected using light-speed transports.

So we now find that instead of using specialized custom-built processors in their design, supercomputers are based on "off the shelf" server-class multicore microprocessors, such as the IBM PowerPC, Intel Itanium, or AMD x86-64. The modern supercomputer is firmly based around massively parallel processing by clustering very large numbers of commodity processors combined with a custom interconnect.

Currently, the K computer is the world's fastest supercomputer at 10.51 petaFLOPS. K is built by the Japanese computer firm Fujitsu, based in Kobe's Riken Advanced Institute for Computational Science. It consists of 88,000 SPARC64 VIIIfx CPUs, and spans 864 server racks. In November 2011, the power consumption was reported to be 12659.89 kW<ref>http://www.top500.org/list/2011/11/100</ref>. K's performance is equivalent to one million linked desktop computers, which is more than its five closest competitors combined. It consists of 672 cabinets stuffed with circuit-boards, and its creators plan to increase that to 800 in the coming months. It uses enough energy to power nearly 10,000 homes and costs $10 million (£6.2 million) annually to run<ref>http://www.telegraph.co.uk/technology/news/8586655/Japanese-supercomputer-K-is-worlds-fastest.html</ref>.

Some of the companies which build supercomputers are Silicon Graphics, Intel, IBM, Cray, Orion, Aspen Systems etc.

Here is a list of the top 10 supercomputers top10 supercomputers as of November 2011.

Cray History <ref>http://www.cray.com/Assets/PDF/about/CrayTimeline.pdf</ref>

Cray Inc. has a history that extends back to 1972, when the legendary Seymour Cray, the "father of supercomputing," founded Cray Research. R&D and manufacturing were based in his hometown of Chippewa Falls, Wisconsin and business headquarters were in Minneapolis, Minnesota.

The first Cray-1 system was installed at Los Alamos National Laboratory in 1976 for $8.8 million. It boasted a world-record speed of 160 million floating-point operations per second (160 megaflops) and an 8 megabyte (1 million word) main memory. In order to increase the speed of the system, the Cray-1 had a unique "C" shape which made integrated circuits to be closer together and no wire in the system was more than four feet long. To handle the intense heat generated by the computer, Cray developed an innovative refrigeration system using Freon.

In order to concentrate his efforts more on the design, Cray left the CEO position in 1980 and became an independent contractor. Later he worked on the follow-on to the Cray-1, another group within the company developed the first multiprocessor supercomputer, the Cray X-MP, which was introduced in 1982. The Cray-2 system made its debut in 1985, providing a tenfold increase in performance over the Cray-1. In 1988, Cray Y-MP was introduced, the world's first supercomputer to sustain over 1 gigaflop on many applications. Multiple 333 MFLOPS processors powered the system speeds of up-to 2.3 gigaflops.

Always a visionary, Seymour Cray had been exploring the use of gallium arsenide in creating a semiconductor faster than silicon. However, the costs and complexities of this material made it difficult for the company to support both the Cray-3 and the Cray C90 development efforts. In 1989, Cray Research spun off the Cray-3 project into a separate company, Cray Computer Corporation, headed by Seymour Cray and based in Colorado Springs, Colorado. Tragically, Seymour Cray died in September 1996 at the age of 71.

The 1990s brought a number of transformations to Cray Research. The company continued its leadership in providing the most powerful supercomputers for production applications. The Cray C90 featured a new central processor which produced a performance of 1 gigaflop. Using 16 of these powerful processors and 256 million words of central memory, the system boasted of amazing total performance. The company also produced its first "mini-supercomputer," the Cray XMS system, followed by the Cray Y-MP EL series and the subsequent Cray J90. In 1993, it offered the first massively parallel processing (MPP) system, the Cray T3D supercomputer, and quickly captured MPP market leadership from early MPP companies such as Thinking Machines and MasPar. The Cray T3D proved to be exceptionally robust, reliable, sharable and easy-to-administer, compared with competing MPP systems.

The successor, Cray T3E supercomputer has been the world's best selling MPP system. The Cray T3E-1200E system was the first supercomputer to sustain one teraflop (1 trillion calculations per second) on a real-world application. In November 1998, a joint scientific team from Oak Ridge National Laboratory, the National Energy Research Scientific Computing Center (NERSC), Pittsburgh Supercomputing Center and the University of Bristol (UK) ran a magnetism application at a sustained speed of 1.02 teraflops. In another technological landmark, the Cray T90 became the world's first wireless supercomputer when it was released in 1994. Also the Cray J90 series which was released during the same year has become the world's most popular supercomputer, with over 400 systems sold.

Cray Research merged with SGI (Silicon Graphics, Inc.) in February 1996 then the company was renamed Cray Inc. and the ticker symbol was changed to CRAY. In August 1999, SGI created a separate Cray Research business unit to focus completely and exclusively on the unique requirements of high-end supercomputing customers. Assets of this business unit were sold to Tera Computer Company in March 2000. Tera began software development for the Multithreaded Architecture (MTA) systems in 1988 and hardware design commenced in 1991. The Cray MTA-2 system provides scalable shared memory, in which every processor has equal access to every memory location, which greatly simplifies the programming because it eliminates concerns about the layout of memory.Company received its first order for the MTA from the San Diego Supercomputer Center. The multiprocessor system was accepted by the center in 1998, and has since been upgraded to eight processors.

The link below shows the Historical Timeline of Cray in the field of Supercomputers. Historical Timeline of Cray.

Supercomputer History in Japan<ref>http://www.versionone.com/Agile101/Methodologies.asp </ref>

In the beginning there were only a few Cray-1s installed in Japan, and until 1983 no Japanese company produced supercomputers. The first models were announced in 1983. Naturally there had been prototypes earlier like the Fujitsu F230-75 APU produced in two copies in 1978, but Fujitsu's VP-200 and Hitachi's S-810 were the first officially announced versions. NEC announced its SX series slightly later.

The last decade has rather been a surprise. About three generations of machines have been produced by each of the domestic manufacturers. During the last ten years about 300 supercomputer systems have been shipped and installed in Japan, and a whole infrastructure of supercomputing has been established. All major universities, many of the large industrial companies and research centers have supercomputers.

In 1984 the NEC announced the SX-1 and SX-2 and started delivery in 1985. The first two SX-2 systems were domestic deliveries to Osaka University and the Institute for Computational Fluid Dynamics (ICFD). The SX-2 had multiple pipelines with one set of add and multiply floating point units each.It had a cycle time of 6 nanoseconds so each pipelined floating-point unit could peak at 167 Mflop/s. With four pipelines per unit and two floating-point units, the peak performance was about 1.3 Gflop/s. Due to limited memory bandwidth and other issues the performance in benchmark tests was less than half the peak value. The SX-1 had a slightly higher cycle time of 7 ns than the SX-2 and had only half the number of pipelines. The maximum execution rate was 570 Mflop/s.

At the end of 1987, NEC improved its supercomputer family with the introduction of A-series which gave improvements to the memory and I/O bandwidth. The top model, the SX-2A, had the same theoretical peak performance as the SX-2. Several low-range models were also announced but today none of them qualify for the TOP500.

In 1989 NEC announced a rather aggressive new model, the SX-3, with several important changes. The vector cycle time was brought down to 2.9 ns, the number of pipelines was doubled, but most significantly NEC added multiprocessing capability to its new series. It contained four independent arithmetic processors each with a scalar and a vector processing unit and NEC increased its performance by more than one order of magnitude of 22 Gflop/s from 1.33 on the SX-2A. The combination of these features made SX-3 the most powerful vector processor in the world. The total memory bandwidth was subdivided into two halves which in turn featured two vector load and one vector store paths per pipeline set as well as one scalar load and one scalar store path. This gave a total memory bandwidth to the vector units of about 66 GB/s. Like its predecessors, the SX-3 was to offer the memory bandwidth needed to sustain peak performance unless most operands were contained in the vector registers.

In 1992 NEC announced the SX-3R with a couple of improvements compared to the first version. The clock was further reduced to 2.5 ns, so that the peak performance increased to 6.4 Gflop/s per processor

Fujitsu's VP series <ref>http://www.netlib.org/benchmark/top500/reports/report94/Japan/node5.html</ref>

In 1977 Fujitsu produced the first supercomputer prototype called the F230-75 APU which was a pipelined vector processor added to a scalar processor. This attached processor was installed in the Japanese Atomic Energy Commission (JAERI) and the National Aeronautic Lab (NAL).

In 1983 the company came out with the VP-200 and VP-100 systems. In 1986 VP-400 was released with twice as many pipelines as the VP-200 and during mid-1987 the whole family became the E-series with the addition of an extra (multiply-add) pipelined floating point unit that increased the performance potential by 50%. With the flexible range of systems in this generation (VP-30E to VP-400E), good marketing and a broad range of applications, Fujitsu has became the largest domestic supplier with over 80 systems installed, many of which are named in TOP500.

Available since 1990, the VP-2000 family can offer a peak performance of 5 Gflop/s due to a vector cycle time of 3.2 ns. The family was initially announced with four vector performance levels (model 2100, 2200, 2400, and 2600) where each level could have either one of two scalar processors, but the VP-2400/40 doubled this limit offering a peak vector performance similar to the VP-2600. Most of these models are now represented in the Japanese TOP500.

Previous machines wre heavily criticized for the lack of memory throughput. The VP-400 series had only one load/store path to memory that peaked at 4.57 GB/s. This was improved in the VP-2000 series by doubling the paths so that each pipeline set can do two load/store operations per cycle giving a total transfer rate of 20 GB/s. Fujitsu recently decided to use the label, VPX-2x0, for the VP-2x00 systems adapted to their Unix system. Keio Daigaku university now runs such a system.

The VPP-500 series

In 1993 Fujitsu sprung a surprise to the world by announcing a Vector Parallel Processor (VPP) series that was designed for reaching in the range of hundreds of Gflop/s. At the core of the system is a combined Ga-As/Bi-CMOS processor, based largely on the original design of the VP-200. The processor chips gate delay was made as low as 60 ps in the Ga-As chips by using the most advanced hardware technology available. The resulting cycle time was 9.5 ns. The processor has four independent pipelines each capable of executing two Multiply-Add instructions in parallel resulting in a peak speed of 1.7 Gflop/s per processor. Each processor board is equipped with 256 Megabytes of central memory.

The most amazing part of the VPP-500 is the capability to interconnect up to 222 processors via a cross-bar network with two independent (read/write) connections, each operating at 400 MB/s. The total memory is addressed via virtual shared memory primitives. The system is meant to be front-ended by a VP-2x00 system that handles input/output and permanent file store, and job queue logistics.

As mentioned in the introduction, an early version of this system called the Numeric Wind Tunnel, was developed together with NAL. This early version of the VPP-500 (with 140 processors) is today the fastest supercomputer in the world and stands out at the beginning of the TOP500 due to a value that is twice that of the TMC CM-5/1024 installed at Los Alamo.

Hitachi's Supercomputers

Hitachi has been producing supercomputers since 1983 but differs from other manufacturers by not exporting them. For this reason, their supercomputers are less well known in the West. After having gone through two generations of supercomputers, the S-810 series started in 1983 and the S-820 series in 1988, Hitachi leapfrogged NEC in 1992 by announcing the most powerful vector supercomputer ever.The top S-820 model consisted of one processor operating at 4 ns and contained 4 vector pipelines with four pipelines and two independent floating-point units. This corresponded to a peak performance of 2 Gflop/s. Hitachi put great emphasis on a fast memory although this meant limiting its size to a maximum of 512 MB. The memory bandwidth of 2 words per pipe per vector cycle, giving a peak rate of 16 GB/s was a respectable achievement, but it was not enough to keep all functional units busy.

The S-3800 was announced two years ago and is comparable to NEC's SX- 3R in its features. It has up to four scalar processors with a vector processing unit each. These vector units have in turn up to four independent pipelines and two floating point units that can each perform a multiply/add operation per cycle. With a cycle time of 2.0 ns, the whole system achieves a peak performance level of 32 Gflop/s.

The S-3600 systems can be seen as the design of the S-820 recast in more modern technology. The system consists of a single scalar processor with an attached vector processor. The 4 models in the range correspond to a successive reduction of the number of pipelines and floating point units installed. Link showing the list of the top 500 super computers top 500 super computers. Link showing the statistics of top 500 supercomputer statistics

IBM History

In the early 1950s, IBM built their first scientific computer, the IBM 701. The IBM 704 and other high-end systems appeared in the 1950s and 1960s, but by today's standards, these early machines were little more than oversized calculators. After going through a rough patch, IBM re-emerged as a leader in supercomputing research and development in the mid-1990s, creating several systems for the U.S. Government's Accelerated Strategic Computing Initiative (ASCI). These computers boast approximately 100 times as much computational power as supercomputers of just ten years ago.

Sequoia is a petascale Blue Gene/Q supercomputer being constructed by IBM for the National Nuclear Security Administration as part of the Advanced Simulation and Computing Program (ASC). It is scheduled to be delivered to the Lawrence Livermore National Laboratory in 2011 and fully deployed in 2012.

Sequoia was revealed in February 2009; the targeted performance of 20 petaflops was more than the combined performance of the top 500 supercomputers in the world and about 20 times faster than Roadrunner, the reigning champion of the time. It will be twice as fast as the current record-holding K computer and also twice as fast as the intended future performance of Pleiades.

IBM has also built a smaller prototype called "Dawn," capable of 500 teraflops, using the Blue Gene/P design, to evaluate the Sequoia design. This system was delivered in April 2009 and entered the Top500 list at 9th place in June 2009

Supercomputer speeds are advancing rapidly as manufacturers latch on to new techniques and cheaper prices for computer chips. The first machine to break the teraflop barrier - a trillion calculations per second - was only built in 1996. Two years ago a $59m machine from Sun Microsystems, called Constellation, attempted to take the crown of world's fastest with operating speeds of 421 teraflops. Just two years later, Sequoia was able to achieve nearly 50 times the computing power.

Current Top Supercomputers<ref>http://http://www.top500.org</ref>

Comparison of Top Supercomputer Vendors In The World (November 2011)

| Vendor | System Count | System Share(%) | Rmax(GFlops) | Rpeak(GFlops) | Processor cores |

|---|---|---|---|---|---|

| IBM | 223 | 44.6 | 20234409.46 | 31888720.48 | 3317036 |

| Hewlett-Packard | 141 | 28.2 | 9673402.4 | 16410722.22 | 1509694 |

| Cray Inc. | 27 | 5.4 | 10614483 | 13558554.6 | 1457068 |

| SGI | 17 | 3.4 | 2974418 | 3764607.92 | 336104 |

| Bull SA | 15 | 3 | 3287252 | 4146261.12 | 321284 |

| Appro International | 13 | 2.6 | 2371260 | 3122119.2 | 219648 |

| Dell | 11 | 2.2 | 1160900 | 1492525.8 | 136722 |

| Oracle | 10 | 2 | 1648199.82 | 1965064.96 | 183040 |

| Hitachi | 5 | 1 | 404551 | 548899.8 | 32032 |

| Fujitsu | 4 | 0.8 | 10909940 | 11707788 | 743176 |

Legend

- Vendor – The manufacturer of the platform and hardware.

- Rmax – The highest score measured using the LINPACK benchmark suite. This is the number that is used to rank the computers. Measured in quadrillions of floating point operations per second, i.e. Petaflops(Pflops).

- Rpeak – This is the theoretical peak performance of the system. Measured in Pflops.

- Processor cores – The number of active processor cores used.

Top 10 supercomputers of today<ref>http://www.junauza.com/2011/07/top-10-fastest-linux-based.html</ref>

Below are the Top 10 supercomputers in the World(as of June 2011). An effort has been made to compare the architectural features of these supercomputers.

1.K-computer:

- K-computer is currently the world's fastest supercomputer. It is developed by Fujitsu at the RIKEN Advanced Institute for Computational Science campus in Kobe, Japan.

- As per the LINPACK benchmarking standards, K-computer managed to give a peak performance of a mind-blowing 8.16 petaflops toppling Tianhe-1A off its number one spot.

- This supercomputer uses 68,544 2.0 GHZ 8-core SPARC 64 VIIIfx processors packed in 672 cabinets, for a total of 548,352 cores. In layman's term, K-computer's performance is almost equivalent to the performance of 1 million desktop computers.

- The file system used here is an optimized parallel file system based on Lustre, called Fujitsu Exabyte File System.

- One of the disadvantage with this high-performer is it consumes about 9.8 MW of power, that's the amount of power that would be enough to light 10,000 houses. When compared with its closest competitor, that is the Tianhe-1A, the K-computer is miles ahead and it is highly unlikely that it would lose its number 1 spot any time soon.

2.Tianhe-IA:

- Tianhe-1A is an upgraded model of Tianhe-1 that was developed by the Chinese National University of Defense in Changsha, Hunan. Tianhe-1 stands for “Milky Way number 1” in Chinese.

- Till June 2011, Tianhe-1A was the world's fastest supercomputer before being overtaken by Japan's K computer.

- This 88 million dollar beast consists of 112 computer cabinets, 12 storage cabinets, 6 communication cabinets and 8 I/O cabinets. Each cabinet has 4 frames, each frame having eight blades and a 16-port switching board. The system has 3584 such blades containing 7168 GPUs and 14,336 CPUs.

- This Chinese marvel has given a peak performance of about 2.5 petaflops and is used in carrying out computations for petroleum exploration and aircraft design.

- The best part about Tianhe-1A however, is the fact that it is an open access computer. Which means that it will provide services to other countries too.

- And maintaining this supercomputer costs about 20 million USD a year.

3. Jaguar Cray:

- Running on Cray Linux Environment, Jaguar is currently the world's third fastest supercomputer. It has achieved a peak performance of about 1.75 petaflops and was once the world's fastest supercomputer before being overtaken by the Chinese Tianhe-1A in 2010-11.

- The current model, that is Cray CTX5, is an upgraded version of the popular Cray CTX4. Jaguar has around 224, 256 x86-based AMD Opteron processor cores with 16 GB of memory for each node.

- The file system used here is an external Lustre file system, which is basically a massively parallel-distributed file system that is used for cluster computing.The file system is capable of storing over 10 Petabytes of data and has a read/write benchmark of 240 GB/s.

- This mean that this supercomputer costs a whopping 104 million USD and can be found at the Oak Ridge National Laboratory in Tennessee.

4. Nebulae:

- Nebulae is a research supercomputer located in Shenzhen, Guangdong, China.

- It has a theoretical peak performance of around 2.9 petaflops.

- Nebulae is the 4th most powerful supercomputer in the world and the second most powerful in China.

5.TSUBAME 2.0:

- TSUBAME 2.0 is the successor of TSUBAME 1.0, which previously was the fastest supercomputer in Japan.

- TSUBAME stands for Tokyo Tech Supercomputer Ubiquitously Accessible Mass storage Environment.Tsubame is also the word for a swallow in Japanese that forms an integral part of their logo.

- The Japanese marvel has a theoretical peak performance of a whopping 2.4 petaflops making it the 5th fastest supercomputer in the world. It has an aggregated memory bandwidth of 720 Terabytes per second.

6. Cielo Cray XE6:

- This mean machine that was unveiled in May 2010, is the sixth fastest supercomputer in the world.

- It is powered by AMD x86-64 Opteron 8 core processor. Cielo is located in Los Alamos National Laboratory in New Mexico, USA and is mainly used for research purposes.

7. Pleiades SGI Altix:

- Pleiades is a supercomputer used by NASA to conduct modeling and simulation for their missions.Pleiades is the world's 7th fastest supercomputer.

- Its performance averages around 1.09 petaflops with a peak of 1.315 petaflops. Loaded with a memory of 185 TB and 111,104 cores.

- The beast runs on SUSE Linux and has about 6.9 PB of storage space with 12 Direct Data Network (DDN) RAIDs.

8. Cray XE:

- Housed in DOE's National Energy Research Scientific Computing Center (NERSC), California, Cray XE6 is currently the world's 8th fastest supercomputer.

- It has achieved a peak performance of 1.5 petaflops and runs on Cray Linux Environment version 3. Specs include, 1536 cores per cabinet with 8 or 12-core 64-bit AMD Opteron 6100 Series processors.

- XE6 also comes with a Hardware Supervisory System (HSS) that integrates hardware and software components to provide system monitoring, fault identification and recovery.

9. Tera 100:

- Built by the French company Bull SA, Tera 100 is Europe's fastest supercomputer.

- It runs on Red Hat Enterprise Linux and gives an average of 1 petaflops, peaking at 1.25 petaFlops.

- It is one of the most efficient supercomputers in the world running at an efficiency of 83.7 %.

- Going back to the specs, Tera 100 comes with 20 Petabytes of storage, 300 TB of memory and the processing power of 140,000 Intel Xeon processor cores.

- This supercomputer includes specially designed water-cooled doors, which cut electrical consumption to half when compared with traditional air-cooled ones.

10. IBM Roadrunner:

- This is the world's tenth fastest supercomputer, IBM Roadrunner was built by IBM at the Los Almos National Laboratory in New Mexico, USA.

- It costs around 125 million USD and is the fourth most energy efficient supercomputer in the world.

- A computer's performance is generally measured in FLOPS, which stands for floating point operations per second. IBM's Roadrunner has a speed of about 1 petaflops(1015) with a top speed of 1.456 petaflops which it reached in November 2008.

- It uses Red Hat Enterprise Linux along with Fedora as its operating system and occupies almost 6000 sq. ft. of real estate.

- Roadrunner's main use is to predict whether USA's aging arsenal of nuclear weapons is safe and reliable. It is also used in other fields like financial, aerospace and automotive industries.

- The unique thing about Roadrunner is its use of two different processing architectures at the same time, more commonly known as hybrid design.

- This consists of AMD's Opteron along with IBM's own Powercell 8i. In case your dual core computer's speed was never good enough for you, the IBM Roadrunner boasts of a whopping 122,400 cores

Benchmarking: The benchmarks – that is, the figures which are in petaflops – are carried out using LINPACK. LINPACK is basically a collection of FORTRAN subroutines that analyzes and solves linear equations and linear least-square problems. The computer runs a program that solves a system of linear equations and the floating point rate of execution is measured. It is currently the best way to understand how fast a computer works thus making it a benchmarking standard in the world of supercomputers.

Supercomputer Programming Models<ref>http://books.google.com/books?id=tDxNyGSXg5IC&pg=PA4&lpg=PA4&dq=evolution+of+supercomputers&source=bl&ots=I1NZtZyCTD&sig=Ma2fHyp336BSp4Yv2ERmfrpeo4&hl=en&ei=IAReS4WbM8eUtgf2u8GnAg&sa=X&oi=book_result&ct=result&resnum=5&ved=0CB4Q6AEwBA#v=onepage&q=evolution%20of%20supercomputers&f=false</ref>

The parallel architectures of supercomputers often dictate the use of special programming techniques to exploit their speed. The base language of supercomputer code is, in general, Fortran or C, using special libraries to share data between nodes. Now environments such as PVM and MPI for loosely connected clusters and OpenMP for tightly coordinated shared memory machines are used. Significant effort is required to optimize a problem for the interconnect characteristics of the machine it is run on. The aim is to prevent any of the CPUs from wasting time waiting on data from other nodes.

Now we will discuss briefly regarding the programming languages mentioned above.

1) Fortran previously known as FORTRAN is a general-purpose, procedural, imperative programming language that is especially suited to computation like numeric and scientific computing. It was originally developed by IBM in the 1950s for scientific and engineering applications,then became very dominant in this area of programming early on and has been in use for over half a century in very much computationally intensive areas such as numerical weather prediction, finite element analysis, computational fluid dynamics (CFD), computational physics, and computational chemistry. It is one of the most popular and highly preferred language in the area of high-performance computing and is the language used for programs that benchmark and rank the world's fastest supercomputers.

Fortran a blend derived from The IBM Mathematical Formula Translating System encompasses a lineage of versions, each of which evolved to add extensions to the language while usually retaining compatibility with previous versions. Successive versions have added support for processing of character-based data (FORTRAN 77), array programming, modular programming and object-based programming (Fortran 90 / 95), and object-oriented and generic programming (Fortran 2003).

2) C-Language is a general-purpose computer programming language developed in 1972 by Dennis Ritchie at the Bell Telephone Laboratories to use with the Unix operating system. It is also widely used for developing portable application software. C is one of the most popular programming languages and there are hardly few computer architectures for which a C compiler does not exist. C has greatly influenced many other popular programming languages, most notably C++, which originally began as an extension to C.

It was designed to be compiled using a relatively straightforward compiler, to provide low-level access to memory, to provide language constructs that map efficiently to machine instructions, and to require minimal run-time support.The language was designed to encourage machine-independent programming. The language has been used in very wide range of platforms, from embedded microcontrollers to supercomputers.

3) The Parallel Virtual Machine (PVM) is a software tool used for parallel networking of computers. It is designed to allow a network of heterogeneous Unix and/or Windows machines to be used as a single distributed parallel processor. Thus large and complex computational problems can be solved more cost effectively by using the combined memory and power of many computers. The software is very portable and has been compiled on everything from laptops to Crays.

PVM enables users to exploit their existing computer hardware to solve complex problems at very less cost. PVM is also been used as an educational tool to teach parallel programming and to solve important practical problems. It was developed by the University of Tennessee, Oak Ridge National Laboratory and Emory University. The first version was written at ORNL in 1989, and after being rewritten by University of Tennessee, version 2 was released in March 1991. Version 3 was released in March 1993, and supported fault tolerance and better portability.User programs written in C, C++, or Fortran can access PVM through provided library routines.

4) The OpenMP (Open Multi-Processing) is an application programming interface (API) that supports multi-platform shared memory multiprocessing programming in C, C++ and Fortran on many architectures, including Unix and Microsoft Windows platforms. It consists of a set of compiler directives, library routines, and environment variables that influence run-time behavior.

It was developed by a group of major computer hardware and software vendors. OpenMP is a portable, scalable model that gives programmers a simple and flexible interface for developing parallel applications for platforms ranging from the desktop to the supercomputer.An application built with the hybrid model of parallel programming can run on a computer cluster using both OpenMP and Message Passing Interface (MPI), or more transparently through the use of OpenMP extensions for non-shared memory systems.

The OpenMP Architecture Review Board (ARB) published its first API specifications, OpenMP for Fortran 1.0, in October 1997. October the following year they released the C/C++ standard. 2000 saw version 2.0 of the Fortran specifications with version 2.0 of the C/C++ specifications being released in 2002. Version 2.5 is a combined C/C++/Fortran specification that was released in 2005.

Version 3.0, released in May, 2008, is the current version of the API specifications. The new features included in 3.0 is the concept of tasks and the task construct. More info regarding openMP can be read here OpenMP 3.0 specifications.

External links

3.Top500-The supercomputer website

5.Supercomputers to "see" black holes

6.Supercomputer simulates stellar evolution

7.Encyclopedia on supercomputer

10.Water-cooling System Enables Supercomputers to Heat Buildings

13.UC-Irvine Supercomputer Project Aims to Predict Earth's Environmental Future

14.Wikipedia

15.Parallel programming in C with MPI and OpenMP ByMichael Jay Quinn

19.SuperComputers for One-Fifth the Price

References

<references/>