CSC/ECE 506 Spring 2013/1b dj: Difference between revisions

| Line 14: | Line 14: | ||

*'''Massive Parallel System''' can be used to describe the architecture of the supercomputer. By the end of 20th century, connecting thousands of processors with fast connections started to become the main approach for building supercomputers. Today, there are two main approaches to build this massive parallel system – the grid computing and computer cluster approaches, which will be described in the later sections. | *'''Massive Parallel System''' can be used to describe the architecture of the supercomputer. By the end of 20th century, connecting thousands of processors with fast connections started to become the main approach for building supercomputers. Today, there are two main approaches to build this massive parallel system – the grid computing and computer cluster approaches, which will be described in the later sections. | ||

*'''Large Power Consumption''' is one of the major problems for running a supercomputer. Each supercomputer can contain thousands of processors and requires a tremendous amount of power to run. For example, [http://en.wikipedia.org/wiki/K_computer K computer], a Japanese supercomputer on [http://www.TOP500.org TOP500.org] reported the highest total power consumption of 9.89 MW in 2011, which is the equivalent of the total power consumption for 10,000 suburban homes in one year. | *'''Large Power Consumption''' is one of the major problems for running a supercomputer. Each supercomputer can contain thousands of processors and requires a tremendous amount of power to run. For example, [http://en.wikipedia.org/wiki/K_computer K computer], a Japanese supercomputer on [http://www.TOP500.org TOP500.org] reported the highest total power consumption of 9.89 MW in 2011, which is the equivalent of the total power consumption for 10,000 suburban homes in one year. | ||

*'''Heat Management''' is another major problem for supercomputer and affect the computer system in various ways. For instances, it can | *'''Heat Management''' is another major problem for supercomputer and affect the computer system in various ways. For instances, it can cause processors delay, system shutdown, or even equipments burn-down. In order to reduce the heat, various cooling technologies can be applied, such as liquid cooling, air cooling, etc. One of interesting example was the [http://www.extremetech.com/extreme/131259-ibm-deploys-hot-water-cooled-supercomputer dubbed Aquasar], a hot-water cooling system developed by IBM that consumes 40 percent less energy than a comparable air-cooling system. | ||

*'''High Cost''' is another major issue of supercomputer. For example, the initial upgrade of Titan supercomputer was $60 million, and the estimated total cost for the system was $97 million. | *'''High Cost''' is another major issue of supercomputer. For example, the initial upgrade of Titan supercomputer was $60 million, and the estimated total cost for the system was $97 million. | ||

Revision as of 18:27, 3 February 2013

Comparisons Between Supercomputers

Supercomputers are extremely capable computers, able to solve complex tasks in a relatively small amount of time. Their ability to massively outperform typical home and office computers is made possible normally either by an abundance of processor cores or smaller computers working in conjunction together. Supercomputers are generally specialized computers that tend to be very expensive, not available for general-purpose use and are used in computations where large amounts of numerical processing is required. They are used in scientific, military, graphics applications and for other number or data intensive computations <ref>http://dictionary.reference.com/browse/supercomputer Definition of supercomputer</ref>, <ref>http://www.webopedia.com/TERM/S/supercomputer.html Definition of supercomputer</ref>.

Since supercomputers have existed, as technology has advanced, they have continued to be surpassed by one another. This has lead to a drive for engineers and scientists to design and create supercomputers that continue to outperform others<ref>http://en.wikipedia.org/wiki/Supercomputer#History</ref>. This article compares current supercomputers as well as supercomputer architectures.

For the history, development and current state of supercomputing, including a fairly recent, detailed top 10 list, please see a fellow student's wiki article on Supercomputers.

Benchmarking Supercomputers

Supercomputers are generally compared qualitatively using floating point operations per second, or FLOPS. Using standard prefixes, higher levels of FLOPS can be specified as the computing power of supercomputers increases. For example, KiloFLOPS for thousands of FLOPS and MegaFLOPS for millions of FLOPS <ref>http://kevindoran.blogspot.com/2011/04/comparing-performance-of-supercomputers.html Doran, Kevin (April 2011) Comparing the performance of supercomputers</ref>. Often you'll see just the first letter of the prefix with FLOPS. For example, for GigaFLOPS or billions of FLOPS, you'll see GFLOPS <ref>http://top500.org/faq/what_gflop_s Definition of GFLOPS</ref>.

A software package called LINPACK is a standard approach to testing or benchmarking supercomputers by solving a dense system of linear equations using the Gauss method. <ref>http://www.top500.org/project/linpack LINPACK defined</ref>. However, LINPACK benchmarking software is not only used to benchmark supercomputers, it can also be used to benchmark a typical user computer <ref>http://www.xtremesystems.org/forums/showthread.php?197835-IntelBurnTest-The-new-stress-testing-program Intel Benchmark Software</ref>.

Characteristics

Based on the TOP500.org data as of November 2012, today’s supercomputers share the following key characteristics:

- High Processing Speed is the primary feature of supercomputer. As the technology grows, more and more calculation-intensive tasks such as weather forecasting and molecular modeling needed to be performed and resolved in a small time frame. These tasks used to take years to complete, but a supercomputer can now solve the same problem in minutes. As November 2012, the world’s #1 supercomputer, Cray Titan, can handle more than 20,000 trillion calculation per second. It is targeting to conduct researches such as material science code, climate change, and biofuels to bring tremendous real world societal benefits.

- Massive Parallel System can be used to describe the architecture of the supercomputer. By the end of 20th century, connecting thousands of processors with fast connections started to become the main approach for building supercomputers. Today, there are two main approaches to build this massive parallel system – the grid computing and computer cluster approaches, which will be described in the later sections.

- Large Power Consumption is one of the major problems for running a supercomputer. Each supercomputer can contain thousands of processors and requires a tremendous amount of power to run. For example, K computer, a Japanese supercomputer on TOP500.org reported the highest total power consumption of 9.89 MW in 2011, which is the equivalent of the total power consumption for 10,000 suburban homes in one year.

- Heat Management is another major problem for supercomputer and affect the computer system in various ways. For instances, it can cause processors delay, system shutdown, or even equipments burn-down. In order to reduce the heat, various cooling technologies can be applied, such as liquid cooling, air cooling, etc. One of interesting example was the dubbed Aquasar, a hot-water cooling system developed by IBM that consumes 40 percent less energy than a comparable air-cooling system.

- High Cost is another major issue of supercomputer. For example, the initial upgrade of Titan supercomputer was $60 million, and the estimated total cost for the system was $97 million.

Finding Supercomputer Comparison Data

Starting in 1993, TOP500.org began collecting performance data on computers and update their list every six months <ref>http://top500.org/faq/what_top500 What is the TOP500</ref>. This appears to be an excellent online source of information that collects benchmark data submitted by users of computers and readily provides performance statistics by Vendor, Application, Architecture and nine (9) other areas <ref name="t500stats">http://i.top500.org/stats TOP500 Stats</ref>. This article, in order to be vendor neutral, is providing the comparison by architecture. However, there are many ways to compare supercomputers and the user interface at TOP500.org makes these comparisons easy to do.

Comparison of Supercomputers by Architecture

Traditional supercomputers of today are composed of three (3) types of parallel processing architectures. These architectures are Cluster, Massively Parallel Processing or MPP, and Constellation <ref name="t500stats" />. A non-traditional, or disruptive approach, to supercomputers is Grid Computing<ref>http://searchdatacenter.techtarget.com/definition/grid-computing</ref>.

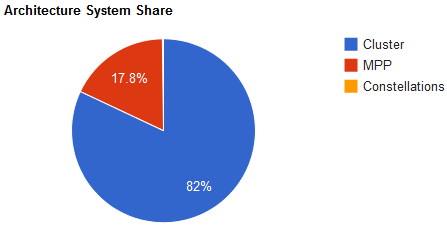

The graphic generated at TOP500.org shows the distribution of supercomputers by architecture. The Massively Parallel Processing section of the graph makes up 17.8% of the total number of current supercomputers. The bulk of supercomputers is made up of clustered systems, which Constellation architectures make up a fraction of a percent of supercomputers. Each architecture is further discussed, below.

Cluster

A Cluster is a group of computers connected together that appear as a single system to the outside world and provide load balancing and resource sharing <ref>http://searchdatacenter.techtarget.com/definition/cluster-computing Definition of Cluster Computing</ref>. Invented by Digital Equipment Corporation in the 1980's, clusters of computers form the largest number of supercomputers available today <ref>http://books.google.com/books?id=Hd_JlxD7x3oC&pg=PA90&lpg=PA90&dq=what+is+a+constellation+in+parallel+computing?&source=bl&ots=Rf9nxSqOgL&sig=-xleas5wXvNpvkgYYxguvP1tSLA&hl=en&sa=X&ei=aDcnT-XRNqHX0QHymbjrAg&ved=0CGMQ6AEwBw#v=onepage&q=what%20is%20a%20constellation%20in%20parallel%20computing%3F&f=false Applied Parallel Computing</ref>, <ref name="t500stats" />.

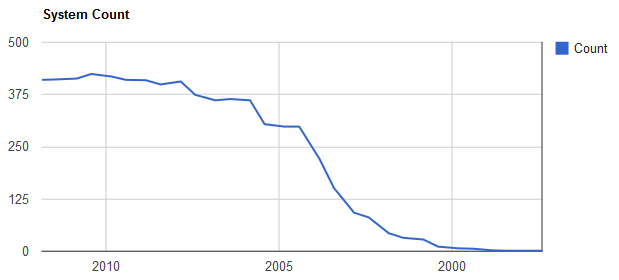

TOP500.org data as of November 2011 shows that Cluster computing makes up the largest subset of supercomputers at eight-two percent (82%). The chart to the right shows the growth of cluster supercomputer systems with the oldest data on the right. Teh number of clustered supercomputer systems grew rapidly during the 21st century and started leveling off after about 7.5 years.

The total processing power of the top 500 cluster supercomputers is reported at 50,192.82 TFLOPS and the trend for growth of cluster based supercomputers has leveled off<ref name="t500stats" />.

Some of the advantages and disadvantages of using cluster architecture are shown below.

Advantages - Test

Massively Parallel Processing, MPP

Massively Parallel Processing or MPP supercomputers are made up of hundreds of computing nodes and process data in a coordinated fashion <ref name="ttmppdef">http://whatis.techtarget.com/definition/0,,sid9_gci214085,00.html</ref>. Each node of the MPP generally has its own memory and operating system and can be made up of nodes that have multiple processors and/or multiple cores <ref name="ttmppdef" />.

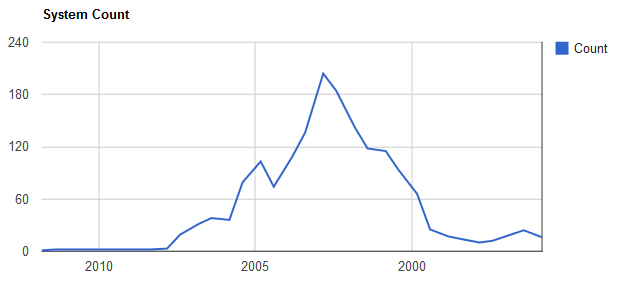

TOP500.org/stats for the MPP architecture of supercomputers shows that as of November 2011, MPP makes up approximately 17.8% of all supercomputers reported. A graph of the growth and subsequent decline of the MPP architecture from data displayed at TOP500.org/stats is shown on the right. MPP supercomputer systems grew from the early 90's until the early part of the 21st century and have since declined in total number.

The total processing power of the top 500 MPP supercomputers is 23,823.97 TFLOPS. The trend of MPP supercomputers, like cluster based supercomputers, has leveled off<ref name="t500stats" />.

Constellation

A Constellation is a cluster of supercomputers <ref>http://www.mimuw.edu.pl/~mbiskup/presentations/Parallel%20Computing.pdf</ref>. TOP500.org shows only one constellation supercomputer as of November 2011. The graph shows rapid growth and decline in the first 5 years of the 21st century.

This author's speculation about the decline of constellations is based on several factors: Multiple processor and/or multiple core computers have been getting faster and less expensive. Combine these less expensive computers into very large clusters and you can get computing power that rivals a constellation. Alternatively, more and more computers have symmetric multiprocessing, SMP, and the concept of constellations and clusters is converging.

The total processing power of the constellation supercomputer is: 52.84 TFLOPS<ref name="t500stats" />.

Grid Computing

Grid Computing is defined as applying many networked computers to solving a single problem simultaneously<ref>http://searchdatacenter.techtarget.com/definition/grid-computing</ref>. It is also defined as a network of computers used by a single company or organization to solve a problem<ref>http://boinc.berkeley.edu/trac/wiki/DesktopGrid</ref>. Yet another definition as implemented by GridRepublic.org creates a supercomputing grid by using volunteer computers from across the globe<ref>http://www.gridrepublic.org/index.php?page=about</ref>. All of these definitions have something in common, and that is using parallel processing to attack a problem that can be broken up into many pieces.

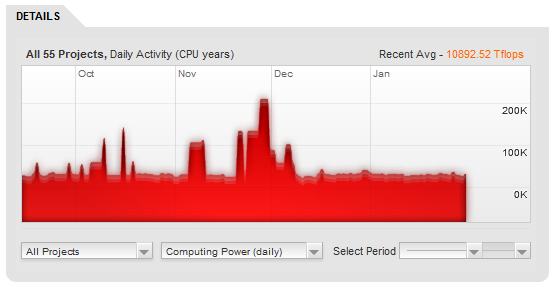

The graph generated by data at GridRepublic.org shows the average processing power of this supercomputer created by volunteers from around the world.

The GridRepublic.org statistics is for 55 applications running using a total of 10,979,114 GFLOPS or 10,979.114 TFLOPS<ref name="grstats">http://www.gridrepublic.org/index.php?page=stats</ref>.

Top 10 Supercomputers

According to Top500.org, the top 10 supercomputers in the world, as of November 2012, are listed below:

| Number | Name | System |

|---|---|---|

| 1 | Titan | Cray XK7 , Opteron 6274 16C 2.200GHz, Cray Gemini interconnect, NVIDIA K20x |

| 2 | Sequoia | BlueGene/Q, Power BQC 16C 1.60 GHz, Custom |

| 3 | K computer | SPARC64 VIIIfx 2.0GHz, Tofu interconnect |

| 4 | Mira | BlueGene/Q, Power BQC 16C 1.60GHz, Custom |

| 5 | JUQUEEN | BlueGene/Q, Power BQC 16C 1.600GHz, Custom Interconnect |

| 6 | SuperMUC | iDataPlex DX360M4, Xeon E5-2680 8C 2.70GHz, Infiniband FDR |

| 7 | Stampede | PowerEdge C8220, Xeon E5-2680 8C 2.700GHz, Infiniband FDR, Intel Xeon Phi |

| 8 | Tianhe-1A | NUDT YH MPP, Xeon X5670 6C 2.93 GHz, NVIDIA 2050 |

| 9 | Fermi-100 | BlueGene/Q, Power BQC 16C 1.60GHz, Custom |

| 10 | DARPA Trial Subset | Power 775, POWER7 8C 3.836GHz, Custom Interconnect |

For a more detailed version of this list, see a fellow student's wiki on supercomputers.

Advantages & Disadvantages of Supercomputers

An excellent way to compare the advantages and disadvantages of supercomputers is to use a table. Although this list is not exhaustive, it generally sums up the advantages as being the ability solve large number crunching problems quickly but at a high cost due to the specialty of the hardware, the physical scale of the system and power requirements.

| Advantage | Disadvantage |

|---|---|

| Ability to process large amounts of data. Examples include atmospheric modeling and oceanic modeling. Processing large matrices and weapons simulation<ref name="paulmurphy">http://www.zdnet.com/blog/murphy/uses-for-supercomputers/746 Murphy, Paul (December 2006) Uses for supercomputers</ref>. | Limited scope of applications, or in general, they're not general purpose computers. Supercomputers are usually engaged in scientific, military or mathematical applications<ref name="paulmurphy" />. |

| The ability to process large amounts of data quickly and in parallel, when compared to the ability of low end commercial systems or user computers<ref>http://nickeger.blogspot.com/2011/11/supercomputers-advantages-and.html Eger, Nick (November 2011) Supercomputer advantages adn disadvantages</ref>. | Cost, power and cooling. Commercial supercomputers costs hundreds of millions of dollars. They have on-going energy and cooling requirements that are expensive<ref name="robertharris">http://www.zdnet.com/blog/storage/build-an-8-ps3-supercomputer/220?tag=rbxccnbzd1 Harris, Robert (October 2007) Build an 8 PS3 supercomputer</ref>. |

Although these are advantages and disadvantages of the traditional supercomputer, there is movement towards the consumerization of supercomputers which could result in supercomputers being affordable to the average person<ref name="robertharris" />.

Summary of the Comparison of Supercomputers

Cluster supercomputers account for about twice as much processing in TFLOPS as MPP based supercomputers. The statistics tracked by GridRepublic.org for 55 applications shows that grid computing is using about the same amount of processing power as the fastest individual supercomputer listed on the TOP500.org list of supercomputers. The fastest computer listed is the

RIKEN located at the Advanced Institute for Computational Science (AICS) in Japan, which is a K computer, SPARC64 VIIIfx 2.0GHz, and Tofu interconnect that operates at 10510.00 TFLOPS<ref>http://www.top500.org/list/2011/11/100</ref>.

Grid Computing as an alternative to individually defined supercomputers seems to be growing and the expense of operating it is fully distributed across the volunteers that are apart of it. However, with any system where you don't have complete control of its parts, you can't rely on all of those parts being there all the time.

References

<references />