CSC/ECE 506 Fall 2007/wiki3 1 satkar


Revision as of 23:52, 17 October 2007

Introduction

Cache organization plays a key role in modern computers, especially in multiprocessors. As the number of processors scales up, the cache miss rate tends to increase as well. A high cache miss rate is a cause for concern, since it can significantly limit multiprocessor performance. Cache misses are broadly categorized into the “three Cs”: compulsory misses, capacity misses, and conflict misses. Cache-coherent multiprocessors introduce yet another category, called coherence misses. These occur when blocks of data are shared among multiple caches, and are of two types:

• True sharing: When a data word produced by one processor is used by another processor, the resulting miss is a true sharing miss. True sharing is intrinsic to the memory reference stream of a program and does not depend on the block size.

• False sharing: When independent data words used by different processors are placed in the same block, a write to one word invalidates the whole block in the other caches. This is called false sharing.

Increasing the cache line size helps reduce the miss rate, since each miss brings more neighboring words into the cache and spatial locality is better exploited. However, long cache lines may cause false sharing when different processors access different words in the same cache line. In essence, the processors share the line without truly sharing the accessed data.
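Whether two words end up in the same cache line depends only on their addresses and the line size. The following is a minimal sketch of that mapping, assuming 4-byte words and a hypothetical layout in which processor 0 owns the word at byte 0 and processor 1 owns the word at byte 4; the function names are illustrative and not taken from any library.

```python
WORD_SIZE = 4  # bytes per data word (assumption for this sketch)


def block_index(addr: int, block_size: int) -> int:
    """Return the index of the cache block that contains byte address addr."""
    return addr // block_size


def share_block(addr_a: int, addr_b: int, block_size: int) -> bool:
    """True when two distinct addresses fall in the same cache block.

    If the two words are used by different processors and are otherwise
    independent, sharing a block makes them candidates for false sharing.
    """
    return addr_a != addr_b and block_index(addr_a, block_size) == block_index(addr_b, block_size)


# Hypothetical layout: two independent words placed next to each other.
p0_word = 0
p1_word = WORD_SIZE

for block_size in (4, 8, 16, 64):
    print(f"block size {block_size:2d} bytes: same block = {share_block(p0_word, p1_word, block_size)}")
```

With 4-byte blocks the two words sit in different blocks; with any larger block size in this sweep they land in the same block, so a write by one processor would affect the other processor's cached copy.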

Problem with False Sharing

In multiprocessors, accessing a memory location causes a slice of memory (a cache line) containing the requested location to be copied into the cache. Subsequent references to the same location, or to locations near it, can probably be satisfied from the cache until the system determines that it must maintain coherence between cache and memory and write the cache line back to memory.

However, when multiple processors update individual elements in the same cache line, the entire cache line is invalidated, even though the updates are independent of each other. Each update of an individual element marks the line as invalid in the other caches that hold a copy. Hence, other processors accessing a different element in the same line see the line marked as invalid. They are forced to fetch a fresh copy of the line, even though the element they access has not been modified. This is because cache coherence is maintained on a cache-line basis, not for individual elements. As a result, interconnect traffic and overhead increase. Also, while the cache-line update is in progress, access to the elements in the line is inhibited.

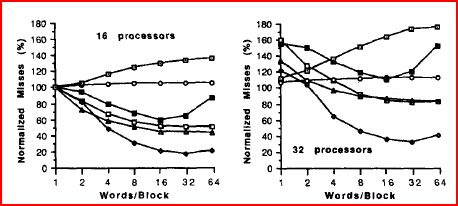
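The invalidation behavior described above can be reproduced with a toy model. The sketch below is not a real coherence protocol: it assumes every access is a write, caches are infinitely large, and a write leaves the writer as the only holder of a valid copy. The function name and the two access traces are hypothetical.

```python
def count_coherence_misses(accesses, block_size):
    """Count misses caused by invalidations in a toy write-invalidate model.

    accesses: iterable of (processor_id, byte_address) writes.
    Cold (first-touch) misses are not counted; only misses on a block the
    processor held earlier and lost to another processor's write.
    """
    valid = {}    # block index -> set of processors holding a valid copy
    seen = set()  # (processor, block) pairs that were cached at least once
    misses = 0
    for proc, addr in accesses:
        block = addr // block_size
        holders = valid.get(block, set())
        if proc not in holders and (proc, block) in seen:
            misses += 1  # the copy was invalidated by another processor
        seen.add((proc, block))
        valid[block] = {proc}  # the write invalidates all other copies
    return misses


# Two processors alternate writes, each to its own independent word.
# "packed": the words are 4 bytes apart and share one 64-byte line.
# "apart":  the words are 64 bytes apart and sit in separate lines.
packed = [(i % 2, (i % 2) * 4) for i in range(2000)]
apart = [(i % 2, (i % 2) * 64) for i in range(2000)]

print(count_coherence_misses(packed, 64))  # 1998
print(count_coherence_misses(apart, 64))   # 0
```

In the packed trace every write after the first two finds its line invalidated, although neither processor ever touches the other's word. In the second trace the same writes cause no coherence misses at all.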

This situation is called false sharing, and it can become a bottleneck for performance and scalability. A very important parameter that affects false sharing is the cache block size. In uniprocessors, increasing the block size exploits more spatial locality, so the miss rate tends to decrease. In multiprocessors, increasing the block size not only exploits more spatial locality but also increases the probability of false sharing. Thus the miss rate can go up or down as the block size increases in multiprocessors. The graph below shows the variation of miss rate as a function of block size for 16 and 32 processors. It depicts the significant increase in miss rate as the block size increases, due to false sharing.
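The two opposing effects of block size can be seen in a small simulation. This is only a sketch under strong assumptions: four processors, 4-byte words, write-only accesses, infinite caches, and two synthetic workloads invented for illustration. It does not reproduce the measurements in the cited studies.

```python
WORD = 4   # bytes per word (assumption)
PROCS = 4  # number of processors in this toy model (assumption)


def miss_rate(accesses, block_size):
    """Fraction of writes that miss (cold or coherence) in a toy write-invalidate model."""
    valid = {}  # block index -> set of processors holding a valid copy
    misses = 0
    for proc, addr in accesses:
        block = addr // block_size
        if proc not in valid.get(block, set()):
            misses += 1
        valid[block] = {proc}  # the writer becomes the only valid holder
    return misses / len(accesses)


def private_sweep(words_per_proc=256):
    """Each processor writes once through its own private 1 KiB region."""
    region = words_per_proc * WORD
    return [(p, p * region + i * WORD)
            for i in range(words_per_proc) for p in range(PROCS)]


def interleaved_updates(rounds=100):
    """Each processor repeatedly writes its own word; the words are adjacent."""
    return [(p, p * WORD) for _ in range(rounds) for p in range(PROCS)]


for block_size in (4, 8, 16, 32, 64):
    print(f"{block_size:3d} B  "
          f"private sweep: {miss_rate(private_sweep(), block_size):.4f}  "
          f"interleaved updates: {miss_rate(interleaved_updates(), block_size):.4f}")
```

In this model the private sweep benefits from larger blocks, because each miss brings in more words that the same processor uses next. The interleaved updates get worse as soon as a block holds words belonging to more than one processor, because every write then invalidates the copies held by the others.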

Strategies to combat “False Sharing”

References

[1] Josep Torrellas, Monica S. Lam, and John L. Hennessy, “False Sharing and Spatial Locality in Multiprocessor Caches”

[2] Jeffrey B. Rothman and Alan Jay Smith, “Analysis of Shared Memory Misses and Reference Patterns”