CSC/ECE 506 Spring 2011/ch2 JR: Difference between revisions

| Line 19: | Line 19: | ||

= Data Parallel Model = | = Data Parallel Model = | ||

One important feature of data-parallel programming model or data parallelism (SIMD) is the single control flow. Flynn's taxonomy classifies SIMD to be analogous to doing the same operation repeatedly over a large data set. There is only one control processor that directs the activities of all the processing elements. | One important feature of data-parallel programming model or data parallelism (SIMD) is the single control flow. Flynn's taxonomy classifies SIMD to be analogous to doing the same operation repeatedly over a large data set. There is only one control processor that directs the activities of all the processing elements. In a multiprocessor system executing a single set of instructions (SIMD), data parallelism is achieved when each processor performs the same task on different pieces of distributed data. In some situations, a single execution thread controls operations on all pieces of data. In others, different threads control the operation, but they execute the same code. | ||

== Description and Example == | |||

This section shows a simple example adapted from Solihin textbook (pp. 24 - 27) that illustrates the data-parallel programming model. Each of the codes below are written in pseudo-code style. | |||

Suppose we want to perform the following task on an array <code>a</code>: updating each element of <code>a</code> by the product of itself and its index, and adding together the elements of <code>a</code> into the variable <code>sum</code>. The corresponding code is shown below. | |||

// simple sequential task | |||

sum = 0; | |||

'''for''' (i = 0; i < a.length; i++) | |||

{ | |||

a[i] = a[i] * i; | |||

sum = sum + a[i]; | |||

} | |||

== | When we orchestrate the task using the data-parallel programming model, the program can be divided into two parts. The first part performs the same operations on separate elements of the array for each processing element (sometimes referred to as PE or pe), and the second part reorganizes data among all processing elements (In our example data reorganization is summing up values across different processing elements). Since data-parallel programming model only defines the overall effects of parallel steps, the second part can be accomplished either through shared memory or message passing. The three code fragments below are examples for the first part of the program, shared-memory version of the second part, and message passing for the second part, respectively. | ||

// data parallel programming: let each PE perform the same task on different pieces of distributed data | |||

pe_id = getid(); | |||

my_sum = 0; | |||

'''for''' (i = pe_id; i < a.length; i += number_of_pe) //separate elements of the array are assigned to each PE | |||

{ | |||

a[i] = a[i] * i; | |||

my_sum = my_sum + a[i]; //all PEs accumulate elements assigned to them into local variable my_sum | |||

} | |||

In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. For instance, if three processing elements are used then one processing element would start at i = 0, one would start at i = 1, and the last would start at i = 2. Since there are three processing elements then the index of the array for each will increase by three on each iteration until the task is complete (note that in our example elements assigned to each PE are interleaved instead of continuous). If the length of the array is a multiple of three then each processing element takes the same amount of time to execute its portion of the task. | |||

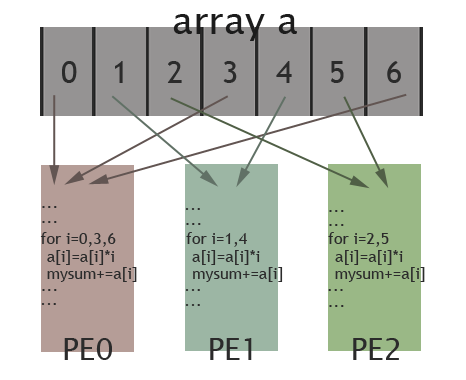

The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes (0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved. | |||

[[Image:506wiki1.png|frame|center|150px|Illustration of data parallel programming(adapted from [http://computing.llnl.gov/tutorials/parallel_comp/#ModelsData Introduction to Parallel Computing])]] | |||

== Comparison with Message Passing and Shared Memory == | == Comparison with Message Passing and Shared Memory == | ||

Revision as of 00:26, 30 January 2011

Supplement to Chapter 2: The Data Parallel Programming Model

History

As computer architectures have evolved, so have parallel programming models. The earliest advancements in parallel computers took advantage of bit-level parallelism. These computers used vector processing, which required a shared memory programming model. As performance returns from this architecture diminished, the emphasis was placed on instruction-level parallelism and the message passing model began to dominate. Most recently, with the move to cluster-based machines, there has been an increased emphasis on thread-level parallelism. This has corresponded to an increase interest in the data parallel programming model.

Bit-level parallelism in the 1970's

The major performance improvements from computers during this time were due to the ability to execute 32-bit word size operations at one time (Culler (1999), p. 15.). The dominant supercomputers of the time, like the Cray and the ILLIAC IV, were mainly Single Instruction Multiple Data architectures and used a shared memory programming model. They each used different forms of vector processing (Culler (1999), p. 21.). Development of the ILLIAC IV began in 1964 and wasn't finished until 1975 [1]. A central processor was connected to the main memory and delegated tasks to individual PE's, which each had their own memory cache. [2]. Each PE could operate either an 8-, 32- or 64-bit operand at a given time [3].

The Cray machine was installed at Los Alamos National Laborartory in1976 by Control Data Corporation and had similar performance to the ILLIAC IV [4]. The Cray machine relied heavily on the use of registers instead of individual processors like the ILLIAC IV. Each processor was connected to main memory and had a number of 64-bit registers used to perform operations [5].

Move to instruction-level parallelism in the 1980's

Increasing the word size above 32-bits offered diminishing returns in terms of performance (Culler (1999), p. 15.). In the mid-1980's the emphasis changed from bit-level parallelism to instruction-level parallelism, which involved increasing the number of instructions that could be executed at one time (Culler (1999), p. 15.). The message passing model allowed programmers the ability to divide up instructions in order to take advantage of this architecture.

Thread-level parallelism

The move to cluster-based machines in the past decade, has added another layer of complexity to parallelism. Since computers could be located across a network from each other, there is more emphasis on software acting as a bridge [6]. This has led to a greater emphasis on thread- or task-level parallelism [7] and the addition of the data parallelism programming model to existing message passing or shared memory models [8].

Data Parallel Model

One important feature of data-parallel programming model or data parallelism (SIMD) is the single control flow. Flynn's taxonomy classifies SIMD to be analogous to doing the same operation repeatedly over a large data set. There is only one control processor that directs the activities of all the processing elements. In a multiprocessor system executing a single set of instructions (SIMD), data parallelism is achieved when each processor performs the same task on different pieces of distributed data. In some situations, a single execution thread controls operations on all pieces of data. In others, different threads control the operation, but they execute the same code.

Description and Example

This section shows a simple example adapted from Solihin textbook (pp. 24 - 27) that illustrates the data-parallel programming model. Each of the codes below are written in pseudo-code style.

Suppose we want to perform the following task on an array a: updating each element of a by the product of itself and its index, and adding together the elements of a into the variable sum. The corresponding code is shown below.

// simple sequential task

sum = 0;

for (i = 0; i < a.length; i++)

{

a[i] = a[i] * i;

sum = sum + a[i];

}

When we orchestrate the task using the data-parallel programming model, the program can be divided into two parts. The first part performs the same operations on separate elements of the array for each processing element (sometimes referred to as PE or pe), and the second part reorganizes data among all processing elements (In our example data reorganization is summing up values across different processing elements). Since data-parallel programming model only defines the overall effects of parallel steps, the second part can be accomplished either through shared memory or message passing. The three code fragments below are examples for the first part of the program, shared-memory version of the second part, and message passing for the second part, respectively.

// data parallel programming: let each PE perform the same task on different pieces of distributed data

pe_id = getid();

my_sum = 0;

for (i = pe_id; i < a.length; i += number_of_pe) //separate elements of the array are assigned to each PE

{

a[i] = a[i] * i;

my_sum = my_sum + a[i]; //all PEs accumulate elements assigned to them into local variable my_sum

}

In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. For instance, if three processing elements are used then one processing element would start at i = 0, one would start at i = 1, and the last would start at i = 2. Since there are three processing elements then the index of the array for each will increase by three on each iteration until the task is complete (note that in our example elements assigned to each PE are interleaved instead of continuous). If the length of the array is a multiple of three then each processing element takes the same amount of time to execute its portion of the task.

The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes (0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved.

Task Parallel Model

Description and Example

Data Parallel Model vs Task Parallel Model

Definitions

References

- David E. Culler, Jaswinder Pal Singh, and Anoop Gupta, Parallel Computer Architecture: A Hardware/Software Approach, Morgan-Kauffman, 1999.

- Ian Foster, Designing and Building Parallel Programs, Addison-Wesley, 1995.

- Magne Haveraaen, "Machine and collection abstractions for user-implemented data-parallel programming," Scientific Programming, 8(4):231-246, 2000.

- W. Daniel Hillis and Guy L. Steele, Jr., "Data parallel algorithms," Communications of the ACM, 29(12):1170-1183, December 1986.

- Alexander C. Klaiber and Henry M. Levy, "A comparison of message passing and shared memory architectures for data parallel programs," in Proceedings of the 21st Annual International Symposium on Computer Architecture, April 1994, pp. 94-105.

- Yan Solihin, Fundamentals of Parallel Computer Architecture: Multichip and Multicore Systems, Solihin Books, 2008.