1.Message passing: Difference between revisions

No edit summary |

No edit summary |

||

| Line 2: | Line 2: | ||

Parallel programming requires interaction between the various processes that are simultaneously run on the individual processors and this is enabled by passing messages between the various processors. This important class of parallel machines, called Message-passing architectures, employs complete computers as building blocks including the microprocessor memory and the I/O system and provides communication between processors as explicit I/O operations. This style of architecture has much in common with the network of workstations, or clusters, except that the packaging of nodes is typically much tighter and the network is of much higher capability than a standard local area network. | Parallel programming requires interaction between the various processes that are simultaneously run on the individual processors and this is enabled by passing messages between the various processors. This important class of parallel machines, called Message-passing architectures, employs complete computers as building blocks including the microprocessor memory and the I/O system and provides communication between processors as explicit I/O operations. This style of architecture has much in common with the network of workstations, or clusters, except that the packaging of nodes is typically much tighter and the network is of much higher capability than a standard local area network. | ||

The world's largest supercomputers are used almost exclusively to run applications which are parallelised using Message Passing. The course covers all the basic knowledge required to write parallel programs using this programming model, and is directly applicable to almost every parallel computer architecture. | |||

Parallel programming by definition involves co-operation between processes to solve a common task. The programmer has to define the tasks that will be executed by the processors, and also how these tasks are to synchronise and exchange data with one another. In the message-passing model the tasks are separate processes that communicate and synchronise by explicitly sending each other messages. All these parallel operations are performed via calls to some message-passing interface that is entirely responsible for interfacing with the physical communication network linking the actual processors together. | |||

[[Image:Message Passing.jpg]] | |||

== Message Passing == | == Message Passing == | ||

Revision as of 03:40, 6 September 2007

Introduction

Parallel programming requires interaction between the various processes that are simultaneously run on the individual processors and this is enabled by passing messages between the various processors. This important class of parallel machines, called Message-passing architectures, employs complete computers as building blocks including the microprocessor memory and the I/O system and provides communication between processors as explicit I/O operations. This style of architecture has much in common with the network of workstations, or clusters, except that the packaging of nodes is typically much tighter and the network is of much higher capability than a standard local area network.

The world's largest supercomputers are used almost exclusively to run applications which are parallelised using Message Passing. The course covers all the basic knowledge required to write parallel programs using this programming model, and is directly applicable to almost every parallel computer architecture.

Parallel programming by definition involves co-operation between processes to solve a common task. The programmer has to define the tasks that will be executed by the processors, and also how these tasks are to synchronise and exchange data with one another. In the message-passing model the tasks are separate processes that communicate and synchronise by explicitly sending each other messages. All these parallel operations are performed via calls to some message-passing interface that is entirely responsible for interfacing with the physical communication network linking the actual processors together.

Message Passing

In message passing, a substantial distance exists between the programming model and the actual hardware primitives, with user communication performed through operating systems or library calls that perform the low-level actions including the actual communication operation. The most common user-level communication operations on message passing are variants of the send and receive. In its simplest form send specifies a local data buffer that is to be transmitted and a receiving process(typically on a remote processor).Receive specifies a sending process and a local data buffer into which the transmitted data is to be placed.together a matching send and receive causes a data transfer from one processor to another.In most message passing systems, the send process also allows an identifier or tag to be attached to the message, and the receiving operation specifies a matching rule( such as a specific tag from a specific processor)

The combination of a send and a matching receive accomplishes a memory to memory copy, where each end specifies its local data address, and a pair wise synchronization event. There are several possible variants of this synchronization event, depending upon whether the send completes when the receive has been executed, when the send buffer is available for reuse, or when the request has been accepted. Similarly, the receive can potentially wait until a matching send occurs or simply post the receive. Each of these variants have somewhat different semantics and different implementation requirements. Message passing has long been used as a means of communication and synchronization among arbitrary collections of cooperating sequential processes, even on a single processor. Important examples include programming languages, such as CSP and Occam, and common operating systems functions, such as sockets. Parallel programs using message passing are typically quite structured, like their shared-memory counter parts. Most often, all nodes execute identical copies of a program, with the same code and private variables. Usually, processes can name each other using a simple linear ordering of the processes comprising a program.

Typical Structure

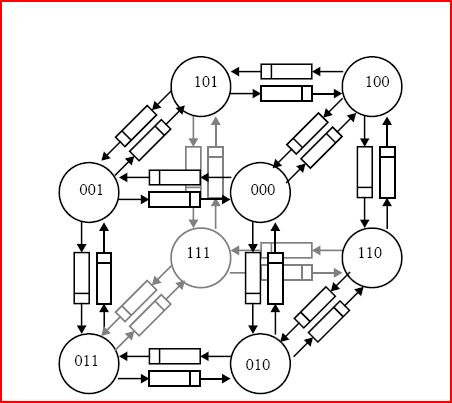

Early message passing machines provided hardware primitives that were very close to the simple send/receive user-level communication abstraction, with some additional restrictions. A node was connected to a fixed set of neighbors in a regular pattern by point-to-point links that behaved as simple FIFOs. Most early machines were hypercubes, where each node is connected to n other nodes differing by one bit in the binary address, for a total of 2^n nodes, or meshes, where the nodes are connect to neighbors on two or three dimensions. The network topology was especially important in the early message passing machines, because only the neighboring processors could be named in a send or receive operation. The data transfer involved the sender writing into a link and then writing the message until the receiver started reading it, so the send would block until the receive occurred. In modern terms this is called synchronous message passing because the two events coincide in time. The details of moving data were hidden from the programmer in a message passing library, forming a layer of software between send and receive calls and the actual hardware.

Typical structure of an early message passing machines

The direct FIFO design was soon replaced by more versatile and more robust designs which provided direct memory access (DMA) transfers on either end of the communication event. The use of DMA allowed non-blocking sends, where the sender is able to initiate a send and continue with useful computation (or even perform a receive) while the send completes. On the receiving end, the transfer is accepted via a DMA transfer by the message layer into a buffer and queued until the target process performs a matching receive, at which point the data is copying into the address space of the receiving process. The physical topology of the communication network dominated the programming model of these early machines and parallel algorithms were often stated in terms of a specific interconnection topology, e.g., a ring, a grid, or a hypercube. However, to make the machines more generally useful, the designers of the message layers provided support for communication between arbitrary processors, rather than only between physical neighbors. This was originally supported by forwarding the data within the message layer along links in the network. Soon this routing function was moved into the hardware, so each node consisted of a processor with memory, and a switch that could forward messages, called a router. However, in this store and forward approach the time to transfer a message is proportional to the number of hops it takes through the network, so there remained an emphasis on interconnection topology.

The emphasis on network topology was significantly reduced with the introduction of more general purpose networks, which pipelined the message transfer through each of the routers forming the interconnection network. In most modern message passing machines, the incremental delay introduced by each router is small enough that the transfer time is dominated by the time to simply move that data between the processor and the network, not how far it travels.This greatly simplifies the programming model; typically the processors are viewed as simply forming a linear sequence with uniform communication costs.A processor in a message passing machine can name only the locations in its local memory, and it can name each of the processors, perhaps by number or by route. A user process can only name private addresses and other processes; it can transfer data using the send/receive calls.