CSC/ECE 517 Spring 2022 - E2241: Heatgrid fixes and improvements


Revision as of 17:39, 1 May 2022

This page documents E2241: Heatgrid fixes and improvements, the final project for CSC/ECE 517 Spring 2022.

Team

Mentor

- Nicholas Himes (nnhimes)

Team Members (PinkPatterns)

- Eshwar Chandra Vidhyasagar Thedla (ethedla)

- Gokul Krishna Koganti (gkogant)

- Jyothi Sumer Goud Maduru (jmaduru)

- Suneha Bose (sbose2)

Heat Grid

Heat Grid refers to the part of a view in Expertiza that shows the scores from all reviews done for a particular assignment. It shows the score each peer reviewer gave for each rubric item. Instructors use it to assign scores to individual assignments, and students use it to view the review scores of their own assignment. Our project scope covers fixing issues related to the heat grid and any subsequent bugs that might arise.

Issues identified

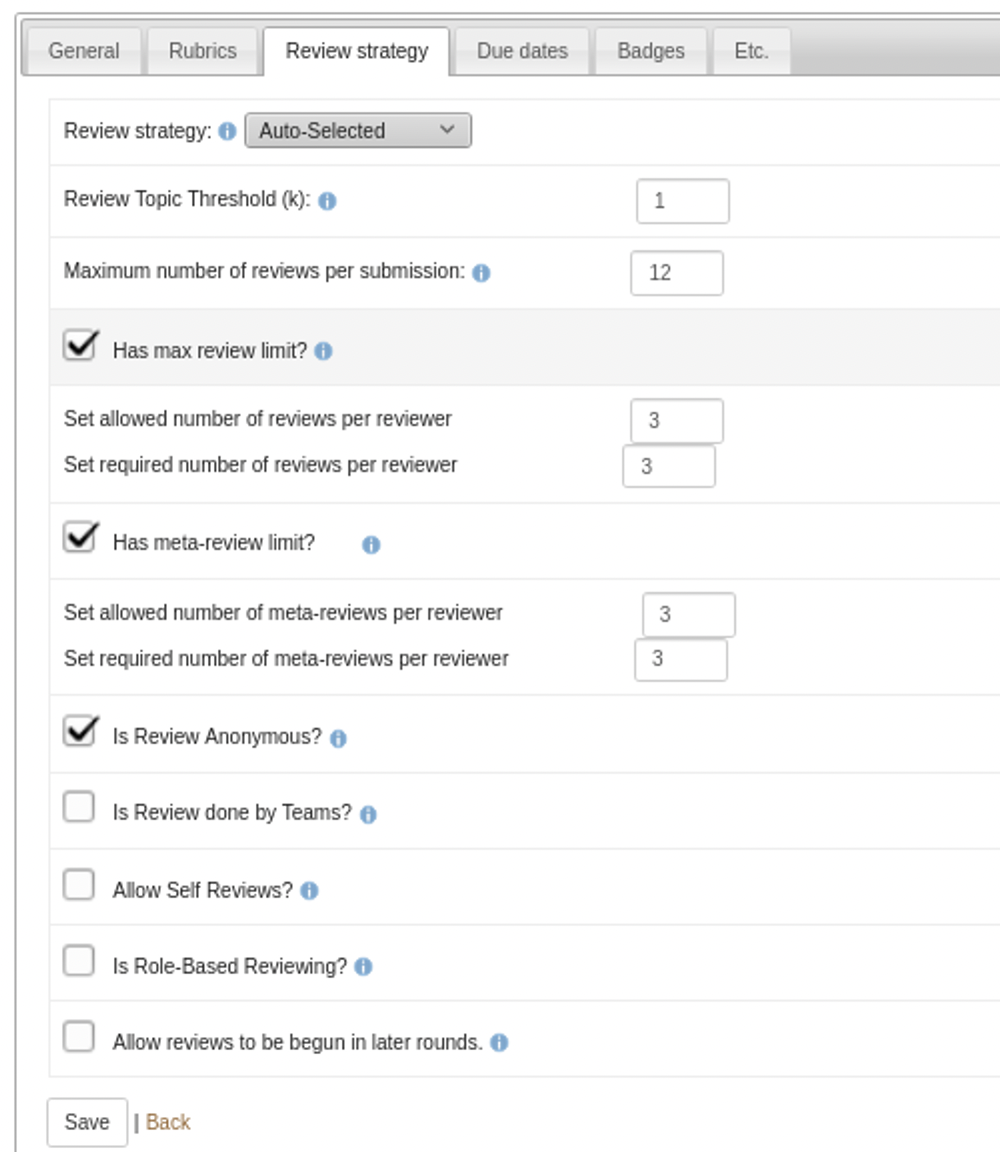

Issue #2019: Restrict teammates from reviewing their own work

In some instances a student was shown as reviewing his/her own team even though the instructor had not checked the "Allow Self Review" checkbox. This situation should not arise in practice. Test cases must be added extensively so that we are notified if the bug occurs again.

Issue #1869: Deal with the metrics of the heat grid

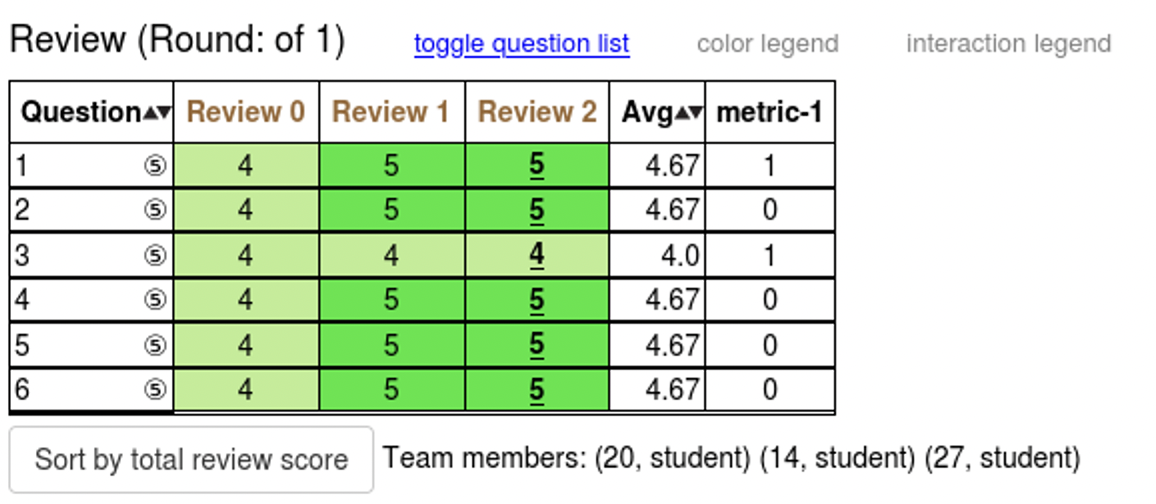

The column name metric-1 in the heat grid follows a poor naming convention: it does not explain what the column refers to. It must be renamed to something more descriptive.

As of now, the heat grid uses only one metric (metric-1). We must make it easy to add new metrics to the system. Instructors should be able to toggle between metrics according to their preferences, which can be done with a drop-down menu.

Plan of work

Issue #2019: Restrict teammates from reviewing their own work

The bug behind this issue could not be reproduced, so it cannot be fixed directly. Instead, comprehensive test cases must be written so that we are notified if the bug arises again. We plan to add RSpec unit tests covering the following cases:

- If the instructor unchecks the “Allow self review” option

  - Instructor should not be able to assign a student to review work that he/she has submitted.

  - Instructor should not be able to assign a student to review work that their teammate has submitted.

  - Students should not be able to review work he/she has submitted.

  - Students should not be able to review work a teammate has submitted.

- If the instructor checks the “Allow self review” option

  - Instructor should be able to assign a student to review work that he/she has submitted.

  - Instructor should be able to assign a student to review work that their teammate has submitted.

  - Students should be able to review work he/she has submitted.

  - Students should be able to review work a teammate has submitted.
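The cases listed above reduce to a single rule: a student may review a submission from his/her own team only when self-review is allowed. The following is a minimal Ruby sketch of that rule, not the Expertiza implementation. The names `Team` and `review_allowed?` are hypothetical and exist only for illustration.

```ruby
# Hypothetical stand-in for a team; this is not Expertiza's Team model.
Team = Struct.new(:name, :members)

# A reviewer may review a team's submission unless the reviewer belongs
# to that team and the assignment does not allow self-review.
def review_allowed?(reviewer, team, allow_self_review:)
  return true unless team.members.include?(reviewer)

  allow_self_review
end

own_team   = Team.new('Team A', %w[alice bob])
other_team = Team.new('Team B', %w[carol])

# Own team, self-review off / on, then another team's work.
puts review_allowed?('alice', own_team, allow_self_review: false)
puts review_allowed?('alice', own_team, allow_self_review: true)
puts review_allowed?('alice', other_team, allow_self_review: false)
```

The planned RSpec cases would assert the same truth table, once from the instructor's assignment path and once from the student's review path.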

Issue #1869: Deal with the metrics of the heat grid

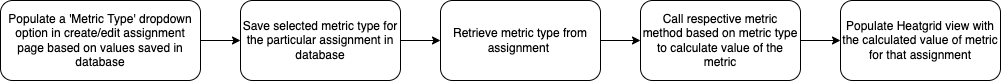

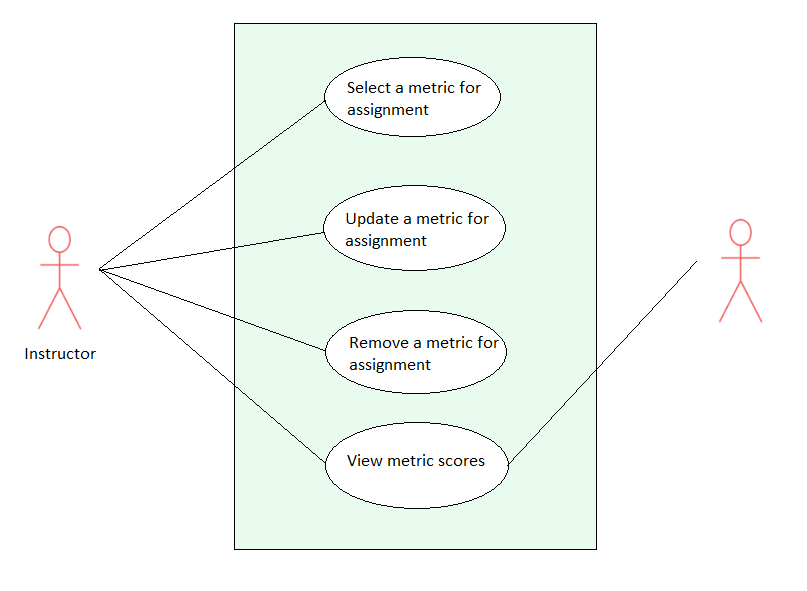

New metrics can be added to the Metrics table that we will create. While creating or editing an assignment, the instructor can select a metric from the Metrics table, which will be available as a drop-down menu. We will retrieve the metric the instructor selected for the assignment and display it in the heat grid of reviews.

- In create/edit assignment, the instructor will see a ‘Metric Type’ drop-down listing the metrics stored in the database

- Save the selected metric type and update the value in the database

- Retrieve the metric type from the assignment and calculate the value of the metric

- Display the values of the metric in the Heatgrid view

Proposed Control Flow

UML Diagram

Design Principle

For Issue #1869 we plan to use the Factory Design Pattern, since we are dealing with the creation of multiple kinds of metrics. As part of our implementation, we plan to use a metric interface that different concrete metric classes implement. A factory class acts as a middleman between the implementations and the main class that instantiates the objects. Based on the instructor's selection, the main class requests the metric object from the factory class rather than handling the creation logic on its own. This pattern therefore enforces encapsulation and results in low coupling, the principle that is our primary target for this project.

The previous implementation dealt with issue #1869, but its drawback is that it produces highly coupled code. Whenever a new metric is added, two files (assignments/edit/_general.html.erb and grades/view_team.html.erb) have to be changed. As a consequence, the tests for that code must be extended each time a new metric is added. This is undesirable, so low coupling is the main focus of our implementation. It will enable a common set of tests that stays valid for any number of metrics added in the future.
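A minimal Ruby sketch of the factory idea follows. It is illustrative only: the class names (`Metric`, `VerboseCommentCount`, `AverageCommentLength`, `MetricFactory`), the type keys, and the 10-word threshold are assumptions made for this example and are not taken from the Expertiza code base.

```ruby
# Common interface every metric is assumed to implement (hypothetical).
class Metric
  def label
    raise NotImplementedError
  end

  def value(_comments)
    raise NotImplementedError
  end
end

# Counts comments longer than 10 words (threshold is an assumption).
class VerboseCommentCount < Metric
  def label
    'Verbose comment count'
  end

  def value(comments)
    comments.count { |c| c.split.size > 10 }
  end
end

# A second, purely illustrative metric.
class AverageCommentLength < Metric
  def label
    'Average comment length (words)'
  end

  def value(comments)
    return 0.0 if comments.empty?

    comments.sum { |c| c.split.size }.to_f / comments.size
  end
end

# The factory hides which class backs each metric type.
class MetricFactory
  REGISTRY = {
    'verbose_comment_count' => VerboseCommentCount,
    'average_comment_length' => AverageCommentLength
  }.freeze

  def self.build(type)
    klass = REGISTRY.fetch(type) { raise ArgumentError, "Unknown metric type: #{type}" }
    klass.new
  end
end

metric = MetricFactory.build('verbose_comment_count')
puts "#{metric.label}: #{metric.value(['Good work.', 'Nice.'])}"
```

In this sketch the caller only knows the type key chosen by the instructor, so adding a metric means adding one class and one registry entry, without touching the caller.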

Files to be targeted

Issue #2019

Test file where test cases for the self-review checks will be added

- spec/controllers/grades_controller_spec.rb

Issue #1869

For Heat grid view

- app/views/grades/_view_heatgrid.html.erb

- app/views/grades/view_team.html.erb

- app/helpers/grades_helper.rb

- app/controllers/grades_controller.rb

- app/models/vm_question_response.rb

- app/models/vm_question_row.rb

- assets/view_team_in_grades.js

For Assignment view

- app/views/assignments/edit/_general.html.erb

- app/controllers/assignments_controller.rb

Implementation of Issue 1869

Previous team’s implementation

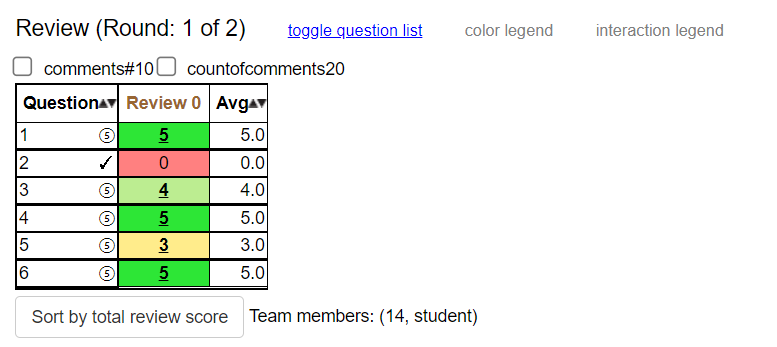

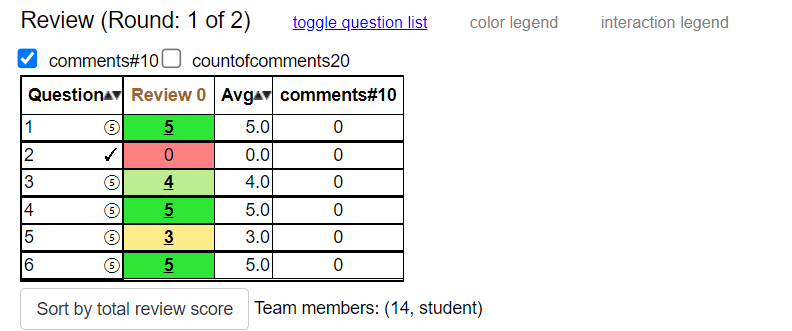

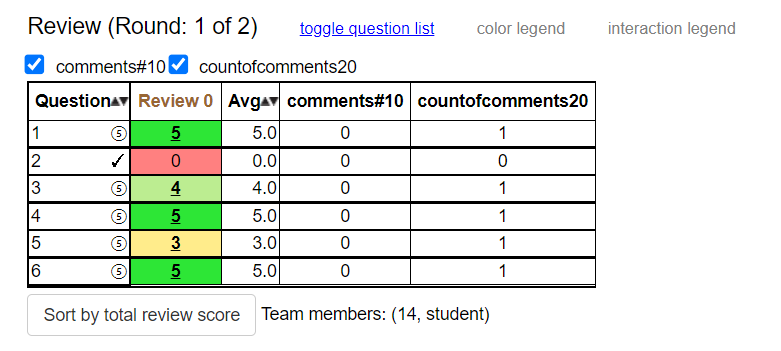

The previous team dealt with the first half of the issue by renaming “metric-1” to “verbose comment count”, which reflects what metric-1 measures. For the latter half of the issue, they provided a metric drop-down for the instructor to select from while creating the assignment. Consequently only one metric is displayed on the heat grid, and they added a toggle option to show and hide it.

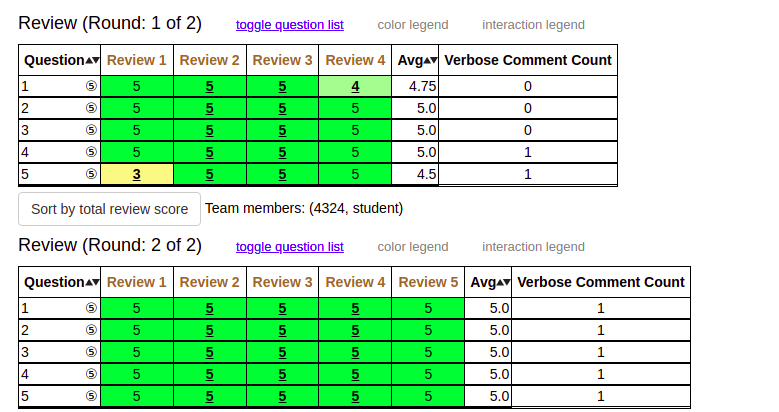

Changes reflected in the UI

Issue with previous team's implementation

The critical problem with their changes is that adding a new metric in the future forces changes in two view files: one change in assignments/edit/_general.html.erb and two in grades/view_team.html.erb. Requiring these changes in view files that have little relevance to the heat grid causes high coupling.

Current implementation

Issue Goal

Our mentor asked us to display metrics in the heat grid with a toggle option so that users can show only the metrics they need. This should be done with low coupling as the primary design goal. These changes should be accompanied by minor ones, such as adding comments and renaming “metric-1” to a self-descriptive name.

Implementation details

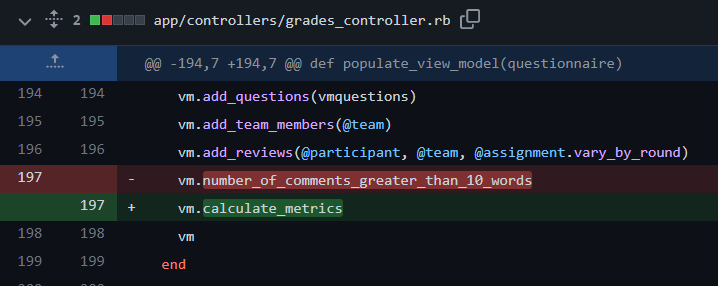

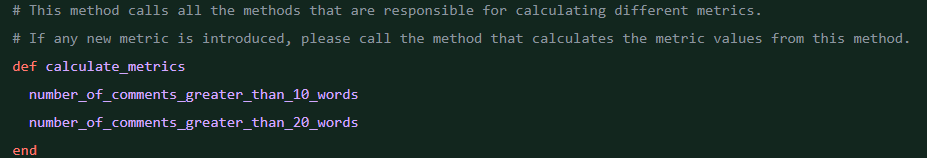

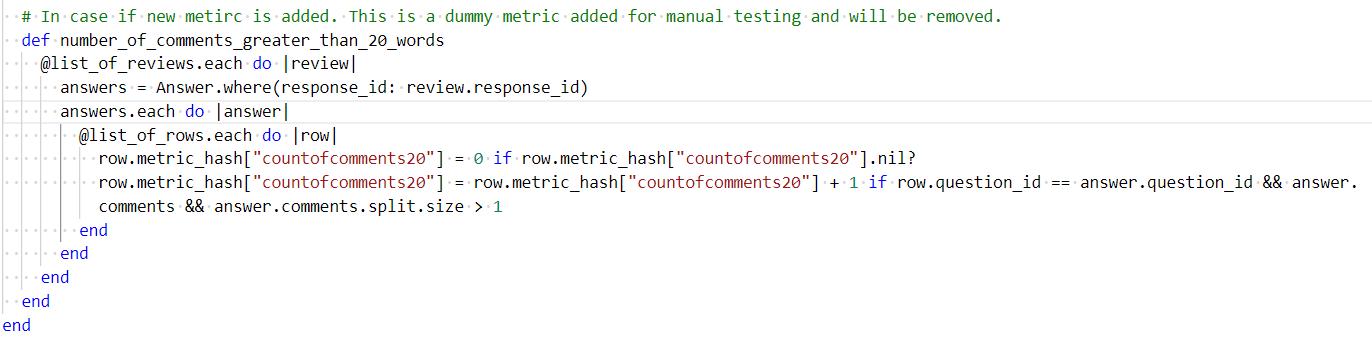

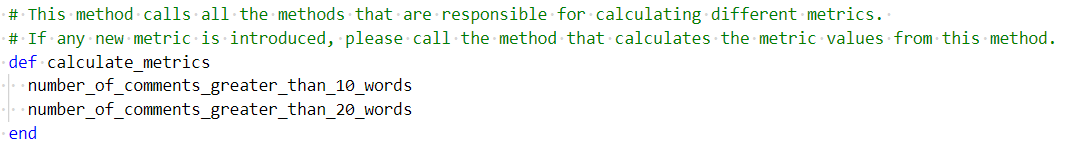

In the original beta implementation, whenever the relevant view file is loaded, it calls the method "populate_view_model" in the "grades_controller.rb" file. This method explicitly calls a model method to calculate the metric. Whenever a new metric is added, a call to that metric has to be added to "populate_view_model". This is not ideal, because each new metric introduces a new line of code in the controller body, and the set of available metrics cannot be determined dynamically. To deal with this problem, we introduced a new parent method, "calculate_metrics", in the model file "vm_question_response.rb". This method is responsible for calling the individual methods that calculate metrics. A single call to it replaces the explicit metric calculation calls in the controller, which breaks the dependency between introducing a new metric and the controller. Now, whenever a new metric is added, no code changes are needed in the controller; only the relevant model file has to change.
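The shape of this refactoring can be sketched in plain Ruby as below. This is a simplified stand-in, not the project's code: the class name `VmQuestionResponseSketch`, the constructor argument, the simplified `populate_view_model` signature, and the 10-word threshold are all assumptions for illustration. Only the method names "calculate_metrics" and "populate_view_model" come from the description above.

```ruby
# Simplified stand-in for the view model in vm_question_response.rb.
class VmQuestionResponseSketch
  attr_reader :metrics

  # comments_by_reviewer: { reviewer name => [comment, ...] } (assumed shape)
  def initialize(comments_by_reviewer)
    @comments_by_reviewer = comments_by_reviewer
    @metrics = {}
  end

  # Single entry point called by the controller. Each metric is
  # registered with one line here, so the controller never changes.
  def calculate_metrics
    @metrics['Verbose comment count'] = verbose_comment_count
    # A future metric would be added here, for example:
    # @metrics['Some new metric'] = some_new_metric
    @metrics
  end

  private

  # Per reviewer, counts comments longer than 10 words (threshold assumed).
  def verbose_comment_count
    @comments_by_reviewer.transform_values do |comments|
      comments.count { |c| c.split.size > 10 }
    end
  end
end

# Controller side: one call, independent of how many metrics exist.
def populate_view_model(view_model)
  view_model.calculate_metrics
  view_model
end

vm = populate_view_model(VmQuestionResponseSketch.new('Reviewer 1' => ['Looks fine.']))
puts vm.metrics.inspect
```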

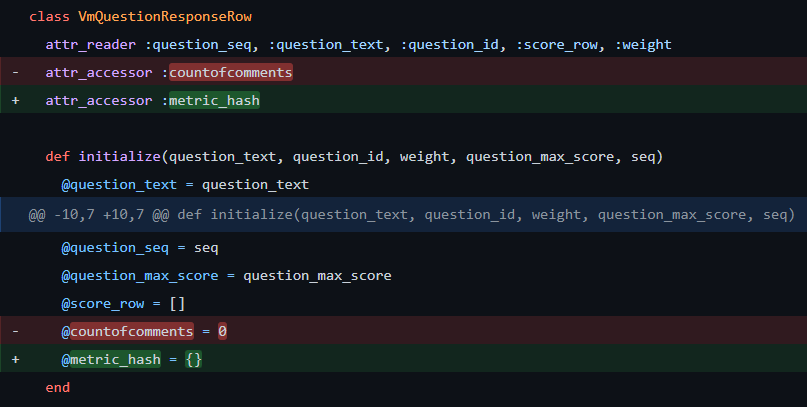

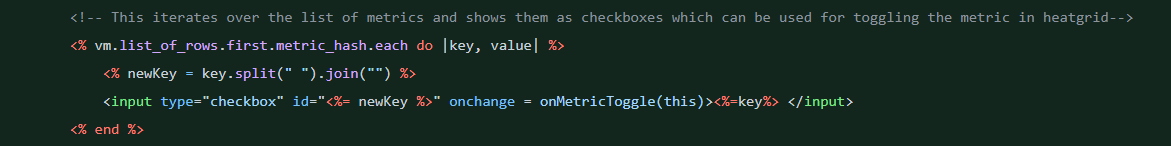

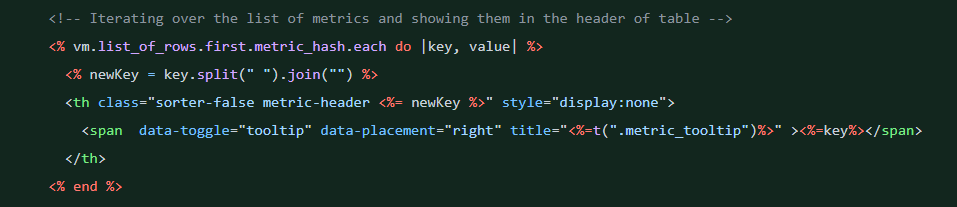

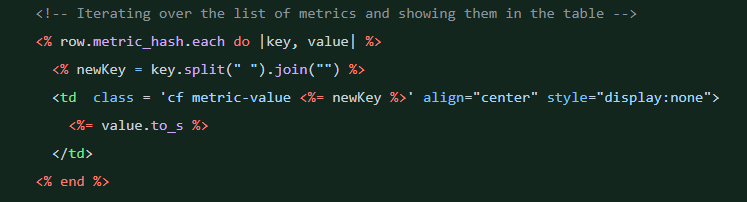

A new hash is introduced to store the metric values as key-value pairs. Instead of explicitly calling metrics by name in the view file, the view iterates over this hash and displays each metric.
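As a rough illustration of why iterating over the hash removes metric names from the view, the Ruby sketch below builds header and row cells from whatever the hash contains. The hash layout (metric name mapped to per-reviewer values) and the helper names `metric_headers` and `metric_cells` are assumptions for this example, not the project's actual view code.

```ruby
# Hypothetical metrics hash: metric name => { reviewer => value }.
metrics = {
  'Verbose comment count' => { 'Reviewer 1' => 3, 'Reviewer 2' => 0 }
}
reviewers = ['Reviewer 1', 'Reviewer 2']

# One header cell per metric, whatever the hash contains.
def metric_headers(metrics)
  metrics.keys
end

# Metric cells for one reviewer, in the same order as the headers.
# A missing value is rendered as '-'.
def metric_cells(metrics, reviewer)
  metrics.values.map { |per_reviewer| per_reviewer.fetch(reviewer, '-') }
end

header = ['Reviewer'] + metric_headers(metrics)
puts header.join(' | ')

reviewers.each do |reviewer|
  row = [reviewer] + metric_cells(metrics, reviewer)
  puts row.join(' | ')
end
```

Because Ruby hashes preserve insertion order, headers and cells stay aligned, and a metric added to the hash appears as a new column without any change to this rendering code.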

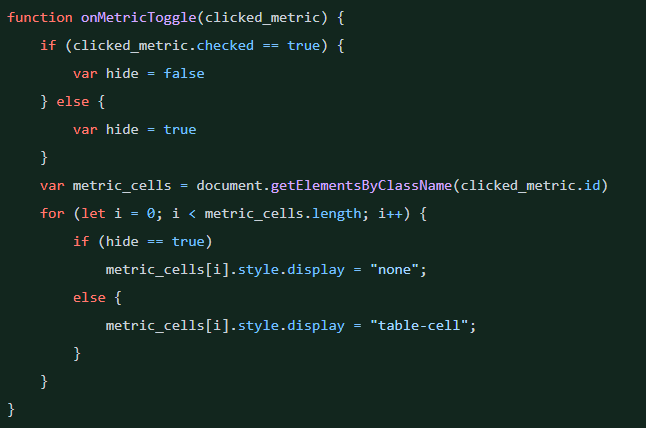

The toggle feature is implemented in the "view_team_in_grades.js" file. When a checkbox is clicked, a function in this JavaScript file is called and the appropriate elements are shown or hidden.

Adding a new metric in future

To introduce a new metric, add a new method to the "vm_question_response.rb" file and call it from the "calculate_metrics" method.

Changes reflected in the UI

Manual Testing

Scenario: Visibility of all the unchecked metric checkboxes in View Scores view
  Given user has logged in
  When user visits View Scores page
  Then all the unchecked metric checkboxes are present above the heat grid

Scenario: Default invisibility of metric columns in View Scores view
  Given user has logged in
  When user visits View Scores page
  Then metric columns are not visible in the heat grid

Scenario: Checking a metric checkbox displays the corresponding metric column
  Given user has logged in
  When user visits View Scores page
  And user clicks on unchecked comments#10 metric
  Then column with column name "comments#10" gets displayed with corresponding values

Scenario: Unchecking a metric checkbox hides the corresponding metric column
  Given user has logged in
  When user visits View Scores page
  And user clicks on checked comments#10 metric
  Then column with column name "comments#10" and corresponding values get hidden

Unit Testing

Our project does not add new metrics; it only facilitates adding them with low coupling. We therefore do not need to add unit test cases for metric implementations. The system currently has only one metric, for which test cases are already written.

Implementation of Issue 2019

Important Links

- GitHub repository link: https://github.com/EshwarCVS/expertiza

- Project Description Document: https://docs.google.com/document/d/1H0BjzMBz5it7Wckhegq4LXhMi8RVctTwVzP2x9gnFCo/edit#heading=h.tqdrrd12xs4x

- GitHub Issue #1869: https://github.com/expertiza/expertiza/issues/1869

- GitHub Issue #2019: https://github.com/expertiza/expertiza/issues/2019