CSC/ECE 517 Fall 2018/E1876 Completion/Progress view

Revision as of 19:40, 13 November 2018

Problem Statement

In Expertiza, peer reviews are used as a metric to evaluate a project. Once a project has been peer reviewed, its authors can respond to the review with ‘Author feedback’. When grading peer reviews, instructors should be able to take into account the author feedback given on a particular review, because that feedback indicates how helpful the review actually was to the authors of the project.

Goal

The aim of this project is to build this into the system. We need an additional column on the 'Review Report' page that shows a score calculated from the author feedback on each review. This will help instructors see how useful the reviews were to the authors/team. In short, the author feedback column is to be integrated into the summary page.

Design

Database

The following table structures are needed for the mapping. The Questions table holds all the questions of every questionnaire; we are only concerned with the questions in the author feedback questionnaire. The answer to each of those questions is saved in the Answers table, keyed by question_id. To tell whether an answer is feedback from a team member or a review by a reviewer, each answer is linked through response_id, a foreign key to the Responses table. The Responses table in turn gives us map_id, which points into the Response Maps table.

The Response Map table records the reviewer_id, the reviewee_id, the reviewed_object_id (for a regular review, the id of the assignment being reviewed) and the type, which says whether the map is a teammate review, author feedback or a regular review. Because the responses we need come from reviewees rather than reviewers, we fetch answers from the Answers table by response_id and keep only those whose response map type is FeedbackResponseMap; the scores for those questions are then calculated from the Review_Scores table.
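The join chain described above (answers to responses to response maps, filtered on type) can be expressed as a single SQL query. The sketch below is a minimal, hypothetical illustration using Python's built-in sqlite3 module and an in-memory database. The table names response_maps, responses and answers, the reduced column sets, the helper names and the sample rows are assumptions for illustration, not Expertiza's actual schema or code.

```python
import sqlite3

# ASSUMPTION: simplified copies of the tables described in this section,
# keeping only the columns needed for the join.
SCHEMA = """
CREATE TABLE response_maps (
    id INTEGER PRIMARY KEY,
    reviewed_object_id INTEGER,
    reviewer_id INTEGER,
    reviewee_id INTEGER,
    type VARCHAR(255)
);
CREATE TABLE responses (
    id INTEGER PRIMARY KEY,
    map_id INTEGER REFERENCES response_maps(id)
);
CREATE TABLE answers (
    id INTEGER PRIMARY KEY,
    question_id INTEGER,
    answer INTEGER,
    comments TEXT,
    response_id INTEGER REFERENCES responses(id)
);
"""

# answers -> responses (via response_id) -> response_maps (via map_id),
# keeping only answers that belong to author feedback.
FEEDBACK_ANSWERS_SQL = """
SELECT a.id, a.question_id, a.answer, a.response_id, m.reviewer_id
FROM answers AS a
JOIN responses AS r ON r.id = a.response_id
JOIN response_maps AS m ON m.id = r.map_id
WHERE m.type = 'FeedbackResponseMap'
ORDER BY a.id
"""


def feedback_answers(conn):
    """Return (answer id, question_id, answer, response_id, reviewer_id) rows
    for author-feedback answers only."""
    return conn.execute(FEEDBACK_ANSWERS_SQL).fetchall()


def build_demo_db():
    """Hypothetical sample data: one review answer and one feedback answer."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO response_maps VALUES (?, ?, ?, ?, ?)", [
        (1, 10, 100, 200, "ReviewResponseMap"),
        (2, 5, 201, 100, "FeedbackResponseMap"),
    ])
    conn.executemany("INSERT INTO responses VALUES (?, ?)", [(5, 1), (6, 2)])
    conn.executemany("INSERT INTO answers VALUES (?, ?, ?, ?, ?)", [
        (1, 11, 4, "good review", 5),  # belongs to a review: filtered out
        (2, 21, 3, "helpful", 6),      # belongs to author feedback: kept
    ])
    return conn


if __name__ == "__main__":
    print(feedback_answers(build_demo_db()))
```

Only the feedback answer (id 2) survives the filter; the review answer is dropped because its map type is ReviewResponseMap.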

Questions Table Structure

| Field Name | Type | Description |
|---|---|---|
| id | int(11) | unique identifier for the record |
| txt | text | the question string |
| weight | int(11) | specifies the weighting of the question |
| questionnaire_id | int(11) | the id of the questionnaire that this question belongs to |
| seq | DECIMAL | |
| type | VARCHAR(255) | Type of question |
| size | VARCHAR(255) | Size of the question |
| alternatives | VARCHAR(255) | Other question which means the same |
| break_before | BIT | |
| max_label | VARCHAR(255) | |
| min_label | VARCHAR(255) | |

Answer Table Structure

| Field Name | Type | Description |
|---|---|---|
| id | int(11) | Unique ID for each Answers record. |
| question_id | int(11) | ID of the question. |
| answer | int(11) | Value of the answer. |
| comments | text | Comment given with the answer. |
| response_id | int(11) | ID of the response associated with this answer. |

Response Table Structure

| Field Name | Type | Description |
|---|---|---|
| id | int(11) | The unique record id |
| map_id | int(11) | The ID of the response map defining the relationship that this response applies to |
| additional_comment | text | An additional comment provided by the reviewer to support his/her response |
| updated_at | datetime | The timestamp indicating when this response was last modified |
| created_at | datetime | The timestamp indicating when this response was created |
| version_num | int(11) | The version of the review. |
| round | int(11) | The round the review is connected to. |
| is_submitted | tinyint(1) | Boolean field indicating whether the review is submitted. |

Response Map Table

| Field Name | Type | Description |
|---|---|---|
| id | int(11) | The unique record id |
| reviewed_object_id | int(11) | The object being reviewed in the response. Possible objects include other ResponseMaps or assignments |
| reviewer_id | int(11) | The participant (actually AssignmentParticipant) providing the response |
| reviewee_id | int(11) | The team (AssignmentTeam) receiving the response |
| type | varchar(255) | Used for subclassing the response map. Available subclasses are ReviewResponseMap, MetareviewResponseMap, FeedbackResponseMap, TeammateReviewResponseMap |
| created_at | DATETIME | Date and time when the record was created |
| updated_at | DATETIME | Date and time when the last update was made |
| calibrate_to | BIT | |
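For the Review Report, each piece of author feedback has to be credited to the reviewer whose review it rates. The hypothetical sketch below follows that link in memory. It assumes, which the tables above do not state, that a FeedbackResponseMap's reviewed_object_id holds the id of the review Response being rated; the dataclasses and the original_reviewer helper are illustrative names only.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ResponseMap:
    id: int
    reviewed_object_id: int
    reviewer_id: int
    reviewee_id: int
    type: str  # e.g. "ReviewResponseMap" or "FeedbackResponseMap"


@dataclass(frozen=True)
class Response:
    id: int
    map_id: int


def original_reviewer(feedback_map: ResponseMap,
                      responses_by_id: Dict[int, Response],
                      maps_by_id: Dict[int, ResponseMap]) -> int:
    """Return the reviewer_id of the review that a feedback map rates.

    ASSUMPTION: feedback_map.reviewed_object_id is the id of the review
    Response that the author gave feedback on.
    """
    if feedback_map.type != "FeedbackResponseMap":
        raise ValueError("expected a FeedbackResponseMap")
    review = responses_by_id[feedback_map.reviewed_object_id]  # the rated review
    review_map = maps_by_id[review.map_id]                     # the map that review belongs to
    return review_map.reviewer_id


if __name__ == "__main__":
    maps = {
        1: ResponseMap(1, 10, 100, 200, "ReviewResponseMap"),   # reviewer 100 reviews team 200
        2: ResponseMap(2, 5, 201, 100, "FeedbackResponseMap"),  # author 201 rates response 5
    }
    responses = {5: Response(5, 1), 6: Response(6, 2)}
    print(original_reviewer(maps[2], responses, maps))  # 100
```

If the assumption about reviewed_object_id turns out not to hold, only the first lookup in original_reviewer needs to change.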

UI Implementation

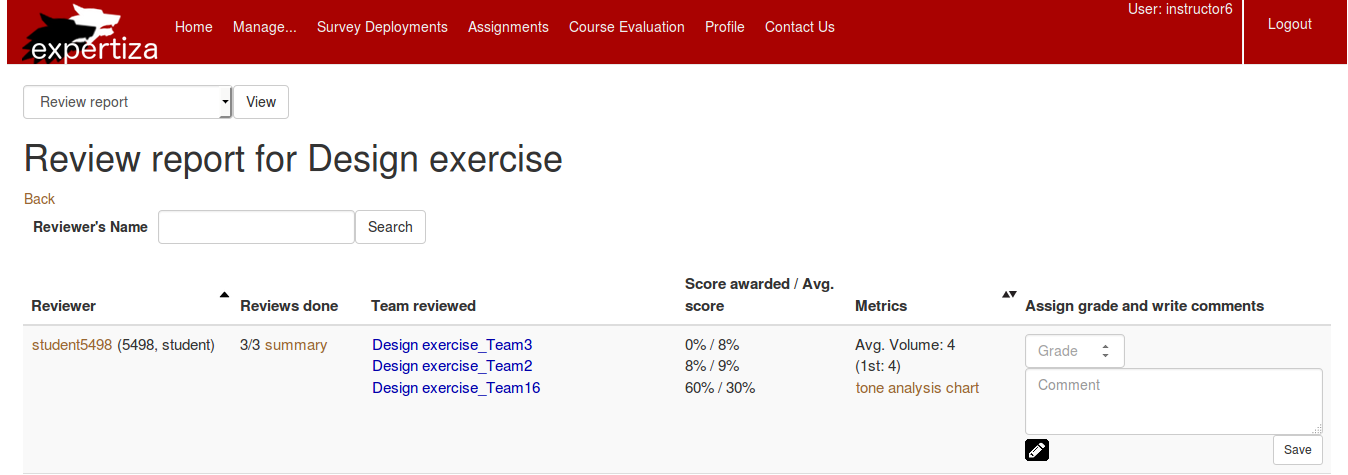

On the Review report page for the Design exercise (log in as an instructor -> Manage -> Assignments -> View review report), we plan to add one more column showing the average author feedback rating on a particular assignment. The logic for calculating the average feedback score will be similar to the existing logic for the score awarded / average score column. The screenshot above shows the page we plan to edit.
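A rough sketch of how the new column's value could be computed, assuming a scoring rule similar in spirit to the existing score column: each feedback response is scored as its weighted total divided by its weighted maximum, as a percentage, and a reviewer's value is the mean over all feedback their reviews received. The formula, the max_score parameter and the function names are assumptions for illustration, not the existing Expertiza logic.

```python
from collections import defaultdict
from statistics import mean


def response_percentage(answers, weights, max_score):
    """Weighted score of one feedback response as a percentage.

    answers:   {question_id: answer value}
    weights:   {question_id: weight from the questions table}
    max_score: ASSUMED maximum rating of the feedback questionnaire.
    Returns None when there is nothing to score.
    """
    earned = sum(value * weights[qid] for qid, value in answers.items())
    possible = sum(max_score * weights[qid] for qid in answers)
    if possible == 0:
        return None
    return 100.0 * earned / possible


def average_feedback_by_reviewer(feedback, weights, max_score):
    """Mean feedback percentage per reviewer, rounded to two decimals.

    feedback: iterable of (reviewer_id, {question_id: answer}) pairs,
              one pair per feedback response.
    """
    scores = defaultdict(list)
    for reviewer_id, answers in feedback:
        pct = response_percentage(answers, weights, max_score)
        if pct is not None:
            scores[reviewer_id].append(pct)
    return {rid: round(mean(vals), 2) for rid, vals in scores.items()}


if __name__ == "__main__":
    weights = {21: 1, 22: 2}
    feedback = [
        (100, {21: 5, 22: 5}),  # 15 / 15 -> 100.0
        (100, {21: 3, 22: 4}),  # 11 / 15 -> 73.33
        (101, {21: 2, 22: 1}),  # 4 / 15  -> 26.67
    ]
    print(average_feedback_by_reviewer(feedback, weights, max_score=5))
```

With these hypothetical inputs, reviewer 100 would show 86.67 (the mean of 100 and 73.33) and reviewer 101 would show 26.67.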

Test Plan

References

1) http://wiki.expertiza.ncsu.edu/index.php/Documentation_on_Database_Tables