CSC517 Fall 2017 OSS M1705: Difference between revisions


Revision as of 20:22, 28 April 2017

M1705 - Automatically report new contributors to all git repositories

Introduction

Servo is a browser layout engine developed by Mozilla. It aims to take advantage of parallelism while eliminating many security vulnerabilities.

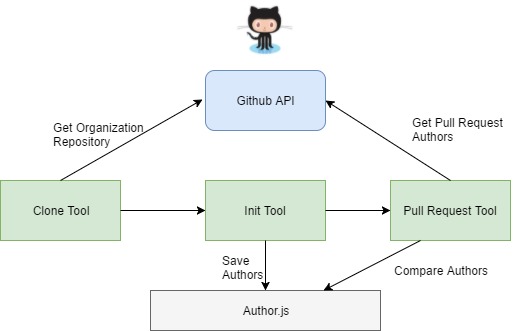

The weekly This Week in Servo blog post lists the new contributors to the servo/servo repository. We want to be able to track this information across all repositories in the servo organization. Thus, the purpose of this work is to build a system that uses the GitHub API to determine this information on a regular basis.

Initial Phase

Tasks

The first part of the project was to complete the following steps:

- Create a GitHub organization with several repositories that can be used for manual tests

- Create a tool that initializes a JSON file with the known authors for a local git repository

- Create a tool that clones every git repository in a given GitHub organization (use the GitHub API to retrieve this information)

More details about it can be found directly on the Project Description page.

Our Process

The initial target of our project required us to get familiar with the GitHub API and JSON format. We did not have an existing code base to start from. We had to make decisions on what language and tools would be best to accomplish the initial tasks. The rationale behind our choices can be found in the Design subsection below.

In this phase, we built two tools: the Clone Tool and the Initialization Tool. For more details, please see the Tools section below.

In the initial phase, we did not explore any testing frameworks. However, we put a test plan in place and created very basic test cases for both tools. To see the test plan and how to run these test cases, please check the README.md file in our Tool Repository. Unit testing will be implemented as part of the next phase, where we will follow Test Driven Development.

Second Phase

Now that we are familiar with the GitHub API, we are ready to move on to the subsequent steps listed on the wiki page.<ref>https://github.com/servo/servo/wiki/Report-new-contributors-project</ref> We have three main tasks, which we will work on in parallel since they are independent.

Task 1

First of all, we will extend the Initialization Tool to support stopping at a particular date.

- We will use the --until flag of the git log command to achieve this. <ref>https://git-scm.com/docs/git-log</ref>
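As a minimal sketch of how the date cutoff might be passed to git log, the helpers below build the argument list and run the command. `--until` and `--format=%an` are standard git log options; the function names `buildGitLogArgs` and `runGitLog`, and the choice to print only author names, are assumptions made for illustration and are not taken from the InitTool source.

```javascript
const { execFileSync } = require("child_process");

// Hypothetical helper: builds the argument list for `git log`.
// When `until` is given, only commits older than that date are listed.
function buildGitLogArgs(until) {
  const args = ["log", "--format=%an"]; // %an prints the author name
  if (until) {
    args.push(`--until=${until}`);
  }
  return args;
}

// Hypothetical helper: runs git inside `repoPath` and returns the raw output.
function runGitLog(repoPath, until) {
  return execFileSync("git", buildGitLogArgs(until), {
    cwd: repoPath,
    encoding: "utf8",
  });
}
```

Calling `runGitLog(somePath, "2017-04-01")` would then list only the authors of commits made before that date in the repository at `somePath`.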

Task 2

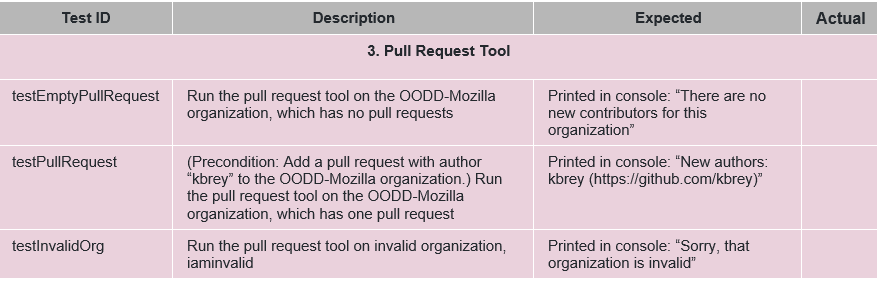

(Tool #3 - Pull Request Tool) Create a tool that processes all of the closed pull requests for a GitHub organization during a particular time period.

- For each pull request, we will get its list of commits.

- For each commit, we will retrieve its author and committer.

- Each author/committer is checked against the list of known authors/committers. If they are new, they are added to a list of new contributors. This list is returned along with links to the author profiles.

- Update the JSON file containing the known authors with the new authors.
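The steps above can be sketched as a single pass over the pull requests. This is only an illustration under assumed data shapes: the function name `findNewContributors`, the `commits`, `author`, and `committer` fields, and the use of plain login strings are all hypothetical, and no GitHub API call is made here.

```javascript
// Hypothetical data shape: each pull request carries its commits, and each
// commit names an author and a committer (both plain login strings here).
function findNewContributors(pullRequests, knownAuthors) {
  const known = new Set(knownAuthors);
  const fresh = [];
  for (const pr of pullRequests) {
    for (const commit of pr.commits) {
      for (const login of [commit.author, commit.committer]) {
        if (login && !known.has(login)) {
          known.add(login); // avoid reporting the same person twice
          fresh.push({ login, profile: `https://github.com/${login}` });
        }
      }
    }
  }
  // `updatedAuthors` is what would be written back to the known-authors JSON file.
  return { newContributors: fresh, updatedAuthors: Array.from(known) };
}

const result = findNewContributors(
  [
    {
      commits: [
        { author: "alice", committer: "bob" },
        { author: "carol", committer: "alice" },
      ],
    },
  ],
  ["alice"]
);
console.log(result.newContributors.map((c) => c.login));
```

Adding each new login to the known set as soon as it is seen keeps a contributor who appears in several commits from being reported more than once.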

Using the GitHub web API to get the commits has limitations: by default, a single request returns only 30 commits. For organizations like servo, the number of commits can be much higher. Hence, we first need to clone all the repositories in the organization (using Tool #1) and then fetch the list of commits (using a modified version of Tool #2) in order to build Tool #3. The images below Task 3 show how these tools come together, along with a sketch plan for the Pull Request Tool.

Task 3

Test the tools we have created.

- Create unit tests mocking the GitHub API usage for validating the tools. Check the Test Plan section on this page for more information.

- Black box test cases can also be found in the Test section.

The Tools

Dependencies

The package.json file contains a list of all of the necessary libraries. To install the dependencies, run npm install. This will create a node_modules folder with the necessary libraries.

Libraries Used

For Tools:

- fs-extra

- nodegit

- path

- request

- slash

- underscore

For Testing:

- mocha

- chai

- nock

Tool # 1: Clone Tool

- Location: tools/CloneTool.js

- Clones all repositories in the given organization into the specified folder

- Parameters

* folderPath - the path to the folder that will hold the repos folder, where the repositories will be cloned

* token - the GitHub token, required to use the GitHub API

* organization - the organization whose repositories will be cloned

- Logic

* First, the tool removes the folder at folderPath, if it exists

* Next, it uses the GitHub API to find all repositories for the given organization

* Then, it clones all of the repositories into the <folderPath>/repos folder

- Returns a promise that is resolved if all repositories are cloned successfully, and is rejected otherwise
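The cloning step and its promise behavior might look roughly like the sketch below. It assumes the repository list has already been fetched and has objects with `name` and `clone_url` fields, as in the GitHub "list organization repositories" response. The names `cloneAll` and `cloneFn` are hypothetical; `cloneFn` stands in for the real clone call (for example, nodegit's `Clone`, which returns a promise). Removing the old folder is left out.

```javascript
const path = require("path");

// Hypothetical sketch: `repos` mimics entries from the GitHub API response.
// `cloneFn(url, dest)` stands in for the real clone call and must return a promise.
function cloneAll(repos, folderPath, cloneFn) {
  const reposDir = path.join(folderPath, "repos");
  const clones = repos.map((repo) =>
    cloneFn(repo.clone_url, path.join(reposDir, repo.name))
  );
  // Resolves only if every clone succeeds; rejects as soon as one fails.
  return Promise.all(clones);
}
```

Passing the clone function in as a parameter is a choice made for this sketch so that the logic can be exercised without network access.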

Tool # 2: Initialization Tool

- Location: tools/InitTool.js

- Creates / Updates a JSON file with the authors for the repositories in the given path

- Parameters

* folderPath - the path to the folder that holds repos, the folder with the local repositories, cloned by the CloneTool.

- Logic

* First, the tool uses the git log command to find all author names from all the commits in a repository

* Then, it saves the authors array to authors.json in the provided folderPath

- Returns a promise that is resolved if the authors are saved to authors.json, and is rejected otherwise
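As an illustration of the logic above, the sketch below turns raw git log output into a de-duplicated author array and serializes it. The helper names `uniqueAuthors` and `toAuthorsJson`, the one-name-per-line input, and the sorted output are assumptions for this example, not a description of the actual InitTool code.

```javascript
// Hypothetical helper: turns raw `git log --format=%an` output
// (one author name per line) into a sorted list of unique names.
function uniqueAuthors(logOutput) {
  const names = logOutput
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.length > 0);
  return Array.from(new Set(names)).sort();
}

// Serialize the list the way it might be stored in authors.json.
function toAuthorsJson(authors) {
  return JSON.stringify(authors, null, 2);
}

const sample = "Alice\nBob\nAlice\n";
console.log(toAuthorsJson(uniqueAuthors(sample)));
```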

Tool # 3: Pull Request Tool

- Location: tools/InitTool.js

- Gives a list of new authors not listed in authors.json

- Parameters

* TODO

- Logic

* TODO

- Returns a promise that is resolved with all new authors if the tool runs successfully, and is rejected otherwise

Running the Tools

We created a driver, Main.js, to make it easier to run the tools. It runs the tools on the OODD-Mozilla organization, the test organization we created in the initial phase, and outputs the repositories and the authors.json file into /toolfolder.

For security reasons, the GitHub token is not hard coded. Instead, you must set the environment variable GITHUB_KEY to your token. In Git Bash, this can be done with the following command:

export GITHUB_KEY=<your token here>

To run the tools, execute the following commands:

git clone https://github.com/OODD-Mozilla/ToolRepository.git

cd ToolRepository

npm install

node Main.js

This will print all new authors for the OODD-Mozilla organization.

Design

Design Patterns

One opportunity we came across is that the AuthorTool and PullRequestTool both require access to the author list file; if left separate, the tools would contain duplicate code. To solve this, we plan on using the dependency injection design pattern (see this blog post). We will create an AuthorListUtils module that will be passed to both tools when they are created. This will provide a common interface to the author file and lead to DRYer code.
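A minimal sketch of this dependency injection idea is shown below. Only the names AuthorListUtils, AuthorTool, and PullRequestTool come from the plan above; the factory functions, the `read`/`write`/`save`/`findNew` methods, and the in-memory array standing in for the author list file are hypothetical.

```javascript
// Hypothetical shared module: the only place that knows how authors are stored.
// An in-memory array stands in for the author list file in this sketch.
function createAuthorListUtils(initialAuthors) {
  let authors = initialAuthors.slice();
  return {
    read: () => authors.slice(),
    write: (next) => {
      authors = next.slice();
    },
  };
}

// Both tools receive the shared module instead of touching the file themselves.
function createAuthorTool(authorListUtils) {
  return {
    save: (names) => authorListUtils.write(names),
  };
}

function createPullRequestTool(authorListUtils) {
  return {
    findNew: (names) => {
      const known = new Set(authorListUtils.read());
      return names.filter((name) => !known.has(name));
    },
  };
}

const authorListUtils = createAuthorListUtils([]);
const authorTool = createAuthorTool(authorListUtils);
const pullRequestTool = createPullRequestTool(authorListUtils);
authorTool.save(["alice"]);
console.log(pullRequestTool.findNew(["alice", "bob"]));
```

Because each tool only sees the injected object, a test can hand it a fake author list without creating any file.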

Design Choices

Since we were not working on an existing code base, we had to make design and tool choices when we started working on the project. We picked Node.js as our platform since it is a lightweight runtime environment based on JavaScript. It also has a rich collection of third-party and open source packages that can easily be added as dependencies and managed with the npm package manager. Since we were working with the GitHub API, we used two libraries, nodegit and request, to help us wrap the calls.

We rely heavily on callbacks, which JavaScript supports, since many of the function calls are asynchronous. The callback() idea falls under API Patterns. Promises are another JavaScript feature that helps us manage the sequence of these asynchronous calls.
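To illustrate the difference, the sketch below wraps a callback-style function in a promise and then chains two calls so that they run in order. `fakeLookup` is a hypothetical stand-in for an asynchronous API call, and nothing here is taken from the tools' source.

```javascript
// A callback-style asynchronous function (hypothetical stand-in for an API call).
function fakeLookup(name, callback) {
  setTimeout(() => {
    if (!name) {
      callback(new Error("name is required"));
    } else {
      callback(null, name.toUpperCase());
    }
  }, 0);
}

// The same operation wrapped in a promise so calls can be chained in order.
function fakeLookupAsync(name) {
  return new Promise((resolve, reject) => {
    fakeLookup(name, (err, value) => (err ? reject(err) : resolve(value)));
  });
}

// Run two asynchronous calls strictly one after the other.
const sequence = fakeLookupAsync("servo").then((first) =>
  fakeLookupAsync("rust").then((second) => [first, second])
);
sequence.then((values) => console.log(values));
```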

Security Concerns

In order to access and make requests to the GitHub API, a user must have proper authentication. Details about basic authentication, along with an example, are provided on the developer's manual page. Any user can generate a personal OAuth token for his or her account from the settings page. When placed inside a source file, this randomly generated token authorizes the user and allows the program to make API requests. Having this token exposed in a source file stored in a version control system is the same as hard coding a password, which introduces a security risk for the user. One way to avoid this is to generate the token and store it in a local environment variable. In this way, when the program references the environment variable, the token is accessed but its contents remain hidden when source file changes are pushed and pulled.

Please check the references section in our README.md, which links to instructions on (i) how to generate the token on GitHub and (ii) how to add it as an environment variable.
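A minimal sketch of reading the token from the environment is shown below. GITHUB_KEY is the variable name used by this project; the helper names `getGitHubToken` and `authHeaders` and the User-Agent value are hypothetical and chosen only for this example.

```javascript
// Hypothetical helper: reads the token from the environment instead of the source.
// `env` defaults to process.env and is a parameter only to ease testing.
function getGitHubToken(env = process.env) {
  const token = env.GITHUB_KEY;
  if (!token) {
    throw new Error("Set the GITHUB_KEY environment variable to your GitHub token");
  }
  return token;
}

// Build request headers without ever writing the token into a file.
function authHeaders(token) {
  return {
    Authorization: `token ${token}`,
    "User-Agent": "new-contributors-tool", // GitHub requires a User-Agent header
  };
}
```

Failing early with a clear message when the variable is missing avoids sending unauthenticated requests by accident.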

Testing

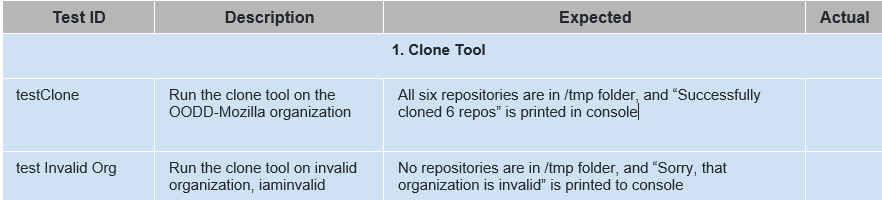

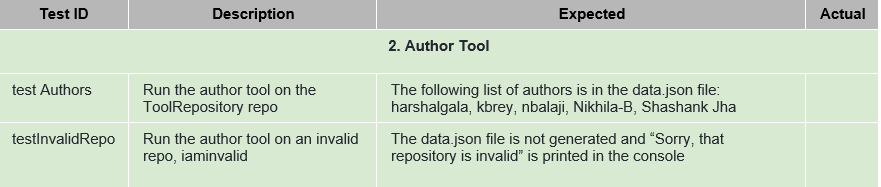

To test our tools, we will use both white-box and black-box testing. For white-box testing, we will use the Mocha framework, with Chai as the assertion library. Additionally, we will use the Nock library to mock our GitHub API requests. We intend to unit test all of our code, following the methodology provided by Sandi Metz in her “Magic Tricks of Testing” lecture. Our black box test plan is shown in Figure 1 below.

1. White Box Testing - Using Mocha, Chai, and Nock.

- The test suite can be found in our test/ directory. All tests are in test.js, and mock data is in mock.json.

- To run the test suite, type:

npm test

2. Black Box Testing - The tables below outline test cases for each of the tools

Test Plan

Conclusion

After learning the GitHub API and how JSON objects can be used to retrieve and record data about new contributors to a repository, we have observed that this reporting can be automated, following the steps described in this article. Once all the tools are written and tested, they can be easily integrated for the dev-servo organization if approved by the Mozilla dev-servo community.

References

<references/>