CSC/ECE 506 Spring 2015/5b SA: Difference between revisions

| Line 174: | Line 174: | ||

} | } | ||

</code> | </code> | ||

As seen from the above snippets, locking is done before pushing and popping and release locks after the operations are done. | |||

However, if we have a long queue, and there are significantly more threads reading data out of the queue than those appending data at some point during code execution, the read speed is slowed down as per the above implementation. The reason is we are sharing the same mutex for push and pop operations. The writer threads access the lock, hence reads are inhibited. | |||

Can we use two locks? One for the read operation and another for the write should do the trick. Following snippet, shows the | |||

modified version of more efficient concurrent queue using two locks instead of one: | |||

<code> | |||

template <typename T> | |||

class Queue { | |||

public: | |||

Queue( ) { | |||

pthread_mutex_init(&_rlock, NULL); | |||

pthread_mutex_init(&_wlock, NULL); | |||

} | |||

~Queue( ) { | |||

pthread_mutex_destroy(&_rlock); | |||

pthread_mutex_destroy(&_wlock); | |||

} | |||

void push(const T& data); | |||

T pop( ); | |||

private: | |||

list<T> _list; | |||

pthread_mutex_t _rlock, _wlock; | |||

} | |||

</code> | |||

Pop and push snippets shown below are modified using two distinct locks, one for read and the other for write: | |||

<code> | |||

void Queue<T>::push(const T& value ) { | |||

pthread_mutex_lock(&_wlock); | |||

_list.push_back(value); | |||

pthread_mutex_unlock(&_wlock); | |||

} | |||

T Queue<T>::pop( ) { | |||

if (_list.empty( )) { | |||

throw ”element not found”; | |||

} | |||

pthread_mutex_lock(&_rlock); | |||

T _temp = _list.front( ); | |||

_list.pop_front( ); | |||

pthread_mutex_unlock(&_rlock); | |||

return _temp; | |||

} | |||

</code> | |||

Now, as we discussed the mutex lock based approach, it possesses some problems: | |||

# Waiting on mutexes consumes considerable time. This delay though have negative effects on system scalability. | |||

# Lower-priority threads could acquire a mutex, thus halting higher-priority threads that require the same mutex to proceed. This problem is known as the famous priority inversion. | |||

# A thread holding a mutex can be de-scheduled, perhaps because it was the end of its time-slice. For other threads waiting on the same mutex, this has a negative effect, because the wait time is now even longer. This problem is known as lock convoying. | |||

Thus, issues with mutexes has compelled developers to come up with solutions that donot use mutexes. | |||

Although mutexes are tricky to use, they are definitely worth our attention better performance. | |||

==Algorithms== | ==Algorithms== | ||

Revision as of 01:08, 26 February 2015

Introduction

Parallelization of computations in the presence of dynamic data structures has shown immense potential. Here we discuss how can we parallelize linked data structures [7]. Efficiently exploiting parallelism is fundamental in parallel computing even in the context of dynamic data structures. Some amount of speculation is needed to figure out how to exploit it the best. The idea is to introduce concurrency[6] in the existing data structures to enable multi-threading and hence parallelism. We need to handle synchronization since we need concurrency. We discuss the major steps in parallelizing data structures and then discuss some techniques and algorithms existing in the literature to gain more insight into the process.

Overview

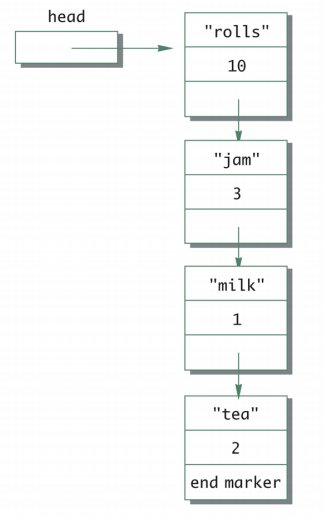

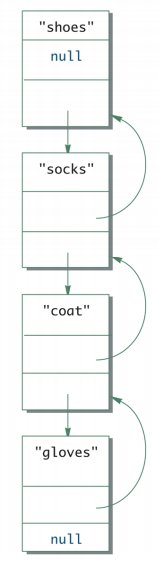

The simplest linked data structure we can think of is a linked list. A single linked list with one pointer pointing to the next node in the list or double linked list where nodes have two pointers pointing to the adjacent nodes to its left and right in the linked list are the most commonly used linked data structures. Following figures are some examples of the same. The first shows the head node to a singly linked list and the second shows a double linked list.

Linearizability

Linearizability is an important property of operations that may be executed concurrently [Herlihy and Wing(1990)][3][2]. An operation executed by some processor is linearizable if the rest of the system observes the corresponding system state change as if it occurred instantaneously at a single point in time after the operation started and before it finished. This property ensures that all the linearizable operations in the program have a mutual order observed in the same way by the entire system.

As mentioned before concurrency needs to be enabled for parallel linked data structures. In that context, linearizability is a useful property to have for concurrent operations. It can identify a single instruction or sub-operation which changes the data structure state and is known to be itself linearizable. In example 1, we will identify (Compare-and-Set) CAS instructions as linearization points.

Linked Data Structure parallelization

Unlike data structures like arrays, linked lists are unsuitable for naive parallelization methods which employ divide and conquer policy, they split the input and assign them to multiple workers for parallel processing. Splitting an arbitrary linked list requires traversing half of its elements. For too many operation instances such an O(n) splitting is unacceptable. Hence, intuitively, it is more efficient to convert the linked list into an array and then use splitters on the array. One thing to be noticed is that these data structures does not retain information about the relative order of elements, hence the operators applied to their data-parallel operations need to be commutative.

As mentioned before, a linked list data structure consists of a set of nodes such that each node points to another node in the set. A special node in this set is considered to be the head of the linked list. We consider connected linked lists without loops, which means every node can be reached from the head, no two nodes point to the same node and no node points at the root.

Example 1 Parallelized Linked List

The following code snippet shows a parallelized linked list. [3]

:class Invocation[T](xs: List[T]) {

::@volatile var stack = xs

::def READ = unsafe.getObjectVolatile(this, OFFSET)

::def CAS(ov: List[T], nv: List[T]) = unsafe.compareAndSwapObject(this, OFFSET, ov, nv)

:}

:abstract class ListTask[T] extends RecursiveTask[T] {

::val inv: Invocation[T]

::def workOn(elem: T): Unit

::def compute() {

::: val stack = inv.READ

::: stack match {

:::: case Nil =>

::::// no more work

::: case head :: tail =>

:::: if (inv.CAS(stack, tail)) workOn(stack)

:::: compute()

:::}

::}

:}

:class ListForeach[T, U](f: T => U, val inv: Invocation[T]) extends ListTask[T] {

:: def workOn(elem: T) = f(elem)

:}

:implicit class ListOps[T](val par: Par[List[T]]) {

::def foreach[U](f: T => U) = {

:::val inv = new Invocation(par.seq)

:::val tasks = for (i <- 0 until P) yield new ListForeach[T](f, inv)

:::for (t <- tasks) t.fork()

:::for (t <- tasks) t.join()

::}

:}

In the above code snippet, the linked list itself is considered as a stack of elements. In the concrete example above the immutable Lists from the Scala standard library has been used. Since, multiple workers will work on the elements of this list, we need to have atomic read and Compare-and-Set or CAS operations.

The JVM defines volatile variables the writes to which are immediately visible to all other threads. In Java these variables are defined with the volatile keyword before the declaration, and in Scala with the @volatile annotation. Whenever we read a volatile variable x, the notation READ(x) is used in the pseudocode, to make it explicit that this read is atomic, i.e. it can represent a linearization point. When writing the value v to the volatile variable, the notation WRITE(x, v) is used. A special compare-and-set or CAS instruction atomically checks if the target memory location is equal to the specified expected value and, if it is, writes the new value, returning true. Otherwise, it returns false. It is basically equivalent to the corresponding synchronized block, but more efficient on most computer architectures.

The stack is manipulated using atomic READ and CAS operations to ensure that every element is assigned to exactly one worker. After reading the stack field in line val stack = inv.READ , every worker checks whether it is empty, indicating

there is no more work. If the list is non-empty, every worker attempts to replace the

current list by its tail with the CAS line if (inv.CAS(stack, tail)) workOn(stack). Success means that only that worker replaced, so he gains ownership of that element – he must call workOn to process the element. If the CAS is not successful, the worker retries by calling the tail-recursive compute again.

The algorithm is correct – a successful CAS in line 89 guarantees that the stack field has

not changed its value since the READ in line 84. No linked list suffix will ever appear

more than once in the field stack because there are no more loops in the linked list

when following the tail pointer. Furthermore, every linked list node will appear in the

stack field at least once since the list is connected. Together, these properties ensure

that every linked list element is assigned to exactly one worker.

The algorithm shown in above is lock-free – although many threads compete to update the field stack at the same time, after a certain finite number of steps some thread will always complete its operation. In general this number of steps can depend on the size of the data structure or the number of competing workers, but in this case the number of steps is constant and equals the number of instructions between two consecutive CAS calls. In the next example #Example 2 Parallelized Queue Linked List we show parallelization through mutex locks.

Example 2 Parallelized Queue Linked List

Now, lets consider the queue data structure based on single linked list. The following code snippet explains a parallelized queue. [4] [5]

#include <pthread.h>

#include <list.h> // you could use std::list or your implementation

namespace concurrent {

template <typename T>

class Queue {

public:

Queue( ) {

pthread_mutex_init(&_lock, NULL);

}

~Queue( ) {

pthread_mutex_destroy(&_lock);

}

void push(const T& data);

T pop( );

private:

list<T> _list;

pthread_mutex_t _lock;

}

};

Multiple threads of control can simultaneously try to push data to the queue or remove data, so we need a mutex object to manage the synchronization. The constructor and destructor of the queue class are responsible for the creation and destruction of the mutex, as shown above.

For insertion and deletion of data from a concurrent queue, we need mutex locks.

What happens if multiple threads intend to append data to the queue? The first thread locks the mutex and appends data to the queue, while the other threads wait for their turn. The operating system decides which thread adds the data next in the queue, once the first thread unlocks or releases the mutex. By default, there is no real time priority threads, the thread waiting the longest is the next to wake up, acquire the lock, and append the data to the queue. Below snippet shows insertion:

procedure: Pushing data to the queue

void Queue<T>::push(const T& value ) {

: pthread_mutex_lock(&_lock);

: _list.push_back(value);

: pthread_mutex_unlock(&_lock);

}

The code for popping data out is similar. Below code snippet shows:

Procedure: Popping data from the queue

T Queue<T>::pop( ) {

: if (_list.empty( )) {

: throw ”element not found”;

: }

: pthread_mutex_lock(&_lock);

: T _temp = _list.front( );

: _list.pop_front( );

: pthread_mutex_unlock(&_lock);

: return _temp;

}

As seen from the above snippets, locking is done before pushing and popping and release locks after the operations are done. However, if we have a long queue, and there are significantly more threads reading data out of the queue than those appending data at some point during code execution, the read speed is slowed down as per the above implementation. The reason is we are sharing the same mutex for push and pop operations. The writer threads access the lock, hence reads are inhibited. Can we use two locks? One for the read operation and another for the write should do the trick. Following snippet, shows the modified version of more efficient concurrent queue using two locks instead of one:

template <typename T>

class Queue {

public:

Queue( ) {

pthread_mutex_init(&_rlock, NULL);

pthread_mutex_init(&_wlock, NULL);

}

~Queue( ) {

pthread_mutex_destroy(&_rlock);

pthread_mutex_destroy(&_wlock);

}

void push(const T& data);

T pop( );

private:

list<T> _list;

pthread_mutex_t _rlock, _wlock;

}

Pop and push snippets shown below are modified using two distinct locks, one for read and the other for write:

void Queue<T>::push(const T& value ) {

pthread_mutex_lock(&_wlock);

_list.push_back(value);

pthread_mutex_unlock(&_wlock);

}

T Queue<T>::pop( ) {

if (_list.empty( )) {

throw ”element not found”;

}

pthread_mutex_lock(&_rlock);

T _temp = _list.front( );

_list.pop_front( );

pthread_mutex_unlock(&_rlock);

return _temp;

}

Now, as we discussed the mutex lock based approach, it possesses some problems:

- Waiting on mutexes consumes considerable time. This delay though have negative effects on system scalability.

- Lower-priority threads could acquire a mutex, thus halting higher-priority threads that require the same mutex to proceed. This problem is known as the famous priority inversion.

- A thread holding a mutex can be de-scheduled, perhaps because it was the end of its time-slice. For other threads waiting on the same mutex, this has a negative effect, because the wait time is now even longer. This problem is known as lock convoying.

Thus, issues with mutexes has compelled developers to come up with solutions that donot use mutexes. Although mutexes are tricky to use, they are definitely worth our attention better performance.

Algorithms

There are several parallelization techniques possible for linked lists. But, each data structure needs to be reviewed on a per-case basis. This means a programmer needs to assess the codes carefully before deciding on which parallelization strategy is best applicable.

After parallelism is identified, programs can be parallelized and then executed on parallel multiprocessor systems to achieve good performance. Good compiler analysis techniques-http://en.wikipedia.org/wiki/Automatic_parallelization_tool- can only analyze parallelism in the traversal operations in programs with dynamic pointer-linked data structures. As a result, only the traversal operations will be parallelized while the construction operations will be executed in sequential. Although the traversal phase of such programs, which usually account for over 90% of total execution times, can be parallelized, the rest of programs that accounts for less than 10% will dominate the execution on multiprocessor systems. Xxxx

Let us now look at a few algorithms for achieving parallelism in different Linked Data Structures:

Simple Locks

The most easy and intuitive approach to parallelization is to parallelize the traversal of LDS. There are no conflicts between Reads among different threads in a Data Structure. Next, we try to introduce parallelism among the different construction operations – Add and Delete. This means that while different threads can simultaneously operate on two different parts of the Data Structure, no two threads will operate on a single(or adjacent) nodes at the same time.

This approach gives rise to the implementation of locks. Locks can be used to define a critical section, which can only be accessed by one thread at a time. –Yan Sohilin – very well defines the techniques for implementing a lock on a simple LDS – the linked list. We will not go through the detailed codes of these, as our focus remain on the techniques not introduced before:

Readers Lock

In this technique, we implement a Read Lock and a Write Lock. A Traversal Operation must obtain a Read Lock prior to execution, and a Modify Operation must obtain a Write Lock prior to execution. Both must release locks after completion. When a Read Lock is granted to an operation, a request for another Read Lock can be granted, but a request for Write Lock cannot be granted until all Read Locks have been released. Finally, if a Write Lock is granted to an Operation no requests for Read or Write Locks will be granted until the operation has completed execution and released the lock.

Global Lock

Fine Grain Lock

The Cyclic Linked List Parallelization

Hash Tables

One algorithm to perform parallelization of data structures is PLDS.

Use cases and Applications

Conclusions

References

1. http://idb.csie.ncku.edu.tw/tsengsm/COURSE/Java/Slides/Java-15-Linked_Data_Structure.pdf

2. http://axel22.github.io/resources/docs/my_thesis.pdf

3. http://dl.acm.org/citation.cfm?id=78972 Maurice Herlihy and Jeannette M. Wing. Linearizability: A correctness condition for concurrent objects. ACM Transactions on Programming Languages and Systems, 12(3):463–492, July 1990.

4. http://www.ibm.com/developerworks/aix/library/au-multithreaded_structures1/#list2

5. http://www.ibm.com/developerworks/aix/library/au-multithreaded_structures2/