CSC/ECE 506 Spring 2012/4b rs: Difference between revisions

| Line 109: | Line 109: | ||

[[Image:superlinear_example.jpg|thumb|center|700px|Figure 4. Intel Kentsfield core and cache utilization: 1 thread versus 3 threads]] | [[Image:superlinear_example.jpg|thumb|center|700px|Figure 4. Intel Kentsfield core and cache utilization: 1 thread versus 3 threads]] | ||

Example: | Example: | ||

Revision as of 23:48, 13 February 2012

The limits to speedup

Introduction <ref>http://en.wikipedia.org/wiki/Amdahl%27s_law</ref>

In parallel computing, speedup refers to how much a parallel algorithm is faster than a corresponding sequential algorithm. Parallel computing gains very high importance in scientific computations because of its good speedup. More so in those computations that involve large-scaled data. As far as the need for parallel computing goes, one claim is that we can always double the speed of a chip every 18 months according to Moore’s Law. This means there is no need to develop parallel computation. This claim however, has been proved to be wrong.

According to Amdahl's law the speedup of a program using multiple processors in parallel computing is limited by the time needed for the sequential fraction of the program. But this solves a fixed problem in the shortest possible period of time, rather than solving the largest possible problem (e.g., the most accurate possible approximation) in a fixed "reasonable" amount of time. To overcome these shortcomings, John L. Gustafson and his colleague Edwin H. Barsis described Gustafson's Law, which provides a counterpoint to Amdahl's law, which describes a limit on the speed-up that parallelization can provide, given a fixed data set size.

Scaled speedup

Scaled speedup is the speedup that can be achieved by increasing the data size. This increase in data size is done to solve a given problem on multiple parallel processors. In other words, with larger number of parallel processors at our disposal, we can increase the data size of the same problem and achieve higher speedup. This is what is referred to as scaled speedup. Means of achieving this speedup are exploited by Gustafson's Law.

Gustafson's Law <ref>http://en.wikipedia.org/wiki/Gustafson%27s_Law</ref>

Gustafson's Law says that it is possible to parallelize computations when they involve significantly large data sets. It says that there is skepticism regarding the viability of massive parallelism. This skepticism is largely due to Amdahl's law, which says that the maximum speedup that can be achieved in a given problem with serial fraction of work s, is 1/s, even when the number of processors increases to an infinite number. For example, if 5% of computation in a problem is serial, then the maximum achievable speedup is 20 regardless of the number of processors. This is not a very encouraging result.

Amdahl's law does not fully exploit the computing power that becomes available as the number of machines increases. Gustafson's law addresses

this limitation. It considers the effect of increasing the problem size. Gustafson reasoned that when a problem is ported onto a multiprocessor system, it is possible to consider larger problem sizes. In other words, the same problem with a larger number of data values takes the same time. The law proposes that programmers tend to set the size of problems to use the available equipment to solve problems within a

practical fixed time. Larger problems can be solved in the same time if faster, i.e. more parallel equipment is available. Therfore, it should be possible to achieve high speedup if we scale the problem size.

Example:

s (serial fraction of work) = 5% p (number of processors) = 20 speedup (Amdahl's Law) = 10.26 scaled speedup (Gustafson's Law) = 19.05

Derivation of Gustafson's Law<ref>http://www.johngustafson.net/pubs/pub13/amdahl.pdf</ref><ref>http://en.wikipedia.org/wiki/Gustafson%27s_Law#Derivation_of_Gustafson.27s_Law</ref>

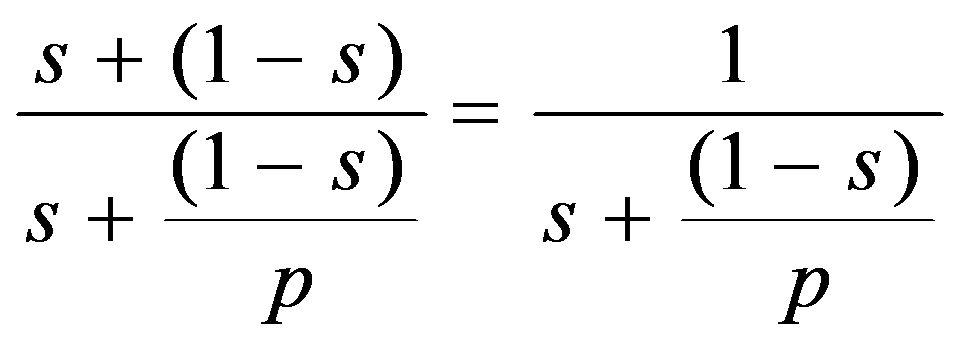

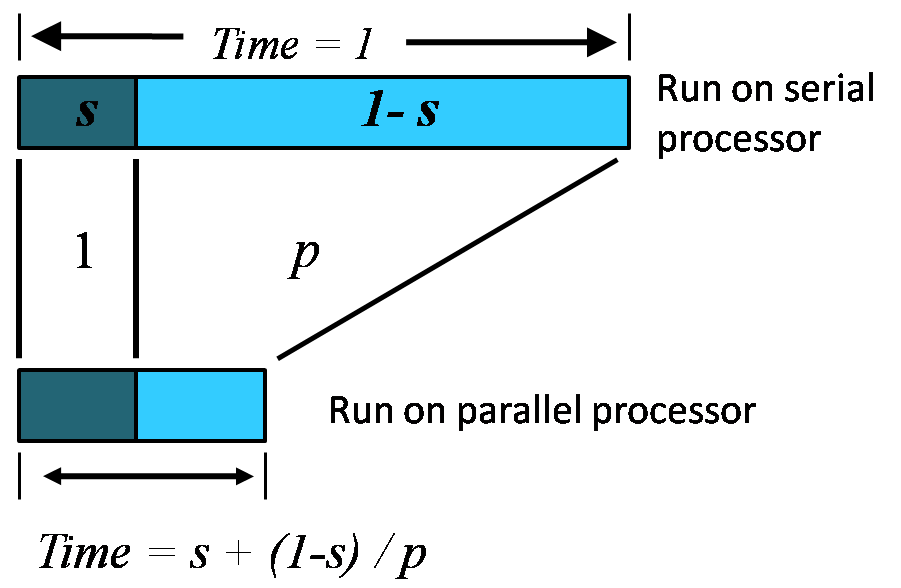

If p is the number of processors, s is the amount of time spent (by a serial processor) on serial parts of a program and 1-s is the amount of time spent (by a serial processor) on parts of the program that can be done in parallel, then Amdahl's law says that speedup is given by:

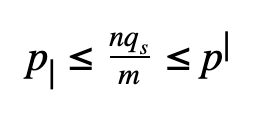

Let us consider a bigger problem size of measure n.

The execution of the program on a parallel computer is decomposed into:

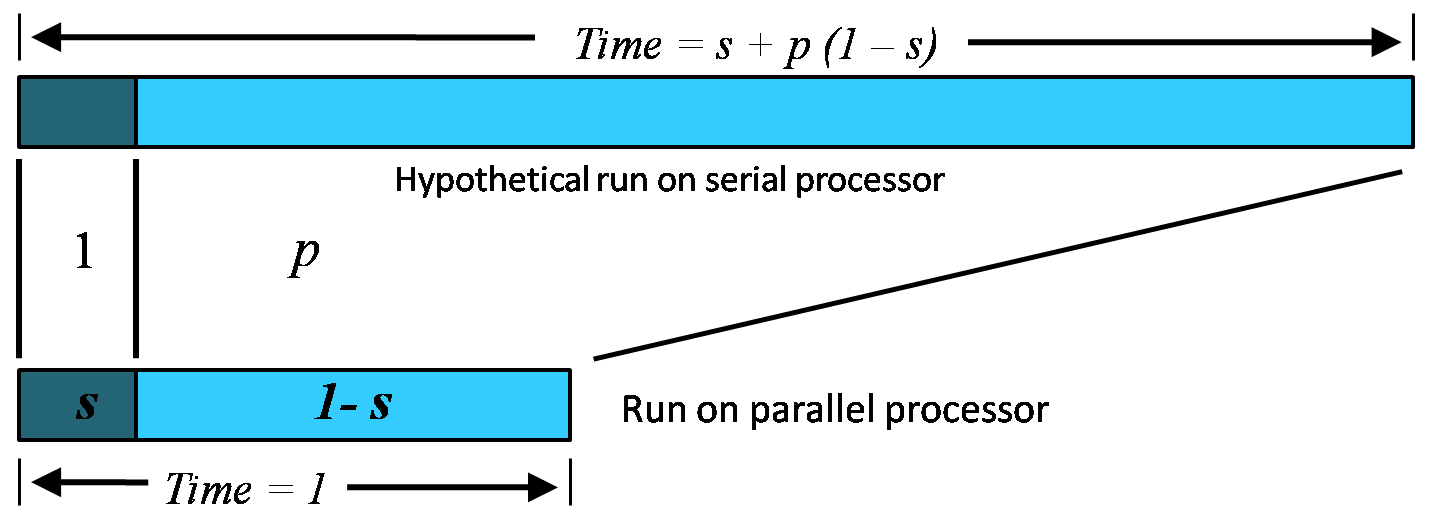

where a is the sequential fraction, 1-s is the parallel fraction, ignoring overhead for now, and p is the number of processors working in parallel during the parallel stage.

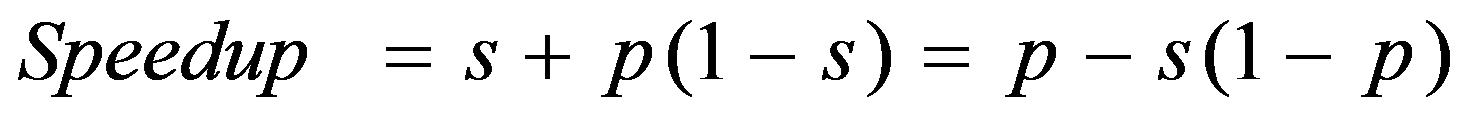

The relative time for sequential processing would be s + p (1 - s), where p is the number of processors in the parallel case.

Speedup is therefore:

where s: = s(n) is the sequential fraction.

Assuming the sequential fraction s(n) diminishes with problem size n, then speedup approaches p as n approaches infinity, as desired. Thus Gustafson's law seems to rescue parallel processing from Amdahl's law.

Amdahl's law argues that even using massively parallel computer systems cannot influence the sequential part of a fixed workload. Since this part is irreducible, the sequential fraction of the fixed workload is a function of p that approaches 1 for large p. In comparison to that, Gustafson's law is based on the idea that the importance of the sequential part diminishes with a growing workload; and if n is allowed to grow along with p, the sequential fraction will not ultimately dominate.

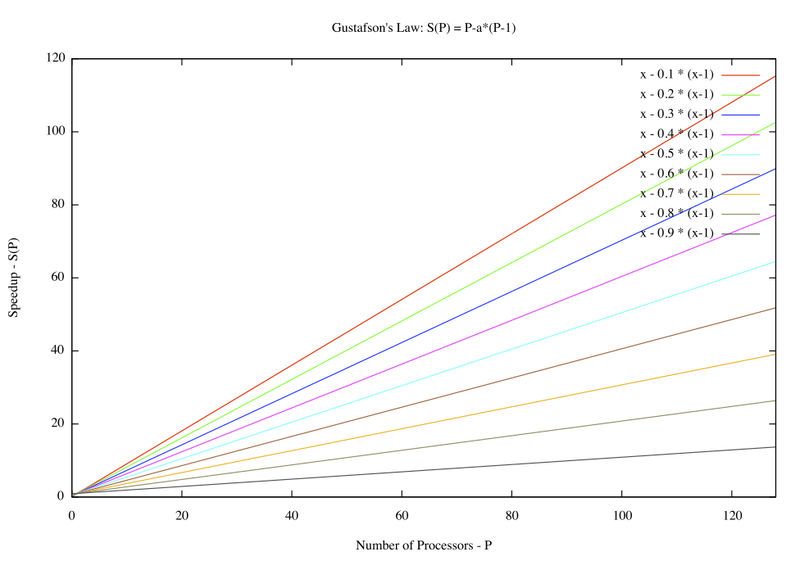

In contrast with Amdahl's Law, this function is simply a line, and one with much more moderate slope: 1 – p.

It is thus much easier to achieve efficient parallel performance than is implied by Amdahl’s paradigm. The two approaches, fixed-sized and scaled-sized, are contrasted and summarized in Figure 2a and b.

A Construction Metaphor

Amdahl's Law approximately suggests:

Suppose a 60 feet building is under construction, and 100 workers have spent 10 days to construct 30 feet at the rate of 3 feet / day. No matter how fast they construct the last 30 feet, it is impossible to achieve average rate of construction as 9 feet / day before completion of the building. Since they had already taken 10 days and there are only 60 feet in total; constructing infinitely fast you would only achieve a rate of 6 feet / day.

Gustafson's Law approximately states:

Suppose 100 workers are constructing a building at the rate of less than 9 feet / day. Given enough workers and height of building to construct, the average rate of construction can always eventually reach 9 feet / day, no matter how slow the construction had been. For example, 100 workers have spent 10 days to construct 30 feet at the rate of 3 feet / day, they could achieve this by more workers at the rate of 12 feet / day, for 20 additional days, or at the rate of 15 feet / day, for 10 additional days, and so on.

Gordon Bell prize <ref>http://techresearch.intel.com/ResearcherDetails.aspx?Id=182</ref> <ref>http://en.wikipedia.org/wiki/Gordon_Bell_Prize</ref>

The Gordon Bell Prizes are a set of awards awarded by the Association for Computing Machinery in conjunction with the Institute of Electrical and Electronics Engineers each year at the Supercomputing Conference to recognize outstanding achievement in high-performance computing applications. The main purpose of the award is to acknowledge, reward, and thereby assess the progress of parallel computing. The awards were established in 1987.

The Prizes were preceded by a similar much smaller prize (nominal: $100) by Alan Karp, a numerical analyst (then of IBM; won by Gustafson and Montry) challenging claims of MIMD performance improvements proposed in the Letters to the Editor section of the Communications of the ACM who went on to be one of the first Bell Prize judges. Cash prizes accompany these recognitions and are funded by the award founder, Gordon Bell, a pioneer in high-performance and parallel computing.

Dr. John L. Gustafson introduced the first commercial cluster system in 1985 and having first demonstrated 1000x, scalable parallel performance on real applications in 1988, for which he won the inaugural Gordon Bell Award. That demonstration broke the “Karp Challenge” that claimed speedup of more than 200x was a practical impossibility; it created a watershed that led to the widespread manufacture and use of highly parallel computers.

Superlinear speedup <ref>http://www.ccs.neu.edu/course/com3620/projects/scalable/jshan/final1.pdf</ref>

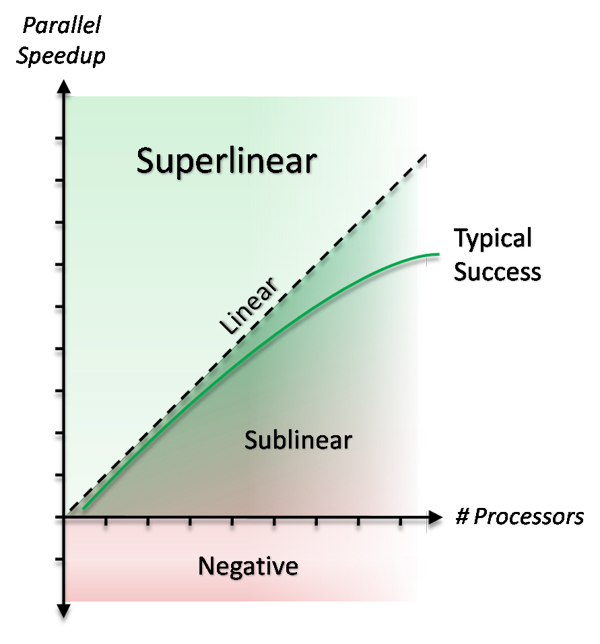

Not too long ago, the parallel time to solve a given problem using p processors was believed to be no greater than p. However, people then observed that in some computations the speedup was greater than p. When the speedup is higher than p, it is called super-linear speedup. One thing that could hinder the chances of achieving super-linear speedup is the cost involved in inter-process communication during parallel computation. This is not a concern in serial computation. However, super-linear speedup can be achieved by utilizing the resources very efficiently.

Controversy

Talk of super-linear speedup always sparks some controversy. Since super-linear speedup is not possible in theory, some non-orthodox practices could be thought of being the cause for achieving super-linear speedup. This is true especially with regard to the traditional research community. Hence, reporting super-linear speedup is controversial.

Reasons for super-linear speedup

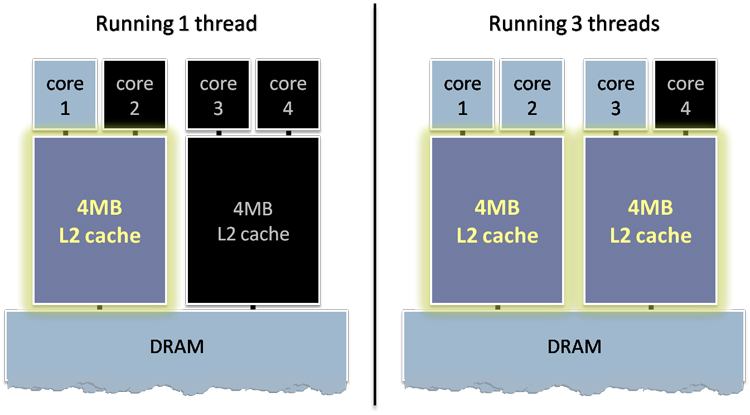

Let us look at the reasons for super-linear speedup. The data set of a given problem could be much larger than the cache size when the problem is executed serially. In parallel computation, however, the data set has enough space in each cache that is available. In problems that involve searching a data structure, multiple searches can be executed at the same time. This reduces the termination time. Another reason is the efficient utilization of resources by multiprocessors.

Parallel Search

When search is being performed in parallel on multiple processors, the amount of work being done is lesser than the amount of work being done serially. Let us see why this is so:

- The parallel algorithm uses some search like a random walk, the more processors that are walking, the less distance has to be walked in total before you reach what you are looking for.

- Modern processors have faster and slower memories. The processor will try to keep the data we are using in the fast memory. The amount of our data is most likely larger than the amount of fast memory. If we use n processors we have n times the amount of faster memory. More data fits in the fast memory which makes it possible to take less time, and hence amount of work to do the same task.

- The original sequential algorithm was really bad

- There are multiple processors at our disposal and hence much more cache is available when compared to serial computation on a single processor. The serial algorithm always runs out of cache space when the data set is very large.

- Getting a serial algorithm to do this parallel work and get better results wouldn't be feasible because the serial algorithm will not utilize the resources efficiently like a parallel algorithm would.

Example:

Suppose we have an 8 processor machine, each processor has a 1MB cache and each computation uses 6MB of data. On a single processor the computation will be doing a lot of data movement between CPU, cache and RAM. On 8 processors the computation will only have to move data between CPU and cache. This way super-linear speedup can be achieved.

Conclusion

External links

2. Plot of speedup vs processors - Gustafson's Law

References

<references/>