CSC/ECE 506 Spring 2012/1a hv: Difference between revisions


[[File:Interconnect_share.jpg|300px|thumb|right| Interconnect trends. source: http://www.top500.org/overtime/list/38/conn]]

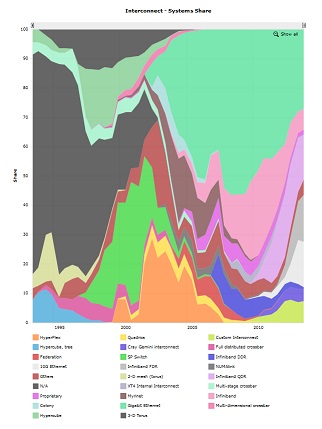


Revision as of 06:33, 31 January 2012

Supercomputer Evolution

The United States government has played a key role in the development and use of supercomputers. During World War II, the US Army paid for the construction of ENIAC in order to speed the calculation of artillery tables. In the 30 years after World War II, the US government used high-performance computers to design nuclear weapons, break codes, and perform other security-related applications.

A supercomputer is generally considered to be at the cutting edge in terms of processing capacity (number crunching) and computational speed at the time it is built, but with the pace of development yesterday's supercomputers become today's regular servers. A state-of-the-art supercomputer is an extremely powerful computer capable of manipulating massive amounts of data in a relatively short amount of time. Supercomputers are very expensive and are deployed for specialized scientific and engineering applications that must handle very large databases or do a great amount of computation, among them meteorology, animated graphics, fluid dynamic calculations, nuclear energy research, weapons simulation and petroleum exploration.

The most powerful supercomputers introduced in the 1960s were designed primarily by Seymour Cray at Control Data Corporation (CDC). They led the market into the 1970s until Cray left to form his own company, Cray Research. With Moore’s Law still holding after more than thirty years, the rate at which future mass-market technologies overtake today’s cutting-edge super-duper wonders continues to accelerate. The effects of this are manifest in the abrupt about-face we have witnessed in the underlying philosophy of building supercomputers.

During the 1970s and all the way through the mid-1980s, supercomputers were built using specialized custom vector processors working in parallel. Typically, this meant anywhere between four and sixteen CPUs. The next phase of the supercomputer evolution saw the introduction of massively parallel processing and a drift away from vector-only processors. However, the processors used in the construction of this generation of supercomputers were still primarily highly specialized, purpose-specific, custom-designed and fabricated units.

That is no longer true. No longer is silicon fabricated into the incredibly expensive highly specialized purpose-specific customized microprocessor units to serve as the heart and mind of supercomputers. Advances in mainstream technologies and economies of scale now dictate that “off-the-shelf” multicore server-class CPUs are assembled into great conglomerates, combined with mind-boggling quantities of storage (RAM and HDD), and interconnected using light-speed transports.

So we now find that instead of using specialized custom-built processors in their design, supercomputers are based on "off the shelf" server-class multicore microprocessors, such as the IBM PowerPC, Intel Itanium, or AMD x86-64. The modern supercomputer is firmly based around massively parallel processing by clustering very large numbers of commodity processors combined with a custom interconnect.

Blue Gene/L, completed at Lawrence Livermore National Laboratory in 2005 and upgraded in 2007, held the top spot on the TOP500 list for several years. It utilizes 212,992 processors to execute potentially as many as 596 trillion mathematical operations per second. The computer is used for nuclear weapons safety and reliability analysis. A prototype of Blue Gene/L demonstrated in 2003 was air-cooled, as opposed to many high-performance machines that use water and refrigeration, and used no more power than the average home. In 2003 scientists at Virginia Tech assembled a relatively low-cost supercomputer using 1,100 dual-processor Apple Macintoshes; it was ranked at the time as the third fastest machine in the world.

Some of the companies which build supercomputers are Silicon Graphics, Intel, IBM, Cray, Orion, Aspen Systems etc.

Here is a list of the top 10 supercomputers as of June 2009.

First Supercomputer ( ENIAC )

ENIAC was completed in 1945 and unveiled to the public in 1946, and it took the world by storm. It was built to solve very complex problems that would otherwise take several months or years to solve. Computers today are general-purpose tools that many of us use, but ENIAC was built with a single purpose: to solve scientific problems for the entire nation. The military was the first to use it, benefiting the country's defenses. Even today, most new supercomputer technology is designed for the military first, and then is redesigned for civilian uses.

This system actually was used to compute the firing tables for White Sands missile range from 1949 until it was replaced in 1957. This allowed the military to synchronize the liftoff of missiles should it be deemed necessary. This was one of the important milestones in military history for the United States, at least on a technological level.

ENIAC was a huge machine that used about eighteen thousand vacuum tubes and occupied roughly 1,800 square feet of floor space. It weighed nearly thirty tons, making it one of the largest machines of the time. It was considered the greatest scientific invention up to this point because it took only 2 hours of computation time to do what normally took a team of one hundred engineers a period of a year. That made it almost a miracle in some people's eyes, and people got excited about this emerging technology. ENIAC could perform five thousand additions per second. Though that seemed very fast at the time, it is extremely slow by today's standards: most computers today perform billions of additions per second.

So what made ENIAC run? Programming it took a lot of manpower and hours of setup. The operators used plugboards, plugs and wires to program the desired commands into the colossal machine. They also had to input the numbers by turning a large number of dials until they matched the correct values, much like one has to do on a combination lock.

Cray History

Cray Inc. has a history that extends back to 1972, when the legendary Seymour Cray, the "father of supercomputing," founded Cray Research. R&D and manufacturing were based in his hometown of Chippewa Falls, Wisconsin and business headquarters were in Minneapolis, Minnesota.

The first Cray-1 system was installed at Los Alamos National Laboratory in 1976 for $8.8 million. It boasted a world-record speed of 160 million floating-point operations per second (160 megaflops) and an 8 megabyte (1 million word) main memory. In order to increase the speed of the system, the Cray-1 had a unique "C" shape that allowed the integrated circuits to sit closer together, so that no wire in the system was more than four feet long. To handle the intense heat generated by the computer, Cray developed an innovative refrigeration system using Freon.

In order to concentrate his efforts on design, Cray left the CEO position in 1980 and became an independent contractor. While he worked on the follow-on to the Cray-1, another group within the company developed the first multiprocessor supercomputer, the Cray X-MP, which was introduced in 1982. The Cray-2 system made its debut in 1985, providing a tenfold increase in performance over the Cray-1. In 1988, the Cray Y-MP was introduced, the world's first supercomputer to sustain over 1 gigaflop on many applications. Multiple 333 MFLOPS processors gave the system speeds of up to 2.3 gigaflops.

Always a visionary, Seymour Cray had been exploring the use of gallium arsenide in creating a semiconductor faster than silicon. However, the costs and complexities of this material made it difficult for the company to support both the Cray-3 and the Cray C90 development efforts. In 1989, Cray Research spun off the Cray-3 project into a separate company, Cray Computer Corporation, headed by Seymour Cray and based in Colorado Springs, Colorado. Tragically, Seymour Cray died in September 1996 at the age of 71.

The 1990s brought a number of transformations to Cray Research. The company continued its leadership in providing the most powerful supercomputers for production applications. The Cray C90 featured a new central processor with a peak performance of 1 gigaflop. Using 16 of these powerful processors and 256 million words of central memory, the system reached a total peak performance of 16 gigaflops. The company also produced its first "mini-supercomputer," the Cray XMS system, followed by the Cray Y-MP EL series and the subsequent Cray J90. In 1993, it offered its first massively parallel processing (MPP) system, the Cray T3D supercomputer, and quickly captured MPP market leadership from early MPP companies such as Thinking Machines and MasPar. The Cray T3D proved to be exceptionally robust, reliable, sharable and easy to administer compared with competing MPP systems.

Its successor, the Cray T3E supercomputer, has been the world's best selling MPP system. The Cray T3E-1200E system was the first supercomputer to sustain one teraflop (1 trillion calculations per second) on a real-world application. In November 1998, a joint scientific team from Oak Ridge National Laboratory, the National Energy Research Scientific Computing Center (NERSC), Pittsburgh Supercomputing Center and the University of Bristol (UK) ran a magnetism application at a sustained speed of 1.02 teraflops. In another technological landmark, the Cray T90 became the world's first wireless supercomputer when it was released in 1994. The Cray J90 series, which was released during the same year, has become the world's most popular supercomputer, with over 400 systems sold.

Cray Research merged with SGI (Silicon Graphics, Inc.) in February 1996. In August 1999, SGI created a separate Cray Research business unit to focus completely and exclusively on the unique requirements of high-end supercomputing customers. Assets of this business unit were sold to Tera Computer Company in March 2000; the combined company was then renamed Cray Inc. and the ticker symbol was changed to CRAY. Tera had begun software development for the Multithreaded Architecture (MTA) systems in 1988, and hardware design commenced in 1991. The Cray MTA-2 system provides scalable shared memory, in which every processor has equal access to every memory location, which greatly simplifies programming because it eliminates concerns about the layout of memory. The company received its first order for the MTA from the San Diego Supercomputer Center. The multiprocessor system was accepted by the center in 1998, and has since been upgraded to eight processors.

The following link shows the historical timeline of Cray in the field of supercomputers: Historical Timeline of Cray.

Supercomputer History in Japan

In the beginning there were only a few Cray-1s installed in Japan, and until 1983 no Japanese company produced supercomputers. The first models were announced in 1983. Naturally there had been prototypes earlier like the Fujitsu F230-75 APU produced in two copies in 1978, but Fujitsu's VP-200 and Hitachi's S-810 were the first officially announced versions. NEC announced its SX series slightly later.

The last decade has rather been a surprise. About three generations of machines have been produced by each of the domestic manufacturers. During the last ten years about 300 supercomputer systems have been shipped and installed in Japan, and a whole infrastructure of supercomputing has been established. All major universities, many of the large industrial companies and research centers have supercomputers.

In 1984 NEC announced the SX-1 and SX-2 and started delivery in 1985. The first two SX-2 systems were domestic deliveries to Osaka University and the Institute for Computational Fluid Dynamics (ICFD). The SX-2 had multiple pipelines with one set of add and multiply floating-point units each. It had a cycle time of 6 nanoseconds, so each pipelined floating-point unit could peak at 167 Mflop/s. With four pipelines per unit and two floating-point units, the peak performance was about 1.3 Gflop/s. Due to limited memory bandwidth and other issues, the performance in benchmark tests was less than half the peak value. The SX-1 had a slightly longer cycle time (7 ns) than the SX-2 and only half the number of pipelines. Its maximum execution rate was 570 Mflop/s.
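The peak figures quoted above follow from simple arithmetic on the cycle time and the number of pipelines. The sketch below reproduces them; the function name `peak_mflops` is only illustrative, and it assumes that every pipeline of every floating-point unit delivers one result per cycle, which is the idealized case behind a "peak" number.

```python
def peak_mflops(cycle_time_ns, pipelines_per_unit, fp_units):
    """Idealized peak rate in Mflop/s.

    Assumes each pipeline of each floating-point unit completes
    one floating-point result every clock cycle.
    """
    results_per_cycle = pipelines_per_unit * fp_units
    cycles_per_second = 1e9 / cycle_time_ns
    return results_per_cycle * cycles_per_second / 1e6


# SX-2: 6 ns cycle, four pipelines per unit, two floating-point units
sx2 = peak_mflops(6.0, pipelines_per_unit=4, fp_units=2)

# SX-1: 7 ns cycle, half the number of pipelines
sx1 = peak_mflops(7.0, pipelines_per_unit=2, fp_units=2)

print(f"SX-2 peak: {sx2:.0f} Mflop/s")
print(f"SX-1 peak: {sx1:.0f} Mflop/s")
```

The results, roughly 1333 Mflop/s and 571 Mflop/s, agree with the 1.3 Gflop/s and 570 Mflop/s stated in the text. Measured benchmark performance was considerably lower, as noted above.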

At the end of 1987, NEC improved its supercomputer family with the introduction of A-series which gave improvements to the memory and I/O bandwidth. The top model, the SX-2A, had the same theoretical peak performance as the SX-2. Several low-range models were also announced but today none of them qualify for the TOP500.

In 1989 NEC announced a rather aggressive new model, the SX-3, with several important changes. The vector cycle time was brought down to 2.9 ns and the number of pipelines was doubled, but most significantly NEC added multiprocessing capability to its new series. It contained four independent arithmetic processors, each with a scalar and a vector processing unit, and NEC increased peak performance by more than one order of magnitude, to 22 Gflop/s from 1.33 Gflop/s on the SX-2A. The combination of these features made the SX-3 the most powerful vector processor in the world. The total memory bandwidth was subdivided into two halves, which in turn featured two vector load paths and one vector store path per pipeline set as well as one scalar load and one scalar store path. This gave a total memory bandwidth to the vector units of about 66 GB/s. Like its predecessors, the SX-3 was unable to offer the memory bandwidth needed to sustain peak performance unless most operands were contained in the vector registers.

In 1992 NEC announced the SX-3R with a couple of improvements compared to the first version. The clock cycle was further reduced to 2.5 ns, so that the peak performance increased to 6.4 Gflop/s per processor.

Fujitsu's VP series

In 1977 Fujitsu produced the first supercomputer prototype called the F230-75 APU which was a pipelined vector processor added to a scalar processor. This attached processor was installed in the Japanese Atomic Energy Commission (JAERI) and the National Aeronautic Lab (NAL).

In 1983 the company came out with the VP-200 and VP-100 systems. In 1986 the VP-400 was released with twice as many pipelines as the VP-200, and during mid-1987 the whole family became the E-series with the addition of an extra (multiply-add) pipelined floating-point unit that increased the performance potential by 50%. With the flexible range of systems in this generation (VP-30E to VP-400E), good marketing and a broad range of applications, Fujitsu became the largest domestic supplier with over 80 systems installed, many of which are listed in the TOP500.

Available since 1990, the VP-2000 family can offer a peak performance of 5 Gflop/s due to a vector cycle time of 3.2 ns. The family was initially announced with four vector performance levels (models 2100, 2200, 2400, and 2600), where each level could have either one or two scalar processors, but the VP-2400/40 doubled this limit, offering a peak vector performance similar to the VP-2600. Most of these models are now represented in the Japanese TOP500.

Previous machines were heavily criticized for their lack of memory throughput. The VP-400 series had only one load/store path to memory, which peaked at 4.57 GB/s. This was improved in the VP-2000 series by doubling the paths so that each pipeline set can do two load/store operations per cycle, giving a total transfer rate of 20 GB/s. Fujitsu recently decided to use the label VPX-2x0 for the VP-2x00 systems adapted to their Unix system. Keio University now runs such a system.

The VPP-500 series

In 1993 Fujitsu surprised the world by announcing a Vector Parallel Processor (VPP) series that was designed to reach performance in the range of hundreds of Gflop/s. At the core of the system is a combined Ga-As/Bi-CMOS processor, based largely on the original design of the VP-200. The gate delay of the processor chips was made as low as 60 ps in the Ga-As chips by using the most advanced hardware technology available. The resulting cycle time was 9.5 ns. The processor has four independent pipelines, each capable of executing two multiply-add instructions in parallel, resulting in a peak speed of 1.7 Gflop/s per processor. Each processor board is equipped with 256 megabytes of central memory.

The most amazing part of the VPP-500 is the capability to interconnect up to 222 processors via a cross-bar network with two independent (read/write) connections, each operating at 400 MB/s. The total memory is addressed via virtual shared memory primitives. The system is meant to be front-ended by a VP-2x00 system that handles input/output and permanent file store, and job queue logistics.
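To see how the VPP-500 reaches "hundreds of Gflop/s", the per-processor peak can be derived from the cycle time and pipeline counts given above and then scaled to the largest configuration. This is only a rough sketch: it assumes that a multiply-add counts as two floating-point operations, and the aggregate network figure is an upper bound that assumes every connection is busy at once. All constant names are illustrative.

```python
CYCLE_TIME_NS = 9.5              # processor cycle time
PIPELINES = 4                    # independent pipelines per processor
MULTIPLY_ADDS_PER_PIPELINE = 2   # multiply-add instructions in parallel
FLOPS_PER_MULTIPLY_ADD = 2       # assumption: one multiply plus one add
MAX_PROCESSORS = 222             # largest crossbar configuration
LINKS_PER_PROCESSOR = 2          # independent read and write connections
LINK_MB_PER_S = 400              # bandwidth of each connection

flops_per_cycle = PIPELINES * MULTIPLY_ADDS_PER_PIPELINE * FLOPS_PER_MULTIPLY_ADD

# flops per nanosecond is numerically the same as Gflop/s
per_processor_gflops = flops_per_cycle / CYCLE_TIME_NS
system_gflops = per_processor_gflops * MAX_PROCESSORS

# Upper bound on traffic through the crossbar, in GB/s
aggregate_network_gb_per_s = (
    MAX_PROCESSORS * LINKS_PER_PROCESSOR * LINK_MB_PER_S / 1000
)

print(f"Per processor: {per_processor_gflops:.2f} Gflop/s")
print(f"{MAX_PROCESSORS} processors: {system_gflops:.0f} Gflop/s")
print(f"Aggregate crossbar bandwidth: {aggregate_network_gb_per_s:.1f} GB/s")
```

Sixteen operations per 9.5 ns cycle gives about 1.68 Gflop/s per processor, matching the quoted 1.7 Gflop/s, and a fully populated 222-processor system would peak at roughly 374 Gflop/s under these assumptions.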

As mentioned in the introduction, an early version of this system, called the Numeric Wind Tunnel, was developed together with NAL. This early version of the VPP-500 (with 140 processors) was at that time the fastest supercomputer in the world, and it stood out at the top of the TOP500 with a value twice that of the TMC CM-5/1024 installed at Los Alamos.

Hitachi's Supercomputers

Hitachi has been producing supercomputers since 1983 but differs from other manufacturers by not exporting them. For this reason, their supercomputers are less well known in the West. After having gone through two generations of supercomputers, the S-810 series started in 1983 and the S-820 series in 1988, Hitachi leapfrogged NEC in 1992 by announcing the most powerful vector supercomputer ever. The top S-820 model consisted of one processor operating at 4 ns and contained four vector pipelines and two independent floating-point units. This corresponded to a peak performance of 2 Gflop/s. Hitachi put great emphasis on a fast memory, although this meant limiting its size to a maximum of 512 MB. The memory bandwidth of 2 words per pipe per vector cycle, giving a peak rate of 16 GB/s, was a respectable achievement, but it was not enough to keep all functional units busy.

The S-3800 was announced two years ago and is comparable to NEC's SX-3R in its features. It has up to four scalar processors with a vector processing unit each. These vector units have in turn up to four independent pipelines and two floating-point units that can each perform a multiply/add operation per cycle. With a cycle time of 2.0 ns, the whole system achieves a peak performance level of 32 Gflop/s.

The S-3600 systems can be seen as the design of the S-820 recast in more modern technology. The system consists of a single scalar processor with an attached vector processor. The 4 models in the range correspond to a successive reduction of the number of pipelines and floating-point units installed.

Link showing the list of the top 500 supercomputers: top 500 super computers.

Link showing the statistics of the top 500 supercomputers: supercomputer statistics.

Supercomputer Design

There are two approaches to the design of supercomputers. One, called massively parallel processing (MPP), is to chain together thousands of commercially available microprocessors utilizing parallel processing techniques. A variant of this, called a Beowulf cluster or cluster computing, employs large numbers of personal computers interconnected by a local area network and running programs written for parallel processing. The other approach, called vector processing, is to develop specialized hardware to solve complex calculations. This technique was employed in the Earth Simulator, a Japanese supercomputer introduced in 2002 that utilizes 640 nodes composed of 5104 specialized processors to execute 35.6 trillion mathematical operations per second. It is used to analyze earthquakes, weather patterns and climate change, including global warming.

Supercomputer Hierarchical Architecture

The supercomputer of today is built on a hierarchical design where a number of clustered computers are joined by ultra-high-speed optical network interconnections.

1. Supercomputer - Cluster of interconnected multiple multi-core microprocessor computers.
2. Cluster Members - Each cluster member is a computer composed of a number of Multiple Instruction, Multiple Data (MIMD) multi-core microprocessors and runs its own instance of an operating system.
3. Multi-Core Microprocessors - Each of these multi-core microprocessors has multiple processing cores, of which the application software is oblivious, and which share tasks using Symmetric Multiprocessing (SMP) and Non-Uniform Memory Access (NUMA).
4. Multi-Core Microprocessor Core - Each core of these multi-core microprocessors is in itself a complete Single Instruction, Multiple Data (SIMD) microprocessor capable of running a number of instructions simultaneously and many SIMD instructions per nanosecond.

- SISD machines: These are the conventional systems that contain one CPU and so can accommodate one instruction stream that is executed serially. Nowadays many large mainframes may have more than one CPU, but each of these executes instruction streams that are unrelated. Therefore, such systems should still be regarded as SISD machines acting on different data spaces. Examples of SISD machines are workstations like those of DEC, Hewlett-Packard and Sun Microsystems.

- SIMD machines: Such systems often have a large number of processing units, ranging from 1,024 to 16,384 that all may execute the same instruction on different data in lock-step. So, a single instruction manipulates many data items in parallel. Examples of SIMD machines are CPP DAP Gamma II and the Quadrics Apemille.

Another subclass of the SIMD systems is the vector processors. Vector processors act on arrays of similar data rather than on single data items, using specially structured CPUs. When data can be manipulated by these vector units, results can be delivered at a rate of one, two or three per clock cycle. So, vector processors operate on their data in an almost parallel way, but only when executing in vector mode. In this case they are several times faster than when executing in conventional scalar mode. For practical purposes vector processors are mostly regarded as SIMD machines. An example of such a system is the NEC SX-6i.

- MISD machines: Theoretically, in this type of machine multiple instructions should act on a single stream of data. As yet no practical machine in this class has been constructed, nor are such systems easy to conceive.

- MIMD machines: These machines execute several instruction streams in parallel on different data. The difference from the multi-processor SISD machines is that the instructions and data are related because they represent different parts of the same task to be executed. So, MIMD systems may run many sub-tasks in parallel in order to shorten the time-to-solution for the main task to be executed. There is a large variety of MIMD systems, and especially in this class the Flynn taxonomy proves to be not fully adequate for the classification of systems. Systems that behave very differently, like a four-processor NEC SX-6 and a thousand-processor SGI/Cray T3E, both fall in this class. Now we will make another important distinction between classes of systems.

a) Shared memory systems: Shared memory systems have multiple CPUs, all of which share the same address space. This means that the knowledge of where data is stored is of no concern to the user, as there is only one memory accessed by all CPUs on an equal basis. Shared memory systems can be either SIMD or MIMD. Single-CPU vector processors can be regarded as an example of the former, while the multi-CPU models of these machines are examples of the latter. We will sometimes use the abbreviations SM-SIMD and SM-MIMD for the two subclasses.

b) Distributed memory systems: In this case each CPU has its own associated memory. The CPUs are connected by some network and may exchange data between their respective memories when required. In contrast to shared memory machines, the user must be aware of the location of the data in the local memories and will have to move or distribute these data explicitly when needed. Again, distributed memory systems may be either SIMD or MIMD. The first class of SIMD systems mentioned, which operate in lock-step, all have distributed memories associated with the processors. Distributed-memory MIMD systems exhibit a large variety in the topology of their connecting network. The details of this topology are largely hidden from the user, which is quite helpful with respect to portability of applications. For the distributed-memory systems we will sometimes use DM-SIMD and DM-MIMD to indicate the two subclasses.
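The classification described above can be summarized in a few lines of code. This is only an illustrative sketch: the function names `flynn_class` and `memory_label` are made up for this example, and a real machine is of course not classified by counting streams alone, as the remark about the limits of the Flynn taxonomy makes clear.

```python
def flynn_class(instruction_streams, data_streams):
    """Return the Flynn class (SISD, SIMD, MISD or MIMD)."""
    if instruction_streams < 1 or data_streams < 1:
        raise ValueError("a machine needs at least one stream of each kind")
    instr = "S" if instruction_streams == 1 else "M"
    data = "S" if data_streams == 1 else "M"
    return f"{instr}I{data}D"


def memory_label(instruction_streams, data_streams, shared_memory):
    """Return SM-SIMD, SM-MIMD, DM-SIMD or DM-MIMD.

    The SM/DM prefixes are only used for the SIMD and MIMD classes,
    so other classes are rejected.
    """
    base = flynn_class(instruction_streams, data_streams)
    if base not in ("SIMD", "MIMD"):
        raise ValueError(f"no SM/DM subclass is defined for {base}")
    prefix = "SM" if shared_memory else "DM"
    return f"{prefix}-{base}"


# A lock-step array of 16,384 processing units with local memories
print(memory_label(1, 16384, shared_memory=False))

# A four-processor vector machine sharing one address space
print(memory_label(4, 4, shared_memory=True))
```

The two calls print `DM-SIMD` and `SM-MIMD`, matching the abbreviations introduced in the text.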

Trends in Supercomputers

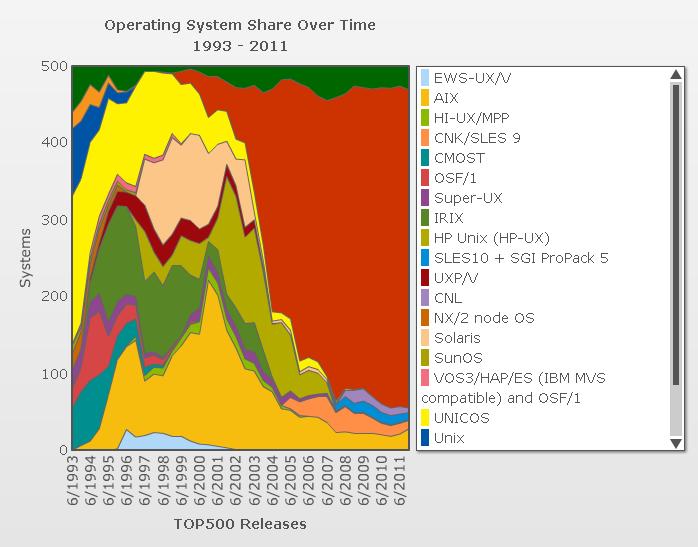

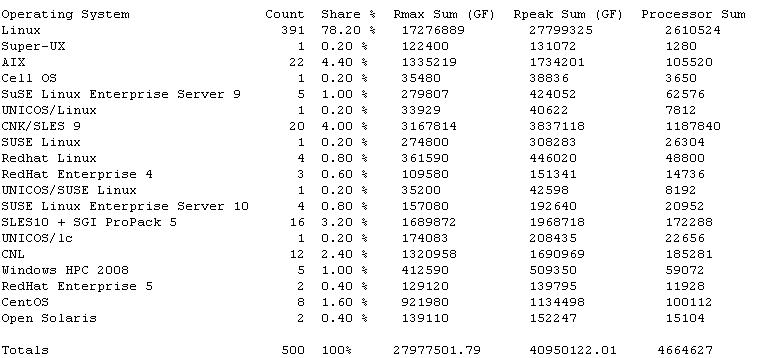

Operating System

The early manufacturers of supercomputers preferred custom-built operating systems tailored specifically to their needs, but as time progressed that changed. Companies started taking advantage of the large developer community spread around the world and started to value open source contributions. One such community is the Linux developer community. People from all around the world contribute to the development of the Linux operating system, making it fast, efficient and scalable. This made Linux the most popular flavour among supercomputer vendors, because they could take a copy of Linux off the shelf, tailor it to suit their needs and run it with ease on their supercomputers. For example, the Blue Gene/P supercomputer at Argonne National Lab runs on over 250,000 processors and uses Linux. As of June 2010, Linux held 91% of the market share among the top 500 supercomputers.<ref>Linux adoption</ref><ref>Linux adoption wiki article</ref>

The early Cray machines ran their own proprietary operating system, called COS, from the late 70s to the early 90s. The Cray Y-MP 8/8-64, built in 1990, was the first supercomputer to run a variant of UNIX called UNICOS.<ref>Early supercomputer operating systems</ref> As the above figure shows, UNICOS started to dominate the supercomputer market in the 1990s, with 11 systems running the operating system. The decline of UNICOS led to the adoption of AIX and Linux, AIX being IBM's own UNIX variant. IBM realized the potential of Linux and chose it as the operating system for its Blue Gene series of supercomputers. Linux continues to show strong growth, and today it powers the world's fastest supercomputer, Japan's K computer.<ref>Linux triumph as a supercomputer OS</ref>

<ref>OS shares summary.</ref>

Interconnects

Supercomputers have a large number of processors that need to work together. Interconnects connect the different nodes in a supercomputer, and they must carry high bandwidth while maintaining very low latency. A node in a supercomputer usually denotes a communication end point; that is, it may be a compute node, an I/O node, a service node or a network node. In supercomputers where message passing plays a key role, the interconnects are kept as short as possible, just as the electronic circuits were laid out in a "C" shape in the Cray systems to keep latency low. Since the interconnect plays a key role, supercomputers do not use wireless networking, because of the higher latency that would likely be involved.<ref>Why supercomputers are fast?</ref>
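Why both latency and bandwidth matter can be illustrated with a very simple cost model in which the time to deliver a message is a fixed latency plus the message size divided by the bandwidth. The sketch below is not a measurement of any real interconnect: the function name and the numbers (1 microsecond latency, 4 GB/s bandwidth) are hypothetical values chosen only to show the effect.

```python
def transfer_time_us(message_bytes, latency_us, bandwidth_gb_per_s):
    """Estimated delivery time in microseconds.

    Simple model: fixed start-up latency plus size / bandwidth.
    1 GB/s equals 1000 bytes per microsecond.
    """
    bytes_per_us = bandwidth_gb_per_s * 1e3
    return latency_us + message_bytes / bytes_per_us


LATENCY_US = 1.0   # hypothetical interconnect latency
BANDWIDTH = 4.0    # hypothetical bandwidth in GB/s

small = transfer_time_us(64, LATENCY_US, BANDWIDTH)          # 64-byte message
large = transfer_time_us(1_000_000, LATENCY_US, BANDWIDTH)   # 1 MB message

print(f"64 B message: {small:.3f} us")
print(f"1 MB message: {large:.1f} us")
```

Under these assumed numbers the small message takes about 1.016 microseconds, almost all of it latency, while the large message takes about 251 microseconds, almost all of it bandwidth-limited. Message-passing codes that exchange many small messages are therefore especially sensitive to interconnect latency.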

Notice the trend in the type of interconnects being used in supercomputers. Early systems used SP switches, now owned by IBM; the trend then shifted to NUMA links, and the most popular interconnect these days is InfiniBand.<ref>Infiniband</ref> This trend arose because InfiniBand offers high throughput, low latency, high QoS, failover and so on. Future generations of supercomputers, however, might use Ethernet fabric architectures instead of InfiniBand because of the various advantages they have to offer.

System Architecture: Comparing Crays to IBM and Japan's K computer

Cray built his first solid-state computer, the CDC 1604, in 1960. It used transistors instead of the traditional vacuum tubes, making it the fastest computer in the world. The CDC 6600 then followed, which was 50 times faster. The 7600, produced in 1968, could perform 15 million instructions per second.<ref>Cray History</ref> The 8600 supercomputer was the first to use multiple processors. After bureaucratic issues, Cray left CDC to form his own company and produced the Cray 1 supercomputer, which was sixteen times faster than the 7600, with 240 million calculations per second. It was also more compact, being only half the size of the 7600.<ref>Wisconsin Biographical Dictionary by Caryn Hannan 2008 ISBN 1878592637 pages 83-84.</ref>

The effectiveness of the Cray supercomputers can be attributed to the concept of vector processors, which were used specifically for high-performance applications. Many supercomputer builders developed their own processors, which at the time was cost effective. The purpose was to perform SIMD operations, because similar operations were executed repeatedly over an array of data; vector processors were hence preferred. The STAR architecture was later developed, in which generic vector processing was further improved by exploiting the fact that in some cases even the data points were similar and operations were performed over the same data points repeatedly.<ref>Control Data Corp.</ref> Another technique, called chaining, was also used, in which scalar and vector processors work together so that results are used immediately, avoiding extra memory references.<ref>History of Supercomputers.</ref>

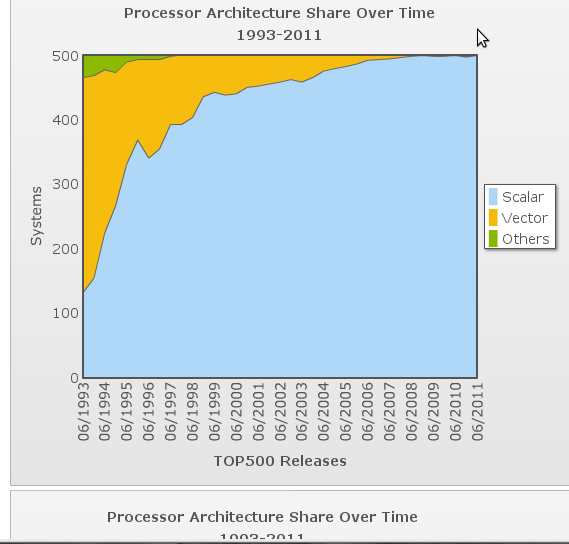

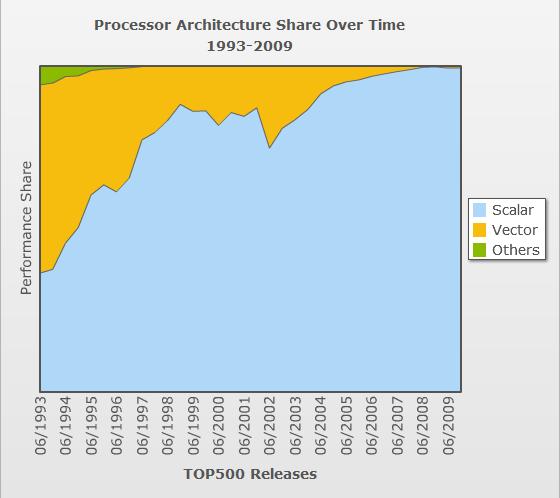

Processor architecture and generation

Supercomputers in the 80s improved speeds drastically with the advent of the SX processors, which used hundreds of vector processors to increase speeds. NEC led this revolution with its SX series, and Fujitsu's Numerical Wind Tunnel became the fastest supercomputer in 1994.

The Japanese supercomputer revolution in the 1980s was largely due to the vector parallel architecture it used. The Cray 2 was released in 1985. Though it was the fastest supercomputer at that time, it was quite cost ineffective with respect to hardware and software costs. Cray's in-house operating system, COS, was replaced with the UNIX-based UNICOS.<ref>Parallel computing for real-time signal processing and control by M. O. Tokhi, Mohammad Alamgir Hossain 2003 ISBN 9781852335991 pages 201-202</ref>

The NEC system had speeds of up to 100 GFlops. This started the trend of massively parallel computing in supercomputers. The NEC system used vector parallel processors. In 1993, parallel vector processors (PVPs) still ruled the Top500 list, with about 70% of systems using the architecture. The year 1994 was the turning point in terms of architectural changes: the share of PVPs fell to 40%, while that of massively parallel processors (MPPs) increased to 48%. The HITACHI SR2201, released in 1996, was six times faster than the NEC system, with speeds of up to 600 GFlops.<ref>top500.org</ref>

http://www.hitachi.co.jp/Prod/comp/hpc/eng/sr1.html

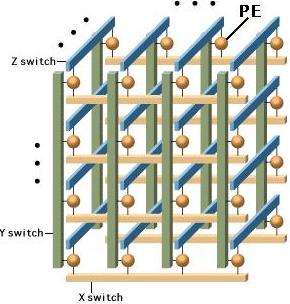

Two special features helped it reach such speeds. One was pseudo-vector processing. This technique improves performance by bypassing the cache and fetching data directly from memory. Computations of high granularity end up requiring a large data set to work on. Caches often cannot support such operations, so direct memory access tended to improve performance drastically. The other architectural introduction was the 3D crossbar switch network. Its advantages were described as follows: "The High-end SR2201 line employs an innovative three-dimensional crossbar switch to provide high-speed connection among individual processing elements (PEs). With this switch, there are only three output lines from any PE: one for each of the crossbars. This simple layout achieves almost the same performance as the configuration which interconnects all the processing elements directly, yet at a much lower cost".<ref>Hitachi Corp.</ref> <ref>Netlib benchmark reports</ref> <ref>Fujitsu global archives</ref>
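The quoted claim, three output lines per PE yet close to fully connected performance, can be illustrated with a small routing sketch. It rests on assumptions that are not in the source: each PE is given a hypothetical (x, y, z) address, each crossbar is taken to link all PEs that differ in only one coordinate, and the 8 x 8 x 8 size is made up for the example.

```python
from typing import List, Tuple

PE = Tuple[int, int, int]  # hypothetical (x, y, z) address of a processing element


def route(src: PE, dst: PE) -> List[PE]:
    """Return the PEs visited from src to dst, fixing one coordinate per crossbar."""
    path = [src]
    current = list(src)
    for axis in range(3):                 # X crossbar, then Y, then Z
        if current[axis] != dst[axis]:
            current[axis] = dst[axis]     # one crossbar traversal fixes one coordinate
            path.append((current[0], current[1], current[2]))
    return path


def lines_per_pe_fully_connected(dims: Tuple[int, int, int]) -> int:
    """Output lines each PE would need if it were wired directly to every other PE."""
    nx, ny, nz = dims
    return nx * ny * nz - 1


path = route((0, 0, 0), (3, 5, 2))
hops = len(path) - 1                      # never more than 3 under these assumptions
direct_lines = lines_per_pe_fully_connected((8, 8, 8))
```

Under these assumptions any two PEs are at most three crossbar traversals apart, while a direct all-to-all wiring of the same 512 PEs would need 511 lines per PE instead of three.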

SMPs started to be replaced with massively parallel processors and then with computer clusters.

Massively Parallel Processing, clusters and IBM's Blue Gene

Architectures in the early 2000s were based on off-the-shelf components, which allowed clusters to be built in a short time. Terascale speeds were achieved at a low cost. System X could run at 12.25 Teraflops. It was unique in the sense that it was "self made" at the university.<ref>System X</ref> Cray continued to maintain its own architecture while releasing the Cray MTA-2. Since that machine was not successful, its next release, the Cray X1, moved slightly away from the vector architecture to incorporate the MPP idea. It still had only half the speed of the fastest supercomputer of its time.

The massively parallel architecture soon came to dominate the supercomputer industry when the IBM Blue Gene supercomputer performed computations in the PFlop range. An advantage of the Blue Gene series was that it traded a little processor speed for lower power consumption and compensated by using a larger number of processors. This completed the paradigm shift in the architecture of supercomputers and brought about a period of rapid growth in supercomputer performance based on massively parallel computing. Better processors in turn brought better performance on a large scale. While the IBM Blue Gene supercomputer was the fastest in 2006, the K Computer, to be completed in 2012, is currently 60 times faster. This is mainly due to the superior performance of the SPARC64 VIIIfx, which Fujitsu claimed in 2009 was the world's fastest CPU. Thus, improving processor capabilities while including tens of thousands of such processors (more than 60,000 in this case) in a massively parallel architecture has dominated the supercomputer market for many years now. This trend seems likely to continue for a few more years as all companies embrace the paradigm.<ref>SPARC64 VIIIfx</ref>

Vendors for supercomputers

During the nascent stage of supercomputer production, CDC was the top vendor of supercomputers. Throughout the 60s and 70s, none of the other vendors were able to make a mark on the supercomputer industry. This was partly because supercomputer architectures required specialized processors, i.e. vector processors, to make optimum use of the machines. These systems were best suited for defense applications. They required scientists to work towards the specialized needs of the supercomputer, and they required processors that had to be created by the vendor itself.

Cray's market domination started to decline in the late 1980s with the advent of the Japanese supercomputers. While the Cray supercomputers used fewer than 10 powerful processors (the Cray 2, the fastest supercomputer in the world in 1985, had only 8 processors!), the Japanese vendors drastically increased the number of processors and improved performance, power and efficiency. They overtook Cray, and Fujitsu's Numerical Wind Tunnel became the fastest supercomputer in 1994.<ref>Cray computer corporation</ref>

Cray's reduced market share in the 90s can be attributed to a number of factors. High-performance processors were slowly being replaced by massively parallel processors. Quantity took precedence over quality, with vendors like Thinking Machines, Kendall Square Research, Intel Supercomputing Systems Division, nCUBE, MasPar and Meiko Scientific starting to make massively parallel processors. The focus was on assembling large numbers of processors, not necessarily the most powerful ones, rather than on improving the speed of individual processors. Another factor was the cost of the Cray supercomputers. Historically, supercomputers were used primarily by defense and other elite organizations. But as mainstream industry also came to require high-end computing power, a need arose for minisupercomputers. "As scientific computing using vector processors became more popular, the need for lower-cost systems that might be used at the departmental level instead of the corporate level created an opportunity for new computer vendors to enter the market. As a generalization, the price targets for these smaller computers were one-tenth of the larger supercomputers. These computer systems were characterized by the combination of vector processing and small-scale multiprocessing".<ref>Minisupercomputer</ref>

Cray was still the dominant vendor in 1993, but its inertia in moving to the parallel processing domain cost it market share, and it was overtaken by vendors like IBM and Oracle. IBM concentrated its effort on the massively parallel computing paradigm, while Oracle acquired the Thinking Machines Corporation (TMC) to further its market share.

Today, IBM has the lion's share, with over 44.6% of the systems in the Top500 list. It uses the fundamental concept in today's supercomputing trend: increasing the number of processors, each with low power consumption. Strong interconnect capabilities among these processors support effective communication between processor nodes and racks.

The observation that can be made from the trends is that vendors need to adapt quickly to architectural shifts in the supercomputing industry. New vendors starting up with architectures in alignment with trends of that era tend to dominate the market.

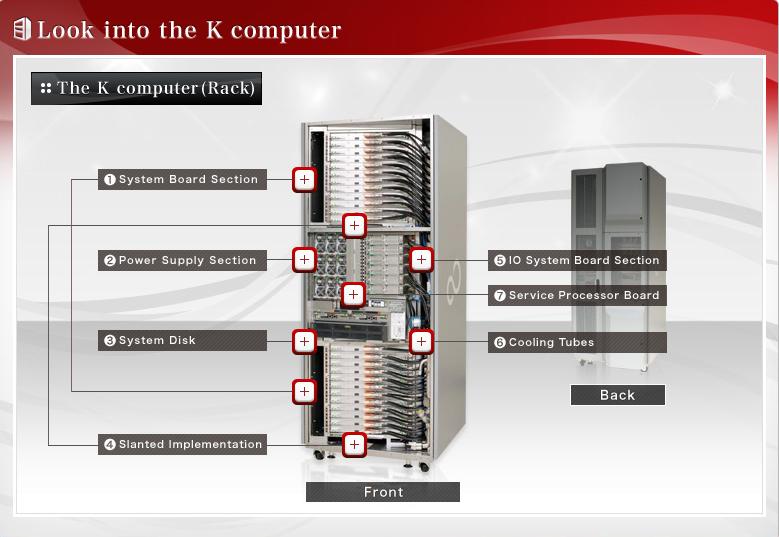

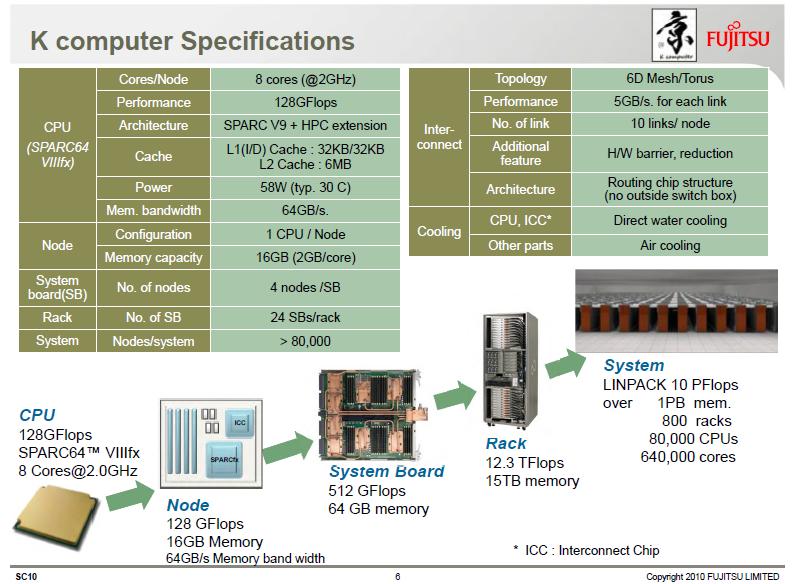

World's fastest supercomputer - The K Computer

Japan's K supercomputer is currently the world's fastest supercomputer, and after major upgrades over the past few months it is four times faster than its closest competitor. The K computer (K for "kei", which stands for 10 quadrillion) is a product of Fujitsu, built at the RIKEN Advanced Institute for Computational Science campus in Japan. It topped the supercomputer rankings in June 2011 and has stayed ahead of the competition to date. A surprising aspect is that the K computer is yet to become fully operational. There is little doubt that it will stay on top of the supercomputer charts for a while. But as the history of supercomputing shows, today's top supercomputer is often nowhere to be found in the top 10 a few years later. Even though the K computer has the highest power consumption on the list (nearly 10 megawatts, equivalent to powering ten thousand homes), it is one of the most efficient systems in terms of Mflops/watt delivered.<ref>top500.org article</ref><ref>K Computer wiki</ref>

The K computer is built entirely from SPARC64 VIIIfx processors and uses no GPUs, which departs from the trend that other supercomputers follow these days. It uses 705,024 cores (each 2.0 GHz SPARC64 VIIIfx processor has 8 cores). A 6-D mesh/torus topology is used as the network, and each CPU provides 64 GB/s of memory bandwidth. The K computer is both water and air cooled (the energy-efficient air cooling approach is discussed later).<ref>K computer specs</ref>
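The figures quoted for the K computer can be cross-checked with simple arithmetic. The sketch below uses only numbers from the text. The performance and power values are the rounded ones given above (10 quadrillion operations per second, nearly 10 megawatts), so the results are approximations and not the measured Top500 values.

```python
CORES_TOTAL = 705_024      # total cores, from the text
CORES_PER_CPU = 8          # cores per SPARC64 VIIIfx processor, from the text
KEI_FLOPS = 10e15          # "kei": 10 quadrillion operations per second (rounded)
POWER_WATTS = 10e6         # "nearly 10 megawatts" (rounded)
HOMES = 10_000             # "ten thousand homes", from the text

cpu_count = CORES_TOTAL // CORES_PER_CPU            # number of processors
mflops_per_watt = (KEI_FLOPS / 1e6) / POWER_WATTS   # efficiency metric used by the list
watts_per_home = POWER_WATTS / HOMES                # implied average draw per home

print(cpu_count, mflops_per_watt, watts_per_home)
```

With these rounded inputs the machine has 88,128 processors and delivers on the order of 1,000 Mflops per watt.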

Applications of the K computer include drug manufacturing, materials and energy creation, and weather prediction.

Supercomputer Programming Models

The parallel architectures of supercomputers often dictate the use of special programming techniques to exploit their speed. The base language of supercomputer code is, in general, Fortran or C, with special libraries used to share data between nodes. Environments such as PVM and MPI are now used for loosely connected clusters, and OpenMP for tightly coordinated shared memory machines. Significant effort is required to optimize a problem for the interconnect characteristics of the machine it runs on. The aim is to prevent any of the CPUs from wasting time waiting for data from other nodes.

The languages and environments mentioned above are described briefly below.

1) Fortran, previously known as FORTRAN, is a general-purpose, procedural, imperative programming language that is especially suited to numeric and scientific computing. It was originally developed by IBM in the 1950s for scientific and engineering applications, became dominant in this area early on, and has been in use for over half a century in computationally intensive areas such as numerical weather prediction, finite element analysis, computational fluid dynamics (CFD), computational physics, and computational chemistry. It is one of the most popular languages in high-performance computing and is the language used for programs that benchmark and rank the world's fastest supercomputers.

Fortran (a blend derived from "The IBM Mathematical Formula Translating System") encompasses a lineage of versions, each of which added extensions to the language while usually retaining compatibility with previous versions. Successive versions have added support for processing of character-based data (FORTRAN 77), array programming, modular programming and object-based programming (Fortran 90 / 95), and object-oriented and generic programming (Fortran 2003).

In late 1953, John W. Backus submitted a proposal to his superiors at IBM to develop a more practical alternative to assembly language for programming their IBM 704 mainframe computer. A draft specification for The IBM Mathematical Formula Translating System was completed by mid-1954. The first manual for FORTRAN appeared in October 1956, with the first FORTRAN compiler delivered in April 1957. This was an optimizing compiler, since customers were reluctant to use a high-level programming language unless its compiler could generate code whose performance was comparable to that of hand-coded assembly language.

It reduced the number of programming statements necessary to operate a machine by a factor of 20 and quickly gained acceptance. The language was popular among scientists for writing numerically intensive programs, which encouraged compiler writers to produce compilers that could generate faster and more efficient code. The inclusion of a complex number data type in the language made Fortran especially suited to technical applications such as electrical engineering.
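To see why a built-in complex type matters for electrical engineering, consider the impedance of a series RLC circuit, Z = R + j(ωL - 1/(ωC)). The sketch below uses Python's `complex` type as a stand-in for Fortran's COMPLEX. The component values and the function name are hypothetical and chosen only for illustration.

```python
import cmath
import math


def series_rlc_impedance(r_ohm: float, l_henry: float, c_farad: float, omega: float) -> complex:
    """Impedance of a series RLC circuit at angular frequency omega (rad/s)."""
    reactance = omega * l_henry - 1.0 / (omega * c_farad)
    return complex(r_ohm, reactance)


R, L, C = 50.0, 0.1, 100e-6              # hypothetical component values
omega_0 = 1.0 / math.sqrt(L * C)         # resonant angular frequency

z_res = series_rlc_impedance(R, L, C, omega_0)               # purely resistive at resonance
z_mains = series_rlc_impedance(R, L, C, 2 * math.pi * 50.0)  # at 50 Hz

magnitude = abs(z_mains)                                # |Z| in ohms
phase_deg = math.degrees(cmath.phase(z_mains))          # phase angle in degrees
```

With a complex type, magnitude and phase come straight from one value. Without it, the real and imaginary parts would have to be tracked by hand through every formula.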

By 1960, different versions of FORTRAN were available for the IBM 709, 650, 1620, and 7090 computers. The increasing popularity of FORTRAN spurred competing computer manufacturers to provide FORTRAN compilers for their machines, so that by 1963 over 40 FORTRAN compilers existed. Because of these reasons, FORTRAN is considered to be the first widely used programming language supported across a variety of computer architectures. The development of FORTRAN paralleled the early evolution of compiler technology. Indeed many advances in the theory and design of compilers were specifically motivated by the need to generate efficient code for FORTRAN programs.

2) C is a general-purpose programming language developed in 1972 by Dennis Ritchie at Bell Telephone Laboratories for use with the Unix operating system. It is also widely used for developing portable application software. C is one of the most popular programming languages, and there are very few computer architectures for which a C compiler does not exist. C has greatly influenced many other popular programming languages, most notably C++, which began as an extension to C.

It was designed to be compiled using a relatively straightforward compiler, to provide low-level access to memory, to provide language constructs that map efficiently to machine instructions, and to require minimal run-time support. The language was designed to encourage machine-independent programming. It has been used on a very wide range of platforms, from embedded microcontrollers to supercomputers.

3) The Parallel Virtual Machine (PVM) is a software tool used for parallel networking of computers. It is designed to allow a network of heterogeneous Unix and/or Windows machines to be used as a single distributed parallel processor. Thus large and complex computational problems can be solved more cost effectively by using the combined memory and power of many computers. The software is very portable and has been compiled on everything from laptops to Crays.

PVM enables users to exploit their existing computer hardware to solve complex problems at very low cost. PVM has also been used as an educational tool to teach parallel programming and to solve important practical problems. It was developed by the University of Tennessee, Oak Ridge National Laboratory and Emory University. The first version was written at ORNL in 1989, and after being rewritten by the University of Tennessee, version 2 was released in March 1991. Version 3 was released in March 1993 and supported fault tolerance and better portability. User programs written in C, C++, or Fortran can access PVM through the provided library routines.
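The message-passing style that PVM and MPI share can be sketched without either library. The example below is an analogy only: Python threads play the role of separate machines, `queue.Queue` objects play the role of send and receive calls, and the names `worker` and `master` are hypothetical. No real PVM or MPI routine is shown.

```python
import queue
import threading
from typing import List


def worker(inbox: queue.Queue, outbox: queue.Queue) -> None:
    """Receive one chunk of numbers and send back the sum of their squares."""
    chunk = inbox.get()                       # blocking "receive"
    outbox.put(sum(x * x for x in chunk))     # "send" the partial result


def master(data: List[int], n_workers: int) -> int:
    results: queue.Queue = queue.Queue()
    inboxes = [queue.Queue() for _ in range(n_workers)]
    threads = [threading.Thread(target=worker, args=(box, results)) for box in inboxes]
    for t in threads:
        t.start()
    for i, box in enumerate(inboxes):
        box.put(data[i::n_workers])           # scatter: every n-th element to worker i
    total = sum(results.get() for _ in range(n_workers))  # gather the partial sums
    for t in threads:
        t.join()
    return total


total = master(list(range(1, 101)), n_workers=4)
```

No worker ever reads another worker's data directly. Everything is exchanged through explicit messages, which is what lets the same pattern run across a network of separate machines.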

4) OpenMP (Open Multi-Processing) is an application programming interface (API) that supports multi-platform shared memory multiprocessing programming in C, C++ and Fortran on many architectures, including Unix and Microsoft Windows platforms. It consists of a set of compiler directives, library routines, and environment variables that influence run-time behavior.

It was developed by a group of major computer hardware and software vendors. OpenMP is a portable, scalable model that gives programmers a simple and flexible interface for developing parallel applications for platforms ranging from the desktop to the supercomputer. An application built with the hybrid model of parallel programming can run on a computer cluster using both OpenMP and Message Passing Interface (MPI), or more transparently through the use of OpenMP extensions for non-shared memory systems.
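The shared-memory model behind OpenMP can be mimicked in Python as a rough analogy. The sketch below is not OpenMP: it uses the standard `concurrent.futures` thread pool, the names are hypothetical, and Python threads will not show the speedup that compiled OpenMP code would. It only mirrors the structure of a parallel loop with a reduction, where every thread reads the same array and the partial results are combined at the end.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

shared = [float(i) for i in range(1000)]   # one array visible to every thread


def partial_sum(bounds: Tuple[int, int]) -> float:
    """Sum one contiguous slice of the shared array."""
    start, stop = bounds
    return sum(shared[start:stop])


def parallel_sum(n_threads: int) -> float:
    # Split the index range into one contiguous block per thread.
    step = (len(shared) + n_threads - 1) // n_threads
    ranges: List[Tuple[int, int]] = [
        (s, min(s + step, len(shared))) for s in range(0, len(shared), step)
    ]
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        return sum(pool.map(partial_sum, ranges))   # combine partial results (the "reduction")


total = parallel_sum(4)
```

Unlike message passing, no data is copied or sent: the threads only agree on which index range each one handles.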

The OpenMP Architecture Review Board (ARB) published its first API specification, OpenMP for Fortran 1.0, in October 1997. In October of the following year it released the C/C++ standard. The year 2000 saw version 2.0 of the Fortran specification, with version 2.0 of the C/C++ specification following in 2002. Version 2.5, a combined C/C++/Fortran specification, was released in 2005.

Version 3.0, released in May 2008, is the current version of the API specification. Its new features include the concept of tasks and the task construct. More information about OpenMP can be found in the OpenMP 3.0 specifications.

Supercomputing Applications

Supercomputers are used primarily for number crunching: enormous, calculation-intensive tasks on massive datasets that need results in a practical amount of time, and that would otherwise take longer than the service life of general-purpose computers (even in large numbers) or even an average human lifetime. The types of tasks that supercomputers are built to tackle are:

* Physics - Quantum mechanics, thermodynamics, cosmology, astrophysics
* Meteorology - Weather forecasting, climate research, global warming research, storm warnings
* Molecular Modeling - Computing the structures and properties of chemical compounds, biological macromolecules, polymers, and crystals
* Physical Simulations - Aerodynamics, fluid dynamics, wind tunnels
* Engineering Design - Structural simulations, bridges, dams, buildings, earthquake tolerance
* Nuclear Research - Nuclear fusion research, simulation of the detonation of nuclear weapons, particle physics
* Cryptography and Cryptanalysis - Code and cipher breaking, encryption
* Earth Sciences - Geology, geophysics, volcanic behavior
* Training Simulators - Advanced astronaut training and simulation, civil aviation training
* Space Research - Mission planning, vehicle design, propulsion systems, mission proposals and feasibility studies and simulations

The main users of these supercomputers include universities, military agencies, NASA, scientific research laboratories and major corporations.

RIT Scientists Use Supercomputers to ‘See’ Black Holes

Supercomputer Simulates Stellar Evolution

Georgia Tech researchers have used supercomputers to gain better insight into genomic evolution.

Largest-Ever Simulation of Cosmic Evolution Calculated at San Diego Supercomputer Center

UC-Irvine Supercomputer Project Aims to Predict Earth's Environmental Future - In February, the university announced the debut of the Virtual Climate Time Machine -- a computing system designed by IBM to help Irvine scientists predict earth's meteorological and environmental future.

SGI ALTIX - COLUMBIA SUPERCOMPUTER

The Columbia supercluster makes it possible for NASA to achieve breakthroughs in science and engineering for the agency's missions and Vision for Space Exploration. Columbia's highly advanced architecture is also being made available to a broader national science and engineering community. More details are listed in the Columbia System Facts.

Cooling Supercomputers

Hot Topic – the Problem of Cooling Supercomputers

The continued exponential growth in the performance of Leadership Class computers (supercomputers) has been predicated on the ability to perform more computing in less space. Two key components have been 1) the reduction of component size, leading to more powerful chips, and 2) the ability to increase the number of processors, leading to more powerful systems. There is strong pressure to keep the physical size of the system compact so that communication latency stays manageable. As a result, power density has increased, and the ability to remove waste heat as quickly and efficiently as possible is becoming a limiting factor in the capability of future machines.

Convection cooling with air is currently the preferred method of heat removal in most data centers. Air handlers force large volumes of cooled air under a raised floor (the deeper the floor, the lower the wind resistance) and up through perforated tiles in front of or under computer racks, where fans within the rack's servers or blade cages distribute it across the electronics radiating heat, perhaps with the help of heat sinks or heat pipes. This system easily accommodates racks drawing 4-7 kW. In 2001 the average U.S. household drew 1.2 kW, so this is like cooling half a dozen homes crammed into about 8 square feet. A BlueGene/L rack uses 9 kW. The Energy Smart Data Center’s (ESDC’s) NW-ICE compute rack uses 12 kW. Petascale system racks may require 60 kW to satisfy communication latency demands that limit a system's physical size. Additional ducting can be used to keep warm and cold air from mixing in the data center, but air cooling alone is reaching its limits.
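The "homes per rack" comparison can be reproduced for each rack mentioned above. The sketch uses only the figures quoted in the paragraph. The dictionary labels and the function name are illustrative.

```python
HOUSEHOLD_KW = 1.2   # average U.S. household draw in 2001, from the text

rack_power_kw = {
    "air-cooled rack (upper end of 4-7 kW)": 7.0,
    "BlueGene/L rack": 9.0,
    "NW-ICE compute rack": 12.0,
    "petascale rack (possible requirement)": 60.0,
}


def homes_equivalent(rack_kw: float) -> float:
    """How many average 2001 households draw as much power as one rack."""
    return rack_kw / HOUSEHOLD_KW


equivalents = {name: homes_equivalent(kw) for name, kw in rack_power_kw.items()}

for name, homes in equivalents.items():
    print(f"{name}: about {homes:.1f} homes")
```

The upper end of the air-cooled range works out to just under six homes, which matches the "half a dozen" in the text, while a 60 kW petascale rack corresponds to about fifty.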

Chilled water was used by previous generations of bipolar transistor-based mainframes, and the Cray-2 immersed the entire system in Fluorinert in the 1980s. Water has a much higher heat capacity than air, and even than Fluorinert, but it is also a conductor, so it cannot come into direct contact with the electronics, which makes transferring the heat to the water more difficult. Blowing hot air through a water-cooled heat exchanger mounted on or near the rack is one common way of improving the ability to cool a rack, but it is limited by the low heat capacity of air and requires energy to move enough air.

More efficient and effective cooling is only one part of developing a truly energy smart data center. Not generating heat in the first place is another component. This includes moving some heat sources, such as power supplies, away from the compute components, or using more efficient power conversion mechanisms, since the power taken off the grid is high voltage alternating current (AC) while the components use low voltage direct current (DC). Power-aware components that can reduce their power requirements or turn off entirely when not needed are another element.

One of the world's great supercomputer labs is housed inside the US's Oak Ridge National Laboratory, a leading research institution and the site of the reactor in which plutonium for the first atomic bombs was refined during World War II.

The lab's Cray X1E is the largest vector supercomputer in the world. It is rated for 18 teraflops of processing power. The computer is liquid-cooled, and piping was installed into the floor for that purpose.

Cooling ESDC's NW-ICE

Fluorinert not only has a high dielectric strength, in excess of 35,000 volts across a 0.1 inch gap, but also has other desirable properties. 3M Fluorinert Liquids are a family of clear, colorless, odorless perfluorinated fluids with a viscosity similar to water. These non-flammable liquids are thermally and chemically stable and compatible with most sensitive materials, including metals, plastics, and elastomers. Fluorinert liquids are completely fluorinated, containing no chlorine or hydrogen atoms. The strength of the carbon-fluorine bond contributes to their extreme stability and inertness. Fluorinert liquids are available with boiling points ranging from 30°C to 215°C.

NW-ICE is being cooled with a combination of air and two-phase liquid (Fluorinert) cooling, in this case SprayCool. Closed SprayCool modules 1) replace the normal heat sinks on each of the processor chips, 2) cool them with a fine mist of Fluorinert that evaporates as it hits the hot thermal conduction layer on top of the chip package, and 3) return the heated Fluorinert to the heat exchanger in the bottom of the rack. The heat exchanger, also called a thermal server, transfers the heat to facility chilled water. The rest of the electronics in the rack, including memory, is now easily cooled with air. The high heat transfer rate of the two-phase cooling allows the use of much warmer water than conventional air-water heat exchangers, allowing direct connection to efficient external cooling towers. Two-phase liquid cooling is thermodynamically more efficient than convection cooling with air, resulting in less energy being needed to remove waste heat while at the same time being able to handle a higher heat load.

Alternative Cooling Approaches

Spray Cooling is, of course, just one approach to solving data center cooling problems. A plethora of cooling technologies and products exist. Technologies of interest use air, liquid, and/or solid-state cooling principles. Evolutionary progress is being made with conventional air cooling techniques, which are known for their reliability. Current investigation focuses on novel heat sinks and fan technologies with the aim of improving contact surface, conductivity, and heat transfer parameters. Efficiency and noise generation are also of great concern with air cooling. Improvements have been made in the design of Piezoelectric Infrasonic Fans, which exhibit low power consumption and have a lightweight and inexpensive construction. One of the most effective air cooling options is Air Jet Impingement. The design and manufacturing of nozzles and manifolds for jet impingement is relatively simple.

The benefits of Air Jet Impingement also apply to Liquid Impingement technologies. In addition, liquid cooling offers higher heat transfer coefficients in exchange for higher design and operation complexity. Among the most interesting liquid cooling technologies are microchannel heat sinks used in conjunction with micropumps, because the channels can be manufactured in the micrometer range with the same process technologies used for electronic devices. Microchannel heat sinks are effective at supporting large heat fluxes. Liquid metal cooling, used in cooling reactors, is starting to be an interesting alternative for high-power-density micro devices. Large heat transfer coefficients are achieved by circulating the liquid with hydroelectric or hydromagnetic pumps. The pumping circuit is reliable because no moving parts, except for the liquid itself, are involved in the cooling process. Heat transfer efficiency is also increased by high conductivity. The low heat capacity of metals leads to less stringent requirements for heat exchangers. Heat extraction with liquids can be increased by several orders of magnitude by exploiting phase changes. Heat pipes and Thermosyphons exploit the high latent heat of vaporization to remove large quantities of heat from the evaporator section. The circuits are closed by capillary action in the case of heat pipes or by gravity in the case of Thermosyphons. These devices are therefore very efficient but are limited in their temperature range and heat flux capabilities. Thermoelectric Coolers (TEC) that use the Peltier-Seebeck effect do not have the highest efficiency but can provide localized spot cooling, an important capability in modern processor design. Research in this area focuses on improving materials and distributing control of TEC arrays so that efficiency over the whole chip improves.
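The benefit of exploiting a phase change can be illustrated with a simple energy balance. The sketch below uses textbook properties of water, not of Fluorinert or any fluid named above, and the 10 K temperature rise is an arbitrary assumption. It compares only the heat absorbed per kilogram and does not attempt to model heat-transfer coefficients or heat flux.

```python
WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.186   # liquid water, approximate textbook value
WATER_LATENT_HEAT_KJ_PER_KG = 2257.0      # vaporization at 100 °C, approximate textbook value


def sensible_heat_kj(mass_kg: float, delta_t_k: float) -> float:
    """Heat absorbed by liquid water warming by delta_t_k without boiling."""
    return mass_kg * WATER_SPECIFIC_HEAT_KJ_PER_KG_K * delta_t_k


def latent_heat_kj(mass_kg: float) -> float:
    """Heat absorbed by the same mass of water when it evaporates."""
    return mass_kg * WATER_LATENT_HEAT_KJ_PER_KG


ASSUMED_RISE_K = 10.0   # hypothetical coolant temperature rise

single_phase = sensible_heat_kj(1.0, ASSUMED_RISE_K)
two_phase = latent_heat_kj(1.0)
ratio = two_phase / single_phase

print(f"single-phase: {single_phase:.1f} kJ/kg, two-phase: {two_phase:.1f} kJ/kg, ratio: {ratio:.0f}x")
```

Under these assumptions, evaporating a kilogram of coolant absorbs roughly fifty times more heat than warming it by 10 K, which is why heat pipes, thermosyphons and spray cooling rely on the latent heat of vaporization.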

Water-cooling System Enables Supercomputers to Heat Buildings

In an effort to achieve energy-aware computing, the Swiss Federal Institute of Technology Zurich (ETH) and IBM have announced plans to build a first-of-a-kind water-cooled supercomputer that will directly repurpose excess heat for the university buildings. The system, dubbed Aquasar, is expected to decrease the carbon footprint of the system by up to 85% and is estimated to save up to 30 tons of CO2 per year, compared to a similar system using today's cooling technologies. <ref>water cooled supercomputer</ref>

Inspiration: EPA's Energy Star for Superior Energy Efficiency at NetApp Data Center

Supercomputers and data centers have one problem in common: both produce excess heat and need efficient ways to remove it. "NetApp (NASDAQ: NTAP) announced that its Research Triangle Park (RTP) data center has earned the U.S. Environmental Protection Agency's (EPA's) prestigious ENERGY STAR®, the national symbol for protecting the environment through superior energy efficiency. This is the first data center to achieve this distinction from the EPA. The RTP data center achieved a near perfect mark by scoring a 99 on a scale of 100".<ref>NetApp company releases</ref>

<ref>NetApp green data center</ref>

NetApp achieves energy-efficient cooling as follows. Traditional air cooling brings in outside air and recirculates it within the data center. NetApp's data center instead passes outside air through once, then immediately expels it and brings in fresh air. The hot and cold air regions are isolated, and instead of cooling the entire data center, the design focuses only on cooling the systems. Since cold air is denser than hot air, the hot air rises and exits the data center, and fresh air is brought in again. About 67% of the time, the outside air has a temperature of 75°F, at which it can be used as a coolant.<ref>NetApp Data Center efficiency</ref>

Supercomputer vendors can perhaps take this as an example and try to introduce better methods for cooling their systems. Using energy-efficient cooling approaches also avoids releasing tons of CO2 into the atmosphere.

The future of supercomputing

Growing use of GPUs in supercomputing and the Barcelona Supercomputing Center

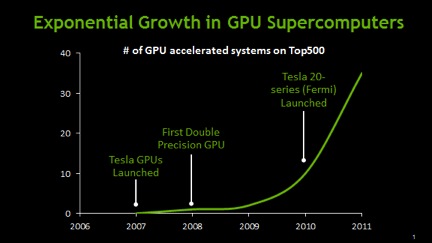

Today's supercomputers and those of the future will use the MIMD model for their computation. Beyond the instruction and data stream architectures, even their processors will be heterogeneous. This is because the massively parallel architecture will eventually plateau, both in the number of processors and in the performance they deliver for supercomputer applications. Today's cluster architectures have started to use more than a million cores. The introduction of 'co-processors' can therefore help improve the overall performance of the supercomputer, because for data-parallel workloads a GPU can be far faster than a CPU. The GPU co-processors can concentrate on performing large-scale SIMD operations, while the processors, whose basic unit is also SIMD, can work together with other processors to exhibit MIMD behavior. Presently more than 37 of the Top500 supercomputers use NVIDIA GPUs as part of a hybrid architecture consisting of processors and co-processors.<ref>GPU supercomputers exponential growth</ref>

The Cray XK6 supercomputer, for example, uses a 16-core AMD CPU together with an NVIDIA Tesla X2090 GPU as a co-processor. It scales widely and can support up to 96 CPUs and 96 GPUs in a single cabinet. Thus, in the future, an equal number of processors and co-processors could be deployed. Even blade-level scalability can be achieved as part of the 'Scalable Compute nodes' of the Cray system.<ref>Cray XK6 Brochure</ref>

Recently, the Barcelona Supercomputing Center (BSC) announced plans to build a supercomputer on an ARM-based architecture. This was the first radical step towards building a supercomputer with drastically lower power requirements. "BSC is planning to build the first ARM supercomputer, accelerated by CUDA GPUs<ref>BSC ARM supercomputer</ref>, for scientific research. This prototype system will use NVIDIA’s quad-core ARM-based Tegra 3 system-on-a-chip, along with NVIDIA CUDA GPUs on a hardware board designed by SECO, to accelerate a variety of scientific research projects". The ARM architecture is popular in cellphones because of its low power consumption. It has been documented that GPU-based heterogeneous devices are set to replace x86 processors because of their power efficiency. More and more supercomputers today are using so-called 'accelerator technology' along with their CPUs. In 2008, "9% of HPC sites were using some form of accelerator technology alongside CPUs in their installed systems. By this time, 28% of the HPC sites were using accelerator technology — a threefold increase from two years earlier — and nearly all of these accelerators were GPUs. Although GPUs represent only about 5% of the processor counts in heterogeneous systems, their numbers are growing rapidly".<ref>IDC Exascale Brief</ref>

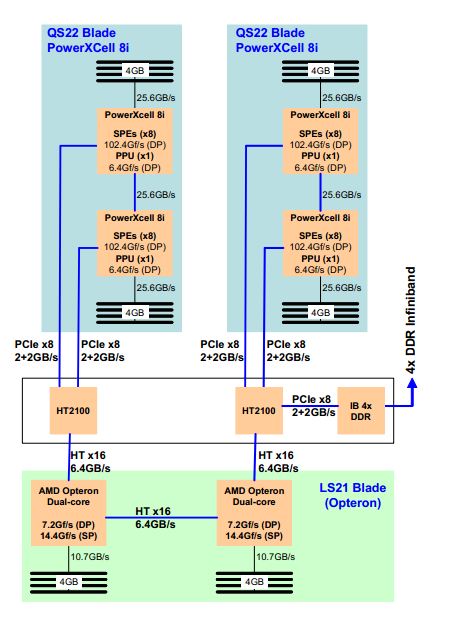

GPUs are not the only way to raise performance with auxiliary processors. The IBM Roadrunner combines two processor architectures: the dual-core AMD Opteron and the PowerXCell 8i. This combination helped Roadrunner become the first supercomputer to sustain petaflop speeds, and the PowerXCell 8i improved the overall system's cost effectiveness and power budget. In fact, Roadrunner used 12,240 PowerXCell 8i processors along with 6,562 AMD Opteron processors.

In the coming years, the development of hybrid architectures will center on GPU and Cell co-processor designs. Apart from higher computing speeds, the focus will also be on lower power consumption. The IBM BlueGene family of supercomputers already occupies the top four spots in the Green500.org list of power-efficient supercomputers, and the Barcelona Supercomputing Center ranks seventh.<ref>Barcelona SuperComputing Center</ref>

Research centers are constantly exploring new applications, such as data mining, to find additional uses for supercomputing. Data mining is a class of applications that looks for hidden patterns in a body of data, allowing scientists to discover previously unknown relationships among the data. For instance, the Protein Data Bank at the San Diego Supercomputer Center is a collection of scientific data that provides scientists around the world with a greater understanding of biological systems. Over the years, the Protein Data Bank has developed into a web-based international repository for three-dimensional molecular structure data, containing detailed information on the atomic structure of complex molecules. The three-dimensional structures of proteins and other molecules in the Protein Data Bank, together with supercomputer analysis of the data, give researchers new insights into the causes, effects, and treatment of many diseases.

Other modern supercomputing applications involve the advancement of brain research. Researchers are beginning to use supercomputers to better understand the relationship between the structure of the brain and the way it functions. Specifically, neuroscientists use supercomputers to study the dynamic and physiological structures of the brain. Scientists are also working toward three-dimensional simulation programs that will allow them to conduct research in areas such as memory processing and cognitive recognition.

In addition to new applications, the future of supercomputing includes the assembly of the next generation of computational research infrastructure and the introduction of new supercomputing architectures. Parallel supercomputers have many processors, both distributed and shared memory, and many communication components, and we have yet to explore all of the ways in which they can be assembled. Supercomputing applications and capabilities will continue to develop as institutions around the world share their discoveries and researchers become more proficient at parallel processing.

Notes

<references/>

References (created by the authors of old wiki article written in 2011)

3. Info regarding supercomputer evolution

9. Water-cooling System Enables Supercomputers to Heat Buildings

12. UC-Irvine Supercomputer Project Aims to Predict Earth's Environmental Future

13. ASRock X58 SuperComputer Motherboard Review

14. Wikipedia

15. Parallel Programming in C with MPI and OpenMP, by Michael Jay Quinn