CSC/ECE 517 Summer 2020 - Active Learning for Review Tagging

Revision as of 20:06, 21 November 2020

This page provides a description of the Expertiza based independent development project.

Introduction

Background

The web application Expertiza, used by students in CSC 517 and other courses, allows students to peer-review each other's work and offer suggestions. Students are later invited to participate voluntarily in an extra-credit review-tagging assignment, in which they tag the comments they received for helpfulness, positive tone, and other characteristics of interest to researchers. Currently, students have to tag hundreds of comments to earn full participation credit. Researchers are concerned that this workload leads inattentive participants to submit inaccurate tags, corrupting the established model. If the machine-learning algorithm first estimates its confidence that each characteristic is present in a comment, students can be asked to assign only the tags the algorithm is unsure of, so they can focus on fewer tags with more attention and accuracy.

Problem Statement

The goal of this project is to build a workable infrastructure for active learning, in which machine-learning algorithms evaluate which tags, if supplied manually, would help the AI learn most effectively. In particular, the following requirements are fulfilled:

- Incorporate metrics analysis into the review-giving process

- Reduce the number of tags students have to assign

- Reveal gathered information to report pages

- Update the web service to include paths to the confidence level of each prediction

- Decide on a proper tag certainty threshold, that is, how certain the ML algorithm must be of a tag value before the system stops asking the author to tag it manually

Notes

This project is being carried out concurrently with another project named 'Integrate Suggestion Detection Algorithm.' Whereas that project focuses on forming a central outlet to external web services, this project focuses on interpreting the results from those web services.

Design

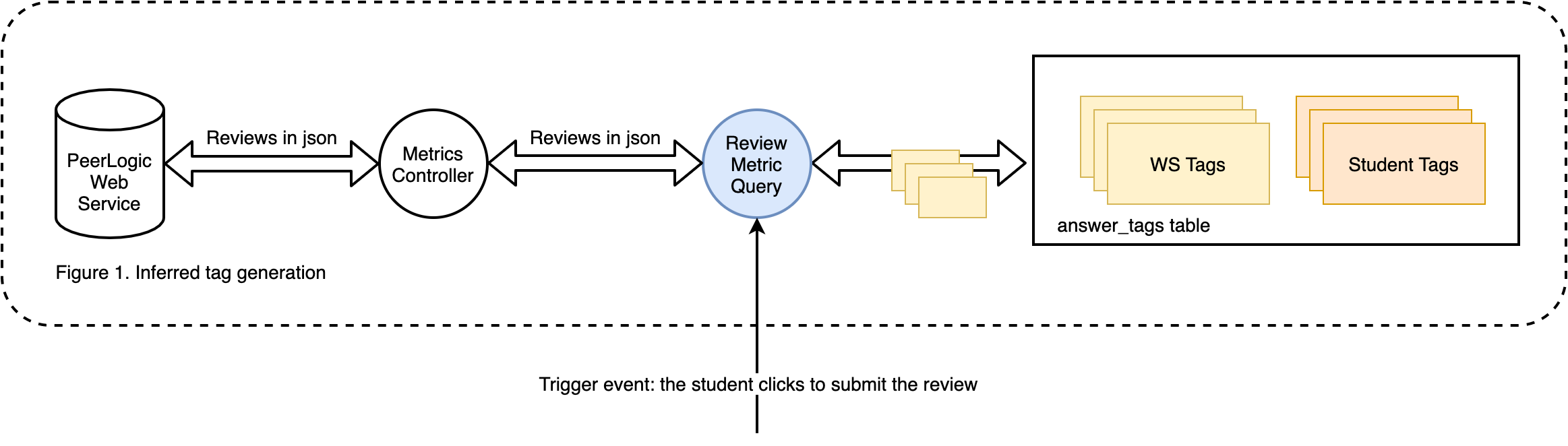

Control Flow Diagram

Peer Logic is an NSF-funded research project that provides services for educational peer-review systems. It has a set of mature machine learning algorithms and models that compute metrics on the reviews. It would be helpful for Expertiza to integrate these algorithms into the peer-review process. Specifically, we want to

- Let students see the quality of their review before submission, and

- Selectively request the manual tags that are used to train the models further (active learning)

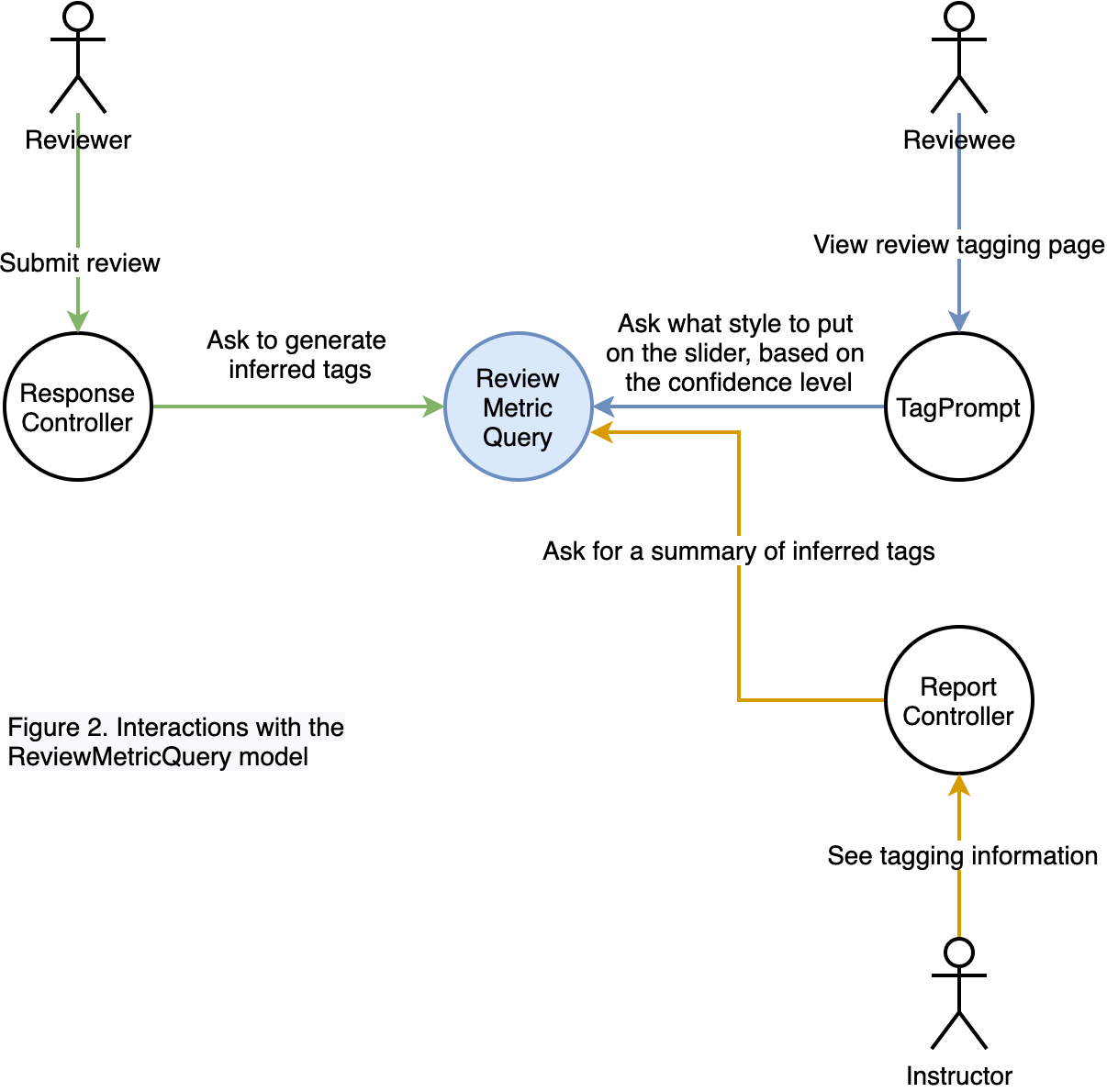

In order to integrate these algorithms into the Expertiza system, we have to build a translator-like model, which we named ReviewMetricsQuery, that converts outputs from external sources into a form that our system can understand and use.

Below we show the control flow diagram to help illustrate the usage of the ReviewMetricsQuery model.

The ReviewMetricsQuery class is closely tied to the peer-review process. It is first called when students have finished their reviews of other students' work and are about to submit them. Before the system marks the reviews as submitted, the ReviewMetricsQuery class intercepts their content and sends it to the Peer Logic web service for predictions. After it receives the predicted results, it caches them in the local Expertiza database and then releases the intercept. Instead of being redirected to the list of reviews, students are presented with an analysis report on the quality of their reviews. They may go back and edit their review comments or confirm the submission, depending on whether they are satisfied with the results displayed to them.
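The two-step flow described above (intercept and cache on submit, then mark as submitted on confirmation) can be sketched in plain Ruby. This is a minimal illustration only: the `SubmissionFlow` class, its method signatures, and the in-memory hashes are hypothetical stand-ins, not the actual Expertiza classes, and the web service is replaced by any callable.

```ruby
# Hypothetical in-memory sketch of the submit/confirm flow; not Expertiza code.
class SubmissionFlow
  attr_reader :cache, :submitted

  def initialize(predictor)
    @predictor = predictor # callable standing in for the Peer Logic web service
    @cache = {}            # stands in for the local database cache
    @submitted = {}        # review_id => true/false
  end

  # Step 1: the student clicks submit. Predictions are fetched and cached,
  # but the review is not yet marked as submitted.
  def request_submit(review_id, comments)
    @cache[review_id] = comments.map { |comment| @predictor.call(comment) }
    @submitted[review_id] = false
    @cache[review_id] # this is what the analysis page would display
  end

  # Step 2: the student confirms after reading the analysis.
  def confirm_submit(review_id)
    raise ArgumentError, "no analysis for review #{review_id}" unless @cache.key?(review_id)

    @submitted[review_id] = true
  end
end

# Stub predictor returning a fixed, made-up prediction for every comment.
stub_service = ->(comment) { { comment: comment, value: 1, confidence: 0.5 } }
flow = SubmissionFlow.new(stub_service)
analysis = flow.request_submit(42, ['Nice tests.', 'Add more comments.'])
puts "Predictions cached: #{analysis.size}"
flow.confirm_submit(42)
puts "Submitted: #{flow.submitted[42]}"
```

Keeping the "submitted" flag separate from the cached predictions is what lets the student return to editing without the review being finalized.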

Every prediction from the web service comes with a confidence level that indicates how confident the algorithm is in that prediction. Whenever a student visits the review tagging page, before rendering any tag, the system consults the ReviewMetricsQuery class to check whether the web service has already determined the value of the tag and whether its confidence level exceeds the preset threshold. If so, the algorithm is confident in its prediction, and the system lightens (grays out) the tag to make it less noticeable. Students doing the tagging can then easily distinguish normal tags from gray-out tags and focus their attention on the normal ones. This is the essence of active learning: query manual input only when it adds to the algorithm's knowledge.
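As a rough illustration of this selection step, the sketch below partitions cached predictions into tags that still need the student's input and tags that can be grayed out. The `InferredTag` struct, the `partition_for_tagging` helper, the signature of `confident?`, and the threshold value of 0.9 are all assumptions made for the example; the project does not state the actual threshold here.

```ruby
# Hypothetical stand-in for a cached web-service prediction; not the Expertiza model.
InferredTag = Struct.new(:answer_id, :value, :confidence_level)

# Assumed threshold for illustration only.
CONFIDENCE_THRESHOLD = 0.9

# A prediction counts as confident when it exists and exceeds the threshold.
def confident?(tag, threshold = CONFIDENCE_THRESHOLD)
  !tag.nil? && tag.confidence_level > threshold
end

# Split tags into those the student should focus on and those to gray out.
def partition_for_tagging(tags, threshold = CONFIDENCE_THRESHOLD)
  grayed_out, needs_input = tags.partition { |tag| confident?(tag, threshold) }
  { needs_input: needs_input, grayed_out: grayed_out }
end

tags = [
  InferredTag.new(1, 1, 0.997),
  InferredTag.new(2, -1, 0.62),
  InferredTag.new(3, 1, 0.91)
]
result = partition_for_tagging(tags)
puts "Ask the student about answers: #{result[:needs_input].map(&:answer_id).inspect}"
puts "Gray out answers: #{result[:grayed_out].map(&:answer_id).inspect}"
```

Raising the threshold grays out fewer tags and asks students for more input; lowering it does the opposite, at the risk of hiding tags the model got wrong.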

These cached data are also used in the instructor's report views, which is why they need to be cached locally. One review consists of about 10 to 20 comments and takes on the order of minutes to process, and a report comprises thousands of such reviews, so querying the web service in real time would take far too long. We keep contact with the web service to a minimum by sending requests only when students decide to submit their reviews. This also keeps the predicted value of each tag up to date with the stored reviews.

Database Design

The only change to the database is the addition of a confidence_level column to the existing answer_tags table, which was originally used to store only tags assigned by students. One can think of the web service's results as a set of tags assigned by an outside tool, each with a confidence level indicating how confident the tool is in that tag. The answer_tags table therefore holds two types of tags: those from students, which have a user_id but no confidence_level, and those inferred by the web service, which have a confidence_level but no user_id. The system can determine the type of a tag by checking which of these two fields has a value.
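The type check described above can be sketched with a plain Ruby struct standing in for a table row. `AnswerTagRow`, `tag_source`, and the `:unknown` label for rows that have both or neither field are hypothetical names chosen for the example; how the real model treats such rows is not specified here.

```ruby
# Hypothetical plain-Ruby stand-in for one row of the answer_tags table.
AnswerTagRow = Struct.new(:user_id, :value, :confidence_level, keyword_init: true)

# Classify a row by which of the two fields is populated.
def tag_source(row)
  has_user = !row.user_id.nil?
  has_confidence = !row.confidence_level.nil?

  if has_user && !has_confidence
    :student   # assigned manually by a student
  elsif has_confidence && !has_user
    :inferred  # predicted by the web service
  else
    :unknown   # both or neither present; handling here is an assumption
  end
end

student_row  = AnswerTagRow.new(user_id: 7513, value: 0, confidence_level: nil)
inferred_row = AnswerTagRow.new(user_id: nil, value: -1, confidence_level: 0.997)

puts tag_source(student_row)
puts tag_source(inferred_row)
```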

| id | answer_id | tag_prompt_deployment_id | user_id | value | confidence_level | created_at | updated_at |
|---|---|---|---|---|---|---|---|
| 1 | 1066685 | 5 | 7513 | 0 | NULL | 2017-11-04 02:32:59 | 2017-11-04 02:32:59 |
| 353916 | 1430431 | 149 | NULL | -1 | 0.99700 | 2020-11-16 01:47:29 | 2020-11-16 01:47:29 |

UI Design

Four pages need to be modified to reflect the new functionality.

Metrics Analysis Page

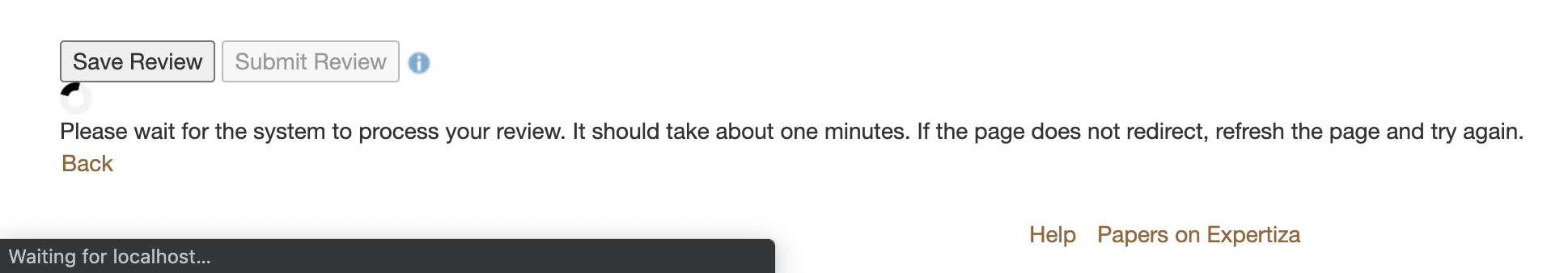

When students click the 'submit' button on the review-giving page, the button is disabled to prevent them from submitting the request multiple times. Repeated submissions would send the same set of comments to the external web service for processing, wasting resources on both sides. To reassure students that the request has been made, we add a loader and a message below the 'submit' button, asking them to wait patiently to avoid overloading the system.

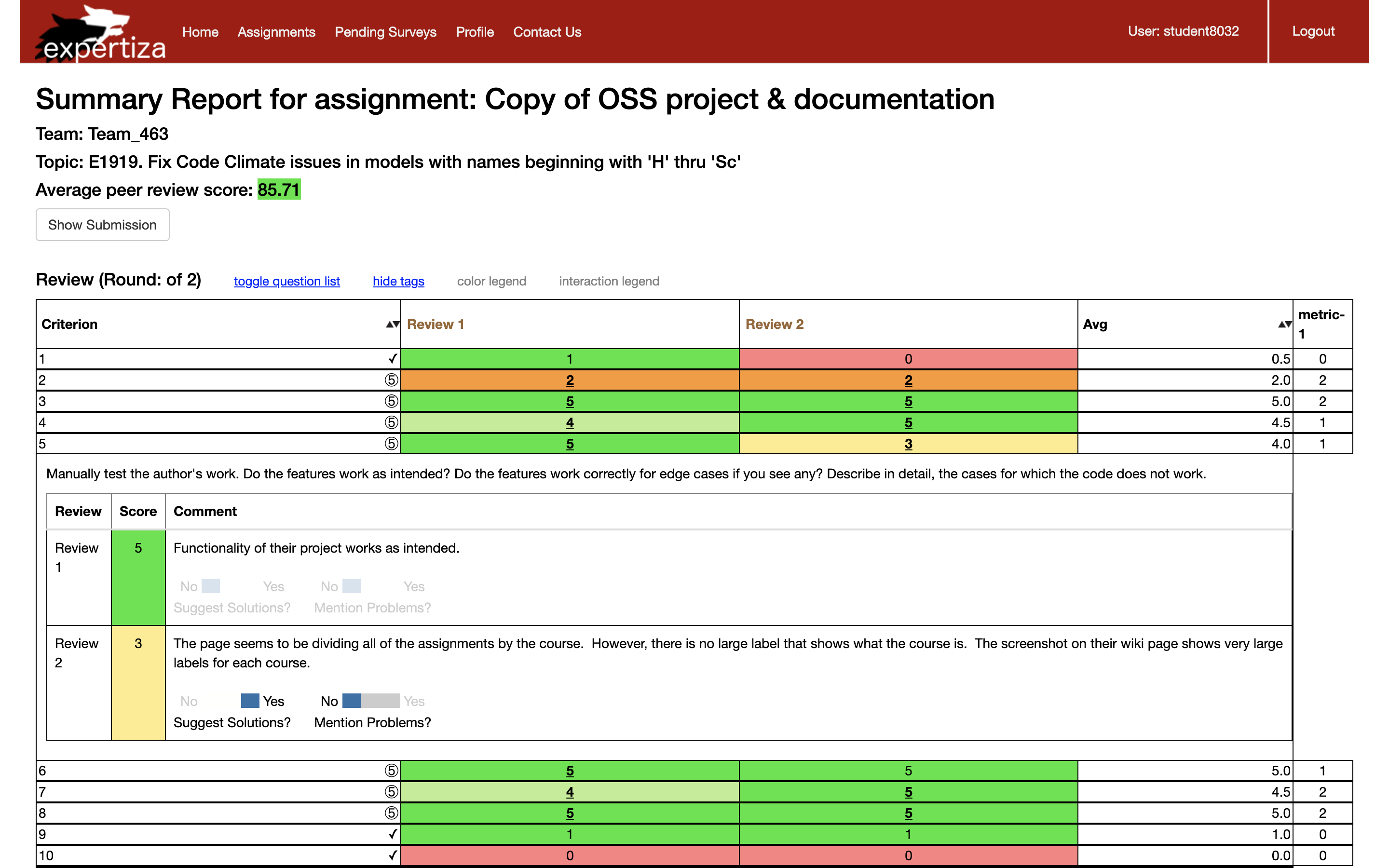

About a minute after students click the 'submit' button, they are redirected to a page that shows the analysis of their submitted reviews. Students can see each of their submitted comments along with the analysis result for each metric. These metrics come from the tag prompt deployments set by the instructor on a per-questionnaire basis. Predictions whose confidence level is under the predefined threshold are not rendered in the report, so students do not see uncertain or inaccurate predictions. When students confirm the submission, they return to the list of reviews to perform other actions.

Review Tagging Page

As the image shows, the slider now takes one of three forms:

- The original form, meaning it needs input from the user.

- The gray-out form, which presents a tag inferred by the web service. A tag in this form is still editable, so students can override inferred tags if they wish.

- The overridden form, which represents a tag whose value was originally assigned by the web service but has been overridden by the user.

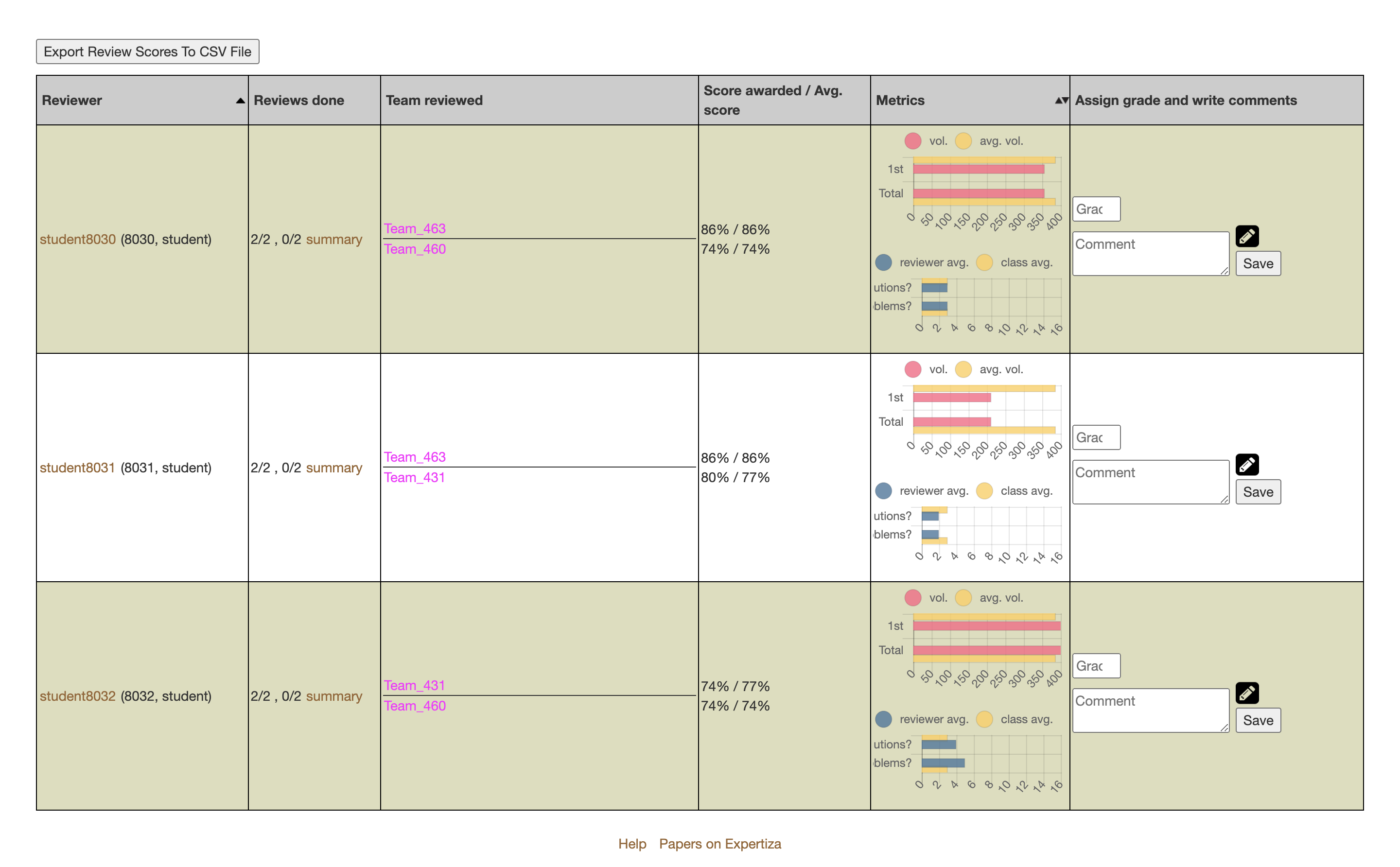
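The choice among the three slider forms can be expressed as a small decision function. The sketch below is an assumption about how the decision could be made from two inputs; the `slider_style` name, its keyword arguments, and the returned symbols are illustrative and do not come from the Expertiza code.

```ruby
# Pick one of the three slider forms.
# user_value:         the value the student assigned, or nil if none
# inferred_confident: true when a confident web-service prediction exists
def slider_style(user_value:, inferred_confident:)
  if inferred_confident && !user_value.nil?
    :overridden # the student replaced a confident inferred value
  elsif inferred_confident
    :gray_out   # show the inferred value, de-emphasized but editable
  else
    :none       # original form: the student's input is needed
  end
end

puts slider_style(user_value: nil, inferred_confident: false)
puts slider_style(user_value: nil, inferred_confident: true)
puts slider_style(user_value: 1, inferred_confident: true)
```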

Review Report Page

In each row representing a student, a metrics chart is added below the existing volume chart. Graders get useful information by looking at the two charts together and can offer more accurate feedback to students. Because of space limitations, the metric names cannot be displayed in full. Graders can hover the cursor over each bar to view its corresponding metric name.

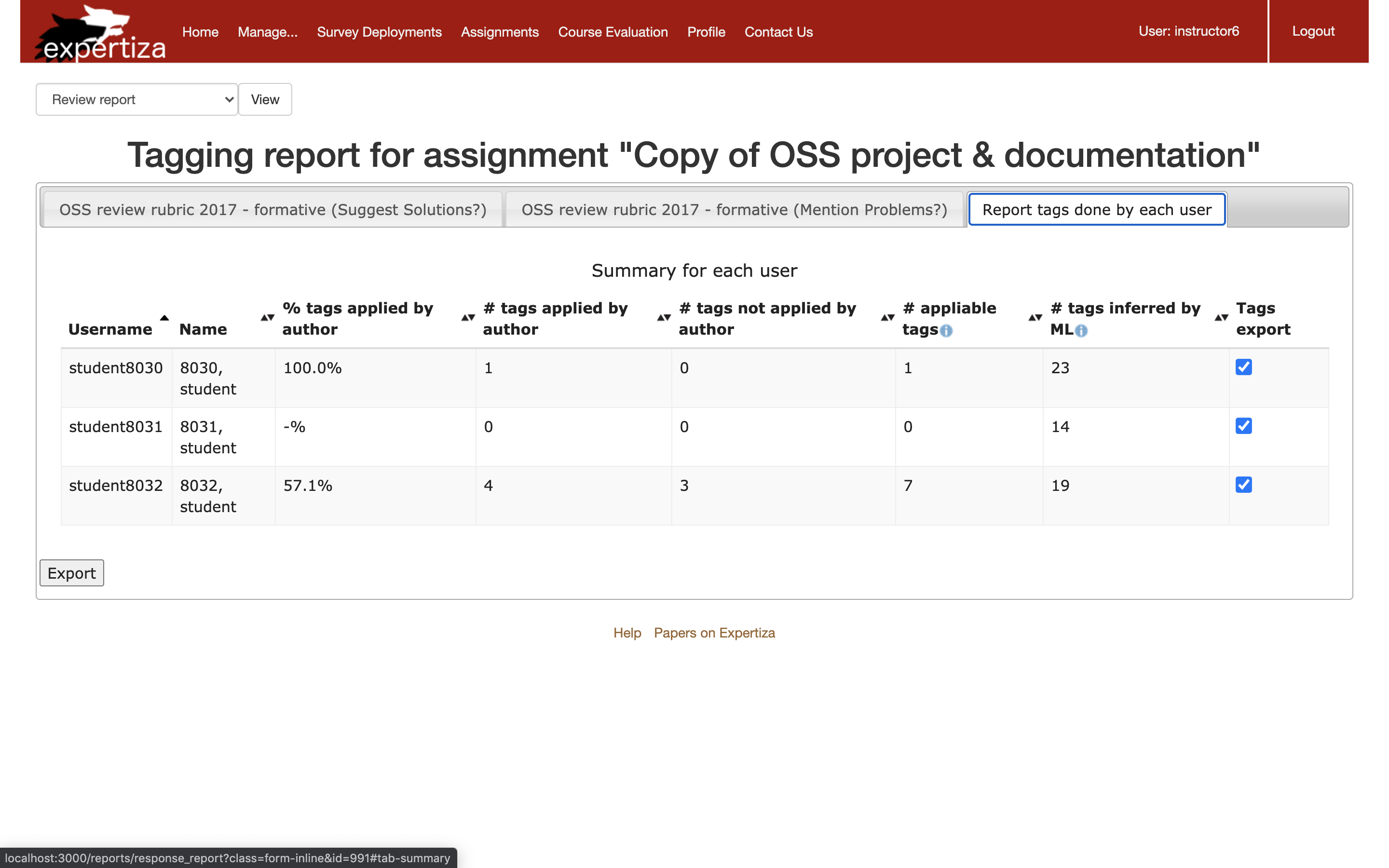

Answer-Tagging Report Page

Changes made to this page include renaming columns and adding a column for the number of inferred tags. Below we explain how each column is calculated.

- % tags applied by author = # tags applied by author / # appliable tags

- # tags applied by author = from # appliable tags, how many are tagged by the author

- # tags not applied by author = # appliable tags - # tags applied by the author

- # appliable tags = # tags whose comment is longer than the length threshold - # tags inferred by ML

- # tags inferred by ML = # tags whose comment is predicted by the machine learning algorithm with high confidence
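The column definitions above can be checked with a short worked example. The function below is a sketch of the arithmetic only; its name, its keyword arguments, the sample numbers, and the guard against division by zero are assumptions made for illustration.

```ruby
# Compute the answer-tagging report columns from three raw counts.
# long_enough:       # tags whose comment is longer than the length threshold
# inferred_by_ml:    # tags predicted by the ML algorithm with high confidence
# applied_by_author: # appliable tags that the author actually tagged
def tagging_report_counts(long_enough:, inferred_by_ml:, applied_by_author:)
  appliable = long_enough - inferred_by_ml
  not_applied = appliable - applied_by_author
  # Guard against division by zero when every tag was inferred (assumption).
  percent_applied = appliable.zero? ? 0.0 : (applied_by_author.to_f / appliable * 100).round(1)

  {
    percent_applied: percent_applied,
    applied: applied_by_author,
    not_applied: not_applied,
    appliable: appliable,
    inferred: inferred_by_ml
  }
end

# Made-up numbers: 120 long-enough tags, 80 inferred, 30 tagged by the author.
report = tagging_report_counts(long_enough: 120, inferred_by_ml: 80, applied_by_author: 30)
puts report.inspect
```

With these sample numbers, 40 tags remain appliable, the author has applied 30 of them (75%), and 10 are left untagged.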

Implementation

Core Changes

app/models/review_metrics_query.rb

- The only model class that is responsible for communications between `MetricsController` and the rest of the Expertiza system where tags are used

- Added `average_number_of_qualifying_comments` method, which returns either the average for one reviewer or the average for the whole class, depending on whether the reviewer is supplied

db/migrate/20200825210644_add_confidence_level_to_answer_tags_table.rb

- Added `confidence_level` column to the `answer_tags` table

Cache Inferred Tags

app/models/answer.rb

- Added `de_tag_comments` method, which removes HTML tags from the submitted review comment
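For illustration, tag removal of this kind can be approximated with a regular expression. The sketch below is not the project's implementation: the signature is assumed, and a regex is a simplification that does not handle every HTML edge case (for example, a `>` inside an attribute value).

```ruby
# Simplified, regex-based tag stripping; an approximation for illustration only.
def de_tag_comments(comment)
  comment.to_s
         .gsub(/<[^>]*>/, ' ') # replace each tag with a space
         .gsub(/\s+/, ' ')     # collapse runs of whitespace
         .strip
end

puts de_tag_comments('<p>Good <b>work</b> on the tests.</p>')
# prints "Good work on the tests."
```

Stripping markup before sending comments to the web service keeps formatting tags from being treated as part of the review text.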

app/models/answer_tag.rb

- Corrected typo (tag_prompt_deployment instead of tag_prompts_deployment)

- Added validation clause that checks the presence of either the `user_id` or the `confidence_level`

app/controllers/metrics_controller.rb

- Created an empty `MetricsController` class so tests could pass

app/controllers/response_controller.rb

- Altered the redirection so students are redirected to the analysis page after they click to submit their reviews

- Added `confirm_submit` method, which marks the review given in the parameter as 'submitted'

app/views/response/analysis.html.erb

- Drafted the analysis page, which shows the web service's prediction for each comment on each metric

app/views/response/response.html.erb

- Added code that disables the "Submit" button after it is clicked

app/assets/stylesheets/response.scss

- Added styles for disabled button and spinning loader

config/routes.rb

- Added a `confirm_submit` route

Show Inferred Tags

app/models/tag_prompt.rb

- Added code to set the style of the slider (none, gray-out, or overridden) when it is about to be rendered

app/assets/javascripts/answer_tags.js

- Controlled the dynamic effect of overriding an inferred tag

app/assets/stylesheets/three_state_toogle.scss

- Added styles for different forms of tags (gray-out and overridden)

Show Summary of Inferred Tags

app/models/tag_prompt_deployment.rb

- Slightly changed how each column in the answer_tagging report is calculated

app/models/vm_user_answer_tagging.rb & app/helpers/report_formatter_helper.rb

- Added one variable that stores the number of tags inferred by ML

app/views/reports/_answer_tagging_report.html.erb

- Renamed columns in the answer_tagging report

- Added a new column to the table named "# tags inferred by ML"

app/views/reports/_review_report.html.erb & app/helpers/review_mapping_helper.rb

- Added an additional bar chart in each row of the review report for metrics

- Fixed redirection bug

Test Plan

RSpec Testing

Since this project touches many parts of the system, some existing tests needed to be fixed. These include

spec/features/peer_review_spec.rb

- The "Submit Review" button no longer redirects students to the list of reviews. We changed the test to click "Save Review" instead of "Submit Review" so the expected behavior could still be tested.

spec/models/tag_prompt_spec.rb

- This spec file tests functionality related to `TagPrompt`. Some tests broke because we incorporated a call to the `confident?` method to determine the slider's style. We fixed these tests by making the `confident?` method always return false, which takes that logic out of these tests.

Two new spec files are written for the new code:

spec/models/review_metrics_query_spec.rb

- Ensure the `cache_ws_results` method calls `MetricsController` with the right parameters and saves the results into the `answer_tags` table.

- Ensure the `inferred_value` method interprets the web service results correctly.

- Ensure the `inferred_confidence` method flips the confidence value for predictions that have a negative meaning.

- Ensure the `confident?`, `confidence()`, and `has?` methods access the right column in the `answer_tags` table.
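One plausible reading of "flipping" the confidence is sketched below. It assumes the web service returns a probability between 0 and 1 that a characteristic is present, that a probability below 0.5 means the characteristic is absent (value -1), and that the confidence in an absent prediction is one minus that probability. The function signatures, the 0.5 cutoff, and the rounding to five decimals are assumptions for illustration, not the project's documented behavior.

```ruby
# Assumed input: probability (0.0..1.0) that the characteristic is present.

# Map the probability to a tag value: 1 for present, -1 for absent.
def inferred_value(probability)
  probability >= 0.5 ? 1 : -1
end

# Confidence in the chosen value; flipped for negative predictions so that
# a probability near 0 yields a high confidence in "absent".
def inferred_confidence(probability)
  confidence = probability >= 0.5 ? probability : 1 - probability
  confidence.round(5)
end

[0.003, 0.8].each do |probability|
  puts "p=#{probability} value=#{inferred_value(probability)} " \
       "confidence=#{inferred_confidence(probability)}"
end
```

Under these assumptions, a probability of 0.003 would produce a row like the second one in the database example: a value of -1 with a confidence close to 0.997.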

UI Testing

The following UI tests were performed to verify that:

- The 'Submit' button is disabled after the student clicks it to prevent multiple queries to the web service.

- The student gets redirected to the analysis page after the web service request completes.

- The student sees the analysis of their review comments on the analysis page.

- The sliders for inferred tags are grayed out.

- The student can override the gray-out tag with a new value, and the slider changes to the overridden style.

- The instructor sees the new bar chart for review metrics.

- The instructor sees the column summarizing inferred tags in the answer-tagging report.

Reference

Yulin Zhang (yzhan114@ncsu.edu)