CSC/ECE 506 Spring 2011/ch6a ep: Difference between revisions

| (22 intermediate revisions by the same user not shown) | |||

| Line 12: | Line 12: | ||

Cache management is impacted by three characteristics of modern processor architectures: multilevel cache hierarchies, multi-core and multiprocessor chip and systems designs, and shared vs. private caches. | Cache management is impacted by three characteristics of modern processor architectures: multilevel cache hierarchies, multi-core and multiprocessor chip and systems designs, and shared vs. private caches. | ||

Multi-level cache hierarchies are used by most contemporary chip designs to enable memory access to keep pace with CPU speeds that are advancing at a more rapid pace. Two-level and three-level cache hierarchies are common. L1 typically ranges from 16-64KB and provide access in 2-4 cycles. L2 typically ranges from 512KB to as much as 8MB and provides access in 6-15 cycles. L3 typically ranges from 4MB to 32MB and provides access to the data it contains within 50-30 cycles.[[#References|[ | Multi-level cache hierarchies are used by most contemporary chip designs to enable memory access to keep pace with CPU speeds that are advancing at a more rapid pace. Two-level and three-level cache hierarchies are common. L1 typically ranges from 16-64KB and provide access in 2-4 cycles. L2 typically ranges from 512KB to as much as 8MB and provides access in 6-15 cycles. L3 typically ranges from 4MB to 32MB and provides access to the data it contains within 50-30 cycles.[[#References|[8]]] | ||

Multi-core and multprocessor designs place additional constraints on cache management by requiring them to consider all processes running concurrently in the cache coherency, replenishment, fetching, and other management functions. | Multi-core and multprocessor designs place additional constraints on cache management by requiring them to consider all processes running concurrently in the cache coherency, replenishment, fetching, and other management functions. | ||

| Line 322: | Line 322: | ||

Note: The 'x #' in the L1 and L2 columns indicates that this cache is for each core. | Note: The 'x #' in the L1 and L2 columns indicates that this cache is for each core. | ||

The next two sections will discuss cache write policies and cache prefetching as techniques to improve the performance of these complex caching architectures by reducing write-miss and read-miss rates. | |||

=Cache Write Policies[[#References|[5]]]= | =Cache Write Policies[[#References|[5]]]= | ||

In section 6.2.3[[#References|[8]]], cache write hit policies and write miss policies were explored. The write hit policies, write-through and write-back, determine when the data written in the local cache is propagated to lower-level memory. As review, write-through writes data to the cache and memory on a write. Write-back writes to cache first | In section 6.2.3[[#References|[8]]], cache write hit policies and write miss policies were explored. The write hit policies, write-through and write-back, determine when the data written in the local cache is propagated to lower-level memory. As review, write-through writes data to the cache and memory on a write. Write-back writes to cache first and to memory only when a flush is required. | ||

The write miss policies covered in the text[[#References|[8]]], write-allocate and no-write-allocate, determine if a memory block is stored in a cache line after the write occurs. Note that since a miss occurred on write, the block is not already in a cache line. | |||

Write-miss policies can also be expressed in terms of write-allocate, fetch-on-write, and write-before-hit. These can be used in combination with the same write-through and write-back hit policies to define the complete cache write policy. Investigating alternative policies such as these is important since write-misses produce on average one-third of all cache misses. | |||

The following discusses each of these policies: | |||

==Fetch-on-Write== | ==Fetch-on-Write== | ||

On a write miss, | On a write miss, the memory block containing the address to be written is fetched from the lower level memory hierarchy before the write proceeds. Note that this is different from write-allocate. Fetch-on-write determines if the block is fetched from memory, and write-allocate determines if the memory block is stored in a cache line. Certain write policies may allocate a line in cache for data that is written by the CPU without retrieving it from memory first. | ||

==No-Fetch-on-Write== | ==No-Fetch-on-Write== | ||

| Line 344: | Line 348: | ||

==Write-Miss Policy Combinations== | ==Write-Miss Policy Combinations== | ||

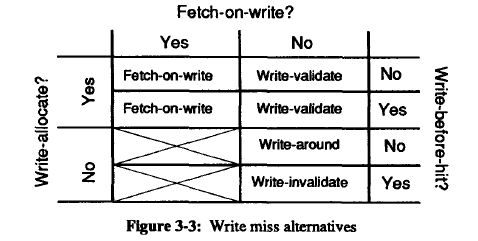

In practice, these policies are used in combination to provide an over-all write policy. Four combinations of these three write miss policies are relevant, | In practice, these policies are used in combination to provide an over-all write policy. Four combinations of these three write miss policies are relevant, as illustrated in the table below: | ||

<center>[[image:policies.jpg]]</center> | <center>[[image:policies.jpg]]</center> | ||

The two combinations of fetch-on-write and no-write-allocate are blocked out in the above diagram. They are considered unproductive since the data is fetched from lower level memory, but is not stored in the cache. Temporal and | The two combinations of fetch-on-write and no-write-allocate are blocked out in the above diagram. They are considered unproductive since the data is fetched from lower level memory, but is not stored in the cache. Temporal and spacial locality suggest that this data will be used again, and will therefore need to be retrieved a second time. | ||

Three combinations named write-validate, write-around, and write-invalidate all use no-fetch-on-write. They result in 'eliminated misses' when compared to a fetch-on-write policy. In general, this will yield better cache performance if the overhead to manage the policy remains low. | |||

The following discusses each of these combinations: | |||

===Write-Validate=== | ===Write-Validate=== | ||

The combination of no-fetch-on-write and write-allocation is referred to as 'write-validate'. It writes the data into the cache line without fetching the corresponding block from memory first. The assumption is that the block will be written to memory at a later time. It requires additional overhead, or dirty bits, to track what bytes have been | The combination of no-fetch-on-write and write-allocation is referred to as 'write-validate'. It writes the data into the cache line without fetching the corresponding block from memory first. The assumption is that the block will be written to memory at a later time. It requires additional overhead, or dirty bits, to track what bytes have been written into that cache line and which bytes were not written. Lower level memories also must be able can process only the changed portions of these lines. Otherwise, when the line is flushed to memory, the unwritten bytes may overwrite valid data. | ||

The no-fetch-on-write has the advantage of avoiding an immediate bus transaction to retrieve the block from memory. For a multiprocessor with a cache coherency model, though, a bus transaction will be required to get exclusive ownership of the block. While this appears to negate the advantages of no-fetch-on-write, the process is able to continue other tasks while this transaction is occurring, including rewriting to the same cache location. | |||

Tests by the author demonstrated more than a 90% reduction in write misses when compared to fetch-on-write policies. | |||

===Write-Around=== | ===Write-Around=== | ||

The combination of no-fetch-on-write, no-write-allocate, and no-write-before-hit is referred to as a 'write-around' | The combination of no-fetch-on-write, no-write-allocate, and no-write-before-hit is referred to as a 'write-around'. It bypasses the higher level cache, writes directly to the lower level memory, and leaves the existing block line in the cache unaltered on a hit or a miss. This strategy shows performance improvements when the data that is written will not be reread in the near future. Since we are writing before a hit is detected, the cache is written around for both hits and misses. | ||

The author notes that in only but a few cases write-around performs worse than write-validate policies. Most applications tend to reread what they have recently written. Using a write-around policy, this would result in a cache miss and a read from lower-level memory. With write-validate, the data would be in cache. | |||

Tests by the author demonstrated a 40-70% reduction in write misses when compared to fetch-on-write policies. | |||

===Write-Invalidate=== | ===Write-Invalidate=== | ||

The combination of write-before-hit, no-fetch-on-write, and no-write-allocate is referred to as 'write-invalidate' because the line is invalidated on the miss. | The combination of write-before-hit, no-fetch-on-write, and no-write-allocate is referred to as 'write-invalidate' because the line is invalidated on the miss. The copy that exists in lower level memory after the write miss differs from the one in the cache. For write hits, though, the data is simply written into the cache using the cache hit policy. Thus, for hits, the cache is not written around. | ||

Of these three combinations, write validate performed the worse. The author notes, though, that it does perform better than fetch-on-write and is easy to implement. Tests by the author demonstrated a 35-50% reduction in write misses when compared to fetch-on-write policies. | |||

===Fetch-on-write=== | ===Fetch-on-write=== | ||

When used with write-allocate, fetch-on-write stores the block and processes the write to cache as expected. | When used with write-allocate, fetch-on-write stores the block and processes the write to cache as expected. This policy was used as the base-line for determining the performance of the other three policy combinations. In general, all three previous combinations exhibited fewer misses than this policy. | ||

=Prefetching in Contemporary Parallel Processors= | =Prefetching in Contemporary Parallel Processors= | ||

Prefetching is a technique that can be used in addition to write-hit and write-miss policies to improved the utilization of the cache. Microprocessors, such as those listed in Table 1, support prefetching logic directly in the chip. | Prefetching is a technique that can be used in addition to write-hit and write-miss policies to improved the utilization of the cache. Microprocessors, such as those listed in Table 1, support prefetching logic directly in the chip. | ||

Prefetching is the process of retrieving instructions or data from memory before the process explicitly requests it. Instruction prefetching is commonly used by single and multiprocessors to reduce process wait states.[[#References|[17]]] Prefetching of data may also be used, though, to pre-populate caches with data that is likely going to be required by the processors in the near term. If the data requirements are anticipated correctly, the requests to memory will result in a greater | Prefetching is the process of retrieving instructions or data from memory before the process explicitly requests it. Instruction prefetching is commonly used by single and multiprocessors to reduce process wait states.[[#References|[17]]] Prefetching of data may also be used, though, to pre-populate caches with data that is likely going to be required by the processors in the near term. If the data requirements are anticipated correctly, the requests to memory will result in a greater cache hit rates and, therefore, reduce overall memory access time. If the prefetcher guesses wrong, bus traffic can increase unnecessarily, more relevant data can be flushed from caches, and miss rates can increase. | ||

Prefetching algorithms can leverage both temporal and spacial locality in making these decisions. For example, streaming and sequential access applications often process adjacent memory locations in subsequent tasks. | Prefetching algorithms can leverage both temporal and spacial locality in making these decisions. For example, streaming and sequential access applications often process adjacent memory locations in subsequent tasks. | ||

| Line 378: | Line 394: | ||

The Data Cache Unit prefetcher is a streaming prefetcher for L1 caches that detects ascending access to data that has been loaded very recently. The processor assumes that this ascending access will continue, and prefetches the next line. | The Data Cache Unit prefetcher is a streaming prefetcher for L1 caches that detects ascending access to data that has been loaded very recently. The processor assumes that this ascending access will continue, and prefetches the next line. | ||

The data prefetch logic (DPL) maintains two arrays to track the recent accesses to memory: one for the upstreams that has 12 entries, and one for downstreams that has 4 entires. As pages are accessed, their | The data prefetch logic (DPL) maintains two arrays to track the recent accesses to memory: one for the upstreams that has 12 entries, and one for downstreams that has 4 entires. As pages are accessed, their addresses are tracked in these arrays. When the DPL detects an access to a page that is sequential to an existing entry, it assumes this sequential access will continue, and prefetches the next cache line from memory. | ||

== AMD [[#References|[7]]]== | == AMD [[#References|[7]]]== | ||

Latest revision as of 04:23, 8 March 2011

INTRODUCTION TO MEMORY HIERARCHY ORGANIZATION

Write-Miss Policies and Prefetching

Write miss policies and prefetching are two strategies that are used by multiprocessors to achieve optimal performance for memory accesses. Write miss policies can help eliminate write misses and therefore reduce bus traffic. This reduces the wait-time of all processors sharing the bus. Prefetching assures that a CPU has data blocks in cache to be read when processing large data files and streaming data. When CPUs or cores share a cache, prefetched data in a shared cache is available to all processes on those cores processing the data.

This article begins by highlighting the variety of multicore processors on the market today that have hierarchal memory structures and shared caches. It then explores the write miss policies and prefetching techniques that these multiprocessors can use to take advantage of these architectures.

Recent Architectures and their Cache Characteristics

Cache management is impacted by three characteristics of modern processor architectures: multilevel cache hierarchies, multi-core and multiprocessor chip and systems designs, and shared vs. private caches.

Multi-level cache hierarchies are used by most contemporary chip designs to enable memory access to keep pace with CPU speeds that are advancing at a more rapid pace. Two-level and three-level cache hierarchies are common. L1 typically ranges from 16-64KB and provide access in 2-4 cycles. L2 typically ranges from 512KB to as much as 8MB and provides access in 6-15 cycles. L3 typically ranges from 4MB to 32MB and provides access to the data it contains within 50-30 cycles.[8]

Multi-core and multprocessor designs place additional constraints on cache management by requiring them to consider all processes running concurrently in the cache coherency, replenishment, fetching, and other management functions.

In multiprocessors with multiple levels of cache, certain cache levels may be shared by processors and others private to each processor. Typically, lower-level caches are private and high-level caches may or may not be shared. Notice that none of the examples in the below have a shared L1 cache. Cache management functions must consider both shared and private caches when reading and writing data from memory.

Below is a table that illustrates the variety with which these characteristics have been combined in processors from four manufacturers over the past 6 years.

| Table 1: Recent Architectures and their Cache Characteristics [1][2][3][4][9][10][11][12][13][14][15][16] | ||||||

|---|---|---|---|---|---|---|

| Company | Processor | Cores | L1 Cache | L2 Cache | L3 Cache | Released |

| AMD | Athlon 64 X2 | 2 | 128 KB x 2 | 512 KB x 2 1MB x 2 | 2005 | |

| AMD | Athlon 64 FX | 2 | 128 KB x 2 | 1MB x 2 | 2006 | |

| AMD | Athlon X2 | 2 | 128 KB x 2 | 512 KB x 2 | 2007 | |

| AMD | Phenom X3 | 3 | 128 KB x 3 | 512 KB x 3 | 2 MB | 2008 |

| AMD | Phenom X4 | 4 | 128 KB x 4 | 512 KB x 4 | 2 MB | 2007 |

| AMD | Athlon II X2 | 2 | 128 KB x 2 | 512 KB x 2 1MB x 2 | 2009 | |

| AMD | Athlon II X3 | 3 | 128 KB x 3 | 512 KB x 3 | 2009 | |

| AMD | Athlon II X4 | 4 | 128 KB x 4 | 512 KB x 4 | 2009 | |

| AMD | Phenom II X2 | 2 | 128 KB x 2 | 512 KB x 2 | 6 MB | 2009 |

| AMD | Phenom II X3 | 3 | 128 KB x 3 | 512 KB x 3 | 6 MB | 2009 |

| AMD | Phenom II X4 | 4 | 128 KB x 4 | 512 KB x 4 | 4-6 MB | 2009 |

| AMD | Phenom II X6 | 6 | 128 KB x 6 | 512 KB x 4 | 6 MB | 2010 |

| Intel | Pentium D | 2 | 12+16KB x 2 | 2 MB | 2005 | |

| Intel | Celeron E | 2 | 32 KB x2 | 512 -1 MB | 2006 | |

| Intel | Pentium D | 2 | 32 KB x2 | 1 MB | 2006 | |

| Intel | Core 2 Duo | 2 | 32 KB x2 | 2 - 4 MB | 2006 | |

| Intel | Core 2 Quad | 4 | 32 KB x2 | 8 MB | 2007 | |

| Intel | Atom 330 | 2 | 32+24KB x 2 | 512 MB x 2 | 2008 | |

| Intel | Pentium E | 2 | 32 KB x 2 | 2 MB | 2007 | |

| Intel | Core 2 Duo E | 2 | 32 KB x 2 | 3-6 MB | 2008 | |

| Intel | Core 2 Quad | 4 | 32 KB x 4 | 2-6 MB x 2 | 2008 | |

| Intel | Core i3 | 2 | 32+32 KB x 2 | 256 KB x2 | 4 MB | 2010 |

| Intel | Core i5 - 6 Series | 2 | 32+32 KB x 2 | 256 KB x2 | 4 MB | 2010 |

| Intel | Core i5 - 7 Series | 4 | 32+32 KB x 4 | 256 KB x 4 | 8 MB | 2010 |

| Intel | Core i5 - 2400 Series Core i5 - 2500 Series | 4 | 32+32 KB x 4 | 256 KB x 4 | 6 MB | 2010 |

| Intel | Core i7 - 8 Series | 4 | 32+32 KB x 4 | 256 KB x 4 | 8 MB | 2010 |

| Intel | Core i7 - 9 Series | 4 | 32+32 KB x 4 | 256 KB x 4 | 8 MB | 2010 |

| Intel | Core i7 - 970 | 6 | 32+32 KB x 6 | 256 KB x 6 | 12 MB | 2010 |

| Sun | UltraSPARC T1 | 8 | 8 K x 8 Inst. 16 K x 8 Data | 3 MB | 2005 | |

| Sun | SPARC64 VI | 2 | 128 K x 2 Inst. 128 K x 2 Data | 5 MB | 2007 | |

| Sun | UltraSPARC T2 | 8 | 8 K x 4 Inst. 16 K x 4 Data | 4 MB | 2007 | |

| Sun | SPARC64 VII | 4 | 64 K x 4 Inst. 64 K x 4 Data | 6 MB | 2008 | |

| Sun | SPARC T3 | 8 | 8 K x 8 Inst. 16 K x 8 Data | 6 MB | 2010 | |

| Sun | SPARC64 VII+ | 2 | 128 K x 2 Inst. 128 K x 2 Data | 12 MB | 2010 | |

| IBM | Power5 | 2 | 64 K x 2 Inst. 64 K x 2 Data | 4 MB x 2 | 32 MB | 2004 |

| IBM | Power7 | 4, 6, or 8 | 32+32 KB x C | 256 kB x C | 4 - 32 MB x C | 2010 |

Note: The 'x #' in the L1 and L2 columns indicates that this cache is for each core.

The next two sections will discuss cache write policies and cache prefetching as techniques to improve the performance of these complex caching architectures by reducing write-miss and read-miss rates.

Cache Write Policies[5]

In section 6.2.3[8], cache write hit policies and write miss policies were explored. The write hit policies, write-through and write-back, determine when the data written in the local cache is propagated to lower-level memory. As review, write-through writes data to the cache and memory on a write. Write-back writes to cache first and to memory only when a flush is required.

The write miss policies covered in the text[8], write-allocate and no-write-allocate, determine if a memory block is stored in a cache line after the write occurs. Note that since a miss occurred on write, the block is not already in a cache line.

Write-miss policies can also be expressed in terms of write-allocate, fetch-on-write, and write-before-hit. These can be used in combination with the same write-through and write-back hit policies to define the complete cache write policy. Investigating alternative policies such as these is important since write-misses produce on average one-third of all cache misses.

The following discusses each of these policies:

Fetch-on-Write

On a write miss, the memory block containing the address to be written is fetched from the lower level memory hierarchy before the write proceeds. Note that this is different from write-allocate. Fetch-on-write determines if the block is fetched from memory, and write-allocate determines if the memory block is stored in a cache line. Certain write policies may allocate a line in cache for data that is written by the CPU without retrieving it from memory first.

No-Fetch-on-Write

On a write miss, the memory block is not fetched first from the lower level memory hierarchy. Therefore, the write can proceed with out having to wait for the memory block to be returned.

Write-Before-Hit

On a write, the write proceeds before the cache determines if a hit or miss occurred. In this scenario, the tag and the data can be written simultaneously, but it incurs an immediate bus transaction for each write by the processor.

No-Write-Before-Hit

On a write, the write waits until the cache determines if the block being written to is in the cache or not. This may avoid a bus transaction by allowing the processor to write to the cache multiple times before the cache line is flushed to memory.

Write-Miss Policy Combinations

In practice, these policies are used in combination to provide an over-all write policy. Four combinations of these three write miss policies are relevant, as illustrated in the table below:

The two combinations of fetch-on-write and no-write-allocate are blocked out in the above diagram. They are considered unproductive since the data is fetched from lower level memory, but is not stored in the cache. Temporal and spacial locality suggest that this data will be used again, and will therefore need to be retrieved a second time.

Three combinations named write-validate, write-around, and write-invalidate all use no-fetch-on-write. They result in 'eliminated misses' when compared to a fetch-on-write policy. In general, this will yield better cache performance if the overhead to manage the policy remains low.

The following discusses each of these combinations:

Write-Validate

The combination of no-fetch-on-write and write-allocation is referred to as 'write-validate'. It writes the data into the cache line without fetching the corresponding block from memory first. The assumption is that the block will be written to memory at a later time. It requires additional overhead, or dirty bits, to track what bytes have been written into that cache line and which bytes were not written. Lower level memories also must be able can process only the changed portions of these lines. Otherwise, when the line is flushed to memory, the unwritten bytes may overwrite valid data.

The no-fetch-on-write has the advantage of avoiding an immediate bus transaction to retrieve the block from memory. For a multiprocessor with a cache coherency model, though, a bus transaction will be required to get exclusive ownership of the block. While this appears to negate the advantages of no-fetch-on-write, the process is able to continue other tasks while this transaction is occurring, including rewriting to the same cache location.

Tests by the author demonstrated more than a 90% reduction in write misses when compared to fetch-on-write policies.

Write-Around

The combination of no-fetch-on-write, no-write-allocate, and no-write-before-hit is referred to as a 'write-around'. It bypasses the higher level cache, writes directly to the lower level memory, and leaves the existing block line in the cache unaltered on a hit or a miss. This strategy shows performance improvements when the data that is written will not be reread in the near future. Since we are writing before a hit is detected, the cache is written around for both hits and misses.

The author notes that in only but a few cases write-around performs worse than write-validate policies. Most applications tend to reread what they have recently written. Using a write-around policy, this would result in a cache miss and a read from lower-level memory. With write-validate, the data would be in cache.

Tests by the author demonstrated a 40-70% reduction in write misses when compared to fetch-on-write policies.

Write-Invalidate

The combination of write-before-hit, no-fetch-on-write, and no-write-allocate is referred to as 'write-invalidate' because the line is invalidated on the miss. The copy that exists in lower level memory after the write miss differs from the one in the cache. For write hits, though, the data is simply written into the cache using the cache hit policy. Thus, for hits, the cache is not written around.

Of these three combinations, write validate performed the worse. The author notes, though, that it does perform better than fetch-on-write and is easy to implement. Tests by the author demonstrated a 35-50% reduction in write misses when compared to fetch-on-write policies.

Fetch-on-write

When used with write-allocate, fetch-on-write stores the block and processes the write to cache as expected. This policy was used as the base-line for determining the performance of the other three policy combinations. In general, all three previous combinations exhibited fewer misses than this policy.

Prefetching in Contemporary Parallel Processors

Prefetching is a technique that can be used in addition to write-hit and write-miss policies to improved the utilization of the cache. Microprocessors, such as those listed in Table 1, support prefetching logic directly in the chip.

Prefetching is the process of retrieving instructions or data from memory before the process explicitly requests it. Instruction prefetching is commonly used by single and multiprocessors to reduce process wait states.[17] Prefetching of data may also be used, though, to pre-populate caches with data that is likely going to be required by the processors in the near term. If the data requirements are anticipated correctly, the requests to memory will result in a greater cache hit rates and, therefore, reduce overall memory access time. If the prefetcher guesses wrong, bus traffic can increase unnecessarily, more relevant data can be flushed from caches, and miss rates can increase.

Prefetching algorithms can leverage both temporal and spacial locality in making these decisions. For example, streaming and sequential access applications often process adjacent memory locations in subsequent tasks.

Following are two examples of processors that support prefetching directly in the chip design:

Intel Core i7 [6]

The Intel 64 Architecture, including the Intel Core i7, includes both instruction and data prefetching directly on the chip.

The Data Cache Unit prefetcher is a streaming prefetcher for L1 caches that detects ascending access to data that has been loaded very recently. The processor assumes that this ascending access will continue, and prefetches the next line.

The data prefetch logic (DPL) maintains two arrays to track the recent accesses to memory: one for the upstreams that has 12 entries, and one for downstreams that has 4 entires. As pages are accessed, their addresses are tracked in these arrays. When the DPL detects an access to a page that is sequential to an existing entry, it assumes this sequential access will continue, and prefetches the next cache line from memory.

AMD [7]

The AMD Family 10h processors use a stream-detection strategy similar to the Intel process described above to trigger prefetching of the next sequential memory location into the L1 cache. (Previous AMD processors fetched into the L2 cache, which introduced the access latency of the L2 cache and hindered performance.)

The AMD Family 10h can prefetch more than just the next sequential block when a stream is detected, though. When this 'unit-stride' prefetcher detects misses to sequential blocks, it can trigger a preset number of prefetch requests from memory. For example, if the preset value is two, the next two blocks of memory will be prefetched when a sequential access is detected. Subsequent detection of misses of sequential blocks may only prefetch a single block. This maintains the 'unit-stride' of two blocks in the cache ahead of the next anticipated read.

AMD contends that this is beneficial when processing large data sets which often process sequential data and process all the data in the stream. In these cases, a larger unit-stride populates the cache with blocks that will ultimately be processed by the CPU. AMD suggests that the optimal number of blocks to prefetch is between 4 and 8, and that fetching too many or fetching too soon has impaired performance in empirical testing.

The AMD Family 10h also includes 'Adaptive Prefetching', a hardware optimization that triggers prefetching when the demand stream catches up to the prefetch stream. In this case, the unit-stride is increased to assure that the prefetcher is fetching at a rate sufficient enough to continuously provide data to the processor.

References

[1]: http://www.techarp.com/showarticle.aspx?artno=337

[2]: http://en.wikipedia.org/wiki/SPARC

[3]: http://en.wikipedia.org/wiki/POWER7

[4]: http://en.wikipedia.org/wiki/POWER5

[5]: “Cache write policies and performance,” Norman Jouppi, Proc. 20th International Symposium on Computer Architecture (ACM Computer Architecture News 21:2), May 1993, pp. 191–201. http://www.hpl.hp.com/techreports/Compaq-DEC/WRL-91-12.pdf

[6]: "Intel® 64 and IA-32 Architectures Optimization Reference Manual", Intel Corporation, 1997-2011. http://www.intel.com/Assets/PDF/manual/248966.pdf

[7]: "Software Optimization Guide for AMD Family 10h and 12h Processors", Advanced Micro Devices, Inc., 2006–2010. http://support.amd.com/us/Processor_TechDocs/40546.pdf

[8]: Yan Solihin, Fundamentals of Parallel Computer Architecture (Solihin Publishing and Consulting, LLC, 2008-2009), p. 151

[9]: http://en.wikipedia.org/wiki/AMD_Phenom

[10]: http://en.wikipedia.org/wiki/Phenom_II

[11]: http://en.wikipedia.org/wiki/Athlon_II

[12]: http://en.wikipedia.org/wiki/List_of_AMD_Athlon_64_microprocessors

[13]: http://en.wikipedia.org/wiki/List_of_AMD_Athlon_X2_microprocessors

[14]: http://en.wikipedia.org/wiki/List_of_Intel_Core_i5_microprocessors

[15]: http://en.wikipedia.org/wiki/List_of_Intel_Core_i3_microprocessors

[16]: http://www.intel.com/pressroom/kits/quickrefyr.htm

[17]: http://en.wikipedia.org/wiki/Instruction_prefetch