CSC/ECE 506 Spring 2010/ch 2 maf: Difference between revisions

No edit summary |

No edit summary |

||

| (96 intermediate revisions by 3 users not shown) | |||

| Line 1: | Line 1: | ||

=Supplement to Chapter 2: The Data Parallel Programming Model= | |||

Chapter 2 of [[#References | Solihin (2008)]] covers the shared memory and message passing parallel programming models. However, it does not address the [[#Definitions | ''data parallel'']] model, another commonly recognized parallel programming model covered in other treatments like [[#References | Foster (1995)]] and [[#References | Culler (1999)]]. Whereas the shared memory and message passing models are often present as competing models, the data parallel model addresses fundamentally different programming concerns and can therefore be used in conjunction with either. The goal of this supplement is to provide a treatment of the data parallel model which complements Chapter 2 of [[#References | Solihin (2008)]]. The [[#Definitions | ''task parallel'']] model will also be introduced briefly as a point of contrast. | |||

=Overview= | |||

Whereas the shared memory and message passing models focus on how parallel tasks access common data, the [[#Definitions | ''data parallel'']] model focuses on how to divide up work into parallel tasks. Data parallel algorithms exploit parallelism by dividing a problem into a number of identical tasks which execute on different subsets of common data. An example of a data parallel code can be seen in Code 2.5 from [[#References | Solihin (2008)]] which is reproduced below. It has been annotated with comments identifying the region of the code which is data parallel. | |||

// Data parallel code, adapted from [[#References|Solihin (2008), p. 27.]] | |||

|+''' | |||

id = getmyid(); // Assume id = 0 for thread 0, id = 1 for thread 1 | |||

local_iter = 4; | |||

start_iter = id * local_iter; | |||

end_iter = start_iter + local_iter; | |||

if (id == 0) | |||

send_msg(P1, b[4..7], c[4..7]); | |||

else | |||

recv_msg(P0, b[4..7], c[4..7]); | |||

// Begin data parallel section | |||

for (i = start_iter; i < end_iter; i++) | |||

a[i] = b[i] + c[i]; | |||

local_sum = 0; | |||

for (i = start_iter; i < end_iter; i++) | |||

if (a[i] > 0) | |||

local_sum = local_sum + a[i]; | |||

// End data parallel section | |||

if (id == 0) | |||

{ | |||

recv_msg(P1, &local_sum1); | |||

sum = local_sum + local_sum1; | |||

Print sum; | |||

} | |||

else | |||

send_msg(P0, local_sum); | |||

In the code above, the three 8 element arrays are each divided into two 4 element chunks. In the data parallel section, the code executed by the two threads is identical, but each thread operates on a different chunk of data. | |||

[[#References | Hillis (1986)]] points out that a major benefit of data parallel algorithms is that they easily scale to take advantage of additional processing elements simply by dividing the data into smaller chunks. [[#References | Haveraaen (2000)]] also notes that data parallel codes typically bear a strong resemblance to sequential codes, making them easier to read and write. Comparison of the data parallel section of code identified above with the sequential Code 2.3 of [[#References | Solihin (2008)]], which is reproduced below, supports this assertion. The only differences between the two codes are the start and end indices and that, in the data parallel example, the variable sum is replaced by a private variable. Structurally the two codes are identical. | |||

// Sequential code, from [[#References|Solihin (2008), p. 25.]] | |||

for (i = 0; i < 8; i++) | |||

a[i] = b[i] + c[i]; | |||

sum = 0; | |||

for (i = 0; i < 8; i++) | |||

if (a[i] > 0) | |||

sum = sum + a[i]; | |||

Print sum; | |||

== Example of Data Parallel Programing Model == | |||

This section shows a simple example adapted from Solihin textbook (pp. 24 - 27) that illustrates the data-parallel programming model. Each of the codes below are written in pseudo-code style. | |||

Suppose we want to perform the following task on an array <code>a</code>: updating each element of <code>a</code> by the product of itself and its index, and adding together the elements of <code>a</code> into the variable <code>sum</code>. The corresponding code is shown below. | |||

// simple sequential task | |||

sum = 0; | |||

'''for''' (i = 0; i < a.length; i++) | |||

{ | |||

a[i] = a[i] * i; | |||

sum = sum + a[i]; | |||

} | |||

When we orchestrate the task using the data-parallel programming model, the program can be divided into two parts. The first part performs the same operations on separate elements of the array for each processing element (sometimes referred to as PE or pe), and the second part reorganizes data among all processing elements (In our example data reorganization is summing up values across different processing elements). Since data-parallel programming model only defines the overall effects of parallel steps, the second part can be accomplished either through shared memory or message passing. The three code fragments below are examples for the first part of the program, shared-memory version of the second part, and message passing for the second part, respectively. | |||

// data parallel programming: let each PE perform the same task on different pieces of distributed data | |||

pe_id = getid(); | |||

my_sum = 0; | |||

'''for''' (i = pe_id; i < a.length; i += number_of_pe) //separate elements of the array are assigned to each PE | |||

{ | |||

a[i] = a[i] * i; | |||

my_sum = my_sum + a[i]; //all PEs accumulate elements assigned to them into local variable my_sum | |||

} | |||

In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. For instance, if three processing elements are used then one processing element would start at i = 0, one would start at i = 1, and the last would start at i = 2. Since there are three processing elements then the index of the array for each will increase by three on each iteration until the task is complete (note that in our example elements assigned to each PE are interleaved instead of continuous). If the length of the array is a multiple of three then each processing element takes the same amount of time to execute its portion of the task. | |||

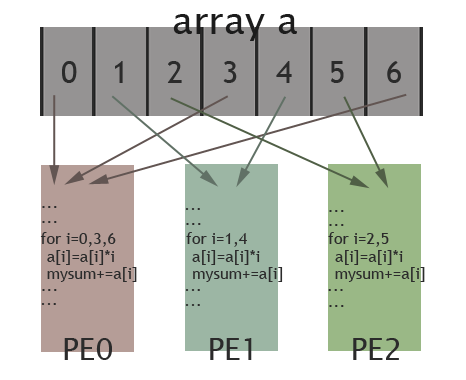

The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes (0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved. | |||

[[Image:506wiki1.png|frame|center|150px|Illustration of data parallel programming(adapted from [http://computing.llnl.gov/tutorials/parallel_comp/#ModelsData Introduction to Parallel Computing])]] | |||

==Example of Task Parallel Programming Model== | |||

The logical opposite of data parallel is [[#Definitions | ''task parallel,'']] in which a number of distinct tasks operate on common data. An example of a task parallel code which is functionally equivalent to the sequential and data parallel codes given above follows below. | |||

// Task parallel code. | |||

int id = getmyid(); // assume id = 0 for thread 0, id = 1 for thread 1 | |||

if (id == 0) | |||

{ | |||

for (i = 0; i < 8; i++) | |||

{ | |||

a[i] = b[i] + c[i]; | |||

send_msg(P1, a[i]); | |||

} | |||

} | |||

else | |||

{ | |||

sum = 0; | |||

for (i = 0; i < 8; i++) | |||

{ | |||

recv_msg(P0, a[i]); | |||

if (a[i] > 0) | |||

sum = sum + a[i]; | |||

} | |||

Print sum; | |||

} | |||

In the code above, work is divided into two parallel tasks. The first performs the element-wise addition of arrays ''b'' and ''c'' and stores the result in ''a.'' The other sums the elements of ''a.'' These tasks both operate on all elements of ''a'' (rather than on separate chunks), and the code executed by each thread is different (rather than identical). | |||

Since each parallel task is unique, a major limitation of task parallel algorithms is that the maximum degree of parallelism attainable is limited to the number of tasks that have been formulated. This is in contrast to data parallel algorithms, which can be scaled easily to take advantage of an arbitrary number of processing elements. In addition, unique tasks are likely to have significantly different run times, making it more challenging to balance load across processors. [[#References | Haveraaen (2000)]] also notes that task parallel algorithms are inherently more complex, requiring a greater degree of communication and synchronization. In the task parallel code above, after thread 0 computes an element of ''a'' it must send it to thread 1. To support this, sends and receives occur every iteration of the two loops, resulting in a total of 8 messages being sent between the threads. In contrast, the data parallel code sends only 2 messages, one at the beginning and one at the end. The table below summarizes the key differences between data parallel and task parallel programming models. | |||

{| class="wikitable" border="1" align="center" | |||

|+ '''Comparison between data parallel and task parallel programming models.''' | |||

|- | |- | ||

! Aspects | ! Aspects | ||

! Data Parallel | ! Data Parallel | ||

! Task Parallel | |||

|- | |- | ||

| | | Decomposition | ||

| | | Partition data into subsets | ||

| | | Partition program into subtasks | ||

|- | |- | ||

| | | Parallel tasks | ||

| | | Identical | ||

| | | Unique | ||

|- | |- | ||

| | | Degree of parallelism | ||

| | | Scales easily | ||

| Fixed | |||

| | |||

|- | |- | ||

| | | Load balancing | ||

| | | Easier | ||

| | | Harder | ||

|- | |- | ||

| | | Communication overhead | ||

| | | Lower | ||

| Higher | |||

| | |||

|} | |} | ||

=== | =History of Parallel Programming Models= | ||

==Vector Machines== | |||

First appearing in the 1970s, vector machines were able to apply a single instruction to multiple data values. This type of operation is used frequently in scientific fields or in multimedia. | |||

The Solomon project at Westinghouse was one of the first machines to use vector operations. It's CPU had a large number of ALUs that would each be fed different data each cycle. Solomon was unsuccessful and was cancelled, eventually to be reborn as the ILLIAC IV at the University of Illinois. The ILLIAC IV showed great success at solving data-intensive problems, peaking at 150 MFLOPS under the right conditions. | |||

An innovation came with the Cray-1 supercomputer in 1976. It was realized that the large data sets are often manipulated by several instructions back-to-back, such as an addition followed by a multiplication. In the ILLIAC, up to 64 data points were loaded from memory with every instruction, but had to be stored back to manipulate the rest of the vector. The Cray computer was only able to load 12 data points, but by completing multiple instructions before continuing the total number of memory accesses decreased. The Cray-1 could perform at 240 MFLOPS. | |||

==References for this section== | |||

*Wikipedia, Vector processor http://en.wikipedia.org/w/index.php?title=Vector_processor&oldid=405209552 | |||

*Wikipedia, Cray-1 http://en.wikipedia.org/w/index.php?title=Cray-1&oldid=409177730 | |||

=Comparing the Data Parallel Model with the Shared Memory and Message Passing Models= | |||

Although the shared memory and message passing models may be combined into hybrid approaches, the two models are fundamentally different ways of addressing the same problem (of access control to common data). In contrast, the data parallel model is concerned with a fundamentally different problem (how to divide work into parallel tasks). As such, the data parallel model may be used in conjunction with either the shared memory or the message passing model without conflict. In fact, [[#References | Klaiber (1994)]] compares the performance of a number of data parallel programs implemented with both shared memory and message passing models. | |||

As discussed in the previous section, one of the major advantages of combining the data parallel and message passing models is a reduction in the amount and complexity of communication required relative to a task parallel approach. Similarly, combining the data parallel and shared memory models tends to simplify and reduce the amount of synchronization required. If the task parallel code given above were modified from a message passing model to a shared memory model, the two threads would require 8 signals be sent between the threads (instead of 8 messages). In contrast, the data parallel code would require a single barrier before the local sums are added to compute the full sum. | |||

Much as the shared memory model can benefit from specialized hardware, the data parallel programming model can as well. [[#Definitions | ''SIMD (single-instruction-multiple-data)'']] processors are specifically designed to run data parallel algorithms. These processors perform a single instruction on many different data locations simultaneously. Modern examples include [http://en.wikipedia.org/wiki/CUDA CUDA processors] developed by nVidia and [http://en.wikipedia.org/wiki/Cell_%28microprocessor%29 Cell processors] developed by STI (Sony, Toshiba, and IBM). For the curious, example code for CUDA processors is provided in the [[#Appendix: C for CUDA Example Code | Appendix]]. However, whereas the shared memory model can be a difficult and costly abstraction in the absence of hardware support, the data parallel model—like the message passing model—does not require hardware support. | |||

Since data parallel code tends to simplify communication and synchronization, data parallel code may be easier to develop than a more task parallel approach. However, data parallel code also requires writing code to split program data into chunks and assign it to different threads. In addition, it is possible that a problem may not decompose easily into subproblems relying on largely independent chunks of data. In this case, it may be impractical or impossible to apply the data parallel model. | |||

Once written, data parallel programs can scale easily to large numbers of processors. The data parallel model implicitly encourages data locality by having each thread work on a chunk of data. The regular data chunks also make it easier to reason about where to locate data and how to organize it. | |||

=Definitions= | |||

* ''Data parallel.'' A data parallel algorithm is composed of a set of identical tasks which operate on different subsets of common data. | |||

* ''Task parallel.'' A task parallel algorithm is composed of a set of differing tasks which operate on common data. | |||

* ''SIMD (single-instruction-multiple-data).'' A processor which executes a single instruction simultaneously on multiple data locations. | |||

=References= | |||

* David E. Culler, Jaswinder Pal Singh, and Anoop Gupta, [http://portal.acm.org/citation.cfm?id=550071 ''Parallel Computer Architecture: A Hardware/Software Approach,''] Morgan-Kauffman, 1999. | |||

* Ian Foster, [http://www.mcs.anl.gov/~itf/dbpp/ ''Designing and Building Parallel Programs,''] Addison-Wesley, 1995. | |||

* Magne Haveraaen, [http://portal.acm.org/citation.cfm?id=1239917 "Machine and collection abstractions for user-implemented data-parallel programming,"] ''Scientific Programming,'' 8(4):231-246, 2000. | |||

* W. Daniel Hillis and Guy L. Steele, Jr., [http://portal.acm.org/citation.cfm?id=7903 "Data parallel algorithms,"] ''Communications of the ACM,'' 29(12):1170-1183, December 1986. | |||

* Alexander C. Klaiber and Henry M. Levy, [http://portal.acm.org/citation.cfm?id=192020 "A comparison of message passing and shared memory architectures for data parallel programs,"] in ''Proceedings of the 21st Annual International Symposium on Computer Architecture,'' April 1994, pp. 94-105. | |||

* Yan Solihin, ''Fundamentals of Parallel Computer Architecture: Multichip and Multicore Systems,'' Solihin Books, 2008. | |||

<!-- | |||

// Data parallel implementation in C++ with OpenMP. | // Data parallel implementation in C++ with OpenMP. | ||

#include <omp.h> | |||

#include <iostream> | |||

int main(void) | int main(void) | ||

{ | { | ||

double a[8], b[8], c[8], localSum[2]; | double a[8], b[8], c[8], localSum[2]; | ||

long s = 4; | |||

int id, i; | |||

#pragma omp parallel for | #pragma omp parallel for private(id, i) reduction(+:s) | ||

for ( | for (i = 0; i < 8; i++) | ||

{ | { | ||

a[i] = b[i] + c[i]; | |||

} | |||

for (i = 0; i < 2; i++) localSum[i] = 0; | |||

#pragma omp parallel for private(id, i) reduction(+:s) | |||

for (i = 0; i < 8; i++) | |||

{ | |||

id = omp_get_thread_num(); | |||

if (a[i] > 0) | |||

localSum[id] = localSum[id] + a[i]; | |||

} | } | ||

double sum = localSum[0] + localSum[1]; | double sum = localSum[0] + localSum[1]; | ||

cout << sum; | std::cout << sum << std::endl; | ||

} | } | ||

--> | |||

=Appendix: C for CUDA Example Code= | |||

// Data parallel implementation | The following code is a data parallel implementation of the sequential Code 2.3 from [[#References | Solihin (2008)]] using [http://www.nvidia.com/object/cuda_learn.html C for CUDA]. It is presented to give an impression of programming for a SIMD architecture, but a detailed discussion is beyond the scope of this supplement. Ignoring memory allocation issues, the code is very similar to the data parallel example, Code 2.5 from [[#References | Solihin (2008)]], discussed earlier. The main difference is the presence of a control thread that sends the parallel tasks to the CUDA device. | ||

// Data parallel implementation of the example code using C for CUDA. | |||

#include <iostream> | |||

__global__ void kernel( | __global__ void kernel(float* a, float* b, float* c, float* local_sum) | ||

{ | { | ||

int id = threadIdx.x; | int id = threadIdx.x; | ||

| Line 91: | Line 236: | ||

int start_iter = id * local_iter; | int start_iter = id * local_iter; | ||

int end_iter = start_iter + local_iter; | int end_iter = start_iter + local_iter; | ||

// Begin data parallel section | |||

for (int i = start_iter; i < end_iter; i++) | for (int i = start_iter; i < end_iter; i++) | ||

a[i] = b[i] + c[i]; | a[i] = b[i] + c[i]; | ||

local_sum[id] = 0; | local_sum[id] = 0; | ||

for (int i = start_iter; i < end_iter; i++) | for (int i = start_iter; i < end_iter; i++) | ||

if (a[i] > 0) | if (a[i] > 0) | ||

local_sum[id] = local_sum[id] + a[i]; | |||

// End data parallel section | |||

} | } | ||

int main() | int main() | ||

{ | { | ||

float h_a[8], h_b[8], h_c[8], h_sum[2]; | |||

float *d_a, *d_b, *d_c, *d_sum; | |||

float sum; | |||

size_t size = 8 * sizeof(float); | |||

size_t size2 = 2 * sizeof(float); | |||

cudaMalloc((void**)&d_a, size); | |||

cudaMalloc((void**)&d_b, size); | |||

cudaMalloc((void**)&d_c, size); | |||

cudaMalloc((void**)&d_local_sum, size2); | |||

cudaMemcpy(d_b, h_b, size, cudaMemcpyHostToDevice); | |||

cudaMemcpy(d_c, h_c, size, cudaMemcpyHostToDevice); | |||

kernel<<<1, 2>>>(d_a, d_b, d_c, d_sum); | |||

cudaMemcpy(h_a, d_a, size, cudaMemcpyDeviceToHost); | |||

cudaMemcpy(h_sum, d_sum, size2, cudaMemcpyDeviceToHost); | |||

sum = h_sum[0] + h_sum[1]; | |||

std::cout << sum; | |||

cudaFree(d_a); | |||

cudaFree(d_b); | |||

cudaFree(d_c); | |||

cudaFree(d_sum); | |||

} | |||

Latest revision as of 20:31, 31 January 2011

Supplement to Chapter 2: The Data Parallel Programming Model

Chapter 2 of Solihin (2008) covers the shared memory and message passing parallel programming models. However, it does not address the data parallel model, another commonly recognized parallel programming model covered in other treatments like Foster (1995) and Culler (1999). Whereas the shared memory and message passing models are often present as competing models, the data parallel model addresses fundamentally different programming concerns and can therefore be used in conjunction with either. The goal of this supplement is to provide a treatment of the data parallel model which complements Chapter 2 of Solihin (2008). The task parallel model will also be introduced briefly as a point of contrast.

Overview

Whereas the shared memory and message passing models focus on how parallel tasks access common data, the data parallel model focuses on how to divide up work into parallel tasks. Data parallel algorithms exploit parallelism by dividing a problem into a number of identical tasks which execute on different subsets of common data. An example of a data parallel code can be seen in Code 2.5 from Solihin (2008) which is reproduced below. It has been annotated with comments identifying the region of the code which is data parallel.

// Data parallel code, adapted from Solihin (2008), p. 27. id = getmyid(); // Assume id = 0 for thread 0, id = 1 for thread 1 local_iter = 4; start_iter = id * local_iter; end_iter = start_iter + local_iter; if (id == 0) send_msg(P1, b[4..7], c[4..7]); else recv_msg(P0, b[4..7], c[4..7]); // Begin data parallel section for (i = start_iter; i < end_iter; i++) a[i] = b[i] + c[i]; local_sum = 0; for (i = start_iter; i < end_iter; i++) if (a[i] > 0) local_sum = local_sum + a[i]; // End data parallel section if (id == 0) { recv_msg(P1, &local_sum1); sum = local_sum + local_sum1; Print sum; } else send_msg(P0, local_sum);

In the code above, the three 8 element arrays are each divided into two 4 element chunks. In the data parallel section, the code executed by the two threads is identical, but each thread operates on a different chunk of data.

Hillis (1986) points out that a major benefit of data parallel algorithms is that they easily scale to take advantage of additional processing elements simply by dividing the data into smaller chunks. Haveraaen (2000) also notes that data parallel codes typically bear a strong resemblance to sequential codes, making them easier to read and write. Comparison of the data parallel section of code identified above with the sequential Code 2.3 of Solihin (2008), which is reproduced below, supports this assertion. The only differences between the two codes are the start and end indices and that, in the data parallel example, the variable sum is replaced by a private variable. Structurally the two codes are identical.

// Sequential code, from Solihin (2008), p. 25. for (i = 0; i < 8; i++) a[i] = b[i] + c[i]; sum = 0; for (i = 0; i < 8; i++) if (a[i] > 0) sum = sum + a[i]; Print sum;

Example of Data Parallel Programing Model

This section shows a simple example adapted from Solihin textbook (pp. 24 - 27) that illustrates the data-parallel programming model. Each of the codes below are written in pseudo-code style.

Suppose we want to perform the following task on an array a: updating each element of a by the product of itself and its index, and adding together the elements of a into the variable sum. The corresponding code is shown below.

// simple sequential task

sum = 0;

for (i = 0; i < a.length; i++)

{

a[i] = a[i] * i;

sum = sum + a[i];

}

When we orchestrate the task using the data-parallel programming model, the program can be divided into two parts. The first part performs the same operations on separate elements of the array for each processing element (sometimes referred to as PE or pe), and the second part reorganizes data among all processing elements (In our example data reorganization is summing up values across different processing elements). Since data-parallel programming model only defines the overall effects of parallel steps, the second part can be accomplished either through shared memory or message passing. The three code fragments below are examples for the first part of the program, shared-memory version of the second part, and message passing for the second part, respectively.

// data parallel programming: let each PE perform the same task on different pieces of distributed data

pe_id = getid();

my_sum = 0;

for (i = pe_id; i < a.length; i += number_of_pe) //separate elements of the array are assigned to each PE

{

a[i] = a[i] * i;

my_sum = my_sum + a[i]; //all PEs accumulate elements assigned to them into local variable my_sum

}

In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. For instance, if three processing elements are used then one processing element would start at i = 0, one would start at i = 1, and the last would start at i = 2. Since there are three processing elements then the index of the array for each will increase by three on each iteration until the task is complete (note that in our example elements assigned to each PE are interleaved instead of continuous). If the length of the array is a multiple of three then each processing element takes the same amount of time to execute its portion of the task.

The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes (0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved.

Example of Task Parallel Programming Model

The logical opposite of data parallel is task parallel, in which a number of distinct tasks operate on common data. An example of a task parallel code which is functionally equivalent to the sequential and data parallel codes given above follows below.

// Task parallel code.

int id = getmyid(); // assume id = 0 for thread 0, id = 1 for thread 1

if (id == 0)

{

for (i = 0; i < 8; i++)

{

a[i] = b[i] + c[i];

send_msg(P1, a[i]);

}

}

else

{

sum = 0;

for (i = 0; i < 8; i++)

{

recv_msg(P0, a[i]);

if (a[i] > 0)

sum = sum + a[i];

}

Print sum;

}

In the code above, work is divided into two parallel tasks. The first performs the element-wise addition of arrays b and c and stores the result in a. The other sums the elements of a. These tasks both operate on all elements of a (rather than on separate chunks), and the code executed by each thread is different (rather than identical).

Since each parallel task is unique, a major limitation of task parallel algorithms is that the maximum degree of parallelism attainable is limited to the number of tasks that have been formulated. This is in contrast to data parallel algorithms, which can be scaled easily to take advantage of an arbitrary number of processing elements. In addition, unique tasks are likely to have significantly different run times, making it more challenging to balance load across processors. Haveraaen (2000) also notes that task parallel algorithms are inherently more complex, requiring a greater degree of communication and synchronization. In the task parallel code above, after thread 0 computes an element of a it must send it to thread 1. To support this, sends and receives occur every iteration of the two loops, resulting in a total of 8 messages being sent between the threads. In contrast, the data parallel code sends only 2 messages, one at the beginning and one at the end. The table below summarizes the key differences between data parallel and task parallel programming models.

| Aspects | Data Parallel | Task Parallel |

|---|---|---|

| Decomposition | Partition data into subsets | Partition program into subtasks |

| Parallel tasks | Identical | Unique |

| Degree of parallelism | Scales easily | Fixed |

| Load balancing | Easier | Harder |

| Communication overhead | Lower | Higher |

History of Parallel Programming Models

Vector Machines

First appearing in the 1970s, vector machines were able to apply a single instruction to multiple data values. This type of operation is used frequently in scientific fields or in multimedia.

The Solomon project at Westinghouse was one of the first machines to use vector operations. It's CPU had a large number of ALUs that would each be fed different data each cycle. Solomon was unsuccessful and was cancelled, eventually to be reborn as the ILLIAC IV at the University of Illinois. The ILLIAC IV showed great success at solving data-intensive problems, peaking at 150 MFLOPS under the right conditions.

An innovation came with the Cray-1 supercomputer in 1976. It was realized that the large data sets are often manipulated by several instructions back-to-back, such as an addition followed by a multiplication. In the ILLIAC, up to 64 data points were loaded from memory with every instruction, but had to be stored back to manipulate the rest of the vector. The Cray computer was only able to load 12 data points, but by completing multiple instructions before continuing the total number of memory accesses decreased. The Cray-1 could perform at 240 MFLOPS.

References for this section

- Wikipedia, Vector processor http://en.wikipedia.org/w/index.php?title=Vector_processor&oldid=405209552

- Wikipedia, Cray-1 http://en.wikipedia.org/w/index.php?title=Cray-1&oldid=409177730

Although the shared memory and message passing models may be combined into hybrid approaches, the two models are fundamentally different ways of addressing the same problem (of access control to common data). In contrast, the data parallel model is concerned with a fundamentally different problem (how to divide work into parallel tasks). As such, the data parallel model may be used in conjunction with either the shared memory or the message passing model without conflict. In fact, Klaiber (1994) compares the performance of a number of data parallel programs implemented with both shared memory and message passing models.

As discussed in the previous section, one of the major advantages of combining the data parallel and message passing models is a reduction in the amount and complexity of communication required relative to a task parallel approach. Similarly, combining the data parallel and shared memory models tends to simplify and reduce the amount of synchronization required. If the task parallel code given above were modified from a message passing model to a shared memory model, the two threads would require 8 signals be sent between the threads (instead of 8 messages). In contrast, the data parallel code would require a single barrier before the local sums are added to compute the full sum.

Much as the shared memory model can benefit from specialized hardware, the data parallel programming model can as well. SIMD (single-instruction-multiple-data) processors are specifically designed to run data parallel algorithms. These processors perform a single instruction on many different data locations simultaneously. Modern examples include CUDA processors developed by nVidia and Cell processors developed by STI (Sony, Toshiba, and IBM). For the curious, example code for CUDA processors is provided in the Appendix. However, whereas the shared memory model can be a difficult and costly abstraction in the absence of hardware support, the data parallel model—like the message passing model—does not require hardware support.

Since data parallel code tends to simplify communication and synchronization, data parallel code may be easier to develop than a more task parallel approach. However, data parallel code also requires writing code to split program data into chunks and assign it to different threads. In addition, it is possible that a problem may not decompose easily into subproblems relying on largely independent chunks of data. In this case, it may be impractical or impossible to apply the data parallel model.

Once written, data parallel programs can scale easily to large numbers of processors. The data parallel model implicitly encourages data locality by having each thread work on a chunk of data. The regular data chunks also make it easier to reason about where to locate data and how to organize it.

Definitions

- Data parallel. A data parallel algorithm is composed of a set of identical tasks which operate on different subsets of common data.

- Task parallel. A task parallel algorithm is composed of a set of differing tasks which operate on common data.

- SIMD (single-instruction-multiple-data). A processor which executes a single instruction simultaneously on multiple data locations.

References

- David E. Culler, Jaswinder Pal Singh, and Anoop Gupta, Parallel Computer Architecture: A Hardware/Software Approach, Morgan-Kauffman, 1999.

- Ian Foster, Designing and Building Parallel Programs, Addison-Wesley, 1995.

- Magne Haveraaen, "Machine and collection abstractions for user-implemented data-parallel programming," Scientific Programming, 8(4):231-246, 2000.

- W. Daniel Hillis and Guy L. Steele, Jr., "Data parallel algorithms," Communications of the ACM, 29(12):1170-1183, December 1986.

- Alexander C. Klaiber and Henry M. Levy, "A comparison of message passing and shared memory architectures for data parallel programs," in Proceedings of the 21st Annual International Symposium on Computer Architecture, April 1994, pp. 94-105.

- Yan Solihin, Fundamentals of Parallel Computer Architecture: Multichip and Multicore Systems, Solihin Books, 2008.

Appendix: C for CUDA Example Code

The following code is a data parallel implementation of the sequential Code 2.3 from Solihin (2008) using C for CUDA. It is presented to give an impression of programming for a SIMD architecture, but a detailed discussion is beyond the scope of this supplement. Ignoring memory allocation issues, the code is very similar to the data parallel example, Code 2.5 from Solihin (2008), discussed earlier. The main difference is the presence of a control thread that sends the parallel tasks to the CUDA device.

// Data parallel implementation of the example code using C for CUDA.

#include <iostream>

__global__ void kernel(float* a, float* b, float* c, float* local_sum)

{

int id = threadIdx.x;

int local_iter = 4;

int start_iter = id * local_iter;

int end_iter = start_iter + local_iter;

// Begin data parallel section

for (int i = start_iter; i < end_iter; i++)

a[i] = b[i] + c[i];

local_sum[id] = 0;

for (int i = start_iter; i < end_iter; i++)

if (a[i] > 0)

local_sum[id] = local_sum[id] + a[i];

// End data parallel section

}

int main()

{

float h_a[8], h_b[8], h_c[8], h_sum[2];

float *d_a, *d_b, *d_c, *d_sum;

float sum;

size_t size = 8 * sizeof(float);

size_t size2 = 2 * sizeof(float);

cudaMalloc((void**)&d_a, size);

cudaMalloc((void**)&d_b, size);

cudaMalloc((void**)&d_c, size);

cudaMalloc((void**)&d_local_sum, size2);

cudaMemcpy(d_b, h_b, size, cudaMemcpyHostToDevice);

cudaMemcpy(d_c, h_c, size, cudaMemcpyHostToDevice);

kernel<<<1, 2>>>(d_a, d_b, d_c, d_sum);

cudaMemcpy(h_a, d_a, size, cudaMemcpyDeviceToHost);

cudaMemcpy(h_sum, d_sum, size2, cudaMemcpyDeviceToHost);

sum = h_sum[0] + h_sum[1];

std::cout << sum;

cudaFree(d_a);

cudaFree(d_b);

cudaFree(d_c);

cudaFree(d_sum);

}