CSC/ECE 517 Spring 2024 - E2412. Testing for hamer.rb

This page describes the changes made for Spring 2024 Program 3, the first OSS project: E2412. Testing for hamer.rb.

Project Overview

Problem Statement

The practice of using student feedback on assignments as a grading tool is gaining traction among university professors and courses. This approach not only saves instructors and teaching assistants considerable time but also fosters a deeper understanding of assignments among students as they evaluate their peers' work. However, there is a concern that some students may not take their reviewing responsibilities seriously, potentially skewing the grading process by assigning extreme scores such as 100 or 0 arbitrarily. To address this issue, the Hamer algorithm was developed to assess the credibility and accuracy of reviewers. It generates reputation weights for each reviewer, which instructors can use to gauge their reliability or incorporate into grading calculations. Our goal here is to test this Hamer algorithm.

Objectives

- Develop code testing scenarios to validate the Hamer algorithm and ensure the accuracy of its output values.

- Verify the correctness of the reputation web server's Hamer values by accessing the URL http://peerlogic.csc.ncsu.edu/reputation/calculations/reputation_algorithms.

- Reimplement the algorithm if discrepancies arise in the reputation web server's Hamer values.

- Validate the accuracy of the newly implemented Hamer algorithm.

Files Involved

- reputation_web_server_hamer.rb

- reputation_mock_web_server_hamer.rb

Mentor

- Muhammet Mustafa Olmez (molmez@ncsu.edu)

Team Members

- Neha Vijay Patil (npatil2@ncsu.edu)

- Prachit Mhalgi (psmhalgi@ncsu.edu)

- Sahil Santosh Sawant (ssawant2@ncsu.edu)

Hamer Algorithm

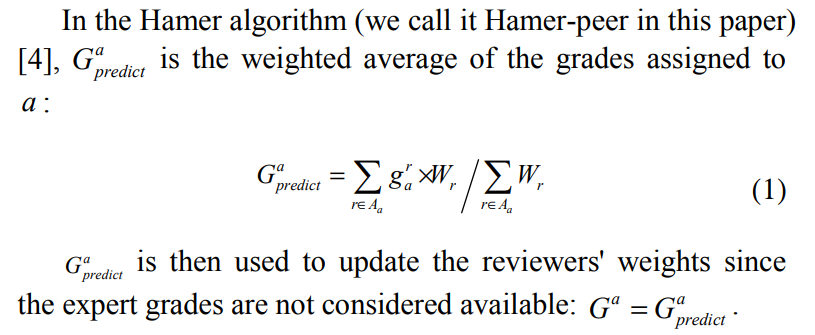

The grading algorithm described in the paper by Hamer, Ma, and Kwong rewards reviewers who participate effectively. It allocates a portion of the assignment mark to reviewing, and the review mark reflects the quality of the grading. The algorithm works as follows:

1. Review Allocation: Each reviewer is assigned a number of essays to grade. The paper suggests assigning at least five essays, with ten being ideal. Assuming each review takes 20 minutes, ten reviews can be completed in about three and a half hours.

2. Grading Process:

- Once the reviewing is complete, grades are generated for each essay and weights are assigned to each reviewer.

- The essay grades are computed by averaging the individual grades from all the reviewers assigned to that essay.

- Initially, all reviewers are given equal weight in the averaging process.

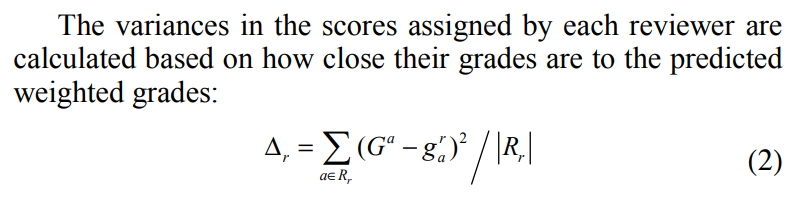

- The algorithm assumes that some reviewers will perform better than others. It measures this by comparing the grades assigned by each reviewer to the averaged grades. The larger the difference between the assigned and averaged grades, the more out of step the reviewer is considered with the consensus view of the class.

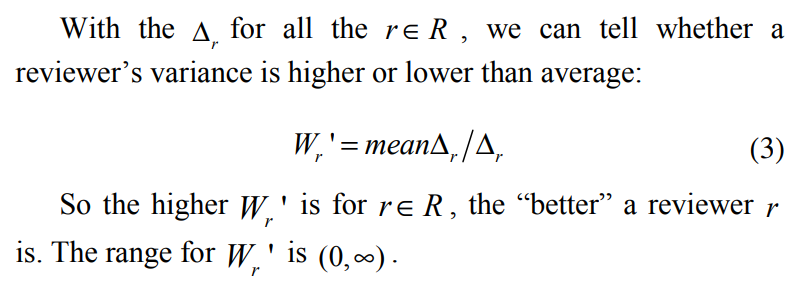

- The algorithm adjusts the weighting of the reviewers based on this difference. Reviewers who are closer to the consensus view are given higher weights, while those who deviate significantly are given lower weights.

3. Iterative Process:

- The calculation of grades and weights is an iterative process. Each time the grades are calculated, the weights need to be updated, and each change in the weights affects the grades.

- Convergence occurs quickly, typically requiring four to six iterations before a solution (a "fix-point") is reached.

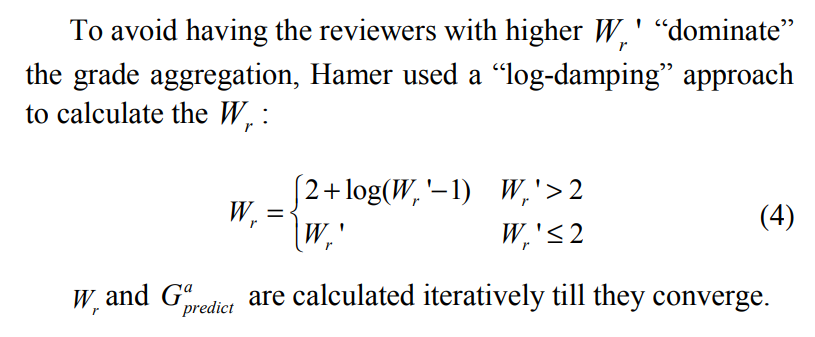

4. Weight Adjustment:

- The weights assigned to reviewers are adjusted based on the difference between the assigned and averaged grades. Each reviewer's weight is inversely proportional to that difference, so reviewers with larger discrepancies receive lower weights.

- To prevent excessively large weights, a logarithmic dampening function is applied, allowing weights to rise to twice the class average before further increases are awarded sparingly.

5. Properties:

- The algorithm aims to identify and diminish the impact of "rogue" reviewers who may inject random or arbitrary grades into the peer assessment process.

- By adjusting reviewer weights based on their grading accuracy, the algorithm aims to improve the reliability of the grading process in the presence of such rogue reviewers.

Overall, the algorithm seeks to balance the contributions of different reviewers based on the accuracy of their grading, ultimately aiming to produce reliable grades for each essay in a peer assessment scenario.
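The iterative process above can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the paper's reference implementation or Peerlogic's. It assumes:

- every reviewer graded every essay;
- all weights start at 1;
- essay grades are weighted averages;
- each reviewer's deviation is the mean squared difference from those grades;
- weights above 2 are damped with 2 + ln(w' - 1), as in the Python code in the Initial Phase section.

The names `hamer_weights`, `max_iter`, `tol`, and `eps` are hypothetical. The `eps` floor on a zero deviation and the "all reviewers agree" fallback are also our own assumptions.

```python
import math


def hamer_weights(reviews, max_iter=50, tol=1e-6, eps=1e-9):
    """Iterate essay grades and reviewer weights until the weights stop changing.

    reviews[i][j] is reviewer i's grade for essay j (every reviewer grades
    every essay in this sketch). Returns (weights, grades from the last pass).
    """
    n_reviewers = len(reviews)
    n_essays = len(reviews[0])
    weights = [1.0] * n_reviewers  # assumption: every reviewer starts at weight 1

    for _ in range(max_iter):
        # Essay grade = weighted average of the grades it received
        total = sum(weights)
        grades = [
            sum(weights[i] * reviews[i][j] for i in range(n_reviewers)) / total
            for j in range(n_essays)
        ]
        # Delta: mean squared difference between a reviewer's grades and the essay grades
        deltas = [
            sum((reviews[i][j] - grades[j]) ** 2 for j in range(n_essays)) / n_essays
            for i in range(n_reviewers)
        ]
        mean_delta = sum(deltas) / n_reviewers
        if mean_delta == 0:
            # Every reviewer matches the consensus exactly: keep equal weights (assumption)
            new_weights = [1.0] * n_reviewers
        else:
            new_weights = []
            for d in deltas:
                w_prime = mean_delta / max(d, eps)  # eps avoids division by zero
                # Logarithmic dampening above twice the class average
                new_weights.append(w_prime if w_prime <= 2 else 2 + math.log(w_prime - 1))
        converged = max(abs(a - b) for a, b in zip(weights, new_weights)) < tol
        weights = new_weights
        if converged:
            break
    return weights, grades
```

For the three-reviewer example used in the Initial Phase code, every reviewer deviates from the average by the same amount, so all weights stay at approximately 1.0. The sketch is not claimed to reproduce Peerlogic's numbers or the paper's exact variant.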

Mathematical representation
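The formulas below restate, in LaTeX, the quantities computed by the Python code in the Initial Phase section. The notation is ours and the paper's own notation may differ:

- $r_{ij}$ is reviewer $i$'s grade for essay $j$;
- $N$ is the number of reviewers and $M$ the number of essays;
- $w_i$ is reviewer $i$'s weight, starting at $w_i = 1$.

$$
g_j = \frac{\sum_{i=1}^{N} w_i \, r_{ij}}{\sum_{i=1}^{N} w_i},
\qquad
\Delta_i = \frac{1}{M} \sum_{j=1}^{M} \left( r_{ij} - g_j \right)^2,
\qquad
\bar{\Delta} = \frac{1}{N} \sum_{i=1}^{N} \Delta_i
$$

$$
w'_i = \frac{\bar{\Delta}}{\Delta_i},
\qquad
w_i =
\begin{cases}
w'_i & \text{if } w'_i \le 2 \\
2 + \ln\left( w'_i - 1 \right) & \text{if } w'_i > 2
\end{cases}
$$

With all $w_i = 1$, the first formula reduces to the plain average computed by the code. Repeating the calculation with the updated $w_i$ until they stop changing gives the fix-point described above.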

Test Plan - Initial Phase

In the initial phase, we were tasked with testing the reputation_web_service_controller. A previous project team's work was hampered because the web service (Peerlogic) was unavailable at the time. This time, we were able to access the Peerlogic server, but only at a late stage. Our plan at this point therefore involved a series of unit tests to determine whether the web service was communicating correctly with Expertiza.

Initial Testing Plan, Object Creation

1. Since our focus in this phase was to conduct exploratory testing of the system, we wrote some conventional tests to examine Peerlogic functionality. At this stage, we realized that Peerlogic would only accept and respond with JSON data.

2. Therefore, a natural next step was to prepare input data that simulated a general input scenario for the system, comprising:

- a. Each reviewer has assigned scores to 3 reviewees (fellow students)

- b. There are a total of 3 reviewers, who have all graded each other in some fashion for 5 assignments

- c. Convert this scenario to JSON

- d. Write code to POST this to Peerlogic and receive a response

- e. Parse the response to obtain the output values of the Hamer algorithm, as calculated by Peerlogic

- f. Compare this output against the expected values that we calculated based on the research paper describing the Hamer algorithm
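Steps c through e can be sketched in Python with only the standard library. This is a sketch under assumptions. The payload layout (`submission<ID>` mapping to `stu<ID>` grades) and the `"Hamer"` key in the response are taken from the JSON used in the Second Phase tests. The helper names `build_payload`, `post_payload`, and `parse_hamer` are hypothetical. The POST is shown with `urllib.request` but depends on the Peerlogic server being reachable.

```python
import json
import urllib.request

PEERLOGIC_URL = "http://peerlogic.csc.ncsu.edu/reputation/calculations/reputation_algorithms"


def build_payload(reviews, reviewer_ids, submission_ids):
    """Convert reviews[i][j] (reviewer i's grade for submission j) into Peerlogic-style JSON."""
    payload = {}
    for j, sub_id in enumerate(submission_ids):
        payload[f"submission{sub_id}"] = {
            f"stu{rev_id}": reviews[i][j] for i, rev_id in enumerate(reviewer_ids)
        }
    return json.dumps(payload)


def post_payload(body, url=PEERLOGIC_URL, timeout=10):
    """POST the JSON body and return the raw response text (requires network access)."""
    req = urllib.request.Request(
        url,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def parse_hamer(response_text):
    """Extract the Hamer reputation values from a response body."""
    return json.loads(response_text)["Hamer"]
```

Passing the four reviewers and four submissions from the Second Phase input to `build_payload` yields the same structure as the `INPUTS` hash in the RSpec tests.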

The code for the last step, which computes the expected values, is shown below:

```python
import math

# Parameters: reviews list
# reviews - a list of each reviewer's grades for each assignment
#
# Example: reviews = [[5,4,4,3,2],[5,3,4,4,2],[4,3,4,3,2]]
#
# Corresponding reviewer and grade for each assignment:
#
# Essay        Reviewer1  Reviewer2  Reviewer3
# Assignment1  5          5          4
# Assignment2  4          3          3
# Assignment3  4          4          4
# Assignment4  3          4          3
# Assignment5  2          2          2

# Reviewers' grades for each assignment as a 2D array:
# each index of reviews is a reviewer; each index in reviews[i] is a review grade
reviews = [[5, 4, 4, 3, 2], [5, 3, 4, 4, 2], [4, 3, 4, 3, 2]]

numReviewers = len(reviews)  # number of reviewers
numAssig = len(reviews[0])   # number of assignments

grades = []       # average grade for each assignment
deltaR = []       # delta R (mean squared deviation) for each reviewer
weightPrime = []  # undamped weight (weight prime) for each reviewer
weight = []       # reviewer's reputation weight

# Calculating the average grade for each assignment
# (every reviewer starts with equal weight, so this is a plain mean)
for numAssigIndex in range(numAssig):
    assignmentGradeAverage = 0
    for numReviewerIndex in range(numReviewers):
        assignmentGradeAverage += reviews[numReviewerIndex][numAssigIndex]
    grades.append(assignmentGradeAverage / numReviewers)

print("Average Grades:")
print(grades)

# Calculating delta R: mean squared difference between each reviewer's
# grades and the average grades
for numReviewerIndex in range(numReviewers):
    reviewerDeltaR = 0
    for assignmentIndex, reviewGrade in enumerate(reviews[numReviewerIndex]):
        reviewerDeltaR += (reviewGrade - grades[assignmentIndex]) ** 2
    reviewerDeltaR /= numAssig
    deltaR.append(reviewerDeltaR)

print("deltaR:")
print(deltaR)

# Calculating the average delta R over all reviewers
averageDeltaR = sum(deltaR) / numReviewers

print("averageDeltaR:")
print(averageDeltaR)

# Calculating weight prime: average delta R divided by the reviewer's delta R
for reviewerDeltaR in deltaR:
    weightPrime.append(averageDeltaR / reviewerDeltaR)

print("weightPrime:")
print(weightPrime)

# Calculating reputation weight: weights above 2 are damped logarithmically
for reviewerWeightPrime in weightPrime:
    if reviewerWeightPrime <= 2:
        weight.append(reviewerWeightPrime)
    else:
        weight.append(2 + math.log(reviewerWeightPrime - 1))

print("reputation per reviewer:")
for i, reviewerWeight in enumerate(weight, start=1):
    print("Reputation of Reviewer", i)
    print(round(reviewerWeight, 1))
```

Initial Testing Conclusions

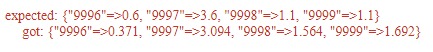

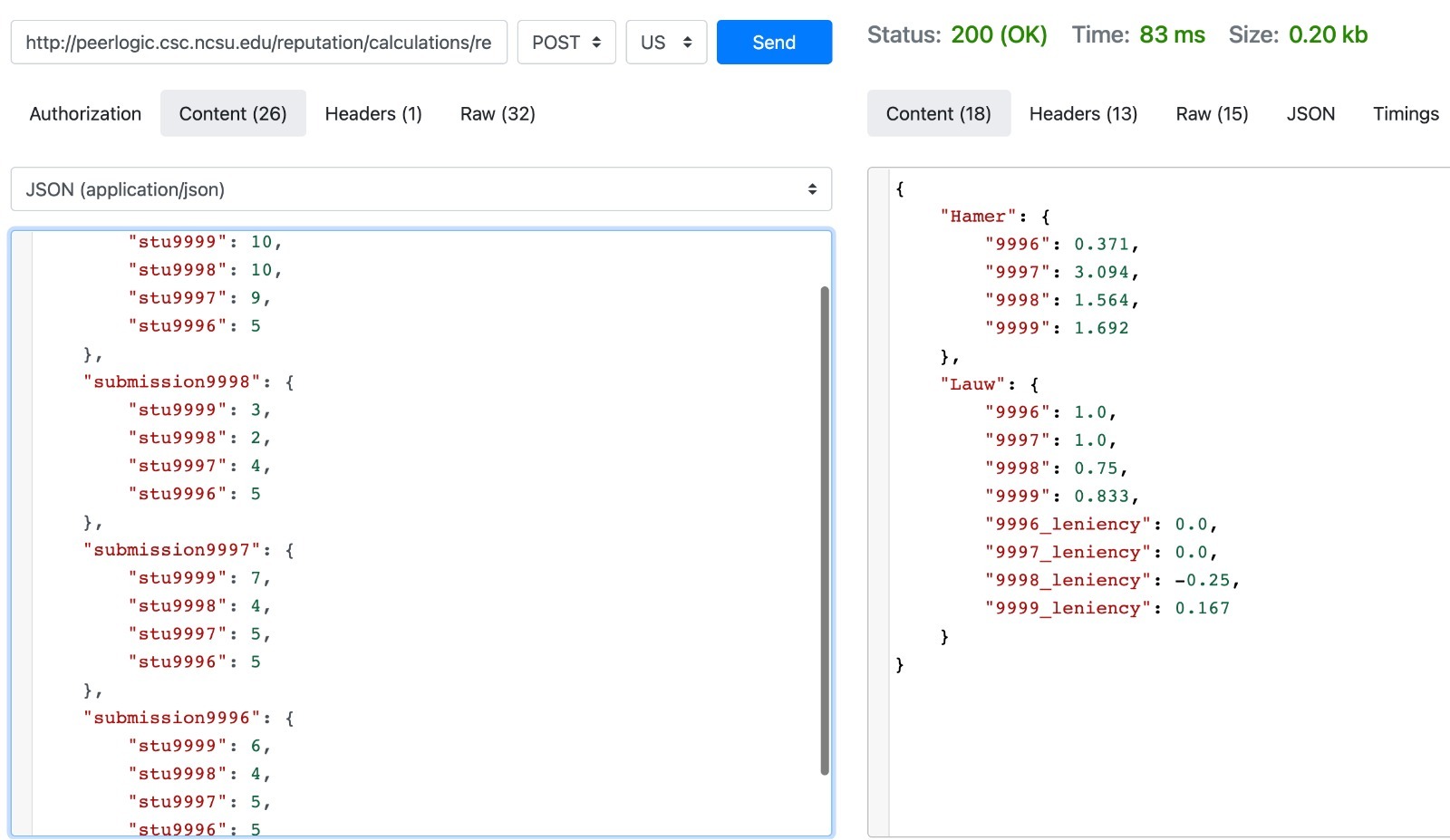

The results that are actually received from Peerlogic are presented below:

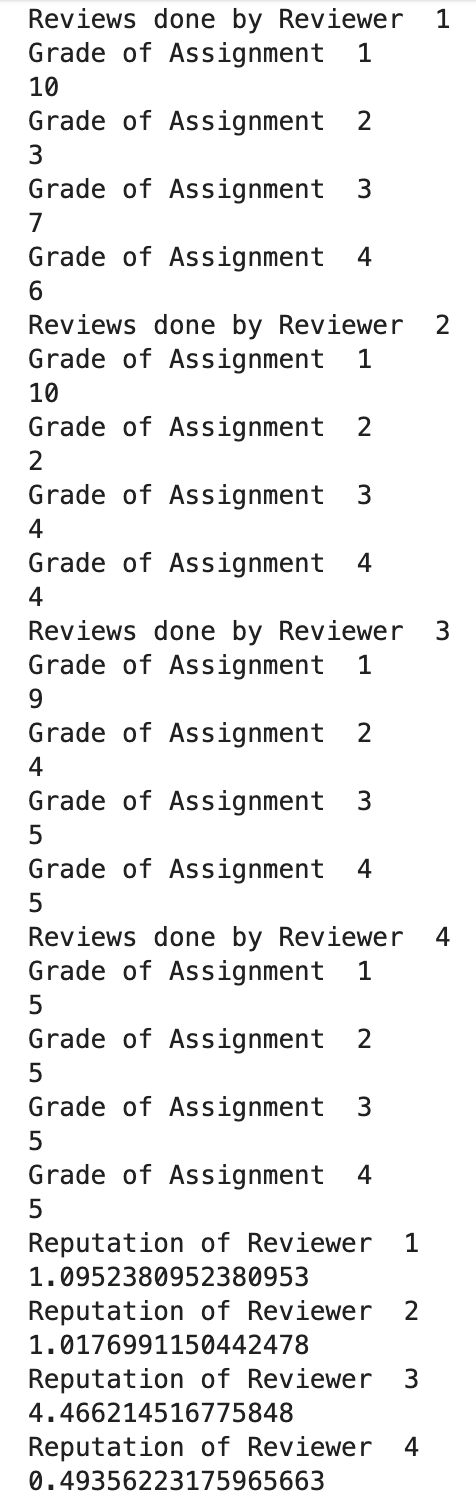

The results from our Python re-creation of the Hamer algorithm are as follows:

As can be seen, they do NOT match the expected results.

Therefore, our first conclusion is that the Peerlogic web service is implemented incorrectly.

This is documented as the first point in the Conclusion section.
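The comparison behind this conclusion can be made mechanical. The sketch below lists every reviewer whose value differs. It assumes that both sides are `{"reviewer_id": weight}` dictionaries and that values are compared after rounding to one decimal place, as the Initial Phase code prints them. The function name `hamer_mismatches` is hypothetical.

```python
def hamer_mismatches(actual, expected, ndigits=1):
    """Return {reviewer_id: (actual, expected)} for every reviewer whose rounded values differ.

    A reviewer missing from `actual` is reported with None as its actual value.
    """
    mismatches = {}
    for reviewer_id, expected_value in expected.items():
        actual_value = actual.get(reviewer_id)
        if actual_value is None or round(actual_value, ndigits) != round(expected_value, ndigits):
            mismatches[reviewer_id] = (actual_value, expected_value)
    return mismatches
```

Rounding keeps the check in line with how values are displayed. An absolute tolerance such as `math.isclose(a, b, abs_tol=0.05)` would avoid flagging values that straddle a rounding boundary.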

Changes to Project Scope

Test Plan - Second Phase, Object Creation

We followed the testing approach recommended by Dr. Gehringer. To test this service, we used an external program to send requests to a simulated service and inspected the returned data. We chose this approach because the program under test was unfortunately not running and could not be inspected directly. Finally, we also added tests that mock the web service and assert on its output, in two ways:

- a. In the first code snippet, we send JSON to a mock web service that returns the correct Hamer output, as Peerlogic should once it is fixed.

- b. In the second snippet, we use an RSpec test in which WebMock stubs the Peerlogic endpoint.

The first test below sends the same JSON input both to Peerlogic (http://peerlogic.csc.ncsu.edu/reputation/calculations/reputation_algorithms) and to the mock.

Testing against both Peerlogic and the mock shows that the current web service is not correct. The values Peerlogic returns do not match the expected values, as can be seen in the picture.

The expected values are the output this project aims to reach.

Test Code Snippet:

```ruby
require "net/http"
require "json"

# The following contains 4 reviewers who have each scored 4 submissions.
# INPUTS maps each submission to the grade each reviewer gave it.
#
# Input visualization (reviewer -> grade for each submission):
#
# Submission      stu9999  stu9998  stu9997  stu9996
# submission9999  10       10       9        5
# submission9998  3        2        4        5
# submission9997  7        4        5        5
# submission9996  6        4        5        5

INPUTS = {
  "submission9999": { "stu9999": 10, "stu9998": 10, "stu9997": 9, "stu9996": 5 },
  "submission9998": { "stu9999": 3, "stu9998": 2, "stu9997": 4, "stu9996": 5 },
  "submission9997": { "stu9999": 7, "stu9998": 4, "stu9997": 5, "stu9996": 5 },
  "submission9996": { "stu9999": 6, "stu9998": 4, "stu9997": 5, "stu9996": 5 }
}.to_json

# Values that would be returned by a correct Hamer implementation
EXPECTED = {
  "Hamer": { "9996": 0.6, "9997": 3.6, "9998": 1.1, "9999": 1.1 }
}.to_json

# Sends the API request to Peerlogic
describe "Expertiza" do
  it "should return the correct Hamer calculation" do
    uri = URI("http://peerlogic.csc.ncsu.edu/reputation/calculations/reputation_algorithms")
    req = Net::HTTP::Post.new(uri)
    req.content_type = "application/json"
    req.body = INPUTS

    response = Net::HTTP.start(uri.hostname, uri.port) do |http|
      http.request(req)
    end

    # This assertion fails, as expected, because Peerlogic returns incorrect values
    expect(JSON.parse(response.body)["Hamer"]).to eq(JSON.parse(EXPECTED)["Hamer"])
  end
end

# Sends the API request to the mock Hamer/Peerlogic server
describe "Expertiza Web Service" do
  it "should return the correct Hamer calculation" do
    uri = URI("https://4dfaead4-a747-4be4-8683-3b10d1d2e0c0.mock.pstmn.io/reputation_web_service/default")
    req = Net::HTTP::Post.new(uri)
    req.content_type = "application/json"
    req.body = INPUTS

    response = Net::HTTP.start(uri.hostname, uri.port, use_ssl: uri.scheme == "https") do |http|
      http.request(req)
    end

    expect(JSON.parse(response.body)["Hamer"]).to eq(JSON.parse(EXPECTED)["Hamer"])
  end
end
```
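The mock-server idea does not depend on Postman. As an alternative sketch, the Python standard library can run a throwaway local HTTP server that answers every POST with the expected Hamer JSON, so that client code can be exercised offline. The names `MockHamerHandler`, `start_mock_server`, and `post_json` are hypothetical. The expected values are copied from the `EXPECTED` hash in the first test snippet.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

EXPECTED = {"Hamer": {"9996": 0.6, "9997": 3.6, "9998": 1.1, "9999": 1.1}}


class MockHamerHandler(BaseHTTPRequestHandler):
    """Answers every POST with the expected Hamer output, ignoring the request body."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        self.rfile.read(length)  # consume the request body
        body = json.dumps(EXPECTED).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def start_mock_server():
    """Start the mock on a free localhost port in a background thread; returns (server, url)."""
    server = HTTPServer(("127.0.0.1", 0), MockHamerHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    return server, f"http://{host}:{port}/reputation/calculations/reputation_algorithms"


def post_json(url, payload):
    """POST a dict as JSON and return the decoded JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Pointing the client at the returned `url` instead of the Peerlogic address lets the same assertions run without network access. Like the Postman mock, this only checks the client side, not the Hamer calculation itself.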

Second Phase Output

In addition, this plan lets us test the current functionality by treating the system as a black box and draw conclusions about the accuracy of the implementation as a whole.

The code below shows this plan in action. The values returned by the algorithm are inspected both programmatically and by hand.

```ruby
require "net/http"
require "json"
require "webmock/rspec" # gem install webmock -v 2.2.0

WebMock.disable_net_connect!(allow_localhost: true)

# Setting up test objects
INPUTS = {
  "submission9999": { "stu9999": 10, "stu9998": 10, "stu9997": 9, "stu9996": 5 },
  "submission9998": { "stu9999": 3, "stu9998": 2, "stu9997": 4, "stu9996": 5 },
  "submission9997": { "stu9999": 7, "stu9998": 4, "stu9997": 5, "stu9996": 5 },
  "submission9996": { "stu9999": 6, "stu9998": 4, "stu9997": 5, "stu9996": 5 }
}.to_json

# Result expectations are identical to the first snippet, to maintain uniformity
EXPECTED = {
  "Hamer": { "9996": 0.6, "9997": 3.6, "9998": 1.1, "9999": 1.1 }
}.to_json

# Stubs the Peerlogic endpoint with WebMock and checks the parsed response
describe "Expertiza" do
  before(:each) do
    stub_request(:post, /peerlogic\.csc\.ncsu\.edu/)
      .to_return(status: 200, body: EXPECTED, headers: {})
  end

  it "should return the correct Hamer calculation" do
    uri = URI("http://peerlogic.csc.ncsu.edu/reputation/calculations/reputation_algorithms")

    # Send the inputs as JSON to ensure server compatibility
    req = Net::HTTP::Post.new(uri)
    req.content_type = "application/json"
    req.body = INPUTS

    response = Net::HTTP.start(uri.hostname, uri.port, use_ssl: uri.scheme == "https") do |http|
      http.request(req)
    end

    # Parse the JSON response body to access the values for the algorithm of choice, Hamer
    expect(JSON.parse(response.body)["Hamer"]).to eq(JSON.parse(EXPECTED)["Hamer"])
  end
end
```

Our assertion passes, which shows that the mock works. Because WebMock intercepts the request, this test exercises the stub rather than the live Peerlogic service.
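WebMock's request stubbing has a close analogue in Python's standard library: `unittest.mock.patch` can replace `urllib.request.urlopen`, so that no request leaves the process. The sketch below assumes a small hypothetical client function, `fetch_hamer`, that POSTs to Peerlogic and returns the `"Hamer"` values. Under the patch, it receives a canned copy of the expected body instead of a network response.

```python
import io
import json
import urllib.request
from unittest import mock

PEERLOGIC_URL = "http://peerlogic.csc.ncsu.edu/reputation/calculations/reputation_algorithms"
EXPECTED = {"Hamer": {"9996": 0.6, "9997": 3.6, "9998": 1.1, "9999": 1.1}}


def fetch_hamer(payload):
    """POST the payload to Peerlogic and return the Hamer values from the response."""
    req = urllib.request.Request(
        PEERLOGIC_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))["Hamer"]


def test_fetch_hamer_with_stub():
    canned = io.BytesIO(json.dumps(EXPECTED).encode("utf-8"))
    # Replace urlopen so the request never reaches the network
    with mock.patch("urllib.request.urlopen", return_value=canned) as fake_urlopen:
        result = fetch_hamer({"submission9999": {"stu9999": 10}})
    assert result == EXPECTED["Hamer"]
    # The stub also records what would have been sent
    sent_request = fake_urlopen.call_args[0][0]
    assert sent_request.get_method() == "POST"
    assert sent_request.full_url == PEERLOGIC_URL
```

As with the WebMock version, a passing test only confirms the client's request and parsing logic, not Peerlogic's calculation.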

Edge Cases & Scenarios

We present these scenarios as possible test cases for an accurately working Peerlogic webservice.

1) Reviewer gives all max scores

2) Reviewer gives all min scores

3) Reviewer completes no review

- Alternative scenario: the reviewer gives max scores even when there are no inputs

These have not been implemented, since there is little point in testing the system further while the positive flows do not work. However, the code in the Initial Phase section can be used to calculate correct responses analytically for future assertions. We have provided outputs to these scenarios below:
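The sketch below illustrates how expected values for these scenarios could be computed analytically. It applies one pass of the calculation from the Initial Phase code (equal starting weights, mean squared deviation, dampening above 2) to a small hypothetical data set in which one reviewer gives every submission the maximum score. Three choices are our assumptions rather than anything the paper specifies:

- A reviewer who completed no review is left out of the averages and keeps a default weight of 1, mirroring the initial weight of 1 we gave first-time reviewers.
- If every reviewer agrees exactly, all weights are set to 1.
- A zero deviation is floored at `eps`.

The function name `one_pass_weights` is hypothetical.

```python
import math


def one_pass_weights(reviews, eps=1e-9):
    """Reputation weights after one pass, starting from equal weights.

    reviews[i] is reviewer i's list of grades; an empty list means the reviewer
    completed no review (assumption: excluded from the averages, weight 1).
    """
    active = [r for r in reviews if r]
    if not active:
        return [1.0] * len(reviews)  # nobody reviewed: everyone keeps the default
    n_essays = len(active[0])
    # Plain average per essay (all starting weights are equal)
    grades = [sum(r[j] for r in active) / len(active) for j in range(n_essays)]
    # Mean squared deviation of each active reviewer from the averages
    deltas = [sum((r[j] - grades[j]) ** 2 for j in range(n_essays)) / n_essays for r in active]
    mean_delta = sum(deltas) / len(deltas)

    active_weights = []
    for d in deltas:
        if mean_delta == 0:
            active_weights.append(1.0)  # assumption: unanimous reviewers keep weight 1
            continue
        w_prime = mean_delta / max(d, eps)  # eps guards against division by zero
        active_weights.append(w_prime if w_prime <= 2 else 2 + math.log(w_prime - 1))

    # Reinsert the default weight for reviewers who completed no review
    it = iter(active_weights)
    return [next(it) if r else 1.0 for r in reviews]
```

For `[[5, 5, 5, 5], [3, 4, 2, 3], [3, 4, 2, 3]]`, the all-max reviewer ends up with a weight of about 0.5. The two reviewers who agree with each other reach about 2.0, the edge of the undamped range.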

Coverage

We believe that once our edge cases are implemented against a working Peerlogic and their assertions pass, test coverage can be measured meaningfully.

At present, test coverage is not a relevant statistic, since neither the positive nor the negative flows function correctly, and neither do the edge cases.

Conclusion

As a team, we worked out the algorithm and its applications and wrote several test scenarios. However, we did not have a chance to work on the web service itself, since it fails with module errors: the Reputation Web Service Controller raises an undefined method `strip` error. Although it sometimes works on the Expertiza team's side, we were never able to see the web service working. Instead, we created test scenarios and wrote Python code to simulate the algorithm.

- 1. We wrote code to simulate the hamer.rb algorithm as described in "A Method of Automatic Grade Calibration in Peer Assessment" by John Hamer, Kenneth T.K. Ma, and Hugh H.F. Kwong (https://crpit.scem.westernsydney.edu.au/confpapers/CRPITV42Hamer.pdf). It takes a list of reviewers and their grades for each reviewed assignment and computes the associated reputation weights.
    - Since the paper does not specify an initial weight for first-time reviewers, we gave them an initial weight of 1.
    - This code does not yet account for reviewers who already have reputation weights; support for them will be added soon.
    - We followed the algorithm in the paper to the letter, but even so, the example output values given in the paper did not match what we computed by hand and by code. Either we missed something or the algorithm has since been changed.
    - Testing against both Peerlogic and the mock showed that the current web service is not correct, since its returned values do not match the expected values, as can be seen in the picture. These expected values are what this project aims to reach.

- 2. In addition, we found that reputation_web_service_controller.rb is currently broken and needs refactoring. While the client side of the reputation web service page runs, any attempt to submit grades to the reputation web server results in an error.

- 3. We provided scenarios for future teams to implement once Peerlogic is running correctly.

- 4. We mocked an accurate web service and showed what the expected JSON should look like.

GitHub Links

Link to Expertiza repository: here

Link to the forked repository: here

Link to pull request: here

Link to Github Project page: here

Link to Testing Video: here

References

1. Expertiza on GitHub (https://github.com/expertiza/expertiza)

2. The live Expertiza website (http://expertiza.ncsu.edu/)

3. Pluggable reputation systems for peer review: A web-service approach (https://doi.org/10.1109/FIE.2015.7344292)