CSC/ECE 517 Spring 2016/E1738 Integrate Simicheck Web Service

| (18 intermediate revisions by 3 users not shown) | |||

| Line 59: | Line 59: | ||

This model '''has_many''' PlagiarismCheckerComparison models. It represents the results of the comparison among submissions for the assignment. As such it will contain all of the relevant fields that are shown in the view as described in the requirements. | This model '''has_many''' PlagiarismCheckerComparison models. It represents the results of the comparison among submissions for the assignment. As such it will contain all of the relevant fields that are shown in the view as described in the requirements. | ||

=== | === Diagrams === | ||

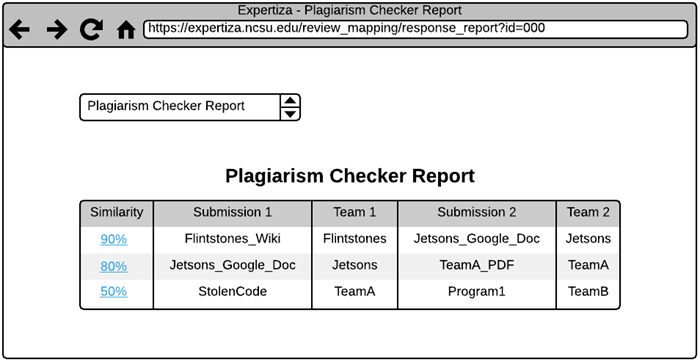

Typical overall system operation | Typical overall system operation: | ||

<br> | <br> | ||

[[File:Simicheck_API_-_SimiCheck.png]] | [[File:Simicheck_API_-_SimiCheck.png]] | ||

<br> | |||

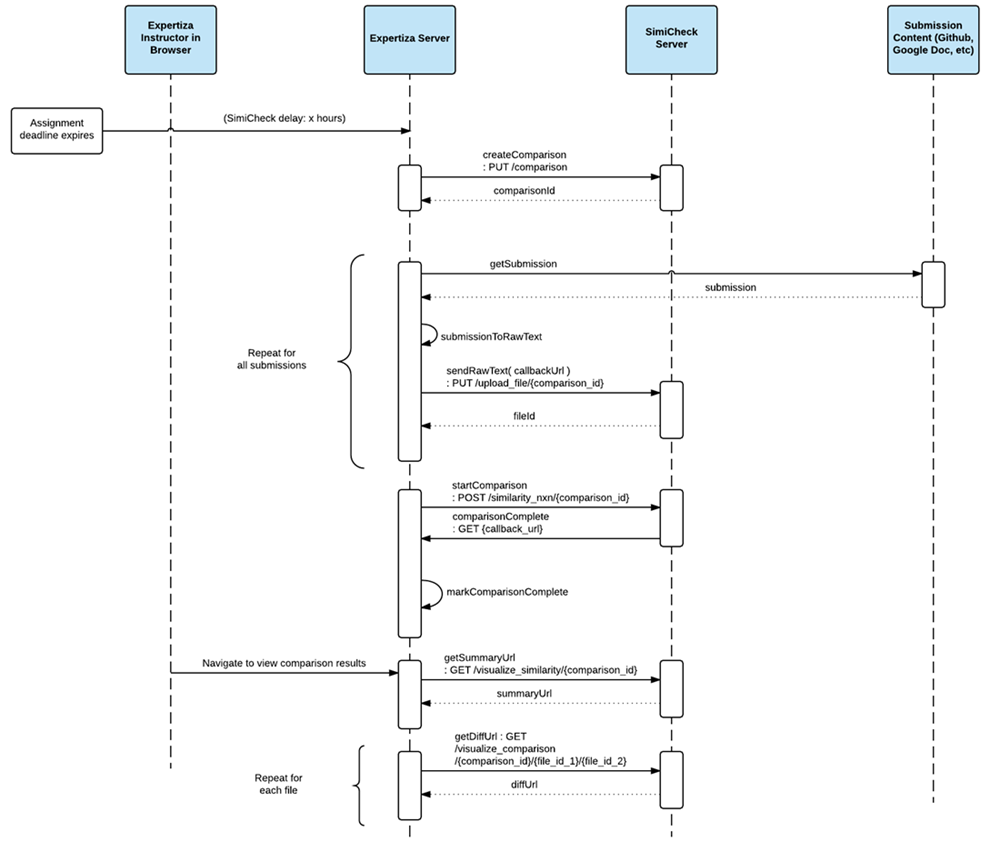

Class heirarchy in the fetchers: | |||

<br> | |||

[[File:SimiCheck_Fetchers.png]] | |||

=== User Interface === | === User Interface === | ||

The current assignment configuration UI | The current assignment configuration UI has been modified to contain 2 new select boxes. These select boxes determine how long to delay the Plagiarism Checker after an assignment's due date, and on what similarity percent to filter the Plagiarism Checker Comparison results. | ||

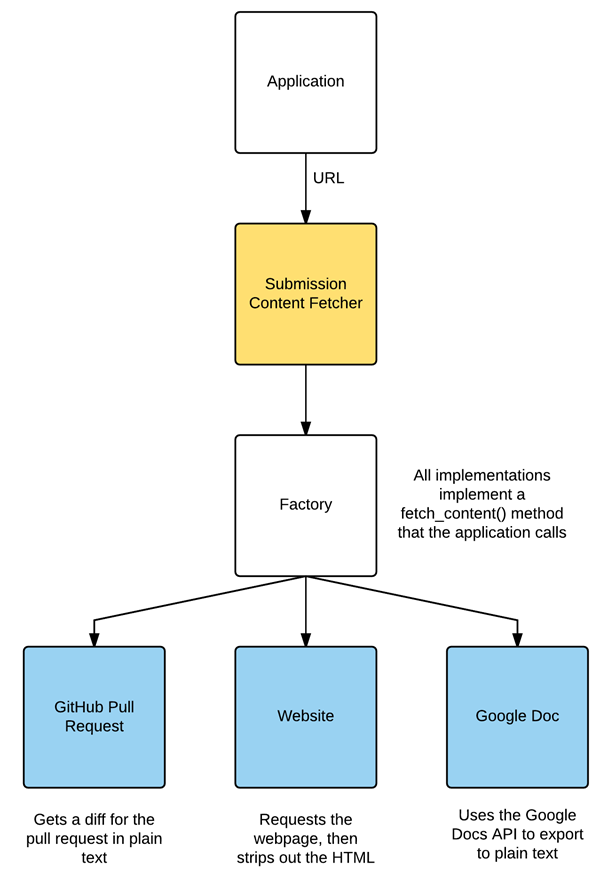

After the results have been aggregated they | After the results have been aggregated they can be viewed in a results report page. This report includes the submission names, the responsible teams, the similarity percentage, and a link to view the similarity results. The Plagiarism Checker Report UI looks similar to this: | ||

[[File:SimiCheck_View_Mockup.png]] | [[File:SimiCheck_View_Mockup.png]] | ||

To view the interface changes, login to Expertiza as an instructor; navigate to Manage... Assignments. Click "New public/private assignment". The last | To view the interface changes, login to Expertiza as an instructor; navigate to Manage... Assignments. Click "New public/private assignment". The last two fields on the New Assignment page say "SimiCheck Delay" and "SimiCheck Similarity Threshold". In "SimiCheck Delay", select a value between 0 and 100 to enable the Plagiarism Checker. In "SimiCheck Similarity Threshold", select a percentage value to filter the Plagiarism Checker Comparison results. The percentage refers to the percent of same text between two documents. | ||

After the assignment ends and the delay period has passed, you can view the Plagiarism Report. Click the "View review report" icon containing a magnifying glass and two people (in the third row of per-assignment icons). Select "Plagiarism Checker Report" from the select box, and click "Submit". If there is any plagiarism to report, it will load. | After the assignment ends and the delay period has passed, you can view the Plagiarism Report. Click the "View review report" icon containing a magnifying glass and two people (in the third row of per-assignment icons). Select "Plagiarism Checker Report" from the select box, and click "Submit". If there is any plagiarism to report, it will load. | ||

=== Task Triggering === | === Task Triggering === | ||

In Expertiza there already existed functionality to schedule or queue tasks for the task system. We have hooked into that system by adding a new task type declared as "compare_files_with_simicheck" and then providing the correct date/time configuration. When a task deadline occurs, there is a method that invokes logic based on the task type. Once this task type is detected on a scheduled task, the SimiCheck comparison is initiated. | |||

=== Code Sample === | |||

====Scheduled task expires, hook is called==== | |||

The following code was added to app/mailers/delayed_mailer.rb/perform: | |||

<code> | |||

if (self.deadline_type == "compare_files_with_simicheck") | |||

perform_simicheck_comparisons(self.assignment_id) | |||

end | |||

</code> | |||

== Testing Strategy == | |||

=== Automated Testing within Rails === | |||

We wrote unit tests for the new functionality that we implemented, this included models, helpers, SimiCheck logic etc.. In order to properly unit test we mocked all interfaces and black box tested the new functionality. Our test cases can be found in the following locations: | |||

*spec/models/website_fetcher_spec.rb | |||

*spec/models/google_doc_fetcher_spec.rb | |||

*spec/models/http_request_spec.rb | |||

*spec/models/simicheck_webservice_spec.rb | |||

*spec/models/submission_content_fetcher_spec.rb | |||

More specifically the fetcher model tests check that they will accept the proper URL only, and that an empty string is returned on request failure. Since the GitHub and Google Docs API fetchers do not do any scrubbing of the content after receipt there is no need to test this functionality. The website fetcher on the other hand is passed a mock response that includes HTML tags, and the test ensures that they are stripped. The fetcher factory checks that the correct fetcher is returned for a range of URLs, and nil for an unmatched URL. | |||

The test for HttpRequest checks that it will accept all possible valid URLs (exceptions like unresolved IP addresses are noted in a comment), since this static method is used by the fetchers to only accept valid URLs. Its get method to start and process a GET request is not unit tested since that is a wrapper for the Net::HTTP::Get. | |||

Lastly a unit test is provided for the Simicheck webservice to ensure that the logic we added to handle the API works. There is a delay during the testing of 30 seconds to prevent bombarding their servers with requests, also because the comparisons are not instantaneous. Essentially the test creates a comparison and runs it through the methods used to interact with the Simicheck REST API to ensure that it still works. One caveat is that a network connection is required which could lead to a false failure. | |||

All of the above unit tests here can be improved by using a gem that is designed for testing external APIs, like VCR for example, that will mock out low level calls to Net::HTTP::Get, etc, and can replay API responses. This is the best long term solution, however was not implemented in order to not break compatibility with the existing toolchain versions by adding another gem. A high level test using Capybara could also be added to click through the UI and using the factories to create test data as submissions, but there are many complexities to consider there and we opted not to implement here until an API testing gem would be added to the code to replay API responses and calls. | |||

Latest revision as of 06:19, 4 May 2017

Team BEND - Bradford Ingersoll, Erika Eill, Nephi Grant, David Gutierrez

Problem Statement

Given that submissions to Expertiza are digital in nature, the opportunity exists to utilize tools that automate plagiarism validation. One such tool is SimiCheck, which has a web service API that can be used to compare documents and check them for plagiarism. The goal of this project is to integrate the SimiCheck API into Expertiza so that an automated plagiarism check takes place once a submission is closed.

Background

This project has been worked on in previous semesters. The completed code from those projects did not clearly demonstrate successful integration with SimiCheck from Expertiza and was not deemed production-worthy. Based on this feedback, we started our development from scratch and used the previous project as a source of lessons learned.

Requirements

- Create a scheduled background task that starts a configurable amount of time after the due date of an assignment has passed

- The scheduled task should do the following:

  - Fetch the submission content using links provided by the student in Expertiza from only these sources:

    - External website or MediaWiki

      - GET request to the URL, then strip HTML from the content

    - Google Doc (not sheet or slides)

      - Google Drive API

    - GitHub project (not pull requests)

      - GitHub API; only the student or group’s changes will be used

  - Categorize the submission content as either a text submission or source code

  - Convert the submission content to raw text format to facilitate comparison

  - Use the SimiCheck API to check similarity among the assignment’s submissions

    - Notify the instructor that a comparison has started

    - Send the content of the submissions for each submission category

      - We will experiment with how many documents to send at a time

      - Note that each file is limited in size to 1 MB by SimiCheck

    - Wait for the SimiCheck comparison process to complete

      - We will provide SimiCheck with a callback URL to notify us when the comparison is complete; if this doesn’t work well, we will revert to polling

      - We will also provide an “Update Status” link to manually poll comparison status

    - Notify the assignment’s instructor that a comparison has been completed

- Visualize the results in a report

  - We will edit view/review_mapping/response_report.html.haml to include a new PlagiarismCheckerReport type and point to the plagiarism_checker_report partial.

  - Since SimiCheck has already implemented file diffs, links will be provided in the Expertiza view that lead to the SimiCheck website for each file combination

  - Comparison results for each category will be displayed within Expertiza in a table

    - Each row is a file combination with similarity, file names, team names, and diff link

    - Sorted in descending similarity

    - Uses available data from the SimiCheck API’s summary report
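The report table described in the last requirement can be sketched in plain Ruby. This is only an illustration: `ComparisonRow`, its field names, and the sample values are hypothetical and do not reflect the actual Expertiza schema or the SimiCheck summary format.

```ruby
# Hypothetical row type; field names are illustrative, not the Expertiza schema.
ComparisonRow = Struct.new(:similarity, :file_names, :team_names, :diff_link)

# Return the rows ordered from most to least similar.
def sort_rows_for_report(rows)
  rows.sort_by { |row| -row.similarity }
end

# Made-up sample data standing in for a SimiCheck summary.
rows = [
  ComparisonRow.new(12.5, %w[a.txt b.txt], %w[Team1 Team2], "https://example.com/diff/1"),
  ComparisonRow.new(87.0, %w[a.txt c.txt], %w[Team1 Team3], "https://example.com/diff/2"),
  ComparisonRow.new(40.0, %w[b.txt c.txt], %w[Team2 Team3], "https://example.com/diff/3")
]

# Print one line per file combination, highest similarity first.
sort_rows_for_report(rows).each do |row|
  puts format("%5.1f%%  %s  %s  %s",
              row.similarity,
              row.file_names.join(" vs "),
              row.team_names.join(" / "),
              row.diff_link)
end
```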

Expertiza Modifications

The majority of the updates are handled in new background tasks. Therefore, there weren't many modifications to existing Expertiza files. The current New Assignment interface has two new configuration parameters, which have also been added to the Assignment model. SimiCheck Delay (hours) and SimiCheck Similarity Threshold (percentage) were added. The Plagiarism Comparison Report was added to the "Review Report" interface's select box for a selected assignment.

Design

Pattern(s)

Given that this project revolves around integration with several web services, our team is planning to follow the facade design pattern to allow Expertiza to make REST requests to several APIs, including SimiCheck, GitHub, and Google Drive. This pattern is commonly used to abstract complex functionality behind a simpler interface and to encapsulate API changes, so that application code does not need to be updated if the service changes or a different one is used. We feel this is appropriate based on the requirements because we can create an easy-to-use interface within Expertiza that hides the actual API integration behind the scenes. With this in place, current and future Expertiza developers can use our simplified functionality without needing to understand the minuscule details of the API’s operation.
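As a rough illustration of the facade idea, the sketch below hides several service wrappers behind one method. Every name in it (`PlagiarismCheckFacade`, `StubFetcher`, `StubComparisonService`, `supports?`, `compare`) is hypothetical, and the stubs stand in for the real GitHub, Google Drive, and SimiCheck clients, which are not shown here.

```ruby
# Hypothetical facade: class and method names are illustrative only.
class PlagiarismCheckFacade
  def initialize(fetchers:, comparison_service:)
    @fetchers = fetchers
    @comparison_service = comparison_service
  end

  # Fetch each URL with the first fetcher that supports it, drop empty
  # results, and hand the remaining texts to the comparison service.
  def compare(urls)
    texts = urls.map { |url| fetch(url) }.reject(&:empty?)
    @comparison_service.compare(texts)
  end

  private

  # Returns "" when no fetcher recognizes the URL.
  def fetch(url)
    fetcher = @fetchers.find { |f| f.supports?(url) }
    fetcher ? fetcher.fetch(url) : ""
  end
end

# Stand-in for a real web-service wrapper (assumption, no network access).
class StubFetcher
  def initialize(prefix, content)
    @prefix = prefix
    @content = content
  end

  def supports?(url)
    url.start_with?(@prefix)
  end

  def fetch(_url)
    @content
  end
end

# Stand-in for the comparison service; it only counts the documents it receives.
class StubComparisonService
  def compare(texts)
    { documents_sent: texts.length }
  end
end

facade = PlagiarismCheckFacade.new(
  fetchers: [
    StubFetcher.new("https://github.com/", "source code"),
    StubFetcher.new("https://docs.google.com/", "essay text")
  ],
  comparison_service: StubComparisonService.new
)

result = facade.compare([
  "https://github.com/team/repo",
  "https://docs.google.com/document/d/abc",
  "ftp://unsupported.example/file"
])
puts result[:documents_sent]
```

Callers only see `compare`; swapping one stub for a different service wrapper would not change the calling code, which is the property the pattern is chosen for.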

Model(s)

There is no need to store the raw content sent to SimiCheck.

Assignment - SimiCheck delay and threshold added

We added "simicheck" and "simicheck_threshold" properties to the existing Assignment model.

The "simicheck" property accommodates the number of hours to delay the execution of the Plagiarism Checker after the assignment's due date. "simicheck" is -1 if there is no Plagiarism Checker scheduled, and between 0 and 100 (hours) if the assignment is to have a Plagiarism Checker Report.

The "simicheck_threshold" property is a percentage that filters the Plagiarism Checker's Similarity results. The threshold refers to the percentage of text that is the same between two documents.
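The two properties can be read as follows. This is a minimal sketch under stated assumptions: the helper names are hypothetical, the delay is assumed to be added directly to the due date, and treating the threshold as inclusive (`>=`) is a guess rather than something the description specifies.

```ruby
# Value the description gives for "no Plagiarism Checker scheduled".
SIMICHECK_DISABLED = -1

# Return the Time at which the check should run, or nil when disabled.
# due_at is a Time; delay_hours is the assignment's "simicheck" value.
def simicheck_run_time(due_at, delay_hours)
  return nil if delay_hours == SIMICHECK_DISABLED

  due_at + delay_hours * 3600 # Time + seconds
end

# Keep only similarity percentages that meet the threshold.
# Whether the real filter is inclusive is an assumption.
def above_threshold(similarities, threshold)
  similarities.select { |similarity| similarity >= threshold }
end

due = Time.utc(2017, 5, 1, 23, 59)
puts simicheck_run_time(due, 12)
p simicheck_run_time(due, SIMICHECK_DISABLED)
p above_threshold([10, 55, 80], 50)
```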

PlagiarismCheckerComparison

This model belongs_to the PlagiarismCheckerAssignmentSubmission model. It stores the file IDs returned from SimiCheck, the percent similarity between them, the Team IDs, and a URL to a detailed comparison (diff).

PlagiarismCheckerAssignmentSubmission

This model has_many PlagiarismCheckerComparison models. It represents the results of the comparison among submissions for the assignment. As such it will contain all of the relevant fields that are shown in the view as described in the requirements.

Diagrams

Typical overall system operation:

Class hierarchy in the fetchers:

User Interface

The current assignment configuration UI has been modified to contain two new select boxes. These select boxes determine how long to delay the Plagiarism Checker after an assignment's due date, and at what similarity percentage to filter the Plagiarism Checker Comparison results.

After the results have been aggregated they can be viewed in a results report page. This report includes the submission names, the responsible teams, the similarity percentage, and a link to view the similarity results. The Plagiarism Checker Report UI looks similar to this:

To view the interface changes, log in to Expertiza as an instructor and navigate to Manage... Assignments. Click "New public/private assignment". The last two fields on the New Assignment page are "SimiCheck Delay" and "SimiCheck Similarity Threshold". In "SimiCheck Delay", select a value between 0 and 100 to enable the Plagiarism Checker. In "SimiCheck Similarity Threshold", select a percentage value to filter the Plagiarism Checker Comparison results. The percentage refers to the share of text that is the same between two documents.

After the assignment ends and the delay period has passed, you can view the Plagiarism Report. Click the "View review report" icon containing a magnifying glass and two people (in the third row of per-assignment icons). Select "Plagiarism Checker Report" from the select box, and click "Submit". If there is any plagiarism to report, it will load.

Task Triggering

In Expertiza there already existed functionality to schedule or queue tasks for the task system. We have hooked into that system by adding a new task type declared as "compare_files_with_simicheck" and then providing the correct date/time configuration. When a task deadline occurs, there is a method that invokes logic based on the task type. Once this task type is detected on a scheduled task, the SimiCheck comparison is initiated.

Code Sample

Scheduled task expires, hook is called

The following code was added to the perform method in app/mailers/delayed_mailer.rb:

    if self.deadline_type == "compare_files_with_simicheck"
      perform_simicheck_comparisons(self.assignment_id)
    end

Testing Strategy

Automated Testing within Rails

We wrote unit tests for the new functionality that we implemented, including models, helpers, and SimiCheck logic. To unit test properly, we mocked all interfaces and black-box tested the new functionality. Our test cases can be found in the following locations:

- spec/models/website_fetcher_spec.rb

- spec/models/google_doc_fetcher_spec.rb

- spec/models/http_request_spec.rb

- spec/models/simicheck_webservice_spec.rb

- spec/models/submission_content_fetcher_spec.rb

More specifically, the fetcher model tests check that each fetcher accepts only the proper kind of URL and that an empty string is returned on request failure. Since the GitHub and Google Docs API fetchers do not scrub the content after receipt, there is no need to test that functionality for them. The website fetcher, on the other hand, is passed a mock response that includes HTML tags, and the test ensures that they are stripped. The fetcher factory test checks that the correct fetcher is returned for a range of URLs, and nil for an unmatched URL.
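The behavior these tests check can be sketched roughly as below. The class names carry a `Sketch` suffix to make clear they are hypothetical, the URL patterns are assumptions rather than the project's actual rules, and the regex-based tag stripping is a crude stand-in for whatever the real website fetcher does.

```ruby
# Hypothetical fetcher classes; URL patterns are assumptions for illustration.
class GithubFetcherSketch
  # Matches a repository root only, e.g. https://github.com/owner/repo
  def self.supports?(url)
    url.match?(%r{\Ahttps?://github\.com/[^/]+/[^/]+/?\z})
  end
end

class GoogleDocFetcherSketch
  def self.supports?(url)
    url.match?(%r{\Ahttps?://docs\.google\.com/document/})
  end
end

class WebsiteFetcherSketch
  # Fallback for any other http(s) URL.
  def self.supports?(url)
    url.match?(%r{\Ahttps?://})
  end

  # Crude tag removal for illustration; a real HTML parser is more robust.
  def self.strip_html(html)
    html.gsub(/<[^>]*>/, " ").squeeze(" ").strip
  end
end

# Order matters: the most specific fetchers are tried first.
FETCHERS = [GithubFetcherSketch, GoogleDocFetcherSketch, WebsiteFetcherSketch].freeze

# Return the first fetcher class that supports the URL, or nil if none does.
def fetcher_for(url)
  FETCHERS.find { |fetcher| fetcher.supports?(url) }
end

p fetcher_for("https://github.com/team/repo")
p fetcher_for("ftp://example.com/file")
puts WebsiteFetcherSketch.strip_html("<p>Hello <b>world</b></p>")
```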

The test for HttpRequest checks that its URL validation accepts all possible valid URLs (exceptions like unresolved IP addresses are noted in a comment), since this static method is used by the fetchers to accept only valid URLs. Its get method, which starts and processes a GET request, is not unit tested because it is a wrapper for Net::HTTP::Get.
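A URL check of this kind could look like the sketch below, which relies on Ruby's standard `uri` library. The method name `valid_http_url?` is hypothetical and the rules it applies (http or https scheme, non-empty host) are an assumption, not the project's actual validation logic.

```ruby
require "uri"

# Hypothetical validator: accepts only http(s) URLs that have a host.
def valid_http_url?(url)
  uri = URI.parse(url)
  # URI::HTTPS is a subclass of URI::HTTP, so both schemes pass this check.
  uri.is_a?(URI::HTTP) && !uri.host.nil? && !uri.host.empty?
rescue URI::InvalidURIError
  false
end

p valid_http_url?("https://example.com/page")
p valid_http_url?("ftp://example.com/file")
p valid_http_url?("not a url")
```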

Lastly, a unit test is provided for the SimiCheck web service to ensure that the logic we added to handle the API works. The test includes a 30-second delay, both to avoid bombarding SimiCheck's servers with requests and because the comparisons are not instantaneous. Essentially, the test creates a comparison and runs it through the methods used to interact with the SimiCheck REST API to ensure that they still work. One caveat is that a network connection is required, so a connectivity problem could cause a false failure.

All of the above unit tests could be improved by using a gem designed for testing external APIs, such as VCR, which mocks out low-level calls to Net::HTTP::Get and similar classes and can replay API responses. This is the best long-term solution, but we did not implement it, because adding another gem risked breaking compatibility with the existing toolchain versions. A high-level test using Capybara could also be added to click through the UI, using the factories to create test submissions. There are many complexities to consider there, however, so we opted not to implement it until an API testing gem is added to the code to replay API calls and responses.