CSC/ECE 517 Fall 2017/E1792 OSS Visualizations for instructors

This wiki page describes the changes made under E1792, OSS Visualizations for instructors.

About Expertiza

Expertiza is an open source project based on the Ruby on Rails framework. Expertiza allows the instructor to create new assignments and customize new or existing assignments. It also allows the instructor to create a list of topics the students can sign up for. Students can form teams in Expertiza to work on various projects and assignments. Students can also peer review other students' submissions. Instructors can also use Expertiza for interactive views of class performance and reviews.

Introduction

This project aims to improve the visualizations on certain pages related to reviews and feedback in the instructor's view of Expertiza. Graphs and tables would help instructors judge the outcomes of reviews and class performance on assignments, which in turn would ease the process of grading the reviews.

Problem Statement

- Issue 1: The scale runs from blue to green, which does not make sense, and the colors change randomly each time the page loads. It would be better to scale from red to green.

- Issue 2: Adjacent bars represent the responses in round 1 through round k. This makes sense only if the rubrics are the same in all review rounds. If the instructor uses the vary-rubric-by-round mechanism, this visualization will not make sense.

- Issue 3: The table on the View Scores page is presorted by team, but it can now also be sorted alphabetically. The cell view looks far too long and should be divided into partials.

- Issue 4: An interactive visualization or table that shows how a class performed on selected rubric criteria would be immensely helpful. It would show the instructors what they need to focus more attention on.

Approach to be followed to fix the issues

Issue 1: Each cell contains a link, but it is not clear what will happen when the link is clicked or which page will open.

Approach to solve issue 1: Each cell contains a link to a different page in Expertiza, but there is no way to tell which page a cell links to, so users do not know the destination before they click. Since every cell already contains a link, we will show hover text that displays the link to the destination page.
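The hover text idea can be illustrated with a minimal plain-Ruby sketch. It relies on the HTML `title` attribute, which browsers show as a tooltip on hover. The helper name `link_with_hover_text` and the example URL are hypothetical, not taken from the Expertiza code base; inside a Rails view the same effect would normally be achieved with the framework's own link helpers.

```ruby
require 'erb'

# Hypothetical helper (not part of Expertiza): builds an anchor tag whose
# title attribute holds the destination URL, so the browser shows the
# destination as a tooltip when the user hovers over the cell.
def link_with_hover_text(label, url)
  safe_url = ERB::Util.html_escape(url)     # escape &, <, >, " and '
  safe_label = ERB::Util.html_escape(label)
  %(<a href="#{safe_url}" title="#{safe_url}">#{safe_label}</a>)
end

# Example with a made-up destination path.
puts link_with_hover_text('4.5', '/response/view?id=42')
```

Escaping both the label and the URL keeps the generated markup well formed even when the cell text contains characters such as `<` or `&`.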

Issue 2: The scale runs from blue to green, which does not make sense, and the colors change randomly each time the page loads. It would be better to scale from red to green.

Approach to solve issue 2: The current color coding runs from blue to green and also changes randomly. We will use RGB color coding to make it run from red to green, and we will choose the color from the score expressed as a percentage. For example, a score between 0% and 20% is shown in red, and so on. This way the color coding is appropriate no matter which scale is being used.
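A minimal sketch of the percentage-based color coding is shown here. Only the first band (0% to 20% is red) comes from the description above; the number of bands, the intermediate colors, and the names `BAND_COLORS` and `color_for_score` are assumptions made for illustration.

```ruby
# Assumed palette: five 20%-wide bands running from red to green.
# Only "0-20% is red" is stated in the proposal; the rest is illustrative.
BAND_COLORS = [
  'rgb(255, 0, 0)',    # 0-20%
  'rgb(255, 128, 0)',  # 20-40%
  'rgb(255, 255, 0)',  # 40-60%
  'rgb(128, 255, 0)',  # 60-80%
  'rgb(0, 255, 0)'     # 80-100%
].freeze

# Hypothetical helper: converts a score on any scale to a percentage of
# that scale, then picks the color of the band the percentage falls in.
def color_for_score(score, min_score, max_score)
  range = max_score - min_score
  raise ArgumentError, 'max_score must exceed min_score' unless range.positive?

  percentage = ((score - min_score) * 100.0 / range).clamp(0.0, 100.0)
  band = [(percentage / 20).floor, BAND_COLORS.length - 1].min
  BAND_COLORS[band]
end

puts color_for_score(1, 1, 5)     # lowest score on a 1-5 scale: rgb(255, 0, 0)
puts color_for_score(95, 0, 100)  # top band on a 0-100 scale: rgb(0, 255, 0)
```

Because the color depends only on the percentage, the same score always gets the same color, which also removes the random changes between page loads.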

Issue 3: Adjacent bars represent the responses in round 1 through round k. This makes sense only if the rubrics are the same in all review rounds. If the instructor uses the vary-rubric-by-round mechanism, this visualization will not make sense.

Approach to solve issue 3: The review responses are shown in a single grid that contains all the reviews from round 1 to round n. This is acceptable when the rubric is the same for all rounds, but when the rubric differs from round to round the view does not make sense. We therefore plan to create a separate grid for each review round. This solves the problem when the rubrics differ and still works when they are the same.
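The per-round grids can be sketched in plain Ruby as a grouping step. The `ReviewResponse` struct and the `grids_by_round` helper are hypothetical stand-ins for illustration and do not mirror Expertiza's actual models.

```ruby
# Hypothetical record: one reviewer's scores for one review round.
ReviewResponse = Struct.new(:round, :reviewer, :scores)

# Groups responses by round so that each round gets its own grid.
# Rounds may have different numbers of criteria, since each grid is
# built only from the responses of that round.
def grids_by_round(responses)
  responses
    .group_by(&:round)
    .sort_by { |round, _| round }
    .map { |round, group| [round, group.map { |r| [r.reviewer, *r.scores] }] }
    .to_h
end

responses = [
  ReviewResponse.new(1, 'reviewer_a', [5, 4, 3]),
  ReviewResponse.new(2, 'reviewer_a', [4, 2]),
  ReviewResponse.new(1, 'reviewer_b', [3, 3, 5])
]

grids_by_round(responses).each do |round, rows|
  puts "Round #{round}"
  rows.each { |row| puts row.join(' | ') }
end
```

In this example round 1 uses a three-criterion rubric and round 2 a two-criterion rubric, and each is rendered as its own grid.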

Issue 4: The table is presorted by team, but it can now also be sorted alphabetically. The cell view looks far too long and should be divided into partials.

Approach to solve issue 4: We can either sort the select query alphabetically on the appropriate column, or let the user sort the table by a chosen criterion using a dynamic table format. The long view can be addressed with paging.
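A minimal in-memory sketch of alphabetical sorting combined with paging is shown here. The `TeamScore` struct and the helper names are hypothetical; in the application the sorting could equally be done in the database query or on the client side.

```ruby
# Hypothetical row of the scores table.
TeamScore = Struct.new(:team_name, :score)

# Sorts rows alphabetically (case-insensitively) on the given column.
def sort_alphabetically(rows, column)
  rows.sort_by { |row| row[column].to_s.downcase }
end

# Returns the rows that belong on the requested page (pages start at 1).
def page_of(rows, page:, per_page:)
  raise ArgumentError, 'page must be 1 or greater' if page < 1

  rows[(page - 1) * per_page, per_page] || []
end

rows = [
  TeamScore.new('delta', 88),
  TeamScore.new('Alpha', 92),
  TeamScore.new('charlie', 75),
  TeamScore.new('Bravo', 81)
]

sorted = sort_alphabetically(rows, :team_name)
page_of(sorted, page: 1, per_page: 3).each do |row|
  puts "#{row.team_name}: #{row.score}"
end
```

Sorting before paging keeps the page boundaries stable, so page 2 always continues where page 1 ended.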

Issue 5: An interactive visualization or table that shows how a class performed on selected rubric criteria would be immensely helpful. It would show me what I need to focus more attention on.

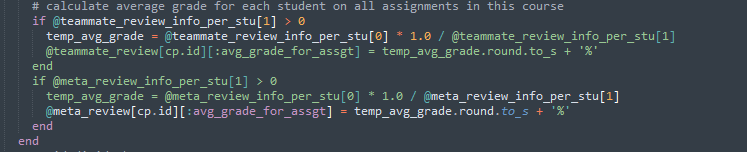

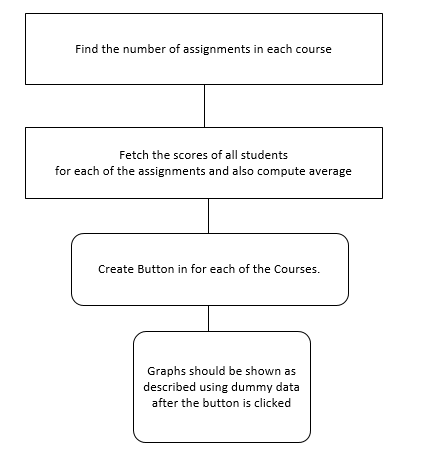

Approach to solve issue 5: The issue is about showing the performance of the entire class, graded using the 5 rubric criteria. A proposed solution is to use the logic below, from the controller assessment360_controller.rb, which calculates the average grade for each student over all assignments in a particular course.

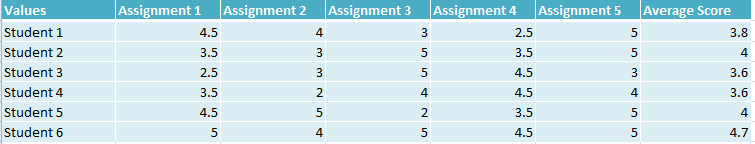

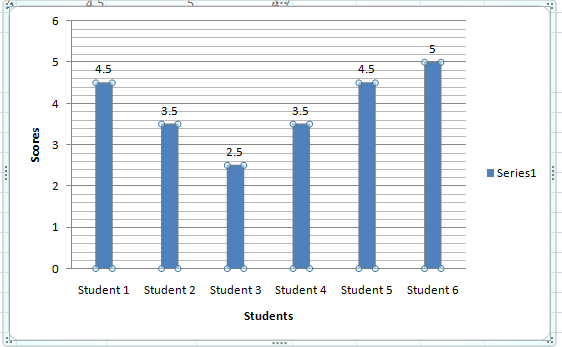

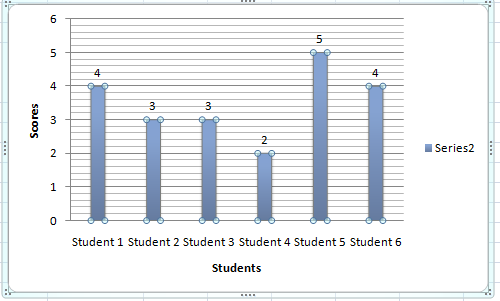

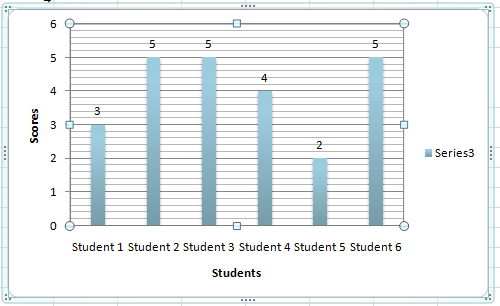

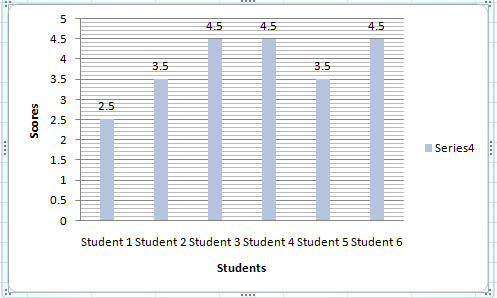

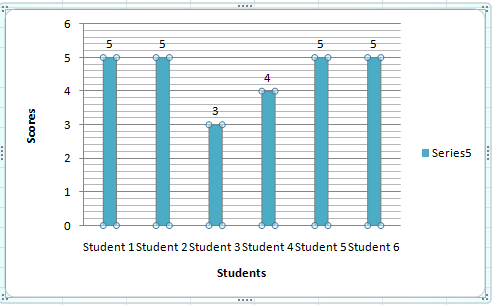

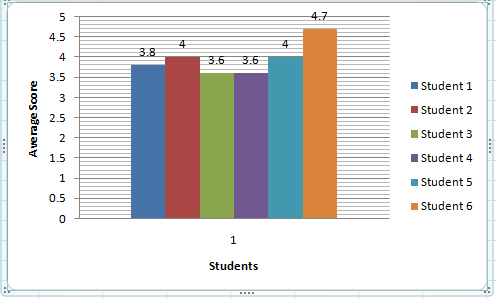
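The averaging step described above can be sketched in plain Ruby as follows. This does not reproduce the code in assessment360_controller.rb; the input shape (student name mapped to per-assignment grades) and the helper name `average_grades` are assumptions made for illustration, and the grades are dummy data.

```ruby
# Hypothetical input: student name => { assignment name => grade or nil }.
# A nil grade means the student has no score for that assignment.
def average_grades(grades_by_student)
  grades_by_student.transform_values do |grades|
    scored = grades.values.compact
    # Students with no scored assignment get nil instead of a misleading 0.
    scored.empty? ? nil : (scored.sum.to_f / scored.size).round(2)
  end
end

# Dummy data only.
grades = {
  'student_a' => { 'Assignment 1' => 90, 'Assignment 2' => 80 },
  'student_b' => { 'Assignment 1' => 70, 'Assignment 2' => nil },
  'student_c' => {}
}

average_grades(grades).each do |student, average|
  puts "#{student}: #{average.nil? ? 'no grades' : average}"
end
```

The resulting hash of averages is the kind of data a bar graph of class performance could be drawn from.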

The average grades of each student can be pulled and then used to plot a bar graph as shown below (created using dummy data):

Graph for class performance on Assignment 1:

Graph for class performance on Assignment 2:

Graph for class performance on Assignment 3:

Graph for class performance on Assignment 4:

Graph for class performance on Assignment 5:

Graph for average class performance:

The workflow would be approximately as in the diagram below:

Issue 6: Integration of review performance. The basic aim of this implementation is to combine an author's feedback on a review with the corresponding review by the reviewer, in order to build a method for grading the reviewers.

Basic rubrics to be considered:

- Number of reviews completed

- Length of reviews

- Summary of reviews

- Whether reviewers added a file or link to their review

- The average ratings they received from the author.

- A graph or table is preferred for easing this change.

Approach to solve issue 6: The entire focus is on the extent to which a review could help the author. The author's feedback is always available in the form of numerical scores. We intend to capture the author's scores for the review topic-wise, for example the tone of the review, plausible solutions to problems suggested by the reviewer, and how much the review helped the author improve (as scored by the author).

- In each round of review, the author's feedback is noted topic-wise as suggested in the previous step, and a graph comparing the "n" rounds of reviews can be generated.

- If the reviewer added a legitimate file or link to their review, we propose to give the reviewer a few extra credits that can be added to their final grade.

The author's feedback can be taken as an average score, and if that value exceeds a threshold, the reviews are considered meaningful.

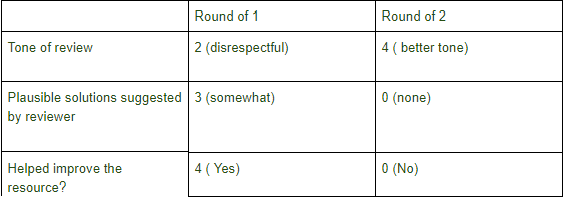

For example, consider the author's feedback for 2 rounds of reviews as follows:

Observations: The mean score of round 1 is 3 and the mean score of round 2 is 1.9.

Suppose we take into account that round 1 has more importance than the second one because it helps a reviewer improve, and also that every reviewer should provide at least 2 suggestions or comments that help authors improve. The threshold is then taken to be 2, and the mean score of round 1 (3) exceeds it.

Therefore, the reviews were meaningful and deserve credit in the higher range of grades. If the mean score of round 1 is below the threshold, the mean score of round 2 is compared with the threshold instead.

Overall:

- Mean of round 1 > threshold: higher range of grades for the reviewer.

- Mean of round 1 < threshold: check whether mean of round 2 > threshold. If true, medium range of grades for the reviewer. Else, lower range of grades.
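The decision rule above can be sketched as a small Ruby function. The function name `reviewer_grade_range` and the symbols it returns are hypothetical. Two details are assumptions, because the rule does not specify them: a mean exactly equal to the threshold is treated as not exceeding it, and a missing round 2 mean (`nil`) is treated as not exceeding the threshold.

```ruby
# Hypothetical sketch of the proposed rule. Returns :higher, :medium or
# :lower, the grade range the reviewer falls in.
# Assumptions: a mean equal to the threshold does not exceed it, and a nil
# round 2 mean (no second round) does not exceed it either.
def reviewer_grade_range(round1_mean, round2_mean, threshold: 2.0)
  return :higher if round1_mean > threshold
  return :medium if !round2_mean.nil? && round2_mean > threshold

  :lower
end

# Worked example from the text: round 1 mean is 3, round 2 mean is 1.9,
# threshold is 2, so the reviewer lands in the higher range.
puts reviewer_grade_range(3, 1.9)
```

Round 2 is consulted only when round 1 does not exceed the threshold, which reflects the greater weight the proposal gives to the first round.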

Once the range in which a reviewer deserves credit has been decided, the other factors, such as the summary and length of the review, are taken into account by the instructors and graded according to how effectively the review explains its points. If a reviewer misses the second round of review, only the first round is taken into consideration. The instructor should assume that all but the last round of reviews are crucial to improving a document.

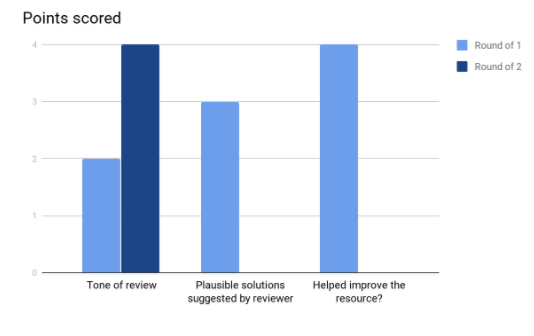

Graphs: These can be generated to compare the effectiveness of the "n" rounds of reviews for the authors, based on the authors' scores.

In this case (only an example visualization):

Observations: The graph and table together suggest that round 1 of the review actually had an impact on the author.

Test Plan

Issue 1: Check that the link matches the hover text.

- 1) Log in as instructor.

- 2) Go to the View Scores page. Hover over a cell.

- 3) You will see a link displayed upon hovering. Click on the cell.

- 4) If the system directs you to the link that was displayed upon hovering, then the test passed.

Issue 2: Check the color coding when different scores are given on different scales.

- 1) Log in as instructor.

- 2) Create a dummy assignment and some dummy reviews for it. Log out.

- 3) Log in as a student. Attempt the assignment. Log out.

- 4) Log in as another student and repeat step 3.

- 5) Log in as either student and attempt the review. Log out.

- 6) Log in as instructor. Go to the Review grades page and check the table. If the color code ranges from red (for the lowest score) to green (for the highest score), then the test passed.

Issue 3: Enter multiple reviews with different rubrics and check that a separate table/grid is shown for each review round.

- 1) Log in as instructor.

- 2) Create an assignment. Select 2 reviews. Also select different rubrics for both reviews.

- 3) Log in as a student ("A") and submit the assignment. Repeat this for another student ("B").

- 4) Log in as student A and perform the review. Do this for student B too.

- 5) Now resubmit the assignment as students A and B.

- 6) Resubmit reviews as students A and B. This time the rubrics will be different from the previous round.

- 7) Now log in as instructor and view the visualization of the reviews. You should see a different graph for each round.

Issue 4: Check that the table can be sorted alphabetically on the appropriate column.

- 1) Log in as instructor.

- 2) Create a dummy assignment with some teams. Log out.

- 3) Log in as a student, attempt the assignment, and log out.

- 4) Repeat step 3 for all dummy teams.

- 5) Log in as instructor.

- 6) Go to the View Scores page. Check the grade table.

- 7) Click on a column header and check whether the data in it gets sorted alphabetically. If yes, then the test passed.

Issue 5: Check that the graphs match the students' scores.

- 1) Log in as the instructor.

- 2) Click on the button to compute graphs.

- 3) Compare the bar graphs with the separate scores of students in each assignment.

Issue 6: Since a new algorithm is being proposed, we intend to test it manually first using dummy datasets. Automated test cases can be added later.

- 1) Log in as an instructor and create a dummy assignment with 2 rounds of review submissions. Assign 2 teams of 1 student each to that assignment. Also, set the deadline of the first review to "1 hour". Log out.

- 2) Log in as a student and submit some data for the assignment. Log out.

- 3) Log in as the second student and submit the same assignment using dummy data. Log out.

The steps above set up the data needed to reach the review stage.

- 4) Log in as the first student, go to "Other's work" and request a document to review. By default, you will be assigned the document of the second student. Once assigned, complete that review with dummy scores. Log out.

- 5) Log in as the second student. Check the review on the "Your scores" page and click on the section "Show review". There you can provide scored feedback to the reviewer. Be sure to take into account the tone, the solutions suggested, and how much the review will help you. Log out.

- 6) Log in as the first student after the deadline for the first review has passed. Go to "Other's work" and request a new submission. You will be assigned the same document of the other student to review for the second round. Follow step 4.

- 7) Log in as the second student and follow step 5.

- 8) The system now has the author's feedback and the reviewer's data, and will calculate the reviewer's score according to the ranges of credit (lower range, medium range and higher range) set by the instructor.

- Edge cases: These may include the reviewer adding a link in the review document and checking how the grade is increased.

Another case is when the author's feedback isn't available: the reviewer's score would then depend solely on the reviewer's own reviews and the instructor's discretion, because the algorithm cannot be applied in this case.

References

- Expertiza repo : https://github.com/expertiza/expertiza