CSC/ECE 517 Fall 2015 E1580 Text metrics

| Line 36: | Line 36: | ||

=== Design Patterns === | === Design Patterns === | ||

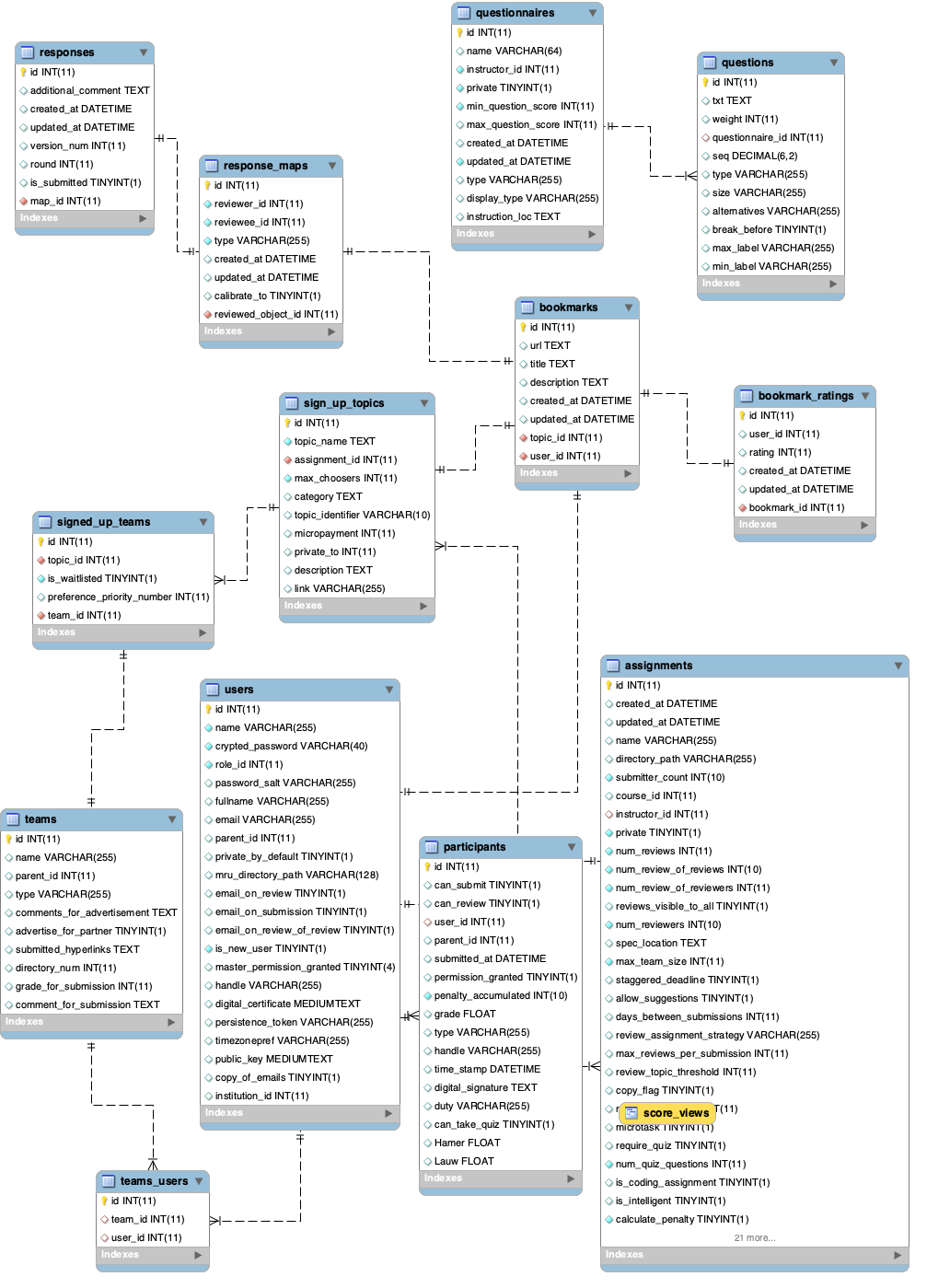

'''Iterator Pattern<ref>https://en.wikipedia.org/wiki/Iterator_pattern</ref>:'''The iterator design pattern uses an iterator to traverse a container and access its elements.In our implementation, we | '''Iterator Pattern<ref>https://en.wikipedia.org/wiki/Iterator_pattern</ref>:'''The iterator design pattern uses an iterator to traverse a container and access its elements.In our implementation, we have iterated over the comments in each response(answers table) relating to a particular round to calculate the individual metrics. Also while calculating the aggregate metrics we have iterated through the review_metrics table to read the metric values for a particular user using all the responses for the particular response map corresponding to the submission of the review. | ||

=== Database Table Design === | === Database Table Design === | ||

Revision as of 04:47, 5 December 2015

Introduction

Expertiza is an open-source, peer-review-based web application that allows for incremental learning. Students can submit learning objects such as articles, wiki pages, and repository links and, with the help of peer reviews, improve them. The project has been developed using the Ruby on Rails framework and is supported by the National Science Foundation.

Project

Purpose

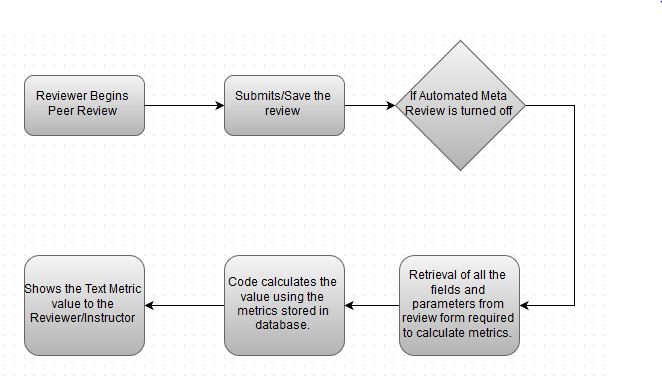

The current version of the Expertiza project has an automated meta-review system in which the reviewer receives an e-mail containing various metrics of the review, such as relevance, plagiarism, and number of words, whenever a review is submitted. The purpose of this project is to give students some metrics on the content of the review when the automated meta-reviews are disabled. This also includes the addition of new relevant metrics which can help reviewers and instructors gain insight into the reviews.

Scope

The scope of the project is limited to creating a system where reviewers and instructors can view metrics for the submitted reviews. The user or the instructor needs to visit the link manually to view the metrics. The instructor can view the metrics for every assignment available, whereas the user can view only the metrics of his or her own assignments. The scope doesn't include any automated viewing of reports for the metrics. Since the project is mainly about reporting on existing data, we will not modify the results saved by the actual peer-review mechanism. The scope also excludes any change to the actual peer-review process, i.e. submitting a review of an assignment or adding an assignment to a user profile.

Task Description

The project requires completion of the following tasks:

- Create a database table to record all the metrics.

- Create code to calculate the values of the metrics, and ensure that the code runs fast enough (results within 5 seconds), as the current auto text metrics functionality is very slow and diminishes the user experience.

- Create views for both students and instructors that show, for each assignment:

  - the total number of words

  - the average number of words across all the reviews for the particular assignment in a particular round

  - whether there are suggestions in each reviewer's review

  - the percentage of peer reviews that offer any suggestions

  - whether problems or errors are pointed out in the reviews

  - the percentage of peer reviews that point out problems in this assignment in this round

  - whether any offensive language is used

  - the percentage of peer reviews containing offensive language

  - the number of different words in a particular reviewer’s review

  - the number of questions responded to with complete sentences

- Make the code work for assignments with and without the "vary rubric by rounds" feature.

- Create tests to make sure the test coverage increases.
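
The per-assignment figures in the list above (the average word count and the percentage of reviews that contain suggestions, problems, or offensive language) can be derived from per-review counts. The following is a minimal sketch in plain Ruby, not the project's actual code. The hash keys, `SAMPLE_REVIEWS`, `percentage_with`, and `average_word_count` are hypothetical names chosen to mirror the metrics listed.

```ruby
# Hypothetical per-review metric rows for one assignment round.
# The keys are assumptions chosen to mirror the metrics listed above.
SAMPLE_REVIEWS = [
  { total_word_count: 120, suggestions_count: 2, error_count: 0, offensive_count: 0 },
  { total_word_count: 80,  suggestions_count: 0, error_count: 1, offensive_count: 0 },
  { total_word_count: 40,  suggestions_count: 1, error_count: 3, offensive_count: 1 },
  { total_word_count: 160, suggestions_count: 0, error_count: 0, offensive_count: 0 }
].freeze

# Percentage of reviews whose count for the given key is greater than zero.
def percentage_with(reviews, key)
  return 0.0 if reviews.empty?

  100.0 * reviews.count { |r| r[key].positive? } / reviews.size
end

# Average number of words per review.
def average_word_count(reviews)
  return 0.0 if reviews.empty?

  reviews.sum { |r| r[:total_word_count] }.to_f / reviews.size
end

puts format('Average words: %.1f', average_word_count(SAMPLE_REVIEWS))
puts format('With suggestions: %.1f%%', percentage_with(SAMPLE_REVIEWS, :suggestions_count))
puts format('With problems: %.1f%%', percentage_with(SAMPLE_REVIEWS, :error_count))
puts format('With offensive language: %.1f%%', percentage_with(SAMPLE_REVIEWS, :offensive_count))
```

Both helpers return 0.0 for an empty list so that an assignment with no reviews yet does not cause a division by zero.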

Workflow

Design

Design Patterns

Iterator Pattern<ref>https://en.wikipedia.org/wiki/Iterator_pattern</ref>: The iterator design pattern uses an iterator to traverse a container and access its elements. In our implementation, we iterate over the comments in each response (answers table) relating to a particular round to calculate the individual metrics. When calculating the aggregate metrics, we iterate through the review_metrics table to read the metric values for a particular user, using all the responses for the response map corresponding to the submission of the review.
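
In Ruby the iterator pattern is usually expressed through `each` and the `Enumerable` module. The sketch below illustrates the idea with a hypothetical `ResponseComments` container standing in for the comments of one response. The class name and sample data are assumptions, and the project's real code iterates over database records instead.

```ruby
# Hypothetical stand-in for the comments stored in the answers table
# for one response; the class name is an assumption for illustration.
class ResponseComments
  include Enumerable

  def initialize(comments)
    @comments = comments
  end

  # Enumerable builds map, sum, count, and so on from each, so callers
  # never touch the underlying array directly.
  def each(&block)
    return enum_for(:each) unless block_given?

    @comments.each(&block)
    self
  end
end

comments = ResponseComments.new(
  ['The introduction is clear.', 'Please add more examples.', '']
)

# Internal iteration: traverse once, accumulating a word count.
total_words = comments.sum { |comment| comment.split.size }
puts "Total words: #{total_words}"

# External iteration: pull elements one at a time from an Enumerator.
iterator = comments.each
puts "First comment: #{iterator.next}"
```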

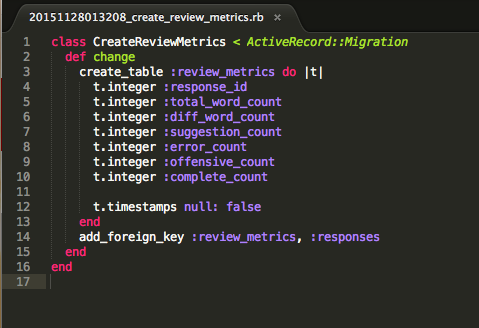

Database Table Design

The image shows the schema for the new table which will be created to store the calculated values of the metrics. Its attributes are explained below:

- response_id: This attribute is captured when a reviewer submits a review and is passed on to the ReviewMetric model. It is used to link the metrics to a particular reviewer-response map.

- total_word_count: This attribute contains the total number of words for a particular review (response_id).

- diff_word_count: This attribute contains the number of different words for a particular review (response_id).

- suggestions_count: This column holds the number of suggestions given per review.

- error_count: This field contains the number of comments which point to errors in the code.

- offensive_count: This attribute contains the number of comments containing offensive words.

- complete_count: This contains the number of comments which have complete sentences in them.
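
To make the shape of one row concrete, here is a minimal sketch in plain Ruby rather than the actual Rails model or migration. The column names come from the list above. The `ReviewMetricRow` and `COUNT_COLUMNS` names, the integer counts, and the zero defaults are assumptions for illustration.

```ruby
# One row of review_metrics modelled as a plain Struct (not the real
# ActiveRecord model). Integer counts and zero defaults are assumptions.
COUNT_COLUMNS = %i[
  total_word_count diff_word_count suggestions_count
  error_count offensive_count complete_count
].freeze

ReviewMetricRow = Struct.new(:response_id, *COUNT_COLUMNS, keyword_init: true) do
  # Build a row for a response with every count starting at zero.
  def self.blank(response_id)
    new(response_id: response_id, **COUNT_COLUMNS.to_h { |column| [column, 0] })
  end
end

row = ReviewMetricRow.blank(42)
row.total_word_count = 120
row.diff_word_count = 85
puts row.to_h
```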

Use Cases

- View Reviews Text Metrics as Reviewer: A reviewer can see the text metrics of individual reviews as well as aggregate metrics for all the reviews done for an assignment/project.

- View Reviews Text Metrics as Instructor: An instructor can see the text metrics of reviews received by any team for a particular project/assignment. The instructor can also see the text metrics of the reviews done by any reviewer.

Test Plan

- For use case 1, test whether the text metrics database table has entries populated for each type of metric (number of words, number of offensive words, etc.) once the reviewer submits a review.

- For use case 2, test whether the instructor can see the text metrics of reviews received by each team for a project/assignment. Also, test whether the instructor can see the text metrics of the reviews done by any reviewer.

Details of Requirements

Hardware requirements

- Computing Power: Same as the current Expertiza system.

- Memory: Same as the current Expertiza system.

- Disk Storage: Same as the current Expertiza system.

- Peripherals: Same as the current Expertiza system.

- Network: Same as the current Expertiza system.

Software requirements

- Operating system environment: Windows/UNIX/OS X based OS

- Networking environment: Same as it is used in the current Expertiza system

- Tools: Git, Interactive Ruby

Implementation

UML Diagram

Tasks Implemented

The project requires completion of the following tasks:

- Create a database table to record all the metrics

  - A new MVC component named ReviewMetric has been added to the project.

  - The review_metrics table is used for this purpose. Its columns, described under Database Table Design, record data for each response, i.e. each review submitted.

  - A new row is inserted into the table when a new review is saved or submitted, and the corresponding row is updated when an existing review is re-edited or resubmitted.

- Create code to calculate the values of the metrics, and ensure that the code runs fast enough (results within 5 seconds), as the current auto text metrics functionality is very slow and diminishes the user experience.

  - The code that evaluates the metric values for each review is in the calculate_metric method of the model review_metric.rb.

  - This code is called from the saving method in response_controller.rb. The saving method is called, after the necessary processing, when a review is saved or submitted. The calculate_metric method is called at the end of the saving method to analyse the review and save the resulting information.

  - The calculation code uses the response_id of the review to pull the saved review content from the Answer table. It keeps three sets of words: offensive words, suggestive words, and problem-pointing words. The method then calculates the following:

    - Total number of words in the review

    - Number of different words in the review

    - Number of offensive words in the review: the method uses the set of offensive words as a dictionary and compares it with each word in the review

    - Number of words which signal a suggestion in the review: the method uses the set of suggestive words as a dictionary and compares it with each word in the review

    - Number of words which signal a problem being pointed out in the review: the method uses the set of problem-pointing words as a dictionary and compares it with each word in the review

    - Number of questions responded to with complete sentences: a sentence with more than seven words qualifies as a complete sentence
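
The calculation described in the list above can be sketched in plain Ruby as follows. This is an illustration under stated assumptions, not the project's calculate_metric method. The three word lists are placeholders (the real dictionaries are not reproduced in this document), `tokenize`, `calculate_metrics`, and `save_metrics` are hypothetical names, and a hash stands in for the review_metrics table. The sketch follows the word-level counting described in this list.

```ruby
require 'set'

# Placeholder word lists; the project's actual dictionaries are not
# reproduced in this document, so these entries are assumptions.
OFFENSIVE_WORDS  = Set.new(%w[stupid idiot]).freeze
SUGGESTION_WORDS = Set.new(%w[should could suggest consider recommend]).freeze
PROBLEM_WORDS    = Set.new(%w[error bug wrong missing incorrect]).freeze

# Lower-case a piece of text and split it into words, dropping punctuation.
def tokenize(text)
  text.downcase.scan(/[a-z0-9']+/)
end

# Compute the six metrics for one review, given its comments
# (one string per rubric question).
def calculate_metrics(comments)
  words = comments.flat_map { |comment| tokenize(comment) }

  # A question counts as answered with a complete sentence when any
  # sentence in its comment has more than seven words.
  complete = comments.count do |comment|
    comment.split(/[.!?]+/).any? { |sentence| tokenize(sentence).size > 7 }
  end

  {
    total_word_count: words.size,
    diff_word_count: words.uniq.size,
    offensive_count: words.count { |w| OFFENSIVE_WORDS.include?(w) },
    suggestions_count: words.count { |w| SUGGESTION_WORDS.include?(w) },
    error_count: words.count { |w| PROBLEM_WORDS.include?(w) },
    complete_count: complete
  }
end

# In-memory stand-in for the review_metrics table, keyed by response_id:
# saving the same response again overwrites its existing row.
def save_metrics(table, response_id, comments)
  table[response_id] = calculate_metrics(comments).merge(response_id: response_id)
end

table = {}
save_metrics(table, 1, ['You should fix the error in the second paragraph of the introduction.',
                        'Good work.'])
puts table[1]
```

Using sets for the dictionaries keeps each word lookup at constant time, so the cost grows only with the length of the review, which fits the 5-second target stated in the task.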

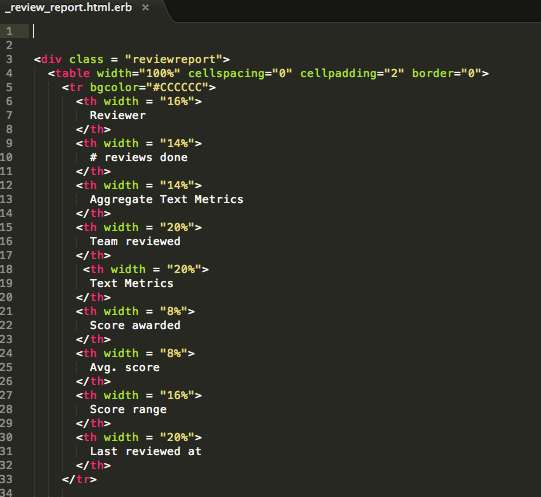

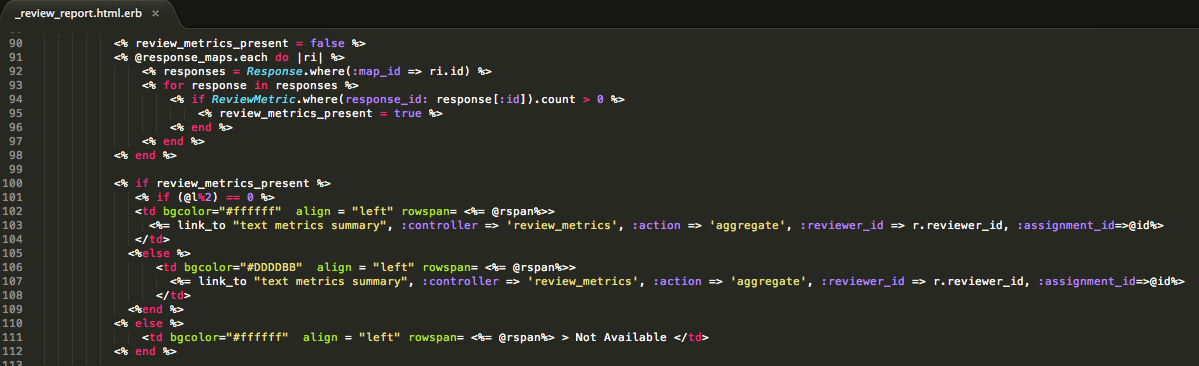

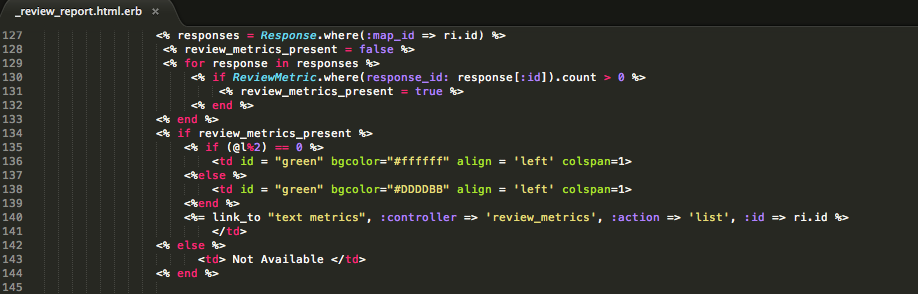

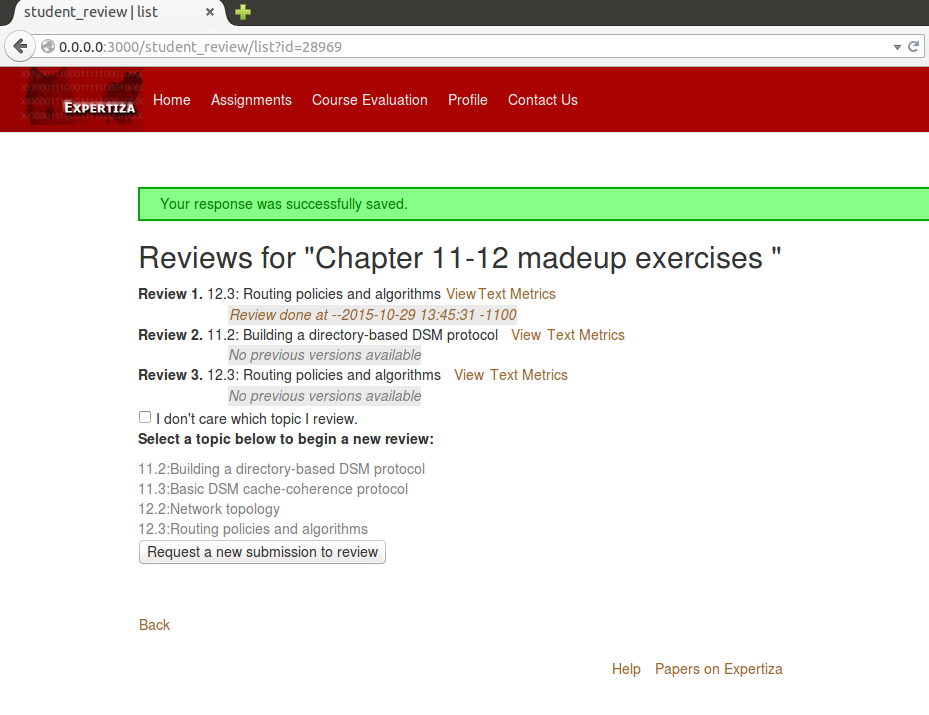

- Create partials for both students and instructors:

- Views are created for both students and instructors to display the text metrics calculated for each review and assignment. These views are accessible through links in the student report page and instructor _review_report.html.erb page

- The following are screenshots where the links are included in the above mentioned pages

- The following is a screenshot when a student saves or submits a review

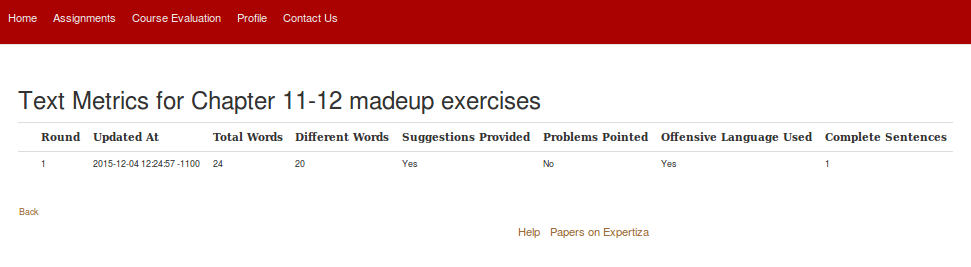

- The following is a screenshot when the student clicks the View Text Metrics link

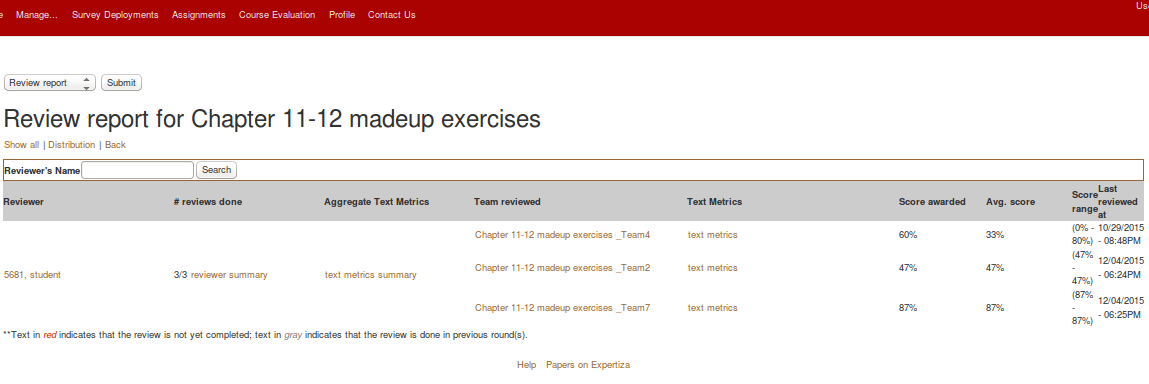

- The following is a screenshot when an instructor uses the View Review Report link at the assignments page for a given assignment

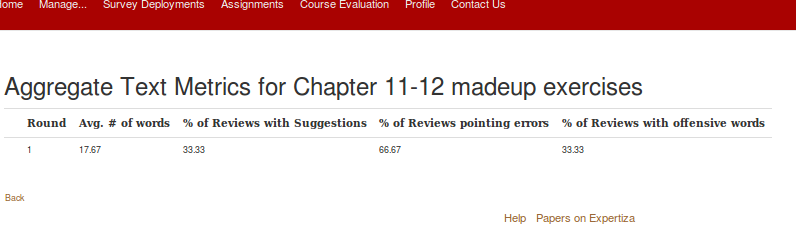

- The following is a screenshot when the instructor clicks the text metrics summary link

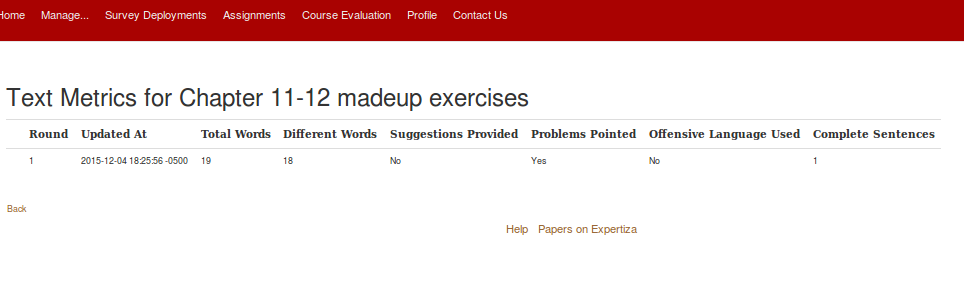

- The following is a screenshot when the instructor clicks the individual text metrics link

- Make the code work for an assignment with and without the "vary rubric by rounds" feat

- The calculate_metric code works for each review submission in a way where it uses the response_id of each review saved/submitted to find the review text saved in Answer table. The entire review text is then thoroughly checked to calculate the required metrics. Hence, any variation in the review rubrics does not affect this metric calculation.
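
The rubric independence described above follows from looking up answers by response_id alone. A minimal plain-Ruby sketch of that lookup is shown here. The `ANSWERS` rows, their ids, and the `comments_for` helper are hypothetical, and the real code queries the Answer table through the Rails model.

```ruby
# Hypothetical rows of the answers table. Responses 10 and 11 belong to
# different rounds with different rubrics, so their question ids differ.
ANSWERS = [
  { response_id: 10, question_id: 1,  comments: 'Clear abstract.' },
  { response_id: 10, question_id: 2,  comments: 'Add more tests.' },
  { response_id: 11, question_id: 57, comments: 'Much improved in round two.' }
].freeze

# Collect the comments for one response. Only response_id is consulted,
# so the lookup is the same whichever rubric produced the answers.
def comments_for(answers, response_id)
  answers.select { |answer| answer[:response_id] == response_id }
         .map { |answer| answer[:comments] }
end

puts comments_for(ANSWERS, 10).inspect
puts comments_for(ANSWERS, 11).inspect
```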

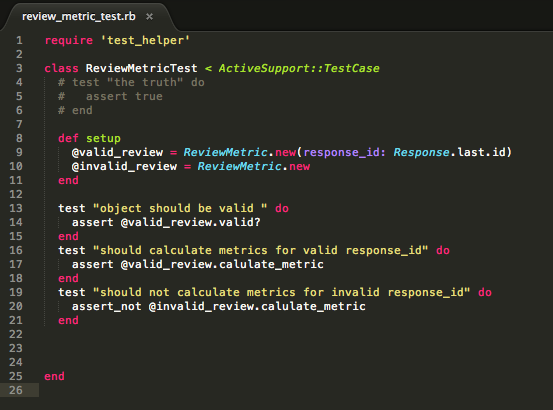

Unit Tests

- Tested for a valid object, i.e. a valid ReviewMetric entry.

- Tested the ReviewMetric model so that it only calculates the metrics for a ReviewMetric entry with a valid response_id.

- Tested the ReviewMetric model so that it does not calculate the metrics for a ReviewMetric entry with an invalid response_id.
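
The three checks above could look roughly like the following sketch. It uses Minitest because it ships with Ruby, which is an assumption about tooling and not a statement of the framework the project used. `ReviewMetricSketch` is a hypothetical, simplified stand-in for the ReviewMetric model, with a list of known ids replacing the database lookup.

```ruby
require 'minitest/autorun'

# Hypothetical, simplified stand-in for the ReviewMetric model: metrics
# are calculated only when response_id refers to a known response.
class ReviewMetricSketch
  attr_reader :response_id, :total_word_count

  def initialize(response_id, known_response_ids)
    @response_id = response_id
    @known_response_ids = known_response_ids
    @total_word_count = nil
  end

  def valid?
    @known_response_ids.include?(response_id)
  end

  # Returns true and stores the count when valid; returns false otherwise.
  def calculate_metric(text)
    return false unless valid?

    @total_word_count = text.split.size
    true
  end
end

class ReviewMetricSketchTest < Minitest::Test
  KNOWN_IDS = [1, 2, 3].freeze

  def test_valid_entry
    assert ReviewMetricSketch.new(1, KNOWN_IDS).valid?
  end

  def test_calculates_for_valid_response_id
    metric = ReviewMetricSketch.new(2, KNOWN_IDS)
    assert metric.calculate_metric('two words')
    assert_equal 2, metric.total_word_count
  end

  def test_does_not_calculate_for_invalid_response_id
    metric = ReviewMetricSketch.new(99, KNOWN_IDS)
    refute metric.calculate_metric('two words')
    assert_nil metric.total_word_count
  end
end
```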

Reference

<references/>