CSC/ECE 506 Spring 2013/10a os

Prefetching and Memory Consistency Models

Cache Misses

Cache misses can be classified into four categories: conflict, compulsory, capacity [3], and coherence. Conflict misses are misses that would not occur if the cache was fully-associative and had LRU replacement. Compulsory misses are misses required in any cache organization because they are the first references to an instruction or piece of data. Capacity misses occur when the cache size is not sufficient to hold data between references. Coherence misses are misses that occur as a result of invalidation to preserve multiprocessor cache consistency.The number of compulsory and capacity misses can be reduced by employing prefetching techniques such as by increasing the size of the cache lines or by prefetching blocks ahead of time.

Prefetching

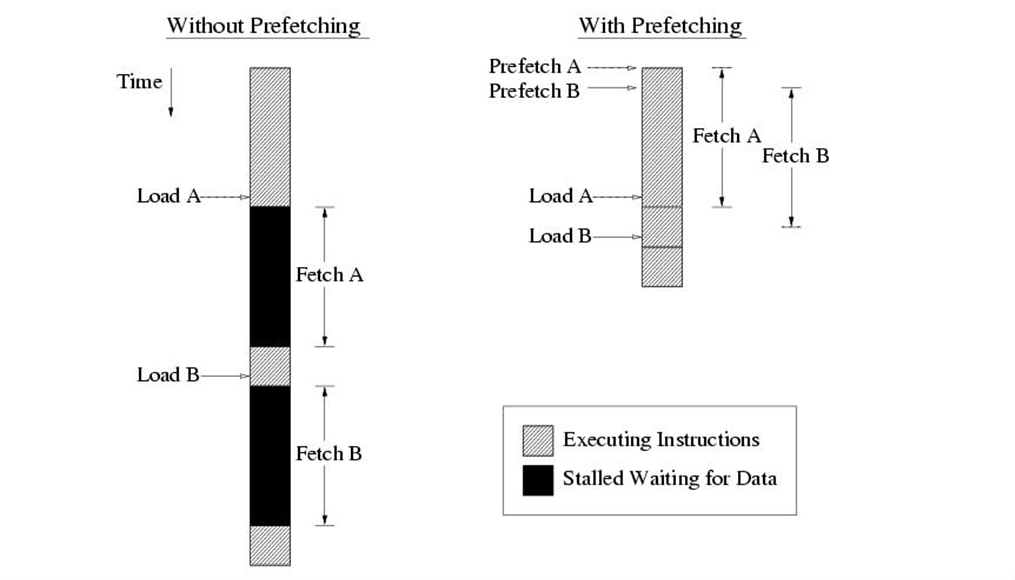

Prefetching is a common technique to reduce the read miss penalty. Prefetching relies on predicting which blocks currently missing in the cache will be read in the future and on bringing these blocks into the cache prior to the reference triggering the miss. Prefetching approaches proposed in the literature are software or hardware based.

Prefetching can be achieved in two ways: 1. Software Prefetching 2. Hardware Prefetching

Software Prefetching

With software prefetching the programmer or compiler inserts prefetch instructions into the program. These are instructions that initiate a load of a cache line into the cache, but do not stall waiting for the data to arrive.

A critical property of prefetch instructions is the time from when the prefetch is executed to when the data is used. If the prefetch is too close to the instruction using the prefetched data, the cache line will not have had time to arrive from main memory or the next cache level and the instruction will stall. This reduces the effectiveness of the prefetch.

If the prefetch is too far ahead of the instruction using the prefetched data, the prefetched cache line will instead already have been evicted again before the data is actually used. The instruction using the data will then cause another fetch of the cache line and have to stall. This not only eliminates the benefit of the prefetch instruction, but introduces additional costs since the cache line is now fetched twice from main memory or the next cache level. This increases the memory bandwidth requirement of the program.

Hardware Prefetching

Many modern processors implement hardware prefetching. This means that the processor monitors the memory access pattern of the running program and tries to predict what data the program will access next and prefetches that data. There are few different variants of how this can be done.

A stream prefetcher looks for streams where a sequence of consecutive cache lines are accessed by the program. When such a stream is found the processor starts prefetching the cache lines ahead of the program's accesses.

A stride prefetcher looks for instructions that make accesses with regular strides, that do not necessarily have to be to consecutive cache lines. When such an instruction is detected the processor tries to prefetch the cache lines it will access ahead of it.

An adjacent cache line prefetcher automatically fetches adjacent cache lines to ones being accessed by the program. This can be used to mimic behaviour of a larger cache line size in a cache level without actually having to increase the line size.

Software vs. Hardware Prefetching

Software prefetching has the following characteristics:

1. Can handle irregular access patterns, which do not trigger the hardware prefetcher.

2. Can use less bus bandwidth than hardware prefetching.

3. Software prefetches must be added to new code, and they do not benefit existing applications.

The characteristics of the hardware prefetching are as follows :

1. Works with existing applications

2. Requires regular access patterns

3. Start-up penalty before hardware prefetcher triggers and extra fetches after array finishes.

4. Will not prefetch across a 4K page boundary (i.e., the program would have to initiate demand loads for the new page before the hardware prefetcher will start prefetching from the new page).

References

<references/>