CSC/ECE 506 Spring 2012/ch9a cm

Introduction

Various schemes have been explored in the past to provide synchronization in a multiprocessor system. This article discusses the overheads associated with traditional locking mechanisms, using Java Monitors as an example. It also discusses some novel methods to and Various techniques have been studied that exploit the

Definitions

- Single Processor/Multiprocessor

- Atomic Instructions

- Mutual Exclusion

Thick locks/Lock overhead

Java is an explicitly multi-threaded language, hence each of the classes are designed with synchronization (using Monitors -link) in mind. In Java, the synchronization operations are specified by declaring a method or a block as synchronized (refer to the code below). If the specified method is an instance method, its receiver object, this, is used as the owner of the lock. To realize this Monitor behavior, the JVM uses a lock mechanism, "Java lock", associated with each object. The lock is acquired by a thread when it enters the object’s monitor, held while the thread is in the monitor and executing the synchronized method, and released when the thread exits from the monitor. If a thread attempts to acquire a lock held by another thread, the second thread is blocked until the first thread releases the lock.

// Simple counter

class MyCounter {

int value = 0;

synchronized int add(int i) { // Guarded by Java locks

value = value + i;

return value;

}

When these classes are used in single threaded programs, there's a huge performance overhead, even in the absence of true concurrency. Also, it incurs space overhead due to the presence of lock code in each of the objects, even if it may not need any synchronization. Currently in JDK design the concurrency primitives are kept outside of the objects to avoid space cost and looked up through a Monitor Cache. Unfortunately this does not scale well since monitor cache itself needs to be locked when making the look-ups.

Additionally, monitors make use of atomic operations(E.g Compare and Swap - CAS) to provide mutual exclusion. Note that CAS incurs local latency and does not really impact scalability. On typical SMP systems, reads are very efficient, since all the requesting processors can have the cache line replicated in their caches.But if even one processor is writing to a shared cache line, those writes will generate considerable cache coherence traffic. Assuming a write-invalidate cache coherence policy, the readers will continually re-load the cache line just to have it subsequently invalidated by the writer. Coherency bandwidth is limited and global resource, so in addition to local latency, excessive sharing traffic will impact overall scalability and impede the progress of threads running on other processors.A so-called coherency miss -- for example a load on processor P1 where processor P2 has the cache line in M-state -- is typically much slower than a normal miss. So if you have threads on processors P1 and P2 iterating trying to acquire the same lock, then lock acquisition itself will generate coherency traffic and result in the cache "sloshing" of the line(s) holding the metadata.

Solutions for Reducing the Overhead

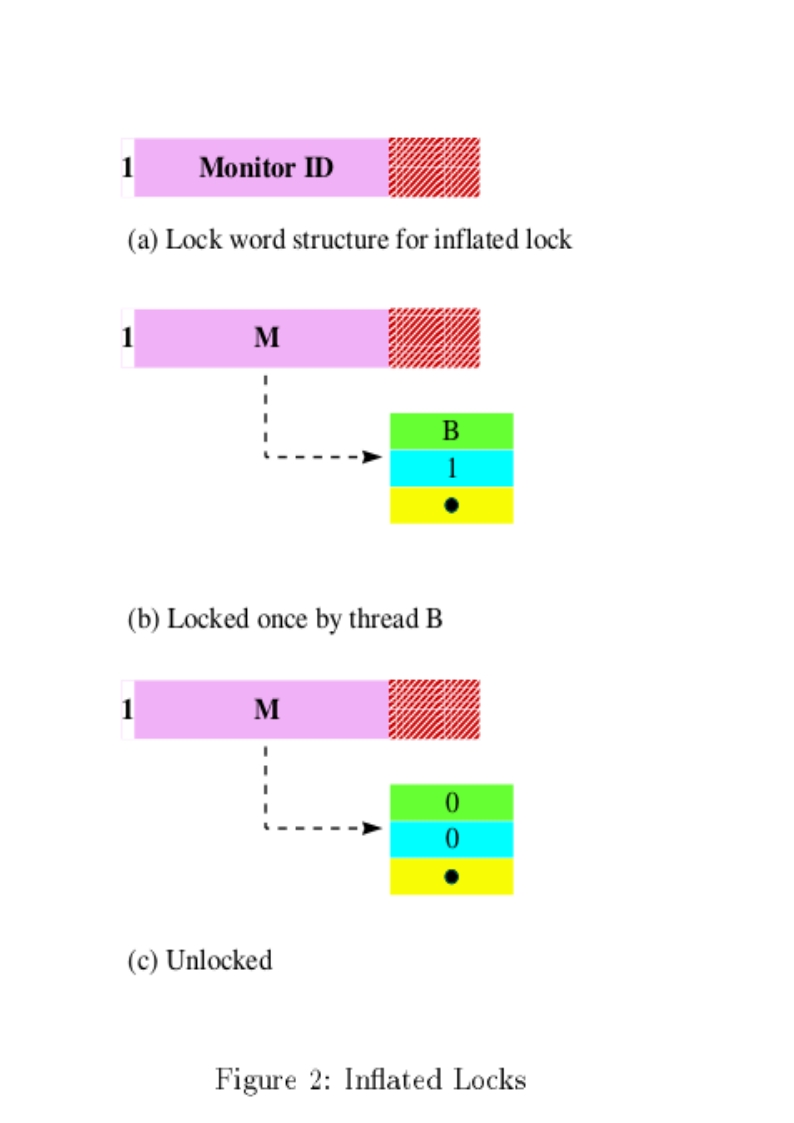

The first characteristic of Java locks is that only a few objects are actually used for locks, although any objects can theoretically be used. The monitor table method utilized this characteristic to reduce the memory consumption. In this method, monitor structures are not prepared for objects that are not used for locks, which is the most common case. When an object is first used for lock, a monitor structure is prepared and associated with the object.

The second characteristic is that contention between threads never occurs for most of the objects used for locks. This fact implies that the heavyweight monitor structure which supports thread suspension is unnecessary for the most common case. The thin lock explained in the next section exploits this characteristic to improve performance.

Thin locks

Language supported synchronization leads to inefficiency due to the useless synchronization requirements due to the thread safe nature of the Java Libraries. The necessity of locking introduces the use of heavy weight objects. In Java, synchronization is introduced even when there is no requirementfor synchronization. This causes slowdowns of upto a factor of 2.In Sun JDK implementation, the monitors are placed outside the objects and are looked up from monitor caches.This is highly inefficient and requires locking of the monitor caches to avoid race conditions. It also leads to considerable increase in space overhead due to the size of the monitor structures. This problem is solved by using a new algorithm that requires fewer machine instructions and lesser number of bits per lock.

Thin Locks are an implementation of monitors in IBM's version of the JDK 1.1.2 for AIX. It has the following desirable characteristics over thick locks.

- In the absence of contention, initial and nested locking performance is much better. There is a significant improvement in performance even in th presence of contention, as compared to the JDK implementation.

- Only 24 bits in an object are used for locking.These 24 bits are obtained using encoding techniques on the other values stored in the header.`

- The structure of the 24 bit lock has been engineered to make the most common locking and unlocking functionalities to be executed with minimal machine instructions.

- The 24 bits of the thin lock are divided as follows:

- The first bit consists of the Monitor shape bit which represents if the lock is a thin lock and thick lock. - The next 23 bits point to the thick lock reference in case a thick lock is implemented. - The next 23 bits in case of a thin lock represents the thread identifier(15 bits) and the lock count(8 bits).

Biased locks

By using the thin lock, the cost of lock acquisition has been reduced to one compare and swap operation. However, such an atomic operation is usually very costly compared to simple memory access operations. If we can acquire a lock without any atomic operations for common cases, the cost of locks is further reduced. In addition, the thin lock implementation uses a busy-wait loop for inflating an object’s lock. It should also be checked that this will not cause performance degradation. When we explore the area of non-contended lock aquisition, we arrive at an alternative that exploits these characteristics - Biased locks.

Java Virtual Machines is the case wherein a single process repeatedly acquires and releases a lock, but other processes rarely if ever access this lock (First characteristic above). The locks that can be optimized for this case may be referred to as Quickly Re-acquirable Locks (QRLs). We shall also refer to the optimized code path in which a process reacquires and subsequently releases a lock that it has previously held, and that no other process has ever held, as an ultra fast path. The idea is to try to reduce the use of atomic instructions (e.g CAS,Barriers,etc) in the ultra fast path, because these instructions are typically much more expensive than other instructions. For QRLs, our target is to avoid all such instructions in both the acquire and release portions of the ultra fast code path.

In order to support this, two additional fields are added to the default lock object. The first field is a status word that takes on one of the values: NEUTRAL, BIASED, REVOKING, DEFAULT. Initially the lock is NEUTRAL and the first time the lock is acquired, the process acquiring the lock changes the status to BIASED. If a second process attempts to acquire the lock, it eventually changes the lock status to DEFAULT, but may set the status to REVOKING as an intermediary state in the revocation protocol.When the lock state is BIASED, this field additionally contains a process identifier for the current bias owner. The second field is a Boolean bit, that may be called a Quick lock bit -- that indicates whether the bias holder currently holds the lock. Hence, when the lock has been acquired, and not subsequently revoked, the bias holder can acquire or release the lock by simply toggling this bit.

Switching a lock from NEUTRAL to being biased to a particular process can be done for the one-time cost of a single CAS operation. The main difficulty in constructing an atomic-free QRL lies in coordinating the revocation of a lock between a revoking process and the bias holder process. Race conditions that must typically be addressed include a revocation that occurs simultaneous to a biased acquire as well as a revocation that occurs simultaneous to a biased release.

In a system that assures Sequential consistency, it is easy to design a technique in which a bias holder reacquires the lock by first writing its quicklock bit and then verifying that the lock has not been revoked; and revoking processes first indicate revocation and then check whether the bias holder has acquired the Quicklock. However, for weaker consistency models, we may be required to use barriers/fences to ensure correctness. But, in this case, it is sufficient to preclude a single read (of the lock status) from being reordered before a single write (of the Quicklock). Hence, if we can introduce an artificial dependency between the write and read instructions mentioned above, we can ensure that they are not adversely reordered.

One way of introducing this dependency (link) is to collocate the status field and the quicklock field into the two halves of a 32-bit word. Then, perform the following steps in order to reacquire the lock on the ultra fast path:

- perform a half-word store on the quicklock field

- load the entire 32 bit word

- shift out the status field from what we read

Now, because the data read in step 2 includes the data that was written in step 1, the read must be ordered after the write. This approach provides portability to any memory model in which apparent program order is guaranteed for instructions executed on the same processor.