CSC/ECE 506 Spring 2011/ch11 DJ

Revision as of 00:18, 19 April 2011

Supplemental to Chapter 11: SCI Cache Coherence

This is intended to be a supplement to Chapter 11 of Solihin, which deals with Distributed Shared Memory (DSM) in multiprocessors. The book discusses the basic approaches to DSM and introduces a directory-based cache coherence protocol, in contrast to the bus-based cache coherence protocols introduced earlier. This supplement focuses on a specific directory-based cache coherence protocol called the Scalable Coherent Interface.

Introduction: What is the Scalable Coherent Interface?

The Scalable Coherent Interface (SCI) is an ANSI/IEEE standard that provides fast point-to-point connections for high-performance multiprocessor systems [1]. It supports both shared memory and message passing and was approved in 1992. In the 1980s, processors were getting faster and were beginning to outpace the speed of the bus. SCI was created to provide a non-bus-based interconnection protocol. It was intended to be scalable, meaning it can work with few or many processors.

SCI Cache Coherence

SCI uses a directory-based cache coherence protocol similar to the one described in Chapter 11 of Solihin (2008). Instead of caches maintaining coherence by snooping for transactions on a bus, a directory manages the coherence of each individual cache by creating a doubly-linked list of the caches that are sharing a particular block of memory. The first cache in the list belongs to the processor that most recently accessed the block of memory. When the "head" of the list modifies the block of memory, it has to notify main memory and the next cache in the list. The information then propagates down the list from there.
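The sharing list described above can be sketched in a few lines of Python. This is only an illustrative model, not the data layout defined by the SCI standard: the names `CacheLine`, `SharingList`, `attach`, and `sharers` are assumptions made for this example, and caches are identified by plain integers.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class CacheLine:
    """One cache's entry for a block: pointers to its list neighbours."""
    forward: Optional[int] = None   # next sharer, toward the tail
    backward: Optional[int] = None  # previous sharer, toward the head


@dataclass
class SharingList:
    """Hypothetical model of one memory block's sharing list."""
    head: Optional[int] = None      # pointer kept by the directory
    lines: Dict[int, CacheLine] = field(default_factory=dict)

    def attach(self, cache_id: int) -> None:
        """The most recent accessor is linked in as the new head."""
        old_head = self.head
        self.lines[cache_id] = CacheLine(forward=old_head)
        if old_head is not None:
            self.lines[old_head].backward = cache_id
        self.head = cache_id

    def sharers(self) -> List[int]:
        """Walk the forward pointers from head to tail."""
        out, cur = [], self.head
        while cur is not None:
            out.append(cur)
            cur = self.lines[cur].forward
        return out


block = SharingList()
for cache_id in (0, 1, 2):
    block.attach(cache_id)
print(block.sharers())  # [2, 1, 0]
```

Cache 2 accessed the block last, so it ends up at the head, and a message from the head reaches the other sharers by following the forward pointers.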

Coherence Race Conditions

The SCI cache coherence protocol handles many potential race conditions in such a way that they become non-issues. For instance, in SCI only the head node in the linked list of sharers is able to write to the block. All sharers, including the head, can read. If a sharer that is not the head wants to write to the block, it first has to detach itself from the linked list. Because this node is no longer sharing the block, we will call it N1 from here on. N1 then asks the directory for the block again, this time with intent to write, and swaps the directory's pointer to the head of the linked list with a pointer to itself. N1 is now the new head and holds the pointer to the old head. N1 then invalidates the old head's cache line and all of the sharers linked to it. This allows the SCI protocol to maintain write exclusivity [2].

Write exclusivity means that only one write to a given block can occur at a time. Without write exclusivity, each node sharing the block would be able to write to the block, allowing multiple writes to happen simultaneously. If each cache wrote simultaneously, every cache sharing the block would get invalidated, including the head, and the block in memory might not possess the correct value. To implement write exclusivity, there need to be special states for the head, middle, and tail of the linked list, as well as states that determine the read/write capabilities of the nodes.

Another scenario, described in Section 11.4.1 of the Solihin textbook, arises when a cache is in state M and the directory is in state EM for a given block. If the cache holding the block flushes the value and the value does not reach the directory before a read request is made, a race condition can occur. The race condition is handled by the use of pending states. When the cache flushes a block, the line transitions from state M to a pending state. The pending state transitions into the invalid state only when the directory has acknowledged receiving the flush. This allows the cache to stall any read request made to the block until the block enters the steady state of I (Solihin).
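Both mechanisms in this section can be illustrated with a small Python sketch. It is a simplification under stated assumptions rather than the protocol's actual message sequence: the sharing list is a plain Python list with the head first, and the names `write_by_sharer`, `LineState`, and `FlushingLine` are invented for this example.

```python
from enum import Enum


def write_by_sharer(sharing_list, writer):
    """Model the write sequence: detach, re-attach as head, invalidate the rest.

    sharing_list holds cache ids, head first.
    Returns (new_sharing_list, invalidated_caches).
    """
    if not sharing_list or sharing_list[0] != writer:
        # A non-head writer leaves the list, then asks the directory for
        # the block again and becomes the new head.
        rest = [c for c in sharing_list if c != writer]
        sharing_list = [writer] + rest
    # The head invalidates the old head and every sharer linked behind it.
    invalidated = sharing_list[1:]
    return [writer], invalidated


class LineState(Enum):
    M = "modified"
    PENDING = "flush in flight"
    I = "invalid"


class FlushingLine:
    """Hypothetical cache line that stalls requests while a flush is unacknowledged."""

    def __init__(self):
        self.state = LineState.M
        self.stalled = []  # requests held back during the pending state

    def flush(self):
        self.state = LineState.PENDING

    def request(self, req):
        """Return False (and queue the request) while the flush is in flight."""
        if self.state is LineState.PENDING:
            self.stalled.append(req)
            return False
        return True

    def directory_ack(self):
        """Directory confirmed the flush: settle in I and release stalled requests."""
        self.state = LineState.I
        released, self.stalled = self.stalled, []
        return released


print(write_by_sharer([2, 1, 0], writer=1))  # ([1], [2, 0])
```

In the usage line, cache 1 is a middle sharer. After its write it is the only node left in the list, and caches 2 and 0 have been invalidated, which is the write-exclusivity property the text describes.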

SCI States

Directory States

In SCI, the directory and each cache maintain a state for each memory block. The directory can be in one of three states:

- HOME or UNCACHED (U) – The memory block is not cached; the only clean copy is in main memory

- FRESH or SHARED (S) – The memory block is shared, and the copy in main memory is up to date

- GONE or EXCLUSIVE/MODIFIED (EM) – The copy in main memory is not up to date, but a cache holds the up-to-date memory block

Cache States

For a cache, there are 29 stable states described in the SCI standard and many pending states. Each state consists of two parts. The first part of the state tells where the cache is in the linked list. There are four possibilities (Culler (1999)):

- ONLY – if the cache is the only cache to have cached the memory block

- HEAD – if the cache is the most recent reader/writer of the block and so is the first in the list

- TAIL – if the cache is the least recent reader/writer of the block and so is the last in the list

- MID – if the cache is not the first or last in the list

On top of these four location designations, the second part of the state describes the actual state of the data in the cache. Some of these include:

- CLEAN – if the data in the memory block has not been modified, is the same as main memory, but can be modified.

- DIRTY – if the data in the memory block has been modified, is different from main memory, and can be written to again if needed.

- FRESH – if the data in the memory block has not been modified, is the same as main memory, but cannot be modified without notifying main memory.

- COPY – if the data in the memory has not been modified, is the same as main memory, but cannot be modified, only read.
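The two-part structure of a cache state can be sketched as a pair of enumerations. This is only an illustration of the composition: the class and method names are assumptions, the underscore-joined name is simply a convenient label, and the sketch does not attempt to enumerate the 29 stable states or to check which combinations the standard actually allows.

```python
from dataclasses import dataclass
from enum import Enum


class Position(Enum):
    """First part of the state: where the cache sits in the sharing list."""
    ONLY = "ONLY"
    HEAD = "HEAD"
    MID = "MID"
    TAIL = "TAIL"


class Data(Enum):
    """Second part of the state: condition of the cached data."""
    CLEAN = "CLEAN"
    DIRTY = "DIRTY"
    FRESH = "FRESH"
    COPY = "COPY"


@dataclass(frozen=True)
class CacheState:
    position: Position
    data: Data

    @property
    def name(self) -> str:
        return f"{self.position.value}_{self.data.value}"

    def matches_memory(self) -> bool:
        # Per the list above, only DIRTY data differs from main memory.
        return self.data is not Data.DIRTY

    def writable_without_notifying_memory(self) -> bool:
        # Reading of the list above: CLEAN and DIRTY may be modified,
        # FRESH must notify main memory first, COPY is read-only.
        return self.data in (Data.CLEAN, Data.DIRTY)


state = CacheState(Position.HEAD, Data.DIRTY)
print(state.name, state.matches_memory())  # HEAD_DIRTY False
```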

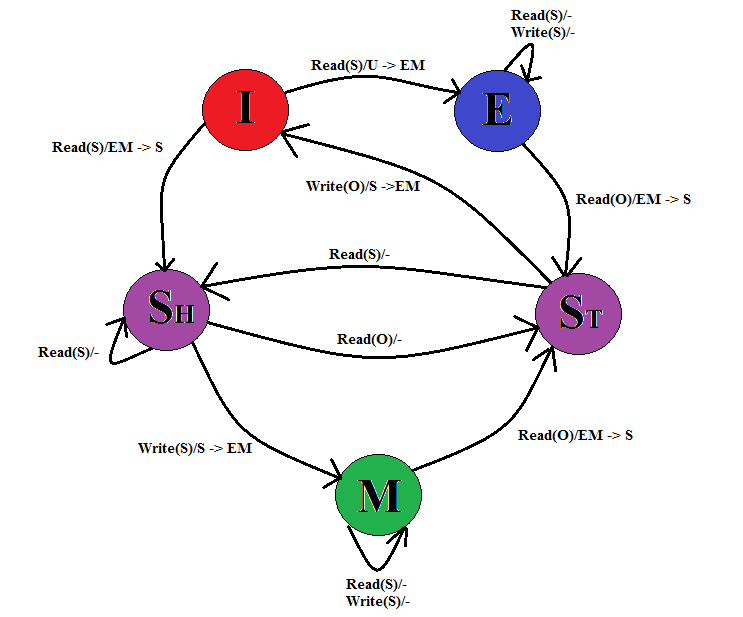

A simpler way to envision the SCI coherence protocol states is to limit the system to just two processors and expand the MESI protocol states. In this case, we can envision three states in the directory (U, S, EM), and the caches have the following states:

- Invalid (I) – if the block in the cache's memory is invalid.

- Modified (M) – if the block in the cache's memory has been modified and no longer matches the block in main memory.

- Exclusive (E) – if the cache has the only copy of the memory block and it matches the block in main memory.

- Shared-Head (Sh) – if the block in the cache's memory is shared with the other processor's cache, but this processor was the last to read the memory.

- Shared-Tail (St) – if the block in the cache's memory is shared with the other processor's cache and the other processor was the last to read the memory.

In this scenario, the following operations are possible:

- Read(O/S) – a read of the data by either the processor attached to the cache (S for Self) or the other processor (O).

- Write(O/S) – a write of the memory block by either the processor attached to the cache (S) or the other processor (O).

In this case, the following state diagram illustrates the transitions:

Figure 1: Simplified cache state diagram from SCI

Initially, each cache is in the "I" state and the directory is in the "U" state. If processor 1 (P1) issues a read, P1's cache moves from state I to E and the directory moves from U to EM. The directory records that P1 is the head of the sharing list. At this point, processor 2 (P2) is in state I. If P2 then issues a read, the directory is in state EM, so the directory notifies P1 that P2 is reading the block. P1 transitions from E to St and sends the data to P2. P2 transitions from I to Sh and creates a pointer to P1 to note that P1 is the next cache in the sharing list. The directory transitions from EM to S and updates its pointer from P1 to P2 to note that P2 is now the head of the sharing list.
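The walkthrough above can be replayed with a small Python model. It encodes only the two read transitions described in the text, and any other case raises an error rather than guessing at the rest of the state diagram. The class name `TwoProcessorSCI` and its attributes are assumptions made for this sketch.

```python
class TwoProcessorSCI:
    """Hypothetical two-processor model of the simplified SCI states."""

    def __init__(self):
        self.dir_state = "U"
        self.head = None                        # directory's pointer to the list head
        self.cache = {"P1": "I", "P2": "I"}
        self.next = {"P1": None, "P2": None}    # each cache's forward pointer

    def read(self, p):
        other = "P2" if p == "P1" else "P1"
        if self.cache[p] != "I":
            return  # read hit: no state change in this sketch
        if self.dir_state == "U":
            # First reader: I -> E, directory U -> EM, reader becomes head.
            self.cache[p] = "E"
            self.dir_state = "EM"
            self.head = p
        elif self.dir_state == "EM" and self.cache[other] == "E":
            # Second reader: old head E -> St, reader I -> Sh and points
            # at the old head, directory EM -> S with the reader as head.
            self.cache[other] = "St"
            self.cache[p] = "Sh"
            self.next[p] = other
            self.dir_state = "S"
            self.head = p
        else:
            raise NotImplementedError("transition not covered by the walkthrough")


sim = TwoProcessorSCI()
sim.read("P1")
sim.read("P2")
print(sim.cache, sim.dir_state, sim.head)
```

After both reads, P2 is the shared head, P1 is the shared tail, and the directory is in state S with its pointer on P2.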

References

- Solihin, Yan, Fundamentals of Parallel Computer Architecture: Multichip and Multicore Systems, Solihin Books, 2008.

- Culler, David E. and Jaswinder Pal Singh, Parallel Computer Architecture, Morgan Kaufmann Publishers, Inc, 1999.