CSC 456 Fall 2013/4b cv

Non-Uniform Memory Access (NUMA) has become a common design for larger systems as the number of processors grows. NUMA distributes memory among the processors: each processor has fast local access to its own share and can also access remote memory attached to other processors. NUMA is an important feature of the platform, and an application that ignores it can expect sub-par memory performance.

Background

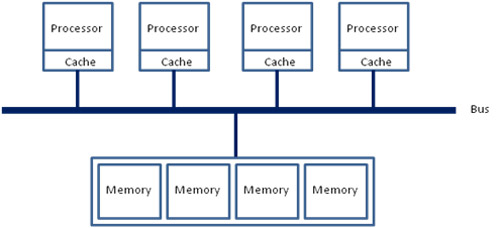

NUMA is often grouped together with Uniform Memory Access(UMA) because the two methods of memory management have similar features. The architecture of UMA(see figure 1.1)<ref name="ott11">David Ott. Optimizing Applications for NUMA. http://software.intel.com/en-us/articles/optimizing-applications-for-numa

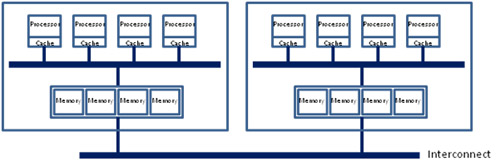

</ref>has a bus inbetween the processors/cache and the memory for each processor. NUMA(see figure 1.2)<ref name = "ott11"/> however has a directl connection between the processor/cache and the memory for the processor, the bus is then connected to the memory. The main trade off between UMA and NUMA is related to memory access time. Since the NUMA memory is directly linked to the processor/cache it provides faster access to local data but is slower when accessing remote data<ref name = "ott11"/>.
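The trade-off can be made concrete with a simple weighted-average model. The following is a minimal sketch, not a measurement: the function name `average_access_time` is hypothetical, and the latencies (100 ns local, 150 ns remote) are assumed values chosen only for illustration.

```python
def average_access_time(local_fraction, local_ns, remote_ns):
    """Weighted average latency for a mix of local and remote accesses."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns


# Assumed, illustrative latencies in nanoseconds (not measured values).
LOCAL_NS = 100.0
REMOTE_NS = 150.0

for fraction in (1.0, 0.75, 0.5):
    avg = average_access_time(fraction, LOCAL_NS, REMOTE_NS)
    print(f"{fraction:.0%} local accesses: {avg:.1f} ns on average")
```

Under these assumed numbers, the average latency rises as the share of remote accesses grows, which is why keeping data close to the processor that uses it matters.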

NUMA system memory is managed in a node-based model (see figure 1.3)<ref name = "ott11"/>. This design implements a mixture of UMA and NUMA: each node encompasses a NUMA system, and the nodes are connected so that the entire architecture resembles a UMA system.

Advantages/Disadvantages

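As previously stated, one of the main advantages of a NUMA system is fast local memory access, which follows from the memory being attached directly to the processor. This can cause problems, however, when threads running on different processors share data, because some of those accesses must then go to slower remote memory.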

Different Strategies

Page allocation strategies for NUMA fall into three categories:

- Fetch - determining which page to bring into main memory
  - demand fetching
  - prefetching
- Placement - determining where to hold the page
  - Fixed-Node
  - Preferred-Node
  - Random-Node
- Replacement - determining which page to remove to make room for new pages
  - Per-Task
  - Per-Computation
  - Global

Common page placement policies:

- first touch - allocates the frame on the node that incurs the page fault, i.e. on the node of the processor that first accesses the page.

- round robin - allocates successive pages on the memory nodes in rotating order, so that pages are spread evenly across the nodes.

- random - allocates each page on a randomly chosen memory node.
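The three placement policies above can be compared with a small simulation. This is a toy sketch under stated assumptions, not how any operating system implements them: `place_pages`, `local_fraction`, and the two-node access trace are all hypothetical, and each page is assumed to be allocated the first time it is touched.

```python
import random


def place_pages(touches, num_nodes, policy, seed=0):
    """Return {page: node} for a sequence of (page, touching_node) accesses."""
    rng = random.Random(seed)
    next_node = 0
    placement = {}
    for page, touching_node in touches:
        if page in placement:
            continue  # only the first touch allocates the page
        if policy == "first_touch":
            node = touching_node  # node that incurred the page fault
        elif policy == "round_robin":
            node = next_node  # rotate through the nodes in order
            next_node = (next_node + 1) % num_nodes
        elif policy == "random":
            node = rng.randrange(num_nodes)
        else:
            raise ValueError(f"unknown policy: {policy}")
        placement[page] = node
    return placement


def local_fraction(touches, placement):
    """Fraction of accesses served by the touching processor's own node."""
    local = sum(1 for page, node in touches if placement[page] == node)
    return local / len(touches)


# Hypothetical trace: node 0 works on pages 0-3, node 1 on pages 4-7,
# and the whole pattern repeats three times.
touches = ([(page, 0) for page in range(4)] +
           [(page, 1) for page in range(4, 8)]) * 3

for policy in ("first_touch", "round_robin", "random"):
    placement = place_pages(touches, num_nodes=2, policy=policy)
    print(policy, local_fraction(touches, placement))
```

In this particular trace every page is used by only one node, so first touch keeps all accesses local, while round robin places half of each node's pages remotely. A trace with heavily shared pages would favor first touch less.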

Page Allocation Support in OpenMP

- Provides directives for controlling how blocks of pages are allocated
  - `!dec$ migrate_next_touch(v1,...,v2)` - migrates the selected pages to the node of the next thread that references them, so that later accesses by that thread are local
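The effect of next-touch migration can be illustrated with a toy model. This sketch only mimics the behavior described above and says nothing about how the directive is implemented: the class `NextTouchMemory` and its methods are hypothetical, and the method name `migrate_next_touch` is borrowed from the directive purely for readability.

```python
class NextTouchMemory:
    """Toy model: marked pages move to the next node that touches them."""

    def __init__(self, placement):
        self.placement = dict(placement)  # page -> node currently holding it
        self.marked = set()               # pages flagged to migrate on next touch

    def migrate_next_touch(self, pages):
        """Flag pages so that each one migrates when it is next accessed."""
        self.marked.update(pages)

    def touch(self, page, node):
        """Access `page` from `node`; return True if the access is local."""
        if page in self.marked:
            self.placement[page] = node  # migrate to the referencing node
            self.marked.discard(page)
        return self.placement[page] == node


# Hypothetical scenario: both pages start on node 0.
memory = NextTouchMemory({0: 0, 1: 0})
print(memory.touch(0, node=1))   # remote access, page 0 lives on node 0
memory.migrate_next_touch([0, 1])
print(memory.touch(0, node=1))   # page 0 migrates to node 1, now local
print(memory.touch(1, node=0))   # page 1 is touched by node 0 and stays there
print(memory.placement)
```

The migration happens once per flagged page; after that the page stays put until it is flagged again, so a later access from the original node becomes remote.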

<references/>