CSC/ECE 506 Spring 2012/12b ad

On-chip Interconnects

Introduction

As the number of processors in multiple-processor systems increases, more consideration must be given to how those processors communicate. With current technology, shared-memory arrangements are quite effective for a small number of processors. As the number of processors grows, however, contention for shared resources (memory, bus time, etc.) increases and degrades system performance. At the same time, keeping the processors on the same physical piece of hardware is convenient and helps performance because of their physical proximity. It is therefore desirable to design hardware that places many cores on the same die while connecting them through an interconnection network rather than a single contended bus. Research into these on-chip interconnects has grown recently, and this article explores the state of those efforts.

Topologies

The intricacies of semiconductor design and layout shape which network topologies are practical on SoCs. Specifically, a design must be realizable on a two-dimensional substrate, which in practice limits the use of more complex topologies such as hypercubes <ref name="GrotKeckler">Grot and Keckler, Scalable On-Chip Interconnect Topologies</ref>.

Rings

Ring topologies can be effective when the “number of cores is still relatively small but is larger than what can be supported using a bus” [Solihin 409]. Such cases are considered to use “medium-scale” interconnection networks.
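To make ring routing concrete, the following minimal Python sketch routes a message on a bidirectional ring by taking whichever direction is shorter. It assumes nodes are numbered 0 to n-1 around the ring and that each link costs one hop; the function name `ring_route` is hypothetical and not taken from any real NoC library.

```python
def ring_route(src: int, dst: int, n: int) -> list[int]:
    """Return the nodes visited from src to dst on an n-node bidirectional
    ring, taking the shorter direction (ties go in increasing order)."""
    if not (0 <= src < n and 0 <= dst < n):
        raise ValueError("node ids must be in range 0..n-1")
    forward = (dst - src) % n    # hops travelling in increasing node order
    backward = (src - dst) % n   # hops travelling in decreasing node order
    step = 1 if forward <= backward else -1
    path = [src]
    node = src
    while node != dst:
        node = (node + step) % n  # Python's % wraps -1 around to n-1
        path.append(node)
    return path


if __name__ == "__main__":
    # Worst case on a 16-node ring: the opposite node is 8 hops away.
    print(len(ring_route(0, 8, 16)) - 1)  # 8
    print(ring_route(14, 1, 16))          # [14, 15, 0, 1]
```

Because a bidirectional ring can always choose the shorter way around, no destination is ever more than n/2 hops away, which is why rings remain workable only up to a medium number of cores.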

Meshes

2D mesh networks are used when the network grows beyond medium scale into “larger-than-medium” scale. This is especially true when the core count maps neatly onto a two-dimensional grid (for example, 16 cores arranged as 4x4), where the reduction in hop count compared with a traditional ring network can be substantial.

For example, in the worst case a sixteen-core bidirectional ring requires eight hops to reach the farthest node. Arranging the same sixteen cores as a 4x4 mesh caps the worst case at six hops (three in each dimension). Assuming traffic is distributed uniformly, so that each of the 16 nodes is an equally likely destination (probability 1/16, including the source itself), the expected number of hops in the ring is 1/16 × (0+1+2+...+8+7+...+1) = 64/16 = 4.0, whereas the expected number of hops in the 2D mesh with minimal-path routing, averaged over all source nodes, is 2.5. This benefit comes at the cost of increased power, because each mesh router is more complex: it needs five ports (four neighbors plus the local core) where a ring router needs three. A potential solution to this "power problem" is to group several cores onto each router in what is called a concentrated mesh, but even that requires increased crossbar complexity<ref name="GrotKeckler"/>.
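The worst-case and average hop counts for a 16-core ring and a 4x4 mesh can be checked by brute force. This Python sketch assumes uniform traffic in which every ordered (source, destination) pair, including a node sending to itself, is equally likely; a bidirectional ring with shortest-direction routing; and minimal (Manhattan-distance) routing on the mesh. The helper names are hypothetical.

```python
from itertools import product


def ring_hops(a: int, b: int, n: int) -> int:
    """Minimal hop count between nodes a and b on an n-node bidirectional ring."""
    d = abs(a - b)
    return min(d, n - d)


def mesh_hops(a: int, b: int, k: int) -> int:
    """Minimal (Manhattan) hop count between nodes a and b on a k x k mesh,
    where node i sits at row i // k, column i % k."""
    ar, ac = divmod(a, k)
    br, bc = divmod(b, k)
    return abs(ar - br) + abs(ac - bc)


def hop_stats(dist, nodes: int) -> tuple[int, float]:
    """Return (worst-case hops, mean hops) over all ordered (src, dst) pairs,
    counting src == dst as a zero-hop message."""
    hops = [dist(s, d) for s, d in product(range(nodes), repeat=2)]
    return max(hops), sum(hops) / len(hops)


if __name__ == "__main__":
    print(hop_stats(lambda s, d: ring_hops(s, d, 16), 16))  # (8, 4.0)
    print(hop_stats(lambda s, d: mesh_hops(s, d, 4), 16))   # (6, 2.5)
```

Excluding self-traffic raises both averages (to 64/15 ≈ 4.27 hops for the ring and 640/240 ≈ 2.67 for the mesh), but the mesh keeps its advantage.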

Flattened Butterfly

The flattened butterfly offers the benefits of a tree (fewer constraints on root-level bandwidth [Solihin 367]) while still being mappable onto a two-dimensional substrate. However, because of node concentration<ref name="GrotKeckler"/>, the number of channels required to scale it to many cores makes it prohibitive in both cost and design validation.
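The channel-count problem becomes visible by simply counting links. Assuming the common 2D flattened butterfly construction, in which each router links directly to every other router in its row and in its column, the Python sketch below (hypothetical helper names) compares its link count with a plain k x k mesh. Router-to-router paths never exceed two hops, but links grow roughly as k³ instead of k².

```python
def flattened_butterfly(k: int, c: int) -> dict[str, int]:
    """Structural properties of a 2D flattened butterfly with k x k routers,
    each router serving c cores (concentration factor c)."""
    routers = k * k
    network_ports = 2 * (k - 1)  # one link to every other router in its row and column
    radix = network_ports + c    # plus one local port per attached core
    # Each row is a complete graph on k routers (k*(k-1)/2 links); likewise each column.
    links = 2 * k * (k * (k - 1) // 2)
    return {
        "cores": routers * c,
        "routers": routers,
        "radix": radix,
        "bidirectional_links": links,
        "max_router_hops": 2 if k > 1 else 0,  # at most one row hop plus one column hop
    }


def mesh_links(k: int) -> int:
    """Bidirectional links in a k x k mesh: k-1 per row and per column."""
    return 2 * k * (k - 1)


if __name__ == "__main__":
    for k in (4, 8):
        fb = flattened_butterfly(k, c=4)
        print(k, fb["cores"], fb["radix"], fb["bidirectional_links"], mesh_links(k))
    # 4 64 10 48 24
    # 8 256 18 448 112
```

Doubling the router grid side from 4 to 8 roughly doubles the mesh's link ratio but multiplies the flattened butterfly's links by more than nine, and each router's radix nearly doubles as well.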

Crossbar Switch

A crossbar switch topology consists of a set of input lines and a set of output lines that run physically perpendicular to each other, with a switch at each intersection that either connects or disconnects the two lines. In CMPs, this switch is a transistor or, depending on the desired characteristics of the system, a programmable fuse. Because crossbars can be arranged in multiple stages<ref name="wikicrossbarsemi">"Crossbar switch." Wikipedia. Last accessed April 24, 2012.</ref>, these topologies lend themselves to connecting memory in large-scale systems.
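The crosspoint idea can be modeled in a few lines. In this minimal Python sketch the class and method names are hypothetical, and real crossbars add arbitration and timing logic that it omits. It shows the two properties that matter for CMPs: any input can reach any idle output in one step, and the switch count grows as inputs × outputs, which is one reason large designs turn to multiple stages.

```python
class Crossbar:
    """Toy model of an n_in x n_out crossbar: a grid of crosspoint switches
    where closing switch (i, j) connects input line i to output line j."""

    def __init__(self, n_in: int, n_out: int) -> None:
        self.n_in, self.n_out = n_in, n_out
        self.closed: set[tuple[int, int]] = set()  # currently closed crosspoints

    def crosspoints(self) -> int:
        # One switch at every intersection, so cost grows as n_in * n_out.
        return self.n_in * self.n_out

    def connect(self, i: int, j: int) -> bool:
        """Close switch (i, j) unless output j is already driven by some input."""
        if not (0 <= i < self.n_in and 0 <= j < self.n_out):
            raise ValueError("line index out of range")
        if any(out == j for _, out in self.closed):
            return False  # output conflict: the request must wait
        self.closed.add((i, j))
        return True

    def release(self, i: int, j: int) -> None:
        """Open switch (i, j), freeing output j."""
        self.closed.discard((i, j))


if __name__ == "__main__":
    xb = Crossbar(4, 4)
    print(xb.connect(0, 2), xb.connect(1, 3))  # True True: disjoint outputs
    print(xb.connect(3, 2))                    # False: output 2 is busy
    xb.release(0, 2)
    print(xb.connect(3, 2), xb.crosspoints())  # True 16
```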

Design Considerations

Energy Consumption

Multiprocessor SoCs require additional energy to operate on-chip interconnection hardware such as routers. In addition, the links between components dissipate energy that depends on the required signaling voltages and on their physical arrangement on the die. Indeed, "on-chip network power has been estimated to consume up to 28% of total chip power" due to "channels, router fifos and router crossbar fabrics"<ref name="GrotKeckler" />. Simple topologies use less power per router because their routers are simpler, but the larger number of hops each message must take can lead to higher overall power consumption.
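The hop-count trade-off can be written as a first-order model. Assuming energy per message is dominated by router and link traversals (static leakage is ignored) and that every hop uses a link of the same energy E_link in both topologies, the energy per message is the average hop count times the per-hop energy. With uniform traffic over 16 cores, counting self-traffic as zero hops, the average is 4.0 hops for a bidirectional ring and 2.5 for a 4x4 mesh, which gives the break-even condition below:

```latex
\begin{aligned}
E_{\text{msg}} &\approx \bar{H}\,\bigl(E_{\text{router}} + E_{\text{link}}\bigr) \\
2.5\,\bigl(E^{\text{mesh}}_{\text{router}} + E_{\text{link}}\bigr) &< 4.0\,\bigl(E^{\text{ring}}_{\text{router}} + E_{\text{link}}\bigr) \\
\iff\quad E^{\text{mesh}}_{\text{router}} + E_{\text{link}} &< 1.6\,\bigl(E^{\text{ring}}_{\text{router}} + E_{\text{link}}\bigr)
\end{aligned}
```

Under these assumptions, the mesh's more complex routers pay for themselves only while its per-hop energy stays below 1.6 times the ring's.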

Scalability

Scalability ties back to energy and cost, but also to engineering constraints. Specifically, the architecture must respect the power and heat limits of the design as well as the physical die size. Further, the design must be fabricable predictably and at reasonable cost. To mitigate some of these factors, designers can use concentration, in which a single network interface is shared by multiple terminals. Efforts to scale more complicated topologies such as the butterfly (into a "flattened" butterfly) have yielded promising results, but at the cost of excessive energy consumption<ref name="GrotKeckler" />.
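Concentration trades router count for router complexity. The Python sketch below uses a hypothetical helper and assumes the routers form a square k x k mesh with one local port per attached core. Sizing a 64-core chip with and without four-way concentration shows the router count falling from 64 to 16 and the diameter from 14 to 6 hops, while each router's radix grows from 5 to 8 ports.

```python
import math


def concentrated_mesh(cores: int, c: int) -> dict[str, int]:
    """Size a concentrated mesh in which every router (network interface)
    is shared by c cores. Assumes the routers form a square k x k grid."""
    if cores % c:
        raise ValueError("cores must be a multiple of the concentration factor")
    routers = cores // c
    k = math.isqrt(routers)
    if k * k != routers:
        raise ValueError("router count must be a perfect square for a k x k mesh")
    return {
        "routers": routers,
        "grid_side": k,
        "radix": 4 + c,               # up to four mesh neighbours plus c local ports
        "diameter_hops": 2 * (k - 1), # corner to opposite corner
    }


if __name__ == "__main__":
    for c in (1, 4):
        print(c, concentrated_mesh(64, c))
```

The larger radix is exactly the crossbar cost that concentrated meshes pay for their shorter paths.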

Modern Implementations

Tilera Tile Processor

Tilera is a fabless semiconductor company that has developed a "tile processor", in which fabrication of the multiprocessor device is greatly simplified by placing processor "tiles" on the die. The technology behind this innovation is iMesh, the on-chip interconnection network used in the Tile Processor's architecture<ref name="Tilera">"On-Chip Interconnection Architecture of the Tile Processor," Wentzlaff, et al. 2007. IEEE Xplore.</ref>. The Tile Processor is innovative because of its highly scalable on-chip network built from 2D meshes. These meshes are organized physically (as opposed to only logically) because of design considerations when scaling and laying out new designs.
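On a physically organized 2D mesh, a packet's path can be chosen with simple dimension-ordered routing. The Python sketch below shows generic X-then-Y routing under the assumption that tiles are addressed by (x, y) coordinates; the function name is hypothetical, and the sketch illustrates the technique rather than describing Tilera's hardware.

```python
def xy_route(src: tuple[int, int], dst: tuple[int, int]) -> list[tuple[int, int]]:
    """Dimension-ordered (X-then-Y) route on a 2D mesh.

    The packet first moves along x until the column matches, then along y.
    Because every packet turns at most once, and always from x to y, this
    routing cannot create cyclic channel dependencies on a mesh, so it is
    deadlock-free.
    """
    x, y = src
    path = [(x, y)]
    while x != dst[0]:
        x += 1 if dst[0] > x else -1
        path.append((x, y))
    while y != dst[1]:
        y += 1 if dst[1] > y else -1
        path.append((x, y))
    return path


if __name__ == "__main__":
    print(xy_route((0, 0), (2, 1)))  # [(0, 0), (1, 0), (2, 0), (2, 1)]
```

Each router needs only to compare the destination coordinates with its own, which keeps per-tile routing logic small as the mesh grows.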

Each tile of the Tile Processor is a self-contained processor that can function on its own; multiple tiles can also be combined to form an SMP system. Software can exploit the CMP through C-based programming libraries, including standard POSIX threads and Tilera's own iLib, which are intended to take full advantage of the chip's processing capabilities.

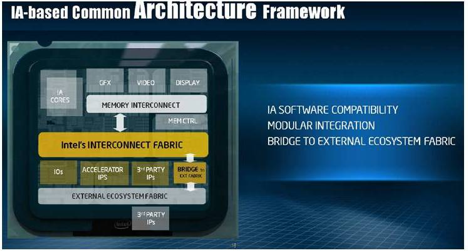

Intel MIC and IOSF

One part of Intel's efforts in multiprocessor research revolves around its Many Integrated Cores (MIC) project<ref name="intelmic">Blyler, John. "Proprietary On-Chip Connections Yield To NoC Designs" System-Level Design Community Blog. September 22, 2011.</ref>. These cores would communicate using the Message Passing Interface (MPI). Further, to connect these cores, there is the Intel On-Chip System Fabric (IOSF), a proprietary interconnect fabric for Intel's popular Atom computing platform<ref name="inteliosf">Blyler, John. "Intel Challenges ARM with IP and Interconnect Strategy" Semiconductor IP Blog. August 26, 2011.</ref>. The IOSF architecture lends itself to communication with traditional PCI buses, making it a viable technology for backward-compatible general-purpose computers. Additionally, through numerous licensing agreements, Intel has gained the rights to use designs for a wide variety of devices, from graphics to modems and Wi-Fi (wireless Ethernet) adapters. Combined with IOSF, this could allow entire computing platforms to be built as low-cost, small-footprint SoCs.

Summary

See Also

References

<references></references>