CSC/ECE 506 Spring 2014/10c gk: Difference between revisions

| Line 284: | Line 284: | ||

Thread 1 Thread 2 | Thread 1 Thread 2 | ||

A = 100 loop_until(B == 1) | A = 100 loop_until(B == 1) | ||

B = 1 print A | B = 1 print A | ||

Revision as of 04:53, 13 April 2014

Memory Consistency models

Introduction

The memory consistency model of a shared-memory multiprocessor provides a formal specification of how the memory system will appear to the programmer, eliminating the gap between the behavior expected by the programmer and the actual behavior supported by a system. In other words, a memory consistency model is a set of rules that govern how memory systems will process memory access operations from multiple processors. In case of a uniprocessor, memory access operations occur in program order, so memory consistency may not be a significant issue. However, in case of multiprocessor systems, a memory consistency model establishes the requirements for correct operation that in turn governs implementations of system optimizations that can directly impact programming models. So effectively, a low level memory consistency model can directly impact algorithm design. Hence a thorough understanding of memory consistency models is required while developing programs that run on distributed shared memory systems.

This material gives a brief explanation about the intuition behind using relaxed memory consistency models for scalable design of multiprocessors. It also explains about the consistency models in real multiprocessor systems like Digital Alpha, Sparc V9 , IBM Power PC and processors from Sun Microsystems. It also addresses memory consistency models on the Andriod platform specifically using the ARM CPU architecture as an example.

Sequential Consistency Model (SC)

Introduction to Sequential Consistency Model

To write correct and efficient shared memory programs, programmers need a precise notion of shared memory semantics. To ensure correct execution, a programmer expects that the data value read should be the same as the latest value written to it in the system.

However in many commercial shared memory systems,the processor may observe an older value, causing unexpected behavior. Intuitively, a read should return the value of the "last" write to the same memory location. In uniprocessors, "last" is precisely defined by the sequential order specified by the program called program order. This is not the case in multiprocessors. A write and read of a variable, are not related by program order because they reside on two different processors.

The uniprocessors model, however can be extented to apply to multiprocessors in a natural way. The resulting model is called Sequential consistency. In brief, sequential consistency requires that all memory operations

- appear to execute one at a time,

- all memory operations of a single processor appear to execute in the order described by that processor's program.

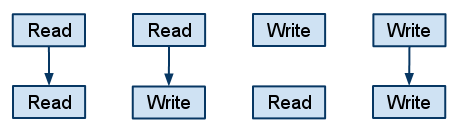

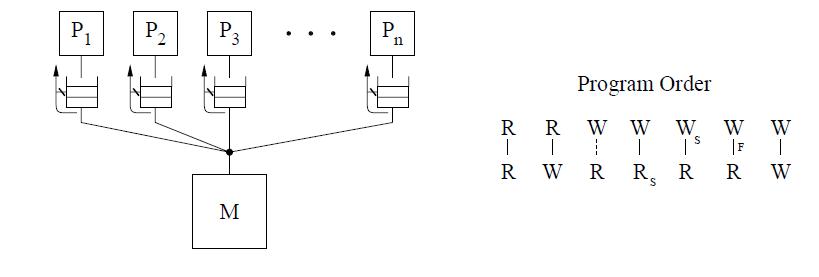

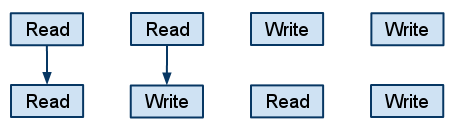

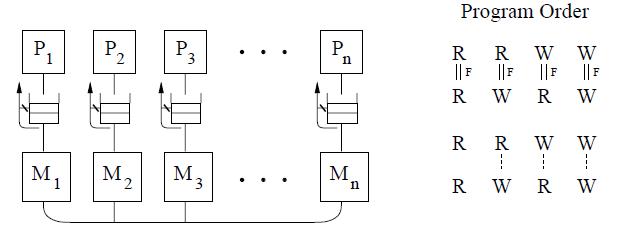

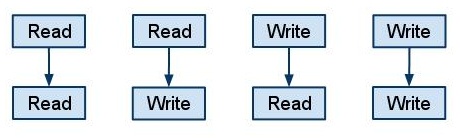

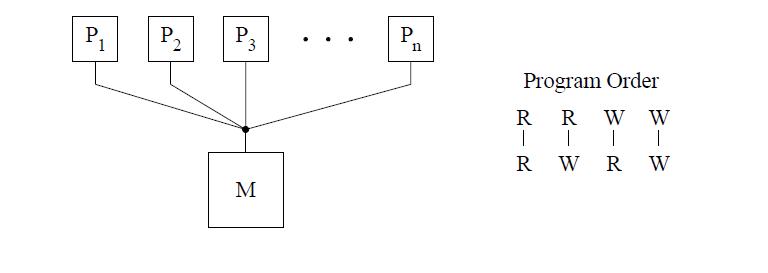

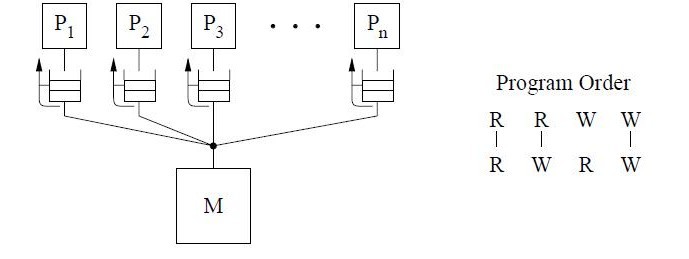

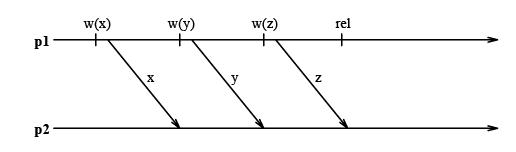

The figure above shows the basic representation for sequential consistency. This conceptual system for SC consists of n processors sharing a single logical memory. Though the figure does not show caches, an SC implementation may still cache data as long as the memory system appears as a single copy memory(i.e the writes should appear atomic). As SC requires program order to be maintained among all operation types, the pictorial representation of the program order shows all combinations of reads and writes, the line between them telling that the operations are required to complete in program order.

This model ensures that the reads of a variable, will return the new values written to it by a processor. Sequential consistency provides a simple, intuitive programming model. Because of its strict consistency requirements, sequential consistency, many of the architecture and compiler optimizations used in uniprocessors are not safely applicable to sequentially consistent multiprocessors.[ For more details on sequential consistency model and its advantages/disadvantages refer to Fundamentals of Parallel Computer Architecture textbook by Yan Solihin , page 284 through 292]. For this reason, many Relaxed consistency models have been proposed, most of which

are supported by commercial architectures.

Performance of Sequential Consistency on multiprocessors

Sequential Consistency (SC) is the most intuitive programming interface for shared memory multiprocessors. A system implementing SC appears to execute memory operations one at a time and in program order. A program written for an SC system requires and relies on a specified memory behavior to execute correctly. Implementing memory accesses according to the SC model constraints, however, would adversely impact performance because memory accesses in shared-memory multiprocessors often incur prohibitively long latencies (tens of times longer than in uniprocessor systems). To enforce sequential consistency, illegal reordering caused by hardware optimizations like Write buffers, Non-blocking caches etc and compiler optimizations like code motion, register allocation,eliminating common subexpressions, loop transformations etc resulting in reordering are not allowed. These are the optimizations which are implemented for better performance and are valid in uniprocessors. But in case of multiprocessors, these do not allow to satisfy the sequential consistency requirements and hence are not allowed. This affects the performance.

A number of techniques have been proposed to enable the use of certain optimizations by the hardware and compiler without violating sequential consistency, those having the potential to substantially boost performance. Some of them are mentioned below:

Hardware optimization techniques:

- Prefetching : A hardware optimization technique in which the processor automatically prefetches ownership for any write operations that are delayed due to the program order requirement (e.g., by issuing prefetch-exclusive requests for any writes delayed

in the write buffer), thus partially overlapping the service of the delayed writes with the operations preceding them in program order. This technique is only applicable to cache-based systems that use an invalidation-based protocol. This technique is suitable for statically scheduled processors.

- Speculative Reads : A hardware optimization technique in which read operations that are delayed due to the program order requirement are serviced speculatively ahead of time. Sequential consistency is guaranteed by simply rolling back and reissuing the read and subsequent operations in the infrequent case that the read line gets invalidated or updated before the read could have been issued in a more straightforward implementation. This is suitable for dynamically scheduled processors since much

of the roll back machinery is already present to deal with branch mispredictions.

More information about these two techniques can be found in this paper presented by Kourosh Gharachorloo, Anoop Gupta, and John Hennessy of Stanford University at International Conference on Parallel Processing, Two techniques to enhance the performance of memory consistency models

These two techniques of Prefetching and Speculative Reads are expected to be supported by several next generation microprocessors like MIPS R10000 and Intel P6, thus enabling more efficient hardware implementations of sequential consistency.

Software Optimization techniques

- Shasha and Snir's agorithm : A compiler algorithm proposed by Dennis Shasha and Marc Snir is used to detect when memory operations can be reordered without violating sequential consistency. It uses the technique where Sequential consistency can be enforced by delaying each access to shared memory until the previous access of the same processor has terminated. For performance reasons, it allows several accesses by the same processor to proceed concurrently. It performs an analysis to find a minimal set of delays that enforces sequential consistency. The analysis extends to interprocessor synchronization constraints and to code where blocks of operations have to execute atomically thus providing a new compiler optimization techniques for parallel languages that support shared variables.

In detail implementation of this algorithm can be studied from the paper : Efficient and correct execution of parallel programs that share memory

- Compiler algorithm for SPMD (Single Program multiple data) programs : The algorithm proposed by Sasha and Snir has exponential complexity. This new algorithm simplified the cycle detection analysis used in their algorithm to achieve the job in polynomial time.

More information about this can be found in this paper by Arvind Krishnamurthy and Katherine Yelick presented at 7th International Workshop on Languages and Compilers for Parallel Computing Optimizing parallel SPMD programs

In general the performance of sequential consistency model on multiprocessors with shared memory is low. But with the use of above techniques, better performance can be achieved.

All of the above schemes mentioned to improve performance of SC allow a processor to overlap or reorder its memory accesses without software support, however, either require complex or restricted hardware (e.g., hardware prefetching and rollback) or the gains are expected to be small. Further, the optimizations of these schemes can be exploited by hardware (or the runtime system software), but cannot be exploited by compilers. Work related to compiler optimizations including that by Shasha and Snir motivate their work for hardware optimizations. Hardware costs are thus high to implement these techniques.

Sequential consistency models can be quite prohibitive on mobile platforms. Until a few years ago, all Android devices were uniprocessors. More recently, a slew of Android devices based on SMP designs have been released. SMP architectures have to rely on relaxed memory consistency models for performance.

Hence researchers and vendors have alternatively relied on relaxed memory consistency models that embed the shared-address space programming interface with directives enabling software to inform hardware when memory ordering is necessary.

Relaxed Consistency Models

There are two main reasons to implement Relaxed consistency models

- It is not always necessary to maintain sequential consistency

- Hardware overhead and performance degradation are not justified for ease of programming

SC, for example, makes it hard to use write buffers,because write buffers cause operations to be presented to the cache-coherence protocol out of program order.Straightforward processors are also precluded from overlapping multiple reads and writes in the memory. system. This restriction is crippling in systems without caches, where all operations go to memory. In systems with cache coherence—which are the norm today—this restriction impacts activity whenever operations miss or bypass the cache. (Cache bypassing occurs on uncacheable operations to I/O space, some block transfer operations, and writes to some coalescing write buffers.)

The basic idea behind relaxed memory models is to enable the use of more optimizations by eliminating some of the constraints that sequential consistency places on the overlap and reordering of memory operations.relaxed models typically allow certain memory operations to execute out of program order or non-atomically.

Relaxed consistency models can be partitioned into subgroups using four comparisons: Type of Relaxation, Synchronizing vs. Non-Synchronizing, Issue vs. View-Based, and Relative Model Strength.

1. Type of Relaxation: - A simple and effective way of categorizing relaxed consistency models is by defining which requirement of sequential consistency is relaxed. relaxed consistency model either relaxes the program order or write atomicity requirement. Depending upon the sequence one or more than one of following order can be relaxed.

Read - Read

Read - Write

Write -Read

2. Synchronizing vs. Non-Synchronizing: A synchronizing model divides shared memory accesses into at least two groups and assigns a different consistency restriction to each group. A non-synchronizing model does not differentiate between individual memory accesses and assigns the same consistency model to all accesses collectively.

3. Issue vs. View-Based: An issue-based relaxation focuses on how the ordering of an instruction issue will be seen by the entire system, as a collective unit. On the other hand, a view-based method does not aim to simulate sequential consistency; in this category of models, each processor is allowed it’s own view of the ordering of events in the system, and these views do not need to match.

4. Relative Model Strength: Some models are inherently more strong (or strict) than other models. If rating the strength of a model by the relaxations of program order or atomicity, it may be possible to directly compare the strength of different models, as in a case where one model relaxes everything another model relaxes—plus more.

In this chapter we will mainly distinguish between different models based on type of relaxation of read-write ordering they allow. Each of these models have some flavors depending on some subtle differences.

Different Relaxed consistency Models

Let us now see the consistency models in some of the real multiprocessor systems listed above. We will not introduce those topics that are already covered in Solihin's textbook, like Weak ordering and Processor consistency models. RCsc and RCpc. RCsc maintains sequential consistency among synchronization operations, while RCpc maintains processor consistency among synchronization operations.

Relax Write-to-Read program order

Generally in all systems writes take more time than reads. Based on this, the key program order optimization enabled by these models is to allow a read to be reordered with respect to previous writes from the same processor. While maintaining sequential consistency typically requires the processor to wait for a previous write to complete before completing the next read operation, this optimization allows the processor to continue with a read without waiting for write operations to complete. As a result, the write latency can be effectively hidden. Also, many programs provide sequentially consistent results even if the program order from a write to a read is not maintained.[25]

This method is not sufficiently flexible for compiler optimizations but successfully mask the latency of the write operation. Compilers tend to require reordering both wrt reads and writes.

Here is an example of a program[29] that fails:

P1 P2

Flag1=1 Flag2=1

if(Flag2==0) if(Flag1==0)

Enter Critical Section Enter Critical Section

This is because the reads are allowed to bypass writes. This can cause P1 to enter critical section without setting the flag. This can cause P2 also to enter critical section.

However below code[29] works well with this model:

P1 P2

Data=2000 while(Flag==0);

Flag=1 Read Data

Since writes are not allowed to be reordered with respect to each other, this code works flawlessly.

Therefore, even though systems that exploit this optimization are not sequentially consistent, they appear sequentially consistent to a large class of programs.

Different flavors

- processor consistency- PC

- IBM 370

- Intel Pentium Pro

- Sun’s Total Store Order

The three models differ in when they allow a read to return the value of a write. Also, whether a processor is allowed to return the value of its own write before the write completes in memory.

IBM-370

The IBM 370 model is the most restrictive because it prevents a read from returning the value of a write before the write is made visible to all processors. Therefore, even if a processor issues a read to the same address as a previous pending write from itself, the read must be delayed until the write is made visible to all processors.

The IBM-370 model allows a write followed by a read to complete out of program order unless the two operations are to the same location, or if either operation is generated by a serialization instruction, or if there is a serialization instruction in program order between the two operations. As seen earlier write buffers are used to implement this reordering. As the writes are handled by write buffer reads can be performed before preceding write.

To enforce the program order constraint from a write to a following read, the IBM 370 model provides special serialization instructions that may be placed between the two operations. Some serialization instructions are special memory instructions that are used for synchronization (e.g., compare&swap), while others are non-memory instructions such as a branch. Referring back to the first example program in this section, placing a serialization instruction after the write on each processor provides sequentially consistent results for the program even when it is executed on the IBM 370 model.

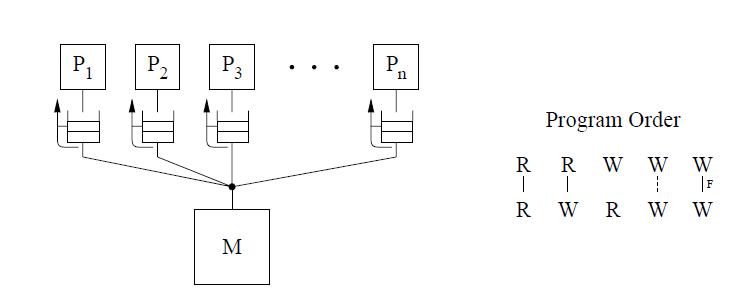

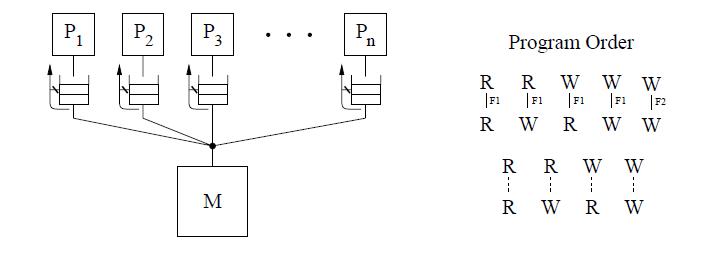

The conceptual system shown in the above figure is similar to that used for representing SC. The main difference is the presence of a buffer between each processor and the memory. Since we assume that each processor issues its operations in program order, we use the buffer to model the fact that the operations are not necessarily issued in the same order to memory. The cancelled reply path from the buffer to the processor implies that a read is not allowed to return the value of a writeto the same location from the buffer.

The IBM-370 model has two types of serialization instructions: special instructions that generate memory operations (e.g., compare-and-swap) and special non-memory instructions (e.g., a special branch).

Total Store Ordering (TSO)

The total store ordering (TSO) model partially relaxes the above system's requirement by allowing a read to return the value of its own processor’s write even before the write is serialized with respect to other writes to the same location. However, as with sequential consistency, a read is not allowed to return the value of another processor’s write until it is made visible to all other processors.

The TSO model allows reordering of read followed by write without any constraint. All other program orders are maintained. The conceptual system is almost identical to that of IBM-370 except the forwarding path from the buffer to a read is no longer blocked. Therefore, if a read matches (i.e., is to the same location as) a write in the write buffer, the value of the last such write in the buffer that is before it in program order is forwarded to the read. Otherwise, the read returns the value in memory, as in the SC and IBM-370 models.

If we consider operations as executing in some sequential order, the buffer-and-memory value requirement requires the read to return the value of either the last write to the same location that appears before the read in this sequence or the last write to the same location that is before the read in program order, whichever occurs later in the sequence.

For TSO, a safety net for write atomicity is required only for a write that is followed by a read to the same location in the same processor. The atomicity can be achieved by ensuring program order from the write to the read using read-modify-writes.

Unlike IBM 370, the TSO model does not provide explicit safety net. But read-modify-write operations can be used to provide the illusion that program order is maintained between a write and a following read. Program order appears to be maintained if either the write or the read is already part of a read-modify-write or is replaced by a read-modify-write.

Difference between IBM370 and TSO:

Let us consider the program segment below, taken from Technical Report by Kourosh Gharachorloo on Memory Consistency Models for shared-memory Multiprocessors, to demonstrate the difference between TSO and IBM 370 models.

a)

P1 P2 a1:A=1 a2:B=1 b1:u=A b2:v=b c1:w=B c2:x=A

b)

P3 P4 a1:A=1 a2:B=1 b1:C=1 b2:C=2 c1:u=C c2:v=C d1:w=B d2:x=A

First consider the program segment in (a). Under the SC or IBM-370 model, the outcome (u,v,w,x)=(1,1,0,0) is disallowed. However, this outcome is possible under TSO because reads are allowed to bypass all previous writes, even if they are to the same location; therefore the sequence (b1,b2,c1,c2,a1,a2) is a valid total order for TSO. Of course, consistency value requirement still requires b1 and b2 to return the values of a1 and a2, respectively, even though the reads occur earlier in the sequence than the writes. This maintains the intuition that a read observes all the writes issued from the same processor as the read. Consider the program segment in (b). In this case, the outcome (u,v,w,x)=(1,2,0,0)is not allowed under SC or IBM-370, but is possible under TSO.

Processor Consistency (PC)

Finally, the PC model relaxes both constraints, such that a read can return the value of any write before the write is serialized or made visible to other processors.

Unlike the previous two models, Processor Consistency, first introduced in [21], is both view-based and non-synchronizing. In other words, each processor is allowed to have its own view of the system, and all memory accesses are treated the same. The order in which writes are observed must be the same as the order in which they were issued. However, if two processors issue writes, those writes do not need to appear to execute in the same order, from the perspective of either of the two processors or a third processor. The conditions of Processor Consistency in another way is

- Before a read operation is allowed to perform with respect to any other processor, all previous read accesses must have already been performed.

- Before any write operation is allowed to perform with respect to any other processor, all previous reads and writes must have been performed. The two conditions above imply one important fact: only the read-after-write program order requirement is relaxed.

Even in PC model, there is no explicit safety net. Also the TSO approach to provide illusion of a safety net is enough in case of reads. But it cannot be used in case of writes.

Differences in IBM370, TSO and PC

Consider the below code:

a) b)

A = Flag1 = Flag2 = 0 A = B = 0

P1 P2 P1 P2 P3

Flag1 = 1 Flag2 = 1 A = 1

A = 1 A = 2 if (A == 1)

register1 = A register3 = A B = 1

register2 = Flag2 register4 = Flag1 if (B == 1)

Result: register1 = 1, register3 = 2, Result: B = 1, register1 = 0

register2 = register4 = 0

TSO and PC allow the results in part a to occur because they let the reads of the flags to happen before the writes. It is not possible as per IBM370 as the read of A is not allowed on each processor till the write on that processor is done. Similarly, given part b results are allowed as per PC but not by IBM370 or TSO.

Relax Write-to-Write program order

The second category of relaxed models that we consider allow two writes to execute out of program order in addition to allowing the reordering of a write followed by a read. This relaxation enables a number of hardware optimizations, including write merging in a write buffer and overlapping multiple write misses, all of which can lead to a reodering of write operations. Therefore, write operations can be serviced at a much faster rate.

Even this model is not flexible enough for compiler optimizations.

SPARC V8 Partial Store Ordering

Extra hardware optimization enabled by PSO in addition to the previous set of models is that writes to different locations from the same processor can be pipelined or overlapped and are allowed to reach memory or other cached copies out of program order. PSO and TSO have the same atomicity requirements by allowing a processor to read the value of its own write early, and preventing a processor from reading the value of another processor’s write before the write is visible to all other processors.[26]

This model can result in non sequentially consistent results in both of the below cases as opposed to the previously mentioned 3 protocols:

a)

P1 P2

Flag1=1 Flag2=1

if(Flag2==0) if(Flag1==0)

Enter Critical Section Enter Critical Section

b)

P1 P2 Data=2000 while(Flag==0); Flag=1 Read Data

As a safety net between a write and a following read, and to ensure atomicity of writes, same mechanism as TSO is used. For maintaining order between two writes, PSO provides an instruction called STBAR (Barrier). The safety net provided by PSO for imposing the program order from a write to a read, and for enforcing write atomicity, is the same as TSO. PSO provides an explicit STBAR instruction for imposing program order between two writes.

The Partial Store Ordering(PSO) model is very similar to the TSO model for SPARC V8. The figure below shows an identical conceptual system. There is only a slight difference in the program order, where writes to locations can be overlapped only if they are not to same locations. This is represented by the dashed line between the W's in the program order figure. Safety net for the program order is provided through a fence instruction, called the store barrier or STBAR, that may be used to enforce the program order between writes.

Let us consider an example from Technical Report by Kourosh Gharachorloo on Memory Consistency Models for shared-memory Multiprocessors to demonstrate the working of PSO.

P1 P2

a1:A=1 a2:while(Flag==0)

b1:B=1 b2:u=A

c1:Flag=1 c2:v=B

With relaxed Write to Read models, the only value for (u,v) is (1,1). With PSO, since it allows writes to different locations to complete out of program order, it also allows the outcomes (0,0) or (0,1) or (1,0) for (u,v). In this example, STBAR instruction needs to be placed immediately before c1 on P1 in order to disallow all outcomes except (1,1).

Relax Read-to-Read and Read-to-Write program orders

This model sheds the restriction on program order between all operations, including read to read and read followed by write to different locations . This flexibility provides the possibility of hiding the latency of read operations by implementing true non-blocking reads in the context of either static (in-order) or dynamic (out-of-order) scheduling processors, supported by techniques such as non-blocking (lockup-free) caches and speculative execution. The compiler also has full flexibility to reorder operations.

Weak ordering (WO), Release consistency (RC), DEC Alpha, Relaxed Memory Order (RMO), and PowerPC are examples of this model, with the last three models for commercial architectures. Except for Alpha,all the other models allow reordering of two reads to the same location.

All of the models in this group allow a processor to read its own write early with the exception of RCpc, a flavor of Release consistency (RC) and PowerPC. All the three commercial architectures provide explicit fence instructions to ensure program order. Frequent use of fence instructions can incur a significant overhead due to an increase in the number of instructions and the extra delay that may be associated with executing fence instructions.

DEC Alpha

The program order constraints allow reads and writes to different locations to complete out of program order

unless there is a fence instruction between them. Memory operations to the same location, including reads, are required to complete in program order. Safety net for program order is provided through the fence instructions, the memory barrier (MB) and the write memory barrier (WMB). The MB instruction can be used to maintain program order between any memory operations, while WMB instruction provides this guarantee only among write operations. The Alpha model does not require a safety net for write atomicity.

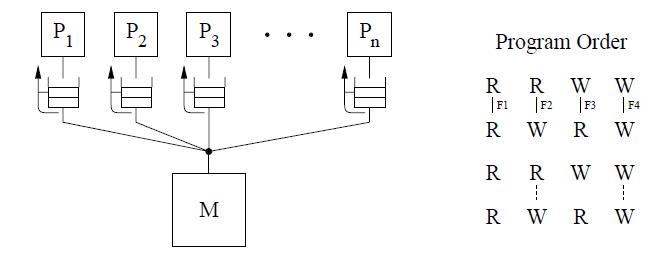

The conceptual system shown above is the same same as IBM 370 which requires a read to return the value of the last write operation to the same location. But since we can relax the program order from a write to a read, we can safely exploit optimizations such as read forwarding.

Relaxed Memory Order (RMO)

The SPARC V9 architecture uses RMO which is an extension of the TSO and PSO models used in SPARC V8.

The Read to Read, Read to write program order is relaxed in this model, like in PSO. But a Read to Write or the order between two writes to the same location are not relaxed. This is shown in the figure for program order below.

RMO provides four types of fences[F1 through F4] that allow program order to be selectively maintained between any two types of operations. A single fence instruction, MEMBAR, can specify a combination of the above fence types by setting the appropriate bits in a four-bit opcode. No safety net for write atomicity is required.

IBM PowerPC

This model constrains the program order

-Between sub-operations. That is each operation may consist of multiple sub-operations and that all sub-operations of the first operation must complete before any sub-operations of the second operation.[represented by the double lines between operations in the figure below].

-Writes to the same location.

-Among conflicting operations.

The IBM PowerPC model exposes multiple-copy semantics of memory to the programmer as shown in the conceptual system above. Safety net fence instruction is called SYNC which is similar to the MB fence instruction of the Alpha systems. However, SYNC between two reads allows to occur out of program order. Also PowerPC allows a write to be seen early by another processor’s read. Hence a read-modify-write operation may be needed to enforce program order between two reads to the same location as well as to make writes appear atomic.

Android Platform (ARM Architecture)

ARM SMP provides weak memory consistency guarantees. As we know with WO consistency, unless the programmer explicitly defines the ordering using synchronization primitives, the hardware doesn't guarantee any ordering of memory accesses.

There are four basic situations to consider:

1. store followed by another store

2. load followed by another load

3. load followed by store

4. store followed by load

Thread 1 Thread 2 A = 100 loop_until(B == 1) B = 1 print A

The Big Picture - Relationship Between Different Models

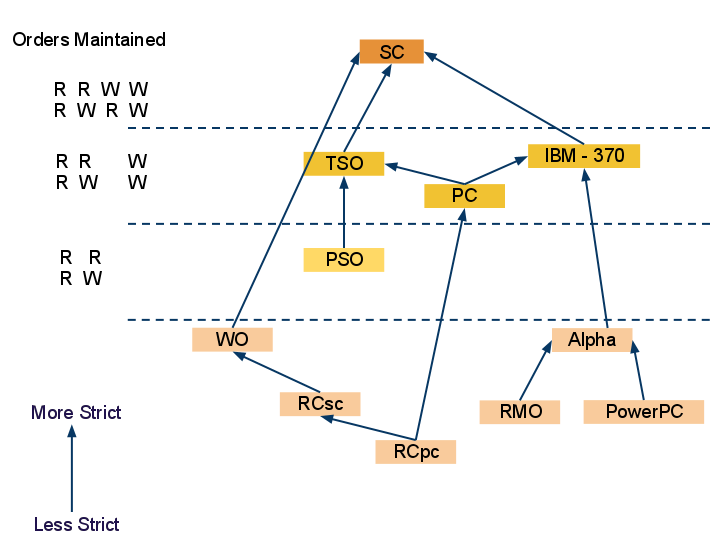

In this diagram we can see the different memory consistency models and how they are related to each other. The most strict model SC - sequential consistency is at the top which maintains all 4 orders of read-write. The first relaxation of write-read order is allowed in memory models TSO, PC and IBM - 370 which can be seen at second level from top. Third level which comprise of PSO model also allows write - write order relaxation and hence is less strict than any of the TSO, PC or IBM - 370. In the bottom level both read-read and read-write program orders are also relaxed and hence are the least strict. Different flavors of the model in this level are discussed later in chapter and in Yan Solihin[9] text.

Release Consistency Related Models

Release consistency is one of the consistency models used in the domain of the concurrent programming (e.g. in distributed shared memory, distributed transactions etc.). Systems of this kind are characterized by the existence of two special synchronization operations, release and acquire. Before issuing a write to a memory object a node must acquire the object via a special operation, and later release it. Therefore the application that runs within the operation acquire and release constitutes the critical region. The system is said to provide release consistency, if all write operations by a certain node are seen by the other nodes after the former releases the object and before the latter acquires it.

Release consistency provides these two kinds. Acquire accesses are used to tell the memory system that a critical region is about to be entered. Release accesses say that a critical region has just been exited. These accesses can be implemented either as ordinary operations on special variables or as special operations.

Release Consistency Models

We can broadly divide the types of models based on their implementations into the following.

Eager Release

Lazy Release

Eager Release Consistency Model

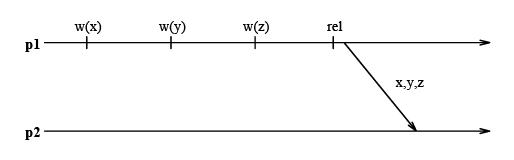

In Eager Release Consistency Model , the invalidation (or write notices) are propagated at release points. Munin's write shared protocol proposed by John K. Bennett, John B. Carter, and Willy Zwaenepoel of Rice University implemented this Eager Release Consistency Model. It is a software implementation of Release consistency Model which buffers writes until a release, instead of pipelining them as in the DASH implementation. At the release all writes going to the same destination are merged into a single message. This is illustrated in the following diagram:

This approach is conservative because the system does not know when the next acquire by another processor will occur or whether a given process will even perform an acquire and need to see those write notices.

More information about the implementation of this Eager Release Consistency Model can be studied from these two papers: Implementation and Performance of Munin and Munin: Distributed Shared Memory Using Multi-Protocol Release Consistency

Lazy Release Consistency Model

Lazy release consistency (LRC) is consistency model most frequently used in Software Distributed Shared Memory. It is used for implementing release consistency that lazily pulls modifications across the interconnect only when necessary. The basic concept behind the protocol is to allow processors to continue referencing cache blocks that have been written by other processors. Although write notices are sent as soon as a processor writes a shared block, invalidations occur only at acquire operations. This is sufficient to ensure that true sharing dependencies are observed. Lazy algorithms such as LRC do not make modications globally visible at the time of a release. Instead, LRC guarantees only that a processor that acquires a lock will see all modications that precede the lock acquire.

More information about Lazy Release Consistency Model can be obtained from these two papers : Lazy Release Consistency for Hardware-Coherent Multiprocessors and Lazy Release Consistency for Software Distributed Shared Memory

ARMv8's new one sided Release consistency model

Describe how this new feature adds value to this platform.

Comparison between Lazy and Eager Release Consistency Models

Eager release consistency represents the state of the art in release consistent protocols for hardware-coherent multiprocessors, while lazy release consistency has been shown to provide better performance for software distributed shared memory (DSM). Several of the optimizations performed by lazy protocols have the potential to improve the performance of hardware-coherent multiprocessors as well, but their complexity has precluded a hardware implementation.

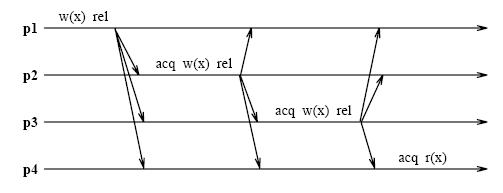

An Eager Release Consistency Model like Munin's write shared protocol may send more messages than a message passing implementation of the same application. The following figure shows an example where processors p1 through p4 repeatedly acquire the lock l, write the shared variable x, and then release l.

If an update policy is used in conjunction with Munin's write shared protocol and x is present in all caches, then all of these cached copies are updated at every release. Logically, however it suffices to update each processor's copy only when it acquires l. This results in a single message exchange per acquire as in a message passing implementation.

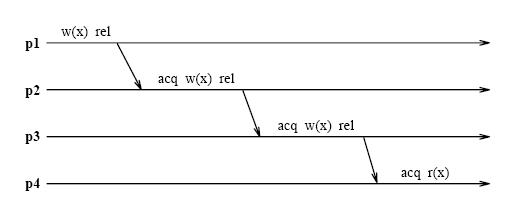

Unlike eager algorithms such as Munin's write shared protocol, lazy algorithms such as LRC (Lazy Release Consistency) do not make modications globally visible at the time of a release. Instead LRC guarantees only that a processor that acquires a lock will see all modications that precede the lock acquire. As indicated in the above figure , all modications that occur in program order before any of the releases in p1 through p4 precede the lock acquisition in p4. With LRC, modications are propagated at the time of an acquire. Only the modications that precede the acquire are sent to the acquiring processor. The modifications can be piggybacked on the message that grants the lock, further reducing message traffic. The following figure shows the message traffic in LRC for the same shared data accesses as in Figure under lazy consistency model section. l and x are sent in a single message at each acquire.

More information about differences between Eager and Lazy consistency Models can be found here : Lazy v. Eager Release Consistency and Lazy Release Consistency for Software Distributed Shared Memory

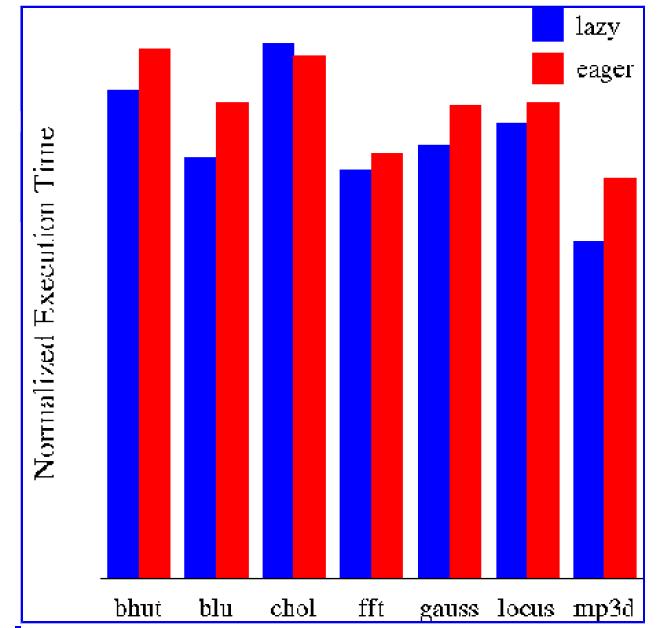

Performance Analysis of Lazy and Eager Consistency Models

Leonidas I. Kontothanassis, Michael L. Scott, and Ricardo Bianchini from University of Rochester have performed experimentations to evaluate a lazy release-consistent protocol suitable for machines with dedicated protocol processors. Their results indicate that the first protocol outperforms eager release consistency by as much as 20% across a variety of applications. The lazier protocol, on the other hand, is unable to recoup its high synchronization overhead. This represents a qualitative shift from the DSM world, where lazier protocols always yield performance improvements. Based on their results, they conclude that machines with flexible hardware support for coherence should use protocols based on lazy release consistency, but in a less aggressively lazy form than is appropriate for DSM.

These are the results they show from their experimentations to compare Lazy and Eager Consistency Models:

More information about this experimentation and results can be obtained from their paper : Lazy Release Consistency for Hardware-Coherent Multiprocessors

Comparison Between Different Models

In this section we will consider the comparison made between different consistency models in Performance Evaluation of Memory Consistency Models for Shared-Memory Multiprocessors by Kourosh Gharachorloo, Anoop Gupta, and John Hennessy.

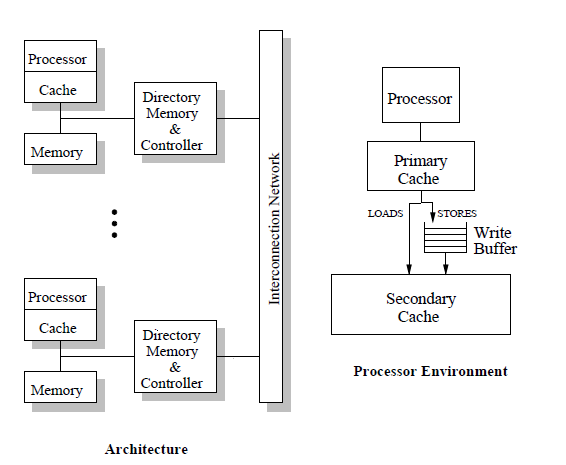

Architecture used

chosen an architecture that resembles

the DASH shared-memory multiprocessor [13],

Physical

memory is distributed among the nodes and cache coherence

is maintained using a distributed directory-based protocol. For

each memory block, the directory keeps track of remote nodes

caching the block, and point-to-point messages are sent to invalidate

remote copies. Acknowledgement messages are used to

inform the originating processing node when an invalidation has

been completed.

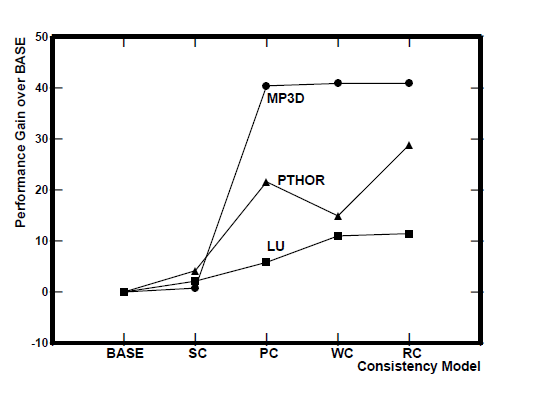

Here performance is defined as the processor utilization achieved in execution. reason for using processor utilization as the figure of merit is that it provides reasonable results even when the program’s control path is not deterministic and depends on relative timing of synchronization accesses. The processor utilization for each model is normalized to the performance of the BASE model for that program. The results show a wide range of performance gains due to the less strict models. Moving from BASE to SC, the gains are minimal. The largest gains in performance arise when moving from SC to PC. Surprisingly, WC does worse than PC for PTHOR. RC performs better than all the other models, but the gains over PC are small. The maximum gain from relaxing the consistency model is about 41% for MP3D, 29% for PTHOR, and 11% for LU.

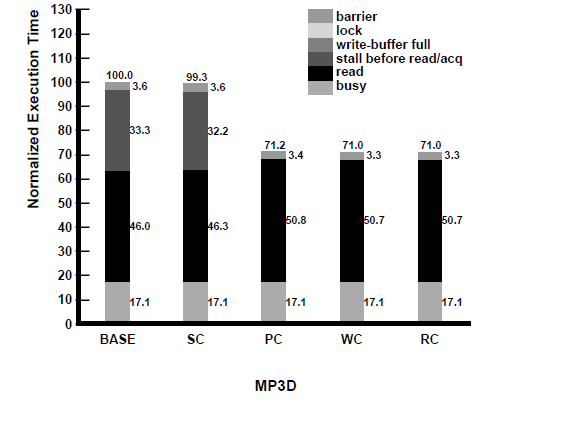

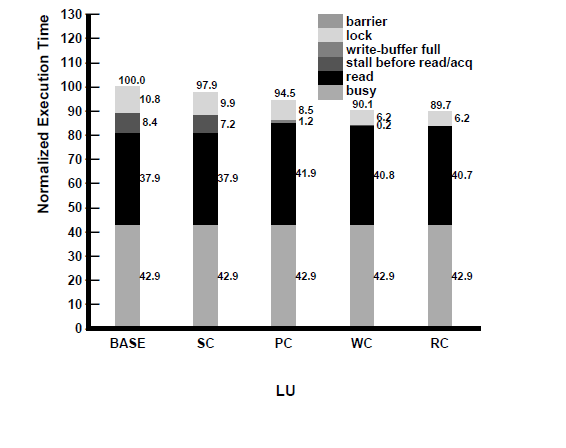

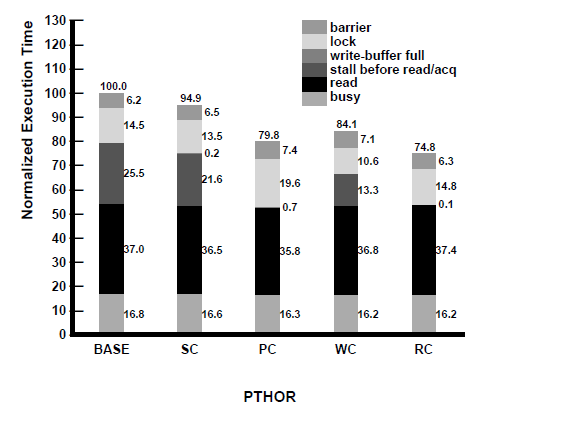

To better understand the above results, in Figure 4 we present a breakdown of the execution time for the applications under each of the models. The execution time of the models are normalized to the execution time of BASE for each application. The bottom section of each column represents the busy time or useful cycles executed by the processor. The black section above it represents the time that the processor is stalled waiting for reads. This time does not include the time that a read/acquire access may be stalled waiting for previous writes to perform. This time is represented by the section above it. The three sections on top of that represent the stalls due to write buffer being full, time spent spinning while waiting for acquires to complete, and time spent waiting at a barrier.2 Some general observations that can be made from the breakdown are: (i) the latency of read misses forms a large portion of the idle time, especially once we move to PC, WC, and RC; (ii) the major reason for BASE and SC to be worse than the other models is the stalling of the processor before reads (and acquires) for pending writes to complete; (iii) the write buffer being full does not seem to be a factor in hindering the performance of PC; and finally, (iv) the reason for WC performing worse than PC and RC is the extra processor stalls at acquires and the first read after release accesses (as described in Section 4). The small variation in busy times for PTHOR is due to the non-deterministic behavior of the application for the same input. We now look at the comparative performance of the models in greater detail.

In another study Ronald Bos et al. investigated the performance benefits of relaxed consistency models on multiprocessors executing a process network application. They used a trace-driven simulator, developed using SystemC, to model the distributed shared memory system of a

prototype multiprocessor developed at Philips called Philips CAKE (a nonuniform, distributed shared memory multiprocessor prototype). The simulator offers two consistency models: Sequential Consistency (SC) and a generalized Relaxed Consistency (RC) model. Input traces were generated by running a process network application in a cycle-accurate simulator of the prototype multiprocessor. The results showed that relaxed consistency has marginal performance benefits over sequential consistency. The advantage of relaxed memory consistency decreases for increasing network latency. More information about this study can be found in this paper Performance Benefits of Relaxed Memory Consistency for Process Network Applications

Software based concurrency models - C++ as an example

Describe how C++ provides concurrency with software as opposed to hardware level consistency provided by platforms described above

Some Shortcomings of Relaxed Models

Even though relaxed models enable desirable optimizations, their major drawback is increased programming complexity. Most programmers have implicit assumptions about the memory behavior of a shared-memory multiprocessor and use these assumptions when reasoning about the correctness of their programs. Correctness problems arise when certain orders that are implicitly assumed by the programmer are not maintained by the underlying memory model. The advantage of sequential consistency is that no matter what implicit assumptions a programmer makes regarding the program order or atomicity of memory operations, SC conservatively maintains all such orders. Therefore, the programmer’s implicit assumptions are never violated.

In contrast to sequential consistency, relaxed memory models require programmers to abandon their implicit and intuitive understanding of how memory behaves. Most of the relaxed models we have described require the programmer to reason with low level (and non-intuitive) reordering optimizations to understand the behavior of their programs. In addition, many of the models have been defined using complicated terminology, and in some cases, the original definitions have ambiguities which leave the semantics open to interpretation. These factors further exacerbate the difficulties in programming these models.

Another difficulty with relaxed models is the lack of compatibility among the numerous models and systems in existence. Many of the subtle differences among models make little difference in the actual performance of a model. However, such differences make the task of porting programs across different systems quite cumbersome. Similarly, the variety of models in existence make it difficult for programmers to adopt a programming methodology that works across a wide range of systems. With all their shortcomings, relaxed models are widely used in many commercial multiprocessor systems, including systems designed by major computer manufacturers such as Digital Equipment, IBM, and Sun Microsystems. The wide-spread use of these systems suggests that even though sequential consistency is simpler to use for programmers, performance often plays an important role in the ultimate choice made by system designers and programmers. Nevertheless, we would ideally like to provide the extra performance with as little programming complexity as possible.

Relaxed Memory Consistency Model Concept Quiz

Answers: 1. 2. 3. 4. 5. 6. 7. 8. 9. 10.

References

- Speculative Sequential Consistency with Little Custom Storage

- Two techniques to enhance the performance of memory consistency models

- Optimizing parallel SPMD programs

- Efficient and correct execution of parallel programs that share memory

- Shared Memory Consistency Models

- Designing Memory Consistency Models For Shared-Memory Multiprocessors

- Performance Evaluation of Memory Consistency Models for Shared-Memory Multiprocessors

- A Primer on Memory Consistency and Cache Coherence

- Multiprocessors Should Support Simple Memory-Consistency Models

- Technical Report by Kourosh Gharachorloo on Memory Consistency Models for shared-memory Multiprocessors

- Consistency Models

- Delayed Consistency and Its Effects on the Miss Rate of Parallel Programs

- Jaroslav Ŝevčik, Viktor Vafeiadis, Francesco Zappa Nardelli, Suresh Jagannathan, Peter Sewell. Relaxed-memory concurrency and verified compilation

- Joseph Devietti, Jacob Nelson, Tom Bergan, Luis Ceze, Dan Grossman. RCDC: a relaxed consistency deterministic computer

- Abdul Naeem, Xiaowen Chen, Zhonghai Lu, Axel Jantsch. Scalability of relaxed consistency models in NoC based multicore architectures

- Alexander Jaffe, Thomas Moscibroda, Laura Effinger-Dean, Luis Ceze, Karin Strauss. The Impact of Memory Models on Software Reliability in Multiprocessors

- Jacob Burnim Koushik Sen Christos Stergiou. Testing concurrent programs on relaxed memory models

- Sela Mador-Haim, Rajeev Alur, Milo M. K. Martin. Litmus tests for comparing memory consistency models: how long do they need to be?

- Implementation and Performance of Munin

- Munin: Distributed Shared Memory Using Multi-Protocol Release Consistency

- Lazy v. Eager Release Consistency

- Lazy Release Consistency for Software Distributed Shared Memory

- Memory Consistency Models, by Sarita Adve

- Abdul Naeem, Xiaowen Chen, Zhonghai Lu, Axel Jantsch. Realization and performance comparison of sequential and weak memory consistency models in network-on-chip based multi-core systems

- Jade Alglave, Luc Maranget. Stability in weak memory models. Computer Aided Verification: Lecture Notes in Computer Science Volume 6806, 2011, pp 50-66, 2011

- Kourosh Gharachorloo, Daniel Lenoski, James Laudon, Phillip Gibbons, Anoop Gupta, and John Hennessy. Memory consistency and event ordering in scalable shared-memory multiprocessors. In ISCA ’98: 25 Years of the International Symposia on Computer Architecture (Selected Papers), pages 376–387. ACM, 1998.

- James R. Goodman. Cache consistency and sequential consistency. Technical Report 61, IEEE Scalable Coherent Interface (SCI) Working Group, March 1989.

- Dan Lenoski, James Laudon, Kourosh Gharachorloo,Anoop Gupta, and John Hennessy. The directory-based cache coherence protocol for the DASH multiprocessor. In Proceedings of the 17th Annual International Symposium on Computer Architecture, May 1990.

- Jenny Mankin. Parallel Computing Memory Consistency Models: A Survey in Past and Present Research

- http://xenon.stanford.edu/~hangal/manovit_thesis.pdf

- http://www.cs.utah.edu/~rajeev/cs7820/pres/7820-12.pdf

- http://web.cecs.pdx.edu/~alaa/ece588/notes/mem-consistency.pdf

- http://www.cs.nyu.edu/~lerner/spring10/MCP-S10-Read06-ConsistencyTutorial.pdf

- http://rsim.cs.illinois.edu/~sadve/JavaWorkshop00/talk.pdf

- Consistency models by KFPM university