CSC/ECE 506 Spring 2011/ch11 BB EP: Difference between revisions


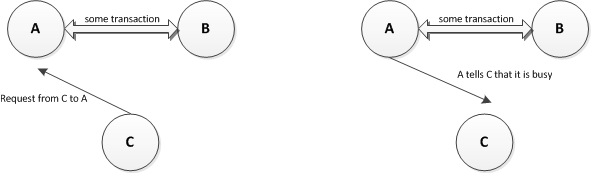

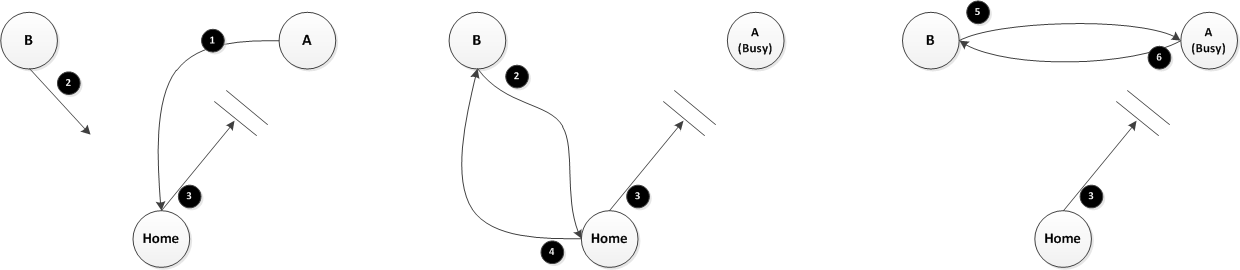


Revision as of 19:17, 18 April 2011

History

Background

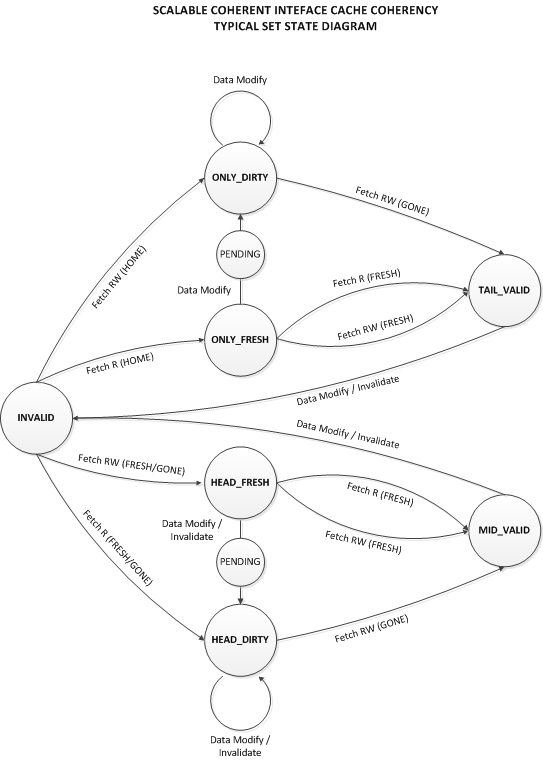

The Scalable Coherent Interface (SCI) cache coherence protocol defines a set of states for memory, a set of states for cache lines, and a set of actions that transition between these states when processors access memory.

Three sets of these attributes are defined for minimal, typical, and full applications:

- Minimal - for ‘trivial but correct’ applications that require the presence of the memory in only one cache line. It does not enable read-sharing, and is appropriate for small multi-processors or applications that don’t require significant sharing.

- Typical - enables read-sharing of a memory location and adds provisions for efficiency, such as DMA transfers, local caching of data, and error recovery. This set adds an additional stable memory state (FRESH) and multiple cache states. This set will be the focus of this article going forward.

- Full - adds support for pair-wise sharing, QOLB lock bit synchronization, and cleansing and washing of cache lines.

State Diagrams

Memory States

The memory states define the state of the memory block from the perspective of the home directory. This 2-bit field is maintained in the memory tag by the home directory along with the pointer to the head of the sharing list (forwId). This simple state model includes three stable states and one semi-stable state.

Table 1: Memory States

| State | Description | Minimal | Typical | Full |
|---|---|---|---|---|
| HOME | no sharing list | Y | Y | Y |
| FRESH | sharing-list copy is the same as memory | | Y | Y |
| GONE | sharing-list copy may be different from memory | Y | Y | Y |
| WASH* | transitional state (GONE to FRESH) | | | Y |
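As a rough illustration of the memory tag described above, the following Python sketch models the 2-bit state field and the forwId pointer. The class names, the field layout, and the numeric encodings are assumptions made for illustration; the text only states that the field is 2 bits wide and which states exist.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class MemState(IntEnum):
    # Numeric encodings are assumed; only the 2-bit width comes from the text.
    HOME = 0   # no sharing list
    FRESH = 1  # sharing-list copy is the same as memory
    GONE = 2   # sharing-list copy may be different from memory
    WASH = 3   # transitional state (GONE to FRESH)


@dataclass
class MemoryTag:
    """Per-block tag kept by the home directory (hypothetical layout)."""
    state: MemState = MemState.HOME
    forw_id: Optional[int] = None  # node id of the sharing-list head, if any

    def has_sharing_list(self) -> bool:
        # HOME is the only state without a sharing list.
        return self.state is not MemState.HOME


# All four states fit in the 2-bit field.
fits_in_two_bits = all(s.value < 2 ** 2 for s in MemState)
```

A tag created with no arguments starts in HOME with no head pointer, which matches the "no sharing list" row of Table 1.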

Cache States

The cache-line states are maintained by each processor's cache-coherency controller. This 7-bit field is stored in each cache line in the sharing list, along with the pointer to the next sharing-list node (forwId) and the previous sharing-list node (backId). Seven bits allow up to 128 possible values, and SCI defines twenty-nine stable states for use in the minimal, typical, and full sets.

Stable states are those cache states that exist when a memory transaction is not in progress. Their names are derived from a combination of the position of the node in the sharing list, such as ONLY, HEAD, MID, and TAIL, and the state of the data for that node, such as FRESH, CLEAN, DIRTY, VALID, or STALE.
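The arithmetic and naming scheme above can be sketched in a few lines of Python. This is only an illustration: the `CacheLineTag` class and the `split_state` helper are hypothetical, and the sketch assumes state names are written as a position and a data state joined by an underscore (for example `HEAD_DIRTY`).

```python
from dataclasses import dataclass
from typing import Optional, Tuple

STATE_BITS = 7       # width of the cache-line state field
STABLE_STATES = 29   # number of stable states quoted in the text


def split_state(name: str) -> Tuple[str, str]:
    """Split a name such as 'HEAD_DIRTY' into (list position, data state)."""
    position, data = name.split("_", 1)
    return position, data


@dataclass
class CacheLineTag:
    """Per-line coherence tag (hypothetical layout)."""
    state: str
    forw_id: Optional[int] = None  # next node in the sharing list
    back_id: Optional[int] = None  # previous node in the sharing list


encodings = 2 ** STATE_BITS          # 128 possible values
unused = encodings - STABLE_STATES   # encodings not taken by stable states
```

With these numbers, 99 of the 128 encodings remain after the stable states are assigned.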

Race Conditions

In a distributed shared memory system with caching, the emergence of race conditions is extremely likely. This is mainly due to the lack of a bus for serialization of actions. It is further compounded by the problem of network errors and congestion.

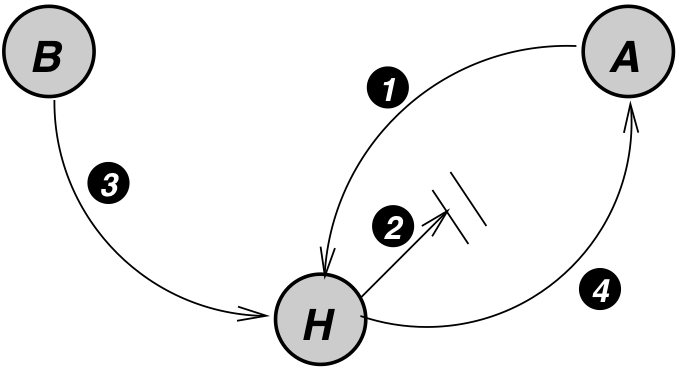

The early invalidation case from Section 11.4 in Solihin is an excellent example of a race condition that can arise in a distributed system. Recall the diagram from the text, and the cache coherence actions, as shown below.

The circled actions are as follows:

- A sends a read request to Home.

- Home replies with data (but the message gets delayed).

- B sends a write request to Home.

- Home sends an invalidation to A, and it arrives before the ReplyD.

The SCI protocol has a way to handle this race condition, as well as many others, and the following sections will discuss how the SCI protocol design can prevent this race condition.

Prevention in the SCI Protocol

Race conditions are almost non-existent in the SCI protocol, due primarily to the protocol's design. The following subsections briefly describe how this is accomplished, and then show what happens when the Early Invalidation scenario arises in the SCI protocol.

Atomic Transactions

SCI's primary method for preventing race conditions is having atomic transactions. A transaction is defined as a set of sub-actions necessary to complete some requested action, such as reading from memory or writing to a variable. Suppose, for example, that node A is in the middle of a transaction with node B. Node C then tries to make a request of node A. Node A will respond to node C that it is busy, telling node C to try again, as shown in the following diagram.

Thus, in the Early Invalidation race, node A would be in the middle of a transaction, which would prevent node B from invalidating it. Note, however, that nodes will not stay in the busy state indefinitely. Doing so would lead to potential deadlocks, so all transactions have timeouts that will eventually cause the transaction to fail, thus moving the node out of the busy state and making it able to respond to requests again.
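The busy-and-retry behavior with a timeout can be sketched as follows. This is a minimal model under stated assumptions, not the SCI implementation: the `Node` class, the `"BUSY"`/`"ACK"` replies, the logical clock passed as `now`, and the timeout of 5 ticks are all hypothetical.

```python
class Node:
    """A node that rejects requests while it is inside a transaction."""

    def __init__(self, name, timeout=5):
        self.name = name
        self.timeout = timeout    # assumed timeout, in logical clock ticks
        self.busy_since = None    # tick at which the current transaction began

    def begin_transaction(self, now):
        self.busy_since = now

    def end_transaction(self):
        self.busy_since = None

    def is_busy(self, now):
        if self.busy_since is None:
            return False
        if now - self.busy_since >= self.timeout:
            # The transaction timed out and fails; the node leaves the busy state.
            self.busy_since = None
            return False
        return True

    def handle_request(self, sender, now):
        # A busy node tells the sender to try again later.
        return "BUSY" if self.is_busy(now) else "ACK"


a = Node("A")
a.begin_transaction(now=0)            # A starts a transaction with B
first_try = a.handle_request("C", 1)  # C is told to retry
later_try = a.handle_request("C", 5)  # A's transaction has timed out by now
```

Using a logical clock rather than wall-clock time keeps the example deterministic; a real controller would rely on hardware timers.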

Head Node

Another method for preventing race conditions is to have the Head node of a sharing list perform many of the coherence actions. As a result, only one node is performing actions such as writes and invalidations of other sharers. Since only one node is performing these actions, the possibility of concurrent actions is decreased. If another node wants to write, for instance, it must become the Head Node of the sharing list for the cache line to which it wants to write, displacing the current Head node as the node that can write. All state changes in the sharing list occur at the behest of the Head node, with the exception of nodes deleting themselves from the list.

When simply sharing a read-only copy of a line, as when the memory is in the FRESH state, the Head node is somewhat irrelevant. All the sharing nodes have their own cached value of the cache line. If any node wants to write, including the Head node, it must perform an additional action in order to do so. Likewise, when a sharing list is sharing a line of data in the dirty, or GONE, memory state, as long as the Head node has not written to the line, all the sharing nodes stay in the list with their cached line. However, when the Head node wants to write, it can write its data immediately, but it must then invalidate all other shared copies via the forward pointers in the sharing list.
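The invalidation walk along the forward pointers might look like the sketch below. The dictionary-based sharing list and the `head_write` function are hypothetical simplifications; real SCI nodes exchange messages rather than sharing a data structure.

```python
def head_write(sharing_list, head):
    """Invalidate every sharer after the head; return them in visit order.

    sharing_list maps node name -> {"forw": next node or None, "valid": bool}.
    """
    invalidated = []
    node = sharing_list[head]["forw"]
    while node is not None:
        next_node = sharing_list[node]["forw"]  # remember where to go next
        sharing_list[node]["valid"] = False     # purge this sharer's copy
        sharing_list[node]["forw"] = None
        invalidated.append(node)
        node = next_node
    sharing_list[head]["forw"] = None  # the head is now the only list member
    return invalidated


sharers = {
    "A": {"forw": "B", "valid": True},  # A is the Head
    "B": {"forw": "C", "valid": True},
    "C": {"forw": None, "valid": True},
}
purged = head_write(sharers, "A")
```

After the call, only the Head still holds a valid copy, which is the condition needed for its write to be safe.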

This, however, does not protect against a race condition where the memory is FRESH, the Head node wants to write, and another node also wants to join the list. The Memory Access mechanism prevents this condition.

Memory Access

The final way that the SCI protocol minimizes race conditions is by changing the directory structure. In Solihin 11.4, there is a Home node which keeps track of the cache state and has a certain amount of knowledge about the system as a result. In the SCI protocol, there is still a Home node of sorts, but this node is only responsible for keeping track of which node is the current Head. Such a Home node is usually the node where the memory block physically resides. When a node wants to access a block of memory, it sends a request to the Home node of that memory block, assuming it isn't already in the sharing list for that block. In the event that the data is already being used, the Home node will include in its response the address of the Head node of the sharing list. This requirement necessarily serializes the order of access, as one access request is not serviced at the Home node until the previous request is finished, and the requests are processed in FIFO order. In addition, whichever node is currently the Head of the sharing list will be notified by the new Head when it is demoted. All parties involved in a potential race are thus talking to each other, preventing the race from occurring in the first place.
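The serialization at the Home node can be illustrated with a small FIFO model. The `Home` class and its method names are assumptions for this sketch; it only captures the two properties described above: requests are serviced one at a time in arrival order, and each reply names the previous Head, if there was one.

```python
from collections import deque


class Home:
    """Tracks only the current Head of the sharing list for one memory block."""

    def __init__(self):
        self.head = None      # no sharing list yet
        self.queue = deque()  # pending requests, oldest first

    def submit(self, node):
        self.queue.append(node)

    def service_next(self):
        """Service the oldest request; return (requester, previous head)."""
        requester = self.queue.popleft()
        previous_head = self.head
        self.head = requester  # the requester becomes the new Head
        return requester, previous_head


home = Home()
home.submit("A")
home.submit("B")
first = home.service_next()   # A is served from memory, no previous Head
second = home.service_next()  # B is told that A is the Head it must contact
```

Because B learns about A only from Home's reply, B's next message necessarily goes to A, which is how the potentially racing parties end up communicating directly.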

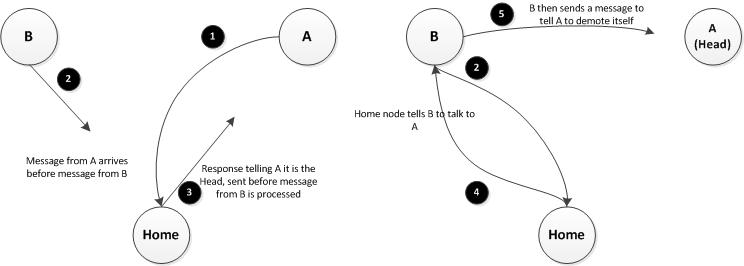

Because of this requirement, the projected race condition from the previous section, where the Head node is in a FRESH state and wants to write while another node also wants to read, cannot occur. This is because both nodes must make their request to the Home node; the Head node must request the ability to write, and the other node must request the data from the Home node. One of these requests will make it to the Home node first, resulting in the second request being deferred to whichever node reached the Home node first. Such a scenario is pictured below.

Solihin 11.4 Resolution

The three previous sections discussed individual parts of how the SCI protocol reduces race conditions. Putting all three of these parts together yields the following scenario which resolves the Early Invalidation race as described in the text.

- A sends a request to Home for access to the memory block. It then goes into a busy state while it waits for a response.

- B also sends a request to Home for access to the same memory block. A's request is received first.

- Home responds to A with the data, but this response gets caught in network traffic and delayed. This response is sent before Home processes the request from B.

- Home responds to the request from B, telling it that A is the current Head node.

- B then sends a request to A to tell it to demote itself.

- A still hasn't received the response from Home, so it is still in a transaction, and it tells B that it is busy. B will have to retry the request.

It should be noted that B is also in the busy state, so any requests made of it will also be rejected. This could lead to a chaining of nodes in the busy state, but as soon as node A receives its response from Home or times out, the requests in the chain will be successively accepted and processed.
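The six steps above can be traced with a short simulation. This is a simplified sketch: the function name, the `"BUSY"`/`"ACK"` replies, and the use of plain dictionaries in place of network messages are all assumptions, and timeouts are omitted for brevity.

```python
from collections import deque


def resolve_early_invalidation():
    busy = {"A": False, "B": False}
    home_queue = deque()
    head = None

    # Steps 1-2: A and B send requests to Home and go busy; A's arrives first.
    for node in ("A", "B"):
        busy[node] = True
        home_queue.append(node)

    # Step 3: Home services A. The reply is sent but delayed in the network.
    requester = home_queue.popleft()
    previous_head, head = head, requester

    # Step 4: Home services B and reports the current Head (A).
    requester = home_queue.popleft()
    previous_head, head = head, requester

    def ask_to_demote(target):
        # A node in the middle of a transaction rejects the request.
        return "BUSY" if busy[target] else "ACK"

    # Steps 5-6: B asks A to demote itself; A is still waiting on Home.
    first_attempt = ask_to_demote(previous_head)

    # A's delayed reply finally arrives, ending its transaction.
    busy["A"] = False
    retry = ask_to_demote(previous_head)

    return first_attempt, retry, head


outcome = resolve_early_invalidation()
```

The first attempt is rejected and the retry succeeds, so the invalidation can never overtake the data reply as it does in the Early Invalidation race.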

Possible Race Conditions

Concurrent List Deletions

Simultaneous Deletion and Invalidation

Summary

Definitions and Terms

References

[1] "IEEE Standard for Scalable Coherent Interface (SCI)," IEEE Std 1596-1992, 1993. doi: 10.1109/IEEESTD.1993.120366. URL: http://ieeexplore.ieee.org.proxied.lib.ncsu.edu/stamp/stamp.jsp?tp=&arnumber=347683&isnumber=8049