Chp8 my: Difference between revisions

| Line 153: | Line 153: | ||

The snoop request always happens as a read or write request. When a processor has a write request, it will invalid other processors and asks them to evict the cache line. The two protocols used for x86 microprocessors are MESI (Intel: x86 and IPF) and MOESI (AMD). MESI is a two hop protocol and every cache line is held in one of four states, which track meta data, such as whether the data is shared or has been modified. | The snoop request always happens as a read or write request. When a processor has a write request, it will invalid other processors and asks them to evict the cache line. The two protocols used for x86 microprocessors are MESI (Intel: x86 and IPF) and MOESI (AMD). MESI is a two hop protocol and every cache line is held in one of four states, which track meta data, such as whether the data is shared or has been modified. | ||

In contrast, MOESI is a three hop protocol with slightly modified states. Under MOESI, a processor can write to a line in the cache, and then put it in the O state and share the data, without writing the cache line back to memory (i.e. it allows for sharing dirty data). This can be advantageous, particularly for multi-core processors that do not share the last level of cache. For the MESI protocol, this would require an additional write back to main memory<ref> | In contrast, MOESI is a three hop protocol with slightly modified states. Under MOESI, a processor can write to a line in the cache, and then put it in the O state and share the data, without writing the cache line back to memory (i.e. it allows for sharing dirty data). This can be advantageous, particularly for multi-core processors that do not share the last level of cache. For the MESI protocol, this would require an additional write back to main memory<ref>{15}</ref>. | ||

==References== | ==References== | ||

Revision as of 02:53, 27 March 2010

In computing, cache coherence (also cache coherency) refers to the consistency of data stored in local caches of a shared resource. Cache coherence is a special case of memory coherence.

Cache Coherence

Definition

In computing, cache coherence (also cache coherency) refers to the integrity of data stored in local caches of a shared resource. Cache coherence is a special case of memory coherence. In order to maintain the property of correct accesses to memory, system engineers develop kinds of coherence protocols to tackle them down. In this section, coherence protocols in bus-based multiprocessors are discussed.[1]

The coherence of caches is obtained if the following conditions are met:

- A read made by a processor P to a location X that follows a write by the same processor P to X, with no writes of X by another processor occurring between the write and the read instructions made by P, X must always return the value written by P. This condition is related with the program order preservation, and this must be achieved even in monoprocessed architectures.

- A read made by a processor P1 to location X that follows a write by another processor P2 to X must return the written value made by P2 if no other writes to X made by any processor occur between the two accesses. This condition defines the concept of coherent view of memory. If processors can read the same old value after the write made by P2, we can say that the memory is incoherent.

- Writes to the same location must be sequenced. In other words, if location X received two different values A and B, in this order, by any two processors, the processors can never read location X as B and then read it as A. The location X must be seen with values A and B in that order.

Bus sniffing is the process where the individual caches monitor address lines for accesses to memory locations that they have cached. When a write operation is observed to a location that a cache has a copy of, the cache controller invalidates its own copy of the snooped memory location.

Coherency protocol

All the protocols talked about here are write back caches.[6]

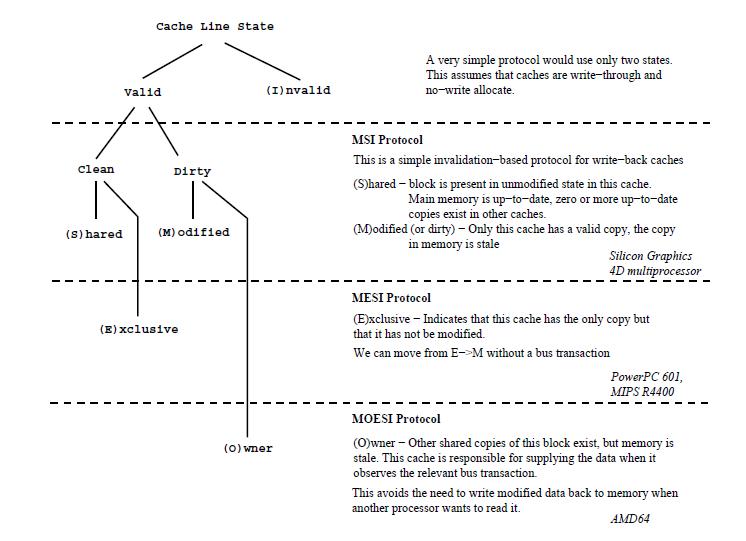

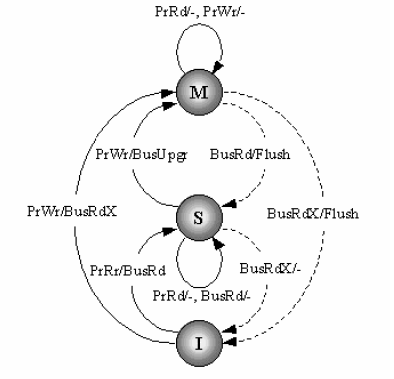

MSI protocol

The simplest write back protocol is MSI protocol, therefore it is not deployed in real processors. Although it is not really in use, it is a good start to understand complicated protocols derived from this basic prototype.[14]

In MSI protocol, there are two processor requests and four bus side requests.

- PrRd: processor side request to read to a cache block;

- PrWr: processor side request to write to a cache block;

- BusRd: snooped request that indicates there is a read request to a cache block made by another processor;

- BusRdX: snooped request that indicates there is a write(read exclusive) request to a cache block made by another processor if that processor does not have a valid copy of the block;

- BusUpgr: snooped request that indicates there is a write(read exclusive) request to a cache block made by another processor if that processor already has a valid copy of the block;

- Flush: snooped request that indicates that an entire cache block is written back to the main memory by another processor;

Each cache block has an associated state which could be one of the following three:

- Modified(M): the cache block is valid in only one cache, and it implies exclusive ownership of the cache. Modifed state means both the cache is different from the value in the main memory, and it is cached only in one location.

- Shared(S): the cache block is valid, and maybe shared by multiple processors. Shared also means the value is the same as the one in the main memory.

- Invalid(I): the cache block is invalid.

The state transition diagram for MSI protocol is showed below.

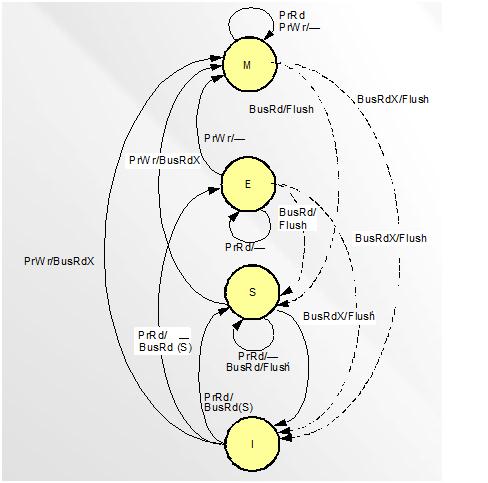

MESI protocol (Intel)

MESI basics

In MESI protocol, there are four cache block status[13]:

- 1. Modified (M): the cache block valid in only one cache and the value is like different from the main memory.

- 2. Exclusive (E): the cache block is valid and clean, but only resides in one cache.

- 3. Shared (S): the cache block is valid and clean, but may exist in multiple caches.

- 4. Invalid (I): the cache block is invalid.

Here we will introduce how Intel implements MESI. There are still four states, Modified, Exclusive, Shared and Invalid state.[2,9,10,11]

In Modified state: Read request: it still keeps in Modified state and transfer the data to the CPU. Write request: it still keeps in Modified state and writes in the cache. Snooping result: cache line might write-back to main memory and changes states from Modified to Shared. Or, it might write-back to main memory and changes from Modified to Invalid.

In Exclusive state: Read request: it still keeps in Exclusive state and transfer the data to the CPU. Write request: it will become Modified state and hold in the cache. Snooping result: it might changes to Shared or Invalid state.

In Shared state: Read request: it will still in Shared state and transfers the data to the CPU. Write request: There are two situations might happen here. The first one is it goes to Exclusive state and being exclusive. The other one is to still keep Shared state with write-through cache and update data to the main memory. Snooping request: there will be transitions which are Shared or Invalid.

In Valid state: Read request: There are three transitions might happen here. First of all, if the data read into to the cache, then it will become exclusive. Secondly, it might become Shared state after reading the data into the cache. Last, it will be still Invalid if there is a read/write miss happens. Write request: there will happen write miss and still in Invalid state. Snooping result: it will still go back to Invalid state, because the cache does have any data checked.

We are going to introduce those requests.

- PrRd: processors request to read a cache block.

- PrWr: processors request to write a cache block.

- BusRd: snooped request a read request to a cache block made by another processor.

- BusRdX: snooped request a read exclusive (write) request to a cache block made by another processor which doesn't already have the block. Shortly, write cache-to-memory

- BusUpgr: snooped request indicates that there is a write request to a cache block that another processor already has in its cache.

- Flush: snooped request indicates than an entire cache block is written back to main memory by another processors.

- FlushOpt: snooped request indicates that an entire block cache block is posted on the bus in order to supply it to another processor. Shortly, cache-to-cache.

This figure shows the status change when bus traction generated.

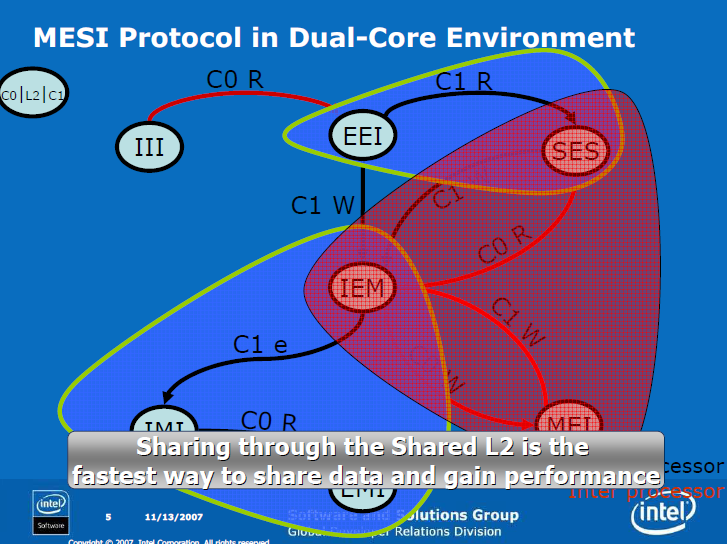

MESI protocol in Dual-Core architecture

Cache-line states have two variations: core scope means state in L1 data cache; processor scope means state in L1 + L2 caches. Data shared by cores can be exclusively owned by the processor. Data can be Modified in L1 data cache and Exclusive in the L2.

Only modified data is transferred from one core to another. Non-modified data that is missing in the L2 is fetched from memory.

Effective Sharing

- Two cores in the same processor load data from the same cache line

- Cache line is brought only once from the memory to the L2 cache

- Reduces bus utilization

- One core produces data and the other core later consumes it

- As long as the produced data chunks are bigger than the L1 data cache and smaller than the L2 cache

- Produced data is evicted from the L1 data cache to the L2 cache

- Consumed data is fetched from the L2 cache

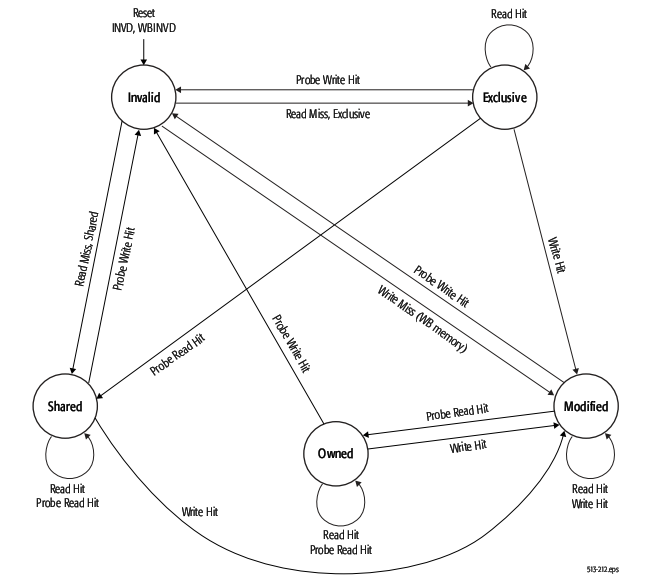

MOESI protocol (AMD)

MOESI basics

AMD Opteron is using MOESI (modified, owned, exclusive, shared, invalid) protocol for cache sharing. In addition to the four states in MESI, which is adopted by Intel for their Xeon processors, a fifth state "Owned" appears here representing data that is both modified and shared. Using MOESI, writing modified data back to main memory is avoided before being shared, which could save bandwidth and gain much faster access to users to the cache.

The states of the MOESI protocol are:

- Invalid—A cache line in the invalid state does not hold a valid copy of the data. Valid copies of the data can be either in main memory or another processor cache.

- Exclusive—A cache line in the exclusive state holds the most recent, correct copy of the data. The copy in main memory is also the most recent, correct copy of the data. No other processor holds a copy of the data.

- Shared—A cache line in the shared state holds the most recent, correct copy of the data. Other processors in the system may hold copies of the data in the shared state, as well. If no other processor holds it in the owned state, then the copy in main memory is also the most recent.

- Modified—A cache line in the modified state holds the most recent, correct copy of the data. The copy in main memory is stale (incorrect), and no other processor holds a copy.

- Owned—A cache line in the owned state holds the most recent, correct copy of the data. The owned state is similar to the shared state in that other processors can hold a copy of the most recent, correct data. Unlike the shared state, however, the copy in main memory can be stale (incorrect). Only one processor can hold the data in the owned state—all other processors must hold the data in the shared state.

The first figure below shows the five different states of MOESI protocol. There are four valid states: M(odified) and E(xclusive) are not shared with other cache, while O(wned) and S(hared) with other caches. The second figure shows the state transitions of MOESI protocol.

AMD Special Coherency Considerations

In some cases, data can be modified in a manner that is impossible for the memory-coherency protocol to handle due to the effects of instruction prefetching. In such situations software must use serializing instructions and/or cache-invalidation instructions to guarantee subsequent data accesses are coherent. An example of this type of a situation is a page-table update followed by accesses to the physical pages referenced by the updated page tables. The following sequence of events shows what can happen when software changes the translation of virtual-page A from physical-page M to physical-page N:

- Software invalidates the TLB entry. The tables that translate virtual-page A to physical-page M are now held only in main memory. They are not cached by the TLB.

- Software changes the page-table entry for virtual-page A in main memory to point to physicalpage N rather than physical-page M.

- Software accesses data in virtual-page A. During Step 3, software expects the processor to access the data from physical-page N. However, it is possible for the processor to prefetch the data from physical-page M before the page table for virtualpage A is updated in Step 2. This is because the physical-memory references for the page tables are different than the physical-memory references for the data. Because the physical-memory references are different, the processor does not recognize them as requiring coherency checking and believes it is safe to prefetch the data from virtual-page A, which is translated into a read from physical page M. Similar behavior can occur when instructions are prefetched from beyond the page table update instruction.

To prevent this problem, software must use an INVLPG or MOV CR3 instruction immediately after the page-table update to ensure that subsequent instruction fetches and data accesses use the correct virtual-page-to-physical-page translation. It is not necessary to perform a TLB invalidation operation preceding the table update.

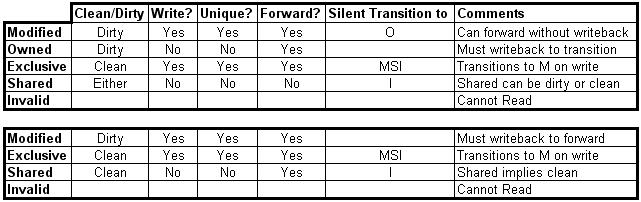

Cache Coherence Protocol Comparision

We are going to introduce the difference between MOESI and MESI protocols. Here we put a chart to show the difference among them.

The snoop request always happens as a read or write request. When a processor has a write request, it will invalid other processors and asks them to evict the cache line. The two protocols used for x86 microprocessors are MESI (Intel: x86 and IPF) and MOESI (AMD). MESI is a two hop protocol and every cache line is held in one of four states, which track meta data, such as whether the data is shared or has been modified.

In contrast, MOESI is a three hop protocol with slightly modified states. Under MOESI, a processor can write to a line in the cache, and then put it in the O state and share the data, without writing the cache line back to memory (i.e. it allows for sharing dirty data). This can be advantageous, particularly for multi-core processors that do not share the last level of cache. For the MESI protocol, this would require an additional write back to main memory<ref>{15}</ref>.

References

- Cache Coherence

- Cache consistency & MESI Intel

- A closer look at AMD's dual-core architecture

- Trace-Driven Simulation of the MSI, MESI and Dragon Cache Coherence Protocols

- Understanding the Detailed Architecture of AMD's 64 bit Core

- MSI,MESI,MOESI sheet

- AMD64 Architecture Programmer’s Manual

- Intel PENTIUM

- Intel Corporation (1998). “Embedded Pentium Processor Family Developer’s Manual.”

- Intel Corporation (2002).Intel Architecture Software Developer's Manual, Volume 1:basic Architecture

- Intel Corporation (2002). “An Overview of Cache

- Embedded Pentium® Processor Family

- NCSU CSC 506 Parallel Computing Systems

- Fundamentals of Parallel Computer Archiecture by Yan Solihin

- An Introduction to Multiprocessor Systems