CSC/ECE 506 Spring 2010/ch 2 aj/Data Parallel Programming: Difference between revisions

| Line 11: | Line 11: | ||

= Example = | = Example = | ||

This section shows a simple example | This section shows a simple example adapted from Solihin textbook that illustrates the data-parallel programming model. Each of the codes below are written in pseudo-code style. | ||

Suppose we want to perform the following task on an array <code>a</code>: updating each element of <code>a</code> by the product of itself and its index, and adding together the elements of <code>a</code> into the variable <code>sum</code>. The corresponding code is shown below. | Suppose we want to perform the following task on an array <code>a</code>: updating each element of <code>a</code> by the product of itself and its index, and adding together the elements of <code>a</code> into the variable <code>sum</code>. The corresponding code is shown below. | ||

| Line 35: | Line 35: | ||

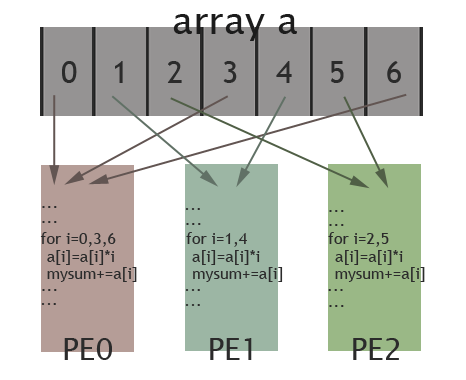

In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. In our example, elements assigned to each PE are interleaved instead of continuous. The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes(0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved. | In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. In our example, elements assigned to each PE are interleaved instead of continuous. The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes(0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved. | ||

[[Image:506wiki1.png|frame|center|150px|Illustration of data parallel programming(adapted from [ | [[Image:506wiki1.png|frame|center|150px|Illustration of data parallel programming(adapted from [http://computing.llnl.gov/tutorials/parallel_comp/#ModelsData Introduction to Parallel Computing])]] | ||

// data reorganization via shared memory | // data reorganization via shared memory | ||

'''shared''' sum; | '''shared''' sum; | ||

Revision as of 03:34, 4 February 2010

Data-Parallel Programming Model

Introduction

Data-parallel processing is when multiple processing elements perform an action separately on different sets of data and exchange information globally before processing more code synchronously. Even though this model is offered in contrast to the shared memory model and the message passing model, data-parallel processing can be accomplished by actually passing messages or sharing memory at a deeper level. The main distinction between the data-parallel model and the other two models has to do with the outcome of the individual steps instead of the method of communication.

Importance

Data-parallel processing has been found to be effective in situations where computations involve every element of a matrix in a uniform way. This allows the processing to be divided spatially over memories. Originally, the need for this model was discovered in scientific calculations and is still used today.

Example

This section shows a simple example adapted from Solihin textbook that illustrates the data-parallel programming model. Each of the codes below are written in pseudo-code style.

Suppose we want to perform the following task on an array a: updating each element of a by the product of itself and its index, and adding together the elements of a into the variable sum. The corresponding code is shown below.

// a simple code

sum = 0;

for (i = 0; i < a.length; i++)

{

a[i] = a[i] * i;

sum = sum + a[i];

}

When we implement the task using data-parallel programming model, the program can be divided into two parts. The first part performs the same operations on separate elements of the array for each processing element, and the second part reorganizes data among all processing elements (In our example data reorganization is summing up values across different processing elements). Since data-parallel programming model only defines the overall effects of parallel steps, the second part can be accomplished either through shared memory or message passing. The three code fragments below are examples for the first part of the program, shared-memory version of the second part, and message passing for the second part, respectively.

// data parallel programming: let each PE perform the same task on different pieces of distributed data

pe_id = getid();

my_sum = 0;

for (i = pe_id; i < a.length; i += number_of_pe) //separate elements of the array are assigned to each PE

{

a[i] = a[i] * i;

my_sum = my_sum + a[i]; //all PEs accumulate elements assigned to them into local variable my_sum

}

In the above code, data parallelism is achieved by letting each processing element perform actions on array's separate elements, which are identified using the PE's id. In our example, elements assigned to each PE are interleaved instead of continuous. The picture below illustrates how elements of the array are assigned among different PEs for the specific case: length of the array is 7 and there are 3 PEs available. Elements in the array are marked by their indexes(0 to 6). As shown in the picture, PE0 will work on elements with index 0, 3, 6; PE1 is in charge of elements with index 1, 4; and elements with index 2, 5 are assigned to PE2. In this way, these 3 PEs work collectively on the array, while each PE works on different elements. Thus, data parallelism is achieved.

// data reorganization via shared memory shared sum; lock(); //prevent race condition sum = sum + my_sum; //each PE adds up their local my_sum to shared variable sum unlock(); barrier;

The above code shows how data reorganization is done by shared memory programming model. A shared variable is declared to store the global sum. Each PE accumulates their local my_sum into this variable. lock() and unlock() are used to prevent race condition and barrier ensures that the all the local my_sum have been accumulated into the shared variable sum before the code proceeds.

// data reorganization via message passing

if (pe_id != 0) send_msg (0, my_sum); //PE other than PE0 send their local my_sum

else //PE0 does this

{

for (i = 1; i < number_of_pe; i++) //for each other PE

{

recv_msg (i, temp); //receive local sum from other PEs

my_sum = my_sum + temp; //accumulate into total

}

sum = my_sum;

}

The above code shows how data reorganization is done by message passing programming model. PE0 acts as the one that collects local my_sum from all the other PEs. This is done by send_msg() and recv_msg() routine: PEs other than PE0 send their my_sum as message to PE0 and PE0 receives the messages from everyone of them by specifying the sender in the for loop.

Recent changes

As mentioned previously, the data-parallel programming model in itself is a contrast to the shared memory programming model and the message passing programming model. Now, with the evolution of SIMD to single-program-multiple data (SPMD) machines, multiple processing elements run identical copies of the same program. In addition, SPMD now indeed uses a form of shared memory and message-passing to communicate between processing elements. As a result, even though each of the three parallel programming models have distinct features, it appears the trends are for a convergence that integrates multiple models into a single system.

References

1) David E. Culler, Jaswinder Pal Singh, and Anoop Gupta, Parallel Computer Architecture: A Hardware/Software Approach, Gulf Professional Publishing, August 1998.

2) Yan Solihin, Fundamentals of Parallel Computer Architecture: Multichip and Multicore Systems, Solihin Books, August 2009.